►

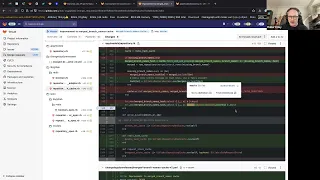

From YouTube: Branch Caching

A

A

Oh, yes, it was this, and there's just like a huge gap in knowledge, but anyway. So this needed to have a cache added to it. But it's quite weird, because you can't really cache the whole thing: for the GitLab repository we have hundreds of thousands of merged branches, you know, something absolutely insane. So you don't want to cache that whole thing every time, because for each repository it would be enormous, and you'd probably have to either cache it individually for every single branch.

A

So every single branch name is cached, saying that it's merged somewhere, and either you'd have to update that list or you'd have to delete the whole list every time. So it ended up in a fairly interesting solution, and I did it through two merge requests. The first one sort of shows the structure of where I added the caching, and then the second one is where it gets really interesting. I say really interesting; slightly more interesting. And it uses a really weird cache that we only use in this area.

A

So there's some basic sort of setup here in the repository model, but mostly what we're looking at is here. So this is where there's the merged branch method. You can see that before, it just delegated it straight to the repository. So there was no caching, there was nothing going on there. And instead, I'm just going to move this over here.

A

I think that should show up better, so yeah. So, to start with, I added a basic cache that did it by sort of just serializing a hash of the names that you'd asked for. So you pass it the branch names that you want to know the status of, and then it would return those as a hash instead, or more specifically a set, apparently, that would say this name, true or false, as to whether it was merged. And so for this first version of it.

A

You can see that it just reads straight from the Rails cache, and when it writes them, it just writes here. It serializes a hash into a single key in the Rails cache. So it doesn't even matter what back-end store it is; it's just a serialized hash, so you can't do anything with that hash. It's just a single cache value. Every time the repository is updated, this key would get deleted, because we can't update the individual entries in it to say this.

A

New branch is cached, but you don't need to delete all the other ones, because they're still merged; their status is the same as it was before. But you can't do that. So that was just the initial version, to kind of get a rough idea of it, but it then very quickly changed to this one. So this is where it gets a bit.
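
The first iteration described above can be sketched roughly as follows. This is a minimal illustration and not the code from the merge request: `SingleKeyCache`, its in-memory `@store`, and the lookup block are hypothetical stand-ins for the Rails cache and the repository call. It shows the limitation the speaker mentions: the whole hash is one serialized value, so it can only be rewritten or deleted as a unit.

```ruby
require "json"

# Hypothetical stand-in for a cache that keeps one serialized hash per key.
class SingleKeyCache
  KEY = "merged_branch_names"

  attr_reader :lookups

  def initialize(&lookup)
    @store = {}        # plays the role of the cache back end
    @lookup = lookup   # expensive call returning the merged names
    @lookups = 0
  end

  # Returns { "branch" => true/false } for the requested names.
  def merged_status(branch_names)
    cached = @store.key?(KEY) ? JSON.parse(@store[KEY]) : {}
    if (branch_names - cached.keys).any?
      @lookups += 1
      merged = @lookup.call(branch_names)
      cached = branch_names.to_h { |name| [name, merged.include?(name)] }
      @store[KEY] = JSON.generate(cached)   # the whole blob is rewritten
    end
    cached.slice(*branch_names)
  end

  # On a repository update there is no way to touch one entry,
  # so the entire serialized value is dropped.
  def expire!
    @store.delete(KEY)
  end
end

cache = SingleKeyCache.new { |names| names.select { |n| n.start_with?("done-") } }
cache.merged_status(["done-a", "wip-b"])
cache.expire!
```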

A

We don't just want a list of merged branches or not merged branches. What we want is a list of the branches that we've supplied it and whether they're merged or not. It's a really, really niche use case. So this was an awful lot of code to solve one specific problem. So this changes it over. I'm gesturing at the screen with my hand, but that's not very useful; I'll try and gesture with the mouse.

A

So in this method we're only really changing one thing here, and that uses this method. So I'll come back to this in a minute, and it then just returns the same data as it was before from that previous cache implementation. But it does something using this Redis Boolean method too, so I'll explain both of those as they were both added, and that's actually a useful class.

A

A

So this is written into the class RepositoryHashCache, which I could probably have named better. It's quite large, but most of it makes sense to just sort of read through. So you've got, you know, an initializer, a cache key method, a delete method, a test for whether something exists in it, a way of reading a set of keys, so getting just the values of specific things that you want out of the hash, and a write method. You can kind of ignore a lot of these. Yeah, sure.

B

A

There, the "with do redis" bit is sort of using the connection pooling thing, I think. So it takes a Redis connection out of a connection pool and uses that. The pipelining bit is then doing what is essentially a transaction, like a database transaction, with Redis. So it's doing a load of these together. So this looks inefficient, because you're doing a set per item in the hash, but actually it's just doing them all in one transaction. So.

A

Very fast. It's not really the same as a transaction in a database, and it's always worth looking up and reading how Redis pipelining works, because it does have some oddities, but if you think of it as working that way, it kind of works that way. Okay, so that's how the write method works, but then the interesting one is fetch_and_add_missing. So this is where the real work happens, and this is the most efficient I could make it.

A

It will then call a block only once, so not per key, but for all the ones that it can't find. It stores them in an array, calls a block with that array, and then uses the result of that block to populate the cache, but also merges the results with the stuff it did get from the cache and returns it. So what you can do is you pass it a set of keys and you will get data back.

A

So when you're looking at the fetch_and_add_missing method, I think it's largely self-explanatory, in that I wrote a lot of comments, because I figured I wouldn't even remember how this works two years down the line, and I was correct. I don't really remember how it works, but that's essentially what it does. So you can see it most easily in how this is called here. So we've got... oh, I actually had it opened up in there.

A

A

Oh, it returns a hash. So the hash is passed as a value as well, and you just update it with what you want to see. It doesn't care about the return value; you're directly updating that block argument. And so for each of the missing branch names, it then sets the value in that hash using this Boolean helper. And so the Boolean helper, just, how to best describe it.

A

So the Redis Boolean class is the exact same size for both values, so true values and false values. Yeah, it just basically does like an underscore b, colon, one or zero, I think, and that's basically all it does. We'll have a quick look at it. Yeah, here you go. So it's probably the most boring class in the entire application. It's really, really uninteresting, but it is quite useful, because it does allow you to consistently just store Boolean values, and it kind of works all right, so yeah.
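
As a rough sketch of the kind of encoding described here, assuming the "underscore b, colon, one or zero" format the speaker recalls: `BoolCodec` and its method names are hypothetical and are not taken from the application. The idea is simply that true and false both become short, fixed-length strings that can be stored as hash values and decoded unambiguously.

```ruby
# Hypothetical encoder: both values serialize to strings of the same length.
module BoolCodec
  LABEL = "_b"       # assumed prefix, per the speaker's recollection
  DELIMITER = ":"

  # true  becomes "_b:1", false becomes "_b:0"
  def self.encode(value)
    unless [true, false].include?(value)
      raise ArgumentError, "not a boolean: #{value.inspect}"
    end

    "#{LABEL}#{DELIMITER}#{value ? 1 : 0}"
  end

  # Turns an encoded string back into true or false.
  def self.decode(string)
    label, bit = string.to_s.split(DELIMITER, 2)
    unless label == LABEL && %w[0 1].include?(bit)
      raise ArgumentError, "not an encoded boolean: #{string.inspect}"
    end

    bit == "1"
  end
end
```

Storing a tagged string rather than a bare "true" or "1" means a cached value can be told apart from an arbitrary string that happens to look like a boolean.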

A

A

A

It runs the block with the empty hash and the missing array of keys. It does some standardization of the hash, which effectively just makes sure that everything's converted to strings, because it will be converted to strings at some point, but there could be a difference between the cached keys and values and the non-cached ones by having, like, symbol keys instead of string keys. So it just converts everything to strings so they're all exactly the same. It then writes only the new values back into the cache.
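
The steps described for fetch_and_add_missing can be sketched as below. This is an illustration under stated assumptions, not the real class: `HashCacheSketch` uses an in-memory Ruby hash in place of a Redis hash, and `read_members` and `write` are hypothetical helper names. It shows the partial read, the single block call for all missing keys, the string normalization, and the write of only the new values.

```ruby
# Hypothetical in-memory stand-in for a Redis-hash-backed cache.
class HashCacheSketch
  def initialize
    @hashes = Hash.new { |h, k| h[k] = {} }   # cache key => { field => value }
  end

  # Returns { field => value_or_nil } for just the requested fields.
  def read_members(key, fields)
    fields.to_h { |f| [f.to_s, @hashes[key][f.to_s]] }
  end

  # Adds or overwrites fields without clearing the existing ones.
  def write(key, new_values)
    @hashes[key].merge!(new_values)
  end

  def fetch_and_add_missing(key, fields)
    cached = read_members(key, fields)
    missing = cached.select { |_, v| v.nil? }.keys
    return cached if missing.empty?

    new_values = {}
    # Called once with every missing key; the block fills in new_values
    # and its return value is ignored.
    yield(missing, new_values)

    # Normalize keys and values to strings so fresh and cached entries match.
    new_values = new_values.to_h { |k, v| [k.to_s, v.to_s] }

    write(key, new_values)      # only the new entries are written
    cached.merge(new_values)    # cached hits plus fresh values
  end
end
```

A caller would pass the branch names it cares about and, in the block, look up only the missing ones, so the expensive call shrinks as the cache fills up.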

A

So it's just updating the cache value with these new values. It doesn't clear the existing cache, because the size of this was actually showing up in some performance reports. This can be like 10,000 to 20,000 hash key entries large, and it showed up in a performance thing and they're like, "Oh, this is really bad. You know, we should improve this." It's like, this is the improvement. This is notably better than it was before.

A

A

All it does... and the performance difference in it, I think, shows up in this graph. Here you go, so yeah. You can see towards the tail end of the graph where it just completely drops off, so it effectively standardizes the performance to a base slowness. I wouldn't say it's fast; it's just consistently slow now, not spiky slow. It used to be really bad, like multiple seconds, and then it's just gone down a lot. So, let's see if we've got... it keeps going on a bit longer, and I think.

A

I thought we had an additional graph of that, but I might not know where it is. But yes, essentially everything's under one second now, so that's how it improved it, at least. And as far as I know, this has never been a useful piece of code for anything else ever since; it's only useful for this circumstance. If anyone does think of a use case, great, because I put a lot of effort into it and I've only used it once, and it feels a bit stupid.

A

B

A

B

A

Right, but the entire hash set does get deleted, so it gets cleared entirely in certain events. You can see some of it in the... so here, in expire_branches_cache. This gets called on certain events. I can't entirely remember in which way it gets called. I don't think I've changed any of that in this one, so it's just changed in the first one.

A

So essentially it's added into this clearer, and I think it gets cleared on certain types of pushes, so like a force push or something like that, but it doesn't get cleared when someone just merges a branch, if that makes sense. I think there are a few situations in which it can get cleared, but yeah.

A

Basically, we just don't really care about deleted branches, because you're probably not going to be querying whether it's merged or not anyway, because it doesn't exist anymore, so it will eventually disappear. And because it's not used as a whole set, at no point does this get used to return all of the data it has. It's only used for checking against what it knows. So you know a set of branches, you want to know the status of them, and it returns the status of those branches. It doesn't care about returning everything.

A

A

A

A

The whole cache... it would only delete certain caches, so probably the branches cache. So it would delete the entire key and all of its values, so it does end up causing quite a lot of traffic in and out of the cache. But because this cache is sort of added to, it's not like, the next time someone checks for the merge status of a branch, it fetches the whole list again and rebuilds the cache.

A

It just adds that individual record. It's quite a gentle cache to repopulate: it sort of builds up over time, gets to a certain size, gets deleted. It results in quite a lot of traffic in and out of the cache, but it's not as bad as loading a huge blob of data into the cache each time, which is what the first iteration, the one before the hash cache, was doing.

A

B

B

A

So, as I said on the actual issue itself, the performance issue, so yeah, the problem with this was that... so it's not at the database level that the problem exists, or, I mean, it sort of is, if you count Git as a database. But effectively the slowdown here is that it's hitting the hard disk every time to read the information back.

A

B

A

Yeah, so there was this bit of info here about which methods were taking a long time. So this sort of starts showing how it goes down the stack trace to sort of find bits, but yeah, effectively what it was doing was making a call to the RefService thing. So this is a wrapper to our Gitaly client, so a Git client, and yeah, that was the one that's being hit all the time. Okay.

B

Okay, okay. Yeah, yeah. Eagle, do you have a question as well? Yeah, just on the previous topic. So do I understand it correctly that this merged branch names request, its performance depends on the number of branches that we present there? Yes. So actually this merge request improves that: we do this request only for missing branches. Yes. So we just reduce the payload. Yeah. Do I understand it correctly?

A

It does work quite effectively in that regard, but yeah, it's such a niche use of this. I've not actually found another use in the application for doing a partial cache read like this, where you're adding to it little bit by little bit. I'd be really interested to know if there are any, because it was quite a good performance improvement without a lot of the side effects of heavy caching.

B

Maybe we're doing something similar for highlighted diffs. I think we store a hash, I guess, so a key is a file name and a value is a highlighted diff for exactly this file. So if an additional file needs to be cached, we just add the value to the hash, so yeah, we don't rewrite the whole cache. Yeah.

A

It'd be interesting. The only downside, really, to using hash things is that you can't expire individual keys in it. I mean, you can delete them, so you can manually delete them, but you can't set a time to live on it for when that hash value should expire. You can only expire the whole thing. So in the implementation of it, it's a little bit dumb. Where is it? Yes, so every time it adds a new key, a new value, it updates the expiry. So it actually does this in a loop.
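
The expiry behaviour described above can be modelled in a few lines. This is only a sketch of the idea, with an in-memory hash and an injectable clock in place of a real cache server; `ExpiringHash` and its methods are hypothetical. It shows one expiry for the whole hash, pushed forward each time a field is written, with no per-field time to live.

```ruby
# Hypothetical model of a cached hash that has one expiry for the whole key.
class ExpiringHash
  def initialize(ttl:, clock: -> { Time.now.to_f })
    @ttl = ttl
    @clock = clock
    @fields = {}
    @expires_at = nil
  end

  # Writing any field pushes the expiry of the whole hash forward.
  def write(new_values)
    purge_if_expired
    new_values.each do |field, value|
      @fields[field] = value
      @expires_at = @clock.call + @ttl   # refreshed once per field, in the loop
    end
  end

  def read(field)
    purge_if_expired
    @fields[field]
  end

  private

  # Everything goes at once; individual fields cannot expire on their own.
  def purge_if_expired
    return unless @expires_at && @clock.call >= @expires_at

    @fields.clear
    @expires_at = nil
  end
end
```

One consequence visible in the model: a hash that keeps receiving new fields keeps having its expiry extended, so old fields live as long as the hash stays busy.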

A

A

I don't know if we improved that later on, but yeah, essentially, because you can't delete the individual value, that's kind of a shame, and it might limit it in some other implementations, but it's partially a limit on how the Redis hashes work. We did have some optimizations later on tied to this, but also tied.

A

There's actually a very similar class for the repository that does array caching instead, so it's caching a list of things without values, and that's got a few areas that are kind of shared with this, but it doesn't have that really weird partial update system. That's, as far as I know, the only place we do that.

B

And if we have half a minute, I have a quick story about the Redis pipeline that maybe I want to share. Just a quick note regarding the Redis pipeline. So it groups a lot of calls and performs, like, a single round trip to Redis, and it saves us a lot of time on the round trips.
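
The round-trip saving described here can be illustrated with a small simulation. `CountingClient` is a hypothetical fake that only counts trips; it is not the API of any Redis client library, and the `hset` and `pipelined` method names are chosen purely for the illustration.

```ruby
# Hypothetical fake client that counts round trips instead of using a network.
class CountingClient
  attr_reader :round_trips, :data

  def initialize
    @round_trips = 0
    @data = Hash.new { |h, k| h[k] = {} }
    @queue = nil
  end

  def hset(key, field, value)
    command = -> { @data[key][field] = value }
    if @queue
      @queue << command     # inside a pipeline: queue it, send nothing yet
    else
      @round_trips += 1     # outside a pipeline: one trip per command
      command.call
    end
  end

  def pipelined
    @queue = []
    yield self
    @round_trips += 1       # the whole batch goes out in one trip
    @queue.each(&:call)
  ensure
    @queue = nil
  end
end

values = { "done-a" => "_b:1", "wip-b" => "_b:0", "done-c" => "_b:1" }

plain = CountingClient.new
values.each { |field, value| plain.hset("merged", field, value) }

batched = CountingClient.new
batched.pipelined do |pipe|
  values.each { |field, value| pipe.hset("merged", field, value) }
end
```

In this model the per-item loop costs one trip per field without the pipeline and a single trip with it, which is why a set per item is less wasteful than it looks.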

B

We don't need to perform a round trip for every Redis call. And actually, for the set requests, when we don't need the data, it's pretty straightforward: we just perform the request and don't care about the results. But when we want a get request, when we want to get data from Redis, the data is actually grouped, but we need to wait until all requests are performed, until the end of the round trip, in order to get some data.

B

So this Redis pipeline actually wraps the data in the Future class, and then we need to call, like, result in order to receive the data. That was, like, lazily loaded. But yeah, my story is about feature flags: I used a feature flag inside a pipeline block, and since our feature flag values are stored in the Redis database, when the feature flag value was evaluated, it was like a future, because... sorry, I can't explain it, but yeah.

B

Since it was used in the pipeline, and we don't explicitly call the result method on the feature flag, we received a future wrapper, not the value of the feature flag. And the strange point was that our unit tests hadn't caught it, because we stubbed the values of the feature flag to avoid doing Redis calls in tests. So it was only reproduced in the QA tests. So that was my story, and yeah, that's all.
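
The failure mode in this story can be reproduced in miniature. The sketch below is a simulation with hypothetical classes (`FutureSketch`, `PipelinedStore`) and a made-up flag key; it is not the Redis client or the feature flag code. It shows that a read issued inside a pipeline block hands back a placeholder object, which is truthy in Ruby even when the stored flag is false.

```ruby
# Hypothetical placeholder returned for reads issued inside a pipeline.
class FutureSketch
  def initialize
    @resolved = false
  end

  def resolve(value)
    @value = value
    @resolved = true
  end

  # Named after the "result" call mentioned by the speaker.
  def result
    raise "pipeline has not run yet" unless @resolved

    @value
  end
end

class PipelinedStore
  def initialize(data)
    @data = data
    @pending = nil
  end

  def get(key)
    return @data[key] unless @pending   # normal call: the real value

    future = FutureSketch.new
    @pending << [key, future]           # pipelined call: a placeholder
    future
  end

  def pipelined
    @pending = []
    yield self
    @pending.each { |key, future| future.resolve(@data[key]) }
  ensure
    @pending = nil
  end
end

store = PipelinedStore.new({ "feature:new_cache" => false })
branch_taken = nil
store.pipelined do |s|
  flag = s.get("feature:new_cache")     # a FutureSketch, not false
  branch_taken = flag ? :enabled : :disabled
end
```

Here `branch_taken` ends up as `:enabled` although the stored flag is false. A test that stubs the flag to return a plain boolean never builds the placeholder, which matches the observation that unit tests did not catch the problem.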

A

A

That's a more interesting feature flag problem than even I've had. The worst ones I've had were ones where, if you use a feature flag call too early in the Rails initialization stack, it attempts to perform it before any of the databases exist, and I found this out after deploying to production twice.

A

That was with the JSON wrapper changes that I did as well, again like two years ago, and yeah, it just breaks everything. It is so frustrating as well, and it doesn't show up locally properly, because the GDK reloaded differently, or it loaded things in a different order at the time. But yeah, I don't like debugging feature flag problems.

A

B

A

One of the strangest ones was in how avatars were fetched, and that was doing Git calls or something, I don't know. Something was really weird with that, and it was on a page that literally no one uses. I'll go into that one at some point; that one's quite fun. But fixing a performance issue, that was one of my best fixes, and it's on a page literally no one cares about or uses. I didn't even know it existed; I didn't even know we owned it. So.

B

A

B

B

B

I think this is a great example of that because, first of all, it's obviously still highly relevant, but yeah, some super interesting code, and it's good to sometimes dust things off that you've looked at or worked on a couple of years ago, you know, for whoever that applies to. And yeah, thanks a lot, Robert, that was great. I'll stop the recording.