
From YouTube: Actionable Insight Report Walkthrough

Description

Timestamps:

01:07 - Definitions

02:22 - Study Background

04:24 - Executive Summary

06:46 - Top Research Takeaways

07:52 - Q: What does the current state of AI issues look like?

09:44 - Q: What is an ideal End-State?

12:20 - Q: What is the workflow for AI issues?

16:18 - Q: Who are the DRIs?

18:16 - Q: What is the timeline?

19:35 - Q: What are the factors in success?

21:21 - Next Steps

23:50 - Appendix

A

The first one is just generally actionable insights, which is a label that has two scoped levels to it. An actionable insight is any follow-up action that takes place after research has been collected, and it is supposed to both define what the insight is and clearly call out the next step. Think of it as the conclusion and the final touches of research.

A

So in actionable insights there are two scoped levels. One of those scoped levels is exploration needed. That is when the follow-up action is something like more exploration: follow-up research, design explorations, desk research, or just conversations that take place in order to continue the conversation toward the overall goal.

A

The study was initially launched because there was a large number of open issues: older actionable insight issues were not being closed, while other actionable insight issues were continuing to be opened. So there was just a large, growing number of open actionable insights, and the purpose of the research was to understand why they were still open and to develop potential solutions that we could implement as the research team to hopefully keep that from continuing to happen in the future.

A

So, in order to answer those questions, we decided to do a mixed-method approach. First was some desk research in the form of quantitative data exploration of the usage analytics of our own actionable insights, looking at what labels we used, the timeline involved in those issues, and things like that. Then we did some qualitative interviews with four GitLab team members about their past experience with actionable insights. And then we launched an internal survey to summarize and to drive home some last details.

A

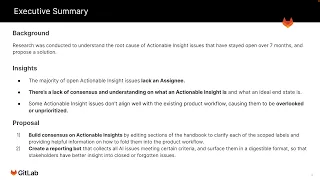

So, an executive summary of the research first, just to understand the background. The research was conducted to understand the root cause of actionable insight issues that have stayed open for over seven months with no real sign of being worked on, and to try to propose a solution to keep that from happening in the future.

A

We also saw that actionable insight issues don't always align well with existing product workflows, causing them to be overlooked and unprioritized at times. It's not always the case, but it is the case with a certain type of issue, and it continues to happen, causing a recurring problem.

A

The second proposal is to create a reporting bot that collects all of the actionable insight issues that meet certain criteria and surfaces them in a digestible format for stakeholders, so they can see an overview of all of the insights that they cover, or ones that they may have forgotten. It is a place to surface those insights as part of their existing prioritization workflow.
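As a rough illustration of what such a reporting bot might do, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Issue` fields, the `needs_attention` criteria (still open, and either unassigned or open for seven months or more), and the digest format are hypothetical and are not taken from the actual bot.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Issue:
    # Hypothetical, simplified view of an actionable insight issue.
    title: str
    opened_on: date
    assignee: Optional[str] = None  # None means unassigned
    closed: bool = False


def months_open(issue: Issue, today: date) -> int:
    """Whole calendar months between the open date and today."""
    months = (today.year - issue.opened_on.year) * 12 + (today.month - issue.opened_on.month)
    if today.day < issue.opened_on.day:
        months -= 1
    return months


def needs_attention(issue: Issue, today: date, max_months: int = 7) -> bool:
    """Assumed criteria: still open, and unassigned or open for max_months or more."""
    if issue.closed:
        return False
    return issue.assignee is None or months_open(issue, today) >= max_months


def build_digest(issues: List[Issue], today: date) -> str:
    """Return one line per flagged issue, oldest first."""
    flagged = sorted(
        (i for i in issues if needs_attention(i, today)),
        key=lambda i: i.opened_on,
    )
    return "\n".join(
        f"- {i.title} (open {months_open(i, today)} months, assignee: {i.assignee or 'none'})"
        for i in flagged
    )
```

A stakeholder-facing summary could then be produced by calling `build_digest(issues, date.today())` on whatever list of issues the bot has collected.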

A

So now I want to go into some specific takeaways from the research and the questions that we asked. The top takeaways that we found were, first, that if an actionable insight issue is not closed within seven months, it's pretty unlikely that it's going to be closed, and it will probably remain open. We also saw that 58% of actionable insight issues were left unassigned, and of those issues, 74% are still open.

A

So the first question we asked was: what does the current state of actionable insight issues look like? We saw that on average 21 actionable insight labels were created every month, but only 10 of them are closed each month, which is about 48%. Issues with the scope labels of product change and exploration needed were created at a pretty similar rate, but exploration needed issues were closed three times less frequently than product change issues.
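The arithmetic behind those monthly figures can be checked with a short Python sketch. The two averages come from the walkthrough; the projection function is an added assumption (it supposes both averages stay constant), not something reported in the study.

```python
created_per_month = 21  # average from the walkthrough
closed_per_month = 10   # average from the walkthrough

close_rate = closed_per_month / created_per_month
net_growth = created_per_month - closed_per_month


def projected_backlog(months: int, starting_backlog: int = 0) -> int:
    """Open issues after `months`, assuming both averages stay constant."""
    return starting_backlog + net_growth * months


print(f"Close rate: {close_rate:.0%}")
print(f"Net new open issues per month: {net_growth}")
print(f"Added to the backlog after 12 months: {projected_backlog(12)}")
```

Under that constant-rate assumption, the backlog grows by 11 issues a month, which is consistent with the large, growing number of open issues that prompted the study.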

A

We also found that exploration needed issues are often left unassigned and not prioritized. We found that a disproportionate number of those exploration needed issues were left unassigned and are still open, and a slightly higher number of exploration needed issues had the SUS and severity labels compared to product change issues, which was unexpected.

A

Most of them said it was just generally something to follow up on, but not necessarily implement, and it was more a discussion that had to be brought to a conclusion. But asked the same question of how to define an actionable insight, survey participants largely said that it is just a change ready to be made.

A

The insight that we derived from this is that there's ambiguity and a lack of consensus on these two questions, and there's an unclear definition of what an actionable insight is. We think that the difference between the interview and the survey groups indicates that the context of work is a factor in how participants think of actionable insights, because in an interview we continue to talk about actionable insights, walk through some of the work, and talk about what was involved. Whereas a survey.

A

Then they asked the question of whether the research was solution validation or problem validation. If it's solution validation, then it's likely that it's going to lead to changes made to the product, usually through something like an MR, and sometimes even without an actionable insight issue necessarily attached to it. The product change could be done so quickly that we don't necessarily get the full workflow of creating an actionable insight issue, documenting, and then implementing the changes. The DRI for that sort of workflow is usually going to be a product designer.

A

But if we go back to when the insight was created and ask whether it fit our assumptions: if it did not fit our assumptions, it's probably going to lead to more research that's going to be scheduled further down the workflow, because there was something that was either unvalidated or questioned.

A

If it is problem validation, then it's likely going to be a change made to the product. If it's going to be high impact and low effort, basically a quick fix or an easy solution, then it's likely just going to be a change to the product made immediately.

A

So in the rare case, for instance, where a product manager is asking a somewhat complex question, or has a problem that they're trying to figure out, but finds that the solution is actually very easy to implement, then it's likely that the product manager will just immediately make changes to the product, depending on how much effort is involved.
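The routing described in this part of the walkthrough can be sketched as a small decision function in Python. This is only an illustration: the function name, the parameter names, and the final fallback branch (assess priority and schedule) are assumptions, and real teams vary in how they apply these questions.

```python
from enum import Enum


class ResearchType(Enum):
    SOLUTION_VALIDATION = "solution validation"
    PROBLEM_VALIDATION = "problem validation"


def next_step(
    research_type: ResearchType,
    fits_assumptions: bool,
    high_impact: bool,
    low_effort: bool,
) -> str:
    """Hypothetical routing of an actionable insight to its likely next step."""
    # Findings that did not fit the team's assumptions lead to more research.
    if not fits_assumptions:
        return "schedule more research"
    # Solution validation usually ends in a product change, often through an MR.
    if research_type is ResearchType.SOLUTION_VALIDATION:
        return "product change (usually through an MR)"
    # Problem validation with a quick, high-impact fix is changed right away.
    if high_impact and low_effort:
        return "immediate product change"
    # Assumed fallback: everything else goes through normal prioritization.
    return "assess priority and schedule"
```

For example, `next_step(ResearchType.PROBLEM_VALIDATION, True, True, True)` returns `"immediate product change"`, matching the quick-fix case described above.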

A

This varies so much from one group to another, and again, tying into that, the workflow is always going to be done with a mix of conversations between the product manager and a product designer. And to look at the bigger picture, this entire workflow of completing, hopefully, an actionable insight is typically going to be about six months. Like we said earlier, if it's seven months or longer, we found that those issues typically stay open, which makes sense.

A

So next we also asked who the DRIs and the people responsible are in all those workflows you saw in the previous slide. The specific workflows might have certain owners, depending on certain questions and criteria like we saw in the last slide, but in the data we also saw that the actual authors or assignees of those actionable insights were slightly different than what we expected.

A

We found that there could be, not a large number, but common instances of a random person creating an actionable insight issue. We also saw through stakeholder interviews that the responsibilities between managers and designers were very fluid and involved constant communication, and because of that, they varied a lot from one group to another. So it's a little hard to have one set workflow throughout all of the groups.

A

The insight that we got from this, I think, is that the DRIs and the people responsible commonly depend on the type of work involved. The managers will typically work on more strategic-level actionable insights, whereas the designers will often work more on product change insights. But this behavior is not always consistent across all teams, and it could depend on the skill set and the ownership of projects for each team.

A

To close, if you look at the graph on the right, that's measured in months, and you can see that product change issues take on average about 5 months to close, whereas exploration needed issues were closer to about 8 months from start to closing. Interestingly, though, issues which had been assigned took slightly longer to close than unassigned issues, but unassigned issues are closed at half the rate of assigned issues. So basically, if the issue is unassigned, it is very likely to remain open.

A

We saw that actionable insights are worked on by folding them into the product workflow, by assessing their priorities and scheduling them in the roadmap, which makes sense, as that is how product manager workflows typically are. We saw that the priorities in that workflow were typically assessed on the impact the change would have on users, the effort or time it would take to close, and whether the issue was a product change or exploration needed type of issue.

A

The challenges in getting to those end states for actionable insights that we saw from the qualitative research were largely cross-group or cross-stage communication issues in trying to get those large workflows or certain cross-group work done. We also heard that trouble finding past insights was a problem.

A

If there was a question that wasn't fully understood, even trying to find what research had been done, to see if there was more to understand at all, could become a barrier. The time to recruit participants was also a barrier, but that's something we expected because that's pretty common among all research. And lastly, we also saw that not knowing what success looked like was sometimes an issue. If there wasn't a clear end state or a clear goal in mind, then the research or the work could sometimes just continue on and on.

A

So we can separate those issues and increase the visibility of certain areas that might create abandoned issues. For instance, like we saw with the data, the unassigned issues were very likely to remain open. So we can create the reporting bot to surface those unassigned issues in a way that the stakeholders can see pretty easily. That way they can be reminded of them, or know that the work still should be done, or have a way to help fold them into the workflow.

A

And that's it. Thank you very much for watching and stay tuned. If you want to know anything more, feel free to check out our exploration in Sisense and the insights that we found in there. You can also look at the different issues and the KRs involved in each of the steps, as well as the current work in creating that reporting bot for the actionable insights.