
From YouTube: UX Showcase: SIG and alert management


A

Before I dive into the designs, I wanted to take a step back just to do a quick reminder of who the Monitor Health group is. Officially, the Health group helps DevOps teams respond to, triage, and fix both errors and alerts for the systems and apps they maintain, which is kind of a mouthful. In plain English, that basically means that we tell people when their stuff is broken, and then we help them figure out what's gone wrong, so they can get everything working again as quickly as possible.

A

As a group, we planned to introduce three new product categories in 2020: Status Page, Alert Management, and Digital Experience Management, which will include synthetic monitoring. That's a whole lot of new stuff, which is why I included a little video of me panicked-breathing. In Q1, we tackled the MVC for Status Page, which we talked a little bit about in a previous showcase, so I won't talk about it today.

A

That's already live, but next on our list of things to tackle is Alert Management. Alerts already did exist in GitLab, sort of. You could make an alert from the charts in the metrics dashboard, and you could also receive alerts from Prometheus. Previously, for any alerts we received, we just automatically created issues for them, but we didn't have a single place where people could see the alerts they had set and received, or fine-tune them at all. There wasn't any cohesive workflow when it came to receiving, investigating, and resolving alerts within GitLab.

A

To do any of these things, people had to utilize other tools, which meant that customers were having to triage alerts outside of GitLab and then come back to GitLab to make the required code changes to address the alert. This meant tons of context switching for users in what's already a super stressful situation, which is obviously not ideal in any way.

A

It's also a really great opportunity for us to improve an existing pain point for our users. So we really needed to come up with a real, comprehensive plan for how alerts would be displayed, investigated, and resolved from within GitLab. In order to figure out how this could work and look, we wanted to better understand our customers' workflows with regard to alerts. We wanted to know things like: What tools do they use? What does their workflow look like? Would it make sense to have this workflow exist entirely within GitLab?

A

We quickly realized that people in large enterprise companies likely already have tools that they use for alerts and for incident management more generally. It's unlikely, therefore, that we'd be able to get these sorts of customers to switch over to GitLab and use a tool that's in its early stages of development. It'd be too risky for them. But we also have customers that fall under the small-to-medium-sized business category, SMB customers, and these companies likely have less well-defined processes and are less invested in specific tools.

A

They might not even have any tools available to them at all currently. With customers falling in this group, we might have more of an opportunity to get them to try out our product offerings and give us feedback on them. So if we can access SMB customers, we could potentially get them to use our features even at early stages of development. With that in mind, we decided to recruit a group of SMB customers to form a special interest group, or SIG.

A

Our hope is that we could work with them to build an alerts platform that they could start using for alerts and for incidents immediately. In our minds, this group could serve two purposes: it would give us an easy way to get quick feedback on feature proposals, and it would give us a test group of real-life customers who could theoretically start dogfooding our new alert features, like, right away, which is super exciting.

So we went about recruiting the SIG. Our SIG has five members, and after we recruited them, we started having conversations with them about how they worked. We asked them things like: How are you notified that something's gone wrong? Where are your alerts sent, if you're even getting alerts? What tools are you using for your alerts currently? Or maybe you're not even using any tools?

A

So in talking to the SIG, we learned a ton about their current workflows, and we identified a real gap in them. Our SMB customers were trying to link together a bunch of different tools to surface and triage their alerts, but it wasn't really working that great. In particular, we heard that investigating alerts in multiple tools is disorienting and overwhelming, that much of the work they were doing was manual and repetitive, and that they're experiencing alert fatigue, stress, and low morale.

A

More importantly, we realized that by bringing the entire alert triage workflow into GitLab, we could help these customers address these problems.

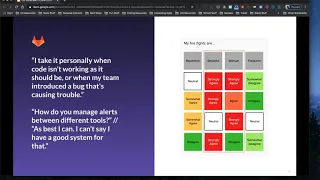

On the screen, you can see a couple of quotations from users that communicate their stresses around alerts and their struggles with managing multiple tools. Like the one on the top left: "I take it personally when code isn't working as it should be, or when my team introduced a bug that's causing trouble. It feels very personally stressful." And the quotation right below: when we asked, "How do you manage alerts between different tools?", the person we were talking to actually just started laughing and said, "I'm kind of just doing the best I can, but I can't say I have a good system for that."

And then, in the sort of diagram on the right, you can see that, overwhelmingly, when we talked to people, they described their firefights, which is the process of responding to and triaging alerts and managing incidents, as stressful and manual. So this obviously presents us with a really great opportunity to improve the experience for our users.

Since we had a decent sense of what the current workflow was for SMB customers, we started a design discovery issue to ideate about the new workflow within GitLab.

A

I started by doing some initial investigation into other tools available for helping people manage their alerts. I initially reviewed BigPanda, Moogsoft, which I hope I'm pronouncing correctly, Opsgenie Alerts, and Splunk Alert Manager, and there's a link to the competitive analysis in the slides if you want to check it out after. I then went through and mapped out the different features each tool had.

Looking at those products, the features and pages that already existed in GitLab around alerts, and our SIG's current workflows, I mapped out a workflow for alerts within GitLab. I also broke this workflow down into several different iterations, so we could start thinking about what sort of functionality could be present in an MVC and what could come later.

A

Now, after doing all that initial investigation, we came up with a basic list and detail view for alerts within GitLab, which you can see on the screen now. This new functionality would allow users to display their alerts from various monitoring tools directly within GitLab. They could search through the alerts, triage them, and escalate them to an incident issue if they required additional investigation.

A

So we went back to the SIG to show them the designs we created, and I sort of want to take a moment to call out how amazing it was to actually be able to do this, and to do so with so little effort. We didn't have to recruit people or sort through a large list of respondents to find the right people to talk to. We already had people we could talk to, and this was a huge time saver for us. We knew who we could ask.

In terms of solution validation, we specifically wanted to see if what we were proposing aligned with their needs and if it would be something that they could use within their organization. We had a ton of great feedback, and overwhelmingly, our SIG said these features would be a useful addition to their workflow. They also gave us a ton of suggestions for how to continue building our alert offering in the future.

A

So where are we at now, and what are our next steps? Alert Management is now in GitLab, which is very exciting. A good chunk of Alert Management minimal was released in 13.0, and many more features will be introduced in 13.1 and 13.2. We're continuing to get feedback from the SIG. Our plan is to meet with them synchronously monthly and to start pinging them asynchronously on issues when we have small things that we need feedback on. We also held our first simulation day on June 4th with our SRE teams.

A

Now that it's live. So, that's all I had. Definitely let me know if you have questions, either in Slack or after this call. I'd love to chat more. I recently chatted with Andy about how alerts and vulnerabilities are pretty similar and how we can make sure that the experiences are aligned, and I imagine there are other opportunities like that with other designers. So if you see things in here that are similar to what you're working on, I'd certainly love to chat. But that's all I have. Are there any questions, or should I hand?