►

From YouTube: IETF100-TCPM-20171116-0930

Description

TCPM meeting session at IETF100

2017/11/16 0930

https://datatracker.ietf.org/meeting/100/proceedings/

A

A

Like

this,

okay,

okay,

let's

get

started

hello,

so

welcome.

This

is

TC

p.m.

working

group

meeting.

Just

you

make

sure

you

are

in

the

right

room.

Okay,

my

name

is

Yoshi

from

in

Ishida

one

of

the

co-chair

of

the

TCP

and

working

group,

and

this

is

also

Michael

show

and

a

coach

of

disappearing

working

group

and

unfortunately,

Michael

Jackson

cannot

come

to

this

meeting,

but

we

expect

he

will

come

next

meeting.

A

Okay,

this

isn't

user,

not

oh

well,

I

think

you

are

in

the

middle

with

it.

Basically,

what

you

say

in

this

meeting

will

be

governed

by

is

not

well,

and

if

you

want

to

take

a

look

kaveri,

you

please

go

to

the

ITF

webpage

or

you

can

google

it

on

the

and

then

you

can

find

a

notable

very

easily

and

not

taking,

and

I

appreciate

no

gory

to

taking

of

note

taking

and

the

media

thanks

for

each

other

and

before

we

start

a

meeting.

A

B

A

Draft

name

so

that

Machias

can

track

the

status

of

the

you

addressed

okay

moving

on

so

this

is

the

agenda

of

that

state.

Meeting

at

first

cheers

we'll

talk

about

working

group

status,

and

after

this

we

have

for

presentation

for

working

group

items.

First

name

we'll

talk

about

our

the

back

off

drug

after

this

Marcel

and

Bob

will

talk

about

generalized

ECN

and

after

this

Bob

and

the

media

will

talk

about

accurate,

easy

and

draft.

And

after

this

are

you

tuned

we'll

talk

about

TCP

rap

and

after

this

a

we

will

talk.

A

A

Okay,

and

we

appreciate

your

cooperation

to

process

these

draft,

and

then

we

have

several

active

working

group

document.

So

first

one

is

alternative

back

off

easy

and

draft,

and

this

is

one

of

the

today's

agenda

and

in

the

last

meeting

we

cuts

several

reviewers

Ferranti

reviewers

for

this

draft

and

then

we

started

receiving

the

reviews

from

the

reviewers

and

which

pretty

nice

so

I

think

this

Trust

is

getting

very

much

worth,

and

so

after

you

know,

we

received

another

one.

A

My

review

was

something

and

then

we

can

think

and

device

a

draft

and

then,

if

everything

goes

smoothly,

I

think

we

can

proceed

to

the

working

group

last

call.

So

our

expectation

is,

you

know,

within

a

couple

of

within

a

month

or

so

we

maybe

able

to

proceed

to

working

with

rusticles.

That

is

our

expectation

and

the

next

one

is

accurate,

EEG

and

draft.

This

is

also

one

of

the

agenda

of

that

today

and

this

drug

has

been

updated

very

recently,

so

I

think

you

know,

let's

discuss

in

the

meeting

and

generalized

issue

yen.

A

So

we

have

to

make

sure

each

drug

do

not

contradict

each

other,

so

we

try

to

make

some.

No.

We

have

the

chance

of

starting

discussion

with

on

this

drought

and

then

try

to

make

some

proposal

to

the

authors

of

some

that

text

that

do

not

cause

any

conflict

with

our

document.

That's

about

you

know

right

thinking

right

now

so

and

then,

if

that

doesn't

work

well,

I

think

we

might

think

about

other

brands.

But

right

now

we

try

to

focus

on

to

make

some

proposal

to

the

authors

so

which

can

also

agree.

A

Well,

we

did

working

a

working

group

adoption

code

and

then,

as

a

result,

I

this

draft

has

been

adopted

and

so

right

now

we

are

trying

to

prepare

the

form

of

this

draft.

And

but

there

are

some

discussion

between

the

author

and

then

Cheers

and

then

the

chairs

know

made

some

type

of

proposal

and

then

I

think

they

also,

you

know

agreeing

some

one

of

my

our

proposal,

so

our

expectation

is,

they

also

will

update

trust

based

on

our

suggestion.

A

That's

the

current

status,

any

questions

so

far,

okay,

moving

on

and

then

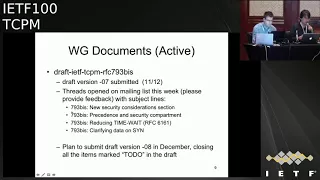

793

B's

draft.

Unfortunately

ways

general

come

to

this

meeting,

but

we

got

some

text

from

him.

So

I

will

talk

about

it

on

behalf

of

the

author,

so

as

you

might

notice,

their

3d

button

has

been

submitted

about

four

days

ago

and

there

are

several

open

discussion

item

in

this

draft,

so

always

open

this

start

opening

the

thread

on

the

mailing

list.

A

With

the

following

entitles,

so

the

straight

will:

if

you

try

to

open

for

straight

I,

think

and

then

after

you

know,

we

settle

down

or

opening

open

discussion

items.

New

budget

will

be

submitted

in

pin

saber

closing

all

the

item

mark

to

do

in

the

draft.

That's

the

plan,

okay,

any

questions

so

far,

okay,

moving

on

and

then

finally,

I

would

like

to

talk

about

MP

TCP

combat

a

draft

so

right

now

chairs

are

having

a

plan

to

run

adoption

call

on

MPT

CP

combated

draft.

A

So

to

be

clear,

we

are

going

to

run

adoption

core

and

then

we're

an

adoption

call

on

this

draft

and

the

if

it's

accepted

well

supported

and

participate

combat.

The

draft

will

be

a

working

group

item

of

booty,

CPM

working

group.

That's

the

prong!

So

really

briefly

describe

the

background

of

this

draft,

and

so

this

draft

first

name

is

a

draft

of

phenomena

and

PTC

become

butter.

This

drug

has

be

presented

in

the

rust,

MPD

CP

meeting

and

then

this

draft

is

mainly

designed

for

to

assist

the

deployment

or

multipass

CCP.

A

But

if

you

look

at

this

throat

carefree,

if

you

look

at

the

architecture

in

the

draft,

this

draft

has

a

certain

generic

architecture

so

to

be

clear,

I,

don't

say

very

January,

quite

I

dont

say

razer

generic!

No,

because

this

will

be

a

part

of

the

decision,

so

it

has

some

generic

mechanism

so

and

if

the

architecture

in

this

raft

is

a

generic

winner,

we

can

apply

this

technology

to

other

tcp

extensions

such

as

this

think.

Maybe

we

can

apply

the

same

technology

to

this

thing.

That's

maybe

very

interesting

use

case.

A

So

if

this

draft

can

have

a

generic

mechanism,

it

will

barely

make

sense

to

have

this

draft

in

TCP

and

working

group,

and

so,

if

you

are

interested,

please

see

or

via

the

email

which

he

sent

very

recently

several

days

ago,

like

contains

lots

of

you

know,

useful

information

to

understand

that

or

his

draft

is

the

intention.

So

please

check

and

then

right

now

you

know

we

cannot

discuss

the

technical

detail

of

this

draft

but

know

we

would

like

to

share

some

opinions

on

learning

adoption

code

in

TCP

and

working

group.

A

C

Me

I

could

have

its

own

right.

Yeah

me

a

cool

event,

M

s

ad

for

both

of

the

groups,

so

I

would

just

like

to

repeat

once

again

the

request

for

reviewing

this

document.

Even

so,

the

use

case

is

MPT

CP

and

that's

a

use

case

that

makes

most

sense.

I.

Think

what's

needed

here

is

like

general,

TCP

expertise

which

is

in

this

room.

So

please

have

a

look.

D

D

So

Dave

back

so

to

be

clear,

I

would

not

I

would

not

try

to

make

this

draft

applicable

to

teeth,

applicable,

TCP,

Inc

I

think

that's

a

I.

Basically,

if

you

make

us

write

a

factor,

a

little

TCP

Inc

you're

describing

a

man-in-the-middle

attack

as

a

feature.

I

think

and

that's

not

good

I

prefer

just

leave.

Looked

at

each

being

left,

probably

left

left

alone.

C

A

A

E

E

Okay,

so

let's

try

it

so.

This

draft

is

basically

an

update

to

the

to

the

alternative

back

off

with

EC

n.

So

next,

please

in

the

last

ITF

three

persons

were

assigned

to

do

the

review.

The

Valen

volunteer

to

do

the

review,

so

we've

got

feedback

from

Roland

and

then

we've

got

also

a

couple

of

days

ago.

We

got

feedback

from

Lawrence

and

and

thank

you

to

them

thanks

to

them,

and-

and

we

have

incorporated

these

these

feedbacks

that

we

received

from

them.

E

So

we

have

submitted

two

revisions

over

the

course

of

the

time

between

the

last

idea.

From

now

the

revision

0-3

was

submitted

a

while

ago

and

and

I

just

submitted

the

revision

zero

for

last

night.

So

what

has

been

basically

happening?

We

have

tried

to

incorporate

all

of

the

comments

from

from

the

two

reviewers.

E

Basically,

we've

got

more

consistent

terminology

and

definitions,

so

there

were

things

that

were

basically

a

bit

more

hand

wavy,

and

then

we

changed

them

and

we

make

them

to

be

more

precise

and-

and

we

define

the

stuff

that

that,

were

there

more

precision

in

the

language,

we

distinguish,

for

example,

between

what

is

a

buffer

and

what

is

the

cue,

because

both

term

terms

were

used

in

the

draft

interchangeably.

So

so

we

provided

a

more

accurate

wording

there

also

everywhere,

where

we

we,

for

example,

I

mentioned

loss.

Now

we

wrote

as

an

inferred

packet

loss.

E

So

we

also

provided

some

clarifications.

One

of

these

color,

if

occasions

were

on

the

safety

of

the

mechanism.

We

explained

that

the

worst

case

scenario,

basically

with

with

alternative

back

of

it

ecn,

is

no

worse

than

the

standard

la

space

DCP

because

anyways,

let's

say

you

basically

don't

react

to

to

ecn

signals.

E

So

if

there

is

a

future

draft,

for

example,

and

specifying

a

CT

piece

condition,

control

behavior

then,

is

up

to

that

draft,

whether

to

adopt

the

alternative

back

off

with

e

CN,

with

with

their

specific,

better

value

that

they

might

want

to

use

or

any

other

type

of

congestion

control.

So

the

scope

of

this

draft

is

basically

limited

to

standard

tcp.

The

references

are

updated.

There

is

no

change

to

the

to

the

technical

content

of

the

draft.

Yes,

please

next.

F

It

was

gory,

fairest

and

I

was

commenting

as

TS

VW

GTI,

rather

than

co-author

and

I.

Think

that's

the

right

thing

to

do

with

things

like

SCTP

and

other

transports.

We

can't

make

a

normative

requirement

on

future

specs,

but

we

can

say

that

this

is

the

right

process.

Whether

we've

got

quite

the

right

words,

we

can

tweak

them

a

little

bit

if

we

need

to,

but

I

I

think

that's

the

right

thing

to

do

from

TSV

WGS

point

of

view.

F

E

Sure

so

so

I

think

we

agree

on

that,

so

this

is

basically

limited

to

to

what

is

in

3168,

and

so

the

references

are

updated.

There's

no

change

to

the

technical

content.

Next,

please,

and

so

we

have,

as

we

mentioned

previously

in

Prague.

We

have

submitted

a

patch

to

the

freebsd

upstream

over

the

course

of

this

time.

This

patch

was

being

reviewed.

Now

the

review

is

complete.

E

G

E

G

D

G

G

E

G

F

Clarification

here

and

okay,

but

the

fundamental

basis

for

the

aid

work-

and

this

go

affair-

has

does

an

individual

off

this

time

and

was

that

if

you

see

loss

ever

you

use

the

standard

TCP

Bally's

for

better

this,

the

change

is

only

when

you

see

an

ECCN

mark.

If

you

ever

see

lost

you

behave

as

a

normal

standard.

Tcp

would

repair

I.

G

Think,

like

yeah,

but

my

question

is

even

if

you

get

an

easy

n

mark,

so

this

is

deviating

from

what

the

original

RFC

said.

That

is,

there

is

a

potential

that

you

might

get

more

easy

on

marks

or

even

a

packet

loss

in

the

very

next

oddity.

So

my

question

is:

should

we

be

more

conservative

in

such

a

case?

G

C

E

C

E

F

E

Only

change

that

we

make

is

that

whenever

in

the

previous

like

like

everything

else,

is

the

same

in

RFC's,

the

only

thing

is

that

that

whenever

you

receive

an

e

CN

mark,

basically

you

go

by

0.8,

so

so

either.

Normally

you

would

react

once

per

our

TT.

So

so,

if

that's

the

case,

we

didn't

touch

anything

else.

So

then,

then

it

should

happen

that

you

would

go

down

by

this

by

0.8

and

but

then,

of

course,

you

may

subsequently

receive

a

packet

loss

in

the

next

hour.

E

E

D

H

F

Think

we

agree

it's

time

to

go

and

read.

The

code

normally

see

a

nun

for

a

because

it's

a

separate

bit

of

cord

surprisingly,

and

let's

just

check

we

Abe

does

not

Abe

does

not

change

it.

That's

all

I

can

guarantee.

But

the

question

is

what

happens

and

I?

Don't

know

what

happens

when

you

get

them

the

two

bits

of

cord

interacting

one

Maricopa.

I

J

J

Essentially

it

said

sin

recover

on

in

response

to

receiving

EC

and

Mark

and

does

not

apply

an

additional

back

off

if

a

loss

were

to

show

up

in

the

period.

So

we

can

alter

that

if

we

think

that's

appropriate

but

as

currently

implemented,

it

would

essentially

do

nothing

until

the

semi

recover

was

reached

and

then,

if

a

loss

showed

up

after

that,

we'd

have

a

50%

back

off.

But

at

moment

it's

only

80%

yep.

D

Sir

david

black

wax

rep

did

this

say

is

that

I

was

third

reviewer

I

finally

got

the

review

done

just

in

time

ie

in

the

last

12

hours.

It's

on

the

list.

It's

almost

entirely

to

troller

the

only

thing

that

might

have

a

little

bit

technical

content

to

it

is.

The

draft

has

a

very

strong

focus

on

recent

modern

atriums

like

pi

and

coddled.

However,

this

is

also

going

to

be

deployed

with

whatever

eight

terms

are

out

there

and

a

little

more

discussion

of

what

else

is

or

might

be

out

there.

B

A

K

Hi

Marcelo

annular,

here

speaking

for

the

authors

of

the

EC

n

plus

plus

draft

next

slide.

So

in

the

last

meeting

we

basically

have

one

open

issue

left.

That

is

what

to

do

with

respect

to

Purex.

So

there

is

a

strong

motivation

for

actually

ECT

marking

pirogues,

because

if

we

don't,

when

there

is

there

are

episodes

of

congestion,

Purex

will

be

more

likely

to

be

dropped

which

will

result

in

performance

impairments.

So

it

would

be

useful

to

mark

them

to

avoid

this

type

of

drugs.

K

K

The

issue

that

was

noted

in

the

previous

meeting

is

that,

if

we

in

the

case

that

there

is

an

endpoint,

that

is

only

sending

pure

acts

and

you

actually

respond

to

that

congestion-

you

have

a

bias,

a

biased

response,

because

the

only

thing

you

can

actually

have

is

response

of

your

acts

having

congestion

and

eventually

you

reduce

and

reduce

more

the

window,

and

you

have

no

opportunity

to

increase

the

window.

On

the

other

hand,

not

responding

to

congestion

to

a

congestion

signal

that

you

actually

receive

seems

like

a

like

the

wrong

thing

to

do

so.

I

K

Okay,

so

so

the

question,

the

question

is:

how

do

how

do

we

handle

the

situation

right?

So

after

some

discussion,

the

proposal

that

that

has

been

done

in

the

mailing

list

is

a

next

slide.

Please

is

to

only

ECT

marks

pure

acts

in

the

case

where

a

key

CN

has

been

enabled

in

the

connection.

The

reason

for

this

is

because

easier

occasion

actually

provides

a

more

accurate

information

and

reports.

K

The

number

of

packs

that

of

packets

that

have

been

see

mark

but,

and

also

a

number

of

bytes

that

encounter

the

congestion

right,

so

that

allows

the

congestion

control

algorithm

in

the

sender

to

actually

be

able

to

respond

to

a

match,

a

finer-grained

signal,

because

in

the

particular

case

of

products,

the

number

of

pack

of

bytes

that

encounter

congestion

will

be

zero

right

because

the

pure

drug

doesn't

contain

date

right.

So

this

is

basically

the

the

proposed

way

forward.

We

have

mentioned

this

in

the

mailing

list.

There

was

no

negative

feedback.

K

L

M

That

is

not

only

about

Asian

and

in

those

cases,

I've

seen

pretty

aggressive

proposals.

So

I'm

really

wondering

whether

we

need

to

deal

with

all

corner

easy

on

cases

correctly.

So

to

me,

I

think

there

could

be

ways

how

we

could

better

decouple

that

draft

here

and

the

equities.

You

want

there's

a

risk,

probably

that

some

information

gets

lost.

Some

odds

may

be

get

lost.

The

question

is:

why

would

we

do

we

really

have

to

care

about

that?

M

If

other

transports

are

doing

completely

other

things

and

as

I

said,

I

think

this

draft

could

be

simplified.

If

we

make

clear

how

this

could

be

deployed

independent

of

the

collision

and

how

it

could

be

deployed

with

segregation,

but

I

personally

would

suggest

to

clearly

define

a

mode

where

accurate

easy

and

it's

not

the

newest.

So.

K

M

M

K

K

Okay,

so

the

the

current

draft

clearly

defines

what

to

do

when

ecn

is

negotiated

and

when

is

not

right,

there

is

a

table

that

actually

describe

which

situations

are.

This

is

perfectly

describing

the

draft

I

think

is

I

mean,

maybe

it

maybe

there

is

some

editorial

effort

needs

to

be

done

to

convey

it

more

clearly,

I'm

happy

with

that,

but

the

information

inside

there.

K

The

best

approach

is

to

actually

have

two

documents:

back-to-back

one

with

accuracy

and

or

another

and

another

week,

without

accurate,

CC

and

or

to

have

the

integrated

version.

That's

an

editorial

discussion

that

we

can

have

and

I'm

happy

to

do

that

right.

The

first

thing

that

I

would

like

is

to

close

this

issue

and

then

talk

about

the

more

editorial

part.

So

the

thing

is

we

have

the

discussion

about

how

much

to

mix

this

with

accurate

CCN,

especially

for

the

scene

right.

The

scene

is

much

more

problematic

than.

K

M

M

M

K

Think

you

have

the

person

who

is

going

to

answer

that

right

now,

right

behind

you

all

right,

I

mean

the

thing

is

I

and

I

agree

with

her.

She

has

made

a

very

clear

statement.

That

is

an

architectural

statement

that

you

should

not

avoid

responding

to

explicit

congestion

signals

that

you

receive

it's

a

bad

practice

I

actually

subscribe.

What

what?

What

her?

What

her

statement

right

now.

M

K

M

F

B

K

But

but

the

problem

I

disagree

that

yeah

I

disagree:

that

accuracy

supporting

accurate

CCM

is

a

is

an

is:

what

complicates

the

problem?

The

problem

is

complicated

in

some

specific

cases

like

in

particularly

in

this

case,

all

the

bias

about

the

negative

feedback.

This

is

not

a

problem

of

accurate

ecn.

This

is

a

problem

right

accuracy

and

actually

provides

you

more

information

that

allows

you

to

go

to

a

better

solution

to

the

problem.

I.

F

Could

easy

an

is

fine

and

we

have

to

do

updates

to

implement

this.

We

have

to

update

student

accurate

easy,

and

could

we

roll

the

2

into

1?

Yes,

ok,

then

we

don't

have

to

just

focus

on

the

case.

We're

actually

easy

and

is

not

enabled

because

we

don't

have

to

do

the

stuff,

but

let's

just

think

about

so

the

equity

CN

bit,

but

myriad

Mira's

going

to

talk

about

that.

So

I'm

I

was

only

rippling

up

the

same

thing

and

saying

I'm

at

the

same

level.

F

I

understand

what

you're

saying

you're

saying

that

we

need

to

drop

all

the

part

that

are

not

accurate,

again

I'm,

saying

that

might

be

a

possibility.

This

document

should

be

easier

to

read.

It

is,

it

is

a

thing

we

want

people

to

do,

and

it's

quite

simple

so

either

we

say

just

use

equity,

CN

and

then

do

this,

because

that

makes

it

all

this

simple

as

one

update

I'm.

Ok

with

that,

if

that's

the

right

thing

or

somehow,

we

make

it

simpler,

because

the

current

text

is

hard

to

pick

out.

F

N

C

You

live

in

so

I,

agree.

I,

think

it

would

make

the

document

simpler

if

we

would

only

use

accurate,

easy

n

if

you

only

discuss

so

it's

a

little

bit

the

opposite

from

what

Micah

says,

but

I

think

that's

actually

right

thing

to

do

because,

first

of

all,

you

want

to

deploy

accurate,

easy

and

we

want

to

deploy

accurate,

easy

and

as

a

replacement,

to

easy

end.

It's

a

change.

So

we

can

do

these

two

changes

together

and

it

makes

it

much

more

safe.

So.

K

C

K

K

K

K

No,

you

don't

know

you

don't

really

need

that

good

I

agree,

I

mean

as

of

today.

What

the

draft

says

is

the

solution

for

the

for

feeding

back

the

con.

The

congestion

signal

to

the

to

the

sender

is

using

accurate

EC

end

because

we

don't

want

to

waste

more

baits

and

blah

blah

blah.

There

is

a

bunch

of

arguments

in

there

all

right.

You

could

try

to

do

something

else.

I

agree.

C

That's

more.

The

second

point

like

do

we

actually

care

about

inserting

being

marked

like

there

is

an

indication

of

congestion.

Do

we

need

to

react

to

it?

Is

it

important?

That's

your

question

right

say

the

difference

between

easy

and

losses.

That's

an

explicit

signal,

so

it

there

is

congestion

for

poor

loss.

C

You

never

know

for

sure

you

don't

know

why

the

sun-god

loss-

you

don't

know

if

it's

congestion,

you

don't

know

what

it

means

you,

but

you

also

have

a

timeout

right

if

you're

soon

as

gone,

you

have

to

wait

for

other,

so

it's

kind

of

a

penalty.

If

you

want

to

say

it,

but

it's

also

kind

of

there

is

some

time

where

you

don't

sense

anything

on

Lincoln,

so

the

condition

might

resolve.

In

this

case,

you

get

an

immediate

signal

that

there

is

congestion,

so

just

not

reacting

to

it

is

just

just

ignoring.

M

K

Let

me

suggest

a

way

forward,

so

I

understand.

We

all

agree

that

if

there

is

accurate

CN,

what

isn't

written

in

the

draft

is

the

right

thing

to

do

so.

What

I

would

suggest

is,

let's

limit

the

scope

of

this

draft

to

a

cure

edition.

If

someone

wants

to

do

this

for

something

other

than

a

cure,

it's

Ian,

please

write

a

draft

and

do

it

right.

K

L

K

But

because

we

also

say

that,

for

instance,

doesn't

matter

if

any

of

the

endpoints

support

accurate,

a

support,

accurate

is

yen,

I,

don't

know

retransmission,

you

should

mark

it

anyway

right

and

that

doesn't

matter

whether

they

are

or

they

are

not.

Accurate

is

yen

I.

So,

basically,

what

I'm

saying

is:

let's

drop

all

that

which

is

pretty

minor

part

of

the

text.

Just

keep

the

case

that

it's

accurate

is

yen.

The

sender

is

accurate

is

yet

right.

K

We

deal

with

all

the

compatibility

of

the

other

endpoint,

of

course,

but

but

we

drop

all

the

part

of

both

endpoints

being

non.

Accurate

is

yet

right,

the

sender

being

not

accurate

is

yet

and

we

we

explicitly

said

that

this

only

covers

the

case

of

the

sender.

Being

accurate

is

yen,

cable

and

we

don't

talk

and-

and

we

don't

mention

I

mean

and

how

the

behavior

is

for

an

endpoint

that

is

not

accurate,

is

yen

is

unspecified,

and

whoever

wants

to

specify

that

can

do

it.

L

But

that

would

mean

then

this

second

response

to

Michael's

point

I'm,

reasonably

okay,

with

being

a

bit

more

liberal

about

responding

to

loss.

You

know,

rather

than

treating

each

one

as

sort

of

each

loss

is

sacred

and

and

all

as

to

it.

However,

very

much

different

on

the

scene,

absolutely

no

non

response

to

loss

on

the

scene.

M

Well

again,

my

comment

is

on

the

East

End

mark

on

this

or

Anderson.

Not

the

loss.

I

mean

fault

for

the

loss.

We

all

agree

so,

but

the

key

question

is

of

why

to

care

about

an

ECCN

mark

on

the

scene,

and

my

point

is

I.

Don't

really

see

the

point:

let's

I

mean

if,

if

the,

if

the

router

is

really

congested,

she

will

drop

the

soon

and

we're

done

I.

L

K

K

F

F

K

So

this

is

a

presentation

regarding

some

measurements

that

we

have

been

doing

regarding

this

experiment,

so

this

is

accepted

to

to

appear

in

nitrile

communication

magazine

soon

so,

but

you

I

will

have

a

link

at

the

end

of

the

slides,

with

a

version

that

you

can

download

in

case

you're

interested

next

slide,

please.

So

what

we

wanted

is

to

see

if

we

can

actually

do

some

measurement

to

get

some

initial

data

for

this

experiment

and

then

in

particular

we're

looking

for

is

to

find

out

whether

Sen

market,

TCP

control,

packets,

barracks

are

treat

or

NP.

K

Then

a

Sen

mark

data,

packets,

sorry,

so,

basically,

what

we

have

done

is

we

started

a

measurement

campaign

to

see

how

easy

n

mark

or

ECT

mark

data

packets

are

treated

by

the

network

to

get

some

ground

troops

on

some

baseline

and

then

measure

how

TCP

control

packets

that

contain

ecn

marks

are

treated

by

the

network

and

compare

the

results

right.

So

that's

basically

what

we

did

next

slide.

So

in

order

to

do

this,

we

use

two

measurement

platforms.

K

A

one

is

planetlab

that

I

guess

you

all

know,

so

we

use

54

planetlab

nodes

in

25

years

and

22

countries

and

the

other

platform

that

we

use

that

you

may

not

be

so

familiar

with

is

a

platform

called

monroe

that

basically

is

a

platform

that

allows

you

to

measurements

in

mobile

networks

right.

So

this,

basically,

what

the

mantra

platform

has

is

monroe

nodes.

K

So

what

we

try,

these

all

these

type

of

packets

with

all

possible

ecn,

flag,

combinations

right,

including

both

in

the

TCP

and

IP

header,

both

for

rating

for

based

a

basic

EC,

any

CN,

+

ec

+,

+

+

+,

accurate,

is

here

so

the

tool

that

we

use

is

we

use

trace

box.

That

is

I,

not

sure

if

you're

familiar

with

this

tool.

This

is

a

tool

that

the

people

from

UCL

Leuven

has

developed.

That

is

basically

like

a

trace

route

that

increments,

that

it

L.

K

So

we

executed

this

bit

from

the

mantra

node

to

Alexa,

100k

servers

and

from

the

mantra

note

to

our

own

servers.

In

this

case,

we

actually

control

both

endpoints

and

we

can

play

with,

for

instance,

the

cynic

next

slide.

Please,

with

the

emergent

campaign

between

January

and

May

of

this

year,

we

use

to

port

80

and

443

with

the

25

million

measurement

blahblahblah,

okay

next.

So

what

have

found

so?

K

So

that

basically

means

you

send

ECT

zero

one

mark

pocket

and

what

happens

is

that

the

first

hope

of

the

mobile

providers

actually

clears

the

ACN

fields

and

turns

it

into

non

ECT

right,

because

we

found

seven

out

of

11,

which

seems

quite

a

bit.

We

find

we

tried

other

few

mobile

carriers.

We

actually

try

seven

more

and

we

actually

found

that

three

of

them

have

the

same

behavior.

Yes,

Emily.

K

K

O

K

P

Sure

sure

Apple,

if

I'm

sound

the

question

correctly

you're

asking

you're,

speculating

that

maybe

the

mobile

phone

handset

itself

is

clearing

its

own

ect

base

before

sends

the

packet.

But

if

it

succeeded

in

four

out

of

eleven

mobile

providers

that

suggests

that

the

handset

is

not

clearing

its

own

right.

K

K

Okay,

so

so

we

found

out

so

we

also

find

that

is

one

mobile

providers

only

clears

it

in

the

port

80

and

not

in

the

four

or

443.

This

I

mean

this

is

a

case

of

a

proxy

and,

as

I

said,

we

didn't

find

evidence

of

clearing

the

the

ecnv

any

the

ACN

fields

in

the

traffic,

from

the

servers

to

the

client

and

for

those

who

didn't

clear,

we

find

evidence

of

little

bit

of

clearing

0.53

percent,

which

is

somehow

similar

with

the

current

literature

on

the

fixed

networks

which

in

this

case

for

the

planet.

K

A

K

K

So

basically

that

means

that

if

you

get

clear,

you

could

get

clear

both

in

contour

pockets

and

in

data

packets.

If

you

don't

get

clear,

you

don't

get

clear

for

none

of

them,

so

basically

it

doesn't

seem

to

matter

the

the

boxes

that

do

this

doesn't

seem

to

look

whether

it

is

a

senora

control

packet

or

a

data

packets.

Basically,

everything

works

the

same

right.

K

K

So

I

tested

in

so

we

use

with

54

planetlab

nodes

that

are

fix

it

and

that's

what

we

use

so

another

result

is

61%

of

the

Alexa

top

500

K

support,

CC

n

and

only

three

point:

51

percent

support,

DC

n

plus.

However,

interestingly

enough,

none

of

them

respond

in

the

way

it

is

defined

by

RC

fifty

to

sixty

two.

Fifty

five

sixty

two

right

so

we'd

have

not

been

able

to

detect

any

server.

That

is

actually

implemented

what

it's

in

there.

K

So,

actually,

a

fifty

five

sixty

two

defines

a

very

weird

behavior

right

so

basically

says:

if

you

receive

a

mark

in

the

Indus

in

a

city

mark

in

the

sea,

not

correct

me

if

I'm

getting

this

one

and

I

think

what

you

should

do

is

start

over

again,

the

the

the

exchange

correct.

So

you

need

to

you

need

to

resend

this

that

the

scene

without

and

and

see

if

you

can

actually

get

the

scene

as

in

act

back

without

without

the

mark

right.

That's

that's

what

it

says.

F

L

But

then,

while

it

was

going

through

the

ietf,

so

there

was

an

academic

paper

written

on

that

and

all

this

expense

was

done

like

that.

Then,

when

it

went

through

the

ITF,

there

was

this

that

it

had

to

be

exactly

like

a

loss

and

and

so

they

they

tried

to

make

it

like

two

round-trip

times

worth

of,

of

delay

like

by

bouncing

the

packet

back

off

the

client

and

back

again

and-

and

it

was

all

a

bit

weird.

K

G

G

K

G

K

Okay,

next

slide,

please

so

you

here

you

have

the

I

mean

you

have

the

the

URL

to

the

paper,

but

I

will

actually

send

respondent

to

make

the

question

in

the

mailing

list.

So

final

remarks,

it

seems

forth

from

for

this.

That

Sen

plus

plus,

is

a

safe,

a

CC

n.

So

probably

that

that's

a

good

news,

there

is

more

work

to

be

done

in

order

to

get

ACN

through,

and

the

question

is

whether

it

is

relevant

that

we

found

evidence

of

EC

and

clearing.

K

Does

it

matter,

I

mean

it's

not

that

it's

dropping

the

packet,

it's

just

that

they

are

cleaning

the

thing

and

the

problem

is,

if

you

have

something

like

a

more

a

multiple

Hopf

path

behind

the

the

same

access

right

that

are

actually

using

easy

n

for

something

that

signal

will

get

lost

right.

So

any

potential

benefits

that

you

may

have

obtained

like

smaller

buffers,

I

mean

smaller

queues

or

anything

like

that

that

you

have

implemented

before

the

our

cellular

access

like,

for

instance,

you

have

our

router.

K

Q

P

P

Just

remember

that

yeah

ecn

has

failed

bad

news,

frisian

not

safe

to

use,

and

if

that's

the

message

you

want

to

send,

which

is

like

forget

about

easy

and

it's

not

worth

trying,

that's

what

your

headline

says

and

I'm

concerned

when

I'm

talking

to

my

management

chain

at

Apple

right,

if

my

VP

says

yeah

I

saw

something

about

ACN

is

not

safe.

11

out

7

out

of

11

mobile

operators

drop

your

connections.

If

you

try

to

use

ezn-

and

he

won't

remember

where

he

read

it-

he

won't

know

the

facts.

He'll

just

have

internalized

this.

P

This

idea

that

the

ACN

is

bad

so

that

that's

my

feedback,

if

we

act

I,

would

call

this

title.

Fantastic

news

for

ecn

completely

safe

to

use

100%

at

the

time

causes

no

broken

connections,

and

if

those

mobile

operators

want

to

upgrade

their

networks

to

suck

less

in

the

future,

then

that's

even

better

news

right.

So

I

would

cast

this

as

good

news,

not

not

catastrophe

and

calamity

for

easy

Anna.

G

Microsoft

I

would

actually

concur

with

what

Stewart

said.

This

is

actually

good

news,

because

I'm

going

to

present

later

about

TCP,

fast,

open

and

here

the

failure

mode

is

just

removing

the

bits

right.

It's

actually

very

safe

to

deploy,

and

you

know

make

incremental

progress

on

versus

some

of

the

other

things

we

are

trying

to

do

with

TCP,

so

I

would

also

classify.

This

is

actually

very

good

news.

L

Right,

I

guess:

I'll,

respond

on

behalf

first

pose

and

say

well

think

about

that

yep

I'll

respond

on

behalf

of

us,

the

co-authors

and

say

well

think

about

those

comments.

Yeah

right,

accurate,

ECN,

there's

the

co-authors

and

we've

got

changes

in

affiliations.

Next

slide

right,

I'm

not

going

to

go

through

the

recaps.

This

is

just

in

the

slide

pack

for

those

that

are

new

here.

Maybe

so

they

can

read

these

slides

in

their

own

time.

Next

again,

a

recap

of

the

solution

which

I'm

not

going

to

go

through

next

all

right.

L

So

this

follows

on

from

the

previous

talk

and

I

asked

for

the

agenda

to

be

changed

so

that

they

would

follow

on

in

in

order

to

respond

to

the

measurements

that

we've

just

heard

about.

We

have

altered

the

accurate

ecn

draft

we've

actually

taken

stuff.

That

was

in

an

appendix

waiting

to

see

where

the

measurement

studies

would

would

find

problems

like

clearing

a

CN

and

we've

put

it

into

the

main

body

of

the

document.

Now,

because

there

are

those

opportunities,

they're,

not

problems,

right

and

or

issues

and.

L

L

We

get

full

information

on

what

the

network

has

done

to

the

IP

layer,

at

least

on

those

first

two

packets

right

now

we

could

get

that

information

later

in

the

connection

by

a

bit

of

heuristics

and

I'm

watching

what's

going

on,

but

particularly

because

it's

an

experimental

protocol.

We

thought,

let's

measure

it

accurately

and

report

those

measurements

as

part

of

the

experiment,

and

it

also

makes

the

code

easier,

because

you've

got

a

clear

indication

of

what's

going

on

rather

than

having

to

do

heuristics

right.

L

L

That

that

I

think

summarizes

everything

on

this

slide,

how

other

than

if

you,

if

you

find

that

the

IP

ACN

field

has

been

mangled.

When

you

get

the

feedback

back

of

what

you

sent,

then

you

disable

it

for

your

half

connection,

but

you

can't

there's

no

mechanism

to

tell

the

other

end.

So

it's

a

half

connection

thing.

L

So

there

are

three

ec

n

bits

used

in

the

TCP

header

and

we

now

use

four

different

combinations

of

those

to

feed

back

all

the

possible

values-

and

this

is

this-

is

during

the

three-way

handshake,

and

so

it

was

already

defined

as

being

a

code

point

based

feedback.

At

that

point,

it's

only

one

that

when

they

think

it

started

after

you've

not

got

Sydney,

it

was

one

on

the

packets,

but

you

start

just

having

a

counter

counting

the

number

see

marks.

L

So

this

is

how

we

do

this

feedback

and

and

actually

the

encoding

we

use

had

to

fit

around

other

uses

of

them

in

other

versions

of

ecn,

but

essentially

you've

got.

If

you

look

at

the

numbers

in

binary,

they

would

be

two

three

four

and

six

for

the

for

the

three

values:

err

on

the

field,

so

it's

reasonably

easy

to

do

in

the

code.

We

view

the

same

in

the

in

the

other

direction.

L

L

Right,

so

that

was

the

main

change

to

the

draft.

The

the

next

main

change

was

to

write

in

the

summary

of

the

discussion

that

we

had

at

the

last

ITF

on

change

triggered

acts.

Now

there

was

concerned

that

this

might

not

be

possible

with

offload

hardware.

What

we've

done

is

we've

changed

it

to

a

must

from

a

shoot

to

a

must

with

the

get

out

clause,

because

we

wanted

to

ensure

the

receiver

could

rely

on

this

behavior.

But,

given

its

experimental,

we

have

described

a

possible

experiment

that

people

could

do

there,

isn't

the

mast.

L

You

know

that

so

it

allows

people

who

are

having

this

problem

to

it

gives

them

a

hint

that

we're

happy

to

see

experimentation

on

this,

and

then

it

says

because

of

this

problem

with

needing

to

rely

on

the

behavior

that

it's

then

their

responsibility

to

deal

with

the

other

end

understanding

this

behavior.

You

know,

that's

it's

an

experiment,

right

fun,

but

it's

their

responsibility.

That's

the

fact

right.

L

L

You

can't

use

offload

hardware

to

do

change

trigger

tax

during

slow

start

and

then

the

gotough

off

load

off

load

once

slow

start

has

ended

right,

and

that

means

that

most

of

the

performance

of

most

of

the

server's

most

of

the

time

he's

using

offload-

and

it's

just

for

the

slow-

starts

that

you're

not

and

I'm,

told

that

there's

very

similar

code

to

that

already

in

place.

Next

right,

we

did

some

minor

edits

as

well.

L

L

We

added

that

deployment

itself

should

be

an

experimental

success

criterion.

We

added

that

you

don't

use

the

congestion

window

reduced

signal,

so

it

just

in

case

anyone

thought

you

still

had

to

use

that

and

we

also

made

sure

that

we

defined

the

behaviors

of

all

unused

values

so

that

it

would

be

clear

in

in

the

future.

What

the

behavior

would

be

for

forward

compatibility

Michael,

yes,.

M

So

this

is

again

Michael

speaking

from

the

floor.

So

thanks

a

lot

for

addressing

my

comment

on

the

experiment.

Success

criteria,

unfortunately,

I

don't

like

the

new

grading

leader,

so

I

think

I

owe

you

something

on

the

mailing

list.

You

still

have

wording

in

the

document

that

refers

to

the

TCP

I'm

working,

poop

and

I.

Think

this

doesn't

address

my

comment

from

the

last

working

group,

but

instead

of

saying

it

on

the

mic,

I

probably

have

to

write

it

and

or

maybe

I

have

to

produce

a

maternity

wear

at

their

place

yeah.

M

So,

regarding

the

the

last

point,

one

very

naive

question

so

I

mean

this

is

an

experiment

so

assume

that

we

move

this

to

standards

track

at

some

point

in

time

and

assume

that

we

have

to

change

something.

How

would

the

negotiate

the

standards

track

version

right?

I

think

this

is

something

I

mean

you

don't

have

to

necessarily

document

this

in

an

experimental

thing,

but

it's

something

that,

in

my

opinion,

would

variant

thinking.

So

how

would

the

standards

track

version

of

this

be

negotiated

right?

What

one

one.

L

M

As

I

said

in

general,

I

think

it's

require

thinking,

I

I

haven't

thought

about

it,

and

but

I

actually

would

look

for

an

abstract

version

of

this

at

some

point

in

time

and

this

why?

It

is

this:

why

even

in

the

experimental

design,

you

should

foresee

that,

in

my

opinion,

how

the

upgrade

parts

to

standard

strike

could

look

like

under

the

possible

assumption

that

the

protocol

has

to

change

so.

C

Me

I

think:

that's

not

a

thing

that

is

any

specific

to

accurate.

Ecn

like

there

are

a

lot

of

cases

where

you

have

the

experimental

thing

and

then

some

things

you

can't

change

anymore

or

like

it

wouldn't

work

anymore.

So,

like

the

things

that

we

just

described

in

the

experiment,

are

things

that

we

can

just

change

without

no

gate

negotiation,

for

example,

not

using

change

triggered

X.

You

just

change

your

fermentation.

So

that's

the