
From YouTube: IETF101-T2TRG-20180322-1550

Description

T2TRG meeting session at IETF101

2018/03/22 1550

https://datatracker.ietf.org/meeting/101/proceedings/

A

B

B

D

B

Then Michael Koster will report from the hackathon that we had on semantic interoperability. After that, John will talk about something that sooner or later we will all have to deal with: machine learning. While he will not talk much about how that is going to influence our work, I think we can all start imagining that.

B

B

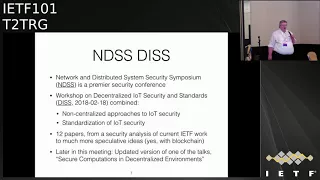

[...] decentralized [unclear], an earlier version of which was presented at the NDSS DISS workshop, and we thought it was interesting enough [unclear]. Before the end, we will have a quick look at meeting planning, mostly at the meeting we will have with W3C and OCF.

Okay, so just to remind people what the research group is about: who here is new to the research group and has not been to a previous meeting? Okay, so let me quickly say why we are here.

B

B

So we are looking at issues in this area, in particular where there are opportunities for IETF standardization or, I should add, for cooperation with other SDOs (standards developing organizations) to make them happen. We don't usually do radios here; we sometimes have to look at the radios if they behave strangely, and there will be a slide on that in a minute. But we start at the IP adaptation layer and end in the hearts and minds of end users. So this [...]

B

B

The IoT security considerations draft has entered IRSG last call. We had a workshop at NDSS on Decentralized IoT Security and Standards (DISS); I quickly reported on it [unclear]. We also had an interesting side meeting. You all know these stunts about how many people fit into a phone box. Of course, these don't work anymore because [unclear], so we just used a meeting room for the same thing and had a side meeting with 29 people in the Waterloo room, which is about the size of [unclear].

B

B

B

F

B

[...] IoT security, and the other that IoT security often will not be based on centralized approaches but will require decentralized or non-centralized approaches. We had 12 papers, which are still in the process of being published, so it will take until about mid-April until you can download them.

B

D

B

[...] so that would be later in this meeting. So what are we going to do next? We will be in Prague with OCF and W3C. Where are things? OCF is the Open Connectivity Foundation, the organization that resulted from the merger of OIC, UPnP, and the AllSeen Alliance. They are doing very interesting standardization work, and they are a heavy user of IETF technologies. So it's a good thing to talk to them, and we will talk in particular about requirements [unclear].

B

B

[...] what we understand is interesting, and there are also interesting local people there that we may want to contact. We certainly will do more, and we share related things there. There have been some discussions about distributed [unclear], which we will follow up on a little bit. That was the theme we had at this workshop, but [unclear].

G

[Name unclear] from Nokia. In terms of these joint meetings, it may also be interesting to have a joint call or joint sessions with [unclear]. In July we have a [unclear] fest in Korea, which is run by TTA, a standardization and testing organization in Korea. It may be interesting to showcase some of this there, and also to participate in the [unclear] fest there.

B

B

G

B

But yes, it is definitely one of the organizations that we have been cooperating with in the IETF. For instance, one of the CoAP plugtests was hosted by [unclear], which was really useful because in the process we learned much more about the [unclear] specification, which was pretty young at the time. So it certainly makes sense to deepen that.

B

B

The other document that is nearing completion is called RESTful Design for IoT. It essentially takes the REST architectural style, which underlies the HTTP protocol and much of the Web, and explains how to use it for Internet of Things applications, because there are some things that may just not be very intuitive.

B

H

Okay, so, as Carsten said, we tried to fit 30-some people into a room dimensioned for about 10 or 12. Other than that, the meeting went very well. There is a draft about some of these issues, but the main idea is this: when we deploy administratively independent IoT applications side by side (something I put in my apartment, something you deploy in your apartment, with no administrative relationship between them), they lose packets because of radio interference, and that by itself is not an IETF problem.

H

But what some recent research results suggest is that the protocols induce timing behaviors and sensitivity to the loss of certain kinds of packets that exacerbate these problems, and we can get fairly severe performance degradation at the protocol level. That is something we need to think about at this layer. Right now there don't seem to be good tools (simulators, testbeds) that help us evaluate these sorts of multi-network scenarios, with independent networks sharing the channel.

H

Just some of the things we discussed: this potentially affects a number of IETF protocols, anything that touches these MAC layers, such as 6lo and 6TiSCH, plus some other things that came up in the discussion. This is obviously somewhere on the IETF/IEEE border. Clearly, the MAC has to do most of the work here, but with administratively independent networks the MAC has only limited ability to isolate the networks in the link sense.

H

So I think there is a role for IETF protocols: to try to understand their performance in this environment and potentially to make some improvements. Some folks also raised various possibilities for active or explicit coordination via higher-level protocols. So there is a draft, and I encourage people to take an interest in it.

I

Gabriel Montenegro. Yeah, I was wondering if you could elaborate a bit more on this research because, for example, in Europe the ISM bands require listen-before-talk (LBT). That's not required in the US and in some other places, but that's why 3GPP, when it worked on coexistence problems in bands typically used by Wi-Fi, assumed LBT and built on top of that. So my question is: is the research you're pointing at US-specific?
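To make the listen-before-talk behavior Gabriel refers to concrete, here is a minimal sketch. It is a hypothetical slotted model for illustration only: the `channel_busy` callback, the backoff window, and the attempt limit are assumptions, not parameters taken from any regulation or from the draft under discussion.

```python
import random
from typing import Callable, Optional


def listen_before_talk(
    channel_busy: Callable[[int], bool],
    max_attempts: int = 5,
    max_backoff_slots: int = 8,
    rng: Optional[random.Random] = None,
) -> Optional[int]:
    """Return the time slot in which a node may transmit, or None if it gives up.

    Hypothetical slotted model: before each attempt the node performs a
    clear channel assessment (CCA) by calling channel_busy(slot). If the
    channel is busy it defers for a random backoff and listens again.
    """
    rng = rng or random.Random()
    slot = 0
    for _ in range(max_attempts):
        if not channel_busy(slot):
            return slot  # channel clear: talk now
        # Busy: wait 1..max_backoff_slots slots before sensing again.
        slot += 1 + rng.randrange(max_backoff_slots)
    return None  # channel stayed busy on every attempt


# Example: another, independent network occupies the channel for the first 3 slots.
print(listen_before_talk(lambda s: s < 3, max_attempts=10, rng=random.Random(1)))
```

The random backoff is what lets independent networks share a channel without coordination; the research discussed here concerns how higher-layer protocol timing interacts with such MAC behavior.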

I

B

I

J

Juan Carlos Zúñiga, Sigfox. Yeah, just responding to Gabriel, because I was in the discussion: you're right, all those points were definitely part of the discussion. There are multiple regulatory domains. If we focus on, let's say, the ISM bands, there are different rules per country on how you operate in these ISM bands. Not all these protocols follow the same MAC, for instance. Here we talk about IEEE, but that's just part of it. Some protocols may not follow the same MAC, as long as they comply with the regulation.

J

They may be legal to operate in this country but not legal to operate in another country. However, at least one take I got from the discussion is that there's still some potential to share knowledge at higher layers, and that's why there's a pointer to the white spaces protocol, PAWS, which was defined earlier and works through the Internet.

J

You can get some knowledge that could be useful for your area: maybe which bands, sorry, which operators are using them, whether private or public networks, standardized protocols or ones from different entities. But still there could be some interesting information, like, I don't know, the level of noise in this region: something that doesn't necessarily touch on privacy, but useful information that could help me operate better.

H

The document really focuses on laying out the challenges systematically; it points to a couple of research results, both mine and other people's. The main point of the document is my recommendation that in T2TRG, or via some other mechanism, we try to understand what we would like to have as practices for evaluating how IETF protocols (you know, 6lo, 6TiSCH, ROLL) perform in this environment. We need to have a better understanding. That's really what the document says.

C

Apologies for following up. Even before we make recommendations about protocols, I think the first step (and I agree with you on understanding) is that, as part of getting that understanding, it might make sense and be useful to have some sort of data collection and analysis methodology, so that we can better characterize [unclear].

H

K

H

H

B

L

E

WISHI, semantic and hypermedia interoperability. We've actually had eight calls since IETF 100, because we've had a lot of activity in planning this and our participation in the hackathon, and we've covered a diverse set of topics around semantics and hypermedia. I'm probably not going to read through everything here, but essentially we looked at it sort of top to bottom. What is the nature of abstract semantic types? We want to describe things in a way that is protocol-neutral. How do we do semantic [...]

E

How do we connect with RDF ontologies and other datasets that are out there, like third-party vocabularies (you know, QUDT, SOSA/SSN) that are fairly hard to use, and how do we make them easier to use? How do we integrate semantic metadata into the system? Where does it go, who uses it, and how do they use it? Then there is the idea of a layered semantic stack: there may be different semantics at different layers of the stack, at different protocol levels.

E

E

As for the goals for this one (and we're probably going to have a series of these): this is the second hackathon, but the first hands-on one, where we brought hardware and tried to make it do things, bringing diverse things together to interoperate. We wanted to start focusing on where you would start in an application workflow: doing discovery, semantic-based discovery, and software adaptation to different data models and different protocols. That's generally the theme for this one.

E

We started with really simple application scenarios, like: can a signal input such as a motion sensor turn a light on and off, just for very basic orchestration. The implementations that people brought were W3C Web of Things, which we'll talk more about; a YANG/CoMI implementation; a CoRAL-based implementation that tried to interoperate; an OMA Lightweight M2M client and server; and various ad hoc device APIs and protocols, just a basic REST API like you might see from various connected devices that don't follow any particular standard but still use HTTP and still have a REST-ish sort of API.

We looked at the connected home and automotive domains, and the idea is: how do we make them work together? What technology do we use? We started using some of the work from the W3C Web of Things group, because a lot of the goals and objectives are very similar, so we brought that technology, and we will talk more about that.

E

We started integrating CoMI with Thing Descriptions, to show that you don't really need any particular kind of protocol, and to see what range of protocols can be connected. We brought a Thing Directory and stored Thing Descriptions in it. I'll show more about what that whole thing looked like. At the end, we had the Web of Things implementation communicating with these different device implementations and different protocols.

E

So the Thing Description was what we used as the common metadata format. It's basically a media type with RDF. It describes the abstract interactions with things (read the temperature, lock the door, that sort of thing), and it also binds those abstract interactions to concrete instances of things that implement them: things like the data shape, payload structure, data types, and transfer layer.
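A minimal sketch of such a description, written as a Python dictionary. The field names loosely follow the later W3C WoT Thing Description 1.0 vocabulary (`properties`, `actions`, `forms`, `href`, `op`); the 2018 drafts used at this hackathon differed in detail, and the device, addresses, and `@type` strings are hypothetical.

```python
import json

# Hypothetical TD-style document: abstract interactions ("temperature",
# "lock") are kept apart from the concrete bindings listed in "forms".
thing_description = {
    "@context": "https://www.w3.org/2019/wot/td/v1",
    "title": "ExampleSensor",
    "properties": {
        "temperature": {
            "@type": "TemperatureSensing",  # hypothetical semantic annotation
            "type": "number",               # data type / payload shape
            "readOnly": True,
            "forms": [{
                "href": "coap://[2001:db8::1]/3303/0/5700",
                "contentType": "application/json",
                "op": ["readproperty"],
            }],
        }
    },
    "actions": {
        "lock": {
            "@type": "LockDoor",            # hypothetical semantic annotation
            "forms": [{
                "href": "http://192.0.2.10/door/lock",
                "op": ["invokeaction"],
            }],
        }
    },
}


def find_form(td: dict, kind: str, name: str, op: str) -> dict:
    """Return the first form of td[kind][name] that supports operation op."""
    for form in td[kind][name]["forms"]:
        if op in form.get("op", []):
            return form
    raise LookupError(f"no form for {op} on {kind}/{name}")


form = find_form(thing_description, "properties", "temperature", "readproperty")
print(form["href"])  # coap://[2001:db8::1]/3303/0/5700
payload = json.dumps(thing_description)  # what a device might register
```

The point of the split is that an application can ask for "the temperature" abstractly and only then look up which protocol, address, and media type to use.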

E

Here's a little picture that illustrates this. The description has both semantic annotation (high-level information models and capabilities, i.e., what you want to do in an abstract sense) and protocol bindings, which address the device-specific problem of how you do it: OCF, Lightweight M2M, IPSO, dotdot. These are all based on CoAP, but they use CoAP quite differently. There's no easy way to use them all in a single application without doing some adaptation, and that's what we do with the Thing Description: it's a format for adaptation between these different protocols.

The Thing Directory is what we used in the hackathon as a central registry of these Thing Descriptions. Basically, each device or connected thing registers its Thing Description in the Thing Directory, and then applications discover and find them using semantic queries. The Thing Directory uses the same protocol as the CoRE Resource Directory.

E

E

So applications discover them. After that, the application might interact directly with the connected thing, or through some intermediary, like a proxy or a pub/sub broker. If it is a pub/sub broker, some coordination is needed to register in the Thing Directory the address of the broker and how to communicate with it, as opposed to the thing itself. Some of these, like my entries, for example, and some others, registered both.
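The register-then-discover flow can be illustrated with a toy in-memory directory. This is only a sketch: `ThingDirectory`, its methods, and the `@type`-based matching are hypothetical stand-ins for the semantic queries a real Thing Directory supports, and it does not implement the CoRE Resource Directory protocol. The second entry shows a thing reached through a pub/sub broker, so its form carries the broker address rather than the thing's own.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ThingDirectory:
    """Toy registry of Thing Descriptions keyed by a thing identifier."""
    entries: Dict[str, dict] = field(default_factory=dict)

    def register(self, thing_id: str, td: dict) -> None:
        # A device (or an adapter acting for it) registers its description.
        self.entries[thing_id] = td

    def query(self, semantic_type: str) -> List[str]:
        # Stand-in for a semantic query: things with a property of that type.
        return sorted(
            thing_id
            for thing_id, td in self.entries.items()
            if any(p.get("@type") == semantic_type
                   for p in td.get("properties", {}).values())
        )


directory = ThingDirectory()
directory.register("urn:example:lamp-1", {
    "properties": {"on": {"@type": "OnOff",
                          "forms": [{"href": "coap://lamp.example/on"}]}}})
# Reached via a (hypothetical) broker: the form points at broker and topic.
directory.register("urn:example:motion-1", {
    "properties": {"motion": {"@type": "MotionSensing",
                              "forms": [{"href": "mqtt://broker.example/home/motion"}]}}})

print(directory.query("MotionSensing"))  # ['urn:example:motion-1']
```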

E

E

Here are some examples, showing with the same format how the YANG implementation worked: it registers its TD with the Thing Directory, and then the servient, which is where the application runs, can access the device. The same goes for an HTTP device that might have some ad hoc protocol: it still registers its TD, and the application then discovers it via the Thing Directory and interacts with the device.

M

N

But which one is which? I'm trying to make sense of this. I understand that the Thing Directory is the directory you explained previously, but which one now corresponds to the application, to the connected thing, and to the intermediaries on that slide? Here I see the Thing Directory. Which one is the client that wants to access data from the IoT device? Is the CoMI device the IoT device, and is the application the W3C Web of Things servient?

E

This is acting as a proxy for the device. The servient is essentially an application proxy for the device: it interprets the transfer-layer instructions from the Thing Description that it got from the Thing Directory, and it uses those to access the device. In the W3C servient software we already have the protocol adaptation for the transfer layer, so we use that as the application proxy.

N

Sorry, I think you have to update the slides to bring them in line with the architecture picture, because one entity is apparently missing, and some arrows may be missing as well: on a previous slide the IoT device registered itself at the directory directly. In this case it doesn't, and there's no application. So [...]

N

E

Here's a better picture that shows a lot of these things. For example, here the Lightweight M2M device goes through the Lightweight M2M server, and this discovery adapter discovers things on the Lightweight M2M server and registers them with the Thing Directory. So that shows the whole loop that wasn't shown on the other slide. I take it, that's a good point; we should fix those up and maybe put something like this a little more up front. It works the same way for the HTTP [unclear].

E

So here it shows that the device isn't registering this itself. The device is doing Lightweight M2M to the Lightweight M2M server. It only needs these tiny, short URIs and numeric identifiers. Then this piece goes in, expands them, and knows how to create all the semantic annotation based on its knowledge of Lightweight M2M numeric codes, IPSO Smart Objects, and what have you. Then the servient can say, "I'm looking for a temperature sensor," find this one, and from the transfer-layer instructions know how to interact with the device.

The same goes for the HTTP and CoMI devices, but we didn't show the whole loop there. The HTTP device was a Raspberry Pi; it can basically go directly and register its things with the Thing Directory. We also had some cloud services running on a DigitalOcean instance that had enough CPU and resources to both expose the devices and create the semantic descriptions and register them.
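The discovery adapter's job, expanding compact Lightweight M2M paths into semantically annotated descriptions, can be sketched as a lookup table. The object and resource IDs are from the IPSO Smart Object registry (3303 Temperature, 3311 Light Control, 5700 Sensor Value, 5850 On/Off); the `@type` strings, the `expand` function, and the proxy URI are hypothetical and not the hackathon's actual mapping.

```python
from typing import Dict, Tuple

# (object ID, resource ID) from the IPSO/LwM2M registry mapped to a
# property name and a hypothetical semantic type.
SEMANTICS: Dict[Tuple[int, int], Tuple[str, str]] = {
    (3303, 5700): ("temperature", "TemperatureSensing"),  # Temperature / Sensor Value
    (3311, 5850): ("onOff", "SwitchOnOff"),               # Light Control / On/Off
}


def expand(proxy_base: str, path: str) -> dict:
    """Expand a compact LwM2M path such as '/3303/0/5700' into a TD-style property."""
    object_id, instance_id, resource_id = (int(p) for p in path.strip("/").split("/"))
    try:
        name, semantic_type = SEMANTICS[(object_id, resource_id)]
    except KeyError:
        raise KeyError(f"no semantic mapping for {path}") from None
    return {
        "name": f"{name}{instance_id}",
        "@type": semantic_type,
        # Hypothetical proxy URI through which the application reaches the device.
        "forms": [{"href": proxy_base.rstrip("/") + path}],
    }


print(expand("coap://proxy.example", "/3303/0/5700"))
```

The constrained device never carries the annotation; all the semantic knowledge lives in the adapter's table, which is why it can run on a more capable host.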

E

So, next steps, where we want to go: look at semantic annotation and discovery as in the Thing Directory case, but using CoRE RD and Link Format. How can we semantically annotate web links with the same information, to enable the same sort of discovery with a general web linking format? We want to look at different end-device protocols and data models, so things like BACnet [unclear] are part of this. And more automation of the semantic queries, because we're mapping [unclear] to URIs.
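Semantic discovery over CoRE Link Format (RFC 6690) might amount to filtering links by their `rt` (resource type) attribute, roughly what a resource directory lookup with an `rt=` query does. The parser below is a simplified sketch: it handles the common `<uri>;attr=value` shape and assumes quoted values contain no commas or semicolons; the resource types shown are hypothetical.

```python
from typing import Dict, List, Tuple

Link = Tuple[str, Dict[str, str]]


def parse_link_format(payload: str) -> List[Link]:
    """Parse a simplified application/link-format payload."""
    links: List[Link] = []
    for entry in payload.split(","):
        parts = entry.strip().split(";")
        target = parts[0].strip()
        if not (target.startswith("<") and target.endswith(">")):
            raise ValueError(f"malformed link: {entry!r}")
        attrs: Dict[str, str] = {}
        for param in parts[1:]:
            key, _, value = param.partition("=")
            attrs[key.strip()] = value.strip().strip('"')
        links.append((target[1:-1], attrs))
    return links


def find_by_rt(links: List[Link], rt: str) -> List[str]:
    # rt may hold several space-separated resource types.
    return [uri for uri, attrs in links if rt in attrs.get("rt", "").split()]


payload = ('</sensors/temp>;rt="temperature-c";if="sensor",'
           '</light/1>;rt="light onoff";if="actuator"')
print(find_by_rt(parse_link_format(payload), "onoff"))  # ['/light/1']
```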

N

When you did the mapping from, let's say, a [unclear] term to a Thing Description from the W3C, or from others like CoMI, how was that possible without losing some of the semantics? That has always been a challenge: we have all these different activities, we are piling on more and more standards activities with different descriptions, and then it becomes very difficult to map them to each other without losing some of the semantics. So how was that? What was the experience?

E

Starting out, we're reusing simple devices such as [unclear] and temperature sensors, so there's not much semantics and not any semantic loss. In fact, the semantic annotations pretty much have a way of describing everything you want to do with these sorts of things, and that's all extensible.

N

N

One of the challenges, or one of the observations we took from the IOTSI workshop, was that you can't just look at the data model; you also have to look at the interaction model, because the two are somehow closely coupled. So it would be interesting to go from the basic example to something a little more sophisticated, such as actuators or other more complicated things, and see how well that works. I would be curious what the result is.

P

Matthias Kovatsch, Siemens. To add a bit to what Michael said: there are different tracks going on. In the W3C Web of Things, we look exactly at these interaction models, which partly describe what protocols are used: basically, what are the abstract operations you perform there. We found this kind of narrow waist of properties, actions, and events that covers, from a programming-model perspective, basically what you can do. We can read out some state, and we can write to some state if this is allowed in the particular ecosystem.

P

We can call these actions, which often correspond to something that goes on for a certain time; others might be RPC-based, so that is covered too. And we can cover whenever a device can asynchronously send something to the client: those are events. So we have this interaction model in the Thing Description. There's a formal model behind the description document itself, and it is then extended a bit by the binding templates that Michael was mentioning.
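The "narrow waist" described here (read or write a property, invoke an action, receive an event) can be expressed as a small programming interface. A minimal sketch follows; `InMemoryThing` and the lamp example are hypothetical and only mirror the shape of the idea, not the actual W3C WoT Scripting API.

```python
from typing import Any, Callable, Dict, Iterable, List


class InMemoryThing:
    """Hypothetical thing offering the three interaction types."""

    def __init__(self, properties: Dict[str, Any], writable: Iterable[str] = ()):
        self._properties = dict(properties)
        self._writable = set(writable)
        self._actions: Dict[str, Callable[..., Any]] = {}
        self._listeners: Dict[str, List[Callable[[Any], None]]] = {}

    # Properties: read out state; write state only where it is allowed.
    def read_property(self, name: str) -> Any:
        return self._properties[name]

    def write_property(self, name: str, value: Any) -> None:
        if name not in self._writable:
            raise PermissionError(f"property {name!r} is read-only")
        self._properties[name] = value

    # Actions: operations that may run for a while or behave like RPC.
    def add_action(self, name: str, handler: Callable[..., Any]) -> None:
        self._actions[name] = handler

    def invoke_action(self, name: str, *args: Any) -> Any:
        return self._actions[name](*args)

    # Events: the device pushes data to subscribed clients asynchronously
    # (simulated here by direct callbacks).
    def subscribe_event(self, name: str, callback: Callable[[Any], None]) -> None:
        self._listeners.setdefault(name, []).append(callback)

    def emit_event(self, name: str, data: Any) -> None:
        for callback in self._listeners.get(name, []):
            callback(data)


lamp = InMemoryThing({"on": False, "model": "X1"}, writable={"on"})
lamp.add_action("toggle", lambda: lamp.write_property("on", not lamp.read_property("on")))
received: List[Any] = []
lamp.subscribe_event("overheat", received.append)
lamp.invoke_action("toggle")
lamp.emit_event("overheat", 85)
print(lamp.read_property("on"), received)  # True [85]
```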

P

In this example, the common protocol was CoAP, so we are able to describe the methods that can be used with CoAP. We can describe any specific options used for the protocol, and this is noted down uniformly in the Thing Description. So we can describe the differences there.
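A binding template essentially maps each abstract operation onto concrete protocol details. Here is a minimal sketch for CoAP, assuming the conventional defaults (read → GET, write → PUT, invoke → POST, subscribe to an event → GET with the Observe option, number 6, set to 0 per RFC 7641). The `method` override key in the form and the `CoapRequest` type are hypothetical, not the WoT CoAP binding vocabulary.

```python
from dataclasses import dataclass, field
from typing import Dict

# Conventional default mapping of abstract operations to CoAP methods.
DEFAULT_METHODS: Dict[str, str] = {
    "readproperty": "GET",
    "writeproperty": "PUT",
    "invokeaction": "POST",
    "subscribeevent": "GET",  # plus the Observe option, see below
}
OBSERVE = 6  # CoAP Observe option number (RFC 7641)


@dataclass
class CoapRequest:
    """Hypothetical request description handed to a CoAP client library."""
    method: str
    uri: str
    options: Dict[int, int] = field(default_factory=dict)


def build_request(form: dict, op: str) -> CoapRequest:
    """Combine a TD form with an abstract operation into a CoAP request."""
    if op not in form.get("op", [op]):
        raise ValueError(f"form does not support {op}")
    # A method given explicitly in the form (hypothetical key) wins.
    method = form.get("method", DEFAULT_METHODS[op])
    options: Dict[int, int] = {}
    if op == "subscribeevent":
        options[OBSERVE] = 0  # Observe=0: register for notifications
    return CoapRequest(method, form["href"], options)


print(build_request({"href": "coap://lamp.example/on", "op": ["writeproperty"]},
                    "writeproperty"))
# CoapRequest(method='PUT', uri='coap://lamp.example/on', options={})
```

Ecosystems that use CoAP differently (for example, POST instead of PUT for writes) only need a different form entry, not a different application.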

N

I'm most interested in reading up on some of that experience from when you did that. There are always some new standards efforts; I don't necessarily care about them. I want to learn whether the things you tried to accomplish actually worked out. Where are the problems? And maybe you've written those descriptions up already? Yes?

P

P

Okay, the part explicitly about that would probably be a report from this hackathon, which was just last weekend, and I don't know how much time you had, but this is definitely something we're also working on. There is another plugfest happening at the W3C face-to-face this upcoming weekend; again, people from different organizations will come, and there will be some reporting on that. The last thing I wanted to add, because you asked about the semantic level:

P

This is something that goes on top, and it is something we're currently collecting: looking into what kinds of semantic interactions we have in the different ecosystems, actually listing them, and creating a vocabulary. But there we basically need a good overview of what is out there. Maybe the activity to point to is iot.schema.org, where the interactions that are easy to identify, because they occur across all these ecosystems, are being collected in some kind of uniform format that can apply across these different ecosystems.

O

[unclear] I'll quickly comment on that. With the LwM2M experience, what we did so far was a very simple thing, so for sure we're going to learn more on that, on how well IPSO maps. Right now, what the prototype does is query the management server for what kinds of devices are registered there and which URIs are exposed, and it maps them to a Thing Description. The next step is to do semantic mapping and then figure out how much [unclear] vocabulary [unclear].

C

These are really good next steps, and I really appreciate where you're going. But before you get to more complex devices, I think it might also be useful to expand out with a more complex model of the existing devices, that is to say, as Hannes has worked with ACE and other things.

C

One of the challenges when I talk to my partners (by the way, I'm Eliot, I work at Cisco) is that the moment we add in an authorization model, even with the simplest devices, it gets pretty complex, and I think that's a great area for discovery. I realize I'm saying this without saying "I'm going to do it," okay? But we're going through this now for very simple cases and they're turning out very complex. If you've been trying to get something to market, you know it's very hard.

E

That's a really good point. What we're working on in W3C, and probably need to look at here as well, is how we create security models for individual endpoints, so you can authorize and learn how to securely communicate with them. We see that as a next step, not far behind. So yes: if you're using access control entries, how do you generate the correct token, subject, or role for RBAC? We're trying to work all of those things into the next phase of design. Thank you for that.

M

E

M

E

M

E

Okay, so basically these have all been covered. The one thing I wanted to say is that, as we look at more diverse models such as an automotive model, we find the automotive model is very dependent on being able to understand the feature of interest that the thing is bound to. If I have a door switch, the automotive model really requires us to say which door it is and that it's a door, and that's something we don't have in the iot.schema.org [unclear] definition model.

E

D

[Share] your screen with us. We can see it. Hello! Yeah, so now for something completely different: I'm going to talk about a research project being developed in my team right now that allows us to do deep learning on microcontrollers, which I think is one of the most exciting projects we can be doing right now.

D

So we actually went to do fieldwork [unclear], a six-day event. During that time there was IoT training: how do you build devices? Where do you store the data? What do you do with it? After that we actually went into the field: we went to dairy farms and chicken farms, capturing data that was published in open datasets that a variety of universities then used.

D

A university in Kenya was actually using the samples that we generated during that workshop. We're returning in May, very happy about it, for Data Science Africa: we're going to do eight days, a hundred students, three fieldwork projects, and again actually build something and get this technology into students' hands. Yes, very cool.

D

But taking one step back: a lot of the value of machine learning is added if we can run all these algorithms not in a big data center but on very small edge nodes. One of the cool projects we've seen in the last year is sensor fusion, where you combine a number of relatively cheap sensors and get data points from all of them. Individually these data points are not worth much; you can't infer much from them. But if you combine a lot of cheap sensors, you create what the team at [unclear] called a "super sensor". There's a really good video at the link in the slides, which were published afterwards. They detect all kinds of things that happen in the house, like a faucet turning on, from a very small group of sensors, and that's all because of training on a variety of different sensors at the same time.
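Feature-level fusion of several cheap sensors can be illustrated with a deliberately tiny classifier. This sketch concatenates readings from hypothetical sensors into one feature vector and labels events with a nearest-centroid rule; it is a stand-in for the trained models in the "super sensor" work, not a reproduction of it, and the sensor names and numbers are invented.

```python
import math
from typing import Dict, List, Sequence


def fuse(readings: Dict[str, float], order: Sequence[str]) -> List[float]:
    """Concatenate per-sensor readings into one feature vector."""
    return [readings[name] for name in order]


def train_centroids(samples: Dict[str, List[List[float]]]) -> Dict[str, List[float]]:
    """Average the fused feature vectors of each labelled event."""
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in samples.items()
    }


def classify(vector: List[float], centroids: Dict[str, List[float]]) -> str:
    """Pick the event whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(vector, centroids[label]))


ORDER = ("vibration", "sound_db", "humidity")  # hypothetical cheap sensors
centroids = train_centroids({
    "faucet_on": [[0.8, 55.0, 70.0], [0.9, 58.0, 72.0]],
    "idle":      [[0.1, 30.0, 50.0], [0.0, 32.0, 48.0]],
})
reading = {"vibration": 0.7, "sound_db": 54.0, "humidity": 69.0}
print(classify(fuse(reading, ORDER), centroids))  # faucet_on
```

No single sensor here identifies the event on its own; the combined vector does. A real system would normalize features with different units and use a far richer model.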

D

Another example is what Google is doing with the keyboard on Android phones, using a technique called federated learning. There's a local model running on the phone that also gets trained locally, but what you don't want to do is send the raw training data upstream. If I'm a keyboard manufacturer, I want to get data from all my users, bring it back, refine my global model, and then push that out to all the Android phones.

D

But I don't want to push any raw data into the cloud, because there might be all kinds of privacy aspects in there. Plus, given how often I type, it would take quite a lot of uploads to just stream it all upwards. So what they do is keep a local model, keep refining it, and send only the changes in the model's weights back to the cloud, where the updates from all users are used to refine the global model.

D

So it's a hybrid way to do some of the training locally, take what you learn in that model, push it upwards, and update your global model that runs somewhere on a cluster of GPUs.
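To make the federated idea concrete, here is a minimal sketch of federated averaging in Python with NumPy. It is not Google's implementation: the model is a toy linear regression, and the names `local_update` and `federated_round`, the learning rate, and the synthetic per-device data are all illustrative assumptions. The point is that each device trains on its own data and only a weight delta leaves it.

```python
import numpy as np

def local_update(weights, X, y, lr=0.1, epochs=5):
    """Train a linear model on one device's private data; return only the weight delta."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2 * X.T @ (X @ w - y) / len(y)   # gradient of mean squared error
        w -= lr * grad
    return w - weights                           # raw X and y never leave the device

def federated_round(global_w, devices):
    """Server side: average the deltas, weighted by how much data each device holds."""
    deltas = [local_update(global_w, X, y) for X, y in devices]
    counts = np.array([len(y) for _, y in devices], dtype=float)
    avg_delta = sum(c * d for c, d in zip(counts, deltas)) / counts.sum()
    return global_w + avg_delta

# Hypothetical setup: five phones, each with 50 private samples of the same relationship.
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
devices = []
for _ in range(5):
    X = rng.normal(size=(50, 2))
    devices.append((X, X @ true_w))

global_w = np.zeros(2)
for _ in range(50):
    global_w = federated_round(global_w, devices)
print(global_w)
```

The server only ever sees averaged weight changes; in a real deployment the local model would be the keyboard's language model rather than a two-weight regression.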

Another thing is compression. For a bunch of file formats we already have really good compression. If I draw a number or a letter, I don't need to send the whole image. I can just say, "Okay, that's an eight," and send one byte over the line.

D

But if we're going to look at larger data sets, or interesting stuff that happens all around us, then I don't have a model that can compress the data straight away. So think of autoencoding, where you basically have a deep learning model that keeps producing features until it has its own compressed representation. Anybody who has the model on the other side can decompress that, which gives them a learned representation of what you tried to send. Here the deep learning model itself figures out an encoding scheme from the training data, which yields very, very interesting compression schemes that we didn't devise before, for sparse, very localized sets of data.
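A full deep autoencoder is too large to show here, but the core idea (encode to a short code, ship the code, decode with the same model on the other side) can be sketched with a linear autoencoder. Assumption: we rely on the fact that, under squared error, the best linear autoencoder spans the top principal components, so the weights come from an SVD rather than from gradient training. The helper names and the synthetic 64-value "sensor readings" are hypothetical.

```python
import numpy as np

def fit_linear_autoencoder(X, code_size):
    """Closed-form linear autoencoder: encoder W (d x k), decoder W.T, plus the data mean."""
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    W = vt[:code_size].T              # top principal directions
    return mean, W

def encode(x, mean, W):
    return (x - mean) @ W             # k numbers to send instead of d

def decode(code, mean, W):
    return code @ W.T + mean          # receiver needs the same model (mean, W)

# Hypothetical data: 64-value readings that really have only 3 degrees of freedom.
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 3))
mixing = rng.normal(size=(3, 64))
X = latent @ mixing

mean, W = fit_linear_autoencoder(X, code_size=3)
codes = encode(X, mean, W)
recon = decode(codes, mean, W)
print(codes.shape, np.abs(recon - X).max())
```

Both sides must hold the same `mean` and `W`; that shared model is what lets three numbers stand in for a 64-value reading. A deep autoencoder replaces the two matrix products with nonlinear networks trained by gradient descent.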

D

Then there are fully self-contained systems. What if you want to do something in an area where there is no internet? Say I'm going to deploy a machine learning model on a dairy farm in a region where the average income is under $100 a month. There's no internet, and I'm not going to put down a cluster of GPUs just to run some deep learning models there, but I am very interested in what machine learning can bring to me.

D

One of the things we wanted to detect is whether a cow is in heat at any point, based on data we get straight from the cow's skin. That's important for the farmers, because a semen sample costs about two to four dollars, and to them four dollars is a lot of money. So you only want to use one when there's the highest chance that the cow will actually get pregnant. There's a little blog post about that.

D

A PhD intern at Arm was looking at some inferencing numbers for a very simple environmental monitoring system. She found that the energy per inference for doing it on the edge, even though it takes relatively longer and more cycles, was over ten times more efficient. For other workloads, like image recognition or object recognition in videos, it might be the other way around, but there are use cases where doing it on the edge is just more efficient.

D

Well, as I said: microcontrollers are small, they're cheap and they're efficient. If they don't do anything, they basically don't consume any power either. The downside is that they're slow and have very limited memory. That prompted a couple of people within Arm Research (not a complete research team, more on the practical end) to come up with a library to do deep learning on these types of devices: deep learning on microcontrollers. That's the uTensor project. uTensor is an open-source library.

D

It's Apache 2.0 licensed and specifically made to run TensorFlow models. So what does it do? You normally train on your GPUs, and then you can run the model directly on a microcontroller. It only does classification, so no training. You push the model that you trained in TensorFlow down to the microcontroller, and after that you can do classification, thanks to a couple of really smart tricks.

D

We are able to do classification for a lot of machine learning models in under 256 KB of RAM. That includes things like handwritten digit recognition, which I'll show in a video, but even object classification, and it's a really good step forward. I think it's really cool. This enables all kinds of incredibly interesting use cases without having to either upstream all your raw data to the cloud or do your inference on a really expensive machine. It's not an Arm project per se.

D

It originated from Arm, and these are the four core contributors. As you can see, I'm not there. Neil was actually in my team; he's the developer evangelist for the Internet of Things in APAC. I helped the team a little bit: I wrote the simulator for this, which makes it a little bit easier to develop. I'm not part of the core team, and I'm also not a machine learning expert or a data scientist or anything, but it's very fun to work on. So if you're thinking, "Hey, I want to develop on that," feel free.

D

None of the people in the core team has developing this as their full-time job, so we definitely welcome contributions; it's all on GitHub.

So here's a little demonstration. What we're going to do here is draw something on the touchscreen (banana for scale), and after that it will basically tell you what you've just drawn. I hope it's visible from the back, because I was expecting a slightly larger screen.

D

And there's a nice one. So this is basic handwritten digit recognition running on a microcontroller in less than 256 KB of RAM, which is pretty cool. What we're seeing here is essentially the hello world of machine learning: the MNIST data set, which consists of 60,000 images of handwritten digits.

D

This is trained in TensorFlow, just on a normal computer, and the script is linked there so you can run it yourself. When we do the classification (because that's what we're doing on the microcontroller, not the training), we take whatever we just drew on the touchscreen and rotate it so it's upright.

D

In the end we get an output, a single neuron, so let's work backwards: we need to scale 784 input neurons (one per pixel of a 28×28 image) down to one. Just before the output we have an output layer with ten potential outputs, because there are ten digits, 0 to 9, and then we run softmax on it, so we only pick the one with the highest probability of matching.
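The final step, softmax over ten scores followed by picking the most likely digit, looks roughly like this in NumPy. The logits are made-up numbers for illustration, not output from the demo model.

```python
import numpy as np

def softmax(logits):
    shifted = logits - np.max(logits)   # subtract the max for numerical stability
    exps = np.exp(shifted)
    return exps / exps.sum()

def predict_digit(logits):
    """Return the most probable digit and the full probability vector."""
    probs = softmax(logits)
    return int(np.argmax(probs)), probs

# Hypothetical scores for the digits 0..9
logits = np.array([0.1, 2.0, -1.0, 0.5, 0.0, 0.3, -0.2, 4.2, 1.1, 0.0])
digit, probs = predict_digit(logits)
print(digit)   # 7
```

Because softmax preserves ordering, `argmax(logits)` alone gives the same label; a device that only needs the label could skip the exponentials.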

What's kind of important is what we do when we jump from the input layer to the hidden layer.

D

All of the neurons in the input layer and the hidden layer are connected. We do a matrix multiplication with the known weights, which we got from training, add a little bit of bias, which we also know because we trained this model, and put the result through an activation function. We do this twice.
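Each "matrix multiplication, plus bias, through an activation function" step is one fully connected layer. Below is a sketch of the forward pass for a 784 → 128 → 64 → 10 network. It assumes ReLU activations (the talk does not name the activation) and uses random placeholder weights in place of the trained TensorFlow ones.

```python
import numpy as np

def dense(x, weights, bias, activation=None):
    """One fully connected layer: activation(x @ W + b)."""
    y = x @ weights + bias
    return activation(y) if activation is not None else y

def relu(v):
    return np.maximum(v, 0.0)

rng = np.random.default_rng(42)
sizes = [784, 128, 64, 10]
# Hypothetical random parameters standing in for the trained model.
params = [(rng.normal(0.0, 0.05, size=(n_in, n_out)), np.zeros(n_out))
          for n_in, n_out in zip(sizes[:-1], sizes[1:])]

image = rng.uniform(0.0, 1.0, size=784)            # flattened 28x28 input
h1 = dense(image, *params[0], activation=relu)     # multiply, add bias, activate
h2 = dense(h1, *params[1], activation=relu)        # ...and again
logits = dense(h2, *params[2])                     # ten scores, one per digit
print(h1.shape, h2.shape, logits.shape)
```

The weight matrices here hold 784 × 128, 128 × 64 and 64 × 10 values, which is why this step dominates memory use.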

We can talk about the actual details of this later. Now, why is this important? Because how are we going to run this on a microcontroller? This looks hard.

D

This looks cool, so what's important here? This matrix multiplication table, because that's what drives the memory usage of this model. On the left side we have 784 input neurons, then a hidden layer of 128, a hidden layer of 64, and an output, well, actually a layer of 10 and an output layer of one, and we're holding it all in memory.

D

That's what we want to reduce, because for a lot of machine learning models, RAM usage during classification is the thing that just explodes. So the biggest trick we use is quantization. Typically, the weights of the neurons are held in 32-bit floats, and that's completely necessary during training, because it's the only way you can properly hold everything with proper accuracy. We figured out, and the folks at TensorFlow figured it out as well, based on research by Pete Warden, that if you use 8-bit integers instead of 32-bit floats, you have only a little bit of loss in accuracy.

D

Tested against the CIFAR-10 data set, which is object classification in images, about 0.4 percent accuracy was lost, but it drastically reduces the amount of memory we need, by four times.
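The four-times saving follows directly from the storage widths. Here is a back-of-the-envelope count for the weights and biases. It assumes fully connected layers of 784, 128, 64 and 10 units and ignores the few bytes of per-tensor min/max metadata.

```python
def parameter_count(sizes):
    """Weights plus biases for a stack of fully connected layers."""
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes[:-1], sizes[1:]))

sizes = [784, 128, 64, 10]           # assumed layer widths
params = parameter_count(sizes)
float32_bytes = params * 4           # 32-bit floats
int8_bytes = params * 1              # 8-bit integers
print(params, float32_bytes, int8_bytes, float32_bytes // int8_bytes)
# 109386 437544 109386 4
```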

TensorFlow, however, requires de-quantization back to floating point.

D

When you quantize, you map a floating-point value to a uint8: you hold the minimum and the maximum value you might have, and then you map everything in between. TensorFlow requires you to convert back to a float whenever you're done with an operation. We worked around that, because this is very slow on microcontrollers that don't have a floating-point unit.
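Min/max quantization and the integer-only arithmetic it enables can be sketched as follows. This is a generic affine-quantization example, not uTensor's code. `choose_params`, `quantize`, `dequantize` and `int_dot` are hypothetical helpers. For simplicity, the single rescale at the end uses a float multiply; a device without an FPU would typically use a fixed-point multiplier instead.

```python
import numpy as np

def choose_params(x_min, x_max):
    """Scale and zero point mapping [x_min, x_max] onto uint8 0..255."""
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)  # keep 0.0 exactly representable
    scale = (x_max - x_min) / 255.0
    if scale == 0.0:
        scale = 1.0                                  # all-zero tensor
    zero_point = int(round(-x_min / scale))
    return scale, zero_point

def quantize(x, scale, zero_point):
    q = np.round(x / scale) + zero_point
    return np.clip(q, 0, 255).astype(np.uint8)

def dequantize(q, scale, zero_point):
    return (q.astype(np.float64) - zero_point) * scale

def int_dot(qa, za, qb, zb):
    """Dot product of two quantized vectors using only integer arithmetic."""
    a = qa.astype(np.int32) - za     # 32-bit integer accumulation
    b = qb.astype(np.int32) - zb
    return int(np.dot(a, b))

rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.1, size=784)   # hypothetical weights
x = rng.uniform(0.0, 1.0, size=784)  # hypothetical input pixels
sw, zw = choose_params(w.min(), w.max())
sx, zx = choose_params(x.min(), x.max())
qw, qx = quantize(w, sw, zw), quantize(x, sx, zx)

approx = sw * sx * int_dot(qw, zw, qx, zx)   # one rescale at the very end
exact = float(np.dot(w, x))
print(approx, exact)
```

The products of `(q - zero_point)` values are summed in a 32-bit integer, which is the part that stays off the floating-point unit; only the final scaling touches floats.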

D

That's our way around this. Now, looking at the current memory usage: we're very aggressive with purging layers, so if we're going from the input layer to hidden layer one, we don't keep anything else in memory.
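How much purging layers helps can be estimated with simple arithmetic. The sketch below assumes (hypothetically) that each layer's weights and biases are in RAM only while that layer runs. It compares that peak against holding every layer at once, for a 784-128-64-10 layout.

```python
def layer_params(n_in, n_out):
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out

def peak_keep_everything(sizes, bytes_per_value):
    """All activations and all layers' parameters are live at the same time."""
    params = sum(layer_params(a, b) for a, b in zip(sizes[:-1], sizes[1:]))
    return (sum(sizes) + params) * bytes_per_value

def peak_layer_by_layer(sizes, bytes_per_value):
    """Only the current layer's input, output and parameters are live."""
    return max((a + b + layer_params(a, b)) * bytes_per_value
               for a, b in zip(sizes[:-1], sizes[1:]))

sizes = [784, 128, 64, 10]   # assumed layer widths
for name, width in [("float32", 4), ("int8", 1)]:
    print(name, peak_keep_everything(sizes, width), peak_layer_by_layer(sizes, width))
# float32 441488 405568
# int8 110372 101392
```

For this particular network the first layer's 784 × 128 weight matrix dominates, so the peak drops only by about 8 percent. The benefit grows for deeper networks whose layers are closer in size.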

So then you see that the matrix multiplication table

he