
From YouTube: IETF101-DTN-20180323-0930

Description

DTN meeting session at IETF101

2018/03/23 0930

https://datatracker.ietf.org/meeting/101/proceedings/


G

Yeah, just a few words about the TCPCL work. I know Brian has been working very hard on this, with these put-outs and the latest updates. Very recently, I asked Victoria to collate most of the outstanding issues that were circling on the list, and then some other ones she worked through herself. So this is really less of a presentation and more a summary of what various people think is wrong.

G

It's an opportunity to discuss them in the working group here, while everyone is currently paying attention to TCPCL and DTN. In general, it's just to make sure that we have the consensus of the room, the list, and Meetecho, so that we can really get some consensus on the behavior that's expected at the TCPCL layer. Hopefully we get the resolutions agreed, and then Brian, with assistance from anyone who is feeling generous, ...


H

This is just the overview. The presentation doesn't really have much information about what we're doing beyond that, so I've included this slide just as background for anybody reading this fresh. It hasn't changed since the original IETF where I presented it. We're still trying to do the same thing, and to refine the draft so that it can be done in a consistent way.

H

So you can go to the next slide. One of the major goals is not to change the behavior, workflow, or scope of the original TCPCL. As I'll talk about, one of the last things that got missed in an earlier edit was the negotiation of reactive fragmentation. That has been added back in, in some form, in the latest -07 draft. You can go to the next slide, then.

H

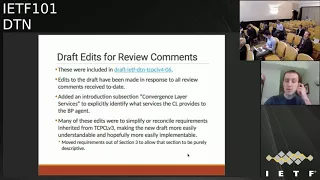

I tried to go through all the comments from review and either address them with an actual change in the draft or respond to the comments on the mailing list. I did add a section to the front matter, I think it's Section 1.1, which covers the convergence layer services. This should help clarify exactly where the line is drawn between the CL and the bundle protocol agent.

H

Section 3 is an overview for an implementer or somebody who's trying to troubleshoot the protocol; the later sections contain the actual normative requirements.

Next slide, please. I didn't realize it until it was too late, the day after the cutoff. I had been making later edits to my own copy of the draft, which is now version -07, but because I missed the cutoff they did not get included until relatively recently. These changes are relatively sparse. A couple of services were missed, and again, this listing of services is supposed to be complete. The obvious one, actually initiating a transmission, was missing, so that was added in, and there was a little bit of touch-up in the services listing.

H

One of the actual changes in behavior in the -07 draft was to add back in, from TCPCLv3, the negotiation of whether each end of the CL session can do reactive fragmentation. I'm sure we'll discuss this in a few minutes, but the intent of doing this as an extension header covers a couple of different things. One is that it's not really the convergence layer that's doing any of the logic for reactive fragmentation itself; it's really the BP agent that's doing this.

H

The reason for that is described a little bit in the draft itself. By the definition of how a transfer can fail, by the time the transfer has failed the TCPCL session has really gone bad. The session will effectively either have been closed by one of the endpoints or have failed at the operating system's TCP socket layer. So the TCPCL session, and the TCPCL itself, is a bit useless for doing reactive fragmentation, either for generating the reactive fragments or for reassembling them. This is a BP agent task. The only thing the CL can do in terms of reactive fragmentation behavior is to negotiate and signal whether each end of the session is capable of supporting it.

H

What it really represents is that what's negotiated in the -07 version of the draft is this particular variation of reactive fragmentation, as an optional behavior. If somebody wants to come up with their own later variation of what reactive fragmentation should be and how it should be parameterized, that can be accomplished through a different extension item. As far as this is concerned, it's not directly affecting the TCPCL segments at either end of a session.


H

I must have condensed this thing. Yeah, this looks right. So yes, the -07: I know the -06 version is what was on the agenda, but there is a -07 version that makes those late changes in some areas. This was something that I know was mentioned really recently on the mailing list, and I'm not sure if it's worth including in the TCPCL draft itself.

G

It's an RFC, correct? Today, yeah. I'm sorry, Scott, I was holding your question just because it's very difficult for me to take it. Do you still have a question? Okay, okay! So, no. Thanks, Brian; obviously stay on the call, because we're going to move straight on. Hopefully Vicki's got a little bit of a presentation on this, and then we can try to get a discussion from the room.

I

Hi, everyone. So Rick caught me in the bar, I think, and said, "Hey, can you give this a read?" I did. Having sat in Rick's chair, I read it as someone who understands DTN but hasn't actually implemented TCPCL before, and a couple of things jumped out at me. One: when you do something like a shutdown for a version mismatch, don't you end up opening yourself up to essentially losing potential connectivity?

H

There was, I think, a similar comment on this earlier, and if I remember right, there is language in the draft about that right now: a node should start at its highest version and, if it supports multiple versions, work down the list. So there is language in there about attempting multiple versions, but not from the perspective you just mentioned, where it's exposed up front: "these are the versions supported."
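The fallback rule just described (start at the highest supported version and work down the list) can be sketched as below. This is a minimal illustration, not the draft's text: `try_contact` is a hypothetical callback standing in for one contact-header exchange, and the version numbers in the usage are made up.

```python
from typing import Callable, Iterable, List, Optional


def negotiate_version(
    supported: Iterable[int],
    try_contact: Callable[[int], bool],
) -> Optional[int]:
    """Try each locally supported version, highest first.

    `try_contact(v)` is a hypothetical hook: it attempts a session using
    version `v` and returns True if the peer accepted it, or False if the
    peer shut the session down for a version mismatch.
    """
    for version in sorted(set(supported), reverse=True):
        if try_contact(version):
            return version  # first (highest) version the peer accepted
    return None  # no common version: give up on this peer


# Usage: a pretend peer that only speaks versions 3 and 4.
attempts: List[int] = []


def fake_contact(version: int) -> bool:
    attempts.append(version)
    return version in {3, 4}


print(negotiate_version([3, 4, 5], fake_contact))  # 4
print(attempts)                                    # [5, 4]
```

The loop makes the cost raised in the question visible: every rejected version costs a full attempt and a shutdown before the next one is tried.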


I

Okay. So when do I make a decision as to whether to do this or not? Okay. A kind of generic statement that I made earlier is that it may be useful to have something like a state diagram. It would describe when you move from these different points to other points, and what the decision would have to be in order to make those moves.


J

So, we're bringing back in the contact header extension for reactive fragmentation, along with some of the signals that go between the convergence layer and the bundle protocol agent. I found this quote on reactive fragmentation, which I thought might be quite useful to have seen.


J

I hope I'll get this right. It's got the type, which is reactive fragmentation, and the actual value of it is a one-octet flags field with two flags in it. "Can generate" says that the sending node can generate reactively fragmented bundles, so it wants that feedback on how much actually got acknowledged; and then there's "can receive."
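A one-octet flags field carrying those two capability flags can be packed and unpacked as below. The bit positions (`0x01` for "can generate", `0x02` for "can receive"), the constant names, and the choice to ignore unknown bits are assumptions for illustration; the draft's actual assignments may differ.

```python
from dataclasses import dataclass

# Hypothetical bit assignments; not taken from the draft.
CAN_GENERATE = 0x01  # this node can generate reactive fragments when sending
CAN_RECEIVE = 0x02   # this node can accept partial data and fragment on receipt


@dataclass(frozen=True)
class ReactiveFragCaps:
    can_generate: bool
    can_receive: bool

    def encode(self) -> bytes:
        """Pack the two capabilities into the single-octet item value."""
        value = 0
        if self.can_generate:
            value |= CAN_GENERATE
        if self.can_receive:
            value |= CAN_RECEIVE
        return bytes([value])

    @staticmethod
    def decode(data: bytes) -> "ReactiveFragCaps":
        """Unpack a one-octet value; bits other than the two above are ignored."""
        if len(data) != 1:
            raise ValueError("reactive fragmentation item value must be one octet")
        value = data[0]
        return ReactiveFragCaps(
            can_generate=bool(value & CAN_GENERATE),
            can_receive=bool(value & CAN_RECEIVE),
        )


caps = ReactiveFragCaps(can_generate=True, can_receive=False)
print(caps.encode())                     # b'\x01'
print(ReactiveFragCaps.decode(b"\x03"))  # both flags set
```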

J

So the idea, then, is that if the sender can generate reactive fragments and the receiver can receive them, the convergence layer will pass that on. It tracks the segments that get sent through, and if the transfer fails, it can tell the bundle protocol agent. The agent can then form a smaller bundle from the unacknowledged section of the original. On the receiving side, the convergence layer would hand the bundle data upwards to the agent even if the transfer failed, so that the agent can then create a fragment from that.
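Putting the two flags together, reactive fragmentation is only usable in a given direction when the sending side can generate fragments and the receiving side can receive them. Because either peer may initiate transfers, the check is made per direction. The sketch below also shows the split the bundle protocol agent would make after a failure: the acknowledged prefix counts as delivered, and the unacknowledged remainder becomes the new, smaller fragment.

It is a simplification under stated assumptions. All names are hypothetical, and the transferred bytes are treated as if they were the payload alone, ignoring the bundle's header blocks.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PeerCaps:
    can_generate: bool
    can_receive: bool


def reactive_frag_enabled(sender: PeerCaps, receiver: PeerCaps) -> bool:
    """Usable only for transfers going from `sender` to `receiver`."""
    return sender.can_generate and receiver.can_receive


@dataclass(frozen=True)
class Fragment:
    offset: int        # position of this piece within the original payload
    total_length: int  # length of the original payload
    data: bytes


def remaining_fragment(payload: bytes, acked_bytes: int) -> Optional[Fragment]:
    """Sender side: after a failed transfer, keep only the unacknowledged
    tail as a new fragment. Returns None if everything was acknowledged."""
    if not 0 <= acked_bytes <= len(payload):
        raise ValueError("acknowledged length out of range")
    if acked_bytes == len(payload):
        return None
    return Fragment(acked_bytes, len(payload), payload[acked_bytes:])


def received_fragment(partial: bytes, total_length: int) -> Optional[Fragment]:
    """Receiver side: turn the prefix that did arrive into a fragment."""
    if not partial:
        return None
    return Fragment(0, total_length, partial)


a = PeerCaps(can_generate=True, can_receive=True)
b = PeerCaps(can_generate=False, can_receive=True)
print(reactive_frag_enabled(a, b), reactive_frag_enabled(b, a))  # True False
print(remaining_fragment(b"abcdef", 4))  # offset 4, tail b'ef'
```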

J

But what confused me was that there are different combinations of having the critical flag set, and then different combinations of "can generate" on one side and "can receive" on the other. I think in most cases you'd probably want to establish the session but disable the acknowledgments. One other point I want to make on this: in a TCPCL session both peers can initiate transfers, so bundles can go in either direction.

J

It wasn't really clear to me when the data that gets received actually gets transferred up to the bundle protocol agent. The draft hints at it in a few places: it says the bundle data can be inspected by the bundle protocol agent, so that the bundle could then just be refused. I guess that's happening as the segments come in, but it wasn't really clear to me. And then, as part of another discussion, we mentioned...


J

So the bundle protocol agent would tell the convergence layer to begin a transmission, handing it a bundle. There's one signal in there at the moment that says the convergence layer would indicate transmission availability, that is, the fact that the session is open. I was wondering if we could get the same information from the session-established signal. Also, once we've begun a transmission, we know whether it has succeeded or failed, because there's another signal for that.

The other signals are transmission success (the bundle's fully transferred) and intermediate progress. I wasn't sure, but the wording in the draft is that progress is reported at the granularity of each transferred segment. So I was wondering if that's the number of bytes that have been acknowledged so far, and whether it gets passed up for every segment that gets acknowledged. There's also transmission failure, so I was wondering whether, instead of some of the intermediate progress signals, we could just say "transmission failed, but we sent this many bytes through."

That section of the draft also says that TCPCL supports indication of reasons for the transmission failure. I understood that to mean that reason codes should go up to the bundle protocol agent, but it wasn't really clear on that piece. On the receiving side, again, we've got that intermediate progress.
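The sending-side notifications discussed above (transmission success, intermediate progress, and failure with a reason) could be modelled as events passed from the CL up to the bundle protocol agent. The event and reason names below are hypothetical. The sketch keeps the progress counter inside the CL and reports it only as part of the failure event, which is one reading of the suggestion that a failure could simply say how many bytes got through. It also assumes each acknowledgment carries a cumulative byte count.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import List, Union


class FailureReason(Enum):  # hypothetical reason codes
    SESSION_CLOSED = auto()
    PEER_REFUSED = auto()
    SOCKET_ERROR = auto()


@dataclass(frozen=True)
class TransmitSuccess:
    transfer_id: int


@dataclass(frozen=True)
class TransmitFailure:
    transfer_id: int
    reason: FailureReason
    acked_bytes: int  # bytes the peer acknowledged before the failure


SendEvent = Union[TransmitSuccess, TransmitFailure]


class OutboundTransfer:
    """Keeps per-segment acks inside the CL and reports only the outcome upward."""

    def __init__(self, transfer_id: int, length: int) -> None:
        self.transfer_id = transfer_id
        self.length = length
        self.acked = 0
        self.done = False
        self.events: List[SendEvent] = []

    def on_segment_ack(self, acked_through: int) -> None:
        if self.done:
            return
        # Assumes each ack carries a cumulative count of bytes received.
        self.acked = max(self.acked, min(acked_through, self.length))
        if self.acked == self.length:
            self.done = True
            self.events.append(TransmitSuccess(self.transfer_id))

    def on_failure(self, reason: FailureReason) -> None:
        if self.done:
            return  # outcome already reported
        self.done = True
        self.events.append(TransmitFailure(self.transfer_id, reason, self.acked))


t = OutboundTransfer(transfer_id=7, length=100)
t.on_segment_ack(40)
t.on_failure(FailureReason.SESSION_CLOSED)
print(t.events)  # one failure event reporting 40 acknowledged bytes
```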

J

So I was wondering if that probably meant that data, as it comes in with every segment, gets transferred up to the bundle protocol agent. Various areas in the draft kind of hint at that, and at the fact that the bundle protocol agent can inspect that bundle and is then able to refuse it. That refusal is the second signal: from the bundle protocol agent downwards, saying "interrupt that reception." The other two are the convergence layer saying that the bundle was fully received or that reception failed.
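On the receiving side, the same reading gives four interactions: data handed up per segment, an optional refusal from the agent ("interrupt that reception"), and a final "fully received" or "failed" notification. Below is a minimal sketch in which a hypothetical `inspect` callback stands in for the bundle protocol agent's decision. The outcome strings are illustrative, not terms from the draft.

```python
from typing import Callable, Optional


class InboundTransfer:
    """Receiving CL side: hands data up per segment and lets the agent
    refuse the bundle part-way through."""

    def __init__(self, inspect: Callable[[bytes], bool]) -> None:
        # `inspect` is a hypothetical agent hook: given the data received
        # so far, it returns False to refuse the bundle.
        self.inspect = inspect
        self.buffer = bytearray()
        self.outcome: Optional[str] = None  # "received", "refused" or "failed"

    def on_segment(self, data: bytes, is_end: bool) -> None:
        if self.outcome is not None:
            return  # already finished or refused: drop further data
        self.buffer.extend(data)
        if not self.inspect(bytes(self.buffer)):
            self.outcome = "refused"  # the CL would now send a refusal to the peer
        elif is_end:
            self.outcome = "received"

    def on_session_lost(self) -> None:
        if self.outcome is None:
            self.outcome = "failed"  # partial data stays in self.buffer


rx = InboundTransfer(inspect=lambda data: True)
rx.on_segment(b"ab", is_end=False)
rx.on_session_lost()
print(rx.outcome, bytes(rx.buffer))  # failed b'ab'
```

Keeping the partial buffer on failure is what would let the agent build a fragment from whatever did arrive.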

G

From my perspective, some of these questions hint at a layering model in there. I know Brian's spoken about this before: the convergence layer really should be just shoveling the bytes of a bundle and notifying up to the bundle protocol agent. It says "I have a bundle," or "I only got the first half of a bundle," or "it's failed; don't expect any more bundles from me." I think some of the complication comes in when you get that intermediate progress feedback; it's starting to blur the lines. The questions for the working group are these. Do we care about this layering enough, or is it just seen as a sort of computer-science nice-to-have? Or does it have value to stick slightly more formally to this separation? Those are open questions.


K

There are others; there could be other convergence layers that are similarly capable of doing reactive fragmentation. I think we want to have a common framework for all those things; we don't want to reinvent it for every single one. So I think the key to doing that is maintaining a clean layering between the bundle protocol and the convergence layer, as Vicki was saying.

I was thinking: is there a simpler way to do all this? My first thought was, why not just put a flag in the bundle and not have the convergence layer involved? But then the convergence layer has to parse the bundle and pick the flag out of it. What I think is possible is to simplify the negotiation. The thing that came to mind as I was doing this is that I would propose a flag in the segments of the convergence-layer protocol itself.

Setting it in every segment might seem like a bit too much, but if you missed the first one, you don't know what to do. It only costs a bit, so I'm inclined to think it just needs to be there in each one, so that you always have it. The flag would be set by the sender, and it could be different hop by hop, because it's a convergence-layer flag. What the flag would signify is: "I require that you reactively fragment this bundle if it's incomplete."

The sender is requiring it, but that doesn't mean the receiver has to comply. It's that this is what the sender intends, because by setting this flag the sender is committing to being able to do the sending end of reactive fragmentation. When the receiver receives the data, it may determine that there's been an incomplete reception. If the sender has authorized reactive fragmentation, and if the receiver is able to do it, the receiver does the reactive fragmentation.

It signals to the sender whether or not it is doing this by the presence or absence of the interim acknowledgments. Suppose the sender signals that reactive fragmentation is required on this bundle and the receiver is able to do it. Then interim progress acknowledgments come back, so the sender knows and has the information to do the fragmentation. If either the flag is not set or the receiver is unable to do reactive fragmentation, then no interim progress acknowledgments should come back, only an acknowledgment at the end, whether all of it was received or none of it.

So the negotiation is actually pretty simple and the processing is pretty straightforward: the presence or absence of the interim progress acknowledgments indicates whether or not reactive fragmentation can happen and will happen. Okay.
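The per-segment proposal reduces the receiver's side to one condition, sketched below. The `Segment` type and its flag name are hypothetical. The only logic taken from the discussion is this: interim progress acknowledgments are sent when, and only when, the sender's flag is set and the receiver can do reactive fragmentation; otherwise a single acknowledgment comes at the end. Acknowledgments are modelled as cumulative byte counts, which is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Segment:
    data: bytes
    reactive_frag_requested: bool  # hypothetical per-segment flag set by the sender
    is_end: bool


def acks_for(segments: List[Segment], receiver_can_fragment: bool) -> List[int]:
    """Cumulative byte counts the receiver would acknowledge.

    Interim acks go back only if the sender requested reactive
    fragmentation on that segment and this receiver can do it;
    otherwise the only ack is the one at the end of the transfer.
    """
    acks: List[int] = []
    received = 0
    for seg in segments:
        received += len(seg.data)
        interim = seg.reactive_frag_requested and receiver_can_fragment
        if interim or seg.is_end:
            acks.append(received)
    return acks


flagged = [Segment(b"aa", True, False), Segment(b"bbb", True, False), Segment(b"c", True, True)]
print(acks_for(flagged, receiver_can_fragment=True))   # [2, 5, 6]
print(acks_for(flagged, receiver_can_fragment=False))  # [6]
```

Because the check is evaluated per segment, a sender could in principle start setting the flag part-way through a transfer, which is the question raised in the next exchange.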

G

Rick, speaking personally, chair hat off. I take your point, but what happens if somebody... Am I allowed to change that flag during the transmission, or once I commit to it, am I committed? Or do we use it as "I'll flip it when I've got far enough"? As I understand it, I could suddenly start setting this bit when I believe I've sent you enough data that reactive fragmentation now starts to make sense. Exactly.

K

Because it's a convergence-layer flag, by definition it's hop by hop. So it's different on every hop of the end-to-end path, because it's really a function of what the sending node can do and what the receiving node can do. How the bundle protocol agent on the sending node would convey this information to the convergence layer adapter would be an implementation detail. The convergence layer adapter would then be responsible for setting the flag in the segments that it passes to its peer.

G

Just as a clarification point: Vicki, Scott, and I are continuing a conversation we had earlier. When we say reactive fragmentation, we are focusing on a single use case. I am the sender, sending a very big bundle to Scott, and halfway through the transmission the line goes down; the transmission breaks. What I don't want to do next is throw away everything I've sent, you know, the one gig of the two gig I've already sent to him. I don't want to resend it next time round. That's all we're trying to scope reactive fragmentation to: recovery from line failure. I expect someone was going to say that there are some really cool, exciting things you can do with reactive fragmentation, but those are out of scope at this point.

L

Yeah, I don't quite understand why reactive fragmentation is now rearing its head again. I think it was established at the beginning of this working group that there are all sorts of security complications associated with the use of reactive fragmentation. I was half following the discussion on the mailing list some time back, and I never saw an email on it, so maybe it was not the way I thought.


G

Following on from Ronald, with a more general point: DTN is delay- and disruption-tolerant networking. I know a lot of the delay-focused members of this group don't really care about reactive fragmentation, because for them it's all about delay management. For those of us on the disruption side of it, reactive fragmentation does make sense: if I've got a big file and it breaks halfway through, I'd kind of like to not have to send it all again.


N

Ed Birrane, APL. So we've talked about a couple of things, but I have a sort of orthogonal question for the group. This is starting to sound to me like the discussions we had in BPbis regarding custody transfer. That was a feature some people needed and some did not; at the high level it seemed simple, but it could get bogged down in details and use cases, and there was a thought of...

Is there value in saying that the TCPCL is vitally important as a draft, because it shows how these three things, security, the protocol, and the convergence layer, could work together? Should we be focusing on a section in there that deals with how to extend it, or that accepts in some way that there may be multiple other CLs in the future? But is this discussion necessary to hold up the TCPCL right now? That is my question to the working group.

G

Response, with my chair hat on: that is a very valid question, and half the reason I wanted to have this discussion today. My response would be: if the working group says "fine, push it out," we'll do a TCPCL extension. As long as we've got the contact header, we can negotiate extensions. In my opinion, as it currently stands, the TCPCL draft alludes to "hey, you can do reactive fragmentation with this chunking and this signaling that only a bit arrived and a bit didn't arrive." So I think people will start to do reactive fragmentation, because they think they can see the bits are there. If we don't want to do reactive fragmentation, I suggest we take it out of TCPCL and keep it really simple. Otherwise we do reactive fragmentation and we do it properly, but that's going to delay Ed's and Scott's drafts, and that's the real question for the working group.


K

It would be less of a burden on convergence-layer development, but the sort of thing I was proposing a little earlier is actually simple enough to be workable. So in that context, it seems to me it's okay to proceed with including it in this draft, if we can make something as simple as that.

I

Hi, everyone. I think I'm going to make Scott's skin crawl in a second, so, two comments.

The first one is that you always have to be careful when applications try to play fun games with fragment sizes over top of a protocol that's already designed to do that for you. In this case we have it twice, right? TCP is going to try to figure out its maximum segment size, and IP is going to be doing fragmentation underneath you. So you always get all sorts of interesting potential out-of-order handling of the packets, the kind that drives application developers crazy.

The second thing to consider is the reactive fragmentation description that's in the spec right now. Reading through it, I'm not sure it's going to cover all the cases correctly, because I don't think there's enough information in each of the segments to make reassembly efficient. And just to reiterate something I said to Rick as we were running past each other in the hallway: you may want to take this completely out. Instead, make something that looks more like rsync running on top of TCPCL, so that you can actually do things correctly and recover very quickly. Rsync has all sorts of hashes that allow you to identify segments and bundles and so on, so that once you reconnect with a node, you're only transmitting the things you really need to transmit.
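The rsync-style idea can be illustrated with fixed-size block hashes. After a reconnect, the receiver reports a hash per block it holds, and the sender resends only the blocks that are missing or differ. This is a deliberate simplification, not rsync's algorithm (rsync also uses a rolling checksum to match blocks at arbitrary offsets). The function names and the block size are hypothetical.

```python
import hashlib
from typing import List


def block_hashes(data: bytes, block_size: int) -> List[str]:
    """SHA-256 of each fixed-size block (the last block may be short)."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def blocks_to_send(sender_data: bytes, receiver_hashes: List[str], block_size: int) -> List[int]:
    """Indices of blocks the receiver is missing or holds incorrectly."""
    needed = []
    for index, digest in enumerate(block_hashes(sender_data, block_size)):
        if index >= len(receiver_hashes) or receiver_hashes[index] != digest:
            needed.append(index)
    return needed


bundle = b"aaaabbbbcccc"
partial = b"aaaabbbb"  # the receiver got two of three blocks before the link dropped
print(blocks_to_send(bundle, block_hashes(partial, 4), 4))  # [2]
```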


N

So, just to speak to the earlier point about security and to Scott's point: whenever you do reactive fragmentation, you are opening yourself up to a security concern. Say I'm a hundred bytes away: I've received all but the last 100 bytes of my large bundle, and I would prefer not to go through that again. Handling that case is hard, because it's hard to distinguish from the case of "yes, that's 2 gigabytes of payload," since I don't have the last hundred bytes of it.


J

The part of it that goes into TCPCL is not really how you do reactive fragmentation; it's just to enable some support for it, so that it can be defined in the future. So do we still need to be careful about how much TCPCL actually says about supporting some future definition of reactive fragmentation? Or do we just want to strip that out? I don't know how careful we need to be just to enable the support for it.

K

I propose that we strip it out altogether. It's an important capability; it shows up, obviously, in RFC 4838, the original architecture, and people will remember it, and it will come back. But I think omitting it from this specification does no harm and possibly some good, by simplifying it and encouraging simple implementations.


N

So I don't have a comment on that. What I would say is that this is also reminiscent of when we discussed BPSec. There was a question as to whether we included CMS, the Cryptographic Message Syntax, and boxes and so on, and again we resolved that by giving some thought to extensibility. There could be an example to go with it, and that example could be reactive fragmentation, purely informative, showing how extensibility could work. That might be a way of capturing these thoughts. But I think the TCPCL needs additional language on how it would be extended, if this is the direction we're going to go.

H

You know, with a one-session, one-transfer mechanism, there's a lot of overhead to using it that way, but it also gives you a lot of control. The alternative is keeping a session up and negotiating parameters for that session, where those parameters are just a blanket policy for every transfer that happens between these two nodes.

For me, it feels like it would be a relatively simple copy-and-paste sort of action to have an extension item area defined both for the session itself and for the individual transfer. Then somebody might come along and say, "I have a fragmentation extension for this individual transfer. For this transfer, the fragmentation policy is: don't bother if fewer than X bytes were received, and do something else if more than Y bytes were received," or something like that. That would be an extension item applied to the individual transfer.

Going back to some of Victoria's early questions: there's a little bit of description in the draft, but, as I also mentioned on the mailing list, there's a lot of interplay between the segment sizing, which is part of the negotiated session, and what you would do with those segments. Suppose you really didn't care about fragmentation at all, and you wanted the minimum amount of overhead and the simplest possible run-time behavior for the CL. Then a blanket policy would be...
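A per-transfer fragmentation policy like the one just described (don't bother below X bytes received, do something else above Y) might be carried as an extension item on the transfer header. This is the speaker's hypothetical, not an item defined in the draft. Everything concrete below is an assumption for illustration:

- the item type code;
- the 2-octet type and 2-octet length framing;
- the two 8-octet big-endian thresholds;
- the action names, including reading "something else" as keeping the received data as a fragment.

```python
import struct
from dataclasses import dataclass

FRAG_POLICY_ITEM_TYPE = 0x8001  # hypothetical item type code


@dataclass(frozen=True)
class FragPolicy:
    min_bytes: int  # "X": below this many bytes received, don't bother
    max_bytes: int  # "Y": above this, keep what arrived as a fragment

    def encode_item(self) -> bytes:
        """Type (2 octets), length (2 octets), then the two thresholds."""
        value = struct.pack("!QQ", self.min_bytes, self.max_bytes)
        return struct.pack("!HH", FRAG_POLICY_ITEM_TYPE, len(value)) + value

    @staticmethod
    def decode_item(item: bytes) -> "FragPolicy":
        item_type, length = struct.unpack_from("!HH", item, 0)
        if item_type != FRAG_POLICY_ITEM_TYPE or length != 16:
            raise ValueError("not a fragmentation policy item")
        low, high = struct.unpack_from("!QQ", item, 4)
        return FragPolicy(low, high)

    def action(self, received: int) -> str:
        if received < self.min_bytes:
            return "discard"        # too little arrived to be worth keeping
        if received > self.max_bytes:
            return "fragment"       # keep what arrived as a reactive fragment
        return "agent-decides"      # in between: left to local policy


policy = FragPolicy(min_bytes=1000, max_bytes=5000)
print(len(policy.encode_item()))                     # 20
print(policy.action(10), policy.action(6000))        # discard fragment
```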

K

I think it'd be more awkward. I think it costs very little, and if it's not used, that's great; make it, you know, vanishingly small. But adding an extension to the transfer initiation header, I would be in favor of that. There's one other thing I think we can do that would again simplify the specification and cost nothing: the interim progress acknowledgments, really.


K

No, there's no impact on this document from any of this discussion. There is a point Brian brought up a little while ago about reassembly that I thought I would mention in passing. To the extent that reassembly is difficult and needs more support, that work belongs in BPbis, because that's where reassembly happens: the specification for reassembling fragmented bundles is there. So if we need to do something about that, then I think we have to revisit reassembly in BPbis.


G

Rick Taylor, speaking with chair hat off. If we're simplifying this down, I don't see the point of the segments anymore; I think the segments are the flag that kicks off reactive fragmentation again and again. I totally agree with the point about an extension point in the transfer header as well as the contact header. That way you can negotiate extensions for the entire session, or you can add an extension per transfer, whatever that might be. But I don't think segmentation belongs in the bare, simplest form.


I

Brian Haberman. I'm going to echo what Rick is saying in a slightly different way. If you have segmentation in the TCPCL, and TCP is already doing that for you, you get very interesting behaviors with overlapping control loops. You probably don't want those when you're talking about latencies as long as what we may have going on here. So I think it does make sense to strip the segmentation stuff out and move it into some type of extension, for people who want to try to do it separately from the base spec.


G

Like I should have already... Please correct me, anyone in the room, if I get the summary wrong. I think the proposal is this. We should have an extension point in the transfer header similar to the one that exists in the contact header, so individual transfers could be extended in some unspecified way. And segmentation doesn't need to exist, because it opens a can of worms where people are tempted to do the wrong thing, myself included.


H

Would the current protocol messaging structure then stay the same, just removing any of the logic about multiple segments per transfer? A single transfer would then amount to one transfer init and one transfer segment. It doesn't necessarily need to be called a segment anymore; "transfer data," maybe.

K

It's that the stream of transmission from the sending TCPCL to the receiving TCPCL would essentially be a stream of transmissions punctuated by transmission headers. So there'd be a transmission header, and at the end of the transmission header you commence sending data; then the data ends and there'd be another transmission header. And the reverse traffic would be the same acknowledgement messages that are already in the specification. Okay.
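The byte stream described above (a transfer header, then exactly that transfer's data, then the next header) can be sketched as a simple framing scheme. The 1-byte message type, 8-byte transfer ID, 8-byte big-endian length, and the `MSG_XFER` value are assumptions made for illustration, not the encoding defined in the draft.

```python
import struct
from typing import Iterable, List, Tuple

# Hypothetical header layout: message type (1 byte), transfer ID (8 bytes),
# data length (8 bytes), all big-endian. Not the draft's actual encoding.
XFER_HEADER = struct.Struct(">BQQ")
MSG_XFER = 0x01  # illustrative message-type value


def encode_stream(transfers: Iterable[Tuple[int, bytes]]) -> bytes:
    """Serialize whole transfers back to back: header, data, header, data..."""
    out = bytearray()
    for transfer_id, data in transfers:
        out += XFER_HEADER.pack(MSG_XFER, transfer_id, len(data))
        out += data  # the entire bundle follows; no per-transfer segmentation
    return bytes(out)


def decode_stream(stream: bytes) -> List[Tuple[int, bytes]]:
    """Split a received byte stream back into (transfer_id, data) pairs."""
    transfers = []
    offset = 0
    while offset < len(stream):
        msg_type, transfer_id, length = XFER_HEADER.unpack_from(stream, offset)
        if msg_type != MSG_XFER:
            raise ValueError(f"unexpected message type {msg_type:#x}")
        offset += XFER_HEADER.size
        data = stream[offset:offset + length]
        if len(data) != length:
            raise ValueError("stream ended in the middle of a transfer")
        transfers.append((transfer_id, data))
        offset += length
    return transfers
```

Acknowledgements would flow in the reverse direction as separate messages, as in the existing specification; they are left out of this sketch.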

G

Sorry, this is Rick. I think the proposal is that a transfer becomes an atomic thing: either you transfer the bundle, or it doesn't get transferred. It does raise a question: if we put an extension point in the transfer header, then at what point does the other end get to say "I don't like that, don't send me this"? So can you remind me again: do you get a transfer ACK after sending the init, before you start sending data, or do we now have to wait for the end of the entire transmission?

K

Scott Burleigh. I would suggest that when you get one of these refusals, something in the refusal will identify the transfer. And what the sending TCPCL should do, when it's informed that it has received one of these refusal messages, is say: "Oh, am I still sending that? If I am, I'll stop, and if I've already finished, you know, too bad."
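The sender behaviour suggested here, where a refusal names the transfer and the sender stops only if that transfer is still in flight, could be sketched as follows. `Sender`, `start`, `send_some`, and `on_refusal` are hypothetical names, and the sketch assumes each refusal carries a transfer ID.

```python
from typing import Dict


class Sender:
    """Hypothetical sending TCPCL tracking how many bytes remain per transfer."""

    def __init__(self) -> None:
        # transfer ID -> bytes still to send; an entry is removed once done
        self.remaining: Dict[int, int] = {}

    def start(self, transfer_id: int, length: int) -> None:
        self.remaining[transfer_id] = length

    def send_some(self, transfer_id: int, n: int) -> None:
        left = self.remaining[transfer_id] - n
        if left <= 0:
            del self.remaining[transfer_id]  # transfer fully sent
        else:
            self.remaining[transfer_id] = left

    def on_refusal(self, transfer_id: int) -> str:
        # "Am I still sending that? If I am, I'll stop."
        if transfer_id in self.remaining:
            del self.remaining[transfer_id]
            return "stopped"
        # "If I've already finished, too bad": nothing left to cancel.
        return "ignored"
```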

J

So there's a list that people can catch up on. I think one thing the draft says is that if you receive a shutdown, you should send a shutdown in reply, but you don't have to, so I'm not sure if that's something we need to look at. Next slide. And then there are the rules that are in the draft at the moment.

J

After sending a shutdown, you're not allowed to initiate any new transfers, but it doesn't actually say you can't accept any. And on the receiving side, it says you can't accept any, but it doesn't forbid you from initiating any. And you're allowed to carry on any transfer that's in progress at the moment, that is, if there are still more segments to send. So maybe, since we're changing all that, it doesn't really need to be considered.

J

Well, I'm slightly confused, and I'm trying to pick out from the description and the draft what it actually means. The thing I interpreted was that when you send a shutdown message, you're basically saying "I'm not going to send you any more, but the current transfer finishes." But I wonder if that should also be "I don't want to receive any more either." I'm not really sure.

H

Refuse, not... not reject; I'm getting my language a bit messed up. But basically it's an invalid message at that point: a transfer init is not accepted. There is a signal, an on-the-wire message, for "I got a message that I didn't expect to see at this point in the protocol," and I think that would be the response. That is how the shutdown is intended to work, and it should be written that way, but I can go back and look.
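The shutdown rules as read out from the draft, combined with the suggestion that a transfer init arriving after shutdown be answered with an "unexpected message" rejection, can be modelled as a small per-session state. The `Session` class, its method names, and the returned strings are hypothetical placeholders; the sketch encodes the rules as described, not a proposed fix.

```python
from typing import Set


class Session:
    """Hypothetical per-session shutdown bookkeeping for one TCPCL node."""

    def __init__(self) -> None:
        self.sent_shutdown = False
        self.received_shutdown = False
        self.in_progress: Set[int] = set()

    def send_shutdown(self) -> None:
        self.sent_shutdown = True

    def receive_shutdown(self) -> None:
        self.received_shutdown = True

    def can_initiate(self) -> bool:
        # Rule as read out: after sending a shutdown, no new transfers may be initiated.
        return not self.sent_shutdown

    def on_transfer_init(self, transfer_id: int) -> str:
        # Rule as read out: after receiving a shutdown, new transfers are not
        # accepted; the suggested response is an "unexpected message" rejection.
        # The return strings are placeholders, not message names from the draft.
        if self.received_shutdown:
            return "REJECT_UNEXPECTED"
        self.in_progress.add(transfer_id)
        return "ACCEPT"

    def may_continue(self, transfer_id: int) -> bool:
        # Transfers already in progress may carry on after either kind of shutdown.
        return transfer_id in self.in_progress
```

Exercising both sides makes the asymmetry raised in the discussion visible: a node that has sent a shutdown may still accept a transfer, and a node that has received one may still initiate.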

I

Okay, so yes, you're separating the shutdown of transfers from also shutting down the TCP connection. Because one way some applications handle this is to do a TCP close, and that's a half-close, which means they can't send any more data, but they can still receive any data that's in transit. Okay.
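The TCP half-close mentioned here is available directly in the sockets API as `shutdown(SHUT_WR)`. The sketch below uses Python's standard `socket` module on a loopback connection to show that after a half-close one side can send no more, the peer sees end-of-stream, and data can still flow back the other way. It illustrates plain TCP behaviour only; no TCPCL messages are involved, and `read_until_eof` and `half_close_demo` are illustrative helper names.

```python
import socket
from typing import Tuple


def read_until_eof(sock: socket.socket) -> bytes:
    """Read until recv() returns b"", which signals the peer's FIN."""
    data = b""
    while True:
        chunk = sock.recv(1024)
        if not chunk:
            return data
        data += chunk


def half_close_demo() -> Tuple[bytes, bytes]:
    """Half-close a loopback TCP connection and return what each side read."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0: the OS picks a free port
    listener.listen(1)
    client = socket.create_connection(listener.getsockname())
    server, _ = listener.accept()
    try:
        client.sendall(b"last bundle")
        client.shutdown(socket.SHUT_WR)  # client can no longer send
        seen_by_server = read_until_eof(server)

        # The other direction is still open: the server can still reply
        # and the half-closed client can still receive it.
        server.sendall(b"ack")
        server.shutdown(socket.SHUT_WR)
        seen_by_client = read_until_eof(client)
        return seen_by_server, seen_by_client
    finally:
        client.close()
        server.close()
        listener.close()


print(half_close_demo())  # (b'last bundle', b'ack')
```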

H

So, because the CL does acknowledgments... okay, well, I don't believe that would work. Talking about the current -07 version of the draft, and not what we were just discussing about single-segment behavior: until the CL transfer acknowledgement is received, the transfer itself hasn't finished; it hasn't succeeded. The receiver of a bundle, the receiver of a transfer, still has to send data on the TCP connection, the TCP socket, to acknowledge the transfer. Okay.

N

So, Ed Birrane, APL. I am a little worried about the case that Viki just brought up, which is if we are using this for very large transfers, and very early in a large transfer we have decided to reverse our opinion on sending the data. I don't know if that's a credible use case; I'll defer to others on whether that's going to happen. But if I am 100 bytes into my two-gigabyte transfer and I realize...

K

Scott Burleigh again. Yeah, I think that, since use cases for me to stop a two-gigabyte transmission after sending the first hundred bytes don't leap to mind, this is going to happen infrequently enough that the cost of simply stopping, you know, closing the socket and giving up that connection, is a reasonable cost for the simplification.

J

Then there's unclean shutdown. I thought the definition of it was maybe a little bit off, and my suggestion was that an unclean shutdown is closing the TCP connection before all the transfers have finished. I think that kind of relates to all the discussion you just had. And I think on the final slide... next one, the next one after... oh, no. There were two comments; they were just sentences that I didn't think quite made sense.
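The proposed definition (a shutdown is unclean if the TCP connection is closed before all transfers have finished), together with the earlier point that a transfer has not succeeded until its transfer acknowledgement is received, reduces to a simple check. `TransferState`, its `acked_bytes` field, and `is_unclean_close` are hypothetical names used only for this sketch.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class TransferState:
    # Hypothetical bookkeeping for one transfer on the sending side.
    transfer_id: int
    length: int
    acked_bytes: int = 0  # bytes confirmed so far by transfer ACKs

    @property
    def finished(self) -> bool:
        # A transfer has not succeeded until the acknowledgement covering
        # all of its data has been received.
        return self.acked_bytes >= self.length


def is_unclean_close(transfers: Iterable[TransferState]) -> bool:
    """Closing the TCP connection now is unclean if any transfer is unfinished."""
    return any(not t.finished for t in transfers)
```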

J

We can move on. So, the reason code: if there's a transfer in progress and you send the shutdown with a reason code, the only one that's relevant is resource exhaustion. But then the draft says that you should try to finish your transfers, and that doesn't quite make sense to me. The other thing was that you can put a reconnection delay in your shutdown message, and you could send zero, which means never reconnect. So I wonder if that is a sensible thing to do or not. That kind of covers my point three.

K

Scott Burleigh, on these two points. I think it's a good point about transfers in progress when the reason code is resource exhaustion: does it make sense that you're allowing the transfer to finish? I think maybe we can recast that as anticipated resource exhaustion, meaning we have to allow this transfer to finish. And it's possible that I'm actually out of resources altogether and I'm going to throw the whole thing away, but that's my business, not yours, so I think it's okay. On the last point, I think that the never-reconnect...

G

Rick Taylor, responding to Scott's comment. I think sending zero, "never reconnect," is dangerous for two reasons. One, I think it might be abusable. And two, I think somebody might look at that and go, "Hey, I can do routing with this. I can say: I don't want you to send me that kind of data anymore," and sneak it in through the back door. And we're trying to trim stuff out of TCPCL that can be abused for other purposes.

H

Taking a note to remove zero as a valid value for the reconnection delay, and anything that's related to the zero value. I don't have any problem with that. And then, on the resource exhaustion reason code, I believe that was really just intended to be a catch-all. I don't believe that code was in v3 of the CL spec; I think it was added just to cover the whole range of "I don't want to accept a connection" or "I want to shut down a connection." It doesn't really say what resources are being exhausted. It could be that the total number of TCPCL sessions a node has established hit some limit, or went above some soft threshold, and, you know, "I'm closing this session because I've run up against my limit of how many sessions I should be allowed to have," that kind of thing. It doesn't necessarily mean my disk is full or my RAM is.
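The action item here, removing zero ("never reconnect") as a valid reconnection delay, amounts to a validation rule when decoding a shutdown message. In this sketch the `ShutdownMessage` name and fields, the use of seconds as the unit, and raising `ValueError` are assumptions for illustration; the reason code is kept as an opaque string because the set of codes was still under discussion.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ShutdownMessage:
    # Hypothetical decoded shutdown message.
    reason: Optional[str] = None           # e.g. "resource exhaustion"
    reconnect_delay: Optional[int] = None  # seconds; None means "not given"

    def __post_init__(self) -> None:
        if self.reconnect_delay is not None and self.reconnect_delay <= 0:
            # Zero previously meant "never reconnect"; per the action item it
            # is no longer valid, and negative delays are rejected as well.
            raise ValueError("reconnection delay must be a positive number of seconds")
```

Leaving the delay out entirely stays legal in this sketch, so a peer can still shut down without asking for any particular back-off.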

G

Rick Taylor. I think with the removal of segmentation, and the fact that you can no longer shut down within a transfer, a lot of these boil off. I actually think some of the shutdown codes are really aborts anyway: if something complex has happened that means I can't recover, you've probably aborted before then. So my personal opinion is that some of these can be trimmed down to things the other end can actually do something with.

G

Would people be interested in a virtual interim meeting, to just try to stay on top of this and make