
From YouTube: IETF103-BMWG-20181108-0900

Description

BMWG meeting session at IETF103

2018/11/08 0900

https://datatracker.ietf.org/meeting/103/proceedings/

A

A

B

Right, I think we'll get going, because we've got a fairly full agenda, and we've also got somebody supposedly joining us remotely who didn't want to stay up all night. So we'll get going here. Good morning, everybody. I'm Al Morton, one of the co-chairs of the Benchmarking Methodology Working Group; that's the session you're in this morning. My co-chair, Sarah Banks, is unable to join us in person, and I don't see her on remote access yet, but I see that our second presenter has joined us via Meetecho.

B

So that's going to work out great, timing-wise. If you're new to BMWG and you'd like to join the working group, especially the mailing list, there's a link right here on the first slide; you can go there and join it quickly. Who's new to BMWG? Raise your hands. Oh, four or five people. That's great! So you know the drill:

B

B

Read some drafts, make comments on the mailing list, and you'll be one of us before you know it. When you read a draft, you'll find that the fundamental references are usually pointed out for you right in there: RFC 2544 and RFC 2889 are some of the key ones. Those are the ones we're actually looking at improving in the future, and you'll see some of that work today. So welcome to the group, those of you who haven't attended before, and to those of you who have, welcome back. All right, let's get going here.

B

I can put this thing in full screen, actually. Here we go, and I can use the clicker as well. So here's the Note Well. One of our rules is basically that any contribution you make at the microphone, anything you say at the microphone, is a contribution, and it's subject to our IPR disclosure rules. If you have IPR in something that you're presenting today, please disclose that. If you have any questions or want more details, you can talk to me, or you can read any one of the BCPs listed there.

B

So there's plenty of information about our IPR disclosure process. Here's our agenda. The blue sheets are going around; please sign in, especially today, because we seem to have a smallish group. You mean we're not going to do Jabber today? I've offered people other ways to join us, and we've had some trouble with that as well.

B

B

Here, we'll keep this out in the audience so you guys can hand it around. On the agenda: interaction with the ETSI NFV TST working group, in terms of a liaison over the last few months. I'll just quickly mention that they've reached publication on their standard. Then we're going to have the "Benchmarking Methodology for Network Security Device Performance" presentation from Samaresh Nair, who has joined us; he's going to be making this presentation remotely. Thank you for doing that, Samaresh.

B

B

So after that, we're going to look at a couple of continuing proposals: the updates to the back-to-back frame benchmark. One of our colleagues, Yoshiaki Ito, has been doing some work in this area; he's very prolific in his lab testing and has shared a few slides with us. He's unable to join us, but I'll share the slides and get your feedback on them. We have a couple more up.

C

B

Okay, okay, that's good! That's good, because we're coming up to you [inaudible]. Very good! So, let's see here. Yes, then we get to the new proposals, which are actually going to be presented by Ole, who's here in front. Maciek and Vratko are the authors, but neither of them could make it at this hour in a European time zone, so Ole is here to stand in. Much appreciated.

B

The last topic is a liaison from ITU-T Study Group 12, which was actually sent to the IPPM working group, but there's overlap between the work described there and our work here, so I've decided to spend a few minutes on that; I think it would be useful for us, assuming we have time. Also, if there's time, we may have other topics brought up: maybe you have some new proposals, and so forth.

B

D

B

B

So

thanks

for

standing

in

for

Warren

I'm

sure

you

appreciate

okay,

so

we've

got

to

the

point

where,

where

folks

can

go

to

the

microphone

and

bash

the

agenda

or

simply

sit

quietly

and

the

Chairman

will

say

the

agendas

approved

and

we'll

move

on.

Thank

you.

Let's,

let's

just

do

that.

So

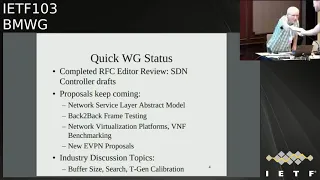

we're

heading

into

the

Working

Group

status.

Now

we've

completed

two

RFC's,

the

SDN

controller

graphs

on

terminology

and

methodology.

We've

got

a

whole

list

of

proposals

that

keep

coming

you're

going

to

hear

about

most

of

them

today.

B

So this is our quick status: new RFCs and lots of proposals. I mentioned that we've also got this liaison relationship going. We looked at this draft through a couple of meetings and didn't prepare any comments, and that's okay, but now it's been published, and I'm actually using it as a reference in one of my drafts, so I encourage people to take a look at it.

B

There's a link to the document in the agenda, basically in the text version of the agenda online, so I encourage you to look at that. It's essentially RFC 2544, our throughput and latency and some other benchmarks, tuned up for the new world of Network Function Virtualization. We've learned a lot of things that we had to improve to make that work more reliable, especially about search algorithms, and there we have really documented some new things based on testing and a lot of collaboration, including with FD.io CSIT.
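For readers new to the group: the baseline that the newer search algorithms refine is the RFC 2544 throughput search, which looks for the highest offered load at which a trial loses no frames. The sketch below is only that classic binary search, not the improved algorithms the speaker refers to; `trial`, `simulated_dut`, the 0.734 loss threshold, and the 0.01 resolution are hypothetical stand-ins for a real traffic-generator harness.

```python
from typing import Callable


def rfc2544_throughput(
    trial: Callable[[float], int],
    max_rate: float = 1.0,
    resolution: float = 0.01,
) -> float:
    """Binary-search the highest offered load with zero frame loss.

    `trial(rate)` is a hypothetical hook: it runs one fixed-duration trial
    at `rate` (a fraction of line rate) and returns the frames lost.
    """
    if trial(max_rate) == 0:
        return max_rate          # no loss even at the maximum rate
    lo, hi = 0.0, max_rate       # assumed: no loss at lo; loss seen at hi
    while hi - lo > resolution:
        mid = (lo + hi) / 2
        if trial(mid) == 0:
            lo = mid             # passed: throughput is at least mid
        else:
            hi = mid             # failed: throughput is below mid
    return lo


def simulated_dut(rate: float) -> int:
    """Toy DUT model for illustration: drops frames above 73.4% load."""
    return 0 if rate <= 0.734 else 1000
```

Calling `rfc2544_throughput(simulated_dut)` returns a rate within one resolution step below the toy DUT's 0.734 limit. Each trial only answers "loss or no loss", which is why the number and length of trials, and how noisy trials are handled, dominate the cost and repeatability of the search.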

B

You know, the systems integration testing at the FD.io project (CSIT), that's VPP, the Vector Packet Processor, and also the OPNFV VSPERF and NFVbench projects. So a lot of collaboration here between ETSI NFV and those groups. We have collaboration going on with OPNFV, and soon with FD.io CSIT as well. So there's a nice community developing here of folks testing and folks writing specifications, and a feedback loop where we're improving everything based on what we all find.

B

So here are our current milestones. We've got one in red, but... Is this the one for next-gen firewalls? It's currently in working group adoption, so I encourage you to take a look at that. Actually, I think the adoption call ends today. We've had some good feedback on that on the list, and we'll be looking for more feedback here today in the meeting.

B

Everything else is in, well, precarious shape, because we haven't adopted any of the other ones, except for the EVPN benchmarking draft there: the third one in the list is adopted. We'll hear about that today, and maybe we can move that along as well. Let's see what the group feels about them.

B

So congratulations to the authors. It's a long list of authors, but we're now effectively done with those, and they're published, so very good work. We're done with our charter update, and we have a supplementary web page you're welcome to take a look at; there's some advice there on how to join the working group quickly. Our work proposal summary matrix is actually going beyond the bounds of one slide.

B

I haven't even tried to update it with the new proposals that have arrived, and what that means is we're going to look hard at the ones that are here and ask: is this really still alive? I'm kind of doubting that SFC is still alive. Back-to-back frame? It's there. Next-gen firewalls? It's there. The VNF proposal? We haven't heard from that in a while; we'll see. So there's going to be a little housecleaning on this proposal tracking, and I think that's worthwhile to do.

B

B

On IETF Last Call: this is a working group that has a laboratory-only scope, so the kinds of things that we do, like absolutely saturating 10GigE or 40GigE links, are not going to have operational or security implications. Once people read these paragraphs in our Security Considerations section, they usually figure out that that's the case; otherwise, a lot of reviewers pick up our drafts and have no idea what our charter is. So it's been helpful, very helpful.

B

B

H

D

H

Thank you, everybody. I hope you're all enjoying the meeting; I'd much rather actually be there. I'm here to present the draft standard for NetSecOPEN, present the current status as it stands, and talk a little bit about what we're doing at NetSecOPEN. My name is Samaresh Nair; I'm a senior product manager working with Palo Alto Networks, and I'm one of the contacts for the NetSecOPEN forum. I'll be happy to present the progress and take any questions, if you have any.

H

H

All right, so currently the status of the draft: it's a draft standard, and we are at version 5. We have done some extensive reviews; sections 1 to 4 have been reviewed, and we will be presenting an update after IETF 103.

H

H

Obviously, the whole project is aimed at next-generation firewall performance testing. What we want to make sure of, first, is that the security inspection functions on the DUT are turned on. Unlike what was sometimes done earlier, which is where the problem starts (testing with no security inspection turned on), the objective here is to make sure that security inspection is done on the DUT. Sorry for the typos, but yeah.

H

H

Next slide. Yeah, so the second step: we have actually put together a CVE list, which is basically the vulnerabilities to test against the device. The criterion we used is basically high severity, which is a CVSS score of 7 to 10, and we've taken the CVE lists from 2010 to 2018. That's really what we consider relevant; anything earlier than that probably is not relevant now. With all of those, we have a list of about 1,200 CVEs that were considered, and we are also using a couple of test tools.
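The CVE selection criteria described here (CVSS base score 7 to 10, published 2010 to 2018) amount to a simple filter. A minimal sketch, assuming each CVE is reduced to a hypothetical `Cve` record with an id, a CVSS score, and a publication year; the sample entries are placeholders, not items from the actual list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cve:
    cve_id: str
    cvss: float   # CVSS base score, 0.0 to 10.0
    year: int     # publication year


def select_candidates(cves, min_cvss=7.0, first_year=2010, last_year=2018):
    """Keep high-severity CVEs (CVSS min_cvss..10) published in [first_year, last_year]."""
    return [
        c for c in cves
        if min_cvss <= c.cvss <= 10.0 and first_year <= c.year <= last_year
    ]


# Placeholder records for illustration only, not real CVE entries.
sample = [
    Cve("example-1", 9.8, 2017),
    Cve("example-2", 5.3, 2016),   # dropped: below CVSS 7
    Cve("example-3", 8.1, 2008),   # dropped: published before 2010
    Cve("example-4", 7.0, 2010),   # kept: boundary values are inclusive
]
```

With these placeholders, `select_candidates(sample)` keeps `example-1` and `example-4`. Both bounds are treated as inclusive here; the transcript does not say how boundary cases were handled.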

H

We have 430 CVEs that we are using for proof-of-concept testing right now. Out of those, we have actually split them into two: 400 CVEs are what we call the public list, and that is shared with all the vendors that are participating right now. So we are basically giving it away; it's like an open book: okay, these are all the CVEs that we'll be testing. We want to make sure that all those CVEs are detected by the security device.

H

The other thirty are kind of a secret list that is kept aside and not shared with the vendors. The idea is that the vendors should not be cheating in the test. The public list gives them all the details, so with the thirty we want to make sure that the vendors are not trying to work around the test by having coverage only for those, or, let's call it, trying to cheat the system with only those.
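One way to picture the public/private split and what it guards against: hold back a subset of the CVEs, then compare the DUT's detection rate on the two lists. A rate on the private list far below the rate on the public list would suggest coverage tuned to the published CVEs. This is a sketch under assumptions: the transcript does not say how the 30 private CVEs are chosen, so the random draw, `split_public_private`, and `detection_rate` are illustrative only.

```python
import random


def split_public_private(cve_ids, private_count, seed=None):
    """Randomly hold back `private_count` CVEs as a private list that is not
    shared with vendors; the remainder form the public list."""
    ids = list(cve_ids)
    if not 0 <= private_count <= len(ids):
        raise ValueError("private_count out of range")
    rng = random.Random(seed)
    private = set(rng.sample(ids, private_count))
    public = [i for i in ids if i not in private]
    return public, [i for i in ids if i in private]


def detection_rate(detected, cve_list):
    """Fraction of the CVEs in `cve_list` that the DUT detected."""
    if not cve_list:
        raise ValueError("empty CVE list")
    return sum(1 for c in cve_list if c in detected) / len(cve_list)
```

For example, splitting 430 placeholder ids with `private_count=30` yields a 400-entry public list and a 30-entry private list with no overlap; comparing `detection_rate(detected, public)` with `detection_rate(detected, private)` then gives the public/private contrast described above.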

H

It's like this: when we run the test for security, we make sure that all of those CVEs are detected. Then the main tests: in terms of testing right now, we are looking at all these tests that you see on the screen. Basically, this is a bunch of throughput tests, session scale and capacity tests, TCP connections per second (CPS), HTTP transactions per second, and things like that. A whole bunch of tests, as you can see on the screen.

H

All right, so those are all the tests that will be run. What are we actually trying to achieve with this? First, this is proof-of-concept testing, which means the test tool vendors have actually created certain profiles to be able to use it. Next, for the NetSecOPEN draft, we want to make sure that the test tools are actually able to do that, which means the draft is kind of ready for testing.

H

You also want to make sure that logistically you have everything set up, which means the test tools and passwords, and that everybody is able to run through this test. We want to make sure that practically there are no issues in running the test. In this whole exercise there are a few players. First of all, there are three labs actually participating: EANTC, [inaudible], and UNH-IOL. These three labs are mainly using two different test tools, namely Ixia and Spirent.

H

H

H

We want to make sure that we review them and make some adjustments if necessary, and then we should have an updated draft around early December; we will submit the updated draft to the Benchmarking Methodology Working Group in early December, once ready. In terms of the results: since this is just a proof of concept, the testing is not like a public test, so we do not want to actually publish these results.

H

It's kind of confidential, and that's why we're not publishing the results as such; it was requested by the test participants. Then, certain differences between the test tools have been identified: the tools here work a little bit differently. So we have looked at all of those, certain differences were found, and we're making sure that the overall objective is still met, regardless of the differences.

H

H

I

C

B

Anybody else? Any comments on the draft? It's a pretty big draft, actually. Yeah, yeah. So, okay, well, I'll be considering this topic with my co-chair, of course. Sarah hasn't joined us yet, but hopefully she'll join us during the session, and then we'll consider the results of the call for adoption and let the working group know. Samaresh, a question I had, since no one's rushing to the microphone here, about the proof-of-concept testing.

B

I

Sorry, your question was very hard for me to hear. I couldn't... I'm sorry. All right, yeah. So we will be reviewing all the results, and based on the issues found, if any, we will update the draft, and we will provide you with the changes made between the previous draft and the new draft and, per your request, the anonymized feedback.

B

B

B

H

Before that, the previous comments: topology expansion, which was a comment from the last IETF; then test case details and details of the environment; and inclusion of EVPN and EVPN-VPWS benchmarking. Then we had a discussion with different test teams, and because we cannot compare EVPN and VPWS (it's not an apples-to-apples comparison), we floated another draft, because it was a need coming from the community.

H

C

H

H

That's local as well as remote, because in EVPN, you know, MACs that are learned locally are advertised via BGP, so the learning is different. We have MAC learning, local and remote; then MAC flush; then MAC aging; then high availability; then ARP scaling; then scale, and scale with convergence: how fast the convergence is, and, you know, avoiding flooding in the network, because flooding is very dangerous: it's going to choke the bandwidth.

H

So these are all the tests. And then convergence is a common test: the convergence test, the soak, and high availability. High availability is just a failover test; the ideal case is zero packet loss, but there will be some packet loss when you do the routing engine failover. Then the soak test, which will be running over a period of time, either 24 or 48 hours. Normally we'll be running it for 48 hours, and the expectation is no cores, nothing. Yeah, questions?

K

H

This is, you know, a service, so it will be testing both the control plane and the data plane. Data plane: as in RFC 2889, the data-plane learning rate; as well as the control plane, the BGP advertisement. That is where the MAC learning through the remote side comes in. So MAC learning is the first parameter, which checks the local learning as well as the remote learning; both will be covered, because BGP adds serialization delay: it advertises the MACs as Type 2 routes to the DUT.

H

K

H

H

B

C

B

J

B

B

But if there are any additional comments folks would like to make now, we can do that. Otherwise, you know, I'd invite some of the new folks who are interested in this technology to read the draft and provide comments during working group last call in BMWG. Sometimes we have several working group last calls to kind of stir up the comments; that's happened in the past.

H

K

H

Type 5 is not included, because it's a separate draft: Type 5 by itself is not part of the EVPN RFC, so that is not added. But MAC plus IP, that is, ARP, and for IPv6, MAC plus IPv6, that is covered, because it's one of the parameters: the scaling of it is covered. So, at least from my perspective, I would suggest Type 5. It was asked, you know, earlier as well.

H

Also, there was a big discussion about Type 5 with others as well. One of the authors of RFC 7432, Jim, also said: why would you add it? It's a separate one; the prefixes have gone into a separate draft, so why is it needed in this? That is the reason: initially we added it in, I think, version 6 or so, and after pushback from one of the authors of the RFC, that's why we pulled it out.

H

K

K

H

The route, because it's still in the draft phase, we could not define. Let me see; we already cover it in a generic way. As I said, I agree with you. We already included it, then we excluded it after comments from the author, along with Ali, so I will work on it to reach a middle ground on that, I promise you. But Type 6, no, because that's a multicast one. This is purely on, you know, only RFC 7432, because that is how the scope is defined. That's why we could not go beyond that; we had to box in the parameters.

H

K

K

H

Is EVPN benchmarking, you know, for MPLS? It is currently defined that way, and the same parameters you can apply for IP, because EVPN doesn't change; only the underlay changes, like VXLAN. Excellent. And now I think Geneve is also coming up. So we defined the parameters there irrespective of the underlay. We tested in our lab with MPLS; we can have VXLAN also, and the same thing works fine; we have tested that too. But now Geneve is also coming; it's in the draft phase.

H

I checked with the authors; they're telling me it is going to come as an RFC. So, you know, the overlay is like a container in which you keep the payload. So what is the payload, and how are we going to define that payload? That is what we are defining here. So, irrespective of the container, you can have MPLS as a container, or you can use, you know, a transport overlay such as VXLAN, or tomorrow Geneve, which is coming. So I think... I agree.

K

I

K

H

Both the deployments... That's why somewhere we have to box it in, because of this RFC. If you go into the underlay, it will become very big. So Type 5 we will try to squeeze in as an option into this; we will see, because there was a lot of discussion, I think in Chicago or so. So this was a discussion that was raised. Then Jim, as I said earlier, was one of the co-authors of RFC 7432.

H

So he said that, and that's why we pulled it. So we made it generic, as ARP, in the scaling, and this I will consider here; I take it positively. The [inaudible] issues and Type 6 we will float as a new draft, because currently this is beyond the scope of RFC 7432. As you are aware, in the BESS working group these are still going as drafts, so we will consider that as a new one. Let me note this. Thanks. Thank you so much. Yeah, thanks.

H

Six and seven are also... because, I'm not aware of the multicast Type 7, because they have defined a new draft for multicast, and in that draft they define those EVPN route types. In normal RFC 7432, four route types are there: 1, 2, 3, and 4. Then they added Type 5 in a separate one, for prefixes, and these for multicast. Okay, thank you.
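The scope argument in this exchange turns on which EVPN route types RFC 7432 itself defines (Types 1 to 4) versus those added by separate specifications (Type 5 for IP prefixes, Types 6 and 7 for multicast). A small sketch of that scope check; the enum member names follow the route names used in RFC 7432 and the later specifications, while `in_benchmark_scope` is a hypothetical helper, not part of any tool.

```python
from enum import IntEnum


class EvpnRouteType(IntEnum):
    ETHERNET_AUTO_DISCOVERY = 1            # RFC 7432
    MAC_IP_ADVERTISEMENT = 2               # RFC 7432 (carries MAC and MAC+IP)
    INCLUSIVE_MULTICAST_ETHERNET_TAG = 3   # RFC 7432
    ETHERNET_SEGMENT = 4                   # RFC 7432
    IP_PREFIX = 5                          # separate prefix-advertisement spec
    SELECTIVE_MULTICAST_ETHERNET_TAG = 6   # separate multicast (IGMP/MLD proxy) spec
    MULTICAST_MEMBERSHIP_REPORT_SYNCH = 7  # separate multicast (IGMP/MLD proxy) spec


# Route types defined by RFC 7432 itself: the scope the speaker describes.
RFC7432_ROUTE_TYPES = frozenset({
    EvpnRouteType.ETHERNET_AUTO_DISCOVERY,
    EvpnRouteType.MAC_IP_ADVERTISEMENT,
    EvpnRouteType.INCLUSIVE_MULTICAST_ETHERNET_TAG,
    EvpnRouteType.ETHERNET_SEGMENT,
})


def in_benchmark_scope(route_type: EvpnRouteType) -> bool:
    """True if the route type is defined in RFC 7432 itself."""
    return route_type in RFC7432_ROUTE_TYPES
```

Under this framing, the ARP and IPv6 neighbor scaling discussed earlier stays in scope because it is carried in Type 2 (MAC/IP Advertisement) routes, while Types 5 to 7 fall outside it.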

B

H

I'd love to get their deployment input, because I always follow the deployments and base things on their input, solely because that's how, you know, you get an apples-to-apples comparison. So how do you kind of box in certain things, so that you plug in your DUT, test, and get: hey, this is giving you this much? Having this, as a provider or as an implementer, you will get the benefit.

H

B

H

Thank you. So this is the next draft, on EVPN-VPWS. At the last IETF, in Montreal, one of the co-authors of RFC 7432, Jim, asked, as one of the comments I mentioned, to incorporate this into the draft. I discussed it, and I have concurrence with the community as well as the chair not to, because it's not an apples-to-apples comparison. So this is the reason.

H

We floated a new draft on this, to benchmark EVPN-VPWS. EVPN-VPWS is a fairly new RFC, RFC 8214, and we can say there are a lot of benefits in this particular RFC, because, I mean, VPWS has been in the metro for a long time, and in service provider networks for a long time.

H

One of the basic drawbacks of VPWS is that there is no active-active forwarding: one will be your active forwarder and the other will be hot standby, so as an implementer or as a customer you cannot use both links for forwarding. That is one drawback.

H

You cannot have both links utilized, and these are all the kinds of problems that were faced. Then, once EVPN came, it became a kind of mothership, and a lot of other things started gluing into it; it keeps growing and growing. So now almost all of the providers have adopted these EVPN technologies, and most of the conventional ones that were using legacy systems are now coming into the EVPN days. So BESS is working on a lot of things on this.

H

So I request a lot of the developers, I request the community, to just keep tabs on this if you are interested in this technology; it's discussed extensively in the BESS working group. As I said, one of the capabilities is load balancing, which you can utilize from the remote PE. Also, you can send traffic to, you know, the same customer site based on the multihoming features, and there are a lot of benefits that give advantages to the customer, and it will reduce OPEX.

H

Otherwise, you will be wasting one link unnecessarily, and you are wasting your dollars. To avoid that, this is one of the capabilities: you use both links, so you get the benefit of it. That is VPWS traffic. It's a relatively short, 16-page RFC, a pretty good one. So we defined certain parameters. Since it is an E-Line service, it doesn't have the learning capability: whatever comes in, take it through the pipe and send it out. So we cannot have learning; that was one of the challenges.

H

We cannot have learning, MAC learning or anything, in the service provider edge router. Whatever comes in, just take it and put it in: it's like a pipe! So how do we... That was a challenge when we were testing in the lab: how do we benchmark this? How do we performance-monitor this particular service between different boxes, say from vendor X, vendor Y, and so on? So these are the parameters: when we tested it, we jotted them down, and we said, okay, let's generalize certain parameters on this.

H

This is the local link failure test: in a multihoming scenario, how fast it will switch from one PE to another. It depends on the routers, so for the timing we take the average: we repeat the test and get an average on it. Then the core link failure: how fast the remote PE will switch over.
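The failure tests described here repeat a failover and average the result. A common way to turn the observed loss into a time is to divide frames lost by the constant offered rate, similar to the loss-derived method in BMWG's IGP convergence benchmarks (RFC 6413). A minimal sketch, assuming constant-rate traffic through the DUT and per-trial loss counts reported by the tester; the function names are hypothetical.

```python
from statistics import mean


def loss_derived_convergence_s(frames_lost: int, offered_rate_fps: float) -> float:
    """Seconds of outage implied by `frames_lost`, assuming a constant
    offered load of `offered_rate_fps` frames per second during the event."""
    if offered_rate_fps <= 0:
        raise ValueError("offered rate must be positive")
    return frames_lost / offered_rate_fps


def average_convergence_s(losses_per_trial, offered_rate_fps: float) -> float:
    """Average the loss-derived convergence time over repeated trials."""
    return mean(loss_derived_convergence_s(n, offered_rate_fps)
                for n in losses_per_trial)
```

For instance, three trials losing 1,000, 2,000, and 3,000 frames at 100,000 frames per second average to 0.02 s. Reporting the individual trials alongside the average keeps run-to-run variation visible, which matters when the timing "depends on the routers" as the speaker notes.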

H

You know, from the earlier primary to the new primary, and how fast it comes up, to avoid packet loss. Then link flap: the link goes up and down, and we see how fast the DUT recovers. These are all tested based on single-active, because in active-active there is no DF election, so both will be forwarding, but in single-active you have the primary and backup concept, as you'll see if you go through the draft.
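In single-active mode, which PE forwards for a given service is decided by Designated Forwarder (DF) election. A sketch of the default, modulus-based procedure from RFC 7432 (Section 8.5): the PEs on the Ethernet segment are ordered by IP address, and for Ethernet tag V the DF is the PE at index V mod N. Preference-based DF election, mentioned in this talk, is a separate proposal and is not modeled; the addresses are documentation-range placeholders.

```python
import ipaddress


def default_df(pe_addresses, ethernet_tag: int) -> str:
    """Default (modulus-based) DF election, as a sketch of RFC 7432 Section 8.5.

    PEs attached to the Ethernet segment are ordered by IP address in
    increasing numeric order; for Ethernet tag V the DF is the PE at
    index V mod N.
    """
    if not pe_addresses:
        raise ValueError("no PEs on the Ethernet segment")
    # Numeric ordering: 192.0.2.2 sorts before 192.0.2.10 (unlike string order).
    ordered = sorted(pe_addresses, key=ipaddress.ip_address)
    return ordered[ethernet_tag % len(ordered)]
```

When a link flaps and one PE leaves and rejoins the segment, N changes, the election is rerun, and the DF can move away and then move back; that churn is where the loss measured in the link-flap test comes from.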

H

This test is only done on single-active scenarios. So, the link flap: when you flap the link, the primary goes down, and when it comes back up it becomes the primary again: the re-election takes place again and the primary kicks in again. So there will be, you know, a gap, whether you have preference-based DF election or the default, and there will be some packet loss. How fast it converges is what we are measuring.

H

Then, normally in VPWS, one thing is adding services, as in a service provider network. You add services for, say, 15 customers or 150 customers, with automated testing: writing scripts, adding them, and deactivating them. If they didn't pay the bills, you deactivate them; this is automated testing. So you deactivate the customers, and once they pay, you activate them again. The expectation is that the services come up, the packets flow, and the existing services are not affected. And then the scale convergence.

H

H

Then high availability: it's a common test in BMWG. You fail over the active routing engine while it's running non-stop forwarding: you flap it, or you reboot the primary routing engine, so the other routing engine takes over. The ideal case is zero loss, because ideal is ideal, and practically you will see a few packet drops. Then the soak test.

H

The full-blown system runs with traffic at multi-dimensional scale over a period of 24 to 48 hours, and the expectation is no cores, no crashes, no memory leaks. We have automated tools to check that: they poll frequently, every hour, for the status, and give us the result at the end of the day. So, thanks Al and Sarah for the support. Next step: please comment on it.
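The soak-test checks described here (no cores, no crashes, no memory leaks, with status polled every hour) could be summarized as below. This is a sketch only: the `SoakSample` fields, the least-squares memory-growth check, and the 1 MB/h threshold are assumptions, since the speaker's automated tools are not described.

```python
from dataclasses import dataclass


@dataclass
class SoakSample:
    hour: int            # hours since the soak test started
    mem_used_mb: float   # control-plane memory in use
    core_files: int      # cumulative core dumps seen so far
    crashes: int         # cumulative process crashes/restarts


def slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    num = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    den = sum((x - mx) ** 2 for x in xs)
    return num / den


def soak_verdict(samples, max_growth_mb_per_hour=1.0):
    """Return the problems found in hourly soak-test samples
    (an empty list means the run looks clean)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    first, last = samples[0], samples[-1]
    problems = []
    if last.core_files > first.core_files:
        problems.append("new core files")
    if last.crashes > first.crashes:
        problems.append("process crashes")
    growth = slope([s.hour for s in samples], [s.mem_used_mb for s in samples])
    if growth > max_growth_mb_per_hour:
        problems.append(f"memory growth {growth:.2f} MB/h")
    return problems
```

Fitting a slope over all 24 to 48 hourly samples, rather than comparing only the first and last reading, makes the leak check less sensitive to a single noisy poll.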

H

H

H

H

Currently, because it's a test setup, I have only one peer, and we have tested it with one peer; it's a test setup, right? So with one peer I have, you know, two multihomed PEs acting as the same Ethernet segment, one single-homed PE, which serves as the remote single-homed PE, and one route reflector.

H

The problem in the current scenario is that the router tester, such as Ixia or Spirent, has to support that many peers. I think Ixia supports it for EVPN-VXLAN: we can automatically synthesize the number of peers. But in MPLS, when you generalize, we need a physical box, so that's why we built the topology like this. For EVPN, I think the latest Ixia IxNetwork version has a synthesizer where you can set the number of peers, and it supports the VXLAN payload.

H

VRF: it is there. It's part of the parameters, and the number of VRFs is one of them. That's the scale convergence; it is part of it. Very nice. And VNI as well? Yeah, that does VNI. We are not exclusive: as I said, it works on both EVPN and VXLAN, so VRF scaling is there. So you might want to consider both. Which one? Yeah, because the VRF, as I said, can take the next hop, where the label will be provided by the overlay.

H

The overlay versus the underlay would be nice. I didn't get you on that, because the VRFs come in the overlay. So yes, as I said, overlay VRFs are considered, and the underlay will be independent. As I said earlier, it is independent of any encapsulation.

H

VNI, or you can have EVPN-MPLS; that is independent. So VRF scaling is there, and scale convergence is a parameter. You can read that. It doesn't mention it clearly. Thank you, I will take that into consideration. We cannot have a large number of peers in this test; currently I'm handicapped in that. Why? Because with Ixia or the Spirent TestCenter, you have to emulate them.

H

That kind of emulation is not there. I can have a BGP peering and fix it here, so that is the challenge I have. As stated in there, this is a lab setup, so you define: this is my topology. As I said, it's the overlay we are looking into. The underlay we make a fixed payload, and these are certain parameters we have set on the underlay and overlay.

H

We are scaling it, and we are defining the parameters to do that. Now, I will definitely work on it in my lab and get back to you. On type 6 and type 7, as I said just now, I have never tested them. It's a relatively new thing, so I'll definitely get back to you, possibly with a separate draft for that. Let me go through it. As I said, I have to confess I didn't test it; it's really a new one. So.

H

I will look into type 5 for this, Al, because it's a need: it's in deployment. I'll try to execute use cases for it. I'll work towards it, because I have a commitment; let me see how best I can execute it. So that's it about this draft. So I'll ask you a question: this is all tested with traffic, right? With traffic, yes: northbound, southbound, and bidirectional traffic. Good.

B

The next one is from your chair, acting as a participant, and my colleague Jim Uttaro from AT&T Labs. I economized on my time here and did not prepare slides. Here's why: we've only got two things that we're trying to benchmark in this multihomed EVPN scenario, and I'm going to flip ahead to what they are. We're going to do some throughput and other tests on the Ethernet segment, which is this part of the figure right here. This is another test for EVPN, but then our next plan...

B

I really welcome sharp readers, folks with EVPN experience, to take a look at this and tell me where I messed up, because I'm sure I did, and we'd be happy to fix it. But these are a little different from the kinds of things you've done, Sudhin, so we might progress this as an individual draft.

H

All the remote routers in EVPN-VXLAN are present, so what are we going to do? What are the parameters you are going to use? Because when you get rid of one, the other PE will take over as the active forwarder, sorry, the designated forwarder. So what are the parameters you are defining in this? I will.

H

What would you like to say? I'm putty in your hands. Okay, yeah. What is the actual deployment like? A link flap happens, like the mass withdrawal, and then it has to clean up the old entries. The forwarding next hop should point to the new one immediately. How fast is it pointing to the next router and reducing the flood? Flooding is a dangerous thing in a production network, so the longer the flood...

H

You know, we withdraw it and clean up, because one PE is down. Once we're told one PE is down, what kicks in is the mass withdrawal. So the forwarding table is cleaned up immediately, switches to the new one, and starts relearning; that is the delta. You have some packet drops coming in, and then the relearning, which will cut down the flood. Okay.

B

Yeah, I confess that we used even the extra day on draft submissions, because that was the only day Jim and I could get together. So we pushed the envelope to get this thing put together for you. But thanks for your comment, Sudhin. Very good, great. All right, I'm going to make a quick note of that here.

B

Okay, okay, yeah. In fact, I'm fairly sure that I didn't do the throughput test with a 50/50 kind of split, where it could all be restored in the next step of the procedure. In other words, let's say in this picture PE1 goes down, and the traffic is split across the ESI. Yeah.

B

Right, all right, on to the next. So now we're in the continuing proposals. We did all of the EVPN stuff together, and two of those drafts were brand new, just to get everything EVPN done at once. So now we're looking at the updates for the back-to-back frame benchmark. This one stands on its own: this is the case where we're updating the RFC 2544 back-to-back frame benchmark.

B

Where, in a single-port to single-port relationship, the device under test can't transfer all the traffic (in other words, there's a limitation at small frame sizes, typically 64 octets or 128 octets), those are basically the cases where you can't get full single-port to single-port connectivity. In physical devices today this is not an issue, but it's still an issue in the world of virtualized networking devices. So that's a good reason to update this.

B

So, like I said, it's the RFC 2544 update. We're testing the extent of data buffering in the device. The original procedure in RFC 2544 was very concise, by which I mean really short and sweet; we're doing a lot more with it here now. We ran some tests in 2017 under the OPNFV VSPERF project, and those indicated some areas where we needed to refine the calculations.
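The link between a back-to-back frame count and buffering runs through the rate at which the burst was sent. The sketch below is a first approximation and makes two assumptions. First, Ethernet framing adds 20 octets of per-frame overhead on the wire: 8 for the preamble and start-of-frame delimiter, and 12 for the minimum inter-frame gap. Second, it uses a simple "implied buffer time" equal to the average back-to-back frames divided by the maximum theoretical frame rate. The function names and the 30,000-frame measurement are hypothetical. The refined calculations the updated draft proposes may differ.

```python
def max_frame_rate(line_rate_bps: float, frame_octets: int) -> float:
    """Theoretical max Ethernet frame rate: each frame also occupies 20 octets of
    preamble, start-of-frame delimiter, and minimum inter-frame gap on the wire."""
    return line_rate_bps / ((frame_octets + 20) * 8)


def implied_buffer_time(avg_b2b_frames: float, line_rate_bps: float, frame_octets: int) -> float:
    """Seconds of line-rate burst the DUT absorbed before the first loss.

    First approximation only: it ignores the frames the DUT forwards
    while the burst is still arriving.
    """
    return avg_b2b_frames / max_frame_rate(line_rate_bps, frame_octets)


rate = max_frame_rate(10e9, 64)
print(f"{rate:,.0f} frames/s")  # 14,880,952 frames/s
# Hypothetical measurement: 30,000 back-to-back 64-octet frames at 10 Gb/s.
print(f"{implied_buffer_time(30_000, 10e9, 64) * 1e3:.3f} ms")  # 2.016 ms
```

Expressing the result as time rather than as a frame count makes it easier to compare across frame sizes and line rates.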

B

So, besides calculating the number of back-to-back frames that we can launch into a device without loss, which is an indicator of the buffering that's available, we're going to measure that in terms of all these different statistics. But as I was explaining to Barak and during