
From YouTube: IETF106-OAUTH-20191120-1330

Description

OAUTH meeting session at IETF106

2019/11/20 1330

https://datatracker.ietf.org/meeting/106/proceedings/

A

Yeah, we need to get started, and at the moment [inaudible] the bridge. So, welcome to our working group meeting. I handed out the blue sheets already, so they should be circulating around. Please sign. Tony is kindly doing the meeting minutes. Do we have someone logging in... Tony? You don't know what I'm talking about.

A

A

This is the agenda for today. We'll quickly have a look at the working group documents in terms of status, and Roman, sitting next to me, will also give you a short update on those. We had a virtual interim meeting not too long ago where we actually ran through those and checked the status, so we are moving things along pretty nicely.

A

B

Hi. Yeah, so again, I'm helping out here since Rifaat couldn't make it. We have a bunch of documents with the IESG or past that process; we have two in IESG evaluation. There are some blocking DISCUSS positions that those particular ADs know they need to resolve. I have spoken with them, and they're committed to looking at them; I'm trying to press them to accelerate that review. Likewise, we have several documents already in the RFC Editor queue, so they are making their way through the process required for publication.

B

A

A

A

C

As Hannes just mentioned, there was a call on the 4th of November where we talked about how to move that draft forward. An attack had been described in the OAuth session in Montreal, the PKCE chosen challenge attack, so the question was whether we want to wait until a solution is found for that kind of attack, or whether we push the BCP forward and publish it.

C

The sense of the call was that there is a lot of good stuff in the BCP as it is right now, so we should move it forward and subsequently start on a new version of the BCP, which will also incorporate the PKCE chosen challenge attack. That's why the chairs announced a working group last call on the 6th of November, and since that time a lot of people have reviewed the BCP. Thank you very much for that.

C

Most feedback was editorial. Hans and also Mike put up some topics that were not addressed earlier but are normative. We will take a look into that; thanks for that, Mike. The Alice and Bob collusion attack was also brought up again; we discussed it, I think, two years ago and decided it's out of scope for the BCP. There's one open issue that Hans raised. I will talk about that on the last slide, because I just want to get through, and then we have time to talk about it.

C

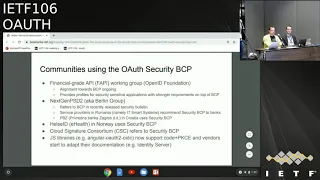

The chairs asked me to come up with a list of people who already use the security BCP, have reviewed it, or like it. So this slide contains a list of communities, people, and companies I'm aware of that already use the security BCP, just to underpin the importance of that work and to get it out of the door. To start with, there's the Financial-grade API (FAPI) working group at the OpenID Foundation, which does security profiles for security-sensitive applications.

D

D

C

In the sense that they are applicable to their context. We can dig into details if you are interested, but I think for the most important parts, which means authorization code and redirect-based stuff, sender constraining, and so on, they are fully aware of all recommendations, like them, adopt them, and live up to them. Does that answer your question?

C

Okay, as I said, that's just a list of people that use the security BCP; I can put you in contact with them if you want to talk about the details. As said, the Financial-grade API working group does security profiles of OAuth for security-sensitive applications, no longer only for the financial services industry but also for other areas, and alignment with the security BCP is ongoing.

C

As we are speaking, one of the initiatives in the open banking space, NextGenPSD2, also known as the Berlin Group, recently released a security bulletin that recommends all financial institutions implementing OAuth as the authorization mode for their API to consider and follow the security BCP. I also had discussions with concrete service providers and financial institutions in Croatia and Romania that use the security BCP. There's also a project in Norway in the eHealth space that uses and recommends the security BCP, and I also work with an initiative in the area of electronic signing, which recommends in its specification to use, follow, and align with the security BCP when implementing and deploying that API. Okay, yeah, and what you also kind of see in, for example, the Twitter space is that JavaScript libraries are going to adopt our recommendations around using PKCE and code for SPAs, and vendors are also starting to adapt their documentation to follow the recommendations that we give in the security BCP.

B

Hi, Roman here. I just wanted to thank you for pulling together that list. Very often we make drafts that we label BCP, and sometimes it's not clear what the basis of that experience is. So it's really nice to see a long list of organizations that it's coming from, even if we do have to refine the precise mapping from organization to, kind of, the write-up. So thank you for pulling this together.

E

C

So if the chairs want, we can maintain a list. I just wanted to give an example of communities that I directly work with, so I didn't really reach out to the community to get a complete list; I think that would be much longer. Absolutely, I mean, this would be perfect to include in the shepherd write-up, if we can.

B

C

A

C

Regarding the introduction, one has to admit and keep in mind that the document is, in the end, really old. We started to work on the document early, I think in 2016, and it has some legacy from that time. At that time it was more a kind of laundry list of the open security topics we had, and it then developed into a security BCP.

C

So we will clean that up, clean up the language, and thanks a lot, especially to Hans and Mike, for giving really detailed feedback, even regarding editorial issues. It will take us some time, but I think we will come up with a much more readable proposal, and we will also try to make the recommendations more concise, though potentially not as concise as Hans wished.

C

F

F

There are some references to other documents, documents that are expired or just in draft form, that are sort of like "see this for more information about the mix-up attack" or something. As part of that restructuring and work on the document, if you could either remove those or significantly downplay those references, so that it's not possible to mistake them for normative recommendations if it's just background, I think that would be helpful as part of the overall restructuring.

C

G

G

The terminology of IETF documents and the exact explanation of those terms aside, I would like a security best practice to be about how you must do this right. It should be stronger than just a "should". It's the best practice: you can choose to ignore it, but if you follow the best practice, it's a must. That would be my point about this.

C

D

H

I just want to echo what Tony said: I think it's not a blanket statement, also because the implication has an effect on the hybrid flow in OpenID Connect. I think we need to take this decision very seriously, because it does have far-reaching implications for deployments and products, and because there are ways to secure it, I don't think that we should make it a must.

C

H

G

So my feeling and understanding is that implicit was an optimization in its first iteration anyway, right? And I would like to get rid of the optimization, because it's less secure, and stick with the authorization code flow, because you can, and that's the way to secure things, not by fixing implicit.

C

C

Yes, I already see that, but the hybrid flow is not vulnerable to that. But we are talking about the implicit grant here, and even the OpenID Connect hybrid flow has no means of creating sender-constrained tokens. So from an architectural perspective, we should get the message across that the future is the code plus PKCE flow, not implicit.

E

Aaron Parecki. I just want to echo pretty much everything Torsten said; I was about to say the exact same thing. The implicit flow: the history was that it was created when there was no alternative. There are now alternatives in browsers, so we don't need it. It was always a hack; everybody knew it was a hack. We don't need the hack anymore. Let's get rid of it.

E

Just simplify things. And as far as OIDC is concerned, I don't see any advantage to using the hybrid flow when you could just get both tokens in response to the auth code exchange anyway. So it seems like it's no worse off for OIDC either. So yeah, a hundred percent support: I don't see any reason that it should be part of OAuth anymore.

D

Tony Nadalin. You can flip it up. So, you know, it's there. People have used it. That's why it probably should be "should not", okay? Because people have deployed it, are using it, and are using it safely, and you just can't say "don't use it at all", because it was in the specification. Okay? And so I can see this a couple of years down the line, to say "must not", but not to turn it off right now. That's ridiculous!

C

H

I agree with what Tony said, but also responding to Aaron's point there about the hybrid flow: I know this isn't an OpenID Connect working group, but in any event, the ID token issued on the front end and the ID token on the back end could have different claims; they could carry different information. So you have to consider that also in this, I think.

H

E

I could see that going both ways. Honestly, I think that because it is a BCP, it fills the role where it's okay to have these sorts of stronger statements, because maybe you decide not to follow it and you're still compliant with RFC 6749. As for whether there is a separate document, I'm about to talk about that in a minute anyway, so I'll address that point later. Any other opinions?

I

J

Dick Hardt. A little history on the implicit flow: it got added into OAuth because Facebook wanted something optimized for being able to show some context in a page where the client really didn't have any identity, and they wanted to show some stuff. So it kind of made a little bit of sense to be able to do something where it was sort of a low-level authorization.

A

Okay, I will do a hum on the "should" vs. "must" aspect, and Roman is going to judge the feedback from the middle of the room. So there would be two questions: the first one is whether the statement here on the slide should say "should not", and the second one whether it should say "must not". Okay, so...

E

E

A

C

C

K

B

A

G

C

Didn't hear anything from [inaudible]. All right, there's one last slide: a new OAuth Security Workshop is coming up in 2020, and I would like everybody interested in having really substantial discussions about OAuth security to consider going there. This year we met in Stuttgart; that's the left-hand picture, and you see all those smiling faces. I think everybody really enjoyed the discussions there, because those were two days fully packed with topics around the topic.

C

C

E

All right, hi, I'm Aaron Parecki. I'm going to talk about OAuth for browser-based apps, which follows on nicely from the previous discussion. This draft has been in the works for, I think, almost a year now, so I want to give a quick summary of it and then talk about some of the recent updates. It's primarily a complement to the OAuth for native apps best practice (RFC 8252) that we currently have. This one is for apps running in a browser, so primarily single-page apps or browser-based apps.

E

The summary is that browser-based apps must use the authorization code flow with PKCE and must not return access tokens in the front channel. These all reference out to the security BCP. In addition, they must use the state parameter to carry CSRF tokens, and the authorization server must do exact redirect URL matching. These are the main high-level points in here. There's a handful of other things in the doc as well, including some descriptions of architectural patterns that talk about different ways you can actually accomplish this. That covers things like a pure JavaScript app talking directly to an AS and RS itself, an app that has its own dynamic back-end component, or one running on the same domain as the RS, for example.
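A minimal sketch of the client side of the pattern summarized above (authorization code flow with PKCE, plus a random `state` value). It is written in Python for illustration; a browser app would do the same with the Web Crypto API. The `S256` transform follows RFC 7636, while the endpoint, client ID, and redirect URI in the usage are hypothetical placeholders.

```python
import base64
import hashlib
import secrets
from urllib.parse import urlencode


def s256_challenge(code_verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))) without padding (RFC 7636, S256)."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def make_code_verifier() -> str:
    """32 random bytes, base64url-encoded: a 43-character verifier (RFC 7636 allows 43 to 128)."""
    return base64.urlsafe_b64encode(secrets.token_bytes(32)).rstrip(b"=").decode("ascii")


def build_authorization_url(authorize_endpoint: str, client_id: str,
                            redirect_uri: str, scope: str):
    """Return (url, code_verifier, state) for an authorization code request with PKCE."""
    verifier = make_code_verifier()
    state = secrets.token_urlsafe(16)  # random value, checked again when the redirect returns
    params = {
        "response_type": "code",  # code flow: no access token in the front channel
        "client_id": client_id,
        "redirect_uri": redirect_uri,  # the AS compares this exactly against the registered URI
        "scope": scope,
        "state": state,
        "code_challenge": s256_challenge(verifier),
        "code_challenge_method": "S256",
    }
    return f"{authorize_endpoint}?{urlencode(params)}", verifier, state
```

The app keeps `verifier` and `state` until the redirect comes back, checks `state`, and then sends `verifier` as `code_verifier` in the token request.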

So, recent changes in this draft: a lot of text was updated to bring it more in line with the security BCP, including things like disallowing the password grant even for first-party applications, which it used to allow. And the previous draft basically said:

E

If you are using PKCE, then you're already protected from CSRF attacks. The state parameter is another way to protect against CSRF attacks. Right now the security BCP says that state must be used for that. However, if you are doing PKCE, you don't need it for that, which opens up the possibility of using state for carrying application-level logic that maybe doesn't actually have randomized information in it, like, you know, just "redirect URI equals blah".
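To make the trade-off above concrete, here is a sketch of a `state` value that carries both a random, session-bound CSRF token and application-level data (a hypothetical `return_to` path). The `<token>.<base64url JSON>` layout is an assumption for illustration only; neither the draft nor the security BCP defines a format. The discussion suggests that with PKCE the random part could be dropped, leaving only the application data.

```python
import base64
import hmac
import json
import secrets


def make_state(app_data: dict):
    """Return (state, csrf_token); the caller stores csrf_token in the user's session."""
    csrf_token = secrets.token_urlsafe(16)  # base64url alphabet, never contains "."
    payload = base64.urlsafe_b64encode(json.dumps(app_data).encode("utf-8"))
    return f"{csrf_token}.{payload.rstrip(b'=').decode('ascii')}", csrf_token


def check_state(returned_state: str, stored_csrf_token: str) -> dict:
    """Verify the CSRF part in constant time, then decode the application data."""
    csrf_token, _, payload = returned_state.partition(".")
    if not hmac.compare_digest(csrf_token.encode("utf-8"), stored_csrf_token.encode("utf-8")):
        raise ValueError("state does not match this session: possible CSRF")
    padded = payload + "=" * (-len(payload) % 4)  # restore the stripped base64 padding
    return json.loads(base64.urlsafe_b64decode(padded))
```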

E

M

C

Torsten Lodderstedt. Mike is right: PKCE and nonce are equivalent, and I think we also mention that in the BCP; if not, we should. PKCE and nonce are, from my perspective, equivalent, except that PKCE relies on the AS to detect the attack, whereas the nonce relies on the client or relying party to get it right. From my perspective, I lean towards PKCE, because I trust the AS more to get it right.

C

E

E

Okay, where it's statically configured in the application, where, you know, there are like five different values it could be, which is effectively static. Yeah, that's the thing, and I saw some language in the security BCP that was sort of hinting at that as an option as well. That's why I started getting a little worried.

C

I mean, we had the chat about that on the list, right, about PKCE versus state. So, in light of the recent analysis by the guys from Stuttgart, PKCE should do fine for code injection and CSRF protection. It's a single nonce, or even a fingerprinted nonce, that can be used for both use cases. So I think that's reasonable to go forward with, and given that we anyway make PKCE mandatory for the use of code, it's a perfect package.

E

C

K

E

K

An application can choose to use both. I mean, it doesn't have to be in the OAuth spec to include the nonce parameter. But besides that, as long as the server supports discovery, you can tell whether or not it's using PKCE. So it's not like the client has to completely guess.
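As noted above, a server that publishes discovery metadata lets the client check for PKCE support instead of guessing. A minimal sketch, assuming the server publishes the RFC 8414 member `code_challenge_methods_supported`. The `fetch_metadata` helper assumes the OpenID Connect discovery path `/.well-known/openid-configuration`; a plain OAuth server may use `/.well-known/oauth-authorization-server` instead.

```python
import json
import urllib.request


def fetch_metadata(issuer: str) -> str:
    """Download discovery metadata (OpenID Connect Discovery appends this path to the issuer)."""
    url = issuer.rstrip("/") + "/.well-known/openid-configuration"
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8")


def supports_pkce_s256(metadata_json: str) -> bool:
    """True if the server advertises the S256 PKCE method.

    A missing member only means PKCE support is not advertised, not that it is absent.
    """
    metadata = json.loads(metadata_json)
    return "S256" in metadata.get("code_challenge_methods_supported", [])
```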

K

You know, there are libraries that people have created, etc., but most people who roll their own get it wrong in any number of ways, which is one of the reasons why we separated it out in OpenID Connect: pretty much everybody was guaranteed to get it wrong because they were using state for other things, and it just pretty much always broke. So, if your question is whether we should recommend PKCE, or potentially nonce if you're using OpenID Connect, as opposed to state, then...

K

M

E

E

M

E

B

K

H

H

H

H

E

H

Cross-site protection, even if PKCE is used. Okay, so if that is a requirement, it's a requirement not only on the client but on the AS: in the authorization request, yes, in the GET, but also at the token endpoint. I can't issue a token if you didn't make a proper request to the authorization endpoint. Torsten [inaudible].
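The point about enforcement at the token endpoint can be sketched from the authorization server's side. This is a hypothetical in-memory check, not any particular server's API: `IssuedCode` stands in for whatever the AS recorded when it issued the code, and the `require_pkce` switch models the open question of whether the AS must enforce PKCE or merely support it. `redirect_uri_allowed` illustrates exact string matching of redirect URIs at the authorization endpoint.

```python
import base64
import hashlib
import hmac
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class IssuedCode:
    """Hypothetical record the AS keeps for each authorization code it issues."""
    client_id: str
    redirect_uri: str
    code_challenge: Optional[str]  # None if the authorization request carried no PKCE
    code_challenge_method: Optional[str]


def s256(code_verifier: str) -> str:
    digest = hashlib.sha256(code_verifier.encode("utf-8")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def redirect_uri_allowed(requested: str, registered: List[str]) -> bool:
    """Exact string comparison only: no prefix, wildcard, or pattern matching."""
    return requested in registered


def check_token_request(issued: IssuedCode, client_id: str, redirect_uri: str,
                        code_verifier: Optional[str], require_pkce: bool = True) -> Optional[str]:
    """Return None if the code may be redeemed, otherwise the OAuth error code."""
    if client_id != issued.client_id or redirect_uri != issued.redirect_uri:
        return "invalid_grant"
    if issued.code_challenge is None:
        # Enforcement means refusing codes that were requested without PKCE
        return "invalid_grant" if require_pkce else None
    # This sketch accepts only S256 and rejects the "plain" method
    if issued.code_challenge_method != "S256" or code_verifier is None:
        return "invalid_grant"
    if not hmac.compare_digest(s256(code_verifier), issued.code_challenge):
        return "invalid_grant"
    return None
```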

C

The BCP says: clients utilizing the authorization grant type must use PKCE, right? So one could interpret that in a way that the AS has to enforce it. That's not the current meaning of the BCP. The meaning of the BCP is, or will be: ASes must support PKCE and clients must use PKCE, but it does not say the AS must enforce PKCE. We could add that, but that's not currently the case. And to further the discussion, the last segment of that paragraph says: OpenID Connect clients may use the nonce parameter of the OpenID...

E

N

K

If you're using OpenID Connect, making state mandatory also violates the core OAuth spec, because state is an optional parameter; it's not required. So I think trying to make state required for CSRF would be a mistake in a number of different dimensions. I think not making it required and pointing to the security BCP would make for a much shorter conversation.

K

A

E

Okay, so these are two more things that came up on the list recently. The first is the issue around refresh tokens. This is a continual challenge for single-page apps, where you want a good experience in which people are not bounced out of the app in order to get a new token. The question is: should we make specific recommendations on how to do that?

E

It is currently possible to do this in a number of ways, one of which is using a hidden iframe, like OIDC does. You can implement that according to OAuth, but it's not really defined anywhere in particular as being explicitly part of OAuth, whereas it is in OIDC. Is there any value in making that more explicit, in this draft, as a thing that's possible to do for browser-based apps? Torsten?

C

C

F

We have a remote participant. The prompt parameter in OIDC allows it to happen by signaling to the AS: regardless of the outcome, do not interact with the user and return a response back. That way you can count on the iframe, from the SPA perspective, returning you a status one way or the other instead of just getting hung up, and we don't have that in baseline OAuth; it's OIDC-based.
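A sketch of the hidden-iframe pattern just described, using the OpenID Connect `prompt=none` parameter together with the code flow and PKCE. The endpoint and client values in the usage are placeholders; the error codes are the ones OpenID Connect Core defines for a request that cannot complete without user interaction. Creating the iframe and reading back its final URL are left out.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# OpenID Connect Core errors meaning "silent authentication is not possible; ask the user"
INTERACTION_ERRORS = {
    "interaction_required",
    "login_required",
    "account_selection_required",
    "consent_required",
}


def silent_auth_url(authorize_endpoint: str, client_id: str, redirect_uri: str,
                    code_challenge: str, state: str, nonce: str) -> str:
    """Authorization request the SPA loads in a hidden iframe."""
    params = {
        "response_type": "code",
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": "openid",
        "prompt": "none",  # the AS must not show any UI; it always redirects back
        "state": state,
        "nonce": nonce,
        "code_challenge": code_challenge,
        "code_challenge_method": "S256",
    }
    return f"{authorize_endpoint}?{urlencode(params)}"


def classify_silent_response(callback_url: str):
    """Turn the iframe's final redirect into ("code", code) or (status, error)."""
    query = parse_qs(urlparse(callback_url).query)
    if "code" in query:
        return "code", query["code"][0]
    error = query.get("error", ["server_error"])[0]
    if error in INTERACTION_ERRORS:
        return "interaction_required", error  # fall back to a full-page redirect
    return "error", error
```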

C

E

H

Define it? I don't know; this is not the place to define it. Travis Spencer here. The prompt parameter takes a lot of different input values, it can be space-separated, and there are a lot of things even missing from that. So I think we need to look at that as a separate specification, and this should be updated, perhaps to normatively reference that. Okay.

E

Any other comments on that? I think I would tend to agree with that assessment, especially since a BCP shouldn't be adding too much normative language, and certainly not terribly functional stuff. So the outcome would be to not make any specific recommendations on how to do this in this BCP, and hopefully somebody will propose an extension to OAuth to add the prompt parameter.

E

Hopefully somebody; not gonna point any fingers. I'm not volunteering myself yet; we'll see. Okay, I'm happy with that outcome. Last point here: there's a mention in this draft that, because you're in a browser, and JavaScript environments are less secure by default than things like native apps, it is much more important to have a strong content security policy.

E

That's basically what it says, without giving any specific recommendations. There was a suggestion to explicitly list a couple of things that should be in your content security policy, to at least have a first pass at making it more secure, one of which is disabling inline scripts. Is that a good idea for this BCP? Or should we just point people to OWASP and have them go and figure it out on their own?
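For reference, the kind of baseline the suggestion above points at might look like the header value built below. This is an illustrative policy, not text from the draft: leaving `'unsafe-inline'` and `'unsafe-eval'` out of `script-src` is what disables inline scripts, and the authorization server origin (a placeholder) is allowed only where the app needs to reach it.

```python
def build_csp(as_origin: str) -> str:
    """Return a Content-Security-Policy header value for a single-page app."""
    directives = {
        "default-src": ["'self'"],
        # No 'unsafe-inline' / 'unsafe-eval': inline <script> blocks and eval() are refused
        "script-src": ["'self'"],
        # XHR/fetch (e.g. to the token endpoint) only to the app itself and the AS
        "connect-src": ["'self'", as_origin],
        # Hidden iframes, if used, may load only the app and the AS
        "frame-src": ["'self'", as_origin],
        "object-src": ["'none'"],
        "base-uri": ["'self'"],
        "frame-ancestors": ["'self'"],
    }
    return "; ".join(f"{name} {' '.join(values)}" for name, values in directives.items())
```

A server or CDN would send this string as the `Content-Security-Policy` response header on the pages that make up the app.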

H

H

If you look at our Assisted Token Flow specification, we talked about how registration of the origins could be done in the client and how that could update the CSP, but I think what we need is a more general explanation of how to use CSP with OAuth, and then perhaps a profile of it, for lack of a better word, for browser-based applications. Are you volunteering to write that as a separate CSP specification? You mean that this one would... yeah, it's not hard.

H

E

H

C

Is it something that applies to SPAs only, or something that applies to all kinds of web applications? All web applications? Okay. If you could suggest some text, we can add it. I mean, Daniel Fett is much more knowledgeable about that; I think he will review it. John, what do you think? Yeah, makes sense? Okay, okay, since we have a plan...

A

E

A

E

Alright, it's me again. This is something that came up in the last couple of rounds of discussions around transactional authorization that Justin has been leading, and it came up on Monday during the session. First, a little bit of background on what I want to talk about here. OAuth, as we all know, started a long time ago, actually around ten years ago, and defined several grant types. These are the grant types that were set out at the beginning.

E

Over the course of the last ten years, this space has changed a lot. We've added PKCE through another RFC, originally only for native apps, and there was a native apps BCP that references PKCE and says, you know, don't use implicit in mobile apps anymore. Since then, we've also added the device grant, which is a totally new top-level grant type in OAuth.

E

We've also now got this single-page app (browser-based app) BCP that also says to do PKCE. The security BCP is coming along now and is sort of crossing out the implicit flow and the password flow, and also saying to do PKCE even for confidential clients, which is a new concept there. So the point I'm trying to make here is that basically everything uses the auth code flow and PKCE, in a browser and for mobile apps; or it's doing the client credentials grant, because it's not an end-user application; or it's doing the device grant. This is basically all we have left from core; we've crossed out all the other ones through the various BCPs. Not to split hairs, but you should mention the refresh grant. Oh, thanks. Yes, the refresh token. I didn't put it here because it can't start the flow.
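The "what is left" summary above can be written down as a small lookup. This only illustrates the grant types named in the discussion: the `ClientKind` categories are hypothetical labels, while the grant type strings are the registered values from RFC 6749 and RFC 8628.

```python
from enum import Enum


class ClientKind(Enum):
    BROWSER_APP = "browser"
    NATIVE_APP = "native"
    WEB_SERVER_APP = "web_server"
    NO_END_USER = "machine_to_machine"
    INPUT_CONSTRAINED_DEVICE = "device"


# Used later to renew tokens; it cannot start a flow on its own
REFRESH_GRANT = "refresh_token"


def initial_grant_type(kind: ClientKind) -> str:
    """Grant type that starts the flow for each kind of client."""
    if kind in (ClientKind.BROWSER_APP, ClientKind.NATIVE_APP, ClientKind.WEB_SERVER_APP):
        return "authorization_code"  # always combined with PKCE, even for confidential clients
    if kind is ClientKind.NO_END_USER:
        return "client_credentials"
    if kind is ClientKind.INPUT_CONSTRAINED_DEVICE:
        return "urn:ietf:params:oauth:grant-type:device_code"  # RFC 8628
    raise ValueError(f"unknown client kind: {kind}")
```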

M

M

So, for instance, there's a set of extensions that define some additional response types. Those are very important in the wild, as John said. Use of the JWT assertion flow is incredibly important, and, you know, there's some other stuff in the pipe that we saw in the first slide from the chairs. We have token exchange.

M

E

E

A

A

If you are into writing your own server from scratch, I think you have to understand that you have to do more reading; you have to have a better understanding, and there's a certain amount of effort that is unavoidable. So a book like the one you wrote, or Justin's, would probably be a good starting point. Then you have to read the documents anyway. I think you have to distinguish between the different audiences. It would appear to me that this could be useful.

K

Justin Richer. I agree with Aaron that this is something we should probably do, and considering that two of the authors of books that kind of do this thing for people are saying, "Hey, this should be a real thing from the working group," that should be taken as a signal to the community. All right? Aaron and I both make a significant portion of our income from explaining to people how to navigate this stuff, and if I have to do that less, I'll be happier. I think this is a good idea.

K

E

...an RFC. And I also want to be very explicit about this: there is not meant to be any new behavior in here. This is not meant to define anything new at all. It doesn't even have to be an RFC; it's fine if it would be a BCP. Turns out, people don't actually know the difference and don't care.

D

Yeah, Tony Nadalin. So it's a little bit confusing that this would be yet another BCP or something like that. We've got the current BCP, right? Why can't we roll that up into an OAuth 2.0 document, so you don't have to go through all that crap of reading the BCP? It would take everything the BCP has and say: this is the new base, and just go from there, because that stuff's already been written. You can just, you know, take out the things that it said.

D

E

Yeah, I'm agreeing; the particular format this takes is, I think, what we want to discuss. Also, I don't have a lot of time left, so before I let you go, I want to just mention a couple of other things, and also mention that we have time allocated at 3 o'clock, where I booked a meeting room, not this room.

E

It's in another room here, where I would like to actually continue those discussions on what this might actually look like. But quickly: the goal is not to define anything new. The goal is to take what is defined and snapshot that. These are the things (don't comment on these particular points right now; save that for the discussion), but this is sort of what I see as, like, here are the things that we know work well.

E

And there's a separate issue here which I do want to bring up, which is that if you're building an authorization server that is meant to be interoperable with arbitrary resource servers, there's, I think, a separate set of things those ASes need to do in order to be interoperable, and we already have a set of specs that address that also.

E

A

C

C

The RFC is being consumed by people that write libraries or define APIs for ecosystems or applications, and those typically only read RFC 6749, nothing more. So if you're going to replace that with a new RFC that has, all in all, most of the stuff that's in the BCP right now, that's perfect from my perspective.

B

Hi, Roman, no hat. Thinking about this more abstractly, not yours specifically, it seems like we have a bunch of documents that specify all sorts of things. Sometimes it's a must, sometimes it's kind of optional, but what we want to do is cherry-pick from that collection of documents what we want people to do, typically being more restrictive: saying don't do a bunch of things, or up-leveling the security, for instance making a "should" a "must". In other forums, I've heard that called a profile. I've also heard it called an applicability statement.

E

I think that's not entirely what I'm describing, because I am actually describing, sort of: if you play all of the RFCs and BCPs in order, you get something, and that is what I want to snapshot. It's not that it's a profile, or a tightening up or loosening of things. It's just that at some point...

B

I mean, I know, I won't belabor it, but I think with a profile, in addition to, you know, saying a SHOULD is a MUST, which I think is what you keyed in on, you could do the other thing, which is just to say you're not doing that, or we're just taking a vertical cut above it. Maybe we'd have to, obviously, look at it a lot more closely. Thanks.

F

I guess I'm not sure if this is a profile or a 2.1 or what, but generally speaking, I'm in favor of something along these lines. I agree; I think there's a need there, and some sort of document that's more accessible and covers more things is definitely worthwhile to people. I do think it might be more difficult than you've made it seem to pick and choose which pieces are included or not. Like, you pointed to the device grant specifically; that could go in or out, it's kind of arbitrary.

F

I'm sorry, I saw Mike there and it reminded me: well, it's sort of true about the registry. But I did want to say that most people in the world don't even know the registry exists, so the expectation that people would go there and sort of work backwards from it is, I don't think, very pragmatic in the real world. Yeah.

M

As I said at the mic during the SECDISPATCH discussions (and we can talk about this in the next hour), we need to consider very carefully whether we're going to do things that bifurcate a working ecosystem. Like Brian, I am fine with writing a profile or a document, whatever you call it, that picks and chooses from what we have and doesn't break anything. If we go down the road of changing protocols, that's a much bigger deal, and I'm not necessarily in favor of that.

E

I would actually disagree with that point, because it's really only the ASs where that breaking change happens. If you're building an application consuming an OAuth API, you get an access token, and the AS is already supposed to be able to change what the access token looks like. How that application gets an access token is probably by using a library. You update your OAuth library like you update your TLS library. I think they're not as different as you're making them out to be.

N

Annabelle Backman, Amazon. Back to the profile versus protocol version question: to my mind, a profile document would be one which, you know, much like the current BCP that's being developed, references the existing documents and says, hey, this is the stuff that you should use, and this stuff you really shouldn't use anymore. A protocol version, by contrast, would be a new document that obsoletes a bunch of RFCs rather than refers to them, the potential benefit being that developers have one thing that they have to read.

I think the protocol versioning idea is appealing. However, in the face of potentially parallel developments on an OAuth 3, that may be a lot to try and get the community to digest, and we may find a lot of friction there with development or adoption or understanding of that, or people becoming confused about what exactly it is we're trying to do. As to what the pain points are for upgrading, you know, TLS versus... I think the simplicity of TLS version upgrades is being overstated.

Having had some exposure to real-world upgrade processes: it's painful. There are a lot of backwards-compatibility issues that you still have to maintain, despite the fact that, theoretically, clients are just using a library and it all just works. Yeah, it's harder than it might look.

B

Hi, everyone. You know, I'm sensitive that we're running out of time. I just wanted to suggest what we've done in other working groups when we've had a lot of discussion. My question would be, independent of what path we go down: what information do we need to help us figure this out, and do we have that information?

A

So obviously it's just the beginning of a discussion and not the end yet, but it's good that you kicked it off. It has bubbled up a couple of times, people have approached us about this topic before, and it seems that there's broader interest in discussing and doing something about this. So, thanks.

F

All right, so: OAuth 2.0 Demonstration of Proof-of-Possession at the Application Layer, otherwise known as DPoP. Sure. So, a quick overview of what we're talking about here. DPoP is a draft proposal for a new, or newish, simple and concise approach to proof of possession for OAuth access and refresh tokens. The idea is that it uses application-level constructs and leverages existing library support to make it happen, so hopefully it's actually implementable and deployable.

There have been, as many of you probably know, a lot of efforts around proof of possession in OAuth; I list them out here. They've all been somewhat unsuccessful to varying degrees, and I'm responsible for some of those, so I'm not trying to cast aspersions on anybody here. I have as many aspersions on me as the next one, but we've tried a lot of different things with varying levels of success.

Just that. And, like I said, we sort of lack a suitable and widely applicable PoP method right now. This is especially true for single-page applications. We do have the soon-to-be-RFC mTLS draft for OAuth, but it has, to put it mildly, major UX implications when used in the browser; it's basically unusable except in very, very specialized, controlled situations. So it's there, and it's going to be an RFC, but it's really not practically usable for SPA-type applications.

And I say here that the status of token binding is uncertain; I think that's putting it somewhat mildly. We have also sort of uncovered the desire, the need, as of late, to have proof-of-possession-bound refresh tokens for public clients as well. Having a stronger guarantee around the refresh tokens issued to public clients is something that's desirable and useful.

So we have this proposal for the DPoP flow; I'm just showing the diagram here. The thing that maybe we've learned or taken from other efforts is that there's some similarity to how token binding worked, in that we have the same sort of key proof method that's used regardless of where the request is made. Whether the request is made to an authorization server as part of the token request, or requests are made to the resource server for a resource, the proof key is sent in the same way, in this DPoP proof header.
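As a rough illustration of "the same key proof method regardless of where the request is made", the sketch below assembles the unsigned header and claims of a DPoP proof for a token request and for a resource request. It is a sketch under assumptions: the claim names htm, htu and iat and the typ value "dpop+jwt" follow the later published RFC 9449 rather than anything stated in this session, the URLs and key coordinates are placeholders, and signing (which would need a JOSE or crypto library) is left out.

```python
import secrets
import time
from typing import Dict, Tuple

# Placeholder public JWK for illustration only; not a real P-256 key.
CLIENT_PUBLIC_JWK = {"kty": "EC", "crv": "P-256", "x": "example-x", "y": "example-y"}


def dpop_proof_parts(method: str, url: str, jwk: Dict[str, str]) -> Tuple[dict, dict]:
    """Build the (header, claims) of an unsigned DPoP proof for one HTTP request."""
    header = {
        "typ": "dpop+jwt",  # explicit typing (value as in RFC 9449)
        "alg": "ES256",     # an asymmetric signature algorithm
        "jwk": jwk,         # public key the client asserts possession of
    }
    claims = {
        "jti": secrets.token_urlsafe(16),  # fresh unique ID for replay detection
        "htm": method,                     # HTTP method of the request
        "htu": url,                        # HTTP target URI of the request
        "iat": int(time.time()),           # issued-at time in seconds
    }
    return header, claims


# Same key, same mechanism, whether talking to the AS or the RS (hypothetical URLs).
token_header, token_claims = dpop_proof_parts(
    "POST", "https://as.example.com/token", CLIENT_PUBLIC_JWK)
resource_header, resource_claims = dpop_proof_parts(
    "GET", "https://rs.example.com/resource", CLIENT_PUBLIC_JWK)
```

Only the per-request claims change between the two calls; the header, and therefore the key being proven, stays the same.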

So this is the consistency around how it's used. When it's sent to the authorization server, it gives the authorization server the opportunity to bind both access and refresh tokens, depending on the context, to that key. When it's sent to the resource server, it gives that resource server the opportunity to check that there's actually possession of the private key corresponding to the associated bound access token.
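One way to picture that binding: the authorization server records a hash of the client's public key in the token's confirmation (cnf) claim, and the resource server recomputes the hash from the key in the proof. The sketch below assumes the RFC 7638 JWK thumbprint and the "jkt" confirmation member that RFC 9449 eventually standardized; the draft presented here may differ in detail, and the example key values are made up.

```python
import base64
import hashlib
import json

# Members that RFC 7638 includes in the thumbprint for each key type.
THUMBPRINT_MEMBERS = {
    "EC": ("crv", "kty", "x", "y"),
    "RSA": ("e", "kty", "n"),
}


def jwk_thumbprint(jwk: dict) -> str:
    """RFC 7638 SHA-256 thumbprint of a public JWK, base64url without padding."""
    required = THUMBPRINT_MEMBERS[jwk["kty"]]
    # Only the required members, keys in lexicographic order, no whitespace.
    canonical = json.dumps({name: jwk[name] for name in required},
                           sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


def bind_to_key(token_claims: dict, jwk: dict) -> dict:
    """AS side: add a confirmation claim tying the token to the client's key."""
    return {**token_claims, "cnf": {"jkt": jwk_thumbprint(jwk)}}


def proof_key_matches(token_claims: dict, proof_jwk: dict) -> bool:
    """RS side: does the key in the DPoP proof match the one the token is bound to?"""
    return token_claims.get("cnf", {}).get("jkt") == jwk_thumbprint(proof_jwk)
```

Because the thumbprint ignores optional members such as kid and member order, the same key always yields the same value on both sides.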

A real quick look at what this actually looks like: the DPoP proof is a header. It's a JWT that's sent in each request, and decoded, it looks a little bit something like this. A few aspects of it: it's explicitly typed. We're supporting asymmetric signatures only, so it must be one of the asymmetric algorithms. Inside of the header is the jwk, the public portion of the key pair that the client is asserting ownership of. And then in the claims themselves, we have a unique identifier, a jti claim, meant to allow for replay detection and checking.
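A server receiving a proof would check the header properties just described before trusting it. Below is a minimal sketch of those checks plus a time-windowed jti cache for replay detection. The algorithm list, the "dpop+jwt" typ value (from RFC 9449), the 300-second default window and the class name JtiReplayCache are assumptions for illustration; signature verification is not shown.

```python
import time
from typing import Dict, Optional

# JWS algorithms treated as asymmetric here (illustrative, not exhaustive).
ASYMMETRIC_ALGS = {"RS256", "RS384", "RS512", "PS256", "PS384", "PS512",
                   "ES256", "ES384", "ES512", "EdDSA"}
# JWK members that would reveal private or symmetric key material.
SECRET_MEMBERS = {"d", "p", "q", "dp", "dq", "qi", "oth", "k"}


def check_dpop_header(header: dict) -> None:
    """Raise ValueError if a decoded DPoP proof header lacks the expected properties."""
    if header.get("typ") != "dpop+jwt":
        raise ValueError("proof must be explicitly typed")
    if header.get("alg") not in ASYMMETRIC_ALGS:
        raise ValueError("only asymmetric signature algorithms are accepted")
    jwk = header.get("jwk")
    if not isinstance(jwk, dict):
        raise ValueError("header must carry the client's public key as 'jwk'")
    if SECRET_MEMBERS.intersection(jwk):
        raise ValueError("'jwk' must contain public key material only")


class JtiReplayCache:
    """Rejects a jti seen again within a time window (hypothetical policy)."""

    def __init__(self, window_seconds: float = 300.0) -> None:
        self.window_seconds = window_seconds
        self._seen: Dict[str, float] = {}

    def accept(self, jti: str, now: Optional[float] = None) -> bool:
        """Return True for a fresh jti and remember it; False for a replay."""
        now = time.time() if now is None else now
        # Forget entries that have aged out of the window.
        self._seen = {j: t for j, t in self._seen.items()
                      if now - t < self.window_seconds}
        if jti in self._seen:
            return False
        self._seen[jti] = now
        return True
```

Bounding the cache by time keeps memory finite; pairing it with a freshness check on the proof's creation time would keep old proofs from slipping past once their entries expire.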

A

F

So either the syntax... yeah, the semantics are a little different, although they're fungible; it's a non-obvious line. But yes, what this is saying, basically, is: here's a JWT, and here's the public key with which its signature can be verified. So this token is signed by the private key corresponding to the public key displayed in the jwk header.
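To make "here's a JWT, here's the public key to verify it with" concrete, the sketch below splits a compact-serialized proof into its parts using only the standard library. Per RFC 7515, the signature covers the ASCII bytes of the base64url-encoded header and payload joined by a dot. Actually verifying that signature against the embedded jwk would be done with a JOSE or crypto library, which is assumed and not shown; the function name split_dpop_proof is hypothetical.

```python
import base64
import json
from typing import Tuple


def b64url_decode(segment: str) -> bytes:
    """Decode base64url, restoring the '=' padding that JOSE omits."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def split_dpop_proof(compact: str) -> Tuple[dict, dict, bytes, bytes]:
    """Split a compact JWS into (header, claims, signing_input, signature)."""
    parts = compact.split(".")
    if len(parts) != 3:
        raise ValueError("expected three dot-separated segments")
    header_b64, payload_b64, signature_b64 = parts
    header = json.loads(b64url_decode(header_b64))
    claims = json.loads(b64url_decode(payload_b64))
    # Per RFC 7515 the signature covers ASCII(header_b64 + "." + payload_b64).
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    return header, claims, signing_input, b64url_decode(signature_b64)
```

A verifier would then check the returned signature over signing_input using the key in header["jwk"], which is what makes the proof self-contained.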

There you'll see the cnf claim coming in to tie it into