From YouTube: IETF110-BMWG-20210311-1600

Description

BMWG meeting session at IETF110

2021/03/11 1600

https://datatracker.ietf.org/meeting/110/proceedings/

B

A

That's right. So, according to my wristwatch, we have reached our start time, and so welcome, everybody. We will have a session for approximately two hours today. I'm Al Morton, co-chairing the Benchmarking Methodology Working Group with Sarah Banks. Hello Sarah, good morning. And we have our alternate Ops Area Director with us today, Rob Wilton. Rob, welcome.

A

A

Let's see, there's something else I wanted to... Oh yes, we have the Note Well, which we always go through here. Our own version of this is that we work as individuals and we try to be nice to each other, and those are not too hard to do. But it is also a reminder of the IETF policies, such as patents and the code of conduct. This slide is meant to remind you that we have policies in those regards.

A

Well, thanks very much, Rob. I was hoping to get you some help there, but you did volunteer in advance and we very much appreciate that. And actually, if anyone wants to help Rob, the tool is our note-taking tool here in the user interface, so it is possible to help out here very quickly.

A

A

A

A

Yeah, good to hear your voice, and thank you for your offer of help. Everyone appreciates it. So then we can easily monitor Jabber and other things in the Meetecho interface that our Meetecho developers have kindly provided again. Thank you for that, guys. We've gone through the IPR and the Note Well, so now we'll quickly talk about the agenda and any bashing needed.

A

We have the working group status that we'll talk about. Some feedback on the back-to-back frame draft we'll cover quickly, because it probably affects a lot of things. Then we have the working group drafts. Some of these will go quickly; others we will need to talk about a bit. And then a proposal that received a lot of discussion over the last interim period between meetings, the YANG data model. So we'll go in this order. Any comments or any bashing needed on that? All right, sounds good.

A

A

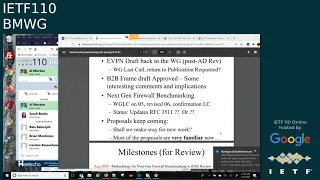

So here's the quick status. The EVPN draft is back to the working group post Area Director review, and Sarah called for a working group last call there a day or so ago. After that, we will return to Publication Requested if it's a favorable last call. Thank you for moving that along, Sarah, and thanks to Brian Monkman for comments and to Sudhin, the lead author, for providing a draft for this meeting.

A

So in next-generation firewall benchmarking, we had a working group last call on version 05, which really generated a lot of good comments. (I'm going to shut my email down now, I think. Good, all right.) So now we have the revised version 06, and looking it over, I think we're going to want a confirmational working group last call after some more looking at it.

A

A

So thanks, and we have a main topic to talk about there regarding the status of that document. Proposals keep coming, and we're trying to make way for new work here, I think, with all the drafts we've talked about just before. And we did adopt new work: as I mentioned, the Multiple Loss Ratio Search, which is part of our working group documents.

A

A

Working group drafts or individual drafts that are really receiving attention from the working group: we can probably consider them as strong candidates for adoption. All right, so on the milestones, we're a little bit behind on a few things here, but I think we can resolve quite a few of these fairly quickly and early this year, and then we'll be in a position to update others.

A

A

A

They could be one and a half seconds long, and they could be what the Transport Area calls bufferbloat-size buffers. Anything in the one-second realm, one second, two seconds, whatever it happens to be: if you're sending at a high enough rate to fill the buffers of the device under test, then the implication is that you likely have to wait longer than two seconds to be safe.

A

A

Good, it's been a good use of this draft. So here's where we ended up with text, and it's a lot more than one second... I'm sorry, it's a lot more than one sentence now, as you can see. So in the section on the test for a single frame size, each trial in the test: this is where you're trying to find the longest burst of frames that will pass through loss-free.

A

So care is needed, since this time component directly increases the trial duration, and many trials and tests comprise a complete benchmarking study. So we can't really just increase this waiting time without an overall time penalty. Those of us who have been testing and testing and testing, and waiting for results to show up, know this: the waiting time after a trial has a direct impact.
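To make the time penalty concrete, here is a minimal arithmetic sketch. The function name and all the numbers (50 trials per test, 10 tests, 2-second bursts) are illustrative assumptions, not values from the draft; the only point is that the per-trial wait is multiplied by every trial in the study.

```python
def study_duration_s(trials_per_test, tests, burst_time_s, wait_time_s):
    """Total wall-clock time when every trial is followed by a fixed wait.

    Hypothetical helper: assumes each trial costs burst time plus wait time
    and ignores setup and reporting overhead.
    """
    return trials_per_test * tests * (burst_time_s + wait_time_s)


# Illustrative numbers only: 10 tests of 50 trials, 2-second bursts.
short_wait = study_duration_s(50, 10, burst_time_s=2.0, wait_time_s=2.0)
long_wait = study_duration_s(50, 10, burst_time_s=2.0, wait_time_s=5.0)

# Raising the wait from 2 s to 5 s adds 3 s to each of the 500 trials.
extra_minutes = (long_wait - short_wait) / 60
print(f"extra time: {extra_minutes:.0f} minutes")
```

Under these assumed numbers, three extra seconds of waiting per trial adds 25 minutes to the study, which is why the waiting time has to be balanced rather than simply increased.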

A

So it's a balance to strike, and I think we've got some wording here now that does that. We also mention that the upper limit for the time to send each burst must be configurable to values as high as 30 seconds; buffer time results reported at or near the upper limit are likely invalid. We saw some of that in the Open Platform for NFV benchmarking testing, where, at the larger frame sizes, the packet forwarding rate was equal to the back-to-back frame rate. That means you don't accumulate a buffer or a queue; you basically send and send and send, and then you report this maximum configured time and buffer length. The resolution for that is something else.
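The "at or near the upper limit" rule can be sketched as a simple check on each reported result. This is only an illustration: the function name and the 5% margin used for "near" are assumptions, since the discussion does not say how close to the limit counts as near. Only the 30-second configurable limit comes from the text being discussed.

```python
def classify_burst_result(burst_time_s, upper_limit_s=30.0, margin=0.05):
    """Flag loss-free burst times that sit at or near the configured limit.

    Hypothetical helper: `margin` (5% here) is an assumed tolerance for
    "near the upper limit". A result this close to the limit suggests the
    search stopped because of the limit, not because a queue overflowed.
    """
    if burst_time_s >= upper_limit_s * (1.0 - margin):
        return "likely-invalid"
    return "ok"


print(classify_burst_result(1.5))   # a burst well below the limit
print(classify_burst_result(30.0))  # a burst that ran into the limit
```

A tester would then investigate any "likely-invalid" result separately, for example by checking whether the forwarding rate already equals the offered rate at that frame size.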

A

A

A

A

A

A

A

Okay, good, thank you. All right, well then, I'll cover this quickly. As I said, we had a good couple of reviews, and this is now in working group last call; I think the last day is March 23rd. So that covers our responsibilities here, and we'll move this along. Again, thanks to everyone, Brian and Sudhin, who helped get the draft to 07. Much appreciated. All right.

A

B

B

B

B

B

We clarified the security definitions contained in Section 4.2, Table 3. It's a pretty significant rewrite, just to make it a lot clearer and to bring some commonality to the language used, to line up with other definitions used elsewhere. Then we did a significant rewrite of Section 6.3, which was originally just the KPI section.

B

We changed the name to "Benchmarks and KPIs", and the goal was to clarify and eliminate any of the ambiguities that existed, and there were a number of them. We used RFC 2647 definitions where applicable, and it was applicable in a number of cases, I think three or four of them in the section.

A

So those are the terms and definitions, just to give folks who might be new to this a little background. Those are the terms and definitions that supported RFC 3511. There was a time when we traditionally wrote our terms in a terminology draft, where the definitions also appeared, and then the methodology was a separate draft. That dates all the way back to the very first pair of drafts that came out of the Benchmarking Methodology Working Group.

A

So it's really good that you're getting some consistency with those terms. Brian, since you've got a lot of good items on this particular slide here, I'd like to interrupt, and maybe we can hold some of the discussion that we had planned, just so that we can close on a few items. How many by...

A

All right, let me take a quick look at it. So there are more changes there. All right, so let's handle this set of changes first. You know, I forgot to check this: does this mean that the title of the document has changed now, which is...

A

Good, good, because one day I suddenly began to wonder: well, what do they mean by "next generation"? Are we really talking about the modern generation of firewalls, the ones that are here now? This is a much less ambiguous title. So thanks for going there with that change. Yeah, that's good as well.

B

A

D

A

A

Oh, I get it, yep, that's fine, all right! So then we've got that change, and then we've got another topic here. The author proposal, since we brought this up, is to have this draft supersede RFC 3511, which is the roughly 15-year-old benchmarking methodology for firewalls.

A

A

"Supersede" is kind of in the middle, between what we might call "update" and "obsolete". Of course, there are a million definitions for what constitutes an update in the IETF. I think there are some good ones out there, and they may have even tried to standardize a few, but the "obsolete" definition is fairly clear, and I think that's what you meant with...

B

D

B

H

A

A

A

A

Yeah, it looks like there was a bobble here that caused me to drop, maybe momentarily, enough to lose everything. So, let's continue forward. I assume that somewhere in this discussion of "supersedes", I was basically trying to get the answer to the question: do you think "supersedes" means to make RFC 3511 obsolete?

B

A

All right, so then it sounds like, in our IETF terminology, we would need to update the header to say that the status of this is "Obsoletes RFC 3511". That's going to have to go into the abstract, and we'll have to edit that intro paragraph, right at the end, the last sentence, to use the terminology, if we did.

B

A

D

Clearly, we can arm-wrestle over "next generation", but a firewall is pretty clearly inline, pretty clearly transmitting and receiving. There's a whole other set of devices that are just passive, so they wouldn't even be doing that, but they would absolutely call themselves a network security device. So, on the change in title, I was wondering if you could say more specifically: why go to such a generic term, versus just shoring up the potential confusion over "next gen" versus "current" or "modern"?

A

Well, and it's interesting, Sarah. I just put up on the screen here: Bala has made a comment in which he says, "No change in the title. The title 'Benchmarking Methodology for Network Security Device Performance' was used in the previous version." I assume he means RFC 3511 as well. No, no, no, I mean...

G

D

If you're really going after a firewall... I see it even in the abstract now. It's got NGIDS and IPS, which is an interesting term. I'm just saying, as a participant, it's going to take a lot to convince me that that's the right approach to take. I definitely wouldn't test my IDS the same way.

D

B

And, you know, we have moved all of the security effectiveness stuff regarding the next-generation firewalls and the next-generation IPSs down to an appendix, and clearly focused on the performance testing aspects of things. We think that it would definitely lend itself to next-generation firewalls, next-generation IPSs, web app firewalls, any network security device that handles traffic and makes a security decision based on that.

D

Hey, you didn't mention IDS, which is a really good example, so pulling that out, I think, helps. But can I defer answering that? I mean, knee-jerk, what I would say is that benchmarking firewalls, or whatever we're going to call NGFW, and then IPSs makes lots of sense. But let me re-read it with that question in the back of my mind, and I'll circle back on the list to give you some feedback.

B

So one of the things we're wanting to avoid is having a test lab decide that they want to use this draft to do testing, and they say, "Well, we can use it in the web app firewall space, from the performance requirements aspect of things," and then have a WAF vendor say, "Yeah, but it's not meant for WAFs, because in the title it says such and such." So, I mean, we're trying to avoid that.

A

A

So I didn't hear any objections to making 3511 obsolete, but it sounds like we've got a little more discussion to undertake anyway. In the next draft that the working group looks at, let's try that as "Obsoletes 3511" and see what the reactions are, because that's something that's definitely got to be...

A

A

By the way, thanks so much for taking on 70 comments; I'm sure that was really onerous toward the end of the process. Here, we're really making good progress toward clarification and moving this up to the next step. Yeah, Bala didn't sleep much, no. And I know exactly where you're living, Bala; I've got the same situation on my hands elsewhere.

A

In fact, what you'll quickly see here is that the term "throughput" is not defined in 2647, and I think if we're talking about TCP or other reliable transport layer traffic, then I think we're talking about... where is it? I think it's goodput, yeah.

B

A

A

Do you mean reliable transport protocol throughput, or specifically... I mean, I wouldn't want to add an adjective here like "TCP throughput", because pretty quickly people are going to want to talk about reliable transport throughput which is based on UDP and QUIC, right? And that was one of the other things that was bouncing around in my mind when we were talking about next-generation firewalls as well, and you're just throwing that terminology out. So, you know, we need to...

A

A

Oh, this is, yeah, wait. And then this one: if we need to call this goodput, but then make some explanatory statement later about, you know, "the devices in the marketplace sometimes call this throughput, but it's different from the RFC 2544 throughput", and so on and so forth, then that might get us to a compromise. But we can't leave...

B

D

B

G

G

So that's why I think RFC 2544 is mentioning frames per second, but I think for the stateful firewall we have to measure bit rate, because the firewall is taking a look at the bits and the packet payloads, so the bit rate is more important than the frame rate. So this is one.

I

G

G

A

Yeah, I'm not arguing that. But I will say that the RFC 2544 throughput is commonly used at the packet layer, and the results can also be expressed in bits per second; that's where we compare it with the maximum theoretical bit rates and frame rates and so forth. We just have to put the right story together here, so that we don't have...
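The relationship between a frame-rate result and a bit-rate result can be sketched with a little arithmetic. The helper names below are illustrative, and the sketch assumes plain Ethernet, where each frame on the wire is accompanied by 20 bytes of overhead (8 bytes of preamble and start-of-frame delimiter plus a 12-byte minimum inter-frame gap).

```python
ETHERNET_OVERHEAD_BYTES = 20  # 8-byte preamble/SFD + 12-byte minimum inter-frame gap


def max_theoretical_frame_rate(link_bps, frame_size_bytes):
    """Maximum frames per second an Ethernet link can carry at a frame size."""
    return link_bps / ((frame_size_bytes + ETHERNET_OVERHEAD_BYTES) * 8)


def frames_to_bits_per_second(frames_per_second, frame_size_bytes):
    """Express a frame rate as a bit rate, counting frame bytes only.

    Preamble and inter-frame gap are excluded here; whether to include them
    is a reporting choice that has to be stated alongside the result.
    """
    return frames_per_second * frame_size_bytes * 8


# 64-byte frames on a 10 Gbit/s link: roughly 14.88 million frames per second.
rate = max_theoretical_frame_rate(10e9, 64)
print(f"{rate:,.0f} frames/s")
print(f"{frames_to_bits_per_second(rate, 64) / 1e9:.2f} Gbit/s of frame bytes")
```

The same measured frame rate therefore maps to different bit rates depending on frame size and on which bytes are counted, which is one reason the terms need to be pinned down.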

G

Here, I think the only problem is, you see, the throughput: the RFC 264 is explaining not the throughput, it is explaining bits per second, so we just take the same definition. The only problem is if we change the title. Okay, there you see bits per second as a KPI, but in our draft you see throughput.

A

Want consistency, yeah, and so that's why I'm pointing to... if you're thinking goodput, and that's what you just explained, Bala, with retransmissions and losses that are taken care of, that's fine, and that's a term that was used in RFC 3511, the previous firewall benchmarking RFC. So it sounds to me as though... and of course you can express goodput in bits per second, but it sounds to me as though you really want to...

G

G

So if you want to measure goodput, you need to eliminate all retransmissions, retries and everything. And the point is that the system is complex: it is not only the device under test, but also the test equipment. So we need to clarify and make sure who is dropping packets, or who is making retransmissions, and all kinds of things we need to eliminate in order to measure the goodput. So goodput is the traffic rate without any retransmissions, delays and anything.
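As a rough illustration of the distinction being drawn, the sketch below computes a goodput figure by subtracting retransmitted bytes from what arrived at the receiver. The function and parameter names are hypothetical, and it assumes the test equipment can report both byte counts; it is not the definition from any of the RFCs under discussion.

```python
def goodput_bps(delivered_payload_bytes, retransmitted_payload_bytes, duration_s):
    """Bit rate of unique payload delivered, excluding retransmitted bytes.

    Hypothetical helper: assumes the tester can count payload bytes that
    arrived and, separately, how many of those were retransmissions.
    """
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    unique_bytes = delivered_payload_bytes - retransmitted_payload_bytes
    return unique_bytes * 8 / duration_s


# Illustrative numbers: 1.25 MB arrived in one second, 0.25 MB of it retransmitted.
print(goodput_bps(1_250_000, 250_000, 1.0))
```

With these assumed numbers the link carried 10 Mbit/s of payload, but only 8 Mbit/s counts as goodput, and the figure says nothing yet about whether the device under test or the test equipment caused the retransmissions.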

J

Right, so I think this is covered in the 8238 definition of application goodput, but I would like to think about the majority of the readers. What is the target audience? (Of course, now somebody's calling me.) The target audience is just the ordinary guy or girl who just has an informal understanding of throughput.

J

A

J

A

A

2544 is in the errata; this is all for RFC 2544 throughput. So yeah, there's a long history here, and we're carrying some baggage. A lot of this took place before I got very active here in 2003, but I'm responsible for noticing the mistake and entering the erratum; I'll take credit for that. Nevertheless, in our context, this is a standard as much as anything in our industry.

A

This is the definition we use. Surely there are lots of other ways to define this, but within our working group we've got a good, solid foundation to stand on, and if we get close to that with another term, let's clarify it with at least one really useful adjective.

I

So Al, Carsten, Bala, Brian, if I can add this, Maciek here. Firstly, thanks very much for driving this work; as I said in my email, excellent work. And I think this is an opportunity for us to actually standardize on nomenclature, exactly as you said, Carsten, because making it complicated for the users is not going to help anybody. And just a quick example, in the normal...

I

I

So there will always be a number of, I think, adjectives, plus protocols or some other metadata associated with the performance metric word, whether it's throughput or transaction throughput or something. But it'll be good to have that clarified here, and I think this is exactly the place, this draft.

A

J

I would like to point to two parts of this definition which are not included, to my knowledge, in any other throughput definition. The first one is the word "allowed". For security devices, there is a difference between traffic that could be forwarded and traffic that's allowed. Okay, maybe that's not so much a problem. The other problem is the "correct destination interface". So in load balancing or any other kind of layer 7 device...

F

A

Actually, we've got the terms "correct destination" here in the old goodput definition. So that's a good start, and then you basically have to decide where in the stack this definition is going to exist, at what layer. You have to nail that down. And I think your point about allowed, permitted traffic...

A

I mean, they all matter, and so somehow the performance/efficiency definition should capture that we're dealing with security devices that are filtering the good from the bad, and the definition should capture that somehow. This is not just dropping packets due to a capacity issue; that's not the only action that we're measuring here. We're actually measuring the response to the malicious traffic.

B

A

B

A

Yeah, yeah. But, you know, I'm just trying to avoid the overhead of an interim meeting when we're basically going to be talking about one definition, and I think that's fairly reasonable. It's really just a bunch of knowledgeable people getting together, each side expressing their views and perspectives, and then, as you say, Brian, nail it: go away and get it right. That's what we're talking about here, basically, yeah!

A

So I think that's a good way forward on this. Does anybody have any objection to proceeding in that way, to have an informal call at a reasonable hour, where we hope anyone who has a strong interest in this topic... Basically there were five of us who spoke up today, six on all the topics of this draft, but there are plenty of people who've made comments along the way and might want to join us.

A

So, I mean, it's a small but very interested group, and I think that would keep it within a manageable size. And then of course any preliminary agreement is going to be reported back to the group, both in the context of an email status and updated words in the draft, and then in the working group review that follows. So nothing's going to happen here without full access to the information.

B

A

C

Yes, so obviously I don't know this technology very well, but I do want to share the fact that the comments that I think a couple of you are making, that this should be a different term, or at least that it should have some adjective to describe that the throughput is different, I think that is key. And although it's hard to speak for what the IESG would do during review, I suspect that if you tried to redefine throughput in this document to have a different meaning, that would cause angst within the IESG review. So it doesn't have to be a completely different word, but at least making sure that it is quite clearly different, I think, would be a good thing to achieve. Having a meeting to discuss this going forward sounds like a good idea to me, but just to phrase that...

A

That's good, that sounds supportive of the general direction we're going. And also, as a reminder to everyone, which is something Rob is very familiar with: the IESG sometimes has people on the group who've done a significant amount of benchmarking, and from that experience they go, "Oh, what the hell are you guys doing here?" So, you know, we're...

A

A

B

On the changes to the actual test cases, 7.1 through 7.9: the basic intent and process was unchanged. Anything that we changed there was for clarity's sake. We added the IANA Considerations text that wasn't in Section 8 previously, and then we added the associated RFCs that we referenced to Section 12.2, Informative References, and then we moved...

B

We moved the SUT classifications that we previously had in Section 4 into Appendix B, because it wasn't applicable across the board, so it just made more sense to make it an appendix. And that's pretty much it. I mean, if you take a look at the diff, Diff2, you'll see the whole work that we did, so. Oh.

A

Great. Well, I'm glad the NetSecOPEN community, who has contributed so much to this, is also open to the wider review here. That's what we've been trying to get going all along. And as far as the next steps go, we've got Sarah's review. We've got to work on the throughput definition, with an adjective, the "something-something throughput", and we've got to get some additional review by folks.

B

A

B

A

A

D

B

B

A

I

Yeah, sorry, I also forgot to raise my hand earlier, and I was brought in again, so apologies for that. Now I'm following the discipline in my client, and I was waiting patiently. Thank you, Al. I actually have one or two generic comments, if this is a good time, because we're going to leave this draft now, correct?

I

B

Tangentially, we're going into looking at this with an expectation that things might have to change. That may lead to a requirement to have a new draft or a new RFC, but we haven't reached that point yet. When I said we're just starting to think about it, I do mean just...

I

Something to build on: there is a lot of new network-as-a-service, this marketing, SASE things, secure access service edge, and network security generally became a much bigger thing than before, due to COVID and the number of people living online, work life and so on. So, okay, thanks very much, because there's a direct impact there on sizing, as the current sizing, and I think I raised that, is clearly focusing on physical appliances, but maybe that's something to keep in mind. The other one is the comment I made, and I guess I still need to finish the checking, because you actually did address most of my comments, so thank you very much for that. But there are a few loose ends, where I guess my questions or points were not specific enough, and that is the features that are recommended to be configured.

I

B

So the purpose of our recommendations, when it comes to the features being considered, was not necessarily to test stringently the security effectiveness of these features. The goal was to ensure that the features were enabled, that we verified that they are acting and running in a manner that we, being the testers, would expect, and then leave them on during the performance testing. So we were not looking to make this a document that was an exhaustive security efficacy test.

B

I

But let me think about that, and I'll come back to you with comments. I understand that there is time for completing the review, because I actually only skimmed through Section 7.1, which was good, but I would like to spend a bit more time on that. So I understand that there is time for that before the next revision, or is that not the case?

B

We welcome comments and suggestions. As evidenced by how we responded to your previous comments, there will be some that we think make a lot of sense and that we will change. But with respect to the test cases, 7.1 through 7.9, it's going to be a reasonably high bar that we'll need to discuss with you in order to make changes in that area, if it's going to affect the tests themselves, the execution of the tests.

I

I.

I

B

A

Okay,

so

we

we've

we've

got

our

next

steps.

We've

got

a

couple

of

additional

comments

and,

assuming

all

that's

been

captured

in

the

notes,

we'll

get

it

out

to

the

mailing

list

very

shortly,

brian

bala

karsten.

Thank

you

so

much

for

your

efforts

again,

like

you

said,

thank

you

and

we'll

we'll

now

move

on

to

the

next

topic.

If

that's

okay,

actually

the

next

topic

is

the

multi.

A

A

K

B

A

K

Okay,

so

hello,

I'm

Vratko

Polák,

I'm

presenting

an

update

on

this

draft.

MLR

search

means

multiple

loss

ratio

search,

and

now

I

see

that

it

is

not

mentioned

anywhere

in

this

presentation,

so

hopefully

next

meeting

it

will

be

somewhere

there.

The

draft

status

is

the

important

thing

and

first

of

all

the

draft

was

adopted.

That

means

that

now

it

has

different

file

name

with

a

different

version

number,

but

otherwise

the

contents

have

not

changed

in

any

meaningful

way.

My

plan

was

to

prepare

some

changes

for

this

meeting,

but

I

have

run

out

of

time.

K

K

K

K

K

Where

I

describe

the

improvements.

Initially,

my

idea

was

to

just

make

the

draft

obviously

be

able

to

support

multiple

loss

ratio

goals,

because

currently

it

focuses

on

just

two

goals.

One

goal

is

exactly

zero

loss,

which

leads

to

NDR

(no

drop

rate)

and

the

other

one

is

some

small

nonzero

ratio

which

leads

to

PDR

(partial

drop

rate).

K

There

is

no

coupling

between

which

measurement

belongs

to

which

ratio,

because

in

the

external

search

this

can

change,

we

have

seen

that

a

previous

measurement

result

that

looked

unrelated

turned

out

to

be

related.

So

the

main

change

is

that

now

there

is

a

database

that

holds

all

the

results,

at

least

for

the

particular.

K

Measurement

duration

and

the

fact

which

of

those

results

are

acting

as

upper

bound

or

lower

bound

for

a

particular

ratio,

is

computed

at

run

time

after

each

new

result.

So

this

way

we

avoid

the

previous

situation:

even

measurements

that

looked

unrelated

when

they

were

done

can

now

become

related,

based

on

this

computation

at

run

time.
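To illustrate the idea of keeping every result in one store and deriving the bounds for each loss ratio goal only when needed, here is a minimal Python sketch. It is not the code from the draft or from the published library; the names `Result` and `bounds_for`, the units, and the sample numbers are all hypothetical, and it assumes all results were measured with the same trial duration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    load: float        # offered load (hypothetical unit, e.g. Mpps)
    loss_ratio: float  # lost frames divided by sent frames


def bounds_for(results, target_ratio):
    """Derive (lower, upper) load bounds for one loss ratio goal.

    The upper bound is the smallest load that exceeded the goal.
    The lower bound is the largest load below that upper bound
    that satisfied the goal. Either may be None if not yet known.
    """
    failing = [r.load for r in results if r.loss_ratio > target_ratio]
    upper = min(failing) if failing else None
    passing = [
        r.load for r in results
        if r.loss_ratio <= target_ratio and (upper is None or r.load < upper)
    ]
    lower = max(passing) if passing else None
    return lower, upper


# One shared list of results serves both goals.
results = [
    Result(10, 0.0),
    Result(14, 0.003),
    Result(16, 0.02),
    Result(12, 0.0),
]
ndr_bounds = bounds_for(results, 0.0)    # zero-loss goal
pdr_bounds = bounds_for(results, 0.005)  # small nonzero goal
```

In this sample the measurement at load 14 acts as the upper bound for the zero-loss goal and, at the same time, as the lower bound for the 0.5% goal, without having been tagged for either goal when it was taken.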

K

There

are

some

technicalities,

for

example,

I

am

now

introducing

effective

loss

ratio.

This

is

to

avoid

false

decisions

when

measurement

at

higher

rate

leads

to

lower

loss

ratio,

because

the

intention

is

still

to

be

conservative

in

the

search.

So

if

this

so-called

loss

ratio

inversion

happens,

we

do

not

trust

those

lower

loss

ratios,

and

we

assume

they

are

the

same

as

the

next

smaller

rate,

and

this

is

the

example

yeah

I

think

I

have.

There

is.
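The effective loss ratio described above can be sketched as a running maximum over results sorted by load. This is only an illustration under stated assumptions: the function name `effective_loss_ratios`, the tuple layout, and the sample numbers are hypothetical and not taken from the draft.

```python
def effective_loss_ratios(measurements):
    """Return (load, effective_loss_ratio) pairs sorted by load.

    The effective loss ratio at a load is the largest loss ratio
    seen at that load or at any smaller load, so a lower loss ratio
    measured at a higher load is not trusted.
    """
    effective = []
    worst_so_far = 0.0
    for load, loss_ratio in sorted(measurements):
        worst_so_far = max(worst_so_far, loss_ratio)
        effective.append((load, worst_so_far))
    return effective


# Load 14 shows less loss than load 12: a loss inversion.
sample = [(10, 0.0), (12, 0.01), (14, 0.002), (16, 0.05)]
conservative = effective_loss_ratios(sample)
```

With this sample, the result at load 14 is treated as if it had the 0.01 loss ratio seen at load 12, which keeps the search conservative.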

G

K

K

G

K

Happens

with

the

new

logic,

and

you

can

see

there

is

green

number

11.

This

is

what

the

new

logic

does:

it

realizes

that

previously

unrelated

measurement

now

works

as

a

valid

bound,

so

the

search

ends

more

quickly

and

by

the

way

it

gives

a

different

result.

That

is

because

the

DUT

is

not

behaving

in

a

deterministic

way,

but

there

is

no

easy

way

to

deal

with

it

with

this

framework,

I

believe

this

is

a

good

solution

to

have

equally

valid

result,

even

if

it

is

different

when

this

result

comes

sooner.

A

A

K

For

this

particular

algorithm,

my

goal

is

to

optimize

the

search

logic,

so

the

algorithm

never

finds

any

inconsistencies

and

thinks

everything

is

good.

Of

course,

this

does

not

happen

because

the

algorithm

starts

with

shorter

durations

and

then

needs

to

remeasure

with

longer

durations.

So

here

you

can

see

the

PDR

after

five-second

measurements

was

between

15

and

16,

and

then

in

30

seconds

it

was

forced

to

concede

it

is

lower,

so

this

can

always

happen,

and

sometimes

this

happens

reliably.

D

K

Be

impossible

for

the

30

second

measurement

to

not

encounter

this

interrupt

and

the

previously

good

result,

which

was

lucky

now,

can

never

be

achieved

after

any

repetitions

and

so

on.

So

this

algorithm

is

prepared

for

this.

The

improvement

is

that

things

only

get

more

stable

within

one

specific

phase.

So

when

we

switch

from

five

seconds

to

30

seconds,

things

can

break,

but

they

will

not

break

as

badly

as

previously,

because

previously,

the

search

for

PDR

has

broken

the

previously

stabilized

search

for

NDR,

which

is

the

inefficiency

we

want

to

avoid.

K

K

K

We,

for

example,

decided

that

quadrupling

the

interval

width

gives

better

results,

because,

usually,

when

you

find

out

that

the

old

bound

no

longer

holds,

the

new

bounds

are

not

adjacent;

they

are

several

expansions

away.

So

by

increasing

the

interval

length

more

aggressively

we

can

save

some

time

on

average,

so

this

will

probably

also

get

back,

and

finally,

there

are

uneven

splits.

This

is

some

information

theory

applied

to

this.

K

If

the

current

interval

width

is

not

a

power

of

two,

we

can

save

some

time

by

not

splitting

evenly

but

trying

to

get

closer

to

a

power

of

two

for

the

resulting

intervals.

For

example,

a

one-to-two

split

is

the

logical

thing

to

do

if

you

find

yourself

at

three

times

your

interval

goal,

so

this

will

be

another

improvement

on

average.

So

I

think

it

will

be

worth

documenting

in

this

next

version.
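The uneven split can be sketched as follows. This is a hypothetical illustration, not the draft's algorithm: the function name `split_widths` is invented, the width is assumed to be a whole number of goal widths, and the rule for widths other than three (give one part the largest power of two that fits) is an assumption that merely reproduces the one-to-two example from the talk. Which side of the interval receives the larger part is also left open here.

```python
def split_widths(width_in_goals):
    """Split an interval whose width is a whole number of goal widths.

    A power-of-two width is halved. Any other width is split so that
    one part is the largest power of two below the width, which lets
    the following splits be plain halvings of that part.
    Returns (smaller_part, larger_part) in goal-width units.
    """
    if width_in_goals < 2:
        raise ValueError("interval is already at the goal width")
    # Largest power of two not exceeding the width.
    power = 1 << (width_in_goals.bit_length() - 1)
    if power == width_in_goals:
        return power // 2, power // 2
    return width_in_goals - power, power


three_goals = split_widths(3)  # the one-to-two split from the talk
four_goals = split_widths(4)   # plain halving
```

The saving is only on average: an even split of a width-three interval is not possible in whole goal widths anyway, and aiming for power-of-two parts avoids ending up with an awkward remainder later in the search.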

A

A

D

A

A

E

I

Oh

Maciek,

yes,

just

Vratko.

In

case

you

haven't

seen

Carsten

asked

the

question

on

the

chat:

whether

there

is

any

plan

or

an

observation

of

mlr

search

used

in

other

contexts,

than

FD.io,

which

is

where

the

code

is

being

developed

and

are

there

any

other

implementations

on

the

horizon?

Vratko,

Do

you

want

to

take

that

or

do

you

want

me

to

talk?

K

Well,

there

is

one

version

of

an

MLR

search

library

in

Python,

available

on

PyPI,

but

it

is

an

older

version,

that's

what

we

are

currently

using

in

CSIT.

Basically

in

FD.io

we

do

not

have

a

good

process

to

publish

different

versions

as

quickly

as

we

are

able

to

produce

them,

so

we

definitely

want

to

improve

on

that

and

other

than

that.

I

am

not

aware

of

anybody

else

trying

to

implement

this

algorithm.

I

think

everybody

that

I

know

of

is

using

this

Python

library.

I

I

A

A

A

H

H

H

Okay,

good,

so

this

should

be

fairly

simple.

Even

for

people

without

interest

in

testing,

we

have

even

like

tags

for

the

interfaces

and

the

device,

so

we

have

a

tester

and

we

have

the

same

device

implementing

the

device

under

test,

just

different

sd

cards,

and

this

this

was

the

setup

for

the

hackathon.

H

H

So

it

is

not

as

deterministic

as

the

other

one,

which

is

the

hardware

Verilog

implementation,

which

is

just

configured

to

register

interface

and

both

devices

have

the

same

command-line

interface.

So

actually

it's

very

simple

to

select

if

you

want

to

use

the

software

implementation

or

the

hardware

implementation

and

the

NETCONF

code,

like

the

YANG

core

implementation,

just

calls

command

line

tools.

H

H

Very

good

yeah,

and

I

think,

important

point

with

this

next

step

with

the

draft

is

to

find

actually

serious

organizations

which

are

interested

in

it.

I'm

not

seeing

much

of

a

point

of

pushing

the

draft

before

such

a

party

exists.

I

can

continue

working

on

it.

You

are

more

than

helpful

bringing

focus

to

the

world,

so

anyone

knows

that

it

exists,

so

we

can

just

continue

doing

that

on

the

next

hack.

H

I'm

sorry

go

ahead,

I'm

pretty

much

finished

with

the

presentation.

The

last

slide

just

shows

the

amount

of

work

done

during

the

hackathon.

If

you

go

down,

it

was

one.

Yes,

it's

a

very

minimal

amount

of

work

compared

to

the

entire

project,

so

this

is

just

implementing

the

latest

changes

and

it

is

yeah.

We

granted

also

public

access

to

the

NETCONF

node.

So

there

are

some

existing

validation

tools.

H

That

can

say

if

the

implementation

is

okay

or

not,

this

really

doesn't

have

much

significance

for

the

standardization

work

with

the

model

like

this

small,

like

we

test

our

implementation

of

it,

but

other

people

can

have

their

obviously

own

implementations,

and

this

is

not

that

significant

for

the

workload.

This

was

more

significant

for

the

hackathon

presentation.

H

A

A

Where

is

it

related

draft?

So

it

would

be

down

at

the

bottom

right,

oh

yeah,

so

there's

some

YANG

validation,

returned

warnings

or

errors

on

the

Datatracker

page

that

includes

this

draft.

So

that's

something

to

take

a

look

at.

It

looks

like

it's

only

one

warning,

though.

H

A

Yeah,

that's

good!

Thank

you.

I

mean

it

looks

to

me

here

as

though

there's

really

something

trivial.

It

wasn't

even

worth

pursuing

with

everybody

else's

time,

but

I

saw

this.

I

did

see

this

red

YANG

indication

before

and

I

wanted

to

check

that

if

there

was

something

we

needed

to

do

and

something

you

knew

about,

we

could

make

that

as

a

comment

here

today.

I

think

it

just

to

bring