From YouTube: IETF114 WEBTRANS 20220726 1400

A

We're not doing paper blue sheets anymore. There's a QR code to simplify things; otherwise, it's accessible from the IETF agenda page. The full Meetecho gives you access to the chat, while Meetecho Lite, which is what this QR code links to, lets you join the queue and sign the blue sheets without the rest of the interface. It works better than the full client on phones, for example. All right, next slide, please.

A

The Note Well. Some of you may know this pretty well, but it's worth taking a minute to discuss it. What the IETF does is covered by our Note Well, and if you're here, it means you actually said you read it on the page. But everyone is used to clicking things without actually reading them, so let me take a minute. One part of it is that anything you say at an IETF meeting, on the GitHub issues, or on the mailing list is considered an IETF contribution, and that triggers the IETF policy on intellectual property, patents, and all that. If you don't know what that is, you should take a look, because if you're aware of a patent, you have to disclose it, and that could become complicated: the lawyers at your company could be upset with you if you don't do it right. So just make sure you read all this. Next slide, please.

A

The Note Well also covers the IETF code of conduct and anti-harassment policy. I want to take a minute to underscore that we've never had a problem in this working group. Everyone has always worked together nicely, so let's keep doing that, because everything's more fun when everyone's nice. If you see anything that you think is not great, we have procedures in place for reporting it: come talk to me or to the ombudsteam, and we'll make sure it gets handled the best way we can. Next slide, please.

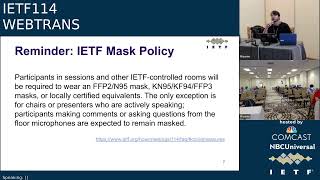

A

As a quick reminder, the IETF has a strict mask policy for all working group sessions. If you're attending in person, you have to wear a mask unless you are presenting, or are at the chair table (which is far away from everyone) and currently speaking. Also note that masks need to carry one of these certifications or something equivalent, which means that most cloth masks and surgical masks don't qualify; those don't do a good job of preventing transmission of the latest variants. FYI, we have free KN95 masks at the front desk.

A

All right, here are some more links. We're going to need a Jabber scribe and a note taker. Can we have a volunteer for Jabber scribe? All right, thank you, Jake! Can we have a volunteer for note taker? Now comes the fun part: I get to awkwardly stare at people in the room and remotely, and we're not going to start the session until we have a volunteer, because notes are very important.

A

Oh, yes, we are now using Zulip, so you can join either through the Meetecho chat or through the Zulip client; both seem to work and are bridged together. If you want Alan to say something for you, for example because you're remote and don't have audio, just write "mic:" followed by your comment, and Alan will jump in the mic queue and relay what you said at the microphone. Awesome, thanks, both of you. Next slide.

A

G

Hi, it's me. Can you hear me now? ("Yes, we can. Go ahead.") All right, thank you. I'm Jan-Ivar, co-chair, with Will Law, for the W3C WebTransport specification, and I'm here to give you a progress update on changes since March 24th. We published another working draft; the latest version is from June 23rd of this year. We also have a charter extension underway for an additional year, because the current charter expires September 22nd.

G

Currently, we're aiming for the end of September for Candidate Recommendation, which requires stability in the API. By the end of the year, our goalpost at the moment is Proposed Recommendation, which under our charter would require two independent implementations. That would put us in line for a call for review in February and, ideally, if everything works, publication as a Recommendation by the next AC meeting in April or so. So we've defined some milestones.

G

Here are some decisions since our last presentation in March. We have added per-stream stats, meaning per outgoing and incoming stream, not datagrams. These are bytes written, bytes sent, and bytes acknowledged; they are not total network byte counters.

G

We now support a requireUnreliable boolean, which defaults to false. This is so applications can, in the future, specify whether they want to require UDP; by default they will get fallback to HTTP/2. We added a read-only property for that as well, so that you can tell which one you ended up with. Another issue was whether connection pooling off is the right default, and the answer is yes: allowPooling still defaults to false. Next slide.
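As an illustration of the fallback rule just described, here is a minimal Python sketch. It is not the W3C API: `Options` and `pick_transport` are hypothetical names, the "http3"/"http2" labels are placeholders, and the only behavior modeled is "use QUIC when UDP is reachable, otherwise fall back unless the application required unreliable transport".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Options:
    # Field names mirror the two options mentioned in the talk;
    # both default to False, as stated there.
    require_unreliable: bool = False
    allow_pooling: bool = False


def pick_transport(opts, udp_reachable):
    """Return a label for the transport a client would end up on.

    Hypothetical helper: a real browser decides this internally.
    """
    if udp_reachable:
        return "http3"  # QUIC is available, so unreliable datagrams are possible
    if opts.require_unreliable:
        # The application said it cannot live with a reliable-only fallback.
        raise ConnectionError("UDP required but not reachable")
    return "http2"  # default behavior: fall back to a reliable-only transport


if __name__ == "__main__":
    print(pick_transport(Options(), udp_reachable=False))
```

The read-only property mentioned above would correspond to exposing the returned label to the application after the connection is set up.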

G

All right, current issues under debate. We have three remaining issues; we've been circling around the same ones, and this is one of them. People want to send media, and that doesn't always work so well with the default congestion control in QUIC. We have agreement to provide some constructor-level configuration API surface that would allow an application to specify its preference for the type of congestion control to be used.

G

Now, we know that's not necessarily available anywhere yet. However, we hope we can get the API ready and have bashed out all the API decisions needed to get us to Candidate Recommendation; we can then mark this as a feature at risk if implementations fail to materialize prior to Proposed Recommendation. Discussions around the shape remain: we have two proposals, going in two directions.

G

So this is a low-level version. The alternative would be to provide something more upfront, where you can specify fixed levels; that has been specifically requested for Warp, and Chrome has volunteered to investigate whether that is practical. Having an int32 number of levels would give JavaScript more ability to simply say: here are all the priority levels, I want things sent in that order, and I don't need to change it later. Next slide.

H

G

No, I should have clarified: we assume servers will take care of their own congestion control, and clients in that case will just receive what they receive. So, apologies, this would only be for ingestion, basically for clients sending media to servers. That's the missing gap we're trying to specify. Thanks for that question.

A

So please go and comment on those issues. That's what we're asking for here, because this is the kind of thing where the W3C has to figure out an API for it, but we have the congestion control experience at the IETF. This is the kind of cross-pollination that we love to see. And I see Bernard in the queue.

G

Yes, there was a detail the API slide didn't show: for the second proposal, when you expose the name, you can also expose other attributes of each congestion controller, such as what its aim is, and you can have enums for several of these properties, if you will. But thanks, David, that's a good question. It's good to highlight that these aren't set in stone in any way.

G

A

F

F

A

J

I saw David was talking about throughput and latency and looking meaningfully in my direction, so I felt compelled to say something. I worry that there's a tendency here to overcomplicate things. As we've seen this week at the hackathon, with the work done on L4S and other things that have been going on in the industry this year, it's possible to have low latency and high throughput at the same time; it's not an either-or choice.

Priorities become very problematic because somebody has to decide what the relative priorities are. If you have enough bandwidth for everything, it doesn't matter: every flow gets what it needs. If you don't have enough bandwidth for what you need, it becomes extremely tricky to figure out the right way to resolve that.

J

Do you have strict priority, where you get total starvation for the lower-priority things, or do you have some relative priority? This is all very complicated, but the good news is that with L4S and similar technologies, the whole problem goes away. You open multiple streams, and they each get a nominal fair share of the capacity when it's scarce; when bandwidth is abundant, everything gets what it needs.
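To make the contrast concrete, the following Python sketch compares strict priority with an equal fair share when capacity is scarce. It is a toy allocation model, not how L4S or any QUIC stack works internally; `strict_priority` and `fair_share` are hypothetical helpers, and the numbers are made up.

```python
def strict_priority(capacity, demands):
    """Serve demands in list order (highest priority first).

    Lower-priority flows only get what is left over, so they can starve.
    """
    grants = []
    remaining = capacity
    for demand in demands:
        grant = min(demand, remaining)
        grants.append(grant)
        remaining -= grant
    return grants


def fair_share(capacity, demands):
    """Max-min fair allocation: split capacity equally, capped by each demand."""
    grants = [0.0] * len(demands)
    active = [i for i, d in enumerate(demands) if d > 0]
    remaining = float(capacity)
    while active and remaining > 1e-12:
        share = remaining / len(active)
        still_active = []
        for i in active:
            give = min(share, demands[i] - grants[i])
            grants[i] += give
            remaining -= give
            if demands[i] - grants[i] > 1e-12:
                still_active.append(i)
        if len(still_active) == len(active):
            break  # every flow took a full share, so nothing is left to hand out
        active = still_active
    return grants


if __name__ == "__main__":
    print(strict_priority(9, [8, 8, 8]))  # scarce: the last flow starves
    print(fair_share(9, [8, 8, 8]))       # scarce: equal shares
    print(fair_share(30, [8, 8, 8]))      # abundant: everyone gets its demand
```

With abundant capacity both policies give every flow what it asked for, which is the speaker's point that priorities only matter when bandwidth is scarce.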

J

So I guess the summary is: let's not overcomplicate this with mechanism that is really hard to understand. Even the people at the IETF who are congestion control experts find this hard to understand, so the average web developer is probably just going to twiddle knobs randomly without understanding the implications of what they're doing.

A

K

Hi everyone, I'm Alex Chernyakhovsky, I work at Google. One of the things I wanted to mention is that I was a little bit surprised when I saw this slide, because I remember when we were deploying BBR on the YouTube CDN, and one of the concerns we had was that we actually saw people complaining about BBR's initial lack of fairness with all the other congestion controllers. One of the things I worry about here is that, even if you do something nice like saying, you know, aim for low latency versus aim for throughput.

L

Hi, Luke from Twitch here. First thing, on congestion control: I think the low-latency hint is pretty important. One of the things we struggle with in Warp is queue management, with a buffer sitting in the socket that still needs to be sent, and bufferbloat is an issue. If there's 500 milliseconds of RTT, there's no point prioritizing anything; everything is just going to be ordered over the wire.

L

So just having a way of telling the congestion control to keep the RTT down is important. For the next slide, I think there are two little things it comes down to. One is, like you mentioned, ordering is important. With the eight levels, it's not clear what the ordering is: is priority two always lower than priority three? And, as you also mentioned, you need at least as many levels as there are active streams. Eight is kind of low. For Warp it would be fine, honestly, but if you start doing things like per-frame priorities, then eight is just going to be artificially low. It's almost like a hard-coded flow control limit of eight; there's just not much you can do. But at the end of the day, it all comes down to buffer management.

L

M

Yeah, Jonathan Lennox. I get the impression that what is actually meant by throughput versus low latency is CUBIC versus GCC, which personally I'd be fine with in the short term. Longer term, presumably congestion control people will come up with something more clever, but in the short term those are the two algorithms that are actually deployed in Chrome, and I suspect the idea is to switch out the one for the other.

M

A

C

("To the mic, please.") Okay. I just wanted to say that there is a practical tradeoff between throughput and latency, in the sense that there is some level of fundamental uncertainty about what your bandwidth is, and any attempt to probe it results in building up a queue. That is one of the fundamental tuning properties that pretty much every congestion control scheme has to contend with. From that perspective, setting latency targets makes sense.

N

Ian Swett, Google. Yeah, I would also prefer an objective-based approach, whether it's latency or throughput; even two levels is vastly preferable. We actually have deployments where we're using BBRv1 but have it tuned to be much lower latency. It's not as good as, you know, a real-time congestion control, but it does prevent bufferbloat. So for a given congestion controller, as was said before, you can commonly tune parameters to provide output that's much more similar to one or the other.

N

O

Thanks, Colin. Fascinating to hear that the only thing we're standardizing sucks. I wanted to actually jump back a bunch, too. There were some comments that users of this at the API level will just be confused by it and not know how to set these things. That's unquestionably true in some cases, with all these things; I'm not arguing against that. But I think that is the wrong thing to design for.

O

The thing is, we have to realize that whatever levels of control we give here limit what applications can do, applications that literally billions of users use, like Zoom, Webex, and these other things that account for huge numbers of minutes. They do know how to set this stuff. They have some very good people; all of those companies are building broad WebRTC products. If you don't give them the controls to set things up the way they need, whether it's Twitch or somebody else, they just can't use this, and they will abandon the web stuff and go use thick apps, which is the problem. So we have to design for the use cases that represent large numbers of end users on the internet, not for the average web developer who may not understand this stuff. I think we should design for giving lots of control over what's going on at this API level, and I think that's a different direction than we have traditionally gone with JavaScript-level APIs.

B

P

I think we should realize that the application innovators are faster than the browser vendors, and we need to bias some of our designs toward that. One specific thing on the prioritization, though: when you start talking about abstract levels, you know, one, two, three, four, five, six, whatever, like someone said, those numbers don't mean much unless you know what the actual prioritization method is, whether it's strict priority or what the queuing discipline is, and all that.

One of the things people may want to consider is that in RMCAT there was a proposal called NADA. It's actually an RFC now, but it's an experimental congestion control. One of the interesting things about it is that it has weighted fairness. Rather than expressing priority in terms of abstract numbers, they are weights, and they are weights relative to what a default, unprioritized stream would be. It's almost as if you have an atom stream: if you don't do anything, that's what you get.

But if you want to have a priority, you specify a weight, so you can be a three-times-heavy stream or a half-heavy stream. That weight is relative to an absolute thing, which is the default stream that you would get if you didn't do any prioritization. I think that may be a useful concept to look at when you look at doing the prioritization APIs.
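The weight idea described here can be sketched in a few lines of Python. This is only the proportional-share arithmetic, not the NADA algorithm itself; `weighted_shares` is a hypothetical helper, the stream names are made up, and a weight of 1.0 stands for the default, unprioritized stream.

```python
def weighted_shares(capacity, weights):
    """Split capacity in proportion to each stream's weight.

    A weight of 1.0 is the default stream; 3.0 is "three times heavy",
    0.5 is "half heavy". Weights must be positive.
    """
    total = sum(weights.values())
    return {name: capacity * w / total for name, w in weights.items()}


if __name__ == "__main__":
    shares = weighted_shares(9.0, {"default": 1.0, "video": 3.0, "thumbnails": 0.5})
    for name, share in shares.items():
        print(name, share)
```

Unlike abstract level numbers, the ratio between two weights has a direct meaning: the "video" stream above is granted three times what the default stream gets, whatever the total capacity is.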

Q

But I think we've alluded a bit in this discussion to the fact that you might have something that gives you both low latency and throughput, and so we've had real trouble trying to specify something that actually makes sense in real life for people. It's almost like you want the inverse of that, and that starts to get really messy and kind of gross. So I would support what Tommy was saying: either go all the way up to the top and describe what we're doing, rather than some intermediate property that we think will accomplish that goal, or, and maybe both, also give people a direct ability to just say: nope, I'm advanced, I know what I'm doing, I'm working on developing congestion controllers, and I know I want BBRv2. Let's do it. That's going to be exactly what I want.

A

Just poking at congestion control was always going to be very successful in an IETF meeting, and it was, so thanks, everyone, for the really good discussion. I'll repeat my point: please add that on the W3C GitHub. This is really good input for them, and it's something they can act on. So thank you. All right, Jan-Ivar, keep going.

G

Oh yes, thank you. Can you hear me? Yes, thanks again. And yes, I think we should say the W3C will probably be perfectly happy to specify whatever you guys come up with, and we're very open to your input. So thank you. The last slide: the third issue currently under debate is whether to expose some stats to enable JavaScript to build more RTP-like real-time protocols for client-to-server audio and video.

G

The previous discussion was all about giving JavaScript control knobs for what the browser can do about it. There are some who are trying to hand this off wholesale to JavaScript as well, and, you know, some somewhere in the middle who want to control all of this. So this is a separate issue that we're tracking. It's assumed to be about datagrams only, or at least at the connection level only. Again, this is open for discussion.

G

So we've asked the question: what kind of stats would JavaScript need in order to build its own congestion control algorithm, for example? There's RFC 8888, which suggested latest RTT, packet departure, and packet arrival (which I assume is arrival on the server), and then ECN. Maybe ack info would be sufficient. We've also reached out to David Balderson (I hope I got your name right) for some experimental data on implementing RTP over WebTransport with BBRv2, or maybe BBRv2 plus SCReAM.

G

The reason this is only for datagrams, or only at the connection level, is that the JavaScript APIs for outgoing and incoming streams do not operate at the packet level. So the question we still have is: are packets and datagrams sufficiently analogous for an RTP-like implementation? Again, this is an exploratory issue, so questions are welcome, or input is welcome, I should say. I think that's my last slide.

Q

How's that going to do? Yes, no, good, bad? Sweet. All right, cool. I'm Eric Kinnear from Apple, and if we can get the next slide, please, we're going to talk a bit about the capsule design team that we started at IETF 113. The main question was: what the heck should we do about capsules? Should we use them? Should we not use them?

Q

If we go to the next slide, we can see that we had a DATAGRAM capsule, which comes from HTTP Datagrams, and we want to use that to send datagrams on an H2 stream just as much as we want to send them in H3. There's also a CLOSE_WEBTRANSPORT_SESSION capsule in H3. And if we go to the next slide, we can see we have a whole pile of them for H2. So the obvious crossover here is something like DATAGRAM.

Q

Should we be sharing everything else? How does the rest of this work? What is the rule? Can I send a WT_STREAM capsule on an H3 stream, and is that cool? Does that give us awesome version independence? Does that destroy everything and make things go down in flames? So, next slide, please. We also opened the can of worms that is flow control.

Q

That's what we started with in terms of problems. Like it says on the slide, we'll talk a little bit more about flow control and some of that stuff later, but the opportunity arose to say: hey, we have all of these different stream, max stream data, and blocked capsules. Should we send those on H3, and what does that mean?

Q

So that's what we set out to solve. Where we are right now is that we have a pull request against H2 and a pull request against H3. We will send the links out on the mailing list and ask for a bunch of input and review. I'm going to summarize very quickly, in slide form, what those do, because that's often a lot more grokkable than reading a bunch of diff against what it used to look like.

Q

But I chose to use "explode" here, so we're going to continue with that one. We looked at some pros for why we would want that. It's really attractive from a symmetry perspective to have a single conceptual model that looks kind of like a miniature version of QUIC that you can run on any HTTP exchange you have anywhere. You could potentially get H1 support out of this for free. It doesn't matter; it's completely transport agnostic. This is just how I send WebTransport streams.

Q

Now, in the common case, you have to ask: how is it coming in? What do I do? Are you allowed to switch partway through? What if some streams go over a single H3 stream, but others you choose to split out into their own H3 streams? Can I restrict that if I'm not willing to give you some of those resources? And what does that do to our stream limits for flow control, which we're going to talk about in a second?

Q

So you are not sending a DATAGRAM capsule on your H3 stream; it is an actual datagram, and that persists throughout all of H3. Everything that H3 can split out, which is most everything, looks just like it does today. There's no debate over: oh, but it came in this other way, am I supposed to handle it in some weird, different way, and can I signal to the other side about it? So no weirdness there: if it can use a native feature, it uses it.

Q

Q

Q

B

So either you're going to say that WebTransport is just going to break in those cases, or you need to tweak the language. Where you say that you must use datagrams in H3, say instead that you must use datagrams if you have the transport parameter. If for some reason the other side didn't send the transport parameter, you already know that it can't do it, so then you must use the capsule version. But I think saying that you have to support capsules is fine, because you can always do that.

A

Q

Q

E

In this context, it does mean that if someone is going to deploy this and they're using intermediaries in their deployment, they're going to need to ensure that when the front end receives one of these things and says yes, it's okay, the connection onward is dealt with somehow, whether that means translation or full-on support for the same sort of feature set. I think that's just something we can write down and explain.

E

E

E

Q

E

I understand that to be a problem for some people, but I think this whole idea of implicit signaling that's tied to other things is problematic when it crosses layers. It may be appropriate at the layer in which it was done, because it was all tied into the same negotiation. I don't like that, but that's where we ended up. And yeah.

A

F

S

I would like to point out that there is a use case of WebTransport that doesn't require datagrams. It's a somewhat primitive use case, but you can imagine just doing the stuff you did on WebSocket via WebTransport now, benefiting from streams, and never sending a single datagram. So I don't see why datagram support needs to be a requirement.

Q

That was going to be my next question: is there anybody planning on deploying this who doesn't have datagrams, doesn't want them, and would rather have code that handles DATAGRAM capsules coming in on an H3 stream? Because that's kind of your alternative, right? You're still going to have to write code that has the letters "data" and "gram" in it. It's just that now you have to have an if statement and deal with it in multiple places.

S

P

E

That is possible as a proprietary protocol, but building something that doesn't have datagrams in it, and specifically designing to allow for that possibility, does complicate how we build this thing, and I think it's a complication that we don't necessarily want here. Implementing datagrams, or implementing the possibility of receiving datagrams from someone, is relatively simple to do. Even if you don't plan to use them and all you do is throw them away, that's probably something you could do in that context, and then you would get interoperability.

E

However, building something that says datagrams are optional makes it very much more difficult for those of us who are building to this sort of thing and have to talk to arbitrary servers. Then we have to deal with the possibility that maybe datagrams aren't present, and we have to think about how to move things onto capsules and all sorts of other things. So I think that's nice, but I don't want to go there.

A

Yeah, David Schinazi, no hats. Well, mask enthusiast hat. Just to add: in the HTTP Datagrams document we say that you must support receiving datagrams inside capsules, so that's kind of a requirement here. At the end of the day, if you already have a capsule parser, which you need because of the CLOSE_WEBTRANSPORT_SESSION capsule, having it call the "I received a datagram" function is pretty trivial, so I wouldn't worry about that too much. I think this boils down to...
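The point about the capsule parser can be sketched in Python as follows. This assumes the capsule layout of a variable-length integer type, a variable-length integer length, and then the value, with QUIC-style varint encoding. The two type values are taken from the later published HTTP Datagrams RFC and the WebTransport over HTTP/3 draft as best understood here, so treat them as assumptions; `dispatch` and its callbacks are hypothetical names, and the input is assumed to contain only complete capsules.

```python
DATAGRAM = 0x00                      # assumed capsule type value
CLOSE_WEBTRANSPORT_SESSION = 0x2843  # assumed capsule type value


def read_varint(buf, pos):
    """Decode one QUIC variable-length integer starting at buf[pos]."""
    first = buf[pos]
    length = 1 << (first >> 6)       # top two bits select 1, 2, 4 or 8 bytes
    value = first & 0x3F
    for b in buf[pos + 1:pos + length]:
        value = (value << 8) | b
    return value, pos + length


def parse_capsules(buf):
    """Split a byte string into (type, value) pairs. No truncation handling."""
    pos = 0
    out = []
    while pos < len(buf):
        ctype, pos = read_varint(buf, pos)
        clen, pos = read_varint(buf, pos)
        out.append((ctype, bytes(buf[pos:pos + clen])))
        pos += clen
    return out


def dispatch(buf, on_datagram, on_close):
    """Route each capsule; unknown capsule types are silently skipped."""
    for ctype, value in parse_capsules(buf):
        if ctype == DATAGRAM:
            on_datagram(value)       # the "I received a datagram" function
        elif ctype == CLOSE_WEBTRANSPORT_SESSION:
            on_close(value)


if __name__ == "__main__":
    wire = b"\x00\x02hi" + b"\x68\x43\x04\x00\x00\x00\x00"
    dispatch(wire, lambda d: print("datagram", d), lambda v: print("close", v))
```

Once the parser exists for the close capsule, supporting datagrams inside capsules is one extra branch, which is the argument being made.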

L

Hi, it's Luke. I've deployed a QUIC stack without datagram support. You're right, it's really easy, and it's kind of trivial to just throw them away. I think the only concern is maybe capabilities on the W3C side. I think there was a slide there saying datagrams are reliable or unreliable, and it's kind of hard to tell if a server actually supports these unreliable datagrams if it just lies and says "I support them" because it needs to say that to get WebTransport, and then you actually try to send them and it doesn't work. So there might still be a use case there. Yeah, I don't know. I can't really think of any reason why you can't just lie about it, but I definitely would like to avoid having to implement anything complicated with datagrams; there's just no reason to use them in most use cases, I think.

Q

We say that instead of sending a flag that says SETTINGS_ENABLE_WEBTRANSPORT, you just send SETTINGS_WEBTRANSPORT_MAX_SESSIONS. If you set it to zero, no WebTransport for you today. If you set it to one, you have WebTransport with no pooling: you get one session. And if you set it to more than one, you now have however many you asked for. So, completely reasonable.
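The three cases for the max-sessions setting can be written down directly. The Python function below is a hypothetical helper that only restates the rule from the talk; it does not parse a real SETTINGS frame.

```python
def describe_max_sessions(value):
    """Interpret a max-sessions setting value as described in the talk."""
    if value < 0:
        raise ValueError("setting values are non-negative")
    if value == 0:
        # Zero means WebTransport is not offered at all.
        return {"webtransport": False, "pooling": False, "sessions": 0}
    # One session means WebTransport without pooling; more than one allows pooling.
    return {"webtransport": True, "pooling": value > 1, "sessions": value}


if __name__ == "__main__":
    for v in (0, 1, 4):
        print(v, describe_max_sessions(v))
```

A single integer thus replaces both the enable flag and the pooling signal.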

Q

But if you have multiple WebTransport sessions and each of those is using native H3 streams, it would be very, very easy for my first session to use the entire budget, and then my next session says "I'd like to open a new WebTransport stream" and the answer is "nice try." So this is a way to do that within a WebTransport session, in a context that understands it, as opposed to H3, which just sees lots and lots of very equal streams: you can just say MAX_STREAMS and use that same capsule.

Q

S

Q

Q

The

and

the

reason

for

that

is

because

you

can't

necessarily

tell

that

that

was

associated

with

that

session,

because

the

stream

is

gone

and

you're

not

gonna

when

the

the

frames

arrive

you're

having

a

bad

day,

and

so

I

think

we

we

discussed

wordsmithing

some

text

around

how

the

capsule

kind

of

has

to

be

paired

to

try

to

make

that

less

possible.

But

I

don't

know

that

we

ever

got

it

to

a

zero

percent

chance

possibility.

Q

Q

S

S

The

the

problem

I

see

is

that

there's

a

race

condition

here,

like

let's

say

I

you

give

me

10

streams.

I

close.

I

have

this

10

streams

open.

I

close

five

of

them

and

open

five

new

streams

and

now

the

fin

bits

for

the

closed

streams

get

reordered.

Then

you

will

think

that

I

open

15

streams

and

you

will

give

me

a

protocol

violation.

I

would

assume

got.
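
The race described here can be reproduced with a toy model. The sketch below assumes the limit is enforced as a count of concurrently open streams, which is the speaker's premise rather than a statement about how any real stack enforces it; the event tuples and function name are invented for the example.

```python
def peak_open_streams(events):
    """Return the highest number of streams the receiver sees open at once.

    events is a list of (kind, stream_id) tuples in arrival order,
    where kind is "open" or "fin". Purely illustrative.
    """
    open_ids = set()
    peak = 0
    for kind, stream_id in events:
        if kind == "open":
            open_ids.add(stream_id)
        elif kind == "fin":
            open_ids.discard(stream_id)
        peak = max(peak, len(open_ids))
    return peak


LIMIT = 10
initial = [("open", i) for i in range(10)]     # sender uses all 10 streams
fins = [("fin", i) for i in range(5)]          # sender closes five of them
new = [("open", i) for i in range(10, 15)]     # sender opens five new ones

in_order = initial + fins + new    # arrival order matches sending order
reordered = initial + new + fins   # fins arrive after the new opens

print(peak_open_streams(in_order) <= LIMIT)    # sender stayed within the limit
print(peak_open_streams(reordered) <= LIMIT)   # receiver sees a violation
```

The sender behaves identically in both runs; only the arrival order differs, which is why a receiver that counts concurrent streams could flag a violation that never happened.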

Q

It

the

closing

before

being

opened,

is

essentially

like,

so

if

a

stream

gets

reset

before

you

knew

it

existed.

So,

if

I

say

hey,

I'm

resetting

the

stream

and

you

go,

excuse

me,

what

stream?

Very

often,

in

at

least

several

implementations,

you

basically

say:

okay,

cool.

This

stream

is

dead

and

when

things

then

show

up

for

it

later,

you

basically

just

completely

discard

everything

to

do

with

it,

which,

without

careful

wording,

it

means

that

you're

also

discarding

the

information

that

told

you

which

web

transport

session

you

should

have

billed

for

that

stream.

T

Alan

Frindell,

Meta.

So

yeah,

I

think

what

Martin

said

about

the

streams

that

are

closed

gracefully

with

a

fin

bit

don't

have

this

problem,

but

the

ones

that

reset

yeah

could,

and

I

think

there

may

be

a

separate

issue

that

we'll

talk

about

in

the

h3

section,

but

I

think

the

leaking

it

is

bad

and

that

we

probably

need

a

reset

capsule,

which

would

be

reliable

to

make

sure

that

that

doesn't

happen.

E

So

I'm

not

even

sure

that

we

need

this

capability.

Honestly,

there

is

always

the

possibility

that

you

can

have

the

the

bad

session

completely

overwhelm

the

capacity

of

the

connection,

for

instance,

if

I

as

a

as

a

bad

website

or

just

one

that

didn't

know

what

they

were

doing,

were

to

create

multiple

web

transport

sessions

and

use

lots

and

lots

of

streams

on

them,

it's

possible

that

you

could

exceed

the

available

streams

that

are

there

for

that

connection.

E

Q

Well-

and

there

is

a

line

to

be

drawn

there,

so

sneak

peek.

The

next

slide

is

going

to

be

taking

all

of

the

data

limits

and

throwing

them

in

the

"we'll

deal

with

this

later

if

we

decide

we

actually

need

it,

it's

a

real

problem"

bucket.

So

we

could

choose

to

do

that

for

this.

The

thing

you

say

about

you

know

hey.

I

want

to

send

non-web

transport

requests

on

this

h3

connection.

E

So

it

it's

always

going

to

be

the

case

that

you

can

exceed

it

unless

you

have

reserved

a

few

streams

for

the

purposes

of

making

other

requests,

which

a

browser

is

quite

capable

of

doing.

If

that's

what

we

want

to

do,

but

that's

a

lot

of

that's

going

to

depend

on

what

the

server

is

willing

to

allow

for.

So

if

the

server

only

gives

us

a

budget

of

three

streams,

then

we

don't

have

a

lot

of

options

available

to

us.

E

C

Yeah

one

observation

I

wanted

to

make

is

partially

that,

because

this

is

some

of

this

is

limiting.

So

the

situation

is

like

when

the

client

opens

too

many

streams,

so

the

browser

can

send

a

http

request.

Well,

browser

can

control

the

number

of

open

streams

on

top

of

what's

imposed

by

http

connections.

It

is

to

say

the

browser

might

decide

that

you

only

get

32

streams

from

this

connection,

and

there

is

no

need

to

support

this

in

protocol

because

it's

all

local

to

the

browser.
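
The point that a browser can apply its own cap without any protocol support amounts to taking the smaller of two numbers. A minimal sketch, assuming a local cap of 32 as in the example given here; the function and parameter names are hypothetical.

```python
def effective_stream_limit(peer_limit: int, local_cap: int = 32) -> int:
    """Streams a session may open: the peer's limit, further capped locally.

    local_cap is a browser-side policy value that is never sent on the wire.
    """
    return min(peer_limit, local_cap)


print(effective_stream_limit(100))  # local cap applies
print(effective_stream_limit(3))    # peer's limit is already tighter
```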

T

Alan

Frindell,

I

think

the

concern

that

I

have

with

letting

the

browsers

just

decide

like

we're

going

to

reserve

some

streams

and

it

doesn't

need

to

be

communicated,

is

that

then

servers

have

to

deal

with

browsers

that

have

different

limits,

or

maybe

you

decide

that

you're

not

going

to

have

the

limits

or

some

browsers

do

or

don't

so

that

it's

sort

of

inconvenient

just

better

to

be

able

to

like

have

some

guarantees,

but

I

think

also

to

Victor's

point.

T

I

seem

to

remember

maybe

something

like

this

along

with

websockets,

where

Chrome

has

some

limit

for

like

how

many

websocket

streams

you

can

have

in

an

h2

session

which

is

kind

of

similar.

So

I

don't

know,

having

some

explicit

way

to

communicate

what

the

limits

are,

I

think,

would

be

good,

right.

S

Q

Q

So

we're

going

to

say

that

at

least

for

those

bytes

you

have

the

ability

for

any

h3

stream,

and

obviously

a

lot

of

this

also

applies

to

h2,

but

specifically

for

h3

for

any

h3

stream.

You

already

have

flow

control.

You

already

can

use

it.

You

already

screw

it

up

sometimes.

Let's

not

make

it

any

more

complicated.

Q

If

we

end

up

needing

some

kind

of

thing

that

we'd

actually

want

to

signal

about

that,

we

can

certainly

add

it

later.

It's

not

super

hard

to

add

capsules

and

extend

things

by

making

that

work,

but

we're

gonna

propose

not

doing

that.

So

the

number

of

streams

we

said

was:

this

is

a

thing

that

you

cannot

necessarily

do

otherwise

and

it'd

be

interesting.

Q

If

we

can

chime

in

with

some

some

clear

text

on

how

we

would

explain

that

browsers

should,

you

know,

do

a

sensible

default

there

or

do

concurrent

stream

count

or

pick

some

other

strategy.

That

would

be

excellent,

but

we

said

it

was

worth

biting

off

a

little

bit

of

this

complexity

for

streams,

because

that's

something

that

you

don't

necessarily

have

good

control

over

otherwise,

but

for

bytes

you

have

lots

of

knobs.

We

have

yet

to

prove

that

we

can

use

those

knobs

successfully

in

every

case.

Q

Excellent.

Next

slide,

please.

Intermediaries

make

the

entire

conversation

we

just

had

a

lot

more

complicated.

That

is

also

potentially

a

reason

to

have

a

little

bit

more

explicit

signaling

if

we

need

to

distribute

some

of

that.

So

this

is

a

place

where

we

have

a

split

between

a

way

to

conceptualize

what's

going

on

and

the

thing

you

actually

need

to

do

when

you

write

your

code.

So

conceptually,

the

proposal

here

is

that

and

it's

less

of

a

proposal

and

more

of

a

reality,

flow

control

is

terminated

at

an

intermediary.

Q

So

when

I

have

my

h3

connection

that

is

terminated

by

somebody

who's

then

going

to

talk

upstream

of

that

via

h3

or

h2,

a

lot

of

those

flow

control

limits

are

actually

terminated,

especially

if

they're

translating

between

h3

and

h2,

but

even

if

they're

just

sending

h3

to

h3

or

h2

to

h2,

that

intermediary

could

choose

to

allow

someone

to

send

it

more

than

it

is

then

allowed

to

send

upstream

and

vice

versa.

Q

S

Q

And

if

we

skip

to

the

next

slide,

since

I

think

building

this

in

in

segments

is

not

necessarily

going

to

help

much,

we

got

more

numbers

here

that

refers

to

a

thing

that's

on

the

left

somewhere.

Conceptually,

what

this

is

saying

is

that

if

you're

an

intermediary

and

somebody's

saying

hey,

you

can

send

me

100

bytes,

you

probably

want

to

be

very

careful

before

you

tell

the

person

sending

you

stuff

that

they

can

send

you

more

than

that

100

bytes,

which

should

be

pretty

straightforward.
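
The caution described here can be written down as a rule of thumb: what an intermediary offers downstream should not outrun what it can pass upstream plus what it is prepared to hold itself. The sketch below is one way to express that under stated assumptions; the function, its parameters, and the buffering term are illustrative and do not come from any draft.

```python
def safe_downstream_credit(upstream_limit: int,
                           already_forwarded: int,
                           local_buffer: int = 0) -> int:
    """Bytes an intermediary can safely invite from the downstream sender.

    upstream_limit    -- total bytes the upstream peer has allowed so far
    already_forwarded -- bytes already sent upstream against that limit
    local_buffer      -- bytes the intermediary is willing to hold itself
    All three names are assumptions made for this example.
    """
    remaining_upstream = max(0, upstream_limit - already_forwarded)
    return remaining_upstream + local_buffer


# Upstream said "you can send me 100 bytes" and nothing has been sent yet.
print(safe_downstream_credit(100, 0))
# 60 bytes already forwarded, no buffering: only the remainder is safe.
print(safe_downstream_credit(100, 60))
```

Any credit granted beyond this value is data the intermediary may be unable to forward or store, which is the situation the speaker warns about.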

S

Q

Limits?

Yes,

yes

right,

so

you

need

some

sensible

set

of

initial

limits,

but

essentially

as

you're

going

to

increase

those

limits,

you

need

to

be

careful,

but

yes

you,

you

could

be

stuck

in

a

situation

where,

when

you

establish

your

upstream

connection,

it

says

my

initial

limit

is

50

and

you'd

already

advertised

100

and

you're

gonna

have

to

deal

with

that.

E

So,

let's

try

to

be

a

little

bit

more

pragmatic

about

this

sort

of

thing.

This

is

going

to

be

a

gateway

sitting

in

front

of

a

bunch

of

servers,

and

in

a

lot

of

cases

the

gateway's

going

to

know

something

about

those

servers.

Now,

whether

that's

based

on

the

fact

that

it's

already

talked

to

those

servers

in

the

past

or

because

they're

actually

operated

by

the

same

people,

and

they

run

off

the

same

configuration

is

largely

immaterial.

E

E

I

was

just

thinking,

there's

interesting

complications

here

when

you

talk

about

having

QUIC

on

both

sides

of

the

intermediary

and

when

you

have

QUIC

and

tcp

on

different

sides.

With

QUIC,

if

you

get

an

out

of

order

piece

of

stream

information,

you

just

forward

it

on

and

sort

of

say:

oh,

this

is

just

stuff

that

you'll

need

to

deal

with

in

the

future.

That's

easy.

With

tcp,

with

head-of-line

blocking,

you

have

to

wait

for

everything

you

have

to

buffer

things

up.

E

So

ultimately

the

intermediary

can't

sort

of

blindly

forward

those

things

on

in

the

in

the

tcp

context,

because

it

does

need

to

have

all

of

the

space