From YouTube: IETF115-WEBTRANS-20221110-1300

Description

WEBTRANS meeting session at IETF115

2022/11/10 1300

https://datatracker.ietf.org/meeting/115/proceedings/

A

I really need to cue the Jeopardy music for this one. I'm looking at some newcomers: that's a great way to help out if you'd like. Not twisting anyone's arm, but it is always much appreciated by the community. The notes really don't need to be perfect. If you look at those from any other meeting, it's just a rough idea, especially now that we have the YouTube video. So is anyone willing to take notes? Please? [inaudible] staring at their laptop instead of making eye contact. Wow. It's okay, we just won't!

A

Oh, thank you very much, I appreciate it. If you can take them in notes.ietf.org, there's a link from the agenda or from the slides. And anyone else, if you want to jump in and help, that's much appreciated. Thank you, and please put your name at the top so I can properly thank you in the email. Right. This is our agenda, as is often the case here at WEBTRANS.

A

We're going to do an update on what's going on at the W3C, then discuss the capsule design team and its impact on H2 and H3, and other H2 and H3 open issues. Then we have a specific topic on reliably resetting streams from Martin, and then we'll see if we need any hums and wrap up. Would anyone like to bash this agenda?

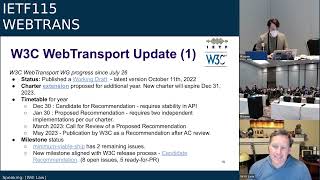

C

Yes, let me just confirm the mic's working. All right, yes, it is. Okay, thank you. Happy middle of the night from California. My name is Will Law from Akamai. I co-chair the W3C group, along with Jan-Ivar Bruaroey from Mozilla. We have three quick slides on updates and then a slide with four questions for this group, which I think will hopefully be the bulk of our time. So, just an update. Since we last presented on July 26th, we've updated our working draft; the latest revision happened on October 11th.

C

The charter is in the midst of being extended through to December 31st, 2023. We're actually just past our charter limit currently, but we're in process, so we're fine from a W3C perspective. On our timetable for the year, we're really trying to move to Candidate Recommendation, which requires stability in the API. Proposed Recommendation, the next step, requires two independent implementations. Google have implemented in Chrome and Mozilla are close on Firefox, so we should get there, hopefully within Q1. Our goal is to get to publication.

C

C

So, just some updates for you. I won't read through all that text in detail. I think the one most meaningful for this group is that we added a new congestion control constructor argument. It's an enum with three values: default, throughput and low latency. You give an indication of what type of congestion control you would like the user agent to invoke on your behalf in the WebTransport connection that you're instantiating.
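
As a rough illustration of the "indication" semantics described here, the following Python sketch models a user agent that treats the constructor argument as a hint. The three values come from the talk; the function name `resolve_congestion_control`, the `supported` parameter and the fallback behaviour are assumptions made for illustration, not part of any real API.

```python
from enum import Enum


class CongestionControl(Enum):
    # The three values mentioned in the talk (string spellings are illustrative).
    DEFAULT = "default"
    THROUGHPUT = "throughput"
    LOW_LATENCY = "low-latency"


def resolve_congestion_control(requested, supported):
    """Return the mode a hypothetical user agent would actually apply.

    The request is only a hint: if the user agent has no algorithm for the
    requested mode, this sketch falls back to DEFAULT instead of failing.
    """
    if requested in supported:
        return requested
    return CongestionControl.DEFAULT
```

Under this reading, an application would compare the applied mode with the one it asked for, rather than assume the request was honoured.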

C

C

D

Jonathan Lennox. Is that decision about whether low latency can be done made before the connection is established? I'm concerned about the case where you have a congestion control algorithm that wants... I'm thinking in particular of, say, the timestamp extension. I can implement Google congestion control if I have timestamps on the other side, but I can't if I don't, which means you wouldn't know until after the connection is established whether you got the low-latency congestion control or not. Is that something you...

C

A

Yeah, I can answer that one. This is part of the constructor in JavaScript. At least for now, in Chrome we don't pool multiple WebTransport sessions and different connections, so you would always have this information when creating the connection. And even if we do implement pooling, you could totally say, "Oh, this connection isn't valid for this constructor, we'll create a new one." So I think we're covered, because this is on the constructor. It's not something you can change later.
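
The "this connection isn't valid for this constructor, we'll create a new one" idea can be sketched as a pool lookup that also matches on the congestion control hint. This is a minimal Python sketch under stated assumptions: `Connection`, `ConnectionPool` and their fields are hypothetical names, and no browser is claimed to pool this way.

```python
from dataclasses import dataclass, field


@dataclass
class Connection:
    origin: str
    congestion_control: str


@dataclass
class ConnectionPool:
    connections: list = field(default_factory=list)

    def get(self, origin, congestion_control):
        # Reuse a pooled connection only if it was created with the same hint.
        for conn in self.connections:
            if conn.origin == origin and conn.congestion_control == congestion_control:
                return conn
        # Otherwise no existing connection is valid for this constructor call,
        # so open a new one and remember it.
        conn = Connection(origin, congestion_control)
        self.connections.append(conn)
        return conn
```

Because the hint is fixed at construction time, it can safely be part of the lookup key.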

D

A

A

C

B

Yeah, I think Jonathan's question may also have been about whether WebTransport requires some of these timestamp options, and the answer is no. I don't know if Jonathan wants to come back and clarify that. But anyway, this is just about the congestion control algorithm that would be implemented on the WebTransport client. It doesn't influence what's on the server or anything like that.

C

C

So we have two main issues of debate. The first remains around prioritization. We have three issues up there, and over the last few months we've proposed various ever more complicated API constructs around flows of short-lived streams, for example, and weighting schemes. The latest issue there comes from myself, which is, as a baseline, maybe we should consider simply doing the minimum necessary to support MoQ, Media over QUIC, which has a relatively simple requirement for ever-escalating priorities.

C

So we have not resolved this issue. It's an issue of debate, but that's on the books. The second remaining large issue is the stats surface. We've had prior discussion around packet arrival and departure times, latest RTT and ECN information. Peter Thatcher's put forward a PR that resolves that to a single property, basically expressing available throughput, and there's also debate over what the time base is for that value.

C

A

C

We have some difference of opinion. I personally think it's important for the first version, because it seems necessary to implement one of our strongest use cases, which is the web conferencing use case: in other words, a client sending real-time audio and video to a server. It's working well today in good network conditions, but it doesn't work well under poor network conditions. So we feel that we need some type of prioritization scheme to be able to make that happen.

B

Yeah, I just wanted to clarify here. Priority isn't free, in that when you do that and have this strict sending, you lose concurrency. At least for the conferencing case, I don't agree that it's required to do that, and in fact, when I've done the samples, it can actually be harmful.

C

B

I would say that the goal should be what Alan said, which is making sure that it's not precluded. Let me put it that way. And also, there can be some experiments, because I think you just have to be very careful: priority goes hand in hand. You can't both have priority and claim to get rid of head-of-line blocking, right? "I want strict priority of my frames."

B

C

E

F

Alan Frindell. Yeah, I think Martin made this point a second ago in the chat, which is that the WebTransport protocol as it's being defined in the IETF does not have a way to signal priority over the wire, and I don't think we plan to create one. The API consideration is very much about the JavaScript code being able to communicate to its local implementation how it would like things to be prioritized before they leave that endpoint.
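
To make the "local implementation" point concrete, here is a small Python sketch of a strict-priority send queue that lives entirely inside one endpoint. Nothing about priority is put on the wire. The class and method names are hypothetical, and real implementations may well use weighted or incremental schemes instead.

```python
import heapq
import itertools


class StrictPriorityScheduler:
    """Hypothetical local send queue: a lower number means more urgent."""

    def __init__(self):
        self._heap = []
        self._order = itertools.count()  # tie-breaker keeps FIFO order per priority

    def enqueue(self, priority, stream_id, data):
        heapq.heappush(self._heap, (priority, next(self._order), stream_id, data))

    def next_to_send(self):
        # Strict priority: the most urgent queued chunk always goes first,
        # so lower-priority streams wait while urgent data is pending.
        if not self._heap:
            return None
        _, _, stream_id, data = heapq.heappop(self._heap)
        return stream_id, data
```

The sketch also shows the cost raised earlier in the discussion: while urgent data keeps arriving, lower-priority streams make no progress, so concurrency is lost.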

C

A

A

So for it to work properly, you need your bottleneck link to implement some specific kind of smart queuing. The idea with L4S, the short of it, is you have a shorter queue that L4S gets into, but it promises not to grow the queue. There are a lot of folks working on those, and we saw a lot of progress at the hackathon, but they're not deployed yet. And most importantly, WebTransport can't impose requirements on anything.

A

G

G

It's still a little bit unclear as to why we would actually want to be able to require that. I think this kind of comes back to number two, and I don't want to reopen that if we've got a nice "no" answer. But I think the sentiment that I'm feeling, which I think might be shared, is that we're not currently planning to signal priorities, and we're not currently planning to try to require that kind of congestion control, but that doesn't mean that it could never happen.

G

That means that, right now, there is no clear use case for why you would need to signal those priorities as opposed to having it be an endpoint-only thing. Similarly for question three, I'm not seeing a huge reason that you would want to require that everybody anywhere ever doing WebTransport can only do Prague or something similar, as opposed to simply making it available for an implementation that needs one.

C

B

G

Because when we were talking about "hey, for congestion control, I want to request low latency", that is very much a request. Even if you have Prague on your local endpoint, that does not necessarily mean that we would consider what you're getting to be low latency. So I'm a little bit hesitant to say "this is a thing" and have everybody go off being happy and then be surprised down the line when that's not what they got.

G

B

I

Hello. I think we've answered the priority questions adequately. I think there's a lot of space in here for signaling, but that will be very much application-specific, and really all that we can do at this layer is provide an API. That's, thankfully, not a problem that the IETF has to concern itself with. There's a bunch of people who have some experience in that area.

I

They can take that to the W3C; I think that's probably the cleanest way to manage that. On point three, I think what we have, with the application expressing a preference for the way in which congestion control is managed, but leaving implementations (and by implementations I mean browsers and servers) to just sort of compete on the quality of the congestion control algorithms, is probably the most sensible approach. Having a mandate for a very specific set of congestion...

I

I

A

J

Yeah, I mean, I'm pretty much with Martin on this. Certainly for congestion control I have a fundamental issue, which is that congestion control is a property of the connection as a whole, and WebTransport is only using part of the connection. So you might even have two WebTransport sessions on the same QUIC connection, and what if those...

E

J

K

Hey, Luke from Twitch. So fortunately Christian opened Pandora's box for me, but: pooling. On the previous slide, you exposed some network stats like the estimated bitrate, RTT, and a congestion controller. It doesn't really make sense when you're pooling, so it seems like at least the JavaScript API is assuming no pooling, or is that...

C

D

Even if you have a default congestion controller. I think that's the point of that, and I think there have been some early experiments with RTP over QUIC that have gotten reasonable results with that: basically, avoid the queue. Keep the queues low, keep your latency low, over a default congestion controller.

C

Yeah, it wasn't that people wanted to implement congestion control in JavaScript. It was to try to improve the delivery, and to do it on top of the existing congestion control that's happening under the base layer is very difficult. I think you're referencing the AVTCORE work that was done over BBR, if I remember correctly.

A

L

Gotcha. I was gonna say roughly the same thing as Jonathan: if we added an API, which we don't currently have, but if we added one for number four, it would not be trusting the JavaScript with congestion control. It would just be allowing it to go lower than whatever built-in congestion control there is, if it wants to. It wouldn't be allowed to go higher, necessarily. So that's in response to Martin's comment.
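
The "go lower, never higher" rule amounts to taking a minimum. The Python sketch below is purely illustrative: as the speaker notes, no such API exists today, and `effective_send_rate` and both parameter names are invented for this example.

```python
def effective_send_rate(cc_rate_bps, app_cap_bps=None):
    """Combine the built-in congestion controller's rate with an optional app cap.

    The application may only lower the rate; the built-in controller's
    estimate always remains the upper bound.
    """
    if app_cap_bps is None:
        return cc_rate_bps
    return min(cc_rate_bps, app_cap_bps)
```

An application asking for more than the controller allows simply gets the controller's rate, which is why the script never has to be trusted with congestion control.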

L

I think there might be potential for doing something like that, but it's something that needs to be explored, and I don't think that the QUIC timestamp option is even mature enough yet to be something we can rely on. So I think while number four has potential, it's not something that is mature enough, either with the extension or to see if this whole idea can work, to be something we can mandate right now.

A

My computer... well, I was in the queue as an individual as well, but everyone already said it, so plus one. As chair, my read of the situation is I'm getting agreement in the room for "no" on all four points. I'm gonna reopen the queue briefly in case someone wants to disagree, just so we can have that. We're not going to run a formal consensus call, because I don't think you're asking for a full liaison here, but does anyone want to disagree?

A

G

I'd just like to say, I think it's "no" on all points, but not like a "no, and you should be sad for doing it". It's more of a "no", and if there's a cool use case that really needs this, or if there is a place where you do need to signal priorities, that is an open thing. So I don't think this is a "no, we're uninterested in what you're trying to solve". It's more of a "no, from what we're aware of for what you're trying to solve".

A

C

A

A

E

G

We've now removed basically all of the stuff we were talking about around flow control from the capsule design team pull requests at this point, so we've ripped a bunch of stuff back out. We have left a very little bit of it in: we've left all of the stuff for H2, because none of our reordering issues occur in H2, because H2 is conveniently on top of this nice reliable, ordered protocol. And there's a little bit of base text for session-based flow control that is still in H3.

G

There's some settings changes that we'll talk about later, and otherwise this just converts everything over to take our existing TLVs and call them capsules, and list them in this nice new list of capsules that we have. So this is now fairly minimal. Please actually go read and review. You've seen this diagram at three IETFs by now. It has not changed, so let's land it and move on, so we can stop looking at this diagram.

G

G

Some implementation experience said that's actually really, really painful when you're implementing, because it means that you have to teach your QUIC datagram implementation about WebTransport and about the extended CONNECT protocol and everything in between. That ends up being mildly painful, especially because some of the settings don't show up until after you need to have looked at the transport parameters anyway. So when you suddenly start getting in datagrams, you're like, "hey, I'm annoyed now". So these are likely to become totally separate things. There's a slide and an issue for it later.

G

I

I

Just, you know, zero and one, I think, is most sensible. I don't think there's any need or value in the client saying a hundred. I just can't imagine what client would know how many sessions it would create at the time it makes the connection, right? So yes: true or false, which is a bit weird, but better than having two settings.

G

I

G

I

So settings cost the same either way, so this is just double the cost, and we're going to be sending them on every connection. Yeah, every connection we make to any web server will have this setting in it. If you make us send two, we're going to be spending all of those extra bytes, and we do kind of care about those bytes. So one's best. Thank you.

D

F

Alan Frindell. Since WebTransport sessions are client-initiated, aside from the use case Victor just mentioned, which is a strange way to version the protocol anyway, but okay: what if the client didn't have to announce support via a setting, and the server says "I can handle one or more WebTransport sessions", and then the client sends it one and it's like, "Oh, the client supports WebTransport"? Otherwise, what will you do, other than just... the client says "I don't support it" and then sends you a WebTransport session?

N

O

D

Yeah, Jonathan. I mean, I'm not sure why you need to have... why not have just the client send "enable", and the server... meaning "I can create WebTransport sessions, but don't create them to me"? Basically the same semantics as max sessions zero, but it means "max sessions zero, but I do WebTransport". So this can be asymmetric: you can have "enable" meaning not actually "enable", but "I'm able to create them".

D

D

I mean, I guess the question is: if your implementation is such that the WebTransport stuff and the HTTP/3 stuff are different parts of your stack, and you need to transfer the socket over to a different part of your code, or even a different process or something, that would be the reason, I would think. But right.

I

Does anyone need to know? I think I can probably answer that question. Does any server need to know whether a client does WebTransport? I think there may be cases where servers need to know, but in those scenarios you can put the server on a different hostname, and the client will be able to connect to it and do the magic stuff that way, rather than looking at other things. I like Alan's suggestion.

G

G

A

David Schinazi. I was initially going to say that the client should send it, because this modifies HTTP semantics and it tells the server that, yes, you can send server-initiated bidirectional streams, and that's how we generally do that in HTTP. But then I remembered that I really don't care. Either way works, and Victor's versioning thing from the client can be done as a header as well.

O

I will be very brief and just say it does not seem like there's any actual information here that the server needs to receive from the client. The client needs to receive the number of sessions, and anything other than zero implies everything the client needs to know. So let's just have one setting, sent in one direction, and be done.
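
A minimal Python sketch of the "one setting, one direction" proposal: the client looks only at the session limit advertised by the server, and treats an absent or zero value as "no WebTransport here". The dictionary key is an illustrative name rather than a real codepoint, and `client_may_open_session` is a hypothetical helper.

```python
def client_may_open_session(server_settings, open_sessions):
    """Decide whether the client may start another WebTransport session.

    server_settings: settings received from the server, keyed by name.
    open_sessions: number of sessions the client already has on this connection.
    """
    # A missing setting is treated like zero: the server does not do WebTransport.
    max_sessions = server_settings.get("WEBTRANSPORT_MAX_SESSIONS", 0)
    return open_sessions < max_sessions
```

In this model the server learns that the client supports WebTransport only when the first session request arrives, which is the behaviour suggested earlier in the discussion.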

K

G

N

Hey, Lucas Pardue. Just a quick one... what was I... I forgot what I was gonna say. Damn. Oh: quite often we think of settings as the only way to negotiate a semantic change in HTTP/2 or 3. The spec doesn't require that; it can be whatever else we come up with. So that would potentially fit this as well.

A

M

As I said, the versioning thing cannot be done via the server-only thing, because you have to understand which version you speak. For instance, between draft 2 and draft 4 we decided that on bidirectional streams we require the WebTransport session frame to be at the very front, and that would be a breaking wire change, and we have to have the client and server agree on which version is actively being used.

G

So I think we have two concrete options here. Option one is the server sends SETTINGS_WEBTRANSPORT_MAX_SESSIONS. You can encode a version in the type of the thing that it sends there if you like, and when the client sends extended CONNECT, you can always mint a draft-specific protocol token, just like we did with ALPN for H3 and things like that, right? And that arrives with the extended CONNECT. There's no asynchrony happening there; there's no reordering possible.

G

G

G

So option one: the server sends SETTINGS_WEBTRANSPORT_MAX_SESSIONS with some non-zero value, and the type of that setting encodes your version. When the client sends extended CONNECT, you can always mint a new protocol entry. Right now we just call it "webtransport", but you could always call it "webtransport-02" or however you want to signal support for different draft versions of things, just like we did with ALPN for H3.

G

Your second option is SETTINGS_WEBTRANSPORT_MAX_SESSIONS is sent by both sides. You've encoded the version in the type of that setting, and we define one, for the client, to be a sentinel that means "I'm not accepting any WebTransport sessions at all, but I do support WebTransport". You can then infer the version from the type of the setting that was sent.

G

I

You know, I just realized that, with the first design, the server can advertise support for multiple versions. Now clients can exercise whichever one they choose simply by indicating the version in the header field. The challenge with the other design is that the client can't support multiple versions. You don't have any version negotiation available, because if the client says "oh, I support draft 15 and draft 16", then when it exercises that option later, there's no way of knowing which one it concretely is using.
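
Option one, with a server advertising several versions, can be sketched as follows in Python. Everything concrete here is an assumption for illustration: the setting type values in `SETTING_TYPE_TO_DRAFT` are made up, and the `webtransport-NN` token format simply follows the "webtransport-02" example given in the discussion.

```python
# Hypothetical mapping from setting type to draft version; real codepoints differ.
SETTING_TYPE_TO_DRAFT = {0xA001: 2, 0xA002: 4}


def choose_protocol_token(server_settings, client_drafts):
    """Pick the protocol token the client puts in its extended CONNECT.

    server_settings: {setting_type: max_sessions} as received from the server.
    client_drafts: draft versions the client implements.
    Returns None when there is no usable version in common.
    """
    offered = {
        SETTING_TYPE_TO_DRAFT[setting_type]
        for setting_type, max_sessions in server_settings.items()
        if setting_type in SETTING_TYPE_TO_DRAFT and max_sessions > 0
    }
    common = offered & set(client_drafts)
    if not common:
        return None
    # The token travels with the request itself, so the server always knows
    # which version this particular session uses.
    return f"webtransport-{max(common):02d}"
```

Because the version is named per request, the server can advertise several versions at once without ambiguity, which is the property pointed out in the comment above.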

O

B

A

I

M

M

G

G

A

Yep, well, and please, I see Victor, Martin: keep the conversation going in the chat. If you can resolve it there, great; otherwise we can do it on the list. Let's please not face-plant on the settings; this is the silliest part of the protocol. Oh, for next time: no, close the slide... they've added a button where I can reclaim control now. Yeah, no worries. Anyway, cool. Then, oh right, no, Victor's not going to continue the conversation; he's going to be up here presenting.

A

M

Okay, so I'm doing the presentation for WebTransport over HTTP/3 open issues. There are plenty of those, but most of those are actually either addressed by the previous presentation, i.e. the design team output, or they're addressed by the presentation that Martin will make later. So, first of all, the update on what actually got merged, which is one issue: we have clarification text on when exactly you can open new streams and datagrams, and the client can basically open them as soon as it opens the extended CONNECT.

M

M

M

Alan, my answer to this is: please file a bug for better developer tooling for Chrome, because this is definitely something that has frustrated me in the past, and I don't think we can get rid of all of these issues. So we definitely need more informative tooling to tell you why your server got rejected, and it's just a matter of writing the code.

M

F

D

Jonathan Lennox. I think listing them all explicitly is probably a good idea, because otherwise, in five years, we're going to be in a situation where, you know, you want these 15 features, and this one implies these four but not these other three, and it'll be a complete mess, rather than just "let's list them all from the start". That said, I feel like there should be clear direction on what to do.

D

If somebody gives you a nonsensical response, like, you know, "I support WebTransport, but not extended CONNECT" or something like that, or HTTP datagrams but not QUIC datagrams, there should be a clear indication: if somebody has something nonsensical, just tear it down and consider it a complete failure. Don't try to continue.
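
The "list everything explicitly, and fail hard on nonsense" rule can be sketched as a dependency check in Python. The feature names and the `REQUIRES` table are illustrative assumptions based on the two examples given by the speaker; they are not the actual setting identifiers or the complete dependency list from any draft.

```python
class NonsensicalSettings(Exception):
    """Raised when a peer advertises a feature without its prerequisites."""


# Each feature lists what it depends on (illustrative names, not codepoints).
REQUIRES = {
    "webtransport": {"extended_connect", "h3_datagram"},
    "h3_datagram": {"quic_datagram"},
}


def validate_peer_settings(enabled):
    """Check a peer's advertised feature set; raise instead of guessing.

    enabled: set of feature names the peer advertised.
    """
    for feature in sorted(enabled):
        missing = REQUIRES.get(feature, set()) - enabled
        if missing:
            # Treat this as a complete failure: the caller should tear the
            # connection down rather than try to continue.
            raise NonsensicalSettings(
                f"{feature} advertised without {sorted(missing)}"
            )
```

A caller would close the connection on `NonsensicalSettings`; no feature is ever inferred from another.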

O

O

N

N

P

A

M

All right, let's move on to the next issue, which is a bit more complicated: what do we do with HTTP redirects? During the last meeting we all agreed that we definitely should have either a "must support redirects" or a "must not support redirects", because anything else is just a highly unpleasant developer experience. The general consensus was leaning towards supporting them and requiring their support. I went and asked Adam Rice, who was the reason we originally did not support them.

M

I asked what the pitfalls are with that, and he made a reply, which you can read on the issue tracker, with one of the potential attacks on redirects. But the more I think about it, the more I come to the conclusion that redirects have a lot of really unpleasant semantic edge cases, and a lot of them revolve around the fact that, in order to send the redirect to a WebTransport resource, you need to use a connection that supports WebTransport.

M

But a redirect is something that a server that either supports or does not support WebTransport can reply with. So what happens if you get a redirect for a WebTransport resource and it redirects you to somewhere that does not support WebTransport? Or should we handle redirects, and attempt to fetch for those redirects, in cases when we are on a connection that does not support WebTransport in the first place? And then there is the second issue.

M

We technically currently allow the client to start sending streams before getting a reply from the server, and this has the obvious problem of: okay, we sent some data on the streams to the server, and now we got redirected. What happens to those streams? That is the second problem we have to deal with. So my personal current inclination is that we should not support redirects, because we will have to deal with all of those, and I would like to know what people in the room think about this. Martin?

I

I

That's just a failure condition. That's just another one of the things on the checklist that Alan was talking about before that's difficult to get right, but you only really have to get it right once. Idempotency is interesting, which sort of led me to ask the question: so you're connecting and you're sending... I don't know, what are you sending on this thing? You're sending stream data?

I

What's the stream limit on the other end? Do we have a default? Can we guarantee that you'll have a bidirectional stream to create and send on? You don't know, especially not in the H2 case anyway. Maybe in the H3 case, because you're just taking from a global pool that you already know the size of. But I'm tending towards the conclusion here that that is difficult, and I'm not sure what to do with it.

I

If it's just stuff that's sent in the payload of the request, then it's relatively straightforward, and I think that's all you're really allowed to do before you get confirmation that something is done, so maybe just replay it. Is that right? Eric's nodding. I think if we make the semantics of a 3xx in a response be that none of the payload of this request was processed, then if you're doing a CONNECT and you get a redirect, you can just take those bytes and send them on the next one.

I

It'll be fine. If you have anything other than that, I think this is really, really difficult. I'm not actually sure how we're going to do this for H2, the more I think about it. You can do some bookkeeping, but you won't be able to do any stream data, because our default stream limits are zero.

H

So if we want to support redirects, I feel like the answer here, if you're definitely going with sending data over datagrams, is that you just have to assume that anything that you sent prior to getting a confirmation that the extended CONNECT succeeded, just like we did with the underlying MASQUE stuff, you might have to retransmit. So in this case, I feel that what Martin was saying here around idempotency is very clearly solved: you get the redirect, you assume that all that data was not processed.
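
The replay rule described here ("anything sent before confirmation was not processed") can be sketched in Python as a buffer that keeps early data until a 2xx arrives and replays it after a 3xx. The class and callback names are hypothetical, and the sketch deliberately ignores flow control and stream limits on the new connection, which the discussion identifies as the hard part.

```python
class EarlyDataBuffer:
    """Remember data sent before the extended CONNECT is confirmed."""

    def __init__(self):
        self._pending = []
        self.confirmed = False

    def send(self, transmit, data):
        # Keep a copy until the peer confirms the session.
        if not self.confirmed:
            self._pending.append(data)
        transmit(data)

    def on_response(self, status, retransmit=None):
        if 200 <= status < 300:
            # Session accepted: the early data was delivered to this session.
            self.confirmed = True
            self._pending.clear()
        elif 300 <= status < 400 and retransmit is not None:
            # Redirected: assume none of it was processed and replay it,
            # in order, on the connection to the new target.
            for data in self._pending:
                retransmit(data)
```

The buffer is kept after a redirect on purpose, since the new target may itself redirect before confirming.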

H

M

I

Yes,

sir

Victor,

that's

a

fair

question.

This

is

the

case

where

you

you

attempt

on

on

one

connection

and

you've

got

a

nice

fat

flow

control

window,

and

you

send,

you

know

a

megabyte

of

stuff,

not

that

you

should

be

doing

that.

But

let's

say

you

manage

that

and

you

get

a

redirect

and

then

the

next

connection

only

has

like

10K.
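
A minimal sketch of the situation described here, assuming a client that keeps a copy of everything it sends before the CONNECT is confirmed and treats a 3xx as meaning none of it was processed. `EarlyDataBuffer` and `replay_after_redirect` are hypothetical names for illustration, not part of any draft or library; the window sizes mirror the "megabyte" and "10K" figures mentioned above.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EarlyDataBuffer:
    """Hypothetical client-side copy of bytes sent before the CONNECT is confirmed."""

    chunks: List[bytes] = field(default_factory=list)

    def record(self, data: bytes) -> None:
        # Keep a copy of everything sent optimistically.
        self.chunks.append(data)

    def pending(self) -> bytes:
        return b"".join(self.chunks)

    def confirm(self) -> None:
        # A success response arrived, so nothing needs replaying.
        self.chunks.clear()


def replay_after_redirect(buf: EarlyDataBuffer, new_window: int) -> Tuple[bytes, bytes]:
    """Split buffered bytes into what fits the new connection's window and what must wait.

    Assumption: a 3xx means none of the early payload was processed,
    so the whole buffer is eligible for replay.
    """
    data = buf.pending()
    return data[:new_window], data[new_window:]
```

With a megabyte buffered and a 10,000-byte window on the redirected connection, only the first 10,000 bytes can go out immediately; the rest stays queued until the new peer grants more credit.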

M

I

E

G

I

would

agree

at

the

same

time.

I

do

think

the

what

happens

after

I'm

redirected

is

not

massively

different

from

what

I

would

have

done

if

I

went

there

originally

so

like

if

I,

if

the

thing

that

I'm

running

requires

me

to

have

three

streams

open

and

I

would

have

gone

to

a

server

that

says

no,

you

can

only

have

two

like

this

is

the

same

problem

right.

So

the

fact

that

I

was

able

to

have

to

pack

my

CONNECT

with

additional

data

that

tries

to

open

three

is

not

that's

not

really.

G

M

At

least

for

dedicated

WebTransport

or

for

HTTP/3.

You

can

open

as

many

streams

as

the

QUIC

transport

settings

allow

you

and

the

reason

I

say

opening

streams

specifically,

is

that

buffering

data

is

something

you

can

do

transparently,

but

with

opening

streams

our

API

explicitly

gives

you

a

promise

that

is

not

resolved

until

the

stream

is

actually

opened.

G

M

G

M

I

I

Maybe

we

need

a

new

setting

for

that,

because

in

in

the

in

the

pooled

scenario,

maybe

you

don't

want

clients

just

opening

up

new

WebTransport

streams

for

sessions

that

haven't

been

approved

yet

and

in

the

in

the

dedicated

connection

case,

well,

maybe

it's

okay

to

do

that,

so

that's

something

that

I

think

we're

going

to

have

to

think

about

a

little

bit

more

carefully,

because

that

changes

the

disposition

toward

this

particular

question

quite

a

bit.

G

What

I

was

going

to

say

yeah,

so

this

is

a.

This

is

essentially

the

box

of

things

that

you

open

up

when

you

start

trying

to

do

that

kind

of

flow

control,

and

things

like

that,

so

I

think

I

mean

I'm

totally

game

to

go

try

to

figure

that

out

offline,

but

I

don't

think

we're

going

to

answer

it

in

the

next

half

an

hour.

Yeah.

A

But

just

speaking

as

chair

here,

the

I

think

the

output

of

the

design

team

that

we

declared

consensus

on

was

like

the

flow

control

for

that

part

is

oh

right.

Now

we

we,

we

punted

it

out.

That's

true,

so

that

we

didn't

reach

counter

consensus

on

it.

So

that's

still

like

a

nice

landmine

that

we

haven't

decided

how

we

want

to

step

on.

M

I'm

not

sure

flow

control,

specifically,

we

definitely

I

think

it's

better

to

take

it

offline

because

there

it's

very

clear

that

there

are

a

lot.

It

sounds

like

there

are

unsolved

design

issues

here

and

we

should

either

solve

them

or

design,

decide

that

they're

not

worth

solving

or

should

not

be

solved,

but

it

doesn't

sound

like

we're

arriving

to

a

new

conclusion

at

this

meeting,

so

I

suggest

we

move

to

the

next.

A

M

M

Okay,

so

the

third

issue,

which

is

actually

three

issues

which

talk

about

roughly

the

same

topic

but

different

aspects

of

it

or

maybe

the

same

aspect

of

it

is

that

the

we

have

for

unidirectional

streams

in

WebTransport,

we

just

have

a

unidirectional

stream

type

and

we

use

that

and

we

put

the

session

ID

and

then

we,

it

just

works.

For

bi-directional

streams

there

are

no

stream

types

in

HTTP/3.

So

we

made

a

special

frame

that

just

says

everything

else

on

this

stream

is

WebTransport

and

we

can

make

that

frame.

M

It's

legal

because

we

use

a

setting

to

negotiate

WebTransport

support,

meaning

that

we

can

alter

the

protocol

and

then

the

question

was

well,

can

you

put

anything

before

that?

And

during

the

last

IETF

meeting

we

roughly

agreed

that

the

answer

to

that

question

is

no,

because

we

want

to

have

consistency

between

what

we

do

with

bi-directional

streams

and

unidirectional

streams,

so

that

is

as

far

this

is

I

think

we

have

agreement

on

that

and

Lucas

and

Lucas,

please

correct

me,

because

I

am

trying

to

vaguely

restate

what

you

said

in

the

issue.

M

Slash

pull

request

suggests

that,

instead

of

doing

that,

we

should

just

define

bi-directional

stream

types

and

as

an

extension

to

HTTP/3,

and

there

is

a

pull

request

to

do

this

and

I

will

let

Lucas

advocate

for

it,

because

my

current

opinion

on

it

roughly

this

has

the

same

effects

on

the

wire,

so

I

don't

think

this

is

particularly

worth

it,

but

Lucas,

please.

A

My

understanding

and

correct

me

if

I'm

wrong,

is

this

doesn't

change

the

wire

format

in

the

sense

that

the

bi-directional

stream

will

always

start

with

a

varint

that

is

an

identifier

and

that

identifier

will

either

be

in

the

streams

in

a

registry

stream

type

IANA

registry

or

any

frame

types

I.

Never,

actually

that's

that's

what

we're

debating

is

which

registry,

but

it

doesn't

change

what

we're

actually

sending.

Is

that

correct?

I'm

asking

you,

Lucas.

Okay.
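
A minimal sketch of the point made here: whichever registry the identifier ends up in, the receiver reads one QUIC variable-length integer at the start of the bidirectional stream and branches on it. The varint decoding follows RFC 9000, Section 16. The codepoint `0x41` and the helper `classify_bidi_stream` are assumptions for illustration only; the actual value and the registry it belongs to are exactly what is under discussion.

```python
from typing import Tuple

# Assumed codepoint for illustration only; check the current draft before relying on it.
WEBTRANSPORT_BIDI_SIGNAL = 0x41


def decode_varint(buf: bytes, offset: int = 0) -> Tuple[int, int]:
    """Decode one QUIC variable-length integer (RFC 9000, Section 16).

    Returns (value, number_of_bytes_consumed).
    """
    if offset >= len(buf):
        raise ValueError("buffer too short for varint")
    first = buf[offset]
    length = 1 << (first >> 6)  # top two bits select 1, 2, 4 or 8 bytes
    if offset + length > len(buf):
        raise ValueError("buffer too short for varint")
    value = first & 0x3F  # remaining six bits are the high bits of the value
    for b in buf[offset + 1 : offset + length]:
        value = (value << 8) | b
    return value, length


def classify_bidi_stream(prefix: bytes) -> Tuple[str, int]:
    """Hypothetical helper: inspect the leading varint of a bidirectional stream."""
    ident, used = decode_varint(prefix)
    if ident == WEBTRANSPORT_BIDI_SIGNAL:
        # The session ID follows as a second varint.
        session_id, _ = decode_varint(prefix, used)
        return "webtransport", session_id
    # Anything else is handled as an ordinary HTTP/3 frame type.
    return "http3-request", ident
```

The bytes on the wire are the same whether `0x41` is called a frame type or a stream type; only the parsing rules that follow the identifier differ.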

N

If,

instead,

you

kind

of

say

when

we're

using

WebTransport

or

an

extension

of

this

type,

there

is

a

way

to

convert

the

semantics

of

H3

such

that

bidi

streams

no

longer

become

request

streams,

and

this

is

a

way

to

do

that.

The

PR's

in

WebTransport

I

think

it's

into

a

section

of

the

document.

That's

like.

N

Maybe

we

don't

want

to

do

this

in

WebTransport

and

that

maybe

this

is

something

we

take

to

the

HTTP

working

group

and

say

we

designed

H3

for

for

this

use

case

and

we're

trying

to

do

other

things

with

it,

and

this

is

an

approach.

So

there

were

some

good

comments

that

you

made

on

that

PR

and

some

stuff

has

shifted

on.

I

haven't

had

the

time

to

go

back

and

comment

them.

It's

not

something

I

want

to

give

up

on

yet

because

I

haven't

had

the

time

to

revisit

it.

N

N

A

I

As

chair,

I'll

jump

in

and

try

to

phrase

the

nuance

and

please

jump

in

and

correct

me

Lucas

if

you

think

I'm

doing

it

wrong.

So

in

HTTP/3,

well,

QUIC

has

server-initiated

bi-directional

streams.

In

HTTP,

it

says

you

must

not

send

them

and

if

you

receive

them

explode,

so

we

know

no

one's

sending

them.

But

now

we

have

magical

super

duper

setting.

So

we

know

that

we're

in

a

different

mode

and

that

setting

tells

you

what

you're

allowed

to

send

on

this

stream

and

how

you're

supposed

to

parse

it.

A

A

N

N

So

the

client

request

stream,

you

have

frames

and

if

you

don't

understand

frames

extension

frames,

you

ignore

them.

What

we're

saying

is

we

want

to

change

some

of

that

machinery

too,

such

that

when

you

receive

this

frame

it

seems

the

property

that

we

want

is

that

WebTransport

starts

and

converts

the

stream

immediately

into

the

mode

that

it

needs,

but

by

using

a

frame.

N

What

you

end

up

with

is

effectively

an

infinite

amount

of

stuff

that

could

appear

before

that

WebTransport

stream

frame,

and

that

just

seems

completely

pointless

and

so

you're

making

like

another

exception

for

behavior,

for

WebTransport

streams,

but

I

don't

think

we

need

I

think

we

should

just

say

if

you're

using

WebTransport

and

you

get

the

stream

open

and

it

has

to

start

with

this

byte.

Otherwise,

there's

something

bogus

going

on.

It's

not

it's

no

longer

a

framed

stream,

it's

something

else

which

is

the

property

that

we

want.

If

I

understand.

I

Yeah

so

I'm

not

all

that

enthusiastic

about

the

the

way

in

which

this

is

working

out,

because

we

have

bi-directional

and

unidirectional

streams

and

the

client-initiated

bi-directional

streams

have

to

have

frames,

and

in

order

to

for

us

to

to

do

this,

we

have

to

choose

a

thing

that

sits

at

the

front

of

that

stream

that

looks

like

a

frame

or

at

least

uses

a

number

from

the

the

space

that

isn't

like.

I

We

have

to

register

a

frame

type

in

order

to

avoid

colliding

there

right,

so

I

don't

want

to

have

I

don't

want

to

have

us

fix

the

asymmetry

between

unidirectional

streams

and

bi-directional

streams

only

to

create

this

problem

with

the

others,

so

I

think

we're

probably

in

a

situation

where

unidirectional

and

bi-directional

is

the

is

the

cleave

that

we're

looking

for

and

then

I

think

we

have

frames

now.

I

I

I

What

I

don't

want

to

have

happen

is

we

we

lose

the

the

ability

to

distinguish

our

streams

from

their

streams

when

they,

when

they

share

the

connection,

so

I'm

like

need

a

handle

there.

I

would

prefer

to

use

the

frame

parser

to

do

frame

parsing

on

like

the

bi-directional

streams,

which

potentially

means

it's

wasting

a

byte

for

a

zero

length.

I

I

think

zero

length

is

easier

than

a

than

a

whatever

other

options

we

have

for

that

field,

because

most

frame

parsers

will

be

type

length,

something

and

knowing

that

we

have

type

length

of

zero

and

nothing

following

it

makes

those

stream

parsers

very

much

more

easy.

Well,

we've

got