►

From YouTube: IETF115-TVR-20221110-0930

Description

TVR meeting session at IETF115

2022/11/10 0930

https://datatracker.ietf.org/meeting/115/proceedings/

A

I'm

Lou

Berger-

this

is

Russ

Wright,

we're

co-chairing,

and

we

also

have

here

with

us

who's

volunteer

to

act

as

our

secretary.

Thank

you

all

the

material

is

online

and

if

you're

interested

in

the

topic,

please

take

a

look

at

the

material.

We

also

have

the

standard

web

page

available.

That

summarizes

the

purpose

of

the

boss,

we'll

of

course

go

over

that

here

and

we

have

a

couple

of

drafts

even

though

they're

a

little

bit

early

next.

A

This

is

an

ietf

meeting.

All

of

our

meetings

are

governed

by

that.

Our

note

well,

which

has

rules

regarding

our

participation.

Basically

everything

you

say

here

becomes

a

part

of

our

permanent

record.

If

you're

not

familiar

with

the

note.

Well,

please

go

to

the

ietf

page

and

take

a

look

and

familiarize

yourself

with

our

rules

of

participation.

Next,

we

also

have

rules

related

to

conduct,

and

basically

we

ask

you

to

always

treat

each

other

with

respect

and

professional

and

be

professional

with

each

other.

A

Of

course,

it's

okay

to

have

good

technical

argument,

but

please

keep

it

at

the

at

the

technical

and

professional

level

and

of

course

we

have

a

document

governing

that

too

TCP

54

next

for

those

in

the

room,

we

ask

two

things.

The

first

is:

please

scan

the

QR

code

joining

join

the

online

Tool,

whether

you

use

the

phone

or

the

the

computer.

It's

really

important

because

of

two

things:

it

gives

us

our

blue

sheets.

A

It

also

allows

you

to

participate

in

polling

and

we

will

conduct

some

polls

later

in

the

the

session

and

we'll

do

that

versus

raising

hands

or

humming,

so

that

we

are

Equitable

to

the

people

who

are

remote,

so

they

can

participate

at

the

same

level

for

remote

participants

you're.

Here

thanks

so

much

for

joining

and

please

meet

your

mics

if

you're,

not

speaking,

the

only

additional

piece

of

information

on

on

this

slide

really

is

come

join

us

on

joint

minute

taking

so

we

use

Hedgehog

ether

pad

whatever

you

want

to

call

it.

A

It's

a

it's

a

collaborative

tool

where

everyone

can

make

sure

that

we're

capturing

the

conversation

and

the

discussion

that

happens,

you

don't

have

to

capture

the

material

on

the

slides,

that's

already

on

the

slides

and

we

have

YouTube

available,

but

for

the

discussion

it's

really

important

to

help

capture

the

capture.

The

discussion

going

back

to

the

going

back

to

the

scanning

in

for

on-site

I

forgot

to

mention

the

other

bullet.

A

A

A

Eventually,

we

have

to

figure

out

what

new

work

is

to

be

done,

where

the

gaps

are

in

the

existing

Technologies

and

that's

those

two

are

sort

of

the

main

purposes

of

the

discussion,

but

from

a

management

standpoint

from

the

isg

from

the

IAB.

A

very

important

question

is:

is

there

sufficient

interest

in

working

on

this

problem

at

the

ietf?

B

A

So

we

have

a

posted

agenda.

The

agenda

is

a

little

different

today

than

it

was

yesterday

or

the

day

before.

Basically,

we

got

a

little

more

information

and

some

additional

contributions.

That's

always

really

appreciated.

As

a

contribution-driven

organization.

One

thing

I

want

to

say

about

the

time

here

these

are

approximate

times.

We

have

a

good

30

25

minutes

for

discussion.

If

that

discussion

happens

earlier

in

the

session,

that's

okay,

we

don't

have

to

be

completely

rigid

to

these

times.

C

C

There

was

good

correspondence

on

the

mailing

list

and

I

want

to

call

out

Adrian

for

taking

me

to

one

side

yesterday

and

saying:

let's

drill

this

down

to

something

a

bit

more

short

and

tight,

and

that

really

hasn't

been

updated

yet

and

is

kind

of

the

content

that

I

will

come

up

with

at

the

very

end

of

this

slide

deck.

But

meanwhile

can

we

have

the

first

slide.

Please.

C

So

I'm

going

to

jump

straight

into

the

meat

of

it

as

I

and

several

other

people.

We

have

chatted

to

see

it.

There

is

the

following

problem:

we

understand

that

in

routing

you

have

nodes,

you

have

links,

they

come

they

go.

You

need

a

protocol

to

try

and

build

some

sort

of

topology

so

that

you

can

build

end-to-end

paths

across

these

Networks.

C

C

What

we

are

discussing

here

is

the

proposal

that

maybe

there

is

another

case

here,

which

is

these

links,

and

these

nodes

may

change

in

predictable

or

scheduleable

ways,

so

you

can

know,

with

a

certain

degree

of

confidence

in

advance

of

a

break

or

a

restoration

in

link

adjacency

or

whatever

that

it's

going

to

happen.

Therefore,

as

a

routing

protocol

implementation

or

a

protocol

design,

you

don't

have

to

react

to

it

happening.

Oh

my

God,

my

Link's

just

gone.

C

You

can

say,

oh

at

12

pm

on

Wednesday

that

Link's

going

so

I

can

pre-compute

an

alternative

or

I

can

keep

a

backup,

topology

ready

to

apply

or

I

can

do

smart

things

and

I'm

not

entirely

sure.

Personally.

What

all

of

those

smart

things

are,

but

I

would

see

that

as

an

interesting

area

to

investigate

so

that

when

that

scheduled

event

occurs,

you

are

ready

to

do

it

and

you

can

move

swiftly

on

without

losing

end-to-end

connectivity.

Your

traffic

can

flow

less

disruption,

Etc.

So

next

slide.

Please.

C

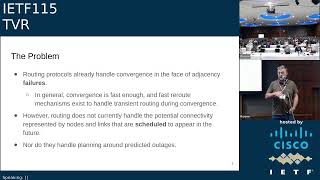

So

I'll

give

you

a

second

just

to

read

through

this

a

little

bit,

but

the

obvious

answer

to

that

statement

is

people

come

back

and

say:

well,

actually,

routing

protocols

already

handle

convergence

when

we

lose

links

when

nodes

fail

and

in

general,

that

convergence

is

fast

enough

and

fast

re-route

mechanisms

exist

and

they're.

Good

we've

worked

on

this

for

donkey's

years

within

the

IDF,

we've

got

a

good

Suite

of

protocols.

C

That

will

pretty

much

do

this,

but,

as

I've

said

before,

rooting

does

not

currently

handle

the

potential

connectivity

represented

by

root

nodes

and

links

that

are

scheduled

to

turn

up

in

the

future

or

are

scheduled

to

disappear.

So

you

know

it's

going

to

happen.

It

just

hasn't

happened

yet

and

I

personally

see

that

as

a

critical

difference

so

and

that

third

point

is

basically

an

extension

of

that

which

is

not

only

do

we

we

don't

handle

the

fact

that

we

know

a

node

is

going

to

appear

or

links

are

going

to

become

available.

C

C

C

How,

if

we

can

say

it's

coming,

can

we

also

include

how

long

is

it

going

to

be

up

for

and

equally

the

converse?

So

can

you

say

at

this

point

in

time

something

is

going

to

happen

either

an

availability

is

going

to

arrive

or

a

link

is

going

to

disappear.

So

therefore,

service

will

not

be

available

across

that

adjacency.

How

long

is

it

going

to

be

for,

and

will

this

repeat,

will

this

recur?

C

How

long

does

this

whole

description

of

forthcoming

events

going

to

be

valid,

for

so

we're

sort

of

starting

to

talk

about

possible

to

describe

a

schedule,

and

that's

the

word

I'm

using

at

the

moment,

I'm

not

trying

to

impose

any

words

onto

any

any

future

solution

to

this

problem.

But

can

we

talk

about

schedules,

forthcoming

events

and

is

that

of

use

to

routing

protocols?

Do

we

see

advantage

of

doing

that?

C

The

follow-on

question

for

that

is

if

we

see

available

and

unavailable

as

one

of

the

things

you

would

describe

in

that

schedule,

could

we

say

well,

availability

could

just

be

seen

as

a

Boolean

metric

up

down.

Could

we

say

change

link

costs?

You

know

whatever

the

waiting

you're

using

to

build

your

your

shortest

path

across

your

available

graph.

C

C

Next

slide,

please,

okay,

so

this

is

the

full

text

and

I'm

not

going

to

go

through

it

again.

I've

broken

out

the

key

points

in

the

previous

three

slides,

but

that's

there

so

please

grab

it

read

it

through.

My

intention

is

to

work

with

Adrian

to

reintegrate

this

text

into

the

existing

problem

statement

and

to

update

it.

E

E

You

know

when

I

went

through

school

and

learned

about

rooting

it

was

presented.

Like

you

know:

here's

link

State,

here's

whatever

distance,

Vector

Etc,

but

there's

another

whole

world

that

looks

at

the

same

problems,

which

was

the

operations

research

community

and

their

research

and

its

optimization

problems

and

they're

they're

duels

they're

really

the

same

kind

of

problems

in

many

cases,

and

so,

if

you,

the

the

other

kinds

of

interesting

work

that

relate

to

this,

that

probably

is

not

an

ietf

scope,

but

just

to

give

you

some

thinking

is

like

Dynamic

transshipment

problem.

E

E

C

Two

quick

answers:

first

off

I

think

the

the

presence

of

existing

research

in

this

area

is

a

really

good

thing,

because

I

always

worry

when

I

think

of

something-

and

everyone

says,

that's

unique,

no

one's

ever

thought

of

that.

It

probably

means

it's

really

dumb.

So

the

fact

that

other

people

have

been

spending

eight

years

decades

researching

this

stuff

I

think

that's

really

useful

and

second

of

all,

I'm

gonna

come

back

to

a

bit

of

the

technology

piece

and

look

at

some

of

the

Gap

analysis

stuff

in

a

later

presentation.

C

It's

we've

scheduled

it

in

Fairly,

bite-sized

chunks

and

you're

right,

there's

what

we

do

in

the

internet

area,

particularly

in

pretty

stable

networks

that

we're

all

pretty

familiar

with.

There's

the

whole

Mani

piece.

I

want

to

talk

about

where

there's

a

different

approach

and

you're

right.

The

the

kind

of

generic

traveling

salesman

problems,

the

resource,

scheduling

across

routes

that

are

not

traffic

based,

there's

a

whole

wealth

of

stuff

there

and

having

some

kind

of

timetable,

I

mean

think

about

railway

systems.

C

B

A

F

So

this

is

Alex

Clem

futureway

I

have

one

question

to

the

problem

statement

so

I

understand

the

basic

premise

of

this,

but

I

think

the

second

part

is

missing,

namely

what

you're

actually

planning

to

do

with

this

information?

So

assuming

that

you

had

this

solved,

how

will

you

leverage

it?

How

will

this

be

better?

What

problems

do

you

solve

better

by

knowing

the

schedule

Advance

versus

having

to

reconverge

or

or

what

have

you

so.

G

F

C

Fair

criticism

you're

right

that

first

off

the

problem

statement

can

be

much

better,

but

the

quick

answer

is

I

think

there

is

an

opportunity.

If

we

know

something

is

going

to

change

in

advance,

then

we

don't

waste

time

and

possibly

precious

resources

trying

to

work

out

why

it

failed

trying

to

bring

it

back,

because

you

know

it

has

gone,

no

need

to

try

and

restore

that

peer

link.

No

need!

So

that's

one

opportunity

to

not

waste

scarce

resources

and

the

other

opportunity

is.

If

you

know

something

is

going

to

happen

in

advance.

C

You

can

do

that

calculation

as

a

low

priority,

because

you

know

how

long

you've

got

to

do

it.

If,

particularly

when

we

look

at

iot

anything

in

space,

you

know

General

resource

constrained

things

anything

we

can

do

to

save

on

conservation.

So

if

we

know

something

is

going

to

change,

we

can

do

the

calculation

now,

rather

than

have

to

rush

a

reaction

to

something

happening.

We

can

be

sensible

about

the

resources

we

allot

to

make

making

that

change,

because

we

knew

it

was

about

to

happen.

B

A

H

H

C

Thanks

Tony

I'll,

try

and

answer

some

of

those

quite

quickly.

So

I'll

start

with

the

second

point,

which

is:

if

a

schedule

says

a

link

is

going

to

be

up.

Do

we

always

believe

it

I

think

would

be

a

mistake.

I

think

experience

tells

us

that

you

always

have

to

deal

with

exceptions.

You

always

have

to

deal

with

unpredictable

failures.

We

do

that

with

all

the

routing

protocols

from

across

the

entire

spectrum

of

dynamic

to

to

very

stable

environments,

so

I'm

not

suggesting

that

you

that

you

don't

expect

failures.

C

If

you

have

a

schedule,

I

think

that's

naive,

the

dynamic

question.

So

that's

a

scoping

question.

My

gut

tells

me

you

try

and

start

with

the

simple

cases

and

then

expand

out

to

the

more

complex

ones.

Whilst

when

you

have

a

better

handle

about

your

approaches,

but

that

doesn't

mean

you

don't

discount

more

complex

cases

while

you're

looking

at

your

simple

solution.

That

is

a

very

politicians

answer

to

that

question.

Yeah.

A

I

think

that

you

might

have

missed

each

other

because

you

have

on

your

slide,

I

think

the

answer

to

his

question,

which

is

dynamic,

topology,

is

in

scope

and

that's

represented

by

that

T2

and

T4

is

at

T2.

You

had

a

break

along

that

path

and

then

at

T4

it

comes

up

to

a

different

technology,

goes

somewhere

else.

So

and

Tony

you

can

jump

back

in

and

answer

if

I

get

that

wrong,

but

I

think

that's

what

you

meant

by

Dynamic

topology.

So

there's

a

chain.

Not

only

is

there

a

change

in

link

status?

C

Yeah,

no!

No!

If,

if

that

is

our

definition

of

dynamic,

then

yes,

absolutely

I

come

from

a

Manet

background,

where

Dynamic

can

mean

almost

chaotic

and

those

edge

cases,

I

would

suggest

still

remain

out

of

Scope

when

everything

is

moving

at

such

speed

or

your

schedule

is

talking

about

nanoseconds

and

the

predictability

of

this

stuff

becomes

very

low

probability.

I

think

we

disappear

into

a

corner

case.

We

shouldn't

go

in,

but

yeah.

I

J

Thank

you,

yeah

I

just

wanted

to

make

a

comment

from

a

really

a

comment

from

a

colleague

of

mine,

which

I

think

echoes

a

comment

that

was

made

at

the

mic

earlier

and

also

by

you

Rick

that

it's

like

a

bus,

scheduling

or

train

scheduling,

or

you

know,

using

a

Maps

app.

It's

related

to

driving

directions,

but

it

you

know,

but

it's

also

sufficiently

different,

that

it

warrants

a

special

look

and

so

for

the

purposes

of

like

buff

working

group

formation.

A

G

G

Sorry

R3

knows

as

much

about

the

topology

as

R2

does,

even

though

it's

not

connected

at

the

moment

to

the

main

site,

and

so

it

with

current.

You

know,

know

protocols

and

so

on.

It's

frustrating

that

you

know

to

take

a

vote

of

a

few

seconds,

for

you

know

R2

to

come

up.

The

protocols

to

come

up

Etc,

so

this

you

know

sort

of

chimes

in

you

know

very

well

with

that

scenario.

In

fact,

thank.

C

You

so

that's

that

I

think

is

yet

another

example

of

of

reducing

the

the

loss

of

of

data

transmission

capability.

When

something

has

happened,

if

you

can

know

it's

happened

in

advance,

then

you

can

say

well

as

soon

as

that

goes.

I

know

what

I

it

was

expected.

That's

due

to

R3

r3's

warm

standby

and

ready

for

it

rather

than

coming

from

cold.

C

K

L

Hi

Andrew

from

liquid,

intelligent

Technologies,

so

I

live

in

a

part

of

the

world

where

power

is

not

always

the

most

stable.

Luckily,

in

Kenya

it's

more

stable

than

my

colleague

from

South

Africa

I

think

he

brought

the

parachute

load

shedding

with

him

with

it.

When

we

arrived,

but

here's

the

thing

yes

I

can

say

the

thing's

going

to

go

down.

We've

got

the

fast

reroute

Etc,

however,

if

I

get

an

outage

and

I

know

that

this

is

power

or

has

a

good

probability

of

being

power

based

on

the

schedule

and

I'm.

L

Just

interestingly

enough

was

looking

at

the

load

shedding

for

my

parents

house

and

they

three

to

five

thirteen

seven

o'clock

to

nine

thirty

blah

blah

blah.

How

I'm

going

to

react

to

that

based

on?

Is

this

power

or

something

much

more

temporary?

How

long

that

power

outage

could

be,

could

potentially

be

very

different

and

if,

for

example,

I've

got

someone

at

the

knock

in

India

or

in

the

UK

picking

this

up

and

looking

well,

it's

just

another

outage

I'll

quickly

balance

this

here,

that's

different

to

saying:

I

know

this

is

power.

L

My

schedule

says

it's

going

to

be

out

for

eight

hours.

It's

going

to

hit

a

peak

time

over

here.

I

may

want

to

rebalance

this

traffic

in

a

very,

very

different

way,

and

that

makes

this

really

important

that

I

do

have

a

way

in

the

network

to

pick

these

things

up,

Etc

and

then

make

decisions

on

that

that

aren't

necessarily

related

to

some

guy's

impression

in

India

who

just

saw

that

the

router

went

down

you

know.

So

there

are

a

lot

of

possibilities

here

to

get

pretty

interesting

with

how

you

do

this.

L

The

other

thing

is:

if

I've

got

algorithms

that

are

learning

how

to

balance

traffic

when

something

is

down,

I

can

tell

that

algorithm.

This

is

because

of

power.

Outage

learn

something

different

versus

this

thing

has

just

rebooted

itself

and

may

come

back

at

any

instance.

So

there

are

a

lot

of

possibilities.

I

see

here

and

yeah.

I

am

extremely

interested

in

this

thanks.

C

Andrew

yeah

I

think

when

it

comes

to

the

what's

in

scope

and

what's

out

of

scope.

If

people

do

think

this

is

a

problem,

we

need

to

balance

I

personally,

don't

think

we

should

get

too

caught

up

in.

How

do

we

create

schedules?

I?

Think

that's

kind

of

beyond

the

scope

of

what's

achievable

in

a

in

a

reasonable

working

group

schedule.

M

The

cross

and

Huawei

I

asked

the

question

on

the

on

the

on

the

chat

and

I

I

thought

I

might

relay

this

here.

It's

called

time

variant,

routing,

but

but

I

feel

there

are

different

variances.

We

we

may

want

to

consider

it

came

out

already

before

it

may

well

be

energy.

It

may

well

be

locality.

You

know,

there's

a

fine

time

variant,

the

the

focus

on

the

schedule

very

limiting.

If

you

will-

and

it

turns

you

know-

link-

is

up

and

down

schedules.

M

C

Take

your

point:

I'm

really

trying

to

stop

boiling

oceans

here

and

I.

Do

wonder

that

I

don't

want

to

disappear

down

to

a

generic

expression.

Evaluation

condition

causes

effect.

That's

that's!

That's

programming

in

the

network,

I

think

that's

still

very

much.

The

work

of

the

irtf

I

think

if

we

say

time

things

change

at

a

point

in

time.

Can

we

prepare

ourselves

in

advance

that

reduces

our

scope

to

something

achievable,

which

will

have

noticeable

benefit?

C

C

Yeah

yeah,

so

a

scheduled

change

in

energy

consumption.

I.

Think

we've

got

use

cases

talking

about.

In

fact,

I

know:

we've

got

use

cases

coming

talk

about

that,

it's

not

just

on

and

off

it

could

be.

We

know

that

I,

don't

I,

don't

know

the

the

atmospherics

are

such

that

our

satellite

links

are

really

good

until

there's

a

rainstorm

coming

in

at

3

P.M

this

afternoon

you.

M

You

can

do

a

lot

with

the

schedule.

I

do

agree,

I

mean

if

you,

if

you

provide

an

API

in

the

scheduled

building,

that's

how

we

solve

some

of

the

problems

in

the

past,

but

obviously

the

conditions

for

the

schedule

can

be

can

be

exactly

along

the

dimension

that

I

just

mentioned.

It

could

be

driven

by

Energy

prices.

In

order

to

do

carbon

aware

routing,

it

could

be

based

on

expected

movements,

I

think

on

the

mailing

list.

I

sent

the

paper

around

we

published

20

odd

years

ago

on

using

contextual

information.

M

C

Agree

and

I

think

that

the

carve

Point

here

should

be.

Let's

say

we

have

a

schedule,

let's

see

if

we

can

Define

what

that

schedule

should

have

inside

it,

and

let's

discuss

the

kind

and

I'm

getting

beyond

the

problem

statement

into

what

I

think

the

scoping

is

so

actually

I'm

going

to

stop.

Can

we

revisit

this

in

my

next

slide

or

my

later

slide

deck.

N

So

when

a

a

node

or

a

link

changes

the

network

transients

and

during

that

transient,

a

lot

of

innocent

traffic

is

disrupted

yeah

because

the

formation

of

micro

Loops,

so

we're

going

to

do

this,

can

we

include

in

the

base

technology,

micro,

loop

prevention

in

some

way

or

other

to

try

and

minimize

this?

This

very

disruptive

effect

the

Technologies

for

knowing

are

for

doing.

It

are

well

known.

C

Absolutely

I've

seen

no

reason

why

that

shouldn't

be

considered.

It's

that's

just

the

sort

of

thing

that

we

can

attempt

to

avoid.

If

we

knew

it

was

coming

up,

we

might

be

able

to

use

different

techniques

to

say

when

we

have

to

make

this

change

because

it

is

predicted,

we

can

avoid

that

sort

of

micro,

loop

formation

or

whatever,

because

we

can

all

look

at

we

being

a

set

of

of

routers

or

whatever.

We

can

pre-agree

a

an

alternate

topology

to

have

prepped

without

those

micro

loops,

and

we

can

pre-agree

to.

N

Make

no?

No

it's

not

it's

not

quite

as

simple

as

that

right

so

because

you

have

to

deal

with

the

lack

of

synchronicity

in

the

changing

in

the

fibs,

which

can

take

quite

a

long

time.

So

actually

it's

not

an

instant

event,

changing

the

topology,

but

actually

a

topology

change

process

that

you

need

to

do

that,

takes

you

from

the

old

topology

to

the

new

topology

via

a

number

of

short-term

virtual

topologies,

usually.

C

And

that

sounds

absolutely

like

something

that

should

be

addressed

as

part

of

a

TBR

working

group.

I.

Think

that

would

be

absolutely

a

charter

item

to

say:

can

we

in

general

terms,

describe

how

one

makes

this

change

such

that

we

avoid

these

things

and

the

reason

I

say

general

terms

is

I?

Don't

think

it's

right

that

a

potential

TBR

working

group

should

tell

link

state

routing

what

to

change

in

ospf.

We

should

go

to

ospf

and

say

these

are

mechanisms

that

we

talk

about

go.

Can

you

integrate

it.

N

A

O

Okay,

so,

when,

when

Rick

and

I

started

talking

about

what

would

time

variant

routing

look

like?

Obviously

a

problem

statement

was

good,

but

everyone

has

in

their

in

their

mind

a

mental

model

or

a

problem

that

they've

encountered

and

that

they're

trying

to

solve

and

trying

to

bring

that

down

into

a

series

of

use.

Cases

was

an

interesting

Endeavor

because

we

could

have

two

of

them

or

we

could

have

two

thousand

of

them,

and

how

do

we

scope

and

understand

what

differences

are

important

to

talk

about?

O

So

what

I

want

to

talk

about

for

the

next

10

or

15

minutes,

or

so

is

an

initial

take

of

how

we

carve

out

use

cases

to

identify

what

we

think

are

the

unique

differences

that

and

the

expected

benefits

in

those

use

cases

of

something

like

TBR

time

variant.

Information

next

slide,

so

to

do

that,

I

wanted

to

to

just

go

over

some

background.

O

The

approach

that

I

took

in

laying

some

of

these

out,

based

on

the

conversations

and

the

working

on

the

mailing

list,

talk

a

little

bit

about

some

formatting

and

then

go

over

what

I

call

sort

of

the

three

use

cases

which

are

kind

of

categories

and

I'll

name

them

here

and

we'll

go

into

them

in

detail

in

just

a

moment.

The

first

is

cases

where

nodes

change

their

functionality

to

preserve

local

resources,

local

resources,

like

resources,

local

to

that

node.

O

O

Power

is

really

expensive

right

now.

Data

is

really

expensive

right

now,

it's

not

a

matter

of

node

survival,

but

it's

a

matter

of

reacting

and

and

working

better

in

an

external

environment

and

then

the

last

one

which

is,

which

is

one

that

is

probably

very

familiar.

When

we

talk

about

things

that

change

over

time

is

what

happens

when

nodes

in

our

Network

are

moving

when

they're

moving

far

away

from

each

other

or

when

they're

moving

through

a

difficult

environment

or

when

one

just

turns

a

little

bit

and

can't

talk

to

the

other.

O

So

next

slide.

So

before

we

go

into

those

three,

how

did

we

get

to

those

three

and

how

are

we

going

to

talk

about

them?

So

how

did

we

get

to

them?

Well,

obviously,

we

wanted

to

constrain

anything.

We

talked

about

to

the

problem

statement

that

Rick

had

just

talked

through,

because

this

is

the

problem

that

we're

trying

to

solve.

O

But

then,

when

we

talk

about

use

cases,

it

is

categories

of

these

problems,

so

we

say

use

cases,

but

the

three

things

that

I

just

said

I

believe

are

categories

of

problems

or

characteristics

of

problems,

and

the

idea

here

is

that

again,

I

don't

want

to

worry

too

much

about

two

or

three

or

four

instances

of

the

same

kind

of

problem

and

calling

them

different.

Another

way

of

saying

it

is

what

we

think

here

are

from

a

use

case

perspective.

O

These

three

are

such

that

a

solution

for

one

Exemplar

of

that

use

case

could

be

a

solution

for

any

other

Exemplar

within

that

use

case.

So

the

format

for

this

is

we

want

to

Define

what

they

are.

We

want

to

list

out

what

the

assumptions

are

and

assumptions

here,

because

the

use

cases

are

again

a

little

bit.

Abstract

are

what

information

do

we

think

would

need

to

be

present

in

order

to

yield

deterministic

benefits

from

having

a

predictable

schedule,

and

then

the

next

part

is

okay.

O

Well,

assuming

that

we

have

data

that

we

need

to

key

off

of

then

what

are

those

benefits

and

when

we

list

out

those

benefits,

it's

not

a

and

then

there

will

be

a

list

typically

two

or

three

or

four

or

five.

It's

not

that

we

think

we

will

get

all

of

them

all

at

once,

all

the

time,

but

maybe

you

get

some

combination

of

them

and

in

different

cases,

and

then

last

I'll

end

each

use

case

with

a

quick

Exemplar

just

to

try

and

ground

the

thinking.

A

O

I

will

happily

defer

discussions

until

I'm,

not

on

stage

so

use

case

number

one

local

resource

preservation

I

mean

this

is

the

idea

where

we

have

nodes

that

just

operate

with

limited

resources,

either

because

they're

in

a

very

difficult

environment,

or

because

there

is

some

nature

of

the

node

itself,

where

it

is

meant

to

be

small,

it

is

meant

to

not

need

a

lot

of

power.

It's

meant

to

not

have

a

lot

of

storage.

O

O

So

if

we

assume

that

we

are

a

resource

constrained

node

and

if

we

assume

that

that

resource

management

is

important

and

in

this

category

of

use,

cases

is

sort

of

dominant

to

node

function,

then

what

we

would

say

is

it's

important

in

these

cases

to

know

that

our

resource

expenditures

are

knowable.

The

amount

of

resources

consumed

by

node

functions

can

be

known

in

advance

if

I'm

managing

my

node

functions

based

on

the

amount

of

battery

life

left

and

I,

know

how

to

transmit

a

data

volume.

O

O

O

You

can

get

better

thermal

savings,

you

can

get

better

storage

management

and

and

really

honestly,

just

the

data

delivery,

because

that's

what

most

of

this

is

about

continues

to

be

a

little

bit

better,

because

if

you

can

manage

your

resources

better,

if

you

can

stay

active

in

the

network

better,

if

you're

not

wasting

Power

by

having

a

radio

on

when

you

don't

think,

there's

a

likelihood

of

having

a

link.

For

example,

then

you

are

you're

able

to

eventually

get

more

data

through

your

system

next

slide.

O

Then

you

could

put

some

very

different

plots

together

for

nodes

that

maybe

are

distributed

in

a

particular

way,

and

you

could

see

how

that

would

change

underneath

of

that,

the

topology

of

the

network

at

different

times

time,

one

time

two

and

time

three.

So

this

is

something

that's

while

generic

I

think

we

can

all

come

up

with

or

understand

how

this

would

look

in

in

actual

deployments.

From

an

assumptions

perspective,

we

think

you

know

these

power.

O

All

right

use

case

two,

which

is

now

not

about

resource

management

and

preserving

node

function,

is

instead

about

adapting

to

external

conditions

and,

and

the

idea

behind

this

is

that

there

is

likely

some

cost.

Some

external

costs

associated

with

participating

in

a

network

and

a

common

example

of

that

is

use

of

infrastructure,

use

of

power,

infrastructure,

use

of

data

infrastructure,

nodes

that

are

Wireless.

They

will,

for

example,

use

cellular

infrastructure

I.

Think

all

of

us

understand

the

idea

of

one

Peak

and

off-peak

usage

times

and

what

happens

with

overage

rates.

O

If

you

go

over

your

one

Peak

minutes,

you

know

it

came

out

in

the

in

the

news.

What

three

or

four

days

ago

that

starlink

has

come

back

and

said

you

know.

If

you

go

over

a

particular

cap,

then

that

will

be

problematic

and

you

will

have

an

overage.

However,

our

off-peak

time

is,

you

know:

11

P.M

to

7,

A.M,

I,

guess

your

time,

and

so

that

means

great.

If

I

have

you

know

low

priority

data,

then

that's

when

I

should

choose

to

send

it.

O

Not

all

the

costs

are

strictly

financial,

and

the

benefits

here

may

be

that

we

choose

to

rank

or

associate

cost

functions

with

the

use

of

links

in

a

different

way,

with

the

extra

information

next

slide

so

again

assumptions

and

benefits.

We

would

assume

that

if

this

were

something

that

would

benefit

from

TBR

schedule

like

information,

we

would

say

we

can

measure

those

external

costs.

We

understand

our

data

rate

usage

or

our

energy

usage.

O

The

the

changes

in

those

costs

are

either

predictable

or

scheduled

or

scheduled

because

they

were

predicted

and

that

the

cost

differences

persist

for

long

enough

and

optimizing

them

has

a

savings

that

is

big

enough

to

justify

the

extra

computation

or

work

to

figure

out

and

make

use

of

this

additional

knowledge.

But

of

course,

I.

Think

if

you

do

that,

then

you

can

come

back

with

some

benefits

and

say

well

wait

a

minute

we

can

filter

links.

That

is

a

very.

O

This

link

was

very

low

cost

five

minutes

ago,

but

now

we're

in

on

peak

hours

where

we're

about

to

go

into

overage,

and

now

this

link

might

have

a

different

higher

cost,

either

Financial

cost

or

logical

cost,

and

maybe

we

want

to

think

about

it

differently.

Because

of

that

we

can

also

come

back

and

say

in

certain

cases.

Maybe

we

want

to

plan

and

accumulate

Data

before

we

use

a

link

based

on

its

cost.

O

O

Maybe

we

wait

on

that

for

a

variety

of

reasons,

and

again,

all

of

this

comes

back

to

trying

to

get

better

data

delivery

and

make

better

data

delivery

decisions

next

slide

again

for

this

one,

a

contrived

node

one

node,

two

node

three,

where

we

come

back

and

say

if

we

were

to

Simply

on

the

vertical

say:

cost

High,

Low,

Time,

T1,

T2

T3

across

the

three

node

Network.

Those

can

change

over

time.

In

this

case,

maybe

it's

spatially

distributed

nodes

with

the

cellular

backhaul

and

we

look

at

how

the

topology

does

not

change.

O

The

links

are

still

there,

but

we

may

come

back

with

things

like

well:

Node

1

to

node

two

can

be

either

low

cost

or

high

cost

at

different

times,

and

even

perhaps

in

in

this

third

case

at

the

bottom.

You

may

wind

up

saying

if

I

want

it

to

be

low

from

Node

1

to

node,

2

and

low

from

node

2

to

node

3,

and

it's

something

that's

reasonable

in

your

network.

You

may

actually

hold

on

to

it

for

a

little

while

waiting

for

that

node

two

to

node

3

cost

to

be

lower

next

slide.

O

Then

the

last

slide

is

sort

of

these

mobile

networks,

and

obviously

Leo

satcom

is

is

an

example

of

that.

But

there

are

others,

and-

and

there

are

really

three

cases

of

Mobility

but

again

under

the

idea

of

a

solution

for

one

might

be

a

solution

for

many,

so

we

don't

necessarily

perhaps

see

them

as

three

distinct

use.

O

Cases

are

cases

where

I

have

two

nodes

have

moved

far

enough

apart

that

they

lose

the

ability

to

talk

to

each

other

or

two

nodes

have

not

moved

too

far

apart,

but

they

together

are

moving

through

an

environment

or

an

environment

is

moving

through

them

such

that

they

can't

talk

to

each

other,

or

one

of

them

has

pointed

their

antenna

somewhere

else,

and

they

can

no

longer

talk

to

each

other.

All

of

those

are

Mobility

based

or

motion

based

losses

to

a

link

next

slide

so

again

from

an

assumptions

and

benefits

point

of

view.

O

O

A

A

A

A

P

Cool

so

while,

while

we

figure

out

the

audio

and

some

slides

my

name's

Dan

with

Kevin

at

Airbus

I'm

at

Lancaster

University,

we

are

introducing

a

use

case

next

slide.

Please

there's

already

been

a

couple

of

mentions

to

sort

of

emerging

satellite

Club

constellations.

You

know

the

space-based

use

case.

It

sounds

extremely

futuristic,

but

of

course

these

Networks

are

being

put

in

space.

There

are

several

large

constellations

with

tens,

hundreds,

thousands

of

nodes

whizzing

around

the

planet.

P

At

the

moment

they

are

extremely

small,

they're

increasing

in

size

and

number.

They

have

multiple

link

types

next

slide,

please

that

we

can

choose

in

order

to

set

up

the

physical

connectivity.

So

in

some

of

the

constellations

there

are

high

bandwidth

interfaces

on

the

order

of

gigabits

per

second

using

free

space,

Optics

operating

actually

at

speeds

that

are

sufficiently

are

significantly

faster

than

traditional

sort

of

earth-based

fiber-based

systems.

P

There

are

also

radio

interfaces

and

those

radio,

those

RF

interfaces

are

also

evolving

over

time

up

to

sort

of

gigabits

and

tens

of

gigabits

per

second.

In

terms

of

building

the

the

space

topology,

we

can

continue

through

various

space

segments

using

interspace

links

isls,

but

we

also

have

the

opportunity,

with

some

constellations

to

Bounce

Down,

to

the

planet

using

base

stations

and

in

sort

of

several

major

countries.

There

are

often

multiple

base

stations

that

can

be

used

next

slide,

please.

P

We

know

that

these

links

offer

different

characteristics,

so

there

are

bandwidths

latencies,

potentially

Jitter

as

well,

if

you're,

a

starlink

user

and

and

I

am

as

well

as

a

tester

you'll

notice

that

we

have

a

lot

of

bandwidth

and

availability.

But

actually

there

are

cue

issues

and

latency,

and

unpredictability

for

jitta

can

cause

a

major

problem

for

certain

low

latency

applications.

Next

slide,

please.

P

So

we

need

to

figure

out

in

advance

how

we're

going

to

build

our

physical

topology,

given

that

there

are

multiple

links,

bandwidth

constraints,

potential,

cue

issues

and

also

the

amount

of

power

available

on

some

of

these

satellite

nodes

as

well

as,

of

course,

the

bandwidth

that's

going

to

be

potentially

available

at

a

given

point

in

time.

Now.

This

is

a

a

network

path,

computation

problem

that

can

be

solved

with

a

combination

of

traditional

techniques.

We've

heard

earlier

sort

of

some

linear

Solutions,

like

dijkstra.

P

You

could

of

course

use

a

control

center

with

humans

sort

of

biological

path,

computation

to

do

some

offline

planning

to

try

to

optimize

your

network.

What

what

we're

looking

for

next

slide?

Please

is

potentially

a

method,

a

process

of

building

a

network

topology

virtually

in

advance

that

can

then

be

overlaid.

P

In

your

igp,

but

in

space,

sort

of

a

real-time

change

might

be

over

several

hours

as

you're

recharging,

a

particular

node

in

order

to

sort

of

gain

power

and

then

be

able

to

light

some

new

interface,

there

will

also

be

connectivity

potentially

coming

Online

predicted,

though

that

is

not

space-based,

but

is

high

altitude.

So

there

are

sort

of

UA

UAV

unmanned

aerial

vehicles

that

potentially

can

augment

your

network

next

slide.

Please.

P

So

we

will

need

to

build

this

kind

of

network

graph,

including

some

sort

of

online

capability,

with

the

ability

to

consider

offline,

and

maybe

the

definition

of

online

and

offline

needs

to

be

clear

here

for

people.

Essentially

it's

some

kind

of

entity

that

is

talking

directly

to

the

network,

I,

suppose

that's

what

I

might

consider

online

offline

would

be

to

pull

out

the

traffic

engineering

database

run.

P

Some

evaluations

create

a

candidate

topology

that

that

might

be

done

in

an

offline

instance,

and

then

I

want

to

apply

that

at

some

point

in

the

future.

But

something

has

to

kind

of

merge

these

two

databases

at

some

point

and

if

we

had

Kevin

online

he

he

would

probably

talk

about

conops,

which

is

a

form

of

sort

of

operational

management.

That's

done

by

a

team

of

people,

augmented

by

heuristics

or

sort

of

non-heuristic

technology,

so

I

suppose

for

some

of

these

use

cases

the

time

Horizon

is

very

different.

P

P

So

what

would

we

like

from

TBR?

And,

of

course

this

is

sort

of

walking

into

a

toy

shop

and

saying

well,

Dan,

you

know,

choose

anything

that

you

want

I'm

going

to

try

and

grab

everything.

I

can

knowing

that

there

is

a

kind

of

reality

question

that

we

need

to

apply

here

and

wanting

to

kind

of

minimize,

potentially

the

amount

of

state

that

gets

created

and

potentially

injected

in

a

in

a

routing

protocol.

That's

going

to

be

used

in

an

online

function,

so

bear

with

me.

P

We've

just

kind

of

Kevin

and

I

have

put

everything

potentially

that

we

would

like

here,

but

there

has

to

be

a

method

of

exporting

or

retrieving

information

from

the

network,

discovering

capability

understanding

what

metrics

we

will

need

to

consider

and

it's

all

of

the

standard

sort

of

routing

metrics

that

we

might

want

in

terms

of

latency

bandwidth,

Etc

costs,

but

cost

might

also

have

a

physical

element

to

it.

In

this

case,

so

there'll

be

a

network

cost

and

maybe

a

fiscal

cost

of

powering

a

unit

versus

choosing

a

particular

link.

P

P

A

Would

prefer

to

save

them,

but

if

you

have

a

clarifying

question,

please

come

on

up

or

come

to

the

queue

and

Eve

if

you

get

ready

for

the

next

one.

Thank

you.

I

I

think

we're

going

to

have

some

good

discussion

on

the

gaps

and

mechanisms,

so

I

want

to

make

sure

to

reserve

some

time

for

that

all

right.

Eve,

okay,.

Q

You

know,

code

red

moments,

you

know

we're

really

at

a

Tipping

Point,

and

so

what

can

all

of

us

be

doing

to

be

more

considerate

about

climate

change

and

greenhouse

gas

emissions?

And

if

you

look

at

the

information

communication,

Technologies

contribution

to

those

emissions,

it's

fairly

sizable

and

growing,

and

some

estimates

are

that

at

least

the

electricity

usage

by

ICT

is

between

will

be

between

two

and

it's

about

two

percent

now,

but

by

2030,

will

be

about

six

percent

of

all

Global

electricity,

which

in

turn

has

a