►

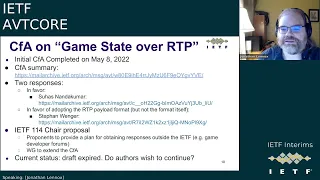

From YouTube: IETF-AVTCORE-20221004-1500

Description

AVTCORE meeting session at IETF

2022/10/04 1500

https://datatracker.ietf.org/meeting//proceedings/

A

Yeah, why don't you do it? Because there's a single unified slide deck, it's probably easier that way. Yeah, here are the virtual meeting tips. Hopefully Meetecho has not changed since this slide was made, but I think it's all accurate so far; hopefully you're all familiar with this by now. Next slide. The Note Well applies here.

A

Sorry. So yeah, the Note Well applies here, so you've agreed to follow IETF processes and policies in regard to IPR, anti-harassment, and general good behavior. Next slide, please: Note Really Well. Remember to, you know, behave professionally and treat everyone with dignity, decency, and respect. In general, I think we haven't had a problem with this, but if you have one, please contact the chairs or the IETF Ombudsteam about this meeting.

A

Agenda: we've got the SCIP payload format. Bernard will talk about his RTP over QUIC sandbox. Who's presenting for RTP over QUIC, is that Jörg? I guess we'll find out when we get there. So we'll have some more talk about RTP over QUIC, the spec, and the Green Metadata proposal. So it should probably be a relatively quick meeting, but if anybody has any other business, they can also raise it if they like.

A

Excellent. Anything to say? Okay, there's a call for adoption on V3C until October 31st. If you have any opinions on whether we should adopt it, particularly if you're in favor of it and would have interest in doing the work, reviewing the documents, or just, you know, implementing it, or you think it should be available for implementers, please comment so that we know there's interest. Again, until October 31st. Next, SCIP over RTP.

F

I'm here, thanks, John. All right, next slide. So revision two was submitted on August 2nd, shortly after our last meeting, incorporating comments from the Gen-ART and ART-ART reviews. The SECDIR comments came in pretty late; they actually came in on September 7th. Again, the gist of them was more comments regarding, you know, the opaque standards and such.

F

The other major comment in the SECDIR review was about the Security Considerations section. The comment was that it may be adequate; not sure what that means. As for whether we have to change it: basically, the text that's in the Security Considerations section is boilerplate out of RFC 8088. Again, it's our opinion that SCIP doesn't add anything, make it any more or less secure, or add any additional considerations at this point.

F

So again, we didn't feel that there was any need to change that text as it's written. We're certainly open to comments or questions from the group if they think that we do need to change that text, but from what I understand it's pretty much boilerplate from most RTP payload format drafts at this point.

F

Next slide. I finally figured this out. I've been trying to do the XML version of the submission for the last couple of revisions and have been unsuccessful, so I reached out to the IETF tools group, and they were able to figure out what the bugs were. We basically started with the Microsoft Word template; that's what we were using. But for whatever reason, when you go to upload it and try to convert it to XML, the tool would always come back with errors. Again, nothing really... No, there were no real...

B

You end up in this kind of limbo state, and basically the ADs increasingly rely upon the reviewers. So the idea of doing this early review was to not get stuck that way. The last step is just to confirm with them: hey, are you okay with this? And particularly to post it to the list, because then, when I do the write-up for the publication request, I can say that the following directorate reviewed this thing, the authors responded, and then the reviewer said it was okay. And I...

B

So why do this? I was just interested in exploring the behavior of RTP over QUIC transport. The goal here was just to visualize it, to get to see it, not necessarily to fix things, just to understand what might be an issue. Also, I wanted to try things: do experiments, maybe change a little code, run the experiments again, and see what the difference was. So I didn't want a particularly complex implementation to do that.

B

So what can you do with it? Well, I'll show you a little bit, but you can basically vary the encoding parameters: you can choose the codec, the bit rates, and the resolutions, and then you turn it on and see what happens. Basically, you can visually compare the local video, which comes right from your camera, with the remote video: we bounce the video off a server in the cloud, and then you can see the remote video and the difference between the two.

B

So you could measure the glass-to-glass latency and get a sense of how jittery the video is, whether there are video freezes, stuff like that. You can visually see what it looks like, and then after you press stop you get some diagnostics: it computes metrics, RTT stats for the frames, loss, reordering, stuff like that. It's all calculated at the application layer, because at the moment Chrome's WebTransport doesn't surface the QUIC stack metrics. There's also a graph of RTT versus frame length.
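The per-frame diagnostics mentioned here (frame RTT, loss, reordering) can all be derived at the application layer from what the receiver records. What follows is a minimal sketch. It assumes a hypothetical record of (sequence number, frame RTT in ms) for each received frame, in arrival order. The function and field names are illustrative and not taken from the sandbox code.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class FrameStats:
    min_rtt_ms: float
    avg_rtt_ms: float
    max_rtt_ms: float
    lost: int       # sequence numbers in the observed range that never arrived
    reordered: int  # frames that arrived after a frame with a higher sequence number


def frame_stats(received: list[tuple[int, float]]) -> FrameStats:
    """Summarize (sequence_number, frame_rtt_ms) records listed in arrival order."""
    if not received:
        raise ValueError("no frames received")
    seqs = [seq for seq, _ in received]
    rtts = [rtt for _, rtt in received]
    # Assumes frames are numbered contiguously between the lowest and highest seen.
    expected = max(seqs) - min(seqs) + 1
    lost = expected - len(set(seqs))
    reordered = 0
    highest = None
    for seq in seqs:
        if highest is not None and seq < highest:
            reordered += 1
        highest = seq if highest is None else max(highest, seq)
    return FrameStats(min(rtts), mean(rtts), max(rtts), lost, reordered)


# Frame 3 never arrives and frame 1 arrives after frame 2.
print(frame_stats([(0, 20.0), (2, 30.0), (1, 25.0), (4, 200.0)]))
```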

B

So it was built on next-generation web APIs, which are all fresh and new, and probably quite buggy. That includes WHATWG Streams, WebCodecs, WebTransport, and an API called Media Capture Transform. I implemented both the send and receive pipelines in a single worker; that might not have been a great idea, for reasons I'll describe. There are some new features that have just come in, called bring-your-own-buffer (BYOB) reads, that weren't in Chrome stable, so there's a separate version for Canary. This wasn't intended to be a complete implementation.

B

The idea was to let the experimenter vary the bit rate, look at what happens, and consider what's missing. It actually works surprisingly well, and that was interesting, because I expected it would hardly work at all without doing all this other stuff. But it does, which is kind of interesting.

B

So essentially you can see what it would look like without any transport, and then in number two I basically add the network transport to the sending and receiving pipelines. Obviously you need to serialize and deserialize as well. What's useful is that you can compare the behavior with transport to the behavior without it, which may help isolate some of the things you're seeing. As I mentioned, there are two versions, one for Chrome stable and another for Chrome Canary, and there's a GitHub repo.
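Adding transport to the pipelines means each encoded frame has to be serialized before sending and rebuilt on receipt. What follows is a minimal sketch. It assumes a hypothetical 14-byte header: a key-frame flag, a temporal layer id, a 64-bit timestamp in microseconds, and a 32-bit payload length. This is not the sandbox's actual wire format.

```python
import struct

# Hypothetical header, network byte order, no padding:
# key-frame flag (1 byte), temporal layer id (1 byte),
# timestamp in microseconds (8 bytes), payload length (4 bytes).
HEADER = struct.Struct("!BBQI")


def serialize(is_key: bool, layer: int, timestamp_us: int, payload: bytes) -> bytes:
    """Prefix an encoded frame with the hypothetical header."""
    return HEADER.pack(1 if is_key else 0, layer, timestamp_us, len(payload)) + payload


def deserialize(buf: bytes) -> tuple[bool, int, int, bytes]:
    """Rebuild (is_key, layer, timestamp_us, payload); reject truncated input."""
    if len(buf) < HEADER.size:
        raise ValueError("truncated header")
    kind, layer, timestamp_us, length = HEADER.unpack_from(buf)
    payload = buf[HEADER.size:HEADER.size + length]
    if len(payload) != length:
        raise ValueError("truncated frame")
    return kind == 1, layer, timestamp_us, payload
```

Carrying the temporal layer id in the header lets the receiver, or a forwarder, decide whether a late frame is safe to give up on without parsing the codec bitstream.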

B

Okay, so here are some of the things you can play with. You can select the average target bit rate, which I guess is supposed to be an average including the keyframes. In practice, the actual bandwidth consumption is typically lower, and I guess that depends on your keyframe interval, which is the number of frames between keyframes. The default is 300, so at 30 frames a second that would be a keyframe every 10 seconds. The codecs supported are VP8, VP9, H.264, and AV1.
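The keyframe arithmetic here, and why the average bit rate depends on the keyframe interval, can be worked through directly. The I-frame and P-frame sizes used below are made-up illustrative values, not measurements.

```python
def keyframe_period_s(interval_frames: int, fps: float) -> float:
    """Seconds between keyframes for a keyframe interval given in frames."""
    return interval_frames / fps


def average_bitrate_bps(i_bytes: int, p_bytes: int, interval_frames: int, fps: float) -> float:
    """Average bit rate over one GOP: one keyframe plus (interval - 1) delta frames."""
    gop_bytes = i_bytes + (interval_frames - 1) * p_bytes
    return gop_bytes * 8 / keyframe_period_s(interval_frames, fps)


print(keyframe_period_s(300, 30))                    # 10.0 seconds between keyframes
print(average_bitrate_bps(100_000, 1_000, 300, 30))  # 319200.0 bit/s with these made-up sizes
```

With the same sizes and a 30-frame interval, `average_bitrate_bps(100_000, 1_000, 30, 30)` gives 1,032,000 bit/s. This is why shrinking the GOP costs considerably more bandwidth.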

B

I did note some strange behavior with VP9; I think that's probably a bug in WebCodecs. If you select real-time, you get very large frame sizes even for P-frames, around 10 kilobytes, like keyframes, which doesn't make sense. If you select quality, that changes, so I think there's a bug there. AV1 is pretty solid on macOS, not so solid on Windows, so there may be some issues there.

B

Currently Chrome only supports H.265 decode, so you can't really use it in this application. You can choose whether you want hardware acceleration or software. It doesn't seem that hardware acceleration is very widely supported, so the default is no preference, which will probably end up being software most of the time. There's the latency goal, which can be quality; that produces smaller frame sizes but only takes marginally longer than real-time. I'll talk about that a little; the behavior there isn't quite what you'd expect.

You can actually support SVC temporal scalability. The default is three temporal layers, and that's useful for reasons I'll describe, because you can do partial reliability. Then you can choose your resolution: you can have a small resolution, or you can choose as high a resolution as your camera will support. Some of the newer cameras support full HD, and that is sometimes useful, particularly for looking at higher bit rates.

B

The video quality is highly dependent on the device and the camera. Good quality seems to be possible, particularly if you get up to about a megabit and you're using a desktop or a high-quality notebook with a good camera. I've been able to generate full HD video of a talking head with a target bit rate of one megabit, and it looks pretty good. The actual bit rate is more like 700 kilobits, something like that.

B

The other thing is that the combination of QUIC and temporal scalability gives you pretty good resilience properties. QUIC is good at retransmitting, at getting stuff to the other side, and if you do temporal scalability, a large proportion of all the frames you send are discardable. So if they take longer than you think, you can actually send a reset and it'll be fine: the decoder will keep on chugging. So that actually looks like a kind of nice combination for resilience. Overall, in the experiments I've done, I've seen very, very low loss. I've also been on pretty good networks, so that's part of it, but overall it seems to do a good job of delivering frames.
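Why temporal scalability makes so many frames discardable follows from the layer pattern. What follows is a minimal sketch. It assumes the common L1T3 structure, in which temporal layer ids repeat 0, 2, 1, 2 and each frame references only frames in lower layers (base-layer frames reference the previous base-layer frame). The encoder's actual reference structure may differ.

```python
L1T3_PATTERN = (0, 2, 1, 2)  # assumed temporal layer id of frame n is L1T3_PATTERN[n % 4]


def temporal_layer(frame_index: int) -> int:
    """Temporal layer id of a frame under the assumed L1T3 pattern."""
    return L1T3_PATTERN[frame_index % len(L1T3_PATTERN)]


def discardable_fraction(num_frames: int, max_kept_layer: int) -> float:
    """Fraction of frames that can be dropped while layers <= max_kept_layer stay decodable."""
    dropped = sum(1 for n in range(num_frames) if temporal_layer(n) > max_kept_layer)
    return dropped / num_frames


print(discardable_fraction(300, 1))  # 0.5: every layer-2 frame can be reset
print(discardable_fraction(300, 0))  # 0.75: keep only the base layer
```

Under these assumptions, half the frames (layer 2) can be reset without affecting any other frame. Three quarters can be dropped if only the base layer, at a quarter of the frame rate, has to survive.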

So, latency: this is where there were more issues. I observed, particularly with the higher resolutions, glass-to-glass latency considerably higher than the measured frame RTT; we'll get into that in a bit. The P-frames were typically small, and we'll talk about that, but we're talking about a few thousand bytes, so a couple of packets for the P-frames, and those come in at very low RTT.

B

They look like they're coming in, in many cases, clustered around RTTmin. But the I-frames are a lot larger; I've seen I-frames as large as 200 kilobytes, and they exhibit some issues. Often their frame RTTs are multiple times higher than those of the P-frames, although, interestingly, not always; we'll talk about that. The effect is most pronounced with large GOP sizes. As I said, I use the default of 300, and in that case you only get a few I-frames per experiment. I think I have an idea why this might be.

B

Also, you see the effect of this higher frame RTT and glass-to-glass latency even under conditions of low bandwidth utilization. I've been running these experiments on a gigabit network, only consuming 700 kilobits, with very, very low loss, and you still see this high glass-to-glass latency and some of the large frame latencies on the I-frames.

B

These are I-frames. There are two of them because I ran the experiment for roughly 12 seconds, so you had two keyframes. There you'll see frame RTT above and beyond the minimum round-trip transit time: it looks like about 200 milliseconds on one, and maybe 100-something on the other. So the question is: why are we seeing this?

B

What's going on here? I'm not sure I have the complete answer, but I'll give you what I think the answer might be. Basically, what's happening here, because the GOP size is so large, is that the P-frames are all pretty small, a couple of packets, so they don't really push the congestion window up. Essentially we're in an application-limited situation, and as a result you don't really get good bandwidth estimates. So what happens?

B

You get multiple RTTs. That's why you see the frame RTT being multiples of the RTTmin. The other thing is that because you don't have a particularly good bandwidth estimate, the application isn't getting particularly good feedback, so it can't adjust the I-frame size or quality.

B

Also, after you send maybe the second I-frame, subsequent I-frames show a lower frame RTT, so the congestion window maybe got pushed up a little bit. It finally grew because you actually tried to fill the pipe. And if your GOP sizes are smaller, you actually get a tighter RTT scatter, which is kind of interesting. That's not necessarily a great idea, because it uses a lot more bandwidth, but it's an experiment that shows this might be the cause of what we're seeing here.
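The application-limited hypothesis can be illustrated with a toy model. It makes three simplifying assumptions. First, a large frame starts with whatever congestion window the small P-frames left behind, since RFC 9002 (section 7.8) says a sender should not grow an underutilized window. Second, the window then doubles every round trip, as in slow start. Third, there is no loss or pacing. The 12,000-byte starting window is the RFC 9002 initial window for a 1,200-byte maximum datagram size. Real stacks, including Chrome's, may behave differently.

```python
def round_trips_to_deliver(frame_bytes: int, cwnd_bytes: int) -> int:
    """Round trips needed to send frame_bytes if the window doubles each round (toy slow start)."""
    if cwnd_bytes <= 0:
        raise ValueError("congestion window must be positive")
    rounds = 0
    remaining = frame_bytes
    while remaining > 0:
        remaining -= cwnd_bytes  # one full congestion window sent per round trip
        cwnd_bytes *= 2          # the window only grows because it was actually filled
        rounds += 1
    return rounds


# RFC 9002, section 7.2: min(10 * max_datagram_size, max(14720, 2 * max_datagram_size))
MAX_DATAGRAM_SIZE = 1200
INITIAL_WINDOW = min(10 * MAX_DATAGRAM_SIZE, max(14_720, 2 * MAX_DATAGRAM_SIZE))  # 12,000 bytes

print(round_trips_to_deliver(3_000, INITIAL_WINDOW))    # 1: a small P-frame fits in one window
print(round_trips_to_deliver(200_000, INITIAL_WINDOW))  # 5: a 200 KB I-frame takes several RTTs
print(round_trips_to_deliver(200_000, 192_000))         # 2: same frame once the window has grown
```

In this model the frame RTT of a large I-frame is a multiple of RTTmin. That multiple shrinks once an earlier I-frame has pushed the window up, which matches the pattern described above.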

B

Okay, another effect I noticed was the effect of CPU utilization. As I said, I put all of the encode, decode, and transport on one worker thread. That probably wasn't a great idea because, with AV1 and higher resolutions, it's pretty much taking out that core or thread with 100% CPU utilization, and that may be the cause of the high glass-to-glass latency. I'm not entirely sure, so one way to try to figure out if this is true is to support more threads.

B

Okay, so also, in the process of playing with this, I came up with some issues that I filed, and I think Mathis will talk about some of those. Here are some of the things that came up. One was that, as I mentioned, partial reliability seemed like a good idea because we had support for SVC, so for the discardable frames we didn't have to wait forever for them to be retransmitted; we could potentially just send a reset after a timer expires.
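The timer-based partial reliability described here could look something like the following sketch. Keyframes and base-layer frames are always delivered reliably. A stream carrying a higher-temporal-layer frame is reset once its deadline passes. Several details are hypothetical: the `PendingFrame` record, the 150 ms default deadline, and the idea of returning the stream ids to reset. A real sender would issue the reset through its QUIC or WebTransport API, for example by aborting the send stream.

```python
from dataclasses import dataclass


@dataclass
class PendingFrame:
    stream_id: int
    sent_at_ms: float
    temporal_layer: int
    is_keyframe: bool
    fully_acked: bool = False


def streams_to_reset(pending: list[PendingFrame], now_ms: float,
                     deadline_ms: float = 150.0) -> list[int]:
    """Return ids of streams whose discardable frames have missed their deadline."""
    expired = []
    for frame in pending:
        discardable = not frame.is_keyframe and frame.temporal_layer > 0
        late = now_ms - frame.sent_at_ms > deadline_ms
        if discardable and late and not frame.fully_acked:
            expired.append(frame.stream_id)
    return expired


pending = [
    PendingFrame(2, 0.0, 0, True),                     # keyframe: never reset
    PendingFrame(6, 0.0, 2, False),                    # late top-layer frame: reset
    PendingFrame(10, 100.0, 1, False),                 # still within its deadline
    PendingFrame(14, 0.0, 1, False, fully_acked=True), # already delivered
]
print(streams_to_reset(pending, now_ms=200.0))
```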

B

But one of the interesting things is that partial reliability requires support for reset frames in any forwarders that you have. Also, when you do this, on the receiver you don't necessarily know when you've gotten the complete frame. In theory, at least, the QUIC spec says that when you send the reset, the sender should stop retransmitting, and on the receiver, it's a "should": it should basically use RESET_STREAM as a signal and prioritize it when it comes in, not queue it up and wait until it gets to the reset. So potentially you may not get the entire frame, and so having a length field is potentially useful. I think Mathis will talk about that.
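A length prefix lets the receiver tell a complete frame from one truncated by RESET_STREAM. What follows is a minimal sketch. It assumes each frame is sent on its own stream, preceded by a QUIC variable-length integer (RFC 9000, section 16) giving the frame length. That framing is illustrative, not the draft's wire format.

```python
def read_varint(buf: bytes) -> tuple[int, int]:
    """Decode a QUIC variable-length integer (RFC 9000, section 16): (value, bytes used)."""
    if not buf:
        raise ValueError("empty buffer")
    length = 1 << (buf[0] >> 6)  # two high bits select a 1-, 2-, 4- or 8-byte encoding
    if len(buf) < length:
        raise ValueError("truncated varint")
    value = buf[0] & 0x3F
    for byte in buf[1:length]:
        value = (value << 8) | byte
    return value, length


def frame_status(stream_data: bytes, was_reset: bool) -> str:
    """Classify a length-prefixed frame as 'complete', 'waiting' or 'lost' (hypothetical framing)."""
    try:
        frame_len, header_len = read_varint(stream_data)
    except ValueError:
        # Not even the length prefix has arrived.
        return "lost" if was_reset else "waiting"
    if len(stream_data) - header_len >= frame_len:
        return "complete"  # usable even if a RESET_STREAM arrives afterwards
    return "lost" if was_reset else "waiting"
```

Without the prefix, a receiver that sees RESET_STREAM after some bytes has no way to know whether those bytes were the whole frame.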

B

The other thing is, because of this differential reliability, you may want to send some portions of the stream with datagrams, like the P-frames, or maybe you want to send the I-frame reliably over a reliable stream, and so forth. So that's another issue I think Mathis will talk about.

B

Another thing that came up was multiplexing of data and media. Basically, I wanted to make this as simple as possible and have a single QUIC connection, so I'm actually sending the signaling over the same QUIC connection as the media, and that seems like a pretty useful thing to do. Also, and I'm not doing this, you might want to communicate state or device input, again over the same QUIC connection.

B

So that's a little weird. The other thing is, if you're using SFrame for end-to-end encryption, how does a translator packetize this opaque blob of SFrame stuff? Even if it did have codec-specific knowledge, it doesn't have the encryption key, so how would it do that? The only option it would have would be generic packetization, and we've talked about that. So those are some questions.
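Without the SFrame key, a translator can only treat the encrypted frame as opaque bytes. What follows is a minimal sketch of what codec-agnostic ("generic") packetization amounts to. It assumes a hypothetical one-byte header with start-of-frame and end-of-frame flags and an arbitrary 1,100-byte payload budget per packet. This is not the format of any particular generic packetization proposal.

```python
START_OF_FRAME = 0x80
END_OF_FRAME = 0x40


def packetize(blob: bytes, max_payload: int = 1100) -> list[bytes]:
    """Split an opaque frame into packets, each with a one-byte flags header."""
    chunks = [blob[i:i + max_payload] for i in range(0, len(blob), max_payload)] or [b""]
    packets = []
    for index, chunk in enumerate(chunks):
        flags = 0
        if index == 0:
            flags |= START_OF_FRAME
        if index == len(chunks) - 1:
            flags |= END_OF_FRAME
        packets.append(bytes([flags]) + chunk)
    return packets


def depacketize(packets: list[bytes]) -> bytes:
    """Reassemble a frame; the first and last packets must carry the S and E flags."""
    if not packets or not packets[0][0] & START_OF_FRAME or not packets[-1][0] & END_OF_FRAME:
        raise ValueError("incomplete frame")
    return b"".join(packet[1:] for packet in packets)
```

With this budget a 200 KB I-frame becomes 182 packets, none of which the translator can interpret beyond the flags.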

B

As you saw in the graph, the P-frames were fairly tightly clustered around RTTmin. So basically, if something has a much larger RTT, you can just send a reset and forget about it. But as I mentioned, it's helpful to have a length field, because then you can figure out whether you got it. The other thing to note, and I think you've seen this on the graphs, is that the I-frames can be pretty large; I've seen them as large as 200 kilobytes.

B

So a 16-bit field isn't necessarily large enough to cover that; that's something to think about.
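To put numbers on the 16-bit concern: a 16-bit length field, as in RFC 4571 framing, tops out at 65,535 bytes, well short of a 200 KB I-frame. A QUIC variable-length integer (RFC 9000, section 16) can encode lengths up to 2^62 - 1 in 1 to 8 bytes. A small encoder sketch for comparison:

```python
MAX_U16 = 0xFFFF


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a QUIC variable-length integer (RFC 9000, section 16)."""
    if value < 0:
        raise ValueError("value must be non-negative")
    for length, prefix in ((1, 0x00), (2, 0x40), (4, 0x80), (8, 0xC0)):
        if value < 1 << (8 * length - 2):  # 6, 14, 30 or 62 usable bits
            encoded = bytearray(value.to_bytes(length, "big"))
            encoded[0] |= prefix           # two high bits record the encoded length
            return bytes(encoded)
    raise ValueError("value exceeds 2**62 - 1")


i_frame_len = 200_000
print(i_frame_len > MAX_U16)             # True: does not fit a 16-bit length field
print(encode_varint(i_frame_len).hex())  # 80030d40: a 4-byte varint
```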

The other thing is that in my server implementation I found some bugs: I wasn't forwarding the reset frames. I was also doing store-and-forward, which adds a little bit to the latency. So there are some things to think about in a forwarder or translator. It should probably just forward the RESET_STREAMs and the FINs and stuff like that; it doesn't need to get the entire frame before forwarding it on. It should probably just do cut-through; that would make a lot more sense.
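The forwarding behavior suggested here can be sketched as an event handler. It relays each piece of a stream as soon as it arrives (cut-through) and propagates FIN and RESET_STREAM instead of buffering whole frames. The `Relay` class and its event strings are hypothetical stand-ins for whatever QUIC library the forwarder uses.

```python
from dataclasses import dataclass, field


@dataclass
class Relay:
    """Toy cut-through forwarder mapping incoming stream ids to outgoing stream ids."""
    next_out_id: int = 3  # server-initiated unidirectional stream ids: 3, 7, 11, ...
    routes: dict = field(default_factory=dict)
    sent: list = field(default_factory=list)  # what would be written toward the next hop

    def on_event(self, kind: str, stream_id: int, data: bytes = b"") -> None:
        out = self.routes.get(stream_id)
        if out is None:
            out = self.routes[stream_id] = self.next_out_id
            self.next_out_id += 4
        if kind == "data":
            self.sent.append(("data", out, data))  # forward immediately, no store-and-forward
        elif kind == "fin":
            self.sent.append(("fin", out, b""))    # end of frame reaches the receiver at once
            del self.routes[stream_id]
        elif kind == "reset":
            self.sent.append(("reset", out, b""))  # propagate RESET_STREAM downstream
            del self.routes[stream_id]
        else:
            raise ValueError(f"unknown event {kind!r}")
```

By contrast, a store-and-forward relay cannot send the first byte of a 200 KB I-frame until its last byte has arrived. If the upstream path delivers 10 Mbit/s, that alone adds about 160 ms (200,000 * 8 / 10,000,000 s).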

A little bit about data and media: there are multiple uses for it, and in this particular experiment I didn't need multiple ALPNs. I just used a single WebTransport ALPN, and you can send whatever you want over it; that's kind of how WebRTC works. There's a single ALPN for DTLS for both media and data. But it does bring up the question of how...

B

Yeah, that's a really great question, Spencer. That was one of the questions I had going into this, and it's why I focused on frame-per-stream instead. The good news is that the P-frames, as you saw, are really clustered around RTTmin, so at least for those it doesn't seem like you need datagrams; QUIC does a really good job of sensing the bandwidth and so forth.

So I guess my answer to that, Spencer, would be that it depends on whether I can get that glass-to-glass latency down. I suspect the glass-to-glass latency issues have nothing whatsoever to do with datagrams versus streams. I think they may be due to the CPU pegging that I'm seeing, and maybe some other things; as I improve the code, the overall latency comes down quite a bit. So I want to take a rain check on that, but I'm not really... I...
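Frame-per-stream means each encoded frame is written on a fresh unidirectional stream. In raw QUIC, client-initiated unidirectional stream ids have their two low bits set to 0b10 (RFC 9000, section 2.1), so the n-th such stream has id 4n + 2. What follows is a minimal sketch of that mapping. It ignores any streams that HTTP/3 or WebTransport would open for their own use.

```python
CLIENT_UNI = 0x2  # stream id low bits for client-initiated unidirectional streams


def stream_id_for_frame(frame_index: int) -> int:
    """Id of the unidirectional stream carrying frame number frame_index (raw QUIC)."""
    return 4 * frame_index + CLIENT_UNI


def is_client_uni(stream_id: int) -> bool:
    """True if the id belongs to a client-initiated unidirectional stream."""
    return (stream_id & 0x3) == CLIENT_UNI
```

Because each frame has its own stream, a late discardable frame can be reset without holding up later frames. At 30 frames per second this consumes 30 streams per second, so the peer's stream limit (raised with MAX_STREAMS frames) has to keep pace.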

G

Can you hear me okay? Yeah, I guess I can unmute my video; that worked. All right. I was just going to mention that in my previous experimentation with using frames, or sorry, streams, it worked fine except for the congestion control issues, and I think those would be the same between datagrams and streams. So I don't think it's a question of streams versus datagrams; it's more a question of how the congestion control works for both.

B

Yeah, I mean, if the hypothesis we had here is correct, it wouldn't matter whether you dump the I-frame in a single stream or in datagrams, right? I guess the only difference would be... yeah, the QUIC congestion control would basically do the same thing, more or less, right, Peter? Yeah, so you'd see more or less the same kind of extended delay: essentially multiple RTTs to get the whole thing there. Either way, the congestion window wouldn't be opening up.

A

I'm next in the queue, as an individual, just asking. I'm wondering if you had any thoughts; I guess this might then be more input to either the WebTransport working group or the W3C WebTransport API about what would be useful. Do you have enough information to do meaningful debugging and analysis of what's going on, or do you need more information out of the browsers?

B

So it's not like you get any info on the QUIC stack. It's not like you have webrtc-internals; there's nothing like that. So basically I'm having to instrument everything myself and look at packet traces. That is a great question, and I will say that the more I work on this, the more I appreciate WebRTC, where the browser does everything for you. In this kind of work, you have to do your own threading.

B

Yeah, I think any length field would help with that, whether it's RFC 4571 or something else. I think it's a good question, Sergio, whether you might want to packetize it anyway; I don't have enough information. One of the hypotheses I had before the one I presented here was that it could have been a pacing issue: dumping these huge I-frames in and having them spewed into the network might not have been such a great idea.

B

The information I've seen on the congestion windows suggested that's not happening, I think. Maybe we should leave the discussion of the length field; I think Mathis will have a presentation on that, so we can pick it up there. But anyway, I think the length field is a useful thing to talk about.

I

That depends on which RTP stream they are sent in, which is now clearer in the spec, but we'll also get to flow identifiers again later. I added a short paragraph about exposing the bandwidth estimation to the API considerations, and I removed a couple of obsolete editor's notes from the document. Currently there are a few open pull requests: one for topology, which Bernard already mentioned, one for stream concurrency, and one for expressing the congestion control requirements instead of specifying fixed congestion control algorithms.

I

Then, yeah, thanks. So I would like to talk about four issues today, and two of them are kind of linked: this one and the next one. Bernard already mentioned ALPN usage; if I understood correctly, he used one ALPN for sending data and RTP in the same QUIC connection. In the document we currently define the ALPN token "rtp-mux-quic", which we thought could indicate that we can multiplex RTP and other protocols over the same QUIC connection.

I

The problem with this may be that if other protocols define a mapping into QUIC, they also have to define some ALPN token, which would be incompatible with ours. A second problem that came up is the issue of multiplexing, which will be the next slide. But let me finish this one first before we maybe discuss both of them.

I

If we do multiplexing in RTP over QUIC, as in "rtp-mux-quic" multiplexing multiple different protocols, we don't really know how the multiplexing is actually going to work between the different protocols. So our proposal for now is the one on the left side here, in the green box: define "rtp-quic" instead of "rtp-mux-quic", and have future documents specify new ALPNs for different multiplexings of different protocols, for example RTP, RTCP, and some other data protocol.
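ALPN (RFC 7301) lets the client offer a list of protocol tokens in the TLS handshake and lets the server pick one. The following minimal sketch shows how the proposed split would play out. It uses "rtp-quic" and "rtp-mux-quic" as the token names discussed here, though the final names may differ. The server-preference selection function is hypothetical.

```python
from typing import Optional


def select_alpn(client_offers: list[str], server_supports: list[str]) -> Optional[str]:
    """Return the server's most preferred token that the client offered, else None."""
    for token in server_supports:  # server_supports is ordered by server preference
        if token in client_offers:
            return token
    return None  # a real TLS server would abort with a no_application_protocol alert


print(select_alpn(["rtp-mux-quic", "rtp-quic"], ["rtp-quic"]))  # rtp-quic
```

A client that can also multiplex data would offer both tokens. It still interoperates with a peer that implements only plain RTP over QUIC, while two multiplexing-capable peers can settle on the richer token.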

I

There is, however, still the question of whether we still need a flow identifier. We can do RTP/RTCP multiplexing per RFC 5761, and we can send multiple types of media in a single RTP session, but I think there is no equivalent to sending multiple different RTP sessions, as we could do, for example, by using different UDP ports, because we only have this one QUIC connection. So if we still want to use multiple RTP sessions on the same QUIC connection, we still need something like a flow identifier to identify them.
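The following minimal sketch shows receiver-side demultiplexing if the flow identifier is kept. It makes three assumptions. Each QUIC datagram starts with a flow identifier encoded as a QUIC variable-length integer. Each flow maps to one RTP session. Within a session, RTP and RTCP are told apart by the second byte, as in RFC 5761, where values 192 to 223 indicate RTCP. The layout is an assumption for illustration, not a normative description of the draft.

```python
def read_varint(buf: bytes) -> tuple[int, int]:
    """Decode a QUIC variable-length integer (RFC 9000, section 16): (value, bytes used)."""
    if not buf:
        raise ValueError("empty buffer")
    length = 1 << (buf[0] >> 6)
    if len(buf) < length:
        raise ValueError("truncated varint")
    value = buf[0] & 0x3F
    for byte in buf[1:length]:
        value = (value << 8) | byte
    return value, length


def demux_datagram(datagram: bytes) -> tuple[int, str, bytes]:
    """Return (flow_id, 'rtp' or 'rtcp', packet) for one flow-id-prefixed datagram."""
    flow_id, offset = read_varint(datagram)
    packet = datagram[offset:]
    if len(packet) < 2 or packet[0] >> 6 != 2:  # RTP and RTCP both carry version 2
        raise ValueError("not an RTP or RTCP packet")
    kind = "rtcp" if 192 <= packet[1] <= 223 else "rtp"  # RFC 5761, section 4
    return flow_id, kind, packet
```

RFC 5761 multiplexing relies on avoiding RTP payload types 64 to 95. With the marker bit set, those would collide with the RTCP packet types.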

I

But the problem with that, I think, is that we don't necessarily want to multiplex the protocols specified in that RFC. For example, DTLS doesn't make much sense to put on top of QUIC next to RTP if we can just send data channel protocols next to RTP without using DTLS. So, yeah, do you have any thoughts about that?
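For comparison, WebRTC shares one UDP port among its protocols by looking at the first byte of each packet, using the ranges in RFC 7983. The minimal classifier below illustrates the mechanism under discussion. It is not a proposal for RTP over QUIC.

```python
def classify_first_byte(packet: bytes) -> str:
    """Classify a UDP payload by its first byte using the RFC 7983 ranges."""
    if not packet:
        return "drop"
    first = packet[0]
    if 0 <= first <= 3:
        return "stun"
    if 16 <= first <= 19:
        return "zrtp"
    if 20 <= first <= 63:
        return "dtls"
    if 64 <= first <= 79:
        return "turn-channel"
    if 128 <= first <= 191:
        return "rtp/rtcp"
    return "drop"
```

Inside a single QUIC connection this byte-sniffing is unnecessary. Streams and datagrams are already separated by QUIC, so a DTLS layer next to RTP would add little.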

B

I mean, they could go over the same port, you know, with different connection IDs, but I'm not sure; I think you probably won't want that. I know that stuffing them in the same connection does raise issues, maybe of priority or something like that, but the kinds of things I'm thinking of that you would multiplex with the data are probably relatively low bandwidth. I mean, I...

B

I would agree that it doesn't make a lot of sense to multiplex DTLS and support that within a single QUIC connection, so we'd probably need to think about that a little more. With respect to the flow ID, I do have a question about the usage model, and we can talk about it more, but the reason we have the flow ID in WebTransport is because we'd have... The idea was to support multiple browser tabs, so we used the flow ID to separate the browser tabs. I'm not sure that would necessarily be the model in RTP over QUIC, although I could be wrong. So it might be worthwhile to articulate what the use cases are there, and whether we might just say, hey, everything that's on this QUIC connection is a single session, and leave it at that.

I

Okay, thanks. I also think it makes sense to have something like "rtp-mux-quic" later, for example for using RTP and data channels, or something that does something like data channels in QUIC, in the same connection as RTP. But as long as we don't have this other protocol, I think we should move on with this document and define "rtp-quic" first, and then allow future documents to define "rtp-mux-quic", which can carry RTP and another data channel protocol on top of QUIC in the same connection. Does that make sense?

G

So the question of multiplexing is very similar to WebTransport, and I think the solution we came up with there would make sense for sending media as well. But I think what you could do in this document is define how to send RTP over QUIC-like connections that have streams and datagrams. Then, if somebody wants to run that over WebTransport, they can.

A

Yeah, Jonathan Lennox. I think I'm sort of, you know, largely agreeing with the others. I think Bernard's comment makes sense in the context of WebTransport, where you have your own custom signaling mechanism. If you're talking about raw QUIC, whatever you're multiplexing would have to be a defined protocol, and I don't think we're anywhere near ready for anything like that yet. I agree that making this work for both raw QUIC and WebTransport makes sense, whether you want to describe that as an abstraction layer or specifically as a mapping to both. I mean, it's more or less the same thing; it's just how you describe it. But I agree that writing this in a way that makes sense for both raw QUIC and WebTransport would be sensible.
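One way to read "an abstraction layer or a mapping to both" is an interface that assumes only streams and datagrams, with raw QUIC and WebTransport as two implementations of it. The minimal sketch below uses hypothetical names throughout. The in-memory class stands in for either kind of session.

```python
from typing import Protocol


class StreamsAndDatagrams(Protocol):
    """The only capabilities the RTP mapping relies on (hypothetical interface)."""

    def send_datagram(self, payload: bytes) -> None: ...

    def send_on_new_stream(self, payload: bytes) -> None: ...


class InMemoryTransport:
    """Test double standing in for a raw QUIC connection or a WebTransport session."""

    def __init__(self) -> None:
        self.datagrams: list[bytes] = []
        self.streams: list[bytes] = []

    def send_datagram(self, payload: bytes) -> None:
        self.datagrams.append(payload)

    def send_on_new_stream(self, payload: bytes) -> None:
        self.streams.append(payload)


def send_rtp(transport: StreamsAndDatagrams, packet: bytes, reliable: bool) -> None:
    """RTP-over-QUIC logic written once against the interface, not a specific stack."""
    if reliable:
        transport.send_on_new_stream(packet)
    else:
        transport.send_datagram(packet)
```

The design choice is that everything above the interface (packetization, reliability policy) is shared. Only the thin adapter differs between raw QUIC and WebTransport.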

E

Thanks. I had multiple small points. Briefly, back to Bernard's point about sending other small signaling messages back and forth: I'd like to understand what kind of signaling, between whom. Some kinds of renegotiation would usually follow the signaling path that would be used to set up the subsequent QUIC connection for RTP/RTCP. If you had, yeah, some of the signaling part, then that's it.

E

Rather than opening this up very broadly at the beginning, which might make us go around in circles a few times... I do see the value in making this usable in, for example, a broader abstraction, but I would feel more comfortable if I understood all the implications before we try to design a solution. So this is why I'd rather focus on a narrow solution space right now, maybe exploring the others in parallel, but not trying to boil the ocean right away.

E

I

B

B

You know, it might not work well, but this is something you can use for these kinds of small data exchanges, which is much of what we see with WebRTC, by the way. I mean, there are some people who use the data channel to send media along with data, but I'd say most RTCDataChannel usage is these kinds of small updates, like in game streaming, going back the other way, stuff like that.

B

Typically, you don't do a lot of muxing of data and media, so I don't think you necessarily have to solve all those problems. You can say, hey, this is what we do; it doesn't solve all problems. And then, you know, if something comes along later... My concern would be that we may or may not have this prioritization solution, and it could take years.

B

And meanwhile, if people don't have the ability to multiplex data, they'll just invent their own thing or open multiple QUIC connections, and the whole thing will rapidly degrade. So I'd just say: try to do something simple, state the limitations, be done with it, and don't try to solve every problem. That would be my...

I

B

Well, you know, you probably should open an issue and figure out whether the group wants to specify something now. You could say, define your own muxing, and maybe something like RFC 7983 is the way to do it; or maybe you want to do the flow ID. I guess, you know, I would leave that discussion open. I don't think you necessarily have to solve it here.
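For reference, the RFC 7983 scheme demultiplexes STUN, ZRTP, DTLS, TURN channel data, and RTP/RTCP sharing one transport by the value of each packet's first byte. The sketch below shows only that classification; whether a comparable first-byte rule, or a flow ID, would suit muxing data with RTP over QUIC is exactly what was left open here, and nothing in it reflects the draft.

```python
def classify_first_byte(packet: bytes) -> str:
    """Classify a packet by its first byte, using the ranges in RFC 7983."""
    if not packet:
        return "drop"
    b = packet[0]
    if 0 <= b <= 3:
        return "stun"
    if 16 <= b <= 19:
        return "zrtp"
    if 20 <= b <= 63:
        return "dtls"
    if 64 <= b <= 79:
        return "turn-channel"
    if 128 <= b <= 191:
        return "rtp/rtcp"
    # Values outside every range are dropped.
    return "drop"
```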

B

I

C

Yeah, thank you. So this is probably for Bernard, but I'm a little confused about whether you're talking about having your application still use the same ALPN but, in effect, behave differently in the future, versus how big a pain it's going to be to do a new ALPN. If we do RTP over QUIC and then do RTP mux over QUIC, is that... can you... If you need to explain this to me over a beer sometime, that's a fine answer too.

B

Yeah, I... it's not all that complicated. As an example, when I was writing the little sandbox thing, I sent the configuration of the codec along with the media. In other words, in WebCodecs, when you configure the encoder, it spits out a string that you can dump into the decoder to configure it, so it's kind of like a poor man's offer/answer. You can just say, here's what I'm sending, and send it along in the first kind of pseudo-frame.
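A minimal sketch of that "poor man's offer/answer", assuming a hypothetical framing in which every message starts with a one-byte type: type 0 carries a JSON-encoded decoder configuration and type 1 carries an encoded media frame. The type values, the JSON encoding, and the example fields (`codec`, `codedWidth`, `codedHeight`, which are WebCodecs `VideoDecoderConfig` member names) are illustrative assumptions; in WebCodecs the configuration arrives as an object in the encoder's output callback, so an application would still have to serialize it, including any binary `description`.

```python
import json
from typing import Optional, Tuple

CONFIG_FRAME = 0  # hypothetical type byte: decoder configuration
MEDIA_FRAME = 1   # hypothetical type byte: encoded media


def make_config_frame(config: dict) -> bytes:
    return bytes([CONFIG_FRAME]) + json.dumps(config).encode("utf-8")


def make_media_frame(payload: bytes) -> bytes:
    return bytes([MEDIA_FRAME]) + payload


class Receiver:
    """Configures the decoder from the first pseudo-frame, then accepts media."""

    def __init__(self) -> None:
        self.config: Optional[dict] = None

    def on_frame(self, frame: bytes) -> Tuple[str, object]:
        if not frame:
            return ("unknown", None)
        kind, body = frame[0], frame[1:]
        if kind == CONFIG_FRAME:
            self.config = json.loads(body.decode("utf-8"))
            return ("configured", self.config)
        if kind == MEDIA_FRAME:
            if self.config is None:
                return ("dropped", None)  # cannot decode before configuration
            return ("decode", body)
        return ("unknown", None)


rx = Receiver()
rx.on_frame(make_config_frame({"codec": "vp8", "codedWidth": 640, "codedHeight": 480}))
result = rx.on_frame(make_media_frame(b"\x9d\x01\x2a"))
```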

B

One of the big discussions we had in WebTransport about session IDs was all about trying to collapse the total number of QUIC connections so that they didn't explode, and people were saying that for deployments this was actually a big issue: you don't want a connection explosion, because it limits your scalability. So anyway, those were some of the things that came to mind. Spencer, I'd be happy to talk more about it, but...

A

A

I

I also brought this one up in the presentation earlier: a length field in QUIC streams. So far, we have only added the sentence I mentioned earlier, that QUIC streams have to be closed after a packet is finished so that the receiver can identify packet boundaries. But it might also be helpful to have a length field for buffer allocation, or for identifying incomplete frames earlier.
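To illustrate what a length field buys the receiver, the sketch below splits a stream's byte buffer into RTP packets using a 2-byte big-endian length prefix and reports how many bytes of a trailing, incomplete packet are still missing, which is also the amount of buffer still to fill. The 2-byte prefix is the RFC 4571 framing, chosen only for illustration since the field size is still open; the function name `split_framed` is hypothetical.

```python
import struct
from typing import List, Tuple


def split_framed(buf: bytes) -> Tuple[List[bytes], int]:
    """Return complete packets and how many more bytes are needed (0 if none).

    When even the 2-byte prefix is incomplete, the count is a lower bound.
    """
    packets: List[bytes] = []
    pos = 0
    while True:
        remaining = len(buf) - pos
        if remaining < 2:
            # Either everything was consumed, or only part of a prefix arrived.
            return packets, 0 if remaining == 0 else 2 - remaining
        (length,) = struct.unpack_from("!H", buf, pos)
        end = pos + 2 + length
        if end > len(buf):
            # Incomplete packet detected before the stream ends.
            return packets, end - len(buf)
        packets.append(buf[pos + 2:end])
        pos = end
```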

I

So there's a suggestion to add the length field, and if we add a length field, there's the question of what kind of length field. It has already been mentioned that the 16-bit fields from RFC 4571 may not be enough, because the payload may be longer. Alternatives are using units of four octets, a longer field, or QUIC variable-length integers, which we have been using for something else, I think. Yeah, any opinions on this one, or are there any cons to adding a length field? Maybe, more specifically, Spencer?
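For comparison, an RFC 4571-style 16-bit length tops out at 65,535 bytes, while a QUIC variable-length integer (RFC 9000, Section 16) uses its two most significant bits to select a 1-, 2-, 4-, or 8-byte encoding and can represent values up to 2^62 - 1. A length prefix built this way would cost 1 byte for payloads under 64 bytes and 2 bytes up to 16,383 bytes. The sketch below encodes and decodes such integers; the function names are illustrative, and the test values are the examples from RFC 9000, Appendix A.1.

```python
from typing import Tuple


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a QUIC variable-length integer."""
    if value < 0:
        raise ValueError("varint must be non-negative")
    if value < (1 << 6):
        return value.to_bytes(1, "big")
    if value < (1 << 14):
        return (value | (0b01 << 14)).to_bytes(2, "big")
    if value < (1 << 30):
        return (value | (0b10 << 30)).to_bytes(4, "big")
    if value < (1 << 62):
        return (value | (0b11 << 62)).to_bytes(8, "big")
    raise ValueError("value too large for a QUIC varint")


def decode_varint(data: bytes, pos: int = 0) -> Tuple[int, int]:
    """Decode the varint at data[pos:]; return (value, bytes consumed)."""
    if pos >= len(data):
        raise ValueError("no data")
    length = 1 << (data[pos] >> 6)  # top two bits select 1, 2, 4, or 8 bytes
    if pos + length > len(data):
        raise ValueError("truncated varint")
    value = int.from_bytes(data[pos:pos + length], "big")
    value &= (1 << (8 * length - 2)) - 1  # clear the two length bits
    return value, length
```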

A

A

Sorry about that; I always get confused between raising the hand and, you know, turning my mic on. Yeah, so I guess my question here, in terms of the length field, is about, you know, the APIs for the incomplete-frames question. I'm certainly asking about WebTransport, but I guess it might be relevant otherwise too: do they distinctly tell you the difference between a stream that was reset and truncated versus a stream that was received in full?

A

A

A

A

B

So in the WebTransport API, Jonathan, you're supposed to get an error if you receive a reset, versus what they call a done indication if you receive a FIN. So at least in theory, you should be able to tell the difference. However, what I found is that sometimes the reset doesn't get forwarded. In my dumb server implementation, for example, it didn't forward the reset, so I actually got neither the done nor the reset.

B

So, you know, I raised the question of whether the reset is always reliable, because what it's supposed to do is turn off retransmission. There's actually a draft that's been submitted to the QUIC working group for a more reliable reset. So that question has been raised, whether it'll always come through. So anyway, I'm...
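The receiver-side cases discussed here can be summarized as a small decision function. It is a sketch under stated assumptions: `fin_received` and `reset_received` stand in for the WebTransport done indication and reset error, and `declared_length` is the optional length prefix under discussion; all names are hypothetical. It shows why a length prefix would let the receiver recognize a complete packet even when, as in Bernard's server, neither signal arrives.

```python
from typing import Optional


def stream_outcome(received: int, fin_received: bool, reset_received: bool,
                   declared_length: Optional[int] = None) -> str:
    """Classify one packet-carrying stream as complete, truncated, or pending."""
    if declared_length is not None and received >= declared_length:
        # The length prefix shows every byte arrived, whatever happens next.
        return "complete"
    if reset_received:
        return "truncated"  # sender abandoned the stream before finishing
    if fin_received:
        # Without a length prefix the FIN itself marks the end of the packet;
        # with one, a FIN before the declared length means data is missing.
        return "truncated" if declared_length is not None else "complete"
    return "pending"  # neither signal yet: keep waiting or time out
```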

B

A

D

If you want to send something, you should be able to send it out and then know whether it was completely received or not. That might be done with a trailing, non-fakeable signal, or checksums, or whatever; length fields sent as a header are not the only possible solution, and perhaps not the optimal one. We have burned ourselves on that one before. But if we have a length field, then we'll definitely want a variable-length integer.

I

G

About the FIN: isn't the FIN reliable? So you could just have the rule: if you've received the FIN, you know you've received the whole thing, and if you haven't yet received the FIN, then you know there's still more to come. So I think the FIN operates as, you know, the trailer that lets you know you're done.
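The FIN-as-trailer rule amounts to per-stream reassembly: buffer each stream's bytes and hand the RTP packet up only once that stream's FIN arrives. A minimal sketch, assuming the transport delivers hypothetical `(stream_id, data, fin)` events with each stream's bytes in order; the `FinReassembler` class and the event shape are illustrative, not an existing API.

```python
from typing import Dict, List


class FinReassembler:
    """Emits one RTP packet per stream when that stream's FIN is seen."""

    def __init__(self) -> None:
        self.partial: Dict[int, bytearray] = {}
        self.packets: List[bytes] = []

    def on_stream_data(self, stream_id: int, data: bytes, fin: bool) -> None:
        buf = self.partial.setdefault(stream_id, bytearray())
        buf.extend(data)  # assumes in-order delivery within the stream
        if fin:
            # The FIN acts as the trailer: the buffered bytes are the whole packet.
            self.packets.append(bytes(self.partial.pop(stream_id)))

    def on_stream_reset(self, stream_id: int) -> None:
        # A reset stream never completes; discard whatever arrived.
        self.partial.pop(stream_id, None)


r = FinReassembler()
r.on_stream_data(0, b"ab", fin=False)
r.on_stream_data(4, b"x", fin=False)
r.on_stream_data(0, b"cd", fin=True)
r.on_stream_reset(4)
```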

G

A

G

G

A

G

G

B

G

I

Okay, so I feel like a length field might be helpful, so I will prepare a pull request for that, and then, if there's more to discuss, we can do it in the issue or in the pull request. Then we can continue with the next one: mixing streams and datagrams. I also brought this one up earlier in the presentation. The current draft only allows choosing either streams or datagrams, but does not allow mixing both of them in one RTP stream.
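If mixing within one RTP stream were allowed, the sender would need a per-packet policy. The sketch below shows one possible, purely hypothetical policy: keyframe packets, or anything larger than a datagram can carry, go on their own stream, and the rest go as datagrams. The `RtpPacket` type, the `choose_path` function, and the 1200-byte `max_datagram_size` are assumed placeholders, not something the draft specifies.

```python
from dataclasses import dataclass


@dataclass
class RtpPacket:
    data: bytes        # the serialized RTP packet
    is_keyframe: bool


def choose_path(pkt: RtpPacket, max_datagram_size: int = 1200) -> str:
    """Return 'stream' or 'datagram' for one packet (hypothetical policy)."""
    if pkt.is_keyframe or len(pkt.data) > max_datagram_size:
        return "stream"  # reliable delivery for data that later frames depend on
    return "datagram"  # loss-tolerant delta frames skip retransmission
```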

I

One issue I hit when I was experimenting with this is that there was a synchronization issue, which is, of course, solvable. The problem there was that I prioritized datagrams, and that led to a situation where the first keyframe was received after the first datagram, and then I kind of had to wait for the first keyframe to arrive. But that is, of course, solvable using a jitter buffer.
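A buffer that reorders by RTP sequence number makes the arrival path (stream or datagram) irrelevant. The sketch below, with hypothetical names, holds packets until a keyframe is present and then releases them in sequence order, waiting at gaps. It assumes 16-bit RTP sequence numbers have already been extended so they do not wrap; a real jitter buffer also needs timing-based playout and loss handling.

```python
from typing import Dict, List, Optional, Tuple


class ReorderBuffer:
    def __init__(self) -> None:
        self.pending: Dict[int, Tuple[bytes, bool]] = {}
        self.next_seq: Optional[int] = None

    def push(self, seq: int, payload: bytes, is_keyframe: bool) -> List[bytes]:
        """Insert a packet; return the packets now releasable in order."""
        if self.next_seq is not None and seq < self.next_seq:
            return []  # late duplicate or already-skipped packet
        self.pending[seq] = (payload, is_keyframe)
        if self.next_seq is None:
            keyframes = [s for s, (_, key) in self.pending.items() if key]
            if not keyframes:
                return []  # cannot start decoding before a keyframe
            self.next_seq = min(keyframes)
            # Drop anything older than the starting keyframe.
            for s in [s for s in self.pending if s < self.next_seq]:
                del self.pending[s]
        released: List[bytes] = []
        while self.next_seq in self.pending:
            released.append(self.pending.pop(self.next_seq)[0])
            self.next_seq += 1
        return released


jb = ReorderBuffer()
first = jb.push(11, b"P1", is_keyframe=False)  # datagram overtakes the keyframe
second = jb.push(10, b"I0", is_keyframe=True)  # keyframe arrives on its stream
```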

A

Yeah, I mean, obviously the need to wait for the I-frame after the P-frame could happen anyway, you know, because of partial reliability, because of the separate, individual reliability of the streams. Even if they're on separate streams, you could have a packet loss on the I-frame, and thus it'll come in later. So I think this doesn't introduce that issue; it just maybe makes it more common. So I guess one question, and I'm not sure which way it pushes the answer...

A

But it's an interesting question: there was a question raised on the Zulip about whether there is actually a substantive difference between using datagrams and using less-than-MTU streams. And I guess there are probably detailed differences in terms of how it appears on the wire, and, obviously, there might be implementation differences in prioritization.
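One concrete wire-level difference is per-packet framing overhead. Using the frame layouts from RFC 9000 (STREAM frame: type byte, Stream ID, optional Offset and Length) and RFC 9221 (DATAGRAM frame: type byte, optional Length), the sketch below counts the frame header bytes for a packet sent alone on a fresh stream, where the offset is 0 and the Offset field can be omitted, versus as a datagram. It ignores QUIC packet headers, stream-limit and flow-control frames, and encryption overhead, which also differ in practice; the function names are illustrative.

```python
def varint_len(value: int) -> int:
    """Bytes needed to encode value as a QUIC variable-length integer."""
    for size, limit in ((1, 1 << 6), (2, 1 << 14), (4, 1 << 30), (8, 1 << 62)):
        if value < limit:
            return size
    raise ValueError("value too large for a QUIC varint")


def stream_frame_overhead(stream_id: int, payload_len: int, with_length: bool = True) -> int:
    # Type byte (0x08-0x0f; FIN is a bit in it, so it costs nothing extra)
    # plus the Stream ID. Offset is omitted because it is 0 on a new stream.
    overhead = 1 + varint_len(stream_id)
    if with_length:
        overhead += varint_len(payload_len)
    return overhead


def datagram_frame_overhead(payload_len: int, with_length: bool = True) -> int:
    # Type byte (0x30 without a Length field, 0x31 with one).
    return 1 + (varint_len(payload_len) if with_length else 0)
```

Stream IDs grow as new streams are opened, so with one stream per packet the Stream ID field widens from 1 to 2 and then 4 bytes over a long session.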

A

A

I

B

I

I

A

I guess my point is that if that is the case, that those aren't different, that also might mean that mixing them is fine, because it doesn't introduce any new complexities. It might only make certain complexities more common, but they're things that are going to happen anyway. Yeah, okay.

G

B

With respect to what Jonathan said, what I was seeing is that the stream-per-frame transport plus the partial reliability gives you