Description

In this Kong Summit 2020 session, we will cover the architecture and approach we used to build out our digital platform at Goldman Sachs by leveraging Kong’s API gateway to implement a secure ingress controller for all digital channels, including private/public API and web interfaces. We will discuss how we integrated Kong’s API gateway with AWS native services to implement mTLS, observability and container runtime, as well as share our operational experience of running resilient API workloads in production.

A

Our mission is very simple: provide a global transaction banking platform that is nimble, secure, and easy for our clients to use. The key differentiator is that the platform is cloud native. In other words, the platform is built from the ground up, which makes it easy for us to deliver features to our clients with a short time to market.

A

So we want to make sure that we have an API-first mindset and expose all our functionality and capabilities to our clients through very rich APIs. We at Transaction Banking take a lot of pride in building and designing these APIs. So, with that special focus on APIs, let's dive into the topic of the API gateway.

A

So, let's discuss the problem statement. When we started designing our digital platform, we embraced a microservices architecture, and one of the key problems we wanted to solve was: how do we enable connectivity to these microservices from an external client? We were looking to stand up a foundational component that would process all the north-south API traffic from the clients at the edge and make sure that it meets the following non-functional requirements.

A

Last but not least, the API gateway should support multiple platforms. In other words, it should support both cloud and on-prem platforms. During our evaluation, we found that the Kong API gateway meets all these requirements. Hence, we made the decision to go with Kong as the strategic API gateway for our digital platform in the cloud.

A

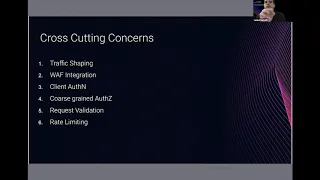

Okay, so let's discuss how Kong implements some of these cross-cutting concerns, starting with traffic shaping. Essentially, you would have a number of microservices in the cloud, and you really don't want clients to be exposed to how these services are organized. Ideally, you want to provide one single, unified interface to clients and then have some rules which decide, for each incoming request…
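
To make the idea of a single, unified interface with routing rules concrete, here is a minimal Python sketch. It is not Kong's routing implementation; the `RouteRule` type, the path prefixes, and the internal hostnames are all hypothetical and only illustrate how a gateway might hide the way services are organized behind it.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class RouteRule:
    """Maps a public path prefix to an internal service (all names hypothetical)."""
    path_prefix: str
    upstream: str


# Hypothetical rules: clients only ever see the public paths on the left.
RULES: List[RouteRule] = [
    RouteRule("/payments", "http://payments.internal:8080"),
    RouteRule("/accounts", "http://accounts.internal:8080"),
    RouteRule("/accounts/statements", "http://statements.internal:8080"),
]


def resolve_upstream(path: str, rules: List[RouteRule] = RULES) -> Optional[str]:
    """Return the upstream of the longest matching prefix, or None if nothing matches."""
    matches = [
        rule for rule in rules
        # Match whole path segments only, so "/paymentsx" does not hit "/payments".
        if path == rule.path_prefix or path.startswith(rule.path_prefix + "/")
    ]
    if not matches:
        return None
    return max(matches, key=lambda rule: len(rule.path_prefix)).upstream
```

With these rules, `resolve_upstream("/accounts/statements/2020")` picks the statements service because the longest prefix wins, while the client never learns that statements and accounts are separate services.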

A

WAF stands for web application firewall. It is very specialized software which protects the actual API workloads from a number of application-layer attacks, such as cross-site scripting and SQL injection. Kong provides seamless integration with a number of products through the plugin.

A

I must admit that this is probably one of those plugins which has an overwhelming number of configurations, which also speaks to its flexibility, but I think the Kong team was really instrumental in guiding us through the various configurations and helping us integrate with the IdP that was of interest to us.

A

Speaking of auth, authorization is also important. It's always good to have a security control that checks, for the incoming request, whether the client has the permissions to make a call to a particular endpoint. You can think of different models: you could have authorization based on products, or you could have authorization within a product. You could ask, for example:

A

Does the client have the authorization to make a call to read-only APIs, or to update APIs? Request validation is another key security control, where we should be able to inspect all the incoming API payloads and make sure that they adhere to the contract that we define. For example, we want to ensure that the number of cookies, the number of headers, and the query parameters all adhere to the syntax validation that's been defined in the API spec.
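
The request validation described here can be sketched in a few lines of Python. This is only an illustration under assumed limits: `MAX_HEADERS`, `MAX_COOKIES`, and the `ALLOWED_QUERY_PARAMS` contract are hypothetical stand-ins for what an API spec would define, and the function is not the Kong plugin itself.

```python
from typing import Callable, Dict, List

# Hypothetical limits and parameter contract, standing in for an API spec.
MAX_HEADERS = 50
MAX_COOKIES = 10
ALLOWED_QUERY_PARAMS: Dict[str, Callable[[str], bool]] = {
    "account_id": str.isdigit,  # digits only
    "currency": str.isalpha,    # letters only
}


def validate_request(headers: Dict[str, str],
                     cookies: Dict[str, str],
                     query: Dict[str, str]) -> List[str]:
    """Return a list of contract violations; an empty list means the request passes."""
    errors: List[str] = []
    if len(headers) > MAX_HEADERS:
        errors.append("too many headers")
    if len(cookies) > MAX_COOKIES:
        errors.append("too many cookies")
    for name, value in query.items():
        check = ALLOWED_QUERY_PARAMS.get(name)
        if check is None:
            errors.append(f"unexpected query parameter: {name}")
        elif not check(value):
            errors.append(f"invalid value for query parameter: {name}")
    return errors
```

A gateway would typically reject the request as soon as the returned list is non-empty, so malformed payloads never reach the upstream service.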

A

There is a plug-in to do that request validation. In terms of rate limiting, it's important for the API gateway to throttle the number of client requests. The idea is to ensure that if there's one client creating some peak burst traffic, we don't want that client to have a stability impact on the infrastructure and impact other clients. So Kong provides a Rate Limiting Advanced plug-in, which gives a lot of flexibility around how we configure rate-limiting windows, and it also provides…
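
As a rough illustration of per-client throttling, the following Python sketch implements a simple fixed-window counter. It is an assumption-laden toy, not how the Rate Limiting Advanced plug-in works internally; the class name, the `clock` parameter, and the choice of a fixed window are all made up for the example.

```python
import time
from collections import defaultdict
from typing import Callable, Dict, Tuple


class FixedWindowRateLimiter:
    """Allows at most `limit` requests per client in each fixed time window."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behaviour can be tested deterministically
        # client id -> (window index, requests seen in that window)
        self._counters: Dict[str, Tuple[int, int]] = defaultdict(lambda: (-1, 0))

    def allow(self, client_id: str) -> bool:
        window = int(self.clock() // self.window_seconds)
        seen_window, count = self._counters[client_id]
        if seen_window != window:
            count = 0  # a new window started, so the counter resets
        if count >= self.limit:
            return False  # this client is over its quota; other clients are unaffected
        self._counters[client_id] = (window, count + 1)
        return True
```

Because the counters are keyed by client, one client's burst only exhausts its own quota, which is the isolation property described above.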

A

Okay, so let's switch gears and spend some time on our connectivity architecture. As I said, as enterprises bring their businesses into the cloud, they will have to live with a world where a number of new API workloads are running in the cloud and there are existing workloads in the on-prem data center, and…

A

Okay, now let's deep dive into the Kong deployment architecture. As I said, it's important for Kong to integrate with the native services that the cloud provider offers. In our case, Kong integrates with a number of AWS services, to name a few. We like the idea of immutable infrastructure, so Kong is packaged in a Docker container, and you have the option of using either ECS or EKS in AWS as the orchestration engine.

A

All the secrets that are relevant to Kong, like database passwords or the private keys of your certificates, are stored in Secrets Manager. Kong also integrates with PCA for signing some certificates, and we'll talk about that in a bit more detail on the mTLS slide. Credential rotation is a good practice, so we ensure that all our credentials, such as database passwords and certificates, are rotated frequently, and that is enabled using the rotation Lambda that's attached to a secret. Then there is the Network Load Balancer.

A

We front the Kong nodes with an NLB and use a TCP-based load balancer to spread the requests between different Kong instances that are deployed in different Availability Zones for the purpose of resiliency. We ingest all the Kong logs and the Kong metrics into CloudWatch, which allows us to create CloudWatch metrics and alarms.

A

We use Route 53 for accessing the Kong DNS. We use KMS for encrypting any data that is stored on disk or in any configuration database. The VPC endpoint is a key construct here: Kong needs to connect to the upstream microservices, which are all deployed in different AWS accounts, and the VPC endpoint enables the connectivity between Kong and the upstreams. With RDS, we use the database offering to store all the Kong config.

A

Okay, so mTLS. One of the things we wanted to ensure was mTLS for our system-to-system communication. We think mTLS is a good choice because there's no bearer token that is exchanged between the client and the server during the handshake, and the magic of TLS takes care of it. So, here is what it took to get mTLS to work.

A

In our first implementation, we had to customize the NGINX template to inject some of the NGINX-specific directives to get the mTLS setup working. So essentially, we needed to create that. We have PCA, which allows us to create the certificates, and it's important to understand that there are two distinct mTLS handshakes here. One is between the client and Kong.

A

That's one handshake, and then we also ensure that there is an mTLS handshake between Kong and the upstream service. The idea is to ensure that we have an end-to-end mTLS connection for the API flow. So we create two distinct PCAs: one PCA for signing the certificates that we provide to the clients, and the other PCA to sign the certificates that we give to Kong for when it does the handshake with the upstream. This NGINX template approach obviously has its downsides.
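
The two distinct handshakes can be pictured with Python's standard `ssl` module. This is a sketch of the general mTLS pattern only, not the NGINX template or Kong configuration used in the talk; the function names and file-path parameters are hypothetical, and the paths are optional so the contexts can be built without real certificates.

```python
import ssl
from typing import Optional


def server_context_for_clients(client_ca_file: Optional[str] = None,
                               cert_file: Optional[str] = None,
                               key_file: Optional[str] = None) -> ssl.SSLContext:
    """Gateway side of handshake 1 (client -> gateway): the client must present a cert."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.verify_mode = ssl.CERT_REQUIRED  # reject clients without a valid certificate
    if client_ca_file:
        # Trust only the CA that signs client certificates (the first CA in the talk).
        ctx.load_verify_locations(cafile=client_ca_file)
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def client_context_for_upstream(upstream_ca_file: Optional[str] = None,
                                cert_file: Optional[str] = None,
                                key_file: Optional[str] = None) -> ssl.SSLContext:
    """Gateway side of handshake 2 (gateway -> upstream): the gateway presents its own cert."""
    # PROTOCOL_TLS_CLIENT enables certificate verification and hostname checking by default.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    if upstream_ca_file:
        ctx.load_verify_locations(cafile=upstream_ca_file)
    if cert_file:
        # Certificate issued to the gateway by the second, separate CA.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

Keeping the two contexts separate mirrors the choice of two distinct CAs: a certificate issued to an external client is never trusted for the gateway-to-upstream leg.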

A

It doesn't really map the client context to the concept of a consumer, and you can't leverage some of the consumer-related benefits of using Kong plugins. This is another example of how the Kong team was able to listen to us, get our inputs, and then work with us to build first-class mTLS support for client auth.

A

The third point is that, once you are through with the mTLS handshake, we need to pass the common name and the certificate material in the headers, so that the upstream services understand who the client is and can do a digital integrity check on the certificate itself.
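
A minimal Python sketch of passing the common name and certificate material upstream might look like this. The header names `X-Client-Cert-CN` and `X-Client-Cert` are hypothetical, and the certificate dictionary is assumed to have the shape returned by `ssl.SSLSocket.getpeercert()`; the talk does not say which headers or encoding were actually used.

```python
from typing import Dict, Optional
from urllib.parse import quote


def common_name(peer_cert: dict) -> Optional[str]:
    """Extract the subject CN from a dict shaped like ssl.SSLSocket.getpeercert() output."""
    for rdn in peer_cert.get("subject", ()):
        for key, value in rdn:
            if key == "commonName":
                return value
    return None


def identity_headers(peer_cert: dict, pem: str) -> Dict[str, str]:
    """Build headers that tell the upstream who the client is (header names are hypothetical)."""
    cn = common_name(peer_cert)
    if cn is None:
        raise ValueError("client certificate has no common name")
    return {
        "X-Client-Cert-CN": cn,
        # PEM contains newlines, which are not valid in header values, so URL-encode it.
        "X-Client-Cert": quote(pem, safe=""),
    }
```

The upstream can then decode the certificate header and verify it against its own trust store, rather than relying on the common name alone.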

A

We thought it'd be good to share some of the challenges we faced initially with putting the NLB in front of Kong. Essentially, when you get a client request, the NLB forwards the request to Kong, and Kong sees the NLB's IP as the source IP, so you really lose track of what the real client IP is.

A

So we had to tweak some configuration on the NLB to enable Proxy Protocol support, where it puts the actual client IP in the proxy header. We then use the NGINX real IP module to read the IP from the proxy header and put it into one of the HTTP headers, so that the real IP can be used for auditing, compliance, and troubleshooting purposes.
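
To show what recovering the client IP from a proxy header involves, here is a small Python sketch that parses the human-readable version 1 of the PROXY protocol. Note the assumptions: AWS Network Load Balancers send the binary version 2 format, and in the setup described the parsing is done by the NGINX real IP module, so this code and the `X-Real-IP` header name are purely illustrative.

```python
from typing import Dict, Optional, Tuple


def parse_proxy_v1(line: bytes) -> Optional[Tuple[str, int]]:
    """Return (client_ip, client_port) from a PROXY protocol v1 line, or None if invalid."""
    if not line.startswith(b"PROXY ") or not line.endswith(b"\r\n"):
        return None
    parts = line[:-2].decode("ascii", errors="replace").split(" ")
    # Expected layout: PROXY <TCP4|TCP6> <src ip> <dst ip> <src port> <dst port>
    if len(parts) != 6 or parts[1] not in ("TCP4", "TCP6"):
        return None
    try:
        return parts[2], int(parts[4])
    except ValueError:
        return None


def with_real_ip(headers: Dict[str, str], proxy_line: bytes,
                 header_name: str = "X-Real-IP") -> Dict[str, str]:
    """Copy the headers and add the real client IP taken from the proxy line, if present."""
    result = dict(headers)
    parsed = parse_proxy_v1(proxy_line)
    if parsed is not None:
        result[header_name] = parsed[0]
    return result
```

For a line such as `PROXY TCP4 203.0.113.7 10.0.0.5 56324 443`, the source address `203.0.113.7` is the real client, while the TCP connection itself appears to come from the load balancer.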

A

Okay, so speaking about deployment strategies, it was important for us to make sure that we have a good developer experience for our developers. Essentially, there are two distinct stages where a developer interacts with Kong. One is when you develop and deploy a new microservice: you need to register that microservice with Kong.

A

You register it as a service entity, and once you do that, the second step is to register the OpenAPI spec with Kong, so that Kong can then configure the routes and the various plugins using the information that's in the OpenAPI spec. We think that automation is important to make sure that developers have a great experience onboarding their endpoints onto Kong.
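
The idea of deriving routes from an OpenAPI spec can be sketched as follows. This is an assumption about the general shape of such automation, not the tooling used in the talk: the output dictionaries with `service`, `path`, and `methods` keys are a hypothetical format, not Kong's Admin API schema.

```python
from typing import Dict, List

# HTTP operation keys that the OpenAPI 3 specification allows under a path item.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def routes_from_openapi(spec: Dict, service_name: str) -> List[Dict]:
    """Turn each path in an OpenAPI document into one route description (hypothetical shape)."""
    routes: List[Dict] = []
    for path, item in sorted(spec.get("paths", {}).items()):
        # Skip non-operation keys such as "parameters" or "summary".
        methods = sorted(key.upper() for key in item if key.lower() in HTTP_METHODS)
        if methods:
            routes.append({"service": service_name, "path": path, "methods": methods})
    return routes
```

In an onboarding pipeline, a list like this could be fed to whatever mechanism configures the gateway, so developers only maintain the spec and never hand-write routes.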

A

We take infrastructure as code very seriously in the firm. The idea is to make sure that we have an automated way of provisioning the infrastructure, and we really believe in infrastructure repaving, where, on a regular basis, you destroy all the infrastructure and create it again from scratch. Infrastructure-as-code tools like Ansible and Terraform really help you achieve that.

A

We see that Kong has a proxy server, which acts as the data plane, and an admin server, which acts as the control plane. We really like that distinction between the two and the separation of runtimes, because ideally we want to ensure that the control plane availability window is minimal. So what we essentially do is we have a different…

A

…if you have any issues. Okay, in terms of observability, I think it's important to make sure that we capture all the key metrics from Kong and convert those metrics into insights. That really helps our L3, our SRE, and our production support teams to look into these metrics, and it helps with troubleshooting if there is any abnormal behavior in a system. Kong writes a number of key pieces of information to the logs.

A

Typically, you would see that either the logs have too much information, which could be noise, or they have too little information, which is not useful. I think Kong gives you the flexibility to make sure that you have the optimal amount of information in the access logs for us to be able to track all the requests. We also like the JSON format in the request/response logs. One of the key metrics that we are interested in is the latency, to really find out, across requests…

A

As I said before, we use CloudWatch. We ingest all the JSON-formatted logs into CloudWatch, and we use CloudWatch Logs Insights, which provides a powerful JSON-style query capability to slice and dice the data. We build a number of CloudWatch dashboards which give visibility into how the traffic is performing and allow you to slice and dice the various metrics, like latency and response codes, by client and by service.
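
The kind of slicing described here, latency by client and by service, can be illustrated offline with a few lines of Python over JSON log lines. The field names (`consumer.username`, `service.name`, `latencies.request`) are assumptions about the log layout made for this sketch, and the aggregation stands in for what a CloudWatch Logs Insights query or dashboard would do.

```python
import json
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def latency_by_client_and_service(lines: Iterable[str]) -> Dict[Tuple[str, str], float]:
    """Average request latency per (client, service) pair from JSON-formatted log lines."""
    samples: Dict[Tuple[str, str], List[float]] = defaultdict(list)
    for line in lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not valid JSON
        if not isinstance(entry, dict):
            continue
        # Assumed field names; missing or null values fall back to "unknown".
        client = (entry.get("consumer") or {}).get("username", "unknown")
        service = (entry.get("service") or {}).get("name", "unknown")
        latency = (entry.get("latencies") or {}).get("request")
        if isinstance(latency, (int, float)):
            samples[(client, service)].append(latency)
    return {key: mean(values) for key, values in samples.items()}
```

Structured logs make this grouping trivial, which is one reason a JSON log format is convenient for dashboards and alarms.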

A

So, just to summarize: an API gateway plays a very critical role in a microservice architecture, acting as a mediator between the client and the API workloads. The nice thing that our security and compliance teams like is that all the security controls are centralized and implemented in the central API gateway layer, and it really takes on some of these cross-cutting concerns.

A

The responsibilities get shifted from the application development teams into the API layer. Kong's plugin architecture is extremely extensible, and we had a couple of use cases where we had to customize a plugin to meet the high bar that we set for our own internal security standards.