From YouTube: Kubernetes WG IoT Edge 20180914

Description

September 14, 2018 WG meeting: presentation on KubeEdge by Cindy Xing, followed by Q&A and discussion.

B

They can enable a lot of things. As I mentioned, for the first scenario, the car and the driver can be recognized automatically, with actions taken. Then for the second, for the parking lot or the gas station, the cars and their locations, all the data can be collected and analyzed, so that guidance, such as a simple guidance board, can be shared with the audience, which enables a lot of efficiency. Then we drill down into all the scenarios we found.

B

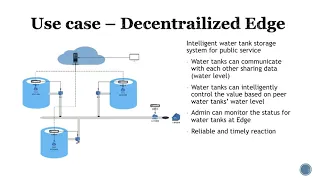

So here is a real customer scenario. We worked with a customer in China on a water tank system in a city. As you can see, there are three water tanks, A, B, and C, geo-located at different locations in the city, and for each water tank there is an edge node, with the sensors and valves installed.

B

The edge nodes are aware of each other, so from a network perspective they can communicate with each other. For example, water tank A can broadcast its water level to the other water tanks. As a whole, the system can intelligently decide how much the valves need to be opened or closed, so that the whole system is efficient and prevents things like a water hammer.
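As a rough illustration of the peer-to-peer idea described here, the sketch below has each tank's edge node remember the last water level it heard from its peers and nudge its own valve toward a target. Everything in it is an assumption made for illustration: the `TankNode` class, the proportional rule, and the `MAX_STEP` cap on valve movement (abrupt valve changes are a common cause of water hammer) are not taken from the customer system discussed in the talk.

```python
from dataclasses import dataclass, field

# Hypothetical cap on how far a valve may move per control tick
# (fraction of full travel). Limiting abrupt changes is one common
# way to reduce the risk of water hammer.
MAX_STEP = 0.1


@dataclass
class TankNode:
    name: str
    level: float                 # current water level (assumed unit: metres)
    valve: float = 0.5           # outlet valve opening, 0.0 closed .. 1.0 open
    peers: dict = field(default_factory=dict)  # last level heard per peer

    def receive(self, sender: str, level: float) -> None:
        """Record a level broadcast from another tank's edge node."""
        self.peers[sender] = level

    def step(self, gain: float = 0.2) -> float:
        """Move the valve toward a target derived from the fleet-wide mean level."""
        levels = list(self.peers.values()) + [self.level]
        mean = sum(levels) / len(levels)
        # Tanks above the mean open further, tanks below it close a little.
        target = min(1.0, max(0.0, 0.5 + gain * (self.level - mean)))
        # Rate-limit the change so the valve never jumps abruptly.
        delta = max(-MAX_STEP, min(MAX_STEP, target - self.valve))
        self.valve = round(self.valve + delta, 6)
        return self.valve


def broadcast(nodes) -> None:
    """Deliver every node's level to every other node (edge to edge, no cloud)."""
    for src in nodes:
        for dst in nodes:
            if dst is not src:
                dst.receive(src.name, src.level)


tanks = [TankNode("A", 4.0), TankNode("B", 2.0), TankNode("C", 3.0)]
broadcast(tanks)
openings = {t.name: t.step() for t in tanks}
```

Note that no cloud component appears in the loop: each node decides from what it heard locally, which is the property the speaker emphasizes.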

B

Some details: they need network communication among the water tanks. The whole system is decentralized in the sense that, in case there is no network connection between the edge and the cloud, the system can still work autonomously and effectively, and react locally and quickly, for example in the water hammer scenario I mentioned.

B

Actions can be taken really quickly and reliably. On the other hand, from a cloud perspective, the admin for the whole water tank system can monitor the health of the whole system. So this is another scenario. Next, I'd like to share how Huawei is viewing the edge. As you can see, the cloud is in the data center, then we have the edge nodes, and then the things, a lot of IoT devices. We see the edge as an extension of the cloud.

B

The edge nodes are located at the edge, but they are registered and managed from the cloud. Applications or serverless functions are orchestrated and deployed from the cloud to the edge. So, as we see it, it's a cloud extension. Between the edge and the cloud there needs to be bi-directional network communication. Sometimes the edge has its own private network, and we need to make sure the bi-directional communication is still enabled.

B

B

B

C

B

C

B

That's an excellent question. From an OS perspective, right now we are targeting Linux. I know Amazon AWS is supporting FreeRTOS, but it's still Linux based, and Microsoft is exploring Windows. I think for this system, you know, Kubernetes supports any architecture, so it's just a matter of execution work.

B

C

B

A

B

Even when the resources, the edge nodes, are located remotely. And then we have a special component; we call it KubeBus, or the KubeEdge bus. This one enables bi-directional, multiplexed TCP communication between the edge node and the cloud, and in production we even support edge-to-edge communication as well.
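The talk does not describe how the bus puts traffic on the wire, so the following is only a generic sketch of what "multiplexed" communication over a single TCP connection can look like: every message is wrapped in a frame carrying a stream id, so many logical conversations in either direction share one connection. The header layout (`HEADER`), the function names, and the payloads are all hypothetical and are not KubeEdge's actual format.

```python
import struct

# Hypothetical frame header: 4-byte stream id + 2-byte payload length,
# network byte order. This layout is an assumption for illustration only.
HEADER = struct.Struct("!IH")


def encode_frame(stream_id: int, payload: bytes) -> bytes:
    """Wrap one message so it can share a connection with other streams."""
    if len(payload) > 0xFFFF:
        raise ValueError("payload too large for one frame")
    return HEADER.pack(stream_id, len(payload)) + payload


def decode_frames(buffer: bytes):
    """Split received bytes into (stream_id, payload) frames.

    Returns the complete frames plus any trailing bytes that belong to a
    frame that has not fully arrived yet.
    """
    frames = []
    offset = 0
    while len(buffer) - offset >= HEADER.size:
        stream_id, length = HEADER.unpack_from(buffer, offset)
        end = offset + HEADER.size + length
        if end > len(buffer):
            break  # incomplete frame: wait for more bytes
        frames.append((stream_id, buffer[offset + HEADER.size:end]))
        offset = end
    return frames, buffer[offset:]


# Two logical streams interleaved on one byte stream.
wire = encode_frame(1, b"pod-status") + encode_frame(2, b"deploy-app")
frames, leftover = decode_frames(wire)
```

Because either side can send frames once the connection exists, a scheme like this works even if only one side (for example an edge node inside a private network) is able to open the connection; that reading of the design is an inference, not something stated in the talk.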

D

So this is a fascinating slide. Hopefully we can talk through some of the details on it. How does that edge controller compare to the Virtual Kubelet idea? How does it represent the edge in the master control plane? Is it just a custom resource, or is it actually a proper node?

B

It's a proper node. It's still the same. From a concept perspective, it's similar to the Virtual Kubelet, though each edge node is represented as a single Kubernetes node. We don't hide anything there, so each individual edge node will be a single node here in the Kubernetes API server.

D

B

Another point is that we want this app engine to be as lightweight as possible, because the kubelet has a lot of functionality that we may not need in the edge scenario, so we modified it. On the other hand, similar to the kubelet, it can carry out the pod actions and report all the node and pod status back through the sync service to the Kubernetes cluster API.

B

C

So I'm also interested in how the container images get pushed down. Are these held in an image repository that's centrally managed and then pushed to the locations? What that implies, which is interesting, is that you're non-homogeneous, meaning the images that would run at central are likely x86, but the ones at the edge could be ARM, and keeping those in one image repository is something that I'm not sure has commonly been done. Have you guys got a prototype like that working?
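To make the mixed-architecture concern in this question concrete, here is a minimal sketch of choosing an image reference from a node's architecture label. The registry name, tags, and the `image_for_node` helper are made up for illustration; the `kubernetes.io/arch` and older `beta.kubernetes.io/arch` node labels are standard Kubernetes labels. It is not a description of what KubeEdge does.

```python
# Hypothetical per-architecture image references; registry.example.com
# and the tags are placeholders, not real artifacts.
IMAGES_BY_ARCH = {
    "amd64": "registry.example.com/sensor-app:1.0-amd64",
    "arm64": "registry.example.com/sensor-app:1.0-arm64",
    "arm": "registry.example.com/sensor-app:1.0-arm",
}


def image_for_node(node_labels: dict, images: dict = IMAGES_BY_ARCH) -> str:
    """Pick the image built for the node's CPU architecture."""
    arch = node_labels.get("kubernetes.io/arch") or node_labels.get(
        "beta.kubernetes.io/arch"
    )
    if arch is None:
        raise LookupError("node has no architecture label")
    if arch not in images:
        raise LookupError(f"no image published for architecture {arch!r}")
    return images[arch]


cloud_image = image_for_node({"kubernetes.io/arch": "amd64"})
edge_image = image_for_node({"beta.kubernetes.io/arch": "arm64"})
```

A registry-side alternative is a multi-architecture manifest list, where one tag resolves to the right image for the pulling machine, so the lookup above would not be needed.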

B

So for the rolling update, we haven't tried it yet. But from a monitoring perspective, using kubectl, people can just do what they are currently able to do, like query the node status or the pod status. The philosophy or principle we are trying to maintain is that, even in the edge scenario, it works like a normal cluster. Things still continue working.

B

B

D

D

B

D

How the container content is pulled, things like Steven mentioned. I guess the biggest overall question is how and where you have needed to deviate from what Kubernetes does as default concepts in order to achieve the functional goals you needed. And are there any of those places that could essentially be turned into features in Kubernetes itself, rather than custom adjacent code?

B

I think that's an excellent point. Personally, from a pod spec, ConfigMap, or Secret perspective, while implementing the edge controller I don't see Kubernetes lacking any capabilities. So the only challenge is around the service, the endpoint concept, and stuff like that.

B

You know, in the edge scenario, as I mentioned, the edge node can be in a private network. How we can ensure bi-directional network communication is the biggest challenge, and that's why we enabled the bus. Then, on top of that, how can we enable the service and endpoints, and allow customers to just deploy an application and then use a service to enable the ...

A

B

B

D

B

B

C

B

D

Got it, thanks. And feel free again to defer this until we have the person who can answer, but from your understanding, is the idea of the bus to provide something at the logical message layer, in terms of discrete controller messages, or is it to provide something that is more of a network-layer pass-through, for being able to reach arbitrary cluster IPs or that kind of thing?

B

D

D

D

B

C

B

C

B

So, for example, from a Kubernetes orchestration perspective, the store will hold the pod spec that a customer submits through kubectl, and also the node information, the metadata. On the other hand, what the app engine syncs back through the controller, the pod status and node status, is also stored, so that it can be reported back to the Kubernetes API server.
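A minimal sketch of the kind of store described here, under the assumption (made elsewhere in this discussion) that an edge node can lose its cloud connection and reconnect later: specs received from the cloud are cached locally, status updates are held until they can be delivered, and only the latest status per pod is kept. The class names, the `flush` method, and the use of `ConnectionError` to signal an unreachable cloud are illustrative choices, not KubeEdge's implementation.

```python
class EdgeMetadataStore:
    """Hypothetical local store on an edge node."""

    def __init__(self):
        self.desired = {}   # pod name -> last spec received from the cloud
        self.pending = {}   # pod name -> latest status not yet reported

    def apply_from_cloud(self, pod: str, spec: dict) -> None:
        """Cache the desired spec so the node can keep running it offline."""
        self.desired[pod] = spec

    def record_status(self, pod: str, status: str) -> None:
        """Remember the newest status; older unsent values are overwritten."""
        self.pending[pod] = status

    def flush(self, send) -> list:
        """Try to report pending statuses; keep whatever could not be sent."""
        sent = []
        for pod, status in list(self.pending.items()):
            try:
                send(pod, status)
            except ConnectionError:
                break  # still offline: retry on the next flush
            sent.append(pod)
            del self.pending[pod]
        return sent


class FakeCloudLink:
    """Stand-in for the edge-to-cloud channel, used only for this example."""

    def __init__(self):
        self.online = False
        self.reported = []

    def send(self, pod: str, status: str) -> None:
        if not self.online:
            raise ConnectionError("cloud unreachable")
        self.reported.append((pod, status))


store = EdgeMetadataStore()
link = FakeCloudLink()
store.apply_from_cloud("sensor-reader", {"image": "example/sensor:1"})
store.record_status("sensor-reader", "Pending")
store.record_status("sensor-reader", "Running")
sent_while_offline = store.flush(link.send)    # nothing is delivered
link.online = True
sent_after_reconnect = store.flush(link.send)  # latest status is delivered
```

Keeping only the latest status per pod trades history for a bounded queue, which seems a reasonable fit for metadata that simply mirrors current state.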

D

You have this app engine, which is sort of a derivative of the kubelet, right? Yes. And now, instead of having that communicate over a layer 3 network directly to the API server in the cloud, you're relaying some similar information in both directions through this alternate path, and I'm trying to get a better understanding of why.

B

Okay, yeah, I'd like to hear all your thoughts as well. The main point is to enable loose coupling, because we assume the edge nodes can go offline and reconnect. On the other hand, all the data is metadata, right, so having some duplication, some redundancy, I really think is fine. The other thing I want to say is that, for the Kubernetes API server, you don't have, in the API server, in the etcd tree ...

B

D

B

C

D

B

So I think the other thing is, okay, to rephrase or repeat what we talked about earlier. I think Preston asked me about what features Kubernetes is lacking after we enable this architecture, so we talked about the Kubernetes service. And then Steve asked about the image repository for different architectures.

B

Maybe others, you guys, can help me understand what exactly his question was about, or maybe I didn't follow it. Because from an edge perspective, when the edge node configuration is clear, then it knows what to pull. Okay, and then I think the third one is about HA. DJ, do you know if there are other comments we can write down?

D

A

D

B

F

F

B

B

F

B

B

D

B

E

D

Usages, and that's hypothetical. I'm not saying that would become an issue. I just think that's a potential area where you would want to divide the effort. But honestly, it could just be an independent etcd instance as well. It just sort of has to be, what's mixing the tasks on one etcd. Okay.