
From YouTube: Kubernetes SIG Storage 20190620

Description

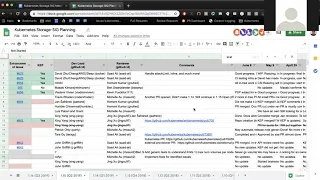

Kubernetes Storage Special-Interest-Group (SIG) Meeting - 20 June 2019

Meeting Notes/Agenda: https://docs.google.com/document/d/1-8KEG8AjAgKznS9NFm3qWqkGyCHmvU6HVl0sk5hwoAE/edit#heading=h.8ycgteyv60fk

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Saad Ali (Google)

Chat Log:

None

A

Hello everyone, this is the meeting of the Kubernetes Storage Special Interest Group. Today is June 20, 2019. As a reminder, this meeting is recorded and published on YouTube. Today we're gonna do planning for the next quarter, Q3, which will be the 1.16 version of Kubernetes. I sent out a link to the spreadsheet that we use for planning, and we added a new sheet to this spreadsheet to start tracking the items that we think we should be working on for the next quarter. To populate this, I copied over the items from 1.15 that we didn't finish, and so what we can do is review those and see if they're still applicable and who wants to work on them. And, of course, feel free to add to the bottom of this anything that you think we should be working on. So the important things here will be to figure out: one, are we going to work on this; two, what the target status should be; three, what the priority should be and who should be working on it. And so, with that...

A

B

Yes, so CSI migration: we managed to get most of the pieces done in 1.15 for the alpha, and for 1.16 the plan is to basically move to beta, improve test coverage, tie up some loose ends around unit tests and stuff like that. So mainly improve test coverage and get the volume attach limits support in. I know that's a separate item completely. Resizing, that's still outstanding, and...

A

B

A

C

D

A

E

A

E

A

E

F

E

A

This is probably one of the most complicated features that we have, because it's essentially taking the existing mount, unmount, attach, detach and kind of touching all of those and replacing them with custom things for block. And getting that code path exercised, I think, is what folks are looking for, kind of stress tested, to say: oh yeah, we're confident that this will work. And so...

A

D

E

D

I think it's fair to say that, you know, we definitely want to see some production usage before we go to GA. I just don't know how we promote that. Or maybe that's what we need to do: actually promote it and try and socialize it and talk about it more, and see if we can get people to actually use it. Yeah.

G

E

I know, John, and often at Red Hat we're okay taking stuff that's beta and, you know, giving it a stronger support statement. So maybe us, you know, keeping it beta and then moving it to GA with OpenShift gives us some of the production time that we need to, you know, consider it GA upstream. Yeah, I mean, I... It's...

E

D

H

E

E

And we'll just do this soak time, you know, in OpenShift basically, and look for customers, because I already know John and, you know, some other people are looking for this. We'll be looking for and using this in our releases. At this point, I think it's more just a soak time thing than an actual feature movement, right? We need some soak time, and then we can move the feature. Yeah.

I

K

K

L

E

L

Yeah, and what makes it so nasty is that there are interactions between the iSCSI file system and iSCSI block, because they end up sharing the underlying session. So if you have a mixture of iSCSI file system and iSCSI block, it gets really thorny. So basically all that iSCSI code needs to be rewritten to have some common core that's shared between block and file systems, and right now it's basically two separate sets of code that share very little.

L

E

L

So Matt Wong attempted to fix this, and I tested his fix. In my testing, the fix actually made things worse, so I gave him feedback that said, no, this is not the right fix. But then I guess he got busy working on other things and I got busy working on other things, and we didn't pursue this. But so, yeah, I'm totally willing to work with whoever wants to fix this, and I will test it, and I will give you the test cases, and I will run the test cases, and I will prove that the eventual fix is really solid, because I wrote a bunch of test cases to illustrate how bad the problem is to people out here. And, okay, the larger issue of raw block in general: I mean, I think iSCSI is probably one of the common protocols for raw block, but I'm sure that there are others that also matter. To Michelle's point, we really want to see people using it in production and saying, yes, I trust it.

L

E

M

L

L

E

A

All right, thanks guys. So it sounds like I'm putting your name down, Brad, as the dev lead on this, and hopefully you can find a resource for it, then we'll help architect it and review it. We're gonna keep it in beta for this quarter. There are known issues that Ben's already tracking, and hopefully we can get some more stress testing and usage to find more issues and get this more stabilized.

A

Alright. Moving on to the next item, we have scalability testing. So this is what we were talking about earlier. SIG Scalability really wants us to start adding tests for storage to the scalability test suite. Currently we don't really have any volume-related tests at all, so that path doesn't get exercised.

A

Okay, what we'll do is leave that unassigned, and we'll see if anybody commits to it at a future point. If you're new to the SIG and you're kind of on the sidelines about where you should jump in, this might be a good opportunity to contribute. So we really, really need scalability tests, and this would help us a lot. Moving on, next item is the CSI library: moving the mount library to the external common repo. Travis?

A

A

So that sounds good to me, and we'll keep that as a P1 for this quarter. Per-node volume attach limits: implement the beta redesign. So, as I understand, there was an attach limit for the in-tree code. We didn't have anything for CSI. The in-tree stuff we weren't very confident in; there were some issues raised about the way it was implemented, and so there was a proposal to redesign it, and redesign it in such a way that it would also include CSI. I believe that design happened last quarter. Are we ready to implement this?

A

So we're gonna call this beta for this quarter, right? Yeah, okay, and so that looks good. Priority one seems right. Okay, next item is volume snapshot namespace transfer. So last quarter was design, I believe. Did the KEP get approved or not yet? Actually, we haven't approved the KEP yet, correct?

D

A

D

K

D

A

D

A

A

H

A

N

F

A

Next item is generic data source populators. The functionality here is: today we have two types of data sources. One is a snapshot; the second, last quarter, we added cloning. And potentially we want to allow any sort of generic data source, meaning the thing that fills a newly created volume doesn't necessarily have to be the same thing that provisions it. This would enable things like having a populator that populates a new volume with a Docker image, for example.

A

So you could have a Docker image populator. And the work required here would be somehow to do this in a backwards-compatible way, such that a populator would be able to read and write to a volume, but that volume would not be generally available to other workloads, regular workloads, until the populator was done populating it, writing data to it, and doing that in a backwards-compatible, generic way. And there's a lot of other use cases. I think folks would really like this feature, but it is fairly complicated to implement, so I...
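
Editor's sketch: the gating rule described here (a populator can read and write the volume, regular workloads cannot until populating is done) can be illustrated with a small in-memory model. This is only an illustration under assumptions; `Volume`, `may_use`, and `finish_populating` are hypothetical names and do not correspond to any Kubernetes API or to a design agreed in the meeting.

```python
from dataclasses import dataclass


@dataclass
class Volume:
    """Hypothetical stand-in for a newly provisioned volume."""
    name: str
    populator: str           # assumed: identity of the component allowed to fill it
    populated: bool = False  # flips to True once the populator is done


def may_use(volume: Volume, requester: str) -> bool:
    """Return True if `requester` may read/write the volume right now."""
    if volume.populated:
        # After populating finishes, any regular workload may use the volume.
        return True
    # Before that, only the designated populator gets access.
    return requester == volume.populator


def finish_populating(volume: Volume, requester: str) -> None:
    """Mark the volume as ready; only the populator itself may do this."""
    if requester != volume.populator:
        raise PermissionError(f"{requester} is not the populator of {volume.name}")
    volume.populated = True


vol = Volume(name="data", populator="image-populator")
early_populator_access = may_use(vol, "image-populator")
early_workload_access = may_use(vol, "web-app")
finish_populating(vol, "image-populator")
late_workload_access = may_use(vol, "web-app")
```

The point of the sketch is the ordering: the workload is refused until the populator signals completion, which is the part that has to be expressed in a backwards-compatible way in the real API.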

A

D

I am. So based on the feedback from that design, there's basically two pieces to this now. The first piece is the Kubernetes API pieces, including what you just described in terms of what to do with the volume, in terms of how to mark whether it's finalized or not. The other piece is the data source field. So we need to extend that again.

D

I think what we'll need to do is just remove the filtering altogether, because if we're gonna allow external CRDs, then there's no other way that I know to do that. And then the other piece that we had kind of touched on and never really hashed out: we talked about this model of a library or an SDK for this. And I'm not quite sure that I have enough insight into what you're thinking there. So I think at some point...

D

A

A

Next few items are for the generic drivers that the SIG still owns for CSI: moving the NFS driver to a separate repo, moving the iSCSI driver to a separate repo, and moving the Fibre Channel driver to a separate repo. For some of these, the work has already begun, so they're kind of in different stages.

D

A

H

N

D

A

A

A

M

N

D

A

N

O

A

Okay, unless we have somebody volunteering to help move that, we'll leave that on the back burner for now. Next items are related, so we kind of had three issues here: non-recursive volume ownership, revisit UID/GID handling, and file permission handling for Windows. Are these worth combining into a single item or effort? Yes.

C

I would say no, actually, because the design that I linked is online, but it has only a solution for the recursive volume ownership change, and we do not yet have a design or anything for, like, revisiting UID/GID handling for Windows, or in general UID/GID. We don't have anything concrete for that. So I was...

E

My view on this, why I said that we should probably move these together: it's like we fiddle around, you know, on each little piece of this, but we haven't looked at it holistically. And I kind of feel like the design for this is something where we should just dive in and break it apart, right? Like, we have three different pieces here and we've looked at them separately, but we haven't looked at it...

E

B

G

B

A

L

L

But I mean, fundamentally, this is just a hole in the container design, right? They forgot that this kind of thing matters when they implemented all the namespacing stuff in the kernel, and the real way to fix this would be to go fix that design and add another abstraction layer in the kernel so that you can do these kinds of things.

E

L

L

Much like the kernel has a UID, or what do they call it? Not the PID, the UID/GID namespace mapping. There needs to be another mapping at the VFS layer, so you can say: okay, in this namespace, the VFS will also perform this other mapping that is different than the one that is performed for the UIDs and GIDs in terms of permissions. Like, the serialization of UIDs and GIDs onto disk needs to be something that can be controlled at the namespace level, and I...
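
Editor's sketch: the idea of two independent ID mappings can be illustrated with range-based translation. The three-field entry layout (inside start, outside start, length) is the one Linux uses in `/proc/<pid>/uid_map`; everything else here, including the separate "on-disk" map and the specific numbers, is a hypothetical illustration of the proposal and not an existing kernel feature described in the meeting.

```python
from typing import List, Optional, Tuple

# Each entry is (inside_start, outside_start, length), the same three-field
# layout that /proc/<pid>/uid_map uses on Linux.
IdMap = List[Tuple[int, int, int]]


def map_id(inside_id: int, id_map: IdMap) -> Optional[int]:
    """Translate an ID seen inside a namespace to the ID outside it.

    Returns None when no range in the map covers the ID.
    """
    for inside_start, outside_start, length in id_map:
        if inside_start <= inside_id < inside_start + length:
            return outside_start + (inside_id - inside_start)
    return None


# Mapping used for permission checks (assumed example values).
credential_map: IdMap = [(0, 100000, 65536)]

# Hypothetical second mapping, applied only when IDs are written to disk.
# This is the extra VFS-layer mapping the speaker is asking for.
on_disk_map: IdMap = [(0, 5000, 65536)]

uid_in_container = 1000
uid_for_permissions = map_id(uid_in_container, credential_map)
uid_stored_on_disk = map_id(uid_in_container, on_disk_map)
```

With two maps, the same in-container UID can be checked against one host ID while a different ID is serialized onto the volume, which is what would make recursive ownership rewrites unnecessary.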

B

C

There's an SELinux problem here as well, actually. Like, even if the design fixes it for just the volume permission, on SELinux they are still doing a recursive chcon. So that's a fix that has to come from the container runtime, actually, because we cannot fix it.

L

L

E

D

L

A

So what we could do is have possibly multiple streams of work. One would be kind of figuring out this new design end to end, everything from potential kernel changes all the way up to Kubernetes API changes. And then the rest would be kind of more immediate, hacky solutions to work around the pains that we are seeing today. Non-recursive volume ownership stuff is pretty painful, so if there's some temporary solution that would ease that pain, that would be good. File permission handling for Windows, I think, is kind of in a similar boat.
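
Editor's sketch: to show why recursive ownership changes are painful and what a short-term workaround could look like, the snippet below compares a full tree walk with a check of only the volume root. The "skip the walk when the root already matches" heuristic is an assumption made for illustration; it was not stated in the meeting as the chosen design, and the function names are hypothetical. The code only plans changes and never calls `chown`.

```python
import os
from typing import List


def paths_needing_change(root: str, gid: int) -> List[str]:
    """Walk the whole tree and list every path whose group is not `gid`.

    The cost grows with the number of files on the volume, which is what
    makes the recursive approach slow for large volumes.
    """
    pending = []
    if os.lstat(root).st_gid != gid:
        pending.append(root)
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            if os.lstat(path).st_gid != gid:
                pending.append(path)
    return pending


def plan_ownership_change(root: str, gid: int) -> List[str]:
    """Assumed heuristic: if the root already has the wanted group, skip the walk."""
    if os.lstat(root).st_gid == gid:
        return []
    return paths_needing_change(root, gid)
```

The trade-off is that a root-only check can miss files whose ownership drifted after the first mount, so it eases the pain without replacing an end-to-end design.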

A

If we can get an answer for it, great, but all of this stuff needs to be done in coordination with this new design, so that if the new design says that we should wait, then we can hold off on it. If there's something we can do short-term, we go forward with it. I don't know if we need to design "revisit UID/GID" separate from this new design; maybe we can drop that. Thoughts?

C

E

C

G

E

G

A

L

E

Is that file permission stuff in good condition, or do we need to... I guess what I'm asking is: line 18, is that to just make all the chowning work, or is that to, like, figure out how to do chowning and mapping and everything else in a more intelligent way? So if it's just getting it to work, then I think we should keep that separate.

L

At the very least, you want to make sure that whatever we're doing on Unix will also work on Windows, right, but maybe with an eye towards: is there something we can do on Windows that's less crappy? And the answer might be no, but Windows does have a lot of interesting tricks, too.

A

B

A

A

C

H

A

C

A

N

C

A

A

Looks like there's already an enhancement issue for it, so that should be fine. Okay. Next item is CSI volume health. There was a design KEP that I think Nick put out. There were a lot of comments on that. I didn't look at the design, but it looks like there's a lot of activity there. Does anybody know what the status of that is? Is that in a condition to be implemented as alpha this quarter, or is the design still very much in flux?

F

F

A

F

F

Just, if the volume... so what we are going to do is, if the volume cannot be used, if, you know, it has some problem, then we will taint it so that the controller will not try to, like, attach it or do anything with it. So basically that's what that is. Okay.

F

A

F

A

Q

F

F

Q

F

F

Q

A

A

A

R

A

This is, I think, we highlighted some issues with the way that read-only is handled, and I think Michelle pointed out that this becomes important once we have cloning and populators, which we now have as of 1.15. So we should really do this. Anyone interested in working on this? There is an issue that basically documents what needs to be done.

D

A

A

B

A

F

A

A

We have no insight into that, and also it doesn't really make sense for a lot of this logic to be duplicated in every single driver separately. So I think what I would like to see is a proposal where we shift the common bulk logic into a common snapshot controller that is deployed as part of Kubernetes or a Kubernetes deployment, and then a smaller sidecar container that kind of acts like our external-attacher or external-provisioner do today, where they're smaller and they don't actually handle the bulk of the logic.

Q

So in that same line, it's aligned better with what you're saying. But again, that's a different conversation, but it is something that I've been starting, because for the CSI drivers, their contract was the CSI spec, and then we have kind of added more to that. So anyway, just bringing that up, that that conversation is starting from the CSI community's implementation team. Okay.

F

A

A

Having the CO not have any insight into what's going on with volume snapshots is a pretty bad place to be. Today, the way that the volume snapshot controller is written, it's entirely a sidecar container in the CSI driver, and so when a snapshot happens, how long it takes, whether there's errors in it, that kind of thing is completely invisible to us. And in order to solve that, we can kind of follow the model of what we've been doing with other controllers, where you have a common central controller, the PV/PVC controller, the attach/detach controller, that are responsible for the common logic, and then you have a small, minimal sidecar container that's responsible for basically last-mile communication. And it gives Kubernetes insight into what's going on, basically. But...
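
Editor's sketch: the split described here, a common central controller that owns the shared logic plus a minimal sidecar that only does last-mile communication with the driver, might look roughly like the model below. All class and function names are hypothetical, and `fake_driver` is a stand-in for a real CSI driver call; this is not the actual snapshot controller code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class SnapshotSidecar:
    """Minimal per-driver piece: forwards one request to the driver, nothing else."""
    create_in_driver: Callable[[str], str]  # assumed: volume id -> snapshot handle

    def create(self, volume_id: str) -> str:
        return self.create_in_driver(volume_id)


@dataclass
class CommonSnapshotController:
    """Central piece: holds the shared logic and records what happened,
    so the orchestrator can see status and errors for every driver."""
    sidecar: SnapshotSidecar
    status: Dict[str, str] = field(default_factory=dict)
    handles: Dict[str, str] = field(default_factory=dict)
    errors: Dict[str, str] = field(default_factory=dict)

    def reconcile(self, snapshot_name: str, volume_id: str) -> None:
        try:
            handle = self.sidecar.create(volume_id)
        except Exception as exc:
            # Failures are recorded centrally instead of staying inside the driver.
            self.status[snapshot_name] = "Failed"
            self.errors[snapshot_name] = str(exc)
            return
        self.status[snapshot_name] = "Ready"
        self.handles[snapshot_name] = handle


def fake_driver(volume_id: str) -> str:
    """Stand-in for a storage backend; fails for one specific volume."""
    if volume_id == "bad-vol":
        raise RuntimeError("backend unavailable")
    return "snap-of-" + volume_id


controller = CommonSnapshotController(SnapshotSidecar(fake_driver))
controller.reconcile("s1", "vol-1")
controller.reconcile("s2", "bad-vol")
```

Because status and errors live in the central controller, they are visible in one place regardless of which driver handled the request, which is the visibility gap the speaker describes.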

F

A

K

A

We're gonna have to talk to SIG Architecture to say: hey, we have a controller we need to implement. How do we deploy it? How do we ensure that it's deployed in all the right places? We started having this discussion with the CSI driver CRDs and things like that. How do you deploy them?

A

A

F

A

We still need to do this. I think the SIG has a need for a controller that we need to deploy. The logic was all deployed out of tree, so we need to go to SIG Architecture and say: hey, you know, this is a controller that we want. It's important for us; here are the reasons why it's important. How do we make sure that it is kind of deployed, and, like, what is the story for this? How do we do it?

L

I'm really confused, because I thought that the move towards CSI meant that all of that in-tree stuff that you alluded to would eventually go away. Like, now the act of turning a PVC into a PV happens entirely in the sidecar, and there is no centralized controller that's involved with that.

A

L

A

It's related to that, but not quite that extreme. I think having sidecars completely owned and managed and deployed by Kubernetes is not going to happen, realistically, but I think we can take baby steps to kind of make sure that we're not dumping all the logic on the storage provider. Right, in the case of snapshots, the entire controller sits in a sidecar, and every single driver that you have that implements snapshots basically has duplicate logic for the entire controller deployed as part of their sidecar, but...

A

L

G

A

Yeah, something very similar to PV/PVC would be good. And I agree that the biggest challenge here right now is this kind of SIG Architecture guidance to not have any more in-tree components or CRDs. So we'll need to think through that. We need to ask them: you know, is it only that the API is external? Can we have a new controller that is core but relies on CRDs? We need to explore what that means. Okay.

Q

A

We are also over time for this meeting. We didn't finish this time, so what we'll do is go into the next meeting in two weeks to review and finalize items that we're working on, and then finish off the items below line 27. I'm gonna go ahead and mark those as bold to indicate that we didn't get to them and that they still need to be reviewed.

A

If you have anything between now and then, feel free to add to this document. And also, if you have an item assigned to you and it needs an enhancement issue, please make sure you create that enhancement issue before the feature freeze deadline. That's how the rest of the Kubernetes release team keeps track of the items that are going into the release. If we don't do that, then it could block the PRs and KEPs and things like that, so please make sure to do that. That's all that I have for today. Is there anything else?