
From YouTube: Summit 2022: Kubevirt Clusters on Kubevirt VMs


A

B

B

B

Okay. To begin with, I want to talk about what the Cluster API is. The briefest definition I could come up with is that the Cluster API is a set of controllers and APIs that allow us to manage the creation and lifecycle of Kubernetes clusters in a declarative way. So the Cluster API is this generic Kubernetes ecosystem project that defines a set of APIs that can be used to create clusters.

B

This project is designed to be extendable, and that's where we get to the Cluster API Provider KubeVirt. We're essentially just extending the Cluster API project to allow hosting Kubernetes clusters inside of virtual machines, and I'm going to make more sense of that in a moment. I've got diagrams and everything, so it should be pretty clear. Before I get into that, though...

B

So you might remember that about 10 years ago this analogy of pets versus cattle started popping up. It's an old analogy, and it's been overdone, but the point here is that there's an old way of managing servers where you manually provision them and put lots of resources, like people resources, into keeping these things alive.

B

That shifted to a cattle approach, where provisioning servers is automated and recovering a server is as simple as killing the machine, which automatically brings up a new one to replace it. So essentially we made servers disposable, which is a much more efficient way to manage infrastructure at scale. But I saw something happen when we introduced Kubernetes.

B

I think in a lot of ways Kubernetes has become that old pet server. We make a cluster, and we spend all these resources keeping it online and healthy, going to great lengths to perform complex rolling updates successfully, and putting all hands on deck when the cluster goes down. I don't like that at all.

B

B

If you want to make a cluster, you start with a set of manifests, and these manifests define the cluster. You post these manifests to a management cluster, which has the Cluster API controllers running, and the Cluster API controllers then manage provisioning your new cluster on infrastructure somewhere.

B

You can see in this diagram that the Cluster API is capable of launching new clusters across various infrastructure providers, or hyperscalers. Here I'm just showing a couple of examples: we have clusters across AWS and GCP. With the introduction of the Cluster API Provider KubeVirt, KubeVirt is just another one of these providers. We're treating KubeVirt as if it's a hyperscaler, similar to AWS, GCP, or Azure.

B

B

B

Okay, so I'm hoping those diagrams gave you a visual for how the Cluster API works from a high level. I want to make this a little more tangible now by looking into those cluster manifests themselves in a little more detail, and hopefully demystifying what's going on in there. So here's some YAML. Let your eyes glaze over; don't even try to interpret this. It's just a visual. Just listen.

B

There are two top-level objects in this cluster manifest. The first one is called the Cluster, and here we're defining the control plane for this new workload cluster. That includes the infrastructure hosting the control plane, which in this case is KubeVirt, and the control plane provider itself, which in this case is kubeadm. So we have a kubeadm controller that's forming this control plane for us. That's the Cluster object.

B

The second object is the MachineDeployment. This object defines the worker nodes for that cluster, and that includes the provider hosting the worker nodes, in our case KubeVirt, and the bootstrap provider that joins these worker nodes to the cluster, which in this case is kubeadm again. Okay, so hopefully you're all still with me: the Cluster object defines the control plane, and the MachineDeployment defines the worker nodes and how those worker nodes join that control plane.
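As a rough illustration of the two top-level objects described here, the following Python sketch builds them as plain dictionaries. It is not the manifest shown in the talk: the `apiVersion` value, the kind names (`KubevirtCluster`, `KubeadmControlPlane`, `KubeadmConfigTemplate`, `KubevirtMachineTemplate`), the object names, and the exact field layout are assumptions made for illustration and should be checked against the provider's own templates.

```python
import json

CLUSTER_NAME = "demo"  # hypothetical name, not from the talk


def cluster_object(name):
    """Top-level Cluster: references the infrastructure and the control plane provider."""
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",  # assumed API version
        "kind": "Cluster",
        "metadata": {"name": name},
        "spec": {
            # Infrastructure hosting the control plane (KubeVirt in the talk).
            "infrastructureRef": {"kind": "KubevirtCluster", "name": name},
            # Control plane provider (kubeadm in the talk).
            "controlPlaneRef": {
                "kind": "KubeadmControlPlane",
                "name": f"{name}-control-plane",
            },
        },
    }


def machine_deployment(name, replicas):
    """Top-level MachineDeployment: worker nodes and how they join the cluster."""
    worker = f"{name}-md-0"  # hypothetical worker group name
    return {
        "apiVersion": "cluster.x-k8s.io/v1beta1",  # assumed API version
        "kind": "MachineDeployment",
        "metadata": {"name": worker},
        "spec": {
            "clusterName": name,
            "replicas": replicas,
            "template": {
                "spec": {
                    "clusterName": name,
                    # Bootstrap provider that joins workers to the cluster.
                    "bootstrap": {
                        "configRef": {"kind": "KubeadmConfigTemplate", "name": worker}
                    },
                    # Provider hosting the worker nodes.
                    "infrastructureRef": {
                        "kind": "KubevirtMachineTemplate",
                        "name": worker,
                    },
                }
            },
        },
    }


manifests = [cluster_object(CLUSTER_NAME), machine_deployment(CLUSTER_NAME, 3)]
print(json.dumps(manifests, indent=2))
```

The point of the sketch is only the shape: one object that names an infrastructure provider and a control plane provider, and a second object that names an infrastructure provider and a bootstrap provider for the workers.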

B

I'm going to go through a simplified progression here to show you how these two top-level objects progress to the point of launching those KubeVirt virtual machines and hosting a cluster in those virtual machines. Starting with that Cluster object: within that object, we define that the cluster is going to be hosted on the KubeVirt provider as the infrastructure, and here we reference a new object called the KubevirtCluster. That KubevirtCluster object is going to create the load balancer for this new cluster's API server.

B

So this KubeVirt provider is defining how clients, for example if you're using kubectl, are going to access the new workload cluster's API server. That top-level Cluster object is also defining the control plane provider, which in our case is kubeadm, and the kubeadm control plane is configured to launch the control plane nodes as KubeVirt virtual machines. In our example here we're having three replicas host our control plane, so the kubeadm controller knows we want three replicas.

B

The kubeadm controller then defines cloud-init configs for each one of these KubeVirt machines, and those configs are kind of where the magic occurs. For a lot of you, this might fill in the gap. These cloud-init configs are what kubeadm is telling these virtual machines to execute on startup in order to create this cluster. It's like a bash script that's actually being executed, telling these virtual machines how to form and create a control plane. Lastly, we get the KubeVirt VMs themselves launched.

B

They get assigned their unique cloud-init bootstrap secrets, which execute at startup. At this point, we have a control plane. So if you lost track of all of that, I know there's a lot going on here.

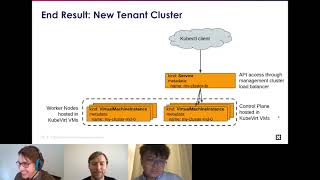

The end result here is that the Cluster object results in the creation of a load balancer for API server traffic and the creation of the control plane nodes that actually host the control plane. We're talking about the API server, the kube-scheduler, and all that good stuff.

B

So at this point we have a functioning control plane for a new cluster, but we have no worker nodes. We could use kubectl to see what's going on in there, but we won't be able to schedule any new pods or anything like that. So let's add some worker nodes using a MachineDeployment. The MachineDeployment references a bootstrap provider, which describes how the nodes join the cluster; in this case we're using kubeadm again.

B

We also describe the infrastructure these worker nodes should be hosted on, which again is KubeVirt. In this case we're going to use three replicas, so we get three unique machines as our worker nodes. The bootstrap provider is the one that's involved with generating these unique cloud-init secrets, which again are just a bash script that these virtual machines execute on startup. It tells them how to join the cluster, in this case how to join the control plane that's already been created.

B

Lastly, the virtual machines spawn. They're going to be assigned those cloud-init secrets, and when they boot up they're going to join the cluster. So the end result here is that we have a new cluster with a control plane hosted across three virtual machines, and three worker nodes also hosted as KubeVirt virtual machines.
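The progression just described, where a replica count turns into individual virtual machines that each receive their own bootstrap data, can be sketched as a toy function. This is not the controllers' actual logic. The VM and secret names are hypothetical, and the split between `kubeadm init` for the first control plane node and `kubeadm join` for every other node reflects how kubeadm is generally used rather than anything stated in the talk.

```python
def plan_machines(cluster, role, replicas):
    """Return one entry per VM, each with its own bootstrap secret name."""
    machines = []
    for i in range(replicas):
        if role == "control-plane" and i == 0:
            # The first control plane node creates the cluster.
            action = "kubeadm init"
        else:
            # Remaining control plane nodes and all workers join it.
            action = "kubeadm join"
        machines.append(
            {
                "vm": f"{cluster}-{role}-{i}",  # hypothetical naming scheme
                "bootstrap_secret": f"{cluster}-{role}-{i}-userdata",
                "on_startup": action,
            }
        )
    return machines


# Three control plane replicas and three worker replicas, as in the walkthrough.
plan = plan_machines("demo", "control-plane", 3) + plan_machines("demo", "worker", 3)
for machine in plan:
    print(machine["vm"], "->", machine["on_startup"])
```

Each entry stands in for one KubeVirt virtual machine plus the unique cloud-init secret it executes on startup.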

B

All right, that's my brief intro to the Cluster API and how the Cluster API Provider KubeVirt works. Hopefully that gives you some context on how this works from a high level. I know there's a lot of complexity here. It's a really complex project, I'm being honest, so I tried to simplify it as much as I can, but it's really difficult. Maybe this also gives you some ideas of the new possibilities this kind of technology unlocks.

B

C

C

So next, my colleague Alex and I are going to share our journey of how this project was inspired and how it got to today's shape. I'll start by sharing some background and the use case first. Back in 2020, when I first joined Apple, I noticed a couple of colleagues were looking for tools to help them get clusters for the purpose of development and integration testing.

C

The effort to get a cluster should also be minimal, which means that ideally it should take only a simple command and a couple of minutes of waiting to get the cluster available for testing and to tear it down afterwards to release infrastructure resources. Can you click to the next slide? Yeah. By that time we already ran a couple of large-scale bare-metal Kubernetes clusters hosting heterogeneous workloads for Apple internal use, and the VM workload is one of the most popular workload types, well adopted by different business units.

C

It would be very natural for us to make use of KubeVirt VMs to build another layer of Kubernetes clusters and offer cluster as a service to our development teams. Can you click next, please? Yeah.

C

As for my background, I came from an infrastructure-as-a-service company before joining Apple. Seeing bare-metal servers managed by Kubernetes and loaded with KubeVirt, my first impression, given that background, was that we were basically running a free infrastructure-as-a-service stack. It's kind of like a free VMware vSphere product. Can you click to the next slide, please? Yeah. So then the problem translates into how to build Kubernetes clusters from VMs, which is a well-solved problem in the industry.

C

By that time there were a couple of frameworks available in the community, such as Ansible, BOSH, the Cluster API, and others. Among all of them, the Cluster API was the most promising and attractive framework to employ for our project. Can you click to the next slide? Yes, please. The Cluster API is Kubernetes native, which means it uses the same Kubernetes programming paradigm.

C

The Cluster API is adopted by multiple cloud vendors, such as AWS, Google Cloud, vSphere, and more. Next, my colleague Alex is going to share the detailed timeline of how we brought CAPK, which is the short name for the Cluster API Provider KubeVirt, from a team initiative to the collaborative open source project it is today.

D

Chang, can we turn over the slide, please? Thank you. So let's rewind back to the summer of 2020. Back then, internally at Apple, we had a rising need for ephemeral Kubernetes clusters, with the requirements that Chang already talked about. At the same time, we already utilized KubeVirt to run VM workloads on our Kubernetes clusters internally, so Chang had this idea of using KubeVirt to bootstrap Kubernetes clusters on demand.

D

In the fall of 2020, we made a proposal internally and started working on a project. Initially it was just a couple of engineers researching various solutions already available in the industry, and by around spring of 2021 we went with the Cluster API, for the reasons that Chang talked about just a few minutes ago. So we started an active engagement with the cluster lifecycle community on this.

C

D

There were a number of providers from various vendors available already and under active development, which was great guidance for us, and there was a version of a KubeVirt provider already open sourced by OpenShift at the time. However, that KubeVirt provider was based on an older implementation of the Cluster API, and it was not under the kubernetes-sigs namespace along with the other Cluster API providers.

D

D

I should say that, generally, it's not a very straightforward process to open source a project, either from an internal standpoint or from a community standpoint. There are a lot of legal matters to overcome internally, and from a community standpoint there needs to be sufficient support from several different companies and confidence that the project will be maintained and developed in the long term.

D

So while we sanitized our code base and went through internal reviews at Apple, at the same time we looked for support and engagement from other companies, and we found great excitement and support from Red Hat, Microsoft, and others. I should say that the support from the community was absolutely amazing and very welcoming. It was the utmost pleasure to work with the SIG leads, so we just had to do our part to lift the code.

D

By early fall of 2021, we had an upstream repository created and lifted the initial implementation upstream. Since then, we've had a terrific experience collaborating with folks at Red Hat, Microsoft, and other companies. You can check out our repository, where issues get created and PRs get submitted nearly every day. We have our weekly office hours meetings, where we discuss any issues or questions anyone might have. It's a very productive collaboration, and we are in the process of opening up the KubeVirt provider from a specific use case.

B

B

What I'm going to do is launch a cluster on KubeVirt virtual machines within the management cluster itself. We'll start with a clean management cluster, like the one in this diagram. I'll post a cluster manifest here, and then we'll observe a new workload cluster get created within our management cluster on KubeVirt virtual machines. Before I go to a terminal, real quick, I wanted to point out that I'm using a new client tool in this demo called clusterctl.

B

This tool can help with a lot of things in the Cluster API ecosystem, from installing the Cluster API controllers, to creating new workload clusters, to gaining access to those workload clusters, and more. There's a link with more info about this tool, but I just wanted to introduce it so you wouldn't see it and wonder, "What's that? That's new." Okay.

B

So let's take a look at my demo. I've recorded it, so we don't have to sit here and wait for things to provision. Let's see if I can get it to actually play. Come on, do it. All right. The first thing I'm doing here is listing the pods in my management cluster, just to show you that the Cluster API controllers exist.

B

B

So I'm going to export a couple of environment variables here. The first one is a template, and this template is the cluster manifest with variables in it. I'm not constructing this cluster manifest myself; I'm using one that's in our upstream Cluster API Provider KubeVirt repo. There's a template there, I'm defining that it's the template I want to use, and I'm just going to inject some variables into it, so I don't have to construct this really complex, crazy manifest full of interrelated references.

B

B

I'm going to export a few other environment variables, just telling it where my virtual machine image is being pulled from (I'm getting it from my Quay repo) and a few other things associated with that template. And here I'm generating the cluster manifest using clusterctl. clusterctl has the ability to take a cluster manifest template, and then you assign a few variables. Here I'm telling it that I want Kubernetes version 1.21, that I want one control plane node and one worker node, the namespace, and things like that.
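The idea of a manifest template with variables injected into it can be sketched with Python's standard `string.Template`. This is only an illustration of the substitution step, not how clusterctl is implemented. The template text is a heavily trimmed stand-in for the real one, and the variable names, the cluster name, the namespace, and the `v1.21.0` patch version are assumptions (the talk only says "1.21", one control plane node, and one worker node).

```python
from string import Template

# A trimmed, hypothetical stand-in for a cluster manifest template.
TEMPLATE = Template("""\
kind: Cluster
metadata:
  name: ${CLUSTER_NAME}
  namespace: ${NAMESPACE}
---
kind: KubeadmControlPlane
spec:
  replicas: ${CONTROL_PLANE_MACHINE_COUNT}
  version: ${KUBERNETES_VERSION}
---
kind: MachineDeployment
spec:
  replicas: ${WORKER_MACHINE_COUNT}
""")


def render(template, variables):
    """Fill in every ${VAR}; substitute() raises KeyError if one is missing."""
    return template.substitute(variables)


variables = {
    "CLUSTER_NAME": "demo",          # hypothetical
    "NAMESPACE": "default",          # hypothetical
    "KUBERNETES_VERSION": "v1.21.0", # patch version assumed
    "CONTROL_PLANE_MACHINE_COUNT": "1",
    "WORKER_MACHINE_COUNT": "1",
}

manifest = render(TEMPLATE, variables)
print(manifest)
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: a forgotten variable fails loudly instead of leaving a literal `${...}` in the generated manifest.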

B

B

B

In this example, I wait like 10 minutes, maybe less, I can't remember, and I execute the exact same thing again. We can see the control plane is online and we are good to go. So let's take a look at this cluster in a little more detail. We can see the MachineDeployment. I only asked for one replica, so we've got one replica, with our worker node online, and we can see the KubeVirt virtual machines hosting this cluster.

B

B

It's just a convenience wrapper around exporting that kubeconfig. So I'm exporting a kubeconfig for my newly created workload cluster, and I'm going to set that as an environment variable. Let's start playing again. There we go. Having set it as an environment variable, I'm going to use my standard kubectl tooling, and now I'm actually inside that new cluster that I created. You can see the API server is in there, we have the scheduler, and we have all the standard Kubernetes controllers, like the deployment controller, and all that good stuff.
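Pointing standard tooling at the new cluster by way of an environment variable can be sketched as follows. kubectl reads the `KUBECONFIG` environment variable to decide which cluster to talk to; everything else here, including the file path and the helper names, is hypothetical. The sketch only prepares the environment and the command and does not execute anything.

```python
import os


def env_for_cluster(kubeconfig_path, base_env=None):
    """Copy an environment and point KUBECONFIG at the workload cluster."""
    env = dict(os.environ if base_env is None else base_env)
    env["KUBECONFIG"] = kubeconfig_path
    return env


def kubectl_command(*args):
    """Build a kubectl argument list suitable for subprocess.run."""
    return ["kubectl", *args]


# Hypothetical path to the exported kubeconfig of the workload cluster.
env = env_for_cluster("./demo.kubeconfig", base_env={"PATH": "/usr/bin"})
cmd = kubectl_command("get", "pods", "--all-namespaces")
print(env["KUBECONFIG"], cmd)

# To actually run it against a real cluster:
#   import subprocess
#   subprocess.run(cmd, env=env, check=True)
```

Copying the environment instead of mutating `os.environ` keeps the management cluster's configuration untouched for any other commands in the same process.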

B

Lastly, I'll show you how to de-provision it. It's pretty complicated. No, it's not: all you do is delete the Cluster object and everything goes away. So that's it. I showed how to create a cluster, we gained access to that new workload cluster, and then de-provisioning it is just as simple as deleting that top-level Cluster object; everything underneath it disappears. That's about it for our presentation. I don't know if we have any questions. I know we covered a lot.
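The "delete the top-level object and everything underneath disappears" behavior can be modeled with a toy ownership table. This is a simplified illustration of cascading deletion, not the actual cleanup logic of the Cluster API controllers or Kubernetes; all object names below are hypothetical.

```python
def delete_cascade(objects, root):
    """Remove `root` and every object that transitively names it as owner.

    `objects` maps an object name to its owner's name (None for top-level).
    Returns a new mapping with the survivors; the input is left unchanged.
    """
    doomed = {root}
    changed = True
    while changed:
        changed = False
        for name, owner in objects.items():
            if owner in doomed and name not in doomed:
                doomed.add(name)
                changed = True
    return {name: owner for name, owner in objects.items() if name not in doomed}


objects = {
    "cluster/demo": None,
    "kubevirtcluster/demo": "cluster/demo",
    "kubeadmcontrolplane/demo": "cluster/demo",
    "machinedeployment/demo-md-0": "cluster/demo",
    "vm/demo-control-plane-0": "kubeadmcontrolplane/demo",
    "vm/demo-md-0-0": "machinedeployment/demo-md-0",
    "cluster/other": None,  # an unrelated cluster that must survive
}

remaining = delete_cascade(objects, "cluster/demo")
print(sorted(remaining))
```

In this model, deleting `cluster/demo` removes its load balancer object, control plane, MachineDeployment, and both virtual machines, while the unrelated `cluster/other` is left alone.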

B

B

I've also been thinking about the possibility of using the management cluster to start virtual machines in the tenant cluster. So keep it flat, only have one layer for your virtual machines, and use the underlying layer to launch virtual machines into the tenant cluster. We haven't even talked about that in the community meetings or anything like that. It's just kind of an idea that's in the back of my head right now.

D

B

B

C

B

B

D

B

C

C

However, if we use the Cluster API to set up the clusters, it's kind of a chicken-and-egg problem. We actually want to use some different mechanism to set up the test bed and then test KubeVirt. Otherwise, if we use the Cluster API and there's a bug in KubeVirt, our test bed is failing, so we cannot test KubeVirt anymore, right? So that's, I guess, the chicken-and-egg problem.

B

B

A

D

Yes, we've already experimented with several CNI implementations, and we have proofs of concept based on Flannel, Calico, and Cilium. So any of these should work with very minor changes, pretty much out of the box, once the cluster is up and running. CNI is a little bit outside the purview of cluster lifecycle; it's more of an add-on that you add on top of a running cluster. But yeah, most of the common implementations should work, so try it out.