From YouTube: Marshall Ward

A

It means lots of new and unexpected features will be added, but it also means that there's greater potential for error from more, and more uncertain, sources. That puts a greater burden on the testing framework that we use. What I'm going to do in this talk is give an overview of as much of that as I can, and in particular I'll try to introduce the concepts of verification and validation.

A

Now, of course, nobody knows if a model is right. All we can really do is show that it's wrong in certain special cases and continue to iterate on that. When software engineers talk about this, they introduce the notions of verification and validation, which I would not blame anyone for confusing.

A

They sound like the same thing, and I wouldn't be surprised if I end up making the same mistake myself, but they do represent independent concepts that I'll try to get across here, and I think they map pretty well onto how we do things. Barry Boehm has a slightly pithy way of describing what they are. He says verification is "Am I building the product right?" and validation is "Am I building the right product?" I'll try to explain what he means by that, but verification is basically the design specifications.

A

A

I wrote a few examples here, but the list is very long in general. For example, it should compile on all the places that we say it should. We might want to insist that the equations are dimensionally consistent, like Gustavo alluded to in his talk, and we might want to insist that when we parallelize, the answers don't change. You can imagine an ever-growing list of things like that, but these are all micro-rules that we would want to get right and that we could conceivably test during development.

A

Now, the distinction in that case is validation. What they mean when they talk about that is: is the product right? Did we make what we said we were going to make? From our perspective, I think this is really: did it operationally produce what we meant it to? Are the simulations realistic? Is the AMOC strong enough?

A

A

Which, really, I think is part of the reason the concepts do start to get a bit blurred. But the point is that this is not a strange thing that I'm talking about; it's representative of just about any development process you can come up with. For MOM6, we work through GitHub and through Git repositories, which I think at this point everybody is pretty familiar with.

A

I don't need to go over that concept, but it's all publicly hosted and we expect people to engage. I'm showing a little picture of the GFDL MOM6 repository, but it doesn't have to be the GFDL repository. It can be any of the hubs we work with. So this will be a bit of a review for anybody who's contributed.

A

What we do is put a halt on the process. I put this big octagon here, "V and V" for verification and validation, and the idea is that you have to go through this process before we consider anything. To a very large degree, this is an automated process. What I would like to do in this talk is go over as many of the features of that as I can, help new people understand what's going on there, and maybe help developers accommodate that process, if not even contribute to it.

A

So how does this look when we contribute something to MOM6? I hope this figure is visible to everybody; if it's not, I'll just describe it. We first go through something we call a code style check. I won't go over this in detail, but it basically just makes sure that the code looks the way we think it should: there's no trailing whitespace, little pedantic things like that. That might bother some people, but I think they do help streamline development. That's a broader story, though.

A

We then do several build configurations, so we make sure that the model compiles under all the configurations we care about, and we consider that part of the verification process. After that, we run through a series of tests. We call them TCs, if you've heard people mention that in discussions. They test things like aggressive initialization with nonsense values, and they make sure that we can reproduce our answers.

A

We currently host these tests on the Travis CI system, but they don't have to be hosted there. When you run them, you either get a nice green check saying it worked, or a big scary red X saying it did not. If you look inside the details, there are lots of little Christmas lights saying that all your little tests passed. I hope this.

A

A

We do provide some information. I'll get to what this information is in a bit, but it's a truncated list of information for all the different tests that failed, and as the user you can go back and troubleshoot those when you are doing your testing. Whoops. Oh, I'm sorry that happened. That's represented by this little dotted arrow here in the verification and validation step, so you can iterate through this sequence of development.

A

You do that until you get it right, and when it's right, we go through a less automated process of validation. But before I go through that, let me mention one special case of verification that can come up, which is: what if our answers change? The tricky thing here is that sometimes we want our answers to change. The most trivial case is adding a new diagnostic, but more importantly, we might improve the algorithm in a way that changes the answers.

A

A

The second is the configuration tests, which are all the verification tests I alluded to, where we expect the answers to remain the same across various configurations. The final one is a regression test, where we compare our answers to answers from the code before it was modified. For the most part we want to know when that happens, and we fully expect that sometimes it will happen, but we need to deliberately engage with it when it does. This is where we transition from what we might call verification to validation.
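As a rough illustration of the regression idea, assuming a run's answers are summarized in a small text file, the comparison can be as blunt as an exact line-by-line match. The function name and the sample lines below are made up for this sketch; they are not the MOM6 test scripts or the model's real output layout.

```python
def differing_lines(baseline, candidate):
    """Return 1-based line numbers where two text outputs differ exactly."""
    base = baseline.splitlines()
    cand = candidate.splitlines()
    diffs = [i for i, (b, c) in enumerate(zip(base, cand), start=1) if b != c]
    # Lines present in only one of the two outputs also count as differences.
    shorter, longer = sorted((len(base), len(cand)))
    diffs.extend(range(shorter + 1, longer + 1))
    return diffs


# Hypothetical sample output: a step number, then one global metric.
old_run = "0, 7.2161166068132286E-27\n12, 2.5036729934862443E-04\n"
new_run = "0, 7.2161166068132286E-27\n12, 2.5036729934862448E-04\n"

print(differing_lines(old_run, old_run))  # identical runs: nothing reported
print(differing_lines(old_run, new_run))  # last digit changed on line 2
```

An exact text match is deliberately strict: a change in the last printed digit is reported just like a large change, which is the behavior a bitwise regression test wants.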

B

A

I think it's not good to get into a debate on what is a verification test and what is a validation test, but this is where it becomes more subjective, as in: what does the user want? If we want the answers to change, then we acknowledge that in our review. After we pass the verification tests, we go through a code review, where somebody who is not the author of the change looks at the code changes and says yes or no, this is what we should do. And then what happens?

A

In our case, we run it through a bunch of different configurations, mainly coupled, and through multiple compilers for each configuration. That will typically be about 15 compilations; I think it's been reduced a bit, to 12. Assuming all of those build and pass, we run through a much broader range of tests, and these tests represent, I think, basically any kind of research activity.

A

That's activity that has happened at GFDL, from idealized stuff to CMIP-type runs. There are over 60 of these; some are small and some are very large. Again, we insist on the answers we produce: the way that we validate the code is to make sure we can get our old answers, and so we have to go through this complex testing suite. But even then, that just validates the code for GFDL. As I said at the beginning, this is a community code, so we have to ensure that it works for everyone.

A

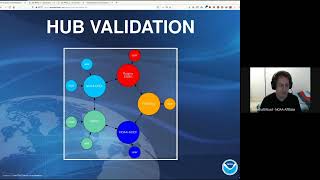

I've basically redrawn a diagram that Alistair and Bob have shown in previous talks. We give everybody an opportunity to validate that code, just as we did, and this can happen from any hub to the other hubs. When that process is completed through a review, we all basically sign off on it, and that's how we can say with confidence that we have a code that works for everybody.

A

A

For these tests we rely on basically two output files. One is named ocean.stats. It contains, for the most part, global metrics about the model at certain points in the run. I'd say the most significant is the energy per unit mass, of which I've shown a small sample here. We report that to full machine precision, 17 digits, and we expect that number to be identical after changing things in various ways.
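The reason 17 digits matters can be shown with a small sketch: 17 significant decimal digits are enough to recover any double-precision value exactly from its printed form, while fewer digits can hide a real difference. The value below is an arbitrary example, not model output.

```python
x = 0.1 + 0.2  # stored as the double 0.30000000000000004

seventeen = format(x, ".17g")  # 17 significant digits
fifteen = format(x, ".15g")    # 15 significant digits

# Reading the 17-digit text back gives the identical double.
print(seventeen, float(seventeen) == x)
# The 15-digit text reads back as a different double.
print(fifteen, float(fifteen) == x)
```

Printing to full precision is what lets a plain text comparison stand in for a bitwise comparison of the underlying numbers.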

A

There are additional metrics here, if you can see them, like the mean temperature, the total mass, things like that. Mean sea level is in there. It's not a 100% perfect test, but it is, in practice, a very robust metric of whether or not answers have changed. Even if that's not sufficient, we do have a more complicated one, where we enable every single diagnostic in the model. We don't actually write the diagnostics.

A

What we do is compute them, and then calculate a few metrics for each of those diagnostics. I've shown three here: u, v, and h. We report the mean, the min, and the max value across the domain, and then we report a bit count as a checksum. I think any one of these metrics on its own would often not be sufficient to detect a regression.
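A minimal sketch of those four per-field metrics, assuming the bit count is simply the number of set bits across the 64-bit patterns of all values. The helper names are hypothetical and this is not the model's own checksum routine.

```python
import struct


def bit_count(value):
    """Number of set bits in the 64-bit IEEE 754 pattern of a double."""
    (pattern,) = struct.unpack("<Q", struct.pack("<d", value))
    return bin(pattern).count("1")


def field_stats(field):
    """Return (mean, min, max, bitcount) for a flat list of doubles."""
    mean = sum(field) / len(field)
    checksum = sum(bit_count(v) for v in field)
    return mean, min(field), max(field), checksum


h = [1.0, 2.0, 1.0, 2.0]
h_changed = [1.0, 2.0, 1.0000000000000002, 2.0]  # one value moved by one bit

print(field_stats(h))
print(field_stats(h_changed))
```

In this example the mean, min, and max are identical for both fields and only the bit count differs, which illustrates why several metrics are reported together.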

A

A

A

A

A

But of course the fractional part is the problem, because we will very commonly lose information. There is a minimum resolution of about 10^-16 in here, and so we have to know how to preserve that. Here is a pretty common example. Say we want to add these three numbers together: something small, plus one, minus one. If we add the first two together, we will lose the small number, because it's below that 2 × 10^-16.

A

So 10^-16 plus 1 is 1, and then minus 1 is 0. However, had we added them in the other order, we would have got 1 minus 1 is 0, plus 10^-16 is 10^-16. So the point is that order matters, and if we want to be reproducible, we have to retain those parentheses.
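The three-number sum described above can be reproduced directly in double precision. This is only a sketch of the arithmetic, written in Python rather than the model's Fortran; the variable names are illustrative.

```python
small = 1.0e-16

left_first = (small + 1.0) - 1.0   # small is absorbed into 1.0, then 1.0 cancels
right_first = small + (1.0 - 1.0)  # the ones cancel first, so small survives

print(left_first)   # 0.0
print(right_first)  # 1e-16
```

The two expressions are mathematically equal, but only the explicit parentheses decide which of the two results a program produces.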

A

As another quick example, it's not just a matter of tracking numbers below 2 × 10^-16. Manipulating these numbers will shift what the least significant value is. If s is one plus this residual, then when we add one to it, we increase the exponent. As a result of increasing the exponent, we've dropped the smallest resolvable bit, so we lose this residual and the sum becomes exactly two.

A

And then, when we subtract one again, we get something that is identically one, and not one plus the noise. So these parentheses matter very much, and it matters very much where you put them.
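A sketch of this second case, taking the residual to be one unit in the last place of 1.0 (an assumption; the talk does not give a value):

```python
import sys

eps = sys.float_info.epsilon  # 2**-52, the spacing of doubles just above 1.0
s = 1.0 + eps                 # exactly representable: one plus a residual

bumped = (s + 1.0) - 1.0      # in [2, 4) the spacing doubles, so eps is rounded away
direct = s + (1.0 - 1.0)      # never leaves [1, 2), so eps is kept

print(bumped == 1.0)  # True: the residual is gone
print(direct == s)    # True: the residual is kept
```

Here the residual is well above the 10^-16 threshold relative to one, yet it is still lost because the intermediate result moved into a range with coarser spacing.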

A

As a final, quick third example, it also matters in multiplication. In fact, it's a little more subtle, because power-of-two multiplication is associative and is less sensitive to where we put the parentheses, but the residuals still suffer from this. If we have these two numbers, 1.5 and one plus a residual, the order in which we multiply matters, and we do get different answers. So the lesson of all this is that when you're writing your code, your stencils and so on, you do need to put parentheses in these operations.
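A sketch of the multiplication case, again assuming the residual is one unit in the last place of 1.0. The particular grouping is chosen for illustration and is not taken from the slides.

```python
import sys

eps = sys.float_info.epsilon
a = 1.5
b = 1.0 + eps  # one plus a residual

# Same three factors, different grouping: each product rounds differently.
grouped_left = (b * a) * a
grouped_right = b * (a * a)
print(grouped_left == grouped_right)  # False

# Multiplying by a power of two only shifts the exponent, so grouping is harmless.
two_left = (b * 2.0) * a
two_right = b * (2.0 * a)
print(two_left == two_right)          # True
```

The power-of-two case stays exact as long as nothing overflows or underflows, which is the property the dimensional rescaling test later in the talk relies on.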

A

A

So we need to be sensitive to this. Other compilers, like Intel, claim that they turn it off by default and do say that your expressions could be reordered. I have to confess that I've never been able to get Intel to break the order of parentheses, but they do say it's not what you should expect, and so we have to explicitly tell it. And just as a reminder, if you read the Fortran language standard, it says you're supposed to respect parentheses.

A

So that's how we deal with simple arithmetic operations, but as we get to more complex things like global summations, we really have to rethink how we do this. We could, in principle, order every operation with parentheses. In practice, we would explicitly gather those numbers in some order and then explicitly do the sum.

A

You can do that, as I show in the first line here, but the problem is that not only are you introducing many of those potential residual errors with each addition, you also don't necessarily know the order in which these numbers are going to come in, say if you're doing it in parallel. If that happens, then you not only have to wait for all these numbers to come in, you also have to deal with the possibility of losing residuals.

A

Basically, you store the value over about six integers, broken up into bins of different powers of 2^n; in this case it's 2^46. You do the arithmetic within those bins, and then you deal with the rounding of numbers as each bin overflows. As a result, you solve the ordering issue, because it's integer arithmetic, you also solve the residual issue, and you end up getting very highly accurate global sums.
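The idea can be sketched as follows. This is an illustration only, not the model's summation routine: the number of bins below the binary point is an assumption, the function names are hypothetical, and Python's unbounded integers hide the carry handling that a fixed-width implementation has to do when a bin overflows.

```python
import random
from fractions import Fraction

BIN_BITS = 46   # width of each bin, as quoted in the talk
NUM_BINS = 6    # "about six integers"
FRAC_BINS = 3   # assumption: three of the bins sit below the binary point


def to_bins(x):
    """Split a double into NUM_BINS signed integer digits of base 2**46."""
    # Fraction(x) is exact; int() drops anything finer than the lowest bin.
    scaled = int(Fraction(x) * 2 ** (BIN_BITS * FRAC_BINS))
    sign = -1 if scaled < 0 else 1
    magnitude = abs(scaled)
    digits = []
    for _ in range(NUM_BINS):
        digits.append(sign * (magnitude % 2**BIN_BITS))
        magnitude //= 2**BIN_BITS
    if magnitude:
        raise OverflowError("value too large for this bin layout")
    return digits


def binned_sum(values):
    """Sum doubles by adding their integer bins, then convert back once."""
    totals = [0] * NUM_BINS
    for x in values:
        for i, digit in enumerate(to_bins(x)):
            totals[i] += digit  # integer addition: exact in any order
    combined = sum(t * 2 ** (BIN_BITS * i) for i, t in enumerate(totals))
    return float(Fraction(combined, 2 ** (BIN_BITS * FRAC_BINS)))


values = [1.0e-16, 1.0, -1.0]
print(binned_sum(values), binned_sum(values[::-1]))
```

Because every addition is an integer addition, the result does not depend on the order in which values arrive, and the small term that ordinary floating-point addition would drop is preserved until the single final rounding.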

A

So that's what we do, and there's a specific function you should use to do those in the code, which I'll mention at the end. That's how we deal with arithmetic. I thought I would wrap up with two of the tests that we do. We do a lot of tests and I don't have time to go over all of them, but I think there are two novel tests inside MOM6 that I do want to go over.

A

The first is something that was alluded to in Gustavo's talk, which is this dimensional consistency test. The basis of it is the fact that, if you recall, power-of-two arithmetic is an integer arithmetic operation, meaning you can do it in any order and it won't change your answer. That's how we do a dimensional scaling of these numbers. We can take each quantity and scale it by a power of two.

A

That is, we scale by 2 to the power of some number, and by doing that multiplication we rescale each quantity by its dimensions. We would define an L for horizontal length and a T for time. In this simple example I'm just assuming we calculate an acceleration and apply it to the velocity, from u^n to u^(n+1). So u^n would scale like 2^(L-T).

A

The same goes for u^(n+1). Δt would scale like 2^T, and F should scale like 2^(L-2T), since it's meters per second squared. The idea is that if somehow there's a dimensional inconsistency in the calculation of F, its components will not scale that way, and when you do this rescaling you will get a different answer, most likely with some 2^N residual. That's basically how it works, and it does work well in practice.
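The velocity update described above can be turned into a small experiment. The sketch below assumes the update has the form u^(n+1) = u^n + Δt F, picks arbitrary powers for L and T, and adds a deliberately inconsistent variant for contrast; the function names and values are illustrative.

```python
def step(u, dt, accel):
    """Consistent update: velocity plus time step times acceleration."""
    return u + dt * accel


def buggy_step(u, dt, accel):
    """Inconsistent update: adds an acceleration directly to a velocity."""
    return u + accel


def rescaled(update, u, dt, accel, L_power, T_power):
    """Run `update` in units scaled by 2**L_power and 2**T_power, then unscale."""
    L = 2.0 ** L_power
    T = 2.0 ** T_power
    u_new = update(u * L / T, dt * T, accel * L / T**2)
    return u_new * T / L


u, dt, accel = 0.1, 3600.0, 1.3e-5  # m/s, s, m/s^2 (arbitrary values)
for update in (step, buggy_step):
    plain = update(u, dt, accel)
    scaled = rescaled(update, u, dt, accel, L_power=3, T_power=5)
    print(update.__name__, plain == scaled)
```

The consistent update returns bit-identical results with and without rescaling, because every scale factor is a power of two. The inconsistent one does not, since the missing Δt leaves a stray factor of 2^T in one term.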

A

How do we implement this? When we register our parameters, we introduce this scaling factor to denote what it is, so this will rescale it from MKS units to this dimensionally rescaled unit. Similarly, we sometimes have hard-coded constants in the source, which we are admittedly trying to phase out as much as we can. When they are present, for example here I have a minimum velocity of 10^-10.

A

For those, we also supply the dimensions. The idea is that when information comes in, it's scaled from MKS to this rescaled 2^N dimension, we run through a calculation, and then when we're done, we output back into MKS units. In that way we are able to find and detect dimensional inconsistencies in our equations. That is a massive effort that Bob has really worked on very much, and I would say it is quite close to its conclusion.
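The flow of scaling on input and unscaling on output might look like the sketch below. Everything here is a hypothetical Python analogue: the function names, the parameter name, and the chosen powers are assumptions for illustration, not the model's interfaces.

```python
T_POWER = 5  # hypothetical test setting: rescale time by 2**5
L_POWER = 3  # hypothetical test setting: rescale length by 2**3
M_S_TO_L_T = 2.0 ** (L_POWER - T_POWER)  # m/s -> rescaled velocity units
L_T_TO_M_S = 1.0 / M_S_TO_L_T            # rescaled velocity units -> m/s


def read_param(params, name, default, scale=1.0):
    """Return a parameter given in MKS units, converted to internal units."""
    return params.get(name, default) * scale


def write_diag(value, conversion=1.0):
    """Convert an internal value back to MKS units for output."""
    return value * conversion


params = {"MAX_SPEED": 2.5}  # hypothetical parameter, in m/s
max_speed = read_param(params, "MAX_SPEED", 1.0, scale=M_S_TO_L_T)
min_speed = 1.0e-10 * M_S_TO_L_T  # a hard-coded constant carries its dimensions too

print(write_diag(max_speed, conversion=L_T_TO_M_S))
print(write_diag(min_speed, conversion=L_T_TO_M_S))
```

Setting both powers to zero makes every factor equal to one, so the same code runs unscaled in normal use and rescaled only when the consistency test is switched on.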

A

A

A

It was just the first one I could find, but we do a lot of these operations in, say, one direction and then the other direction, and it's very easy to make errors here: mix up i's and j's, mix up dxCu's with dyCv's, and so on. What happens is that when we apply this index rotation, the equation stays the same, but all the quantities get rotated and swapped. That's a way to make sure that these equations are doing the same thing in the different directions.

A

As for how to design around this: for the most part it will work, but one does have to consider how these stencils look when you rotate them. For example, if we wanted to interpolate from vertex points onto center points, we would generally just do a simple interpolation, the mean value of the corners.

A

There are two different ways we could do it. If we bundled a and b on the top and c and d on the bottom, then when we rotated it, that would become (a + c) + (b + d), which, going back to the discussion about parentheses, would give a different bitwise answer. However, if we had constructed these across diagonals, where a + d is summed with the other diagonal, b + c, then that would be invariant to these index rotations.
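The two groupings can be compared on a single cell. The sketch below assumes corners a and b on the top row, c and d on the bottom row, and a 90-degree counterclockwise rotation; the corner values are contrived so that the rounding difference is visible.

```python
def rotate(grid):
    """Rotate a 2x2 grid of corner values by 90 degrees counterclockwise."""
    (a, b), (c, d) = grid
    return [[b, d], [a, c]]


def mean_by_rows(grid):
    """Average the corners, pairing top with top and bottom with bottom."""
    (a, b), (c, d) = grid
    return 0.25 * ((a + b) + (c + d))


def mean_by_diagonals(grid):
    """Average the corners, pairing each corner with its diagonal opposite."""
    (a, b), (c, d) = grid
    return 0.25 * ((a + d) + (b + c))


corners = [[1.0, 1.0e-16], [-1.0, 1.0e-16]]
turned = rotate(corners)

print(mean_by_rows(corners) == mean_by_rows(turned))            # False
print(mean_by_diagonals(corners) == mean_by_diagonals(turned))  # True
```

A rotation maps each diagonal pair onto a diagonal pair, and floating-point addition is commutative even though it is not associative, so the diagonal grouping adds the same pairs before and after the rotation.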

A

But even that will not always work. Sometimes it's just too complicated. For example, in our tracer advection there's a very complex advection in x followed by a complex advection in y, and it's not necessarily practical to try to make those work in some kind of invariant way. So as a last resort you can throw in these flags to control the order in which these things are done, which is kind of the same thing.

A

Maybe one or two had a big impact on the physics, but for the most part they were bugs related to reproducibility. So the tests are working and solving problems, and I think that we should embrace them. I will stop here because I'm a minute over, but basically these are the guidelines we should follow in order to keep the model reproducible. I will stop there. Thanks.

C

A

B

B

A

Ideally, no. It really depends on the nature of what you're doing. For the most part we do a lot of array updates, and the parallelization of those should be independent of the ordering of the terms, so that should not be affected. I'm talking about things like the SSE and AVX instructions, so that shouldn't matter. The collectives, I think so, so things like the global sums and stuff like that.

A

But since we're kind of forbidding that, I think the answer is to try not to do them, because they are a performance hit. We can't do them in the ways they would like. They would like to reorder them using particular assembly instructions that we just can't use, because if we did, we wouldn't get reproducible results. What I would add is that, really, in terms of performance, we are not at that level.

A

In just about everything we run, we are severely bottlenecked on getting data from RAM to the CPU. It's a long-standing problem with ocean models, and the kind of performance hits that we're talking about with the parentheses are really something we're not in a position to worry about. I think we have to solve this RAM-bound problem before we even try to solve those problems.