►

From YouTube: Optimizing Data Preprocessing in Cosmo-3D: Developing Efficient Pipelines with DALI & Nsight Systems

Description

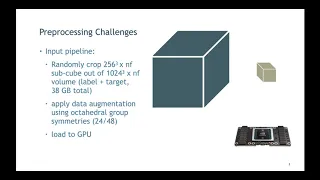

Thorsten Kurth from NVIDIA presents a talk on Optimizing Data Preprocessing in Cosmo-3D -Developing Efficient Pipelines with DALI and Nsight Systems. Recorded live via Zoom at GPUs for Science 2020. https://www.nersc.gov/users/training/gpus-for-science/gpus-for-science-2020/ Due to some data loss, this recording is missing the start of the talk. Session Chair: Michael Rowan.

A

So

this

is

a

very

generic,

a

very

generic

setup,

so

they

tweak

this

for

for

their

specific

purpose,

and

the

important

part

is

that

it's

all

3d

and

3d

usually

means

you

have

more

memory

movement,

and

that

is

what

it

challenges.

So

the

input

data

consists

of

these

simulation

outputs

and

what

they're

using

is

they

use

a

big

cube

of

data

like

a

1024

grid

sites,

cube

grid

sites,

times

number

of

features,

so

number

of

features

will

be

for

the

n-body

part,

the

density

and

the

velocity

in

3d

right

and

for

the

target.

A

It

will

be

these

these

three,

these

four

plus

the

the

temperature,

so

the

total

size

of

this

thing

is

38

gigabytes.

So

what

they

want

to

do

is

now

crop

out

sub

volumes

of

256

cube.

They

want

to

essentially

feed

to

the

neural

network,

because

this

whole

thing

doesn't

fit

and

also

they

hope

to

get

some

effect

through,

like

technically,

like

a

fruit

is

sampling

of

like

a

better

con,

better

convergence

and

better

so

that

the

network

learns

features

like

translational

invariants

and

things

like

that.

A

So

they

also

want

to

do

data

augmentation,

which

means

that

they

don't

only

want

to

crop

out

this

block.

They

also

want

to

rotate

it.

So

the

symmetry

group

they

have

is

the

octahedral

group,

so

all

sorts

of

cubic

rotations

and

essentially

also

reflections.

They

could

apply

to

that

to

increase

virtually

increase,

their

their

data

set

size

and

then

load

this

onto

the

gpu

okay.

So

that

is

that

sounds

pretty

easy

and

you

can

readily

go

and

code

this

up,

for

example,

in

numpy

and

pytorch.

A

So

pytorch

itself

has

a

has

a

nice

data,

loader

framework

and

the

rest.

You

can

do

numpy

right

so

what

they

do?

They

basically

load

all

the

data

into

the

memory,

which

is

a

full

copy

per

process.

So

it's

like

38

gigabytes

per

process

right.

So

if

you

are

in

a

box

with

like

eight

gpus,

you

have

like

eight

times

that

in

memory,

then

you

apply

a

random

crop

and

rotation

and

then

copy

the

buffer

into

a

pi

torch

tensor,

so

the

crop

buffer.

A

This

is

important

because

when

you

basically

slice

data

in

numpy,

it

will

create

a

handle

to

the

original

data

with

some

and

modifies

the

strides.

It

will

not

create

a

copy,

but

pytorch

doesn't

have

all

the

information

numpy

has

and

what

pytorch

wants

is

a

contiguous

buffer

with

basically

canonical

strides.

A

So

what

you

need

to

do

is

you

need

to

create

some

additional

data

copy

and

return

it

as

a

pytorch,

tensor,

and

then

pytorch

can

use

it

and

go

okay.

So

that

is

what

they

had

and

I

looked

at

the

I

o

performance

of

this

thing,

and

this

is

how

it

looks

like

basically

measured

in

samples

per

second,

when

you

scale

out

to

16

gpu

on

a

ddg

x2.

So

that's

important

here.

A

I

just

want

to

see

how

the

the

the

intra

node

scaling

is

right,

because

when

you

scale

out,

you

share

certain

resources.

Sources

such

as

like

the

cpu

resources

and

as

you

can

see,

it

falls

over

at

some

point

and

the

potential

reasons

for

that

could

be

is

that

you

saturate

the

memory

bandwidth

from

the

cpu

to

to

the

to

the

gpu,

and

you

have

some

cache

contention

here,

because

the

random

rotations

they

apply.

So

it's

like

basically

consists

of

like

reflections

in

memory

and

transposition,

so

it's

pretty

expensive.

A

So

I

think

what

what

you

have.

What

you

have

here

is

two

things

you

run

out

of:

maybe

a

fret

parallelism

and-

and

you

you

share

a

lot

of

memory

resources

between

these

these

guys.

So

that

is

not

not

very

good.

So

what

you

of

course

have

when

you

scale

out

in

that

way

on

the

box,

you,

you

basically

have

more

gpus.

So

why

not

put

this

stuff

on

the

gpu?

And

then

you

don't

have

these

issues

right.

A

So

how

do

you

do

that?

This

is

pretty

simple.

You

can

create

a

gpu

enabled

pipelines

with

a

software

called

dali.

It's

an

nvidia

software

which

allows

you

to

build

pre-processing

pipelines

for

any

data,

analytics

workflow,

not

only

deep

learning,

but

it's

it's

mainly

targeting

the

deep

learning

like

applications

and

what

you

can

do

is

you

can

build

the

pipeline

for

cpu

or

gpu

or

mixed,

so

you

can

run

part

of

it

on

the

cpu

part

of

it

of

the

gpu.

What

you

think

works

best.

A

It

supports

various

input

formats,

like

certain

images

for

image

formats,

videos

sound.

What

have

you

and

you

have

a

broad

framework

support.

So,

while

the

dali

pipeline

you

build

is

pretty

pretty

much

framework

agnostic,

you

can

feed

it

or

they

provide

like

wrapper

functions

to

feed

it

into

pytorch

or

tensorflow

stuff,

like

that,

and

it

comes

pre-installed

with

the

ngc

containers

you

find

on

nvidia

gpu

cloud.

A

They

also

open

source.

So

they

have

a

github

page.

If

you

have

a

feature

request

or

bug

report

or

something

you

can,

you

can

file

it

there

and

they

might

look

at

it

at

some

point

and

they

are

pretty

responsive.

So

it's

pretty

nice

okay,

so

I

did

that

so

I

just

used

it,

and

the

first

thing

you

need

to

do

is

very

boilerplate.

A

You

first

look

what

kind

of

input

readers

dali

offers-

and

you

see

that

for

this

specific

purpose

there

isn't

any

so

they

they

offer

something

which

is

called

external

source

which

allows

you

to

feed

arbitrary

data

into

your

daily

pipeline.

So

what

you

need

to

do

is

you

create

an

iterator

which

feeds

your

pipeline

and

then

the

pipeline

will

take

it

from

there

to

fit

in

your

network,

okay.

So

that

is

what

I

do.

It's

basically

just

some.

It's

basically

the

same

thing

as

before,

but

without

the

rotation

stuff.

A

In

this

case,

we

just

do

we

use

a

lot

of

rotation

operators

right,

so

we

want

to

basically

sample

the

the

the

octahedral

group-

and

we

basically

do

this

by

rotating

about

the

random

angle,

about

one

of

these

three

axes.

Okay,

so

that

is

that

and

then

you

need

to

to

tell

it

how

these

ops

are

tied

together

right.

So

you

need

to

basically

define

what

is

called

a

graph,

and

that

is

done

here.

So

we

take

this

external

source.

So

we

take

this

this

input

and

target.

A

We

upload

it

to

the

gpu,

because

we

want

to

use

it

as

a

gpu

pipeline

and

then

we've

we

pipe

it

to

all

three

rotations

like

step

by

step,

and

then

we

need

to

add

an

additional

transposition,

and

the

reason

for

this

is

here

because

dali

wants

to

have

the

feature

dimension

to

be

last

for

the

rotations,

whereas

pie

torch

wants

it

to

be

first

okay.

So

this

is

the

limitation

of

both

frameworks

in

that

sense,

and

they

don't

match.

A

So

you

need

to

add

this

additional

transposition,

but

you

can

hope

that

this

is

just

a

memory

movement

of

a

100

max

or

something

so

it

should

be

fine,

and

then

you

return

it.

So

that's

it

so

it

looks

pretty

much

like

an

iterator.

You

define

this

graph

daily

will

take

it,

it

will

compile

it

and

from

there

it

will

basically

create

an

efficient

pipeline.

A

A

You

import

the

generic

iterator

from

the

python

plugin

from

dali,

so

this

is

shown

here

and

what

I

did

in.

On

top

of

that

you

don't

need

to

do

this,

but

you

can.

I

wrote

the

wrapper

around

that

okay,

so

basically,

I

wrote

a

wrapper

around

the

this

iterator

to

make

it

a

drop-in

replacement

for

the

pie,

torch

iterator.

I

had

because

this

daily

generic

iterator

is

accessed

a

bit

differently

than

this.

A

So

that

being

said,

this

basically

is

now

drop

and

replacement

for

what

I

have,

but

it

does

a

lot

of

stuff

on

the

gpu,

and

this

was

the

original

performance

of

the

pipeline

and

with

the

new

one.

It

looked

like

that.

So

there's

still

some

scaling

issues

potentially

by

like

when

you,

when

you

read

from

from

memory

the

data

from

memory,

but

the

overall

performance

is

much

faster

because

most

of

this

expensive

stuff

is

now

on

the

gpu.

So

I

was

thinking

okay

great,

so

I'm

done

here

right.

A

So,

let's

just

connect

the

whole

the

whole

a

problem

to

it

basically

hook

the

framework

hook

it

into

the

framework

and

see

what

happens

right.

So

I

did

this.

This

is

the

original

original

workflow

scaling,

so

samples

by

seconds

drops

by

a

lot.

But

of

course

you

have

this

expensive

training

set

training

step

now

and

with

the

daily

pipeline

looks

the

same.

So

I

was

a

bit

frustrated

here.

I

was

like

gosh,

we

did

all

this

all

this

work

to

optimize

this

pipeline,

look

much

better.

So

why

is

that?

A

So?

The

first

reason

could

just

be

that

io

was

not

the

issue

here.

So

I

was

like

okay,

let's,

let's

optimize

the

whole

thing

now

and

the

easy

thing

you

can

do

is

use

a

mix

precision,

so

the

original

network

was

run

in

in

fp32,

so

basically

float

or

single

precision

and

pytorch

supports

something

which

is

called

automatic,

mixed

position

or

amp

even

natively

when

you

go

to

version

1.6

and

higher.

A

So

when

you

check

out

the

recent

master

branch,

you

will

get

it

and

this

allows

you

to

offload

certain

operations

to

the

tensor

cores,

so

I'm

on

the

volta

system

here.

So

if

you

use

ampere

it's

the

same

thing,

it

basically

enables

support

for

tensor

cores

for

convolutions

and

dense

operations,

and

since

this

network

is

like

convolutional

mostly

this

will

help

a

lot.

A

A

It

will

keep

copies

of

the

master

weights

in

fp32

and

usually,

if

you

apply

this

kind

of

things,

you

will

for

deep

learning

problems,

you

will

see

a

speed

up

at

no

performance

degradation,

so

that

is

kind

of

good

and

the

right

hand

side.

Sorry,

on

the

right

hand,

side

you

see

how

this

is

done.

It's

basically

by

adding

a

few

lines

of

python

code

and

the

most

important

parts

are

these.

A

A

A

Let's,

let's

profile

it,

and

for

that

you

can

use

ensys

or

inside

systems,

and

it

has

this

nice

tracing

functionality

where

you

can

trace

qr

codes,

which

is

standard

or

cubeless,

but

also

nvtx

and

nvtx,

is

a

library

which

allows

you

to

to

annotate

code

with

your

own.

Like

ranges

on

the

right

hand,

side.

A

A

You

can

put

put

these

ranges

and

and

tell

it

to

annotate

them

so

that

you

would

see

them

on

the

timeline,

and

this

is

how

it

looks

like

when

you

profile

it

and,

as

you

can

see

on

the

on

the

in

the

middle

of

the

screen,

this

gray

stuff,

you

see

like

the

forward,

pass

the

backward

pass

and

you

see

all

the

convolution

calls

made

by

pytorch,

and

what

you

also

can

see

is

this

stuff.

This

is

bad.

This

is

dali

and

it's

actually

not

overlapping

with

the

forward

backward

pass.

Okay.

A

So

this

was

like

what

is

going

on

here

and

you

see

this

big

gap

between

the

forward

and

back

between

the

iteration

steps,

so

that

is

also

bad,

and

what

you

also

can

see

is

another

thing:

is

that

there's

this

mem

copy

essence

in

the

way,

spanning

the

whole

block,

and

that

should

trigger

your

alarm?

It's

nicely

colored

and

red,

because

this

is

not

great

right

when

you

look

into

this.

A

What

happens

here

is

that

at

the

end

of

this

long,

cuda

copy

essence

region,

you

see

that

there

is

a

mem

copy

from

device

to

host

into

pageable

memory,

and

that

is

blocking

so

there's,

basically

an

asset

member

copy

into

pageable

memory.

Don't

do

that

so

now.

The

question

is

what

is

happening

here

and

since

this

only

happens

when

you

enable

amp,

I

was

talking

to

to

the

guy

who

implemented

this

amp

part,

and

it

turns

out

that

actually

this

is

this

line

is

responsible

for

it.

This

is

kind

of

funny.

A

A

We

basically

use

pin

memory,

download

it

into

pin

memory,

and

this

turns

out

to

be

a

more

brutal

python,

a

pie

torch

back

so

that,

basically,

if

you

ask

for

a

non-blocking

copy,

it

will

not

create

a

cpu,

a

pin,

cpu

buffer

to

copy

non-blocking

into

so

basically

unknown.

Blocking

copies

in

pi

torch

can

turn

out

to

be,

can

turn

out

to

be

blocking

if

you

do

not

pin

the

buffer

manually.

So

there

is

a

big

bug.

A

We

fixed

this

in

this

case

manually,

and

I

think

there

is

a

couple

of

like

a

discussion

around

it

how

to

fix

this

in

general.

Okay,

so,

with

these

fixed

results,

we

can

profile

again

and,

as

you

see

now,

great

dali

is

overlapping.

You

see

this.

This

red

cuda

copy

essence

is

just

gone

right

and

there's

this

cute

event

synchronized,

but

that

is

fine.

A

That

is

what

is

supposed

to

be

there,

okay,

but

you

still

see

there's

this

gap

and

it

turns

out

that

most

of

the

ios

synchronous-

and

this

is

because

dali

is

careful-

because

I

feed

external

input-

dali

makes

no

assumptions

about

threat,

safety

and

memory,

coherence

and

stuff.

It

will

assume

that

it

has

to

take

the

data

copy

it

in

the

main

thread

before

it

can

do

anything

with

it.

So

I

was

like

okay,

that

is

stupid

right

I

mean

dali

is

very

careful

here

and

it's

the

right

thing

to

do.

A

Maybe

but

it

doesn't

help

a

lot

with

performance,

so

what

you

need

to

do

is

hide

part

of

that

overhead,

so

what

I

added

stubble

buffering.

So

basically

I

have

two

buffers.

I

can

copy

the

data

into

from

the

memory

and

I

do

it

using

concurrent

futures

in

python

right.

So

there's

a

python

function,

calling

a

concurrent

features

which

you

can

say

copy

the

data

now

so

before

you

process

the

actual

badge

you

prefetch,

the

next

one

and

then

and

the

next

time

before

you

process

the

extra

batch.

A

You

wait

for

the

badge

to

arrive,

and

that

is

very

easily

done

with

a

few

lines

of

code.

I

don't

want

to

go

into

detail

here,

it's

technically

a

slight

modification

to

what

I

had

before,

and

you

need,

of

course,

to

have

a

second

buffer

around,

because

you

need

to

need

to

have

the

actual

and

the

next

batch

in

separate

buffers.

A

Okay,

so

that's

what's

the

original

scaling

and

with

these

fixes

actually

you'll

get

a

little

bit

better

performance.

So

this

is

the

the

orange

line

right

as

you

can

also

see

the

the

yellow

scaling

here.

This

is

just

with

the

amp

fix,

without

overlapping

the

the

I

o

a

bit,

and

this

is

because,

even

if

we

fix,

if

he

fixes

mp

issue

and

dali,

starts

overlapping

with

the

block,

it

previously

overlapped

with

this

still

synchronous

part

of

the

io.

A

So

it

overlapped

with

something

not

the

stuff

we

wanted

to

overlap

with,

but

it

overlapped

with

the

oh.

So

we

couldn't

see

a

benefit

here,

but

once

you

overlap

part

of

the

ao2,

you

see

a

benefit,

but

when

you

profile

it,

it's

still

not

great.

There's

still

this

gap,

and

it

turns

out,

as

I

said,

that

dali

copy

still

doesn't

a

buffer

copy

of

the

of

the

buffer.

I

provide

into

internal

storage

and

that

actually,

you

cannot

get

get

around

with

without

modifying

dahlia

accordingly.

A

We

have

those

some

of

those

are

going

to

be

fixed

soon,

so

they

will

add

a

zero

copy

option,

for

example,

to

this

external

source,

where

you,

as

a

user,

have

to

make

sure

have

to

make

the

guarantee

that

this

buffer

won't

go

away

for

the

next

couple

of

steps.

So,

in

my

case,

in

my

setup

this

is

the

case,

but

you

have

to

be

careful

when

you

do

this,

and

also

what

I

demonstrate

is

that,

with

just

a

few

line

of

python

code,

you

can

actually

get

a

lot

of

speed.

A

B

B

A

No,

I

first

looked

at

the

at

the

I

o

part

right,

so

the

python

part

looks

pretty

much

optimized

to

me.

So,

like

there's

everything

I

mean,

I

think

that

everything

overlaps,

what

what

you

can

overlap.

I

mean

we

found

this

issue

in

the

in

the

pytorch

in

the

in

the

amp

right,

so

that

that

is

one

thing

you

can

see

here.