►

From YouTube: 11 IO Best Practices

Description

Part of the NERSC New User Training on June 16, 2020.

Please see https://www.nersc.gov/users/training/events/new-user-training-june-16-2020/ for the training day agenda and presentation slides.

A

So

this

is

the

basic

set

of

topics.

I'll

cover

the

general

sense

of

the

parallel

I/o

down

to

the

storage

systems

and

then

a

lot

more

detail

about

burst

buffers

and

how

to

get

good

performance

and

how

they're

kind

of

architected.

So

you

can

think

about

how

the

data

moves

around

between

them.

I

know

some

of

this

has

been

covered

by

other

folks

earlier

in

the

day,

so

we

may,

if

I,

might

reiterate

something

that

other

folks

have

covered

as

part

of

the

ye.

A

This

part

of

the

file

systems

I,

hope

it's

not

too

repetitive,

I

think

we're.

We

cover

it

pretty

well

so

in

general,

you'd

like

to

think

of

your

application

as

a

very

nice

Python

script,

and

it

just

reads

some

data

and

magic

happens

and

you

pull

the

data

from

the

file

system

and

you

move

on

with

your

life

and

everything

happens,

and

you

can

do

this.

A

But

it's

very

likely

to

give

you

moderately

poor

performance

certainly

could

be

a

lot

better

if

you

spend

some

time

tuning

in

and

using

some

of

the

specialized

I

hope

metal

ware

that

we

have

and

obtaining

the

settings

and

tuning

things

for

your

app.

So

in

general,

there's

quite

a

bit

more

in

layers

in

between

you,

your

app

and

the

file

system.

A

I/O

library

they

they

provide

usually

some

object

level

abstraction

and

provide

portability

for

your

data,

so

that

you

can

take

your

data

files

that

you

create

it

nurse

or

argon

or

anywhere

else,

and

move

them

around

between

systems.

It

is

usually

between

applications

that

read

that

file

format

below

that

is

kind

of

what

I

would

consider

the

low

level

I

omit

aware-

and

this

is

things

like

in

PII-

oh

and

it

kind

of

deals

with

bytes

at

that

level.

A

It's

probably

not

presenting

something

at

the

object

level

like

hdf5

list,

you

can

further

down

the

stack.

How

do

you

get

data

off

the

compute

node

with

MPI?

Some

form

of

I/o

forwarding

gets

your

data

from

your

computer

over

into

the

actual

HPC

storage

system.

Somehow,

and

this

varies

from

system

to

system

and

vendor

to

vendor

office.

Sometimes

almost

never

are

you

going

to

be

talking

to

this

layer

with

their

applications?

That

would

be

extremely

unusual.

A

A

A

There

we

go.

Thank

you.

So

an

example

of

a

Productivity

I/o

interface

is

something

like

a

tripod.

This

is

a

Python

wrapper

around

the

hdf5

library

and

it

provides

a

really

nice

abstraction

layer

for

applications

to

like

a

Python

scripted

application.

Maybe

a

machine

learning

app

even

to

use

HPC

systems

to

storage,

get

five

data

from

their

Python

code,

and

you

can

see

it's

very

short

sequence

of

a

couple

of

imports

and

then

boom.

You

can

open

your

hdf5

file

with

this

h5

PI

file,

object

and

then

perform

either.

A

Independent,

I/o

and

I'll

talk

a

little

bit

more

about

that

later,

on

or

collective

IO

from

all

your

MPI

ranks

with

HIV.

It

exposes

up

the

the

EPC

file

system

and

a

very

high

level

and

in

Python

so

suggest

if

you're

writing

in

Python.

This

is

great.

It

certainly

does

reduce

your

coding

effort.

You

can

see.

A

So

you

can

see

the

condensation

the

the

amount

of

code.

You

have

to

read

that

the

higher

levels

is,

of

course

much

better

in

Python.

This

is

kind

of

a

generic

thing.

Right.

Python

is

better

a

little

bit

more

dense

and

a

lot

more

abstract

than

the

verbosity

that

you

get

with

c

but

you'd

say

well.

Maybe

the

performance

isn't

so

good,

but

it's

actually

pretty

good

from

an

I/o

perspective

with

HDH

Wi-Fi

and

the

c

interface

for

hdf5

python

is

a

little

bit

slower.

A

This

is

a

summary

of

the

performance

comparison,

but,

generally

speaking,

you

should

be

in

pretty

good

shape

so

coming

on

down

the

stack

talk

a

little

bit

about

high

level

I/o

libraries,

and

usually

these

are

geared

to

parallel

I/o.

They

try

to

reduce

the

applications,

complexity,

level

and

they

provide

an

object-oriented

data

model

and

typically

see

maybe

Fortran

to

the

best

extent.

A

They

can

and

they

that

the

users

construct

relationships

between

those

objects

and

usually

some

form

of

hierarchical

group,

will

file

like

directory

hierarchy

within

the

the

files

themselves

and

the

files,

and

they

create

our

self-describing

machine,

independent

they're,

very,

very

optimized,

for

the

typical

array

oriented

science

data

if

you're,

storing

goodbye

Sara

byte

scale,

floating-point

arrays.

This

is

your

ball

game,

it's

not

so

great

for

Strings

in

general,

but

can

handle

those

kinds

of

abstractions

as

well

and

some

of

the

ones

that

nurse

provides

on

their

systems.

A

Things

like

hdf5,

parallel,

netcdf,

adios

and

kind

of

roughly

that

order

of

popularity,

binding

applications.

Most

of

the

people

are

using

hdf5.

Some

of

them

are

using

peanuts,

ETF

and

a

few

are

using

audio

centers.

Probably

our

expertise

lies

along

that

frequency

domain

as

well

hdf5

just

very

quickly.

If

you

were

writing

C

code,

a

very

small

snippet

of

the

I/o

code

that

you

would

write

would

look

like

hey

I

want

to

create

a

file.

I

want

to

create

a

data

set

I'm

going

to

write

some

data

to

it.

A

If

you

want

to

do

that

in

parallel,

these

highlighted

sections

are

kind

of

the

changes

you

would

make

to

that

you'd

say:

hey

I

want

to

use

the

MPI

file

driver

when

I

open

this

file

and

I

also

want

to

perform

collective

IO.

When

I

do

my

dataset

right,

these

enable

the

majority

of

the

performance

improvements

that

you'll

see

for

your

application.

A

So

this

is

the

kind

of

core

set

of

basic

io

optimizations

that

you'll

make

there's

a

lot

more

hdf5,

tutorials

ones

available

online

as

web

pages

at

Nurik,

ATP

esc

series

has

videos

and

other

things

available

too,

so

you

can

watch

those

and

do

some

online

hands-on

things.

Htf

group

website

has

tons

and

tons

of

examples

for

how

to

do

hdf5.

The

parallel

well.

A

Going

down

the

stack

next

level,

aisle

middle,

where

it's

like

pork

stock,

where

why

are

we

doing

this,

and

usually

what

this

level

is

doing,

is

providing

portability

between

the

parallel

file

systems

that

lie

below

it?

You

want

to

program

to

some

level

of

abstraction,

but

you

don't

want

to

have

to

know

exactly

that.

You're

dealing

with

GPFS

versus

lustre

versus

anything

else.

So

this

level

is

the

level

that

provides

that

portability

across

the

parallel

file

systems,

and

hopefully

it

it

does

the

optimizations

that

are

appropriate

for

that

file

system.

A

A

Discontinuous,

you

can

do

strided

access

to

arrays

in

memory

and

like

file,

provides

a

non-blocking

operation,

so

you

can

initiate

an

I/o

and

then

go

back

and

do

something

else

in

your

compute

check

back

later,

it's

got

lots

of

wrappings

for

Fortran

and

Python

and

C++

and

other

things,

and

it

does

have

a

fairly

basic

way

of

trying

to

generate

some

portability

amongst

the

files

that

it

creates.

But

it's

it's

nowhere

near

as

comprehensive.

It's

the

higher

levels

like

hdf5

intensity.

A

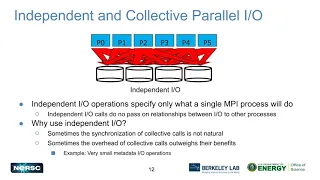

So

brief,

we

could

sit

here

and

talk

for

20

minutes

about

independent

versus

collective

parallel,

I/o

and

figure

out

how

those

really

optimize

things,

but

in

a

very

high-level

independent,

is

kind

of

what

it

says.

It's.

What

a

single

MPI

process

is

going

to

do

and

it

can

write

anywhere

in

the

file

or

in

multiple

files.

It

has

no

coordination

with

other

processes

and

other

MPI

ranks

and

just

as

basically

out

there

to

do

its

own

IO

by

itself,

and

sometimes

it's

useful

right.

A

You

know

the

synchronization

overhead

for

collective

IO

is

and

really

fit

into

your

application

and

whatever

way-

and

sometimes

the

overhead

is

a

little

bit

more

than

you

need

to

bear

the

actual

extra

data

transfers

and

coordination.

But

the

other

ranks

collective

IO

says:

hey.

Everybody

is

here

together,

so

you

have

to

make

these

calls

from

all

the

processes

that

open

the

file.

So

it

really

means

everybody

and

we're

all

gonna,

compare

notes

and

say

I'm

reading

this

section

of

the

file

and

you're

writing

that

section

of

the

file

that's

swaps

and

data

around.

A

So

lots

of

tutorials

on

how

to

do

IO

in

MPI.

Well

again,

their

skins

are

gone

and

mcsa

as

a

nice

and

lectures

from

they'll

drop

one

of

the

implementers,

the

initial

implementers

so

em

pitch.

So

all

these

are

really

great

resources

for

more

tuning

and

more

details

than

I

can

possibly

cover

in

20

minutes.

A

You

want

to

the

next

level

down

their

stack

right.

Looking

at

your

I/o

patterns,

I

want

to

improve

my

I/o.

What

do

I

have

to

do

to

do

that?

Well

answer

some

questions

right,

whether

the

number

processes

you're

using

number

of

files,

how

much

you're

writing

to

each

one.

That's

your

eye

opener

and

look

like

once.

You

start

gathering

some

data

about

what

am

I

doing,

then.

You

can

start

saying

well,

how

can

I

include

this

and

you

know

typical

I/o.

You

want

contiguous

big

blocks

to

write

the

disk.

A

If

we,

you

know,

the

access

time

is

much

lower,

much

lower,

better

performance

for

your

typical

rotating

disk,

non-contiguous

I/o.

If

you

see

call

over

the

file,

it's

going

to

jump

and

move

the

hard

drive

heads

a

lot

and

you're,

probably

gonna

have

performance

so

from

a

very

2000

quit

level

right

to

get

bigger,

more

contiguous

blocks

of

data

moving

out

to

disks.

Mpi

will

help

you

on

that.

You

know

collective

IO,

that's

part

of

what

its

goal

is.

A

A

A

One

of

the

success

stories

here

is

an

application

framework

called

Athena,

its

master

physics

code,

very

adult-like,

some

folks

at

Harvard

Princeton,

looking

at

their

I/o

patterns

with

darshan

allowed

us

to

say.

Oh

you're

doing

this,

and

this

with

your

I/o

patterns.

Can

you

change

how

your

application

runs,

and

so

we

gave

them

back

essentially

almost

half

of

their

compute

hours

right,

because

previously

IO

was

taking

40%

of

their

time

and

then

bring

this.

You

know

tuning

it

nicely

using

the

tools

and

techniques

here

took

all

the

other

way.

They.

A

So

I'm

not

going

to

touch

too

detailed

here

while

heats

covered.

The

file

systems

earlier

and

I've

just

mentioned

that

Cori

scratch

is

the

general-purpose

high-performance,

the

patient,

for

doing

I

Oh

tuning.

So

if

you're

optimizing

for

something

that's

where

you

want

to

do

your

I/o

tuning

and,

generally

speaking,

you

want

to

tune

your

striping

with

your

app

to

use

the

appropriate

and

there's

some

guidance

here

about

which,

what's

the

appropriate

stripe

count

and

stripe

size

for

strengthening

your

data

files

over

the

storage

servers

for

the

muster

scratch

system.

A

A

So

there's

a

special

data,

warped

software

that

stores

and

retrieves

data

from

the

burst

buffer.

But

you

would

see

it

as

a

projects,

file

system

and,

generally

speaking,

that

file

system

is

created

on

the

per

job

basis

and

you

and

you

see

all

those

first

buffer

notes,

kind

of

stitched

together

in

one

nice

POSIX

file

system

and

again,

some

more

performance

tuning

pieces.

Here

that

point

you

towards,

generally

speaking,

the

data

paths,

your

compute

is

going

to

move

through

I/o

nodes

and

then

either

to

mustard

scratch

or

possibly

directly

to

the

burst

buffer

nodes.

A

A

A

So

during

the

stage

in

we

bring

the

data

in

and

fire

off

your

computes.

While

it's

running

the

DDS

software

and

the

compute

nodes

talks

to

the

burst

buffer,

they

act

like

a

POSIX

filesystem,

hdf5

MPI.

Oh

all

that

works

just

fine

here

and

then

at

the

end

of

your

job

boom.

Everything

gets

sent

back

out

to

wherever

you

tell

it

on

the

lustre

scratch.

Typically

at

the

end

of

your

job,

and

that's

that's

your

flow

for

your

data,

your

your

best

practice

there.

You

can

do

bad

things.

A

If

you

move

data

around,

you

can

force

it

to

come

around

from

muster

scratch

into

your

computer

and

then

down

into

the

burst

buffer

instead

of

being

moved

directly

and

then

sent

back

the

other

way

to

try

not

to

tell

the

scratch

filesystem

to

be

doing

things

from

the

compute

nodes.

There's

occasional,

optimization

paths

here,

there's

work!

Ok,

but,

generally

speaking,

this

is

a

not

your

best

idea

for

your

free

ride

patterns

and

again

take

a

look

at

the

first

buffer

performance,

optimization

links

and

pages

on

the

nurse

site.

A

Just

a

small

success

story

here

at

the

very

end

accessing

the

HIV

boss,

which

is

a

galaxy

spectra

analysis

tool

from

muster,

was

taking

about

40

seconds

for

hdf5

s.

Worse

fits

different

file

format,

but

when

you

stage

that

data

on

to

the

first

buffer

and

do

the

iOS

they're

your

group,

you

know

performance

quite

a

bit.

Just

fits

less

so

just

mess

up

an

optimized

file

format

than

hdf5,

but

you

can

get

10x

or

more

for

DX

here

when

you're

dealing

with

the

burst

buffer

instead

of

non-core

either

you

know

the

muster

spinning

disk.

A

A

Yes,

I

think

the

link

here

has

a

little

bit

both

of

these

there's

documentation

here

about

the

architecture

itself

and

then

how

they

improve

the

performance

at

the

second

link.

Here

there

should

be

reasonable

code

there

and

you

shouldn't

even

need

to

do

anything

right.

The

the

goal

is

to

treat

it

like

a

POSIX

file

system

and

just

do

I

owe

the

magic

is

you

know

getting

your

data

staged

in

and

staged

out

with

your

job

submission

scripts

really,

so

it

shouldn't

have

any

special

modifications

to

your

app.