►

From YouTube: 10 Data Transfer Best Practices

Description

Part of the NERSC New User Training on June 16, 2020.

Please see https://www.nersc.gov/users/training/events/new-user-training-june-16-2020/ for the training day agenda and presentation slides.

A

B

A

So

the

first

thing

I

want

to,

let

you

know

is

that

we

have

a

dedicated

set

of

nodes,

so

called

data

transfer

nodes

that

you

can

use

for

moving

data

into

and

out

of

nurse,

you

can

SSH

directly

into

four

of

those

nodes.

There

Athiya

this

address

here,

DT

n,

zero,

one

dot

nurse

gov

all

the

way

through

four.

A

These

are.

These

servers

are

optimized

for

data

movement.

They

have

high

bandwidth

network

interfaces,

they've

been

highly

tuned

for

efficient

data

transfers

and

members

of

the

data,

analytic

services

and

the

storage

group

have

worked

to

make

these

really

really

work

well,

along

with

working

with

the

S

net,

to

remove

a

lot

of

obstacles

in

the

land

that

are

between

us

and

our

major

science

partners,

and

we

have

a

monitored

bandwidth

between

their

skin

other

major

facilities,

such

as

other

DOA

labs

and

slack,

to

make

sure

that

data

is

moving

as

optimally

as

it

can.

A

These

nodes

have

direct

access

to

all

of

the

nurse

file

systems

that

what

he

just

mentioned.

You

can

log

in

there

and

interact

with

the

community

file

system

that

HP

OSS

tape

archive

Cori

scratch,

and

so

you

can

use

them

to

move

data

externally

to

other

systems

outside

of

nurse

Co.

You

can

move

them,

use

them

to

move

data

internally

between

their

systems

and

there's

gauge

PSS.

B

A

Thanks,

sorry

about

that,

yes

so

use

the

D

chance

to

move

large

volumes

of

data

into

and

out

of

nurse

or

between

their

systems.

If

you

are

moving

a

lot

of

data,

we

come

in

that

you

use

globus,

which

is

a

software

stack.

That's

optimized

for

moving

data

under

the

covers.

It's

basically

parallel

grid

FTP,

and

what

that

is.

This

is

something

that

paralyzes

data

transfer

movement

for

you

and

it

can

so

it

sits,

and

they

excuse

me

they

have

a

whole

very

easy-to-use

web-based

service.

A

Basically,

it's

a

GUI

I'll

show

it

to

you

in

a

minute,

but

it

does

automatic

retry.

So

you

set

up

the

transfer

and

it'll

move

the

data

for

you

and

if

you

move

in

10,000

files

and

one

of

them

fails,

it'll

it'll

be

try

that

not

that

file

again

and

we

try

it

over

several

times

until

you

either

succeeds

or

reaches

its

quota,

and

then

it

sends

you

an

email

when

it's

done

and

you

can

go

to

the

website

and

check

on

these

transfers.

So

it's

really

great

for

sort

of

fire-and-forget

data

transfers.

A

We

have

a

globus,

endpoint

and

most

major

educational

institutions

have

a

globus

endpoint.

If

you

don't

have

one

from

your

target,

you

can

set

up

a

Globus,

personal

endpoint

and

even

that's

a

little

better

than

just

trying

to

move

it

yourself

with

secure

copy,

because

it

automatically

does

the

parallel

movements

and

it

does

the

retry

and

notification

when

it's

done

so

that

extra

stuff

really

makes

data

transfer

much

easier.

We

also

have

so

there's

a

web-based

GUI

I'll

show

you

in

a

minute.

A

We

also

have

some

globus,

some

scripts

deployed

at

nurse

for

command

line

transfers,

&

Glow.

This

also

has

a

rest

rest

api.

If

you

wanted

to

write

your

own

sort

of

services

to

pull

against

it,

they

have

a

nice

Python

software

development

kit.

You

can

also

use

the

write

scripts

and

they

also

have

a

canned

Globus

connect,

personal

installation

that

works

for

Mac,

Windows

Linux

that

you

can

install

on

your

personal

laptop.

If

you

wanted

to

move

data

that

much

so.

A

So

let

me

just

do

a

quick

demo

of

Globus,

so

this

is

the

globus

webpage.

It's

dubbed

Globus

org

and

you

can

go

here

to

this

button.

You

get

here

the

first

time

you're

going

to

need

to

create

a

globalist

account.

I've

already

created

a

Globus

account.

Let's

assume

that

you've

done

that.

So

you

can

come

here

and

you

can

log

in

and

you

choose

once

you

could

get

your

Globus

account.

You

can

link

it

against

your

your

nurse

cacao

or

any

other

number

of

different

accounts

that

are

here.

A

So

here's

what

it

looks

like

when

you

log

in

so

basically

this

is

GUI

here

and

you

can

go

here

and

search.

So

we

have

a

bunch

of

endpoints

I

recommend

the

nurse

BTN

endpoint.

This

is

the

one

that

points

directly

at

the

data

transfer

nodes,

and

then

here

is

a

listing

of

everything.

That's

in

your

in

your

home

directory,

let's

say:

I

just

wanted

to

put

something:

I'm

gonna

use

this

to

put

this

into

another

endpoint

that

we

have,

which

is

nurse

kori.

A

And

so

here

it's

loading

the

it's

also

loading

my

home

directory

because

they

both

amount

the

same

thing,

but

you

can

click

on

a

file.

You

want

to

move

and

then

you

can

go

over

here

and

put

a

place

where

you

want.

To

put

it

so,

let's

say:

there's

kind

of

folder

I'll

put

it

into

this

bar

folder,

and

then

you

just

click

on

this

start

and

it

does

the

transfer.

You

can

click

on

this

to

go,

see

the

details

of

the

transfer

and

it'll.

Tell

you

like.

A

A

Okay,

so

that's

Globus!

It's

pretty

easy

to

use.

I,

definitely

recommend

it.

So

some

general

tips

for

transferring

data

large

transfers.

We

recommend

Globus

online.

If

you

can,

you

can

also,

if

you're,

moving

large

chunks

of

data

inside

of

nurse.

Let's

say

you

have

a

bunch

several

terabytes

of

data.

You

want

to

stage

to

Cori

scratch.

You

can

use

Globus

for

that,

if

you're

doing

smaller,

one-time

transfers

that

are

less

than

100

megabytes

or

so

of

data,

you

can

use

SP.

A

The

globus

is

also

fine

for

small

transfers

too,

and

then

the

the

data

transfer

nodes

are

really

just

for

transferring

data.

So

please

don't

use

them

for

non

transfer

purposes.

Don't

use,

don't

run

compute

heavy

things

there,

don't

if

you

can

avoid

it

them

on

your

work

flows

out

of

it.

Let's

move

those

to

the

work

flow

nodes

and

use

the

system,

login

notes

for

more

more

general

routine

tasks

like

compiling.

A

So

one

thing

to

think

about

when

you're

trying

to

get

the

best

performance

is

that

usually

the

performance

is

often

limited

by

the

the

remote

endpoint.

So

you

know

nurse

works

really

closely

with

the

essent

to

get

the

most

optimal

paths

out

of

our

system

and

a

lot

of

institutions

also

have

optimal

paths

into

their

systems.

But

then

it's

that

last

hundred

meters

or

whatever

to

the

computer

you're

trying

to

get

through

that

usually

is

very

difficult

to

get

that

highly

optimized.

A

So

often

the

remote

endpoint

isn't

tuned

for

optimal

data

transfer,

and

so

you

can

see

low

performances

just

based

on

that.

Occasionally

the

nurse

side

file

system

contention

could

be

an

issue

like

Cori.

Scratch

is

very

heavily

loaded,

so

it's

delivering

data

very

slowly

and

also

don't

use

your

home

directory

for

for

large

data.

I

mean

number

one.

It

wouldn't

fit

number

two.

It

doesn't

perform.

Well,

so

keeping

those

things

in

mind.

A

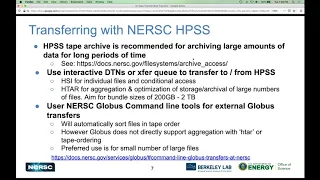

So

one

special

case

I

wanted

to

talk

about

is

transferring

with

with

into

and

out

of

the

nurse

HP

SS

archive,

and

so

like

what

he'd

said.

We

recommend

you

use

our

tape

archive

for

archiving

large

amounts

of

data

for

a

long

period

of

time,

and

we

have

a

pretty

extensive

documentation

on

this

I

recommend

you

check

it

out.

So

when

you're

done

with

your

data

and

you

you

don't

think

you're

going

to

need

it

for

you

know

about

a

year,

you

know.

Maybe

it's

like

data

from

your

paper.

You.

B

A

To

keep

it

forever,

but

you

don't

think

you're

going

to

be

frequently

accessing

it.

That's

the

time

you

want

to

put

it

into

HP

SS,

and

so

you

can

do

that

either

on

the

interactive

DT

ends

by

SSH

into

there

or

you

can

use

our

special

transfer

queue

on

Cori

to

move

this

data

to

into

and

out

of

HP

s

FS,

and

we

have

two

main

ways

on

the

command

line

that

you

can

move

data

into.

Hp

SS,

there's

something

else

HSI

which

is

sort

of

like

its.

A

It

works

with

a

put

get

method,

so

you

can

say,

HSI

put

this

file

and

it'll

put

that

file

into

HP

SS.

If

you

want

to

get

it

back

out,

you

use

get,

and

so

you

can

use

that

for

putting

individual

files

into

there.

It

also

has

some

nice

flags

on

there

for

like

conditional

access

like

put

this

file

into

HP

SS.

If

it

if

the

one

in

HP

is

the

one

on

the

spinning

disk

is

newer

than

the

one

in

HP

SS

then

transfer

it

over.

A

If

not,

don't

do

anything,

so

you

can

do

some

sort

of

you

can

do

some

running

backups

of

your

system

for

things

that

have

changed

generally,

if

you

have

lots

of

files

like

more

than

about

a

about

ten

or

so,

we

recommend

that

you

use

H

tar

to

aggregate

them

up

and

H

star

bundles

things

up

in

the

same

way

that

tar

does,

except

that

the

output

tar

file

goes

directly

into

HP

SS.

It

doesn't

hit

your

spinning

disk.

A

It

can

be

really

handy

if

you

have

let's

say

two

terabytes

of

data

that

are

in

all

in

small

files.

You

can

use

H

tar

to

bundle

them

all

up

and

put

the

output

into

HP

SS.

You

don't

need

to

have

space

for

both

of

those

things

before

you

put

it

in

and

like

I

said

like

what

he'd

said

before

when

you're

bundling

these

things

up,

think

about

how

you

might

want

to

come

and

get

them

back

out

if

you

think

you're

going

to

get

a

whole

years

worth

of

data

all

at

once.

A

Maybe

you

put

that

into

one

bundle.

If

you

think

you're

only

going

to

need

I

know

that

the

calibration

files

or

something

maybe

you

bundle

those

separately

just

try

to

think

about

how

you

might

possibly

want

to

get

out

when

you're

putting

them

away.

You

can

also

use

globus

for

our

HP

SS

system,

but

we

actually

recommend

that

you

use

our

command

line

tools

for

external

globus.

A

At

the

same

time,

instead

of

you

know

getting

it

on

a

tape

and

then

the

machine

has

to

load.

Another

tape

go

to

the

it's

a

little

robot.

It

has

to

go

down

the

thing

and

pick

up

another

tape

and

put

it

in,

and

so,

if

you

don't

order

them

so

you're

getting

everything

off

the

tape

in

order,

it

can

really

slow

down

the

process.

A

So

this

is

this

is

me

and

Corey,

and

you

can

get

there's

some

documentation

on

this

on

how

to

do

this,

this

to

dip

to

these

tools.

You

say:

module,

load,

Globus

tools

and

then

you

can

say

which

transfer

files.

So

this

is

a

helper

script

that

will

let

you

transfer

start

Globus

transfers

from

the

command

line

at

nursing,

and

so

you

don't

have

to

use

this

just

for

HP

SS.

You

can

use

it

for

various

other

things.

You

could

script

it

as

part

of

your

job.

Submission

I'll

talk

a

little

bit

more

about

that.

A

If

you

wanted

to

stage

data

and

then

submit

a

job

when

it's

done

and

so

the

way

you

use

this,

is

you

say

you

have

a

source,

that's

where

this

data

is

coming

from,

and

this

is

normally

an

endpoint

UUID

which

you

can

get

from

the

globus

endpoint

page?

Is

this

really

a

long

Godley

good

thing,

but

it

also

knows

a

bunch

of

shortcuts

for

the

nurse

DTN

endpoint,

you

can

just

say

DTN

for

nurse

gage

PSI.

A

From

HP

SS

and

put

them

in

to

put

them

into

my

scratch

directory,

so

I

have

this

list

of

files.

So

I've

listed

the

files

that

I

have

an

HP

SS

in

this

text

file.

My

output

file

here

is

in

my

scratch

directory

and

then

it's

going

to

transfer

it's

going

to

pull

from

HP

SS

and

move

it

to

the

my

global

scratch

directory.

So

I

can

hit

return.

A

A

We

can

take

a

look

in

this

directory

and

these

these

files

are

here

I,

think

so

they've

been

copied

over

and

if

you

wanted

to

excuse

me,

there's

also

an

example:

script

on

this

stage

data

script,

which

we

can

give

it

the

same,

the

same

arguments

but

with

the

additional

the

addition

of

an

analysis,

script,

a

job

script,

and

so

what

this

will

do

is

it'll

move.

This

data

over

and

it'll

keep

checking

that

until

the

data

is

transferred,

and

then

it

will

submit

this

analysis

script

job.

So

this

is

submitting

to

the

transfer

cluster.

A

Just

watch

this

directory

and

what

it's

doing

it's

setup,

the

transfer,

and

now

it's

querying

every

few

minutes

transfers

active,

not

succeeded.

It

submitted

this

analysis,

script

job,

which

is

going

to

use

this

data

that's

in

place,

so

you

can

see

this

this

this

stage.

Data

script

is

actually

included

in

the

the

globus

tools

module,

and

so

you

can

take

a

look

at

this,

and

if

you

wanted

to

use

something

like

this

for

your

own

job

submission,

you

can

just

alter

it

a

little.

A

A

A

You

need

to

share

with

someone

you

can

set

up

a

global

sharing

endpoint

and

then

that

has

a

permanent

web

address

that

you

can

use

to

share

this

data

with

either

all

globish

users

like

anyone

who

has

the

address

and

is

on

Globus

or

with

a

specific

subset

of

just

a

few

Globus

users.

It's

your

choice.