►

From YouTube: 8. Introduction to OpenACC -- Brent Leback

Description

Part of the Nvidia HPC SDK Training, Jan 12-13, 2022. Slides and more details are available at https://www.nersc.gov/users/training/events/nvidia-hpcsdk-training-jan2022/

A

Again,

my

name

is

brent

levac.

If

you

were

here

yesterday,

you

had

my

introduction,

so

I'm

just

a

member

of

the

nvidia

hpc

sdk

team.

I

do

a

lot

of

customer

support,

10

hackathons

and

help

out

wherever

I

can

so

today

we're

going

to

talk

about

open,

acc

first

and

then

I'm

going

to

also

talk

about

openmp.

These

are

two

directive

based

models

and

we'll

talk

about

the

similarities

and

the

differences

between

the

two

models.

A

Open

acc

resources

so

there's

a

website.

Openacc.Org

has

a

lot

of

the

resources

like

the

specification

where

to

find

literature

on

it

where

to

find

maybe

some

blogs

or

success

stories,

upcoming

events.

So

through

nvidia

we

have

a

lot

of

hackathons

over

the

course

of

the

year.

I'm

currently

involved

with

one

at

georgia,

tech

I've

been

involved

with

a

lot

of

the

hackathons

over

the

years

and

the

hackathons

are

open

to

people

using,

of

course,

open

acc

also

openmp

just

cuda.

A

A

Let's

continue

on

here

basic

syntactic

concepts,

so

both

openmp

and

openacc

use

directives.

We,

I

guess

in

fortran

the

the

correct

name,

is

directive

in

c.

Maybe

the

it's

also

called

a

directive,

but

it's

found

pragma.

So

you

say:

pound

pregnant

acc

directive

name

or

in

in

fortran.

Most

fortran

that

I've

come

across

now

is

is

free

form.

A

Now,

if

you

use

a

compiler

that

doesn't

recognize

those

directives

either

it

doesn't

have

that

capability

or

you

don't

turn

the

the

compiler

options

on

to

recognize

those

directives.

You

should

still

have

your

original

sequential

code,

which

will

work

the

way

it

always

so

that's

both

powerful

and

has

a

few

drawbacks.

I

have

to

warn

people.

I

have

been

involved

many

times

at

hackathons

in

in

my

own

work,

where

I

have

misspelled

the

directive.

A

I've

said

bang

acc

or

bang

bang

acc

that

we've

just

you

know,

created

a

typo

when

we

type

things

in,

and

you

will

probably

do

that

yourself.

If

you

use

directives

a

lot

and

then

it

just

becomes

a

comment

and

I've

been

at

a

hackathon

where

we've

wasted

like

half

a

day,

because

we

had

a

typo

in

the

comment,

and

so

the

directive

wasn't

doing

what

we

thought

it

should

be

doing.

It

was

just

a

comment,

so

so

you

got

to

take

the

good

with

the

bad

a

little

bit.

A

A

A

So

I'm

going

to

kind

of

keep

with

the

the

design

of

the

two-day

talks,

we'll

start

with

the

highest

level.

First,

so

the

highest

level

compute

construct

in

open

acc

is

the

kernels

construct

and

there

have

been

many

successful

ports

of

applications

that

only

use

acc

kernels

and

what

they

do.

Is

they

just

kind

of

find

the

the

section

of

code

and

they

put

acc

kernels

at

the

beginning

and

the

kernels

at

the

end.

A

So

what

this

does

is

expresses

that

this

region

of

code

may

contain

parallelism

and

it's

up

to

the

compiler

to

determine

what

can

safely

be

parallelized

and

offloaded

to

the

gpu.

So,

depending

on

the

compiler,

you

may

have,

you

know,

begin

kernels

and

kernels

and

you

can

have

multiple

sections

or

multiple

loops

inside

of

there

and

the

compiler

is

free

to

identify

the

number

of

parallel

loops

in

there

and

and

generate

multiple

kernels

for

offload.

In

this

case

it

could

generate

two

kernels

or

it

could.

A

You

know,

fuse

the

two

loops

and

generate

one

kernel:

it's

not

specified

by

the

the

open,

acc

spec,

how

many

kernels

to

generate

here.

It's

up

to

the

compiler's

capabilities

and

the

compiler

analysis

to

make

that

decision.

For

you

and,

like

I

said

many

people

are

comfortable

with

this,

and

it's

worked

in

a

lot

of

major

applications.

A

A

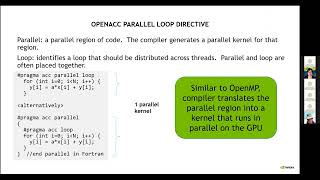

So

you

basically

say

pragma

acc,

parallel

or

pragma,

acc

parallel

or

fragment,

acc

loop,

and

so

just

like

in

openmp.

Acc

parallel

just

starts

a

kernel

region,

and

then

you

put

the

work

sharing

loops

inside

or

the

upper

example.

Acc

parallel

loop

is

a

combined

directive,

both

parallel

and

loop

inside

the

same

directive,

and

you

can

do

it

either

way

they

are

equivalent,

if

you

say,

acc,

parallel

and

you're

in

fortran,

you

need

to

have

an

end

parallel

and

the

same

in

c.

A

A

A

A

A

A

I

could

have

the

middle

loop

loop

worker,

I

could

have

the

innermost

loop

loop

vector

and

that's

a

common

way

to

do

it

another

way

that

I've

ended

up

using

more

and

more.

The

more

experience

I

get

is

just

the

bottom

block.

There,

acc

parallel

loop

collapse,

three

and

I

let

the

compiler

compute

the

the.

A

What

am

I

trying

to

say,

the

decomposition

of

the

three

loops

inside

the

kernel

and

I'll

show

a

little

bit

more

example

of

that

later.

So

you

can

put

gang

and

vector

on

that

and

it

will

use

both

the

blocks

in

a

grid

and

the

threads

in

a

block

or

running

that,

and

you

just

kind

of

end

up

with

the

default

configuration

that

the

compiler

chooses.

A

So,

but

if

you,

if

you

know

something

like

you

know,

my

vector

dimension

of

my

arrays

is

not

128.

It's

only

30

or

32,

or

you

know

a

short

number.

You

can

force

the

vector

length

to

be

smaller,

so

vector

length.

Q

use

a

vector

length

of

q

for

this

parallel

region.

Of

course

you

can

change

this

for

every

different

kernel,

which

is

nice.

A

You

don't

have

to

have

one

setting

over

the

whole

program,

so

this

gives

you

some

flexibility

and

it's

a

little

more

like

cuda,

in

that

you

can

have

different

launch

bounds

for

every

different

kernel.

So

we

talked

about

yesterday

a

little

bit

about

do

concurrent

and

some

of

the

limitations

of

do

concurrent

and

one

of

the

ones

that

I

pointed

out

was

you

don't

have

any

control

over

the

launch

configuration

in

do

concurrent

currently

and

so

open,

acc

and

openmp

is,

will

show,

give

you

some

flexibility

and

control

over

the

launch.

A

You

do

not

always

want

to

work

share

a

loop

in

your

kernels,

so

sometimes

you

want

every

thread

to

run

the

entire

loop

you

want

well.

This

is

often

the

case

in

you

know

multi-dimensional

loop

constructs.

So

if

you

have

a

four

or

five

I've,

even

seen,

seven

deep

nested

do

loops

and

fortran

some

of

the

loops.

A

You

just

want

every

thread

to

run

sequential,

so

you

can

force

that

using

acc

parallel

by

saying

acc

loop

seq

for

sequential,

so

you

can

still

you

know,

work

share

outermost

loops

and

then

run

sequentially

an

innermost

loop

or

you

can.

You

can

actually

run

sequentially,

not

the

innermost

loop.

You

can.

The

acc

loop

sequential

can

occur

anywhere

in

the

loop

structure

or

above

any

for

loop.

A

One

recommendation

for

you

is

that

don't

put

acc

loop

sequential

on

the

loops

that

have

arrays

with

the

index

being

the

leading

dimension

of

your

array.

So

yesterday

I

talked

about

the

most

important

thing

for

performance

on

a

gpu

was

at

the

vector

dimension,

use

the

vector

index

as

the

leading

dimension

of

most

of

your

array

accesses.

So

in

this

example,

maybe

it's

not

great,

because

the

array

accesses

are

pretty

mixed

up.

There's

I

j

I

k

and

k

j.

A

This

last

note,

sometimes

the

m

info

messages

will

tell

you

that

the

compiler

found

a

dependency

in

one

of

the

loops

and

forced

it

to

be

sequ,

and

sometimes

even

if

you

mark

the

loop

as

seq,

the

compiler

m

info

will

still

tell

you

that,

which

is

kind

of

an

annoyance

to

me.

But

again,

we've

stressed

this

many

times.

Look

at

the

m

info

output

and

verify

that

what

the

compiler

is

doing,

syncs

with

what

you

think

should

be

happening.

A

So

it's

useful

when

the

loop

extents

are

short

or

there

are

more

loops

than

levels.

So

you

know

I

mentioned

you

could

have

a

four

or

five

six

dimensional,

you

know

do

loops

or

four

loops.

You

only

have

three

levels

of

parallelism,

but

maybe

some

of

the

loops

are

short

like

you

know,

only

one

to

four

or

zero

to

seven

or

something

like

that.

So

you

know

you

don't

want

to

dedicate

a

whole.

A

You

know

loop

to

one

of

your

units

of

parallel

is

so

you

want

to

collapse

a

couple

of

those

together.

So

if

you

have

the

code

on

the

left,

basically

what

the

compiler

does

is

takes

the

two

loop

bounds,

both

in

in

this

case

and

creates

a

single

loop

from

zero

to

n

times

n.

And

then,

if

you

use

I

and

j

as

indices

into

arrays,

it

does

you

know

the

correct

arithmetic,

either

divide

or

mod

or

or

both.

I

don't

know.

If

I

did

that

exactly

right,

but

it's

something

like

that.

A

To

get

the

I

and

j

values,

and

so

you

might

think

well,

that's

just

a

lot

of

overhead,

but

remember

that

these

loops

are

loop

shared.

So

every

thread

has

to

do

this

anyway

right,

so

it

doesn't

create

any

more

work

than

you

would

have

to

do

anyway

by

the

time

that

the

the

loops

from

zero

to

n

minus

one

are

mapped

onto

some

number

of

threads

or

some

number

of

blocks,

so

the

work

is

done

anyway.

You

shouldn't

see

a

performance

degradation

for

this

little

bit

of

extra

arithmetic.

A

A

A

So

fv

is

a

function,

that's

our

routine,

vector

and

so

vector

routines

can

have

vector

loops

inside

of

them

and

then

inside

the

vector

loop.

I

call

fs,

which

is

another

function,

but

it's

a

sequential

function

and

it

just

returns

some

function

of

a

so

you

can

have

pretty

deeply

nested

call

chains

in

open

acc

and

the

each

call

in

the

this

is

a

fortran

example.

In

the

fortran

sense,

the

call

has

to

be

explicit

and

you

need

to

know

the

interface

of

the

routine

you're

calling.

A

So

maybe

this

example

isn't

quite

right

that

f,

g,

f,

fv

and

fs

need

to

be

either

in

an

interface

block

or

in

a

module.

That

program

main

can

see

so

open

acc

makes

it

a

little

easier

on

the

compiler

vendor

in

that,

when

you

compile

and

call

routine

fg,

you

know

what

level

in

the

parallelism

tree

or

hierarchy

that

each

routine

is

in.

Is

it

a

gang

level

routine?

Is

it

a

vector

level

routine,

or

is

it

a

sequential

level

there's

also

worker

again

we

don't

use

worker

very

often.

A

The

way

that

the

compiler

and

runtime

do

this

under

the

hood

is

the

subject

of

a

lot

of

experimentation,

research

churn

and

we're

fixing

it

all

the

time

we

almost

have

like

a

full-time

compiler

person

that

just

works

on

reductions.

We'll

talk

a

little

bit

more

about

that

later.

But

it's

it's

a

very

important

part

of

the

gpu

code

generator.

A

So

each

thread

calculates

its

parts.

The

reduction

doesn't

have

to

just

be

a

high

level

reduction

over

the

whole

kernel.

You

can

have

reductions

within

a

gang

over

a

vector

or

over

a

worker,

or

you

know.

If

it's,

if

it's

a

sequential

loop,

then

it's

not

really

a

reduction,

because

every

thread

is

computing,

its

sum,

but

the

compiler

will

insert

code

to

produce

the

single

result

at

whatever

level

you

need

and

if

you've

ever

written

a

reduction

in

cuda.

You

know

just

how

nice

of

a

feature

this

is.

A

A

A

The

data

construct

defines

a

region

of

code

in

which

gpu

arrays

remain

on

the

gpu

and

are

shared

so

you

can

have.

This

is

an

example

of

a

structured

data

region.

So

a

structured

data

region

exists

all

within

the

same

function

or

subroutine

in

fortran,

there's

a

definite

beginning

and

end

in

the

same

program

unit

and

within

that

the

data

within

the

data

directives

will

remain

on

the

gpu

the

entire

time

it.

A

A

A

At

every

kernel

we

copied

the

data

over,

ran

the

kernel

and

copied

the

data

back.

Then

later

we

decided

well

the

same

style

of

directives

that

we

use

to

generate

kernels.

We

can

use

to

generate

data

motion

or

data

movement,

so

we

created

things

called.

You

know

copy

in

copy

out

all

these

and

then

about

a

year

later

we

decided

well

when

we

port

code

a

lot

of

times.

A

We

put

the

data

directives

just

around

the

kernels

and

then

we

move

one

subroutine

higher,

and

then

we

put

data

directives

there

and

then

we

move

another

subroutine

higher

and

put

data

directives

there

and

that's

kind

of

the

the

method

of

tuning

for

performance

is

to

move

the

data

movement

higher

and

higher

in

your

program.

So

it's

you

know

resident

over

all

the

time

steps.

A

For

instance,

we

talked

about

yesterday,

so

it

occurred

to

us

that

really

we

don't

want

to

have

to

remove

the

data

directives,

but

we've

always

had

this

notion

that

the

data

directives

are

present

or

copy

present

or

copy

in

present

or

copy

out.

So

what

that

means

is

the

open,

acc,

runtime

and

openmp.

A

So

you

don't

suffer

the

performance

penalty

of

copying

in

at

every

level

of

your

call

tree.

You

only

do

it

at

the

highest

level

and

then

you

just

have

a

tiny

little

performance

hit

just

to

check

the

present

table,

which

is

just

a

you

know,

reading

a

little

bit

of

from

the

cpu

memory.

So

it's

not

a

very

expensive

operation

at

all.

A

So

just

a

little

history

there

and

something

to

watch

out

for

that.

These

data

clauses

are

present

for

variants.

In

fact,

our

compiler

probably

still

accepts

the

syntax

present

or

copy,

which

was

shortened

to

p

copy,

and

then

it

just

became

copy

is

the

same

as

so,

and

I

digress

there

a

little

bit

but

copy

of

list

copies

both

in

and

out

copy

in,

of

course,

only

copies

in

copy

out

only

copies

out.

A

Sometimes

you

don't

even

need

a

copy

of

the

gpu.

It's

just

kind

of

a

scratch

space,

while

you're

in

the

kernel,

so

that's

create

and

open.

Htc

has

something

called

present

where

it

just

checks,

and

so

it's

assumed

that

there's

a

data

directive

outside

of

of

this

data

directive.

So

I'm

just

checking

that

the

data

is

already

present.

A

Unstructured

data

regions,

so,

as

I

mentioned

before,

structured

data

regions,

the

beginning

end,

has

to

be

in

the

same

program.

Unit

worked

great

for

a

while,

but

then

you

know

there's

complicated

codes

where

all

the

data

is

created

in

the

emit

function

and

all

the

data

is

torn

down

in

the

file

function

and

stuff

like

that.

So

you

wanted

the

data

directives

where

the

data

was

initialized,

so

these

are

unstructured.

A

A

The

one

thing

I

wanted

to

point

out

here

is

that

there

is

another

form

of

data

directives

that

I

didn't

touch

on

anywhere

else

and

that's

the

acc

declare

create

data

directive

and

what

this

is

useful

for

is

two

cases.

One

is

for

global

data,

so

in

fortran,

if

you

have

data

in

a

module,

that's

global

data

see

it's

like

data

at

the

global

level

and

also

it

can

be

used

for,

like

local

arrays,

within

a

function.

A

A

A

So

this

is

actually

a

always

a

actionable

directive,

so

it

always

updates

it's

not

a

present

for

operation.

So

a

lot

of

times

you

have

a

you

know.

Your

data

is

really

high

out,

but

you

need

to

get

that

data

onto

the

cpu

or

update

the

values

on

the

gpu

and

typically

or

one

common

example.

Of

this

is

say

you

need

to

do

mpi

communication,

so

you

know

I

need

to

update

the

host

version

of

x

in

the

code

down

below

right

before

I

do.

An

mpi

send.

A

So,

that's

why

you

would

use

the

update

clause

so

the

the

spec

itself

they

changed,

host

to

be

update

self.

I

find

that

kind

of

annoying,

so

I

still

use

update

device

and

update

host,

but

host

and

self

are,

for

the

most

part,

the

same

thing

there's

a

notion

in

the

spec

that

you

could

do

an

update

on

the

gpu,

so

self

would

be

device,

but

I

don't

think

in

practice.

I've

ever

seen

that.

A

A

A

A

Array

shaping

so

sometimes

the

compiler

knows

the

shape

of

your

arrays.

Sometimes

it

doesn't.

You

know

in

c

c,

plus

plus.

Sometimes

you

just

pass

a

pointer

and

the

shape

is

sort

of

just

up

to

the

programmer

to

take

care

of

in

fortran.

You

always

just

pass

a

reference,

but

the

dimensions

sometimes

can

be

different

in

for

transparent

than

they

are

at

the

high

level

right

they're

still,

you

can

just

say

star

as

the

shape

of

the

array.

A

So

if

you

suspect

something

is

wrong

here,

examine

the

m

info

output

and

the

m

info

tells

you

what

it

assumes

the

sizes

of

the

arrays

are.

If

you

want

to

be

explicit,

you

can

put

the

shape

on

your

copy

in

and

copy

out

directives,

but

note

there

is

a

little

difference

between

c

and

c,

plus,

plus

and

fortran.

A

A

So

about

two

releases

ago

we

made

a

change

to

require.

You

know

the

colon

notation.

Otherwise

we

will

just

copy

in

one

element

and

we

have

a

way

to

with

a

flag.

I

have

to

look

and

see

what

it

is,

but

it's

like

implicit

sections

or

something

like

that

to

go

back

to

the

old

behavior,

but

that

is

really

non-standard

behavior.

A

So

I

think

I'll

have

this

slide

in

both

my

opening

htc

and

openmp

box.

It's

a

useful

slide.

I

refer

to

it

a

lot,

so

I'm

glad

that

I

wrote

it.

It's

the

open,

acc

data

directives

on

the

left,

openmp

data

directives

on

the

right.

They

are

almost

identical

other

than

you

know,

slight

syntax

changes.

In

fact

our

compiler

uses

the

same

exact

runtime.

A

A

A

A

So

again

I

have

a

fortran

on

the

left

c

on

the

right

and

it's

done

pretty

much

the

same

way.

This

is

probably

almost

the

entire

talk

in

itself.

In

my

introduction,

I

just

want

to

mention

that

open

acc

uses

cues

and

the

queues

are

numbered.

So

you

just

kind

of

pick

an

arbitrary

number.

You

know

on

the

left.

I

picked

10

and

that's

my

hue

number

that

I'm

using

in

open

acc.

So

I

can

do

update

device

async

10,

I

can

do

kernels

async

10.

A

So

the

call

you

want

to

really

focus

on

here

is

when

we

set

the

qft

set

stream.

There's

a

api

function

called

acc

get

cuda

stream,

which

you

give

it

the

cuda.

I

mean

the

open,

acc

async

number

and

it

returns

the

cuda

stream,

and

this

cuda

stream

is

a

64-bit

handle.

Basically,

we

treat

it

in

fortran

as

a

64-bit.

A

Openacc

does

allow

you

to

tune

your

code

a

little

bit

like

cuda,

and

it

has

something

called

the

cache

directive

which

I'm

showing

on

the

right

here.

I

haven't

really

talked

about

private,

but

it's

kind

of

a

common

notion

among

openmp

and

openacc

that

you

can

have

private

data

at

the

gang

level

or

at

the

vector

level.

A

At

the

gang

level,

there

will

be

one

copy

of

that

for

block

thread

block

in

cuda

and

that's

very

convenient,

because

cuda

has

shared

memory

that

can

be

used

across.

You

know

all

the

threads

in

a

thread

block.

So

in

this

case

on

the

right,

the

tile

array

is

actually

put

into

cuda's

shared

memory

and

it

is

accessible

very

quickly

and

with

high

performance.

A

A

Lots

of

people

find

this

pretty

useful,

but

there

are

some.

You

know,

drawbacks

of

it,

and

sometimes

it's

performance

issues

max

reg

count

is,

is

kind

of

a

code

generator

flag.

You

know

there

are

limited

number

of

registers.

You

can

kind

of

force

the

compiler

to

use

less

registers

and

spill.

Those

more

gpu

equals

line

info

is

is

useful

if

you're

needing

to

use

some

debugging

or

diagnostics.

A

I

think

max

yesterday

talked

about

compute

sanitizer,

so

you

get

a

little

bit

more

information

out

of

the

compute

sanitizer.

If

you

have

some,

you

know

it's

called

dwarf

information

or

line

information

in

your

executable

gpu

equals

compute

capability.

Xy

only

generate

code

for

a

particular

compute

capability.

If

you're

just

running

on

perlmutter,

you

really

only

need

to

generate.

You

know,

code

for

ampere,

which

is

tc80.

A

And

finally,

we

added

a

feature:

maybe

a

year

or

two

ago

called

auto,

compare

well.

Actually

it's

called

pcast

and

pcast

stands

for

parallel.

Compiler

assisted

software

testing,

so

you

can

compile

with

gpu

equals

auto

compare

and

it

will

automatically

run

your

kernels

both

on

the

cpu

and

the

gpu,

and

then

compare

the

results

at

the

end,

and

you

can

have

some

control

over

the

comparison,

whether

it's

exact,

compare

or

provided

tolerance,

and

you

can

find

bugs

that

way.