►

From YouTube: 9. Introduction to OpenMP Offload

Description

Part of the Nvidia HPC SDK Training, Jan 12-13, 2022. Slides and more details are available at https://www.nersc.gov/users/training/events/nvidia-hpcsdk-training-jan2022/

A

A

A

A

A

A

What

am

I

trying

to

say?

Barriers,

openmp,

has

thread

local

storage

or

thread

private

data.

I

always

get

the

two

confused.

One

is

an

openmp

thing

and

one

is

more

of

a

cpu

thing,

but

you

know

it's

got

a

fork

join

model

and

what

that

means

is

that

the

threads

are

pretty

much

spawned

at

the

beginning

of

the

program

in

cpu

openmp

and

they

kind

of

just

hang

around

and

you

hit

an

openmp

region

and

the

threads

fire

up

and

do

some

work.

A

And

then

you

hit

the

barrier

or

the

end

of

the

region

and

they

kind

of

just

go

to

sleep

and

wait

for

the

next

amount

of

work

to

come

up

so

cuda

and

and

gpu

programming

is

a

lot

different

than

that.

The

only

thing

that

really

stays

around

is

your

data

in

the

gpu

memory,

other

than

that,

the

the

kernel

grabs,

resources,

computes

and

then

the

results

are

stored.

A

So

the

top

part

on

the

left

is

the

openmp

pragma

syntax,

not

really

any

different

by

design

and

then

open,

acc

again

open

acc,

you

know

borrowed

lots

of

things

from

openmp

on.

The

bottom.

Left,

though,

are

the

things

that

you

should

be

concerned

with

or

studying,

or

are

the

keys

to

gpu

offload

so

they've

added

some

new

constructs

target

teams

and

distribute.

So

these

are

the

major

additions

for

gpu

acceleration.

A

A

That's

following

amongst

the

teams

that

I've

created

and

then

we're

back

to

the

original

openmp

directives

parallel,

which

has

been

around

since

the

beginning

and

in

our

implementation

that

creates

the

cuda

threads

within

the

team.

So

that's

the

threads

in

a

thread

block

and

then

parallel

do

or

four

says

to

work

share

that

work

below

that

among

the

threads

in

the

team.

A

Lots

of

applications

have

been

written

using

openmp

on

the

cpu

and

open

acc

on

the

gpu,

and

I

think

those

same

applications

could

still

use

openmp

without

target

on

the

cpu

and

open

mp

with

target

on

the

gp.

I

haven't

seen

it

yet,

but

I

there's

no

reason

that

that

can't

happen

in

that

case.

You

know

this

this.

This

diagram,

maybe

is

a

little

false

in

that,

probably

with

openmp

on

the

cpu

there's,

probably

more

than

five

percent

of

the

code.

A

So,

what's

a

programmer

to

do

so,

the

first

attempt

might

just

be

to

insert

target

teams

distribute

where

you

already

have

openmp

directives.

So

this

is

like

the

laplace

code

and

we'll

go

through

this

example.

Quite

a

bit

so

before

where

I

had

pregnant

omg

parallel

four

on

the

outer

eye

loop,

I

can

just

insert

target

teams

distribute,

and

you

know

it's

not

terrible.

A

A

So

if

this

is,

you

know

if,

if

your

code

has

a

whole

bunch

of

one-dimensional

loops

and

that's

how

your

code

is

structured,

you

may

be

okay.

The

thing

to

worry

about,

then,

is

like

you

talked

about

before.

Do

you

have

long

sections

of

code

under

the

omp

parallel,

and

are

you

just

going

to

overwhelm

the

gpu

with

all

the

resources

that

you

expected

to

use.

A

A

So

on

the

outer

loop,

I

distribute

that

across

the

gangs

using

target

teams

distribute

and

on

the

inner

loop,

I

distribute

that

across

the

threads

in

a

gang

using

omp

parallel

four,

so

this

gives

a

pretty

good

performance.

Actually,

you

know

almost

as

good

as

it

gets.

What

we've

done

now

is

since

c.

The

rightmost

is

the

leading

dimension.

Now

I

have

the

leading

dimension

in

the

in

the

thread.

A

A

Loop

69

is

a

parallel

loop

and

that's

parallelized

across

threads,

so

you're

running

on

the

gpu

and

it's

great.

Unfortunately,

this

is

not

what

you

want

to

do

on

the

cpu.

So

what

we've

done

here

with

this

example

is

we've

kind

of

crippled

the

cpu

performance,

because

we've

moved

the

parallelism

on

the

cpu

to

the

innermost

loop,

so

we're

creating

you

know

doing

this

fork

join

operation

for

every

innermost

loop

j

equals

one

to

s,

size

minus

one.

A

So

when

you

compile

this

for

the

the

cpu

using

our

compiler,

and

I

think

most

compilers

you'll

see

that

the

the

outermost

loop

is

across

teams

and

I

believe

well,

I

know

for

sure

our

compiler

only

creates

one

cpu

team

and

I

believe

most

compilers

only

generate

one

cpu

team

and

the

reason

we

can't

do

anything

else.

There

is

the

openmp

spec,

as

far

as

I

understand

does

not

allow

barriers

or

things

like

the

single

construct

or

or

things

like

that

across

the

target

teams

dimension.

A

A

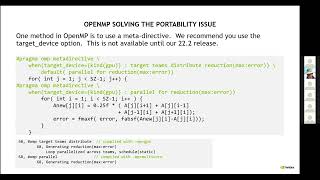

So

there

have

been,

you

know,

lots

of

papers

and

and

proposed

solutions

to

this

over

the

years.

In

fact,

there

was

a

paper

pretty

popular

one

a

couple

years

ago.

That

just

said

oh

just

used

preprocessor

directors,

and

so

one

thing

that

they

have

supported

or

added

to

the

openmp

spec

is

this

notion

of

a

meta

directive,

and

I

am

struggling

with

this

a

little

bit

myself.

A

We

don't

really

have

this

working

in

a

released

compiler.

Yet

we

have

something

that

I

think

works

correctly,

that

we're

gonna

have

in

our

next

release

22.2,

but

with

a

meta

directive,

you

will

be

able

to

have

a

directive

based

on

different

targets.

So

here

the

syntax

is

when

the

target

device

is

a

of

kind.

Gpu

use,

target

teams

distribute

you

know,

reduction

when

it

is

not

kind

gpu

or

the

default

use

parallel.

Four

with

a

reduction

and

enter

you

can

just

say:

if

it's,

if

it's

gpu

add

parallel

four,

otherwise

don't

do

anything.

A

A

A

You

know

high

level

macro,

maybe

in

an

include

file

and

then

kind

of

clean

up

the

syntax

and

not

have

to

duplicate

that

with

with

every

single

kernel,

I'm

struggling

a

little

bit

because

this

is

not

really

out

in

the

wild

yet

so

I

don't

have

a

lot

of

experience

with

this

in

our

compiler

when

I

used

the

dev.

This

worked

for

me.

A

A

A

A

Alternatively,

like

below

this

is

almost

like.

An

open

acc

slide

that

I

had.

You

can

specify

the

omp

target

teams,

you

know

begin

and

end,

and

then

you

can

have

openmp

loop.

Just

inside

of

that,

so

we'll

generate

one

parallel

kernel

and

and

map

that,

hopefully

very

efficiently

onto

the

gpu,

giving

the

compiler

a

little

bit

of

flexibility

of

of

the

teams

and

and

threads

that

it

uses.

A

So

using

our

m

info

messages,

you

can

see

that

you

get

the

you

know,

generating

nvidia

gpu

code,

using

teams

and

threads,

and

when

we

generate

multi-core

code

on

the

outer

loop,

we

use

the

lines

to

cross

the

threads

because

we've

given

the

compiler

the

flexibility

to

schedule

it.

You

know

the

right

way

for

the

different

hardware.

A

A

A

A

It's

not

just

for

openmp

target

teams.

I

believe

you

can

also

specify

thread

limit

in

num

teams,

for

you

know,

target

teams

loop,

I

mean

target

teams

distribute

as

well.

I

think

we

will

accept

thread

limit

and

num

teams

for

almost

all

cases.

There

are

some

cases

where

we

will

not

I'm

trying

to

remember

what

it

is.

I

think

it's

if

you

just

say,

omp

target

without

teams.

A

We

have

found

times

where

we

generate

too

many

teams

and

our

reduction

implementation

currently

in

openmp

uses

atomic

operations,

and

you

may

find

limiting.

The

number

of

teams

gives

better

performance.

So

this

is

something

actually.

You

can

also

try

in

the

lab

exercise.

Today,

I

think

one

version

of

the

lab

has

atomic

operations.

A

A

Loop,

our

the

original

kernel,

begin,

begin

kernel,

end

kernel

block,

so

here's

the

error

that

we

give

in

21.11,

I

believe

in

22.2

our

next

compiler.

We

will

handle

this

case,

so

we've

been

working

hard

on

this

kind

of

for

orphaned,

parallel

operations,

so

there's

work

to

be

done

there.

It's

unfortunate.

I

think

that

openmp

decided

to

not

help

out

the

compiler

vendors

a

little

bit

more

here.

A

A

Atomic

operations

I

haven't,

talked

about

atomic

operations,

but

they

are

supported

in

both

open,

acc

and

openmp,

and

these

are,

you

know,

actually

used

quite

a

bit

they're

kind

of

considered,

maybe

a

more

advanced

topic

than

for

an

introductory

talk,

but

the

atomic

ensures

that

a

specific

storage

location

is

accessed

atomically,

hence

the

name.

So

this

prevents

race

conditions

and

can

you

can

actually

implement

reductions?

A

A

A

We've

hit

that

in

well

berkeley,

gw,

almost

every

time

I

mentor

that

team,

some

other.

You

know

chemistry

codes,

most

purposes,

it's

okay

and

our

work

around

is

usually

to

do

the

real

and

imaginary

parts

separately,

because

they're

just

doing

atomic

sums

and

they

nobody

actually

reads

the

result.

Until

the

kernel

is

finished,

the

hardware

itself

does

not

have

a

you

know

two

double

atomic

update,

so

you

have

to

break

it

up

into

two.

A

So

why

why

do

we

encourage

users,

the

descriptive

versus

prescriptive

argument,

so

our

openmp

loop,

more

directly,

leverages

years

of

our

open,

acc

scheduling,

kernel

generation

and

openmp

inside

parallel

four

allows

some

things

that

we

view

as

parallelism,

limiting

so

like

master

directive,

single

barriers,

etc

or

openmp.

Api

calls

so

lots

of

code

that

people

are

porting

may

call

things

like

omp

get

threadnum

or

something

like

that.

A

What

we've

found,

though,

is

you

know

the

converse.

The

cuda

tool

chain

does

a

pretty

good

job

of

removing,

or

at

least

minimizing

the

overhead

that

we

have

to

insert

into

the

you

know

the

prescriptive

openmp,

but

we

haven't

gained

a

lot

of

experience

yet

with

complicated

kernels

so

whether

that

still

holds

up

or

not

we'll

get

to

see.

So

I

I

think

the

jury

is

still

out.

A

A

A

A

A

A

A

A

Openmp

talks

a

little

bit

more

about

the

reference

counts.

You

know

it.

It's

actually

implemented

the

same

way

in

openacc

and

openmp.

So

the

present

table

that

I

talked

about

earlier

has

reference

counts

for

how

many

times

you

know

the

data

has

been

referenced

or

kind

of

pushed

onto

the

stack,

and

when

you

leave

a

data

region,

it

just

reduces

the

reference

count.

A

Target

update

just

again

exactly

like

openings,

you

see

just

different

syntax

lmp

target

from

again.

You

might

need

this

before

you

use

mpi

send

or

something

like

that

array.

Shaping

is

again

the

same

between

open,

htc

and

openmp,

and

here's

the

same

slide

just

to

show

the

corresponding

data

directives

between

openhcc

and

openmp.

A

So

it

is

a

little

different

than

openacc

openacc

had

q

numbers

that

map

almost

one

to

one

between

streams,

open

a

openmp

uses

depend

clauses

and

what

you

put

in

a

depend

clause

is

really

kind

of

a

just

a

marker.

It's

convenient

to

use

a

variable,

and

then

you

kind

of

use

that

same

variable

independent

clauses

and

map,

whether

the

dependency

is

in

or

out

and

then

and

then

also

add,

a

no

weight

clause

for

asynchronous

behavior

on

that.

A

A

A

So

it's

kind

of

looking

forward

that

says

if

I

call

omp

get

cuda

stream,

it

returns

me

the

stream

number

that

I

can

subsequently

use,

and

then

I

can

use

that

stream

number.

It's

exposed,

actually

the

cuda

stream

to

call

coup

fft

set

stream.

So

I'm

you

know,

instructing

the

cuda

fft

library

to

use

that

stream,

and

then

I

can

use

the

openmp

depend

clause

on

stream

and

no

weight

to

put

all

this

work

using

depend

with

end

stream

into

the

same

stream,

asynchronous

stream.

A

So

this

update

2

will

use

the

end

stream

under

the

hood.

Our

qffts

we've

specified

those

to

use

and

stream

the

scale

of

c

we'll

use

n

stream

and

then

the

target

update,

reading

the

result

back

we'll

use

that

cuda

stream

and

then

finally,

I

say

omp

task

weight

and

that's

where

the

synchronization

will

occur.

And

then

the

result

is

back

on

the

cpu.

A

A

A

Fortran

array,

syntax

and

device

code.

Basically,

it's

not

really

supported

in

our

openmp

compiler.

Unfortunately,

there's

a

couple

of

ugly

work:

arounds,

you

can

say

target

teams

loop

and

create

a

loop

that

goes

from

one

to

one,

and

we

will

accept

this

error,

syntax

here

and

kind

of

do

the

right

thing

or

you

can

explicitly

write

out

the

array

syntax

here

on

the

left.

H

colon

equals

zero,

adding

a

loop,

so

we

don't

have

a

good

solution

for

fortran

array,

syntax

at

the

high

level

in

openmp.

A

Yet

so

this

is

my

last

slide.

So

I've

mentioned

a

few

times

that

our

openmp

compiler

is

still

sort

of

work

in

progress

and

in

our

next

release,

22.2,

which

will

be

out

in

february.

These

are

the

things

we're

working

on

so

openmp

and

openacc

in

the

spec

define

array

reductions.

So

that's

a

reduction

not

just

on

a

scalar

but

on

an

array

element

or

an

entire

array

or

a

section

of

an

array

and

we've

been

working

on

that

for

a

while.

A

A

The

target

task

no

weight

like

the

example

I

showed,

and

how

that

maps

to

cuda

streams

that

should

be

pretty

solid

in

22.2

and

as

again,

that's

important

for

you

know:

interoperability

with

cuda

libraries

support

for

orphan

parallel.

Like

the

example,

I

showed,

a

parallel

loop

in

a

user

function

called

from

a

kernel

that

should

be

working

in

most

cases

in

22.2,

still

working

through

some

of

the

metadirective

support

issues

and

we're

always

working

on

performance.