
From YouTube: 2. Introduction to GPU Computing

Description

From the NERSC NVIDIA RAPIDS Workshop on April 14, 2020. Please see https://www.nersc.gov/users/training/events/rapids-hackathon/ for all course materials.

A

So, hi, I'm [name inaudible], and I've been working on the RAPIDS engineering team for a little over a year now. My work is primarily related to Python workflows and APIs, with some depth around the Python API side of things. Today I'm going to give you an overview of GPU computing: what it is and why it's become so relevant. Importantly, before I begin the presentation, I'd also like to thank Nick and other folks at NVIDIA who put these slides together and prepared them so that I can use them today.

A

So I think it's known that GPUs are useful for gaming. Generally, when people think of GPUs outside of, say, supercomputing/HPC or industry settings, they think of them as something used for gaming. GPUs are useful for gaming, or for things like video rendering, because rendering video is all about representing curves, polygons, and shapes on the screen. That turns out to be compute-intensive: it cannot be done very quickly, or it's difficult to do in real time.

A

You want to take some task that's embarrassingly parallel and do many computations on it. Instead of rendering pixels, you want to calculate values that are useful for your simulation. That's sort of the motivation behind why people thought GPU computing is more than just video games or video rendering.
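The "embarrassingly parallel" idea can be sketched in Python. This is a CPU-side analogy, not GPU code. `simulate_cell` is a hypothetical stand-in for a per-element computation. Because each element depends only on its own input, the work can be handed to a pool of workers in any order.

CPython threads won't actually speed up pure-Python math because of the GIL. The point here is the structure, which is the same structure a GPU exploits by assigning one thread per element.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def simulate_cell(x: float) -> float:
    """Hypothetical per-element computation; depends only on its own input."""
    return math.exp(-x * x)


def run_serial(inputs: list[float]) -> list[float]:
    # One element after another, like a single core working through the list.
    return [simulate_cell(x) for x in inputs]


def run_parallel(inputs: list[float], workers: int = 4) -> list[float]:
    # No element depends on another, so the work can be split across workers;
    # executor.map still returns the results in input order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(simulate_cell, inputs))


if __name__ == "__main__":
    grid = [i * 0.01 for i in range(1_000)]
    assert run_parallel(grid) == run_serial(grid)
```

Because the per-element results don't interact, the parallel and serial versions produce identical output; only the scheduling differs.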

A


So, these days you can get desktop CPUs with up to 24 cores, and these cores are powerful. They have high clock speeds and can process tasks at a high rate, but they are not good at doing many tasks simultaneously because of the limited number of cores. On the other hand, if you look at the GPU architecture right here, you have smaller cores with lower clock speeds, but you have, you know, 500 to thousands of cores on a single GPU.
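A toy throughput model makes the CPU/GPU trade-off concrete. Everything here is an assumption for illustration:

- Tasks are identical and independent.
- Each core handles one task per "wave".
- Memory, data-transfer, and scheduling costs are ignored.

The core counts and per-task times are hypothetical round numbers, not specs of any real chip.

```python
import math


def idealized_time(n_tasks: int, cores: int, time_per_task: float) -> float:
    """Idealized model: tasks run in 'waves' of `cores` tasks at a time.

    Ignores memory bandwidth, host/device transfers and scheduling overhead.
    """
    waves = math.ceil(n_tasks / cores)
    return waves * time_per_task


# Hypothetical numbers for illustration only (not measured hardware specs):
# a few fast cores vs. many cores that are each 4x slower per task.
N = 1_000_000
cpu_time = idealized_time(N, cores=24, time_per_task=1.0)
gpu_time = idealized_time(N, cores=5_000, time_per_task=4.0)
```

With these made-up numbers, the many-slower-cores configuration wins by roughly 50x on a million tasks. For 10 tasks, the few-fast-cores configuration wins, since a single wave suffices either way and each fast core finishes sooner.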

A

So the whole idea behind GPU computing, GPU programming, or this whole CUDA programming model is this. You have a bunch of code, and one specific section of it is extremely compute-intensive and can be parallelized easily if you use the GPU. So what people traditionally do is take that particular section, the performance-critical one, and port that code over to use the GPU instead. The rest of the code can run as is on the CPU.
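The offload model described above is usually reasoned about with Amdahl's law, a standard result rather than something from the slides. If a fraction p of the runtime is accelerated by a factor s and the rest stays on the CPU, the overall speedup is 1 / ((1 - p) + p / s). A minimal Python sketch:

```python
def amdahl_speedup(parallel_fraction: float, accel: float) -> float:
    """Overall speedup when `parallel_fraction` of the runtime runs `accel` times faster.

    The remaining (1 - parallel_fraction) still runs at its original speed on the CPU.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if accel <= 0:
        raise ValueError("accel must be positive")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / accel)
```

For example, accelerating 90% of the runtime by 10x gives about 5.3x overall. No amount of GPU speed can push it past 10x, because the 10% left on the CPU still takes its full time. This is one way to see why moving the majority of the work onto the GPU, rather than only one hot section, matters.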

A

This kind of programming model is what's traditionally been used in GPU computing. With this workshop, and as you understand more about RAPIDS, you'll probably see how we can actually reverse this. With the amount of compute growing today, and the number of compute-intensive applications we work with, RAPIDS lets us run the majority of the code on the GPU and use the CPU for only part of the processing.

A

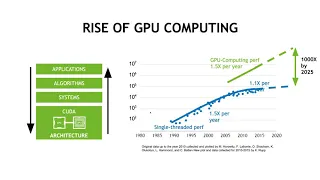

Again, following on from the previous slide about GPU computing becoming popular: as you see here, CPUs have been popular and have been around for a while. Some of you might be familiar with Moore's law, which states that the number of transistors on a chip doubles every couple of years. As you can see on this graph, performance kept increasing year over year by around 1.5 times. Then, somewhere in the mid-2000s, CUDA came along with GPU computing and was instantly able to accelerate applications, because certain applications are still so compute-heavy that it is difficult to achieve the performance you want on a CPU.

The other aspect is that Moore's law cannot keep going on forever. There's a limit to what the laws of classical physics allow, and what we're seeing now is that Moore's law is slowly, you know, slowing down. The generation-over-generation advances in CPUs are not as large year over year. It turns out GPUs haven't been affected by the same problem: GPU performance keeps growing year over year at the same rate, and that shows in these numbers.

Since 2011, the number of applications accelerated using the GPU and CUDA has increased significantly. The number of developers who are now computing and programming with GPUs has increased 22 times, and the number of people using CUDA has also increased significantly.
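The growth rates quoted here are compound rates. As a quick illustrative check, doubling every two years works out to about 1.41x per year, a little below the ~1.5x-per-year trend mentioned for the graph. The 10-year window used below is an arbitrary choice, not from the talk:

```python
def growth_factor(per_period: float, periods: float) -> float:
    """Compound growth: multiply by `per_period` once per period."""
    return per_period ** periods


# Doubling every 2 years expressed as a per-year factor: 2 ** (1/2), about 1.414.
moore_per_year = 2 ** (1 / 2)

# Over an illustrative 10-year window:
ten_year_moore = growth_factor(2.0, 10 / 2)  # 5 doublings -> 32x
ten_year_1p5 = growth_factor(1.5, 10)        # ~1.5x per year -> about 57.7x
```

The gap between 32x and roughly 58x over a decade shows how sensitive compounded growth is to small differences in the yearly rate.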

A

So these numbers show that, as time goes on, GPU computing has become more and more relevant. If you look today at almost all accelerated computing applications, CUDA is at the core, and it has been significant in a lot of them. In terms of libraries and frameworks, deep learning and machine learning libraries like PyTorch and TensorFlow all include CUDA support so that they can be accelerated using GPUs.

A

If you look at simulation tools and other tools used in HPC, like ANSYS, those are accelerated using GPUs as well. And look at how the performance of GoogLeNet has grown. Here you had the initial performance on a [unclear] GPU, and within three years you can run the same thing on a V100 with eighty times the performance.
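Taking the quoted 80x over three years at face value, the implied average per-year factor can be compared with the ~1.5x-per-year CPU trend mentioned earlier. This treats the 80x as a single compounded rate; it does not separate hardware gains from software gains:

$$
r_{\text{GPU}} = 80^{1/3} \approx 4.31 \ \text{per year}, \qquad 1.5^{3} \approx 3.4 \ \text{over the same three years}
$$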

A


What's the motivation behind all of this? There are a lot of tough scientific problems out in the world that demand massive amounts of computing. In some cases, just getting better performance is enough, and the generational advances in CPUs do the job. But for really difficult problems, like climate simulations or understanding protein structures, you really need to change your approach to computing, and that's where GPUs come in.

Take, say, understanding the HIV protein structure. This is something that traditionally takes, you know, 10 million [unclear], and as a civilization we don't really have that much time to spend on this one problem. That's where new architectures like GPUs come in: using GPUs and their massively powerful computational model, you can analyze the same thing in 16 days.


B

When we hit the trigger on this thing, 2,100 gallons of air goes through these accumulators, out these valves, and into all 1,100 of these tubes, each of which has a paintball at the bottom. Each of those paintballs will fly across seven feet of space and reach its target in 80 milliseconds. Hopefully, when it's all said and done, it's going to paint the Mona Lisa. [unclear] painting demonstration in ten, nine, eight, seven, six, five, four, three, two, one.

A

So hopefully that gave you a visual image of CPUs versus GPUs. The video illustrates how parallel cores work simultaneously, using the same data to perform those instructions, and how doing a lot of independent activity at once helps speed things up. So hopefully, with this...