
From YouTube: Workload Demo: Red Hat AMQ Streams (Kafka) with OpenShift 4 and OpenShift Container Storage 4

Description

Karan Singh, architect, describes the concepts and demonstrates Red Hat AMQ Streams (Kafka) on OpenShift Container Storage 4.

Learn more: openshift.com/storage

A

Hello everyone, my name is Karan Singh, senior architect in the Red Hat storage business unit. The topic of the day is Red Hat AMQ Streams, also known as Kafka, running on top of OpenShift Container Platform, backed by Red Hat OpenShift Container Storage. We will cover some concepts, followed by a live demo.

A

We will quickly go over some concepts of Apache Kafka and Red Hat AMQ Streams, touching upon some Kafka use cases and then storage use cases for Kafka. At the end, we'll go over a demo where we will deploy Red Hat AMQ Streams using operators and then launch some example Kafka producer and consumer applications, and finally we will do some failure injection testing. Let's begin. Apache Kafka is an open source project initially developed by LinkedIn and later contributed to the Apache Software Foundation.

A

Kafka underneath is a highly scalable distributed messaging system, which is high performance and fault tolerant. Kafka has a notion of producers and consumers. A producer produces messages and events, which are then ingested into Kafka. On the other side, consumer apps can consume the messages from a Kafka topic and do the post-processing. Kafka comes with a stream processing API, which makes Kafka a good fit as a real-time streaming engine as well. Kafka can also connect with other tools using connectors; for example, NoSQL databases, relational databases such as MySQL, and S3 can connect to Kafka and then use it. Kafka covers a variety of use cases, ranging from audit logs and messaging to web activity tracking data; all of that data can be dumped directly into a Kafka topic and then used by applications. Kafka is also good for metrics, log aggregation, and stream processing engines, so lots of streaming metrics data or streaming logs.
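The producer, topic, and consumer relationship described above can be sketched as a toy in-memory model. This is only an illustration and not how Kafka is implemented: `ToyTopic`, the group names, and the single-partition simplification are all assumptions made for the example.

```python
from collections import defaultdict


class ToyTopic:
    """In-memory stand-in for a single-partition topic (illustration only)."""

    def __init__(self):
        self.log = []                     # append-only list of messages
        self.offsets = defaultdict(int)   # next offset to read, per consumer group

    def produce(self, message):
        """Append a message and return the offset it was stored at."""
        self.log.append(message)
        return len(self.log) - 1

    def consume(self, group, max_messages=10):
        """Return unread messages for `group` and advance its offset."""
        start = self.offsets[group]
        batch = self.log[start:start + max_messages]
        self.offsets[group] = start + len(batch)
        return batch


topic = ToyTopic()
for i in range(3):
    topic.produce(f"Hello world {i}")

# Two hypothetical consumer groups read the same log independently.
first = topic.consume("audit-app", max_messages=2)
rest = topic.consume("audit-app")
other = topic.consume("metrics-app")
print(first, rest, other)
```

The point of the sketch is that the topic keeps the messages while each consumer group tracks its own position, so several applications can read the same data at their own pace.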

A

Data can land in Kafka and then be used by apps later, as and when needed. Then databases: an app, such as a web app, can simply write data to Kafka, which can later bring it into a database. This is a pretty popular architecture these days. GPS, radar, real-time mobile tracking, and this kind of incoming coordinate data can also come to Kafka. IoT is a good use case, where software, devices, and sensors can send their data into a Kafka topic on a Kafka cluster, and later it is moved to the respective persistent storage systems.

A

Red Hat AMQ Streams is a Red Hat product which is an enterprise distribution of Apache Kafka. Red Hat AMQ Streams aims to simplify the deployment of Kafka on top of OpenShift, and this is all based on an open source project called Strimzi. Basically, AMQ Streams provides hardened and secure container images for Apache Kafka and ZooKeeper. It also provides operators for managing, deploying, and maintaining the cluster: the Cluster Operator, the User Operator for users, and the Topic Operator for Kafka topics.

A

This all comes bundled with AMQ Streams, making Kafka simple on top of OpenShift. How does storage play in the world of Kafka? Storage plays a very vital role for Kafka, because the retention of messages and the rate of message processing all depend on the storage type used underneath. Red Hat OpenShift Container Storage, which is built on top of Ceph, provides a fault-tolerant, highly scalable storage system for Kafka.

A

Here are the Kafka brokers. All the Kafka brokers, which are basically Kafka pods, put in requests for PVCs, persistent volume claims, from OpenShift Container Storage. At the same time, ZooKeeper pods can also request PVs from OpenShift Container Storage, which ultimately provides persistence in Kafka. If you choose not to use a persistent layer like OCS, then the retention of the topics is ephemeral.

A

So if a pod is destroyed or a pod goes offline, your data is lost and Kafka needs to replicate and rebalance the data from the other pods, which is not very convenient. So, in the first place, use PVs from the OCS back end and make Kafka highly available. Then, in this case, if any of the pods goes down, Kubernetes will spawn a new pod and attach the same volume to the new Kafka pod, which means the recovery is way faster compared to ephemeral storage.
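The difference between the two recovery paths can be made concrete with a small toy model. It is a sketch under simplifying assumptions, not Kubernetes behavior in detail: `ToyCluster`, the pod names, and the `data-<pod>` claim naming are hypothetical, and the only rule modeled is that a persistent claim outlives the pod while ephemeral storage does not.

```python
class ToyCluster:
    """Toy model: volumes outlive pods only when they are persistent claims."""

    def __init__(self):
        self.claims = {}   # claim name -> data that survives pod deletion
        self.pods = {}     # pod name -> the list the pod currently writes to

    def start_pod(self, name, persistent):
        if persistent:
            # A recreated pod with the same name gets the same claim back.
            data = self.claims.setdefault(f"data-{name}", [])
        else:
            # Ephemeral storage starts empty on every pod start.
            data = []
        self.pods[name] = data
        return data

    def delete_pod(self, name):
        del self.pods[name]


cluster = ToyCluster()

# Broker backed by a persistent claim: data is still there after a restart.
cluster.start_pod("kafka-0", persistent=True).extend(["m1", "m2"])
cluster.delete_pod("kafka-0")
recovered = cluster.start_pod("kafka-0", persistent=True)

# Broker on ephemeral storage: the new pod starts empty and must re-replicate.
cluster.start_pod("kafka-1", persistent=False).extend(["m1", "m2"])
cluster.delete_pod("kafka-1")
lost = cluster.start_pod("kafka-1", persistent=False)

print(recovered, lost)
```

In the persistent case the replacement pod only has to catch up on what it missed while it was down; in the ephemeral case everything has to be copied again from the other brokers.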

A

Kafka also comes with Kafka Connect, another tool which can move the messages from the Kafka persistent layer on to object storage layers like S3, in this case Ceph S3 or OpenShift Container Storage. Another type of storage, which is under development in the upstream community, is tiered storage, where, based on the retention period of the messages, Kafka itself moves messages off the brokers.

A

The older messages are moved to S3 in this case, and when an application requests even older messages, Kafka can go and fetch those messages from S3 and serve them to the application. So that means it is a tiered storage concept in Kafka.
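The tiering idea can be illustrated with a toy model. This is a sketch only and does not reflect the upstream design: `TieredLog` is a hypothetical class, plain dictionaries stand in for broker disk and an S3 bucket, and retention is counted in messages rather than time to keep the example short.

```python
class TieredLog:
    """Toy tiered log: recent messages stay local, older ones move to a cold tier."""

    def __init__(self, local_retention):
        self.local_retention = local_retention  # number of newest messages kept locally
        self.local = {}   # offset -> message, stands in for broker disk
        self.cold = {}    # offset -> message, stands in for an S3 bucket
        self.next_offset = 0

    def append(self, message):
        self.local[self.next_offset] = message
        self.next_offset += 1
        self._tier()

    def _tier(self):
        # Move everything older than the retention window to the cold tier.
        cutoff = self.next_offset - self.local_retention
        for offset in [o for o in self.local if o < cutoff]:
            self.cold[offset] = self.local.pop(offset)

    def fetch(self, offset):
        """Serve from local storage first, falling back to the cold tier."""
        if offset in self.local:
            return self.local[offset], "local"
        return self.cold[offset], "cold"


log = TieredLog(local_retention=2)
for i in range(5):
    log.append(f"msg-{i}")

newest = log.fetch(4)
oldest = log.fetch(0)
print(newest, oldest)
```

The consumer calls the same `fetch` either way; only the tier that serves the message changes.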

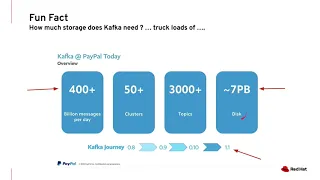

So here is the fun fact, and this is a slide I borrowed from PayPal. PayPal is processing four hundred billion messages a day with fifty Kafka clusters running three hundred-plus topics, and overall this system was consuming seven petabytes of storage capacity. And this data is not new.
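To put the quoted figures in perspective, the daily message count can be converted into a per-second rate. The sketch below only does that arithmetic; spreading the load evenly across the fifty clusters is an assumption made for illustration, not something stated on the slide.

```python
MESSAGES_PER_DAY = 400_000_000_000   # figure quoted from the slide
CLUSTERS = 50                        # figure quoted from the slide
SECONDS_PER_DAY = 24 * 60 * 60

# Average rate across the whole system.
per_second = MESSAGES_PER_DAY / SECONDS_PER_DAY

# Assumption: load is spread evenly over all clusters.
per_cluster_per_second = per_second / CLUSTERS

print(f"{per_second:,.0f} messages/s overall")
print(f"{per_cluster_per_second:,.0f} messages/s per cluster on average")
```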

A

This is based on Kafka 1.1. Currently we are on Kafka 2.3, which means the data is about a year old, and I'm very positive that the storage requirement for PayPal has grown even higher as we speak. So at scale, Kafka can be a serious consumer of storage, which means storage plays a vital role in Kafka and has to be treated nicely.

A

So, let's move on to demo one, where we are going to provision our Kafka and ZooKeeper clusters, running on OpenShift 4.2 and backed by OpenShift Container Storage 4.2, and then we're going to launch an example Kafka producer and consumer app. So let's go. First, create a project called AMQ Streams, and within this project we will install the AMQ Streams operator using OperatorHub. We'll select Streaming and Messaging, and then AMQ Streams. Make sure that the project is AMQ Streams.

A

You could install this globally across OpenShift Container Platform, but for the sake of simplicity, right now I'm installing this in a specific project. Let me select the project from here, and I will subscribe to this namespace. So this should install my AMQ Streams operator. I can go and watch my pods, and the pod is coming up. I can switch to my AMQ Streams project, and the pod is running.

A

This should show me my deployments, pods, and services, and whether they are ready. Okay, so my pod is running and my operator is running. The next step is to install or set up a Kafka cluster, but before that we'll make sure the storage class is set to OpenShift Container Storage. Getting the storage class should tell us which storage classes exist, and the default storage class is Ceph RBD.

A

This is running. Meanwhile, let's go and talk a little bit about this configuration file and what we have in here. This file is basic: I am installing a Kafka cluster, I am assigning a persistent storage claim of 100 GB to my Kafka cluster, and I'm assigning 10 GB of storage to my ZooKeeper pods. So, as you can see, the Kafka cluster is coming up, the containers are coming up, and it should take a few minutes. All right.
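The configuration file itself is not shown in the transcript. As a rough sketch of what such a resource could look like, the snippet below builds a Strimzi-style `Kafka` custom resource as a Python dictionary and prints it as JSON, which `oc apply -f` accepts alongside YAML. Several details are assumptions rather than facts from the demo: the `apiVersion` (it varies by release), the cluster name `my-cluster`, the `v1beta1`-style listener block, three ZooKeeper replicas, and reading "100 GB" and "10 GB" as `100Gi` and `10Gi`.

```python
import json


def persistent_claim(size):
    """Storage stanza asking for a persistent volume claim of the given size."""
    return {"type": "persistent-claim", "size": size, "deleteClaim": False}


kafka_cluster = {
    "apiVersion": "kafka.strimzi.io/v1beta1",  # assumption: depends on the release
    "kind": "Kafka",
    "metadata": {"name": "my-cluster"},         # hypothetical cluster name
    "spec": {
        "kafka": {
            "replicas": 3,                              # three Kafka pods, as in the demo
            "listeners": {"plain": {}, "tls": {}},      # assumption: v1beta1-style listeners
            "storage": persistent_claim("100Gi"),       # 100 GB per broker in the demo
        },
        "zookeeper": {
            "replicas": 3,                              # assumption: not stated in the demo
            "storage": persistent_claim("10Gi"),        # 10 GB per ZooKeeper pod in the demo
        },
        "entityOperator": {"topicOperator": {}, "userOperator": {}},
    },
}

print(json.dumps(kafka_cluster, indent=2))
```

No storage class is named in the sketch, so the claims would fall back to the cluster's default storage class, which matches the check made before this step.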

A

So the Kafka and ZooKeeper clusters are up, and we should be good to go with the next steps of the demo. We will verify the storage claims that Kafka and ZooKeeper have requested. As you can see, we have three pods of Kafka, and each of them has requested a 100 GB PV from OpenShift Container Storage, and similarly 10 GB for each ZooKeeper pod. So this is good. All right, next we will create a Kafka topic and then list the Kafka topics.
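The topic definition used in the demo is not visible in the transcript. A minimal sketch of a Strimzi-style `KafkaTopic` resource, built as a Python dictionary and printed as JSON, might look like the following. The topic name `my-topic`, the cluster label value `my-cluster`, the `apiVersion`, and the partition and replica counts are all assumptions chosen for illustration.

```python
import json

kafka_topic = {
    "apiVersion": "kafka.strimzi.io/v1beta1",   # assumption: depends on the release
    "kind": "KafkaTopic",
    "metadata": {
        "name": "my-topic",                      # hypothetical topic name
        # The label tells the Topic Operator which Kafka cluster owns the topic.
        "labels": {"strimzi.io/cluster": "my-cluster"},
    },
    "spec": {
        "partitions": 3,   # assumption: not stated in the demo
        "replicas": 3,     # assumption: one replica per broker
    },
}

print(json.dumps(kafka_topic, indent=2))
```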

A

The next step is to create our producer app, which will write contents to this Kafka topic, so we will apply the file. Meanwhile, while this is running, we will go and look at the contents of this YAML file real quick. It's a simple file: a hello world producer application which will write one million messages to my Kafka topic, so it will continuously write one million messages. We'll see that part.

A

The consumer will read the messages from the same topic. All right. We are now in the logs of my hello world consumer app. As you can see, there is a slight latency. However, you can see that the first window is generating messages into the Kafka topic, which is the consumer app, sorry, the producer app. In the second window, we are continuously receiving the messages from the Kafka topic. All right.

A

So, let's induce some failure into the system by destroying a Kafka pod which is backed by OpenShift Container Storage. There should be no glitch if we do that, because it is backed by a persistent storage layer. So let me change the shell. This is the list of containers, a watch command on my existing cluster pods. I'm going to delete the Kafka pod, like this. The pod is deleted, and we should see some changes here. So look at this.

A

This is terminating, so Kafka cluster node one has gone. At the same time, my consumer and producer apps are functional as they were; there is no outage here. What Kubernetes will do is spawn a new container for Kafka node 1, and it will mount the same persistent volume which was mapped to the previous container, making it available to this container. Kafka zero is now coming up.