From YouTube: WEBRTC WG meeting 2023-06-27

B

So we're going to cover use cases, ICE controller, some custom SDP stuff from Harald, and probably a few other things. We have meetings scheduled for July. We don't have one for August, because traditionally that's been vacation month, and then we're going to have a whole bunch of meetings at TPAC.

B

So you've probably all noticed by now, but the session is being recorded. Raise your hand to get into the queue and lower it to get out, and please wait for microphone access and tell us who you are. I don't think we'll use polls today, but we could. A little bit about document status: just because something's in the repo doesn't mean it's been adopted. That's a separate thing that requires a call for adoption.

B

Editor's drafts don't represent consensus; working group drafts do. And it's possible to merge PRs that lack consensus; we'll be talking about that at the end of the use case discussion a little bit later. Okay, so here's what's on the agenda. We have Elad talking about media capture and screen share, a little bit about WebRTC extensions from Fippo, and we're going to talk about WebRTC-NV use cases, ICE controller, and encoded transform codec negotiation from Harald. All right, so I'm going to turn it over to Elad.

C

But all things start like that, right? At the moment, we're only using it for setFocusBehavior(), and I suggest that if we want to make it more easily usable by other specs, some of which we will eventually merge into the current one, it helps to make it into an EventTarget. We already have a specific use case in mind in the Screen Capture Mouse Events spec, and we can think about other use cases; these are not to be debated right now.

C

Thank you. 263: improve upon CaptureStartFocusBehavior's "no-focus-change". At the moment, it basically goes like this: when you start capturing a tab or a window, there's the question of what needs to be in focus, whether it's the window that you've just started capturing or whether it's the browser that needs to still be in focus.

C

Thank you so much. So we've discussed this, and "no-focus-change" is actually a little bit ambiguous given Safari's model, because in Safari what happens is, when getDisplayMedia() is called, there is a macOS-level picker that allows you to basically click on the window itself that you want to capture, and at that moment "no-focus-change" is more ambiguous than it would be, for example, in Chrome. So next slide, please.

C

Thank

you.

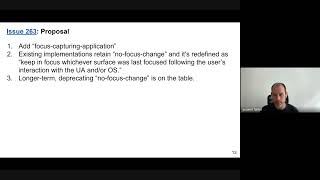

So

my

proposal

is

that

we

add

Focus

capturing

application,

and

this

way

we've

got

an

unambiguous

value.

The

existing

implementation,

existing

implementations,

like

Chrome,

can

keep

no

Focus

change

and

redefine

it

as

keep

in

Focus.

Whichever

surface

was

less

focused,

following

the

user's

interaction

with

the

user

agent

and

or

operating

system,

so

basically

for

Chrome.

This

would

still

mean

keep

the

browser

itself

in

focus

and

for

Safari

this

would

mean

Focus

the

captured

window

and

longer

term.

We

might

want

to

actually

deprecate

the

old

confusing

value.
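
The proposal above can be sketched as a small model of which surface ends up focused. This is only an illustration: "focus-captured-surface" is the other value already in the Screen Capture spec, while the PickerModel type, the resolveFocus function, and the way "no-focus-change" is resolved are assumptions made here, not spec text.

```typescript
// Hypothetical model; only the three enum strings come from the spec discussion.
type FocusBehavior =
  | "focus-capturing-application"
  | "focus-captured-surface"
  | "no-focus-change";

// How the user picked the surface (assumed simplification):
// "in-browser-picker": a browser dialog, so the capturing app was last focused (Chrome-like).
// "click-on-window": an OS picker where the user clicks the window itself (Safari-like).
type PickerModel = "in-browser-picker" | "click-on-window";

type FocusedSurface = "capturing-application" | "captured-surface";

function resolveFocus(behavior: FocusBehavior, picker: PickerModel): FocusedSurface {
  switch (behavior) {
    case "focus-capturing-application":
      return "capturing-application";
    case "focus-captured-surface":
      return "captured-surface";
    case "no-focus-change":
      // Proposed reading: keep whichever surface the user last interacted with.
      return picker === "in-browser-picker" ? "capturing-application" : "captured-surface";
  }
}
```

Under this reading, only "no-focus-change" gives different results in the two picker models, which is the ambiguity the new value is meant to avoid.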

F

I like option one; it's good. I wonder whether we want to keep "no-focus-change". So maybe we should add a warning note in the spec saying: hey, we plan to deprecate "no-focus-change". When Chrome is ready, Chrome might be able to deprecate it, but I think it would be good for the spec to be clear from the start about the direction we want to take, even though implementations will catch up later.

C

So I would prefer not to use language as strong as "we plan". I think that we need to think about it a bit more, but definitely having two values instead of three seems preferable. I just want to see if it's feasible. I need to check how many applications actually use the old value and how difficult it's going to be to migrate them.

G

Yeah, I mean, what I wanted to say was kind of almost covered, which is that I would really like to know whether deprecating it now is an issue or not, because obviously the best thing to do is to deprecate it as soon as possible, but I don't have any input on when "soon" is or when it's possible. But I would like to know.

E

Yeah, so for other browsers that are going to implement this, knowing whether we need to implement two or three values is going to be important, because this is a WebIDL enum. It will throw if you try to provide a value the browser doesn't implement, such as "no-focus-change", so applications that provide that value would get errors, potentially in Firefox, for example, which would be...
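
The enum concern can be illustrated with a small stand-in for a user agent's argument conversion. This is a sketch under stated assumptions: makeSetFocusBehavior and setFirstSupported are hypothetical helpers written for this example, and the only behaviour borrowed from WebIDL is that an unknown enum string passed to a method raises a TypeError.

```typescript
// Builds a stand-in for setFocusBehavior() in a user agent that implements
// only the listed values (hypothetical helper, not a browser API).
function makeSetFocusBehavior(supported: readonly string[]): (value: string) => void {
  return (value: string): void => {
    if (supported.indexOf(value) === -1) {
      // WebIDL enum conversion of a method argument throws a TypeError for unknown strings.
      throw new TypeError("'" + value + "' is not a valid enum value");
    }
  };
}

// Application-side fallback: try preferred values in order and
// skip the ones this user agent rejects.
function setFirstSupported(
  setter: (value: string) => void,
  preferences: string[]
): string | undefined {
  for (const value of preferences) {
    try {
      setter(value);
      return value;
    } catch (e) {
      if (!(e instanceof TypeError)) throw e; // only swallow enum rejections
    }
  }
  return undefined;
}
```

An application written against a three-value browser would need a fallback like this to keep working in a browser that ships only two values.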

H

If we have deployed code that uses this, then the usual maxim is that you don't break running code. So if running code uses "no-focus-change", then we need to make both "no-focus-change" and "focus-capturing-application" available for some time and then remove the less used option. And so I would say...

C

I agree here. I don't think that it makes sense for every given user agent to implement a different subset. By the way, it's not like there are no arguments for retaining "no-focus-change", right? You can imagine that an application, for example, doesn't care one way or the other, and all it wants is to minimize the changes of active window on the screen, so as not to annoy the user. So maybe "no-focus-change" is just a way for the application to say, whatever.

C

Just don't move too much, which could be useful, for example, for users with accessibility issues, maybe. So I think that it would be good to first take the consensus that we've got here about adding an additional value that helps Apple, and then discuss separately whether we want to deprecate the old value.

C

Third and last issue. This is a proposal to allow applications to avoid riskier display surfaces. I'm going to use Chrome as an example, but obviously you can all make the jump to your own browsers. I'm proposing to add a constraint that would allow an application to say: hey, only offer tabs and windows, under the assumption that the current screen is the most risky option. Next slide, please.

C

So I just want to explain a sample use case that motivates this. Let's imagine that there is some video conferencing application called HypoComp, for hypothetical company. Sorry, that's not the application, that's the company. They've got employees, and the employees routinely speak to each other over video conferencing applications, specifically one called VC App. And let's say that the admin wants to configure it so that whenever two people who are both HypoComp employees speak to each other, they can share anything.

C

You know, they can do troubleshooting, they can do anything together, just as if one of them had walked over to the other one's desk. But if anybody external is invited to the meeting, it is not actually possible to share your entire screen, because that poses a bit of risk to company IP.

C

There's also the issue of users joining the meeting on the fly, right? The application could theoretically terminate a share that's already started right before an external user joins, and that's going to be easy. But consider that you're already sharing a tab and now you want to dynamically change to sharing the entire screen, something that's almost possible in Safari, where you can change from a window to an entire screen.

C

Yes, security. So right now the spec, for getDisplayMedia(), disallows constraining user choice, but we do have a carve-out. We already have a precedent where we allow the application to say that it doesn't want to capture the current tab, and that is both to prevent the hall-of-mirrors effect, but also because we know that the current tab is actually the riskier one.

C

So if the application wants to avoid that one, it's also better for security. And I claim that when you capture the current screen, and most users actually only have a single screen, then it's exactly the same case. So if we're going to allow an option to remove that, we're increasing security, we're not reducing it.
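
A minimal sketch of how an application might build its capture options for the HypoComp scenario. The selfBrowserSurface key is the existing carve-out for the current tab; the monitorTypeSurfaces key is an assumed name for the proposed screen exclusion, and the interface and function here are illustrative rather than any browser's actual typings.

```typescript
type IncludeOrExclude = "include" | "exclude";

// Illustrative shape only; not taken from any browser's type definitions.
interface DisplayOptionsSketch {
  video: boolean;
  selfBrowserSurface?: IncludeOrExclude;  // existing precedent: leave out the current tab
  monitorTypeSurfaces?: IncludeOrExclude; // assumed name for the proposed screen exclusion
}

function buildDisplayOptions(externalGuestPresent: boolean): DisplayOptionsSketch {
  const options: DisplayOptionsSketch = { video: true, selfBrowserSurface: "exclude" };
  if (externalGuestPresent) {
    // Only offer tabs and windows when someone outside the company is in the call.
    options.monitorTypeSurfaces = "exclude";
  }
  return options;
}
```

In a browser, the returned object would be handed to navigator.mediaDevices.getDisplayMedia(); a user agent that does not know the extra key would simply ignore it, since unknown dictionary members are dropped.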

C

So, I'm glad that you like what I propose. I actually have a V2 that I would like to pitch to you. But regardless, when the user is offered the current screen, that's already a point where things can go off the rails, right? Because the user does not necessarily understand that they shouldn't choose that, and when they do, that costs time, that derails discussions during a meeting, and it's just not a good experience. And we don't currently have any preferences that allow us to remove that.

F

I don't particularly like it because, for instance, on macOS, the user might be able to dynamically switch to the screen, and this will not be Chrome UI, it will be OS UI. So there, Chrome could show the picker, and then the user would be able to override it, and then Chrome would, for instance, end the track. It seems like it's not totally consistent there, and currently our approach was always a preference, not a hard requirement, on this, allowing user selection.

C

Sorry to the people in the queue for monopolizing this, just a second. I think that for Safari this would actually be quite useful, because Safari has quite a lot of clicks right now, right? You first click, getDisplayMedia() is called, then you get a dialog that's delayed by a random amount of time, and then you need to say if you want a window or a screen. And now imagine if the application is...

C

Thank you so much. So if the application is already going to discard any selection of a screen: now the user clicks to select the screen, then they choose a screen, and then it gets discarded, and then the user needs to go through all of this again, this time choosing window, not screen. This is quite laborious. So I think that for Safari this would be an improvement. And also in terms of dynamic switching, the operating system could theoretically avoid offering dynamic switching to an entire screen.

G

So I like this in principle, but I think it's going to be very difficult to implement it in a way that isn't surprising to users. I suspect that in the end, "why can't I share my screen?" is going to be the dominant response to this, because it's unexpected. It varies from meeting to meeting, even if it's done right. So I think I'm kind of moderately against it.

G

Exactly. So the instant response to this screen is: well, why can't I share my screen? I could yesterday. That's what I'm going to hear from half the users: why have I suddenly lost the ability to share the screen? I could do it two minutes ago on another call. So I'm pro the security benefit from this, and I think it is possible to do it in a way...

C

I think that your application doesn't have to call that unless it thinks that it's got a way to communicate to the user that the screen will not be acceptable. And if you don't want that, then you don't need to specify that particular preference. Applications are free to not call that until such a time as they believe the browser has actually found a good model for communicating to the user why something is not possible. But sorry, Jan-Ivar and others are on the queue. I think Jan-Ivar first.

E

Oh yeah, so this one was a little tough for me, but thank you for presenting the use case. I think the use case makes a lot of sense, and I think I support this. Normally I dislike that we're limiting user choice, but I also feel like selecting the full screen is the risky choice, and I'm glad that we have ways to remove that. So overall I think I'm supportive.

A

Well, I'm glad I could make it, thanks Bernard. So this is the continuation of the pull request to request keyframes. Last time we agreed that adding a boolean to the encoding parameters is not the way to go because, unlike other parameters, it's not persistent. The new proposal is to add a second parameter to setParameters(): an optional sequence of VideoEncoderEncodeOptions, the same length as the encoding parameters, reusing the VideoEncoderEncodeOptions object from WebCodecs, which has a boolean keyFrame flag.
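
The shape of the proposed second argument can be sketched as a helper that builds one options entry per encoding. The interfaces below are simplified stand-ins written for this example; only the keyFrame member mirrors the WebCodecs dictionary, and the keyFrameOptionsFor helper and its rid-based selection are assumptions.

```typescript
// Simplified stand-ins for illustration; not the real WebRTC or WebCodecs typings.
interface EncodingSketch {
  rid?: string;
  active?: boolean;
}

interface EncodeOptionsSketch {
  keyFrame?: boolean; // mirrors the boolean flag on WebCodecs' VideoEncoderEncodeOptions
}

// Builds a sequence parallel to the encodings (same length, same order),
// requesting a keyframe only on the layers whose rid is listed.
function keyFrameOptionsFor(encodings: EncodingSketch[], rids: string[]): EncodeOptionsSketch[] {
  return encodings.map((encoding) => ({
    keyFrame: encoding.rid !== undefined && rids.indexOf(encoding.rid) !== -1,
  }));
}
```

Under the proposal, an array like this would be passed alongside the unchanged parameters, for example as the second argument of sender.setParameters(); that two-argument form is what is being discussed here, not a shipped signature.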

E

Oh yes, so thank you. I like this improvement, I think that's the right way to go. As far as reusing WebIDL dictionaries from other specs, I don't think that's a good idea. I mean, we've done that before; for instance, getDisplayMedia() and getViewportMedia() had the same options object. But then, when things get added in the other spec, suddenly you've implicitly taken on extra code, and people will read that to mean... Just because someone adds some new WebCodecs options doesn't mean we want to support them here.

A

I think that is the right approach, and we probably want to keep a dictionary with keys that are compatible with the WebCodecs one as much as possible. If any encoding parameters or encode options make sense to implement in WebRTC as well, we might even consider them in the future. For now, if we only had keyFrame, that would be fine by me.

F

Yeah, I mean, I was dropped from the call, so maybe this feedback was already given, but the WebCodecs dictionary is per frame and setParameters() is not per frame, so there might be discrepancies between the WebCodecs dictionary and the one we want. That's why I prefer that we have our own dictionary, and keyFrame will be...

I

Yes, one more question regarding setParameters(). It's supposed to return a promise and resolve the promise when all the parameters have been applied. Do we want setParameters() to resolve when the keyframe has been created and sent, or do we want it to resolve when all the other parameters have been applied, knowing that the keyframe will follow soon afterwards?

B

So, to remind you, we had this list of proposals from the May meeting. The first was to rename the document to "extended use cases", and we've done that. I'm going to talk about one of the proposals and Tim will talk about two more of them. The one I'll talk about is removing use cases that don't add new requirements.

B

We'll also probably cover this in the July meeting, along with some of the other items on this list, and kind of work through them over time. All right, so I have two PRs relating to removing use cases that don't add new requirements. One of them relates to section 3.9, which is reduced complexity signaling.

B

The other relates to the machine learning use case, which is section 3.7. The thing I'd like the working group to consider is whether we need these use cases, and if we do, what value they add. All right, so section 3.9 is reduced complexity signaling. Tim, do you want to talk a little bit about what you had in mind here?

G

Sure. I mean, it's really a question. The original idea was to get something, a URI, that you could drop into the src attribute of a video or an image, and magic would happen underneath, and suddenly you'd get a stream into that element without you having to do any kind of conscious offer/answer. And the objection at the time was, well,

G

Hey, you can do this with a JavaScript library. And this is fair, and that's kind of been proven by the fact that that's pretty much what WISH is doing with WHIP and WHEP, currently just for capture, but they are talking about doing it for playback as well. So the argument that there can be a library for this is sort of borne out. So I think that there's a good case for saying, well, yeah.

G

My view is this feeling that I still have, which I think the rest of the group doesn't have, that the role of this document is somewhat to reassure developers that their minority use of WebRTC is a valid one, that they're not going to have the rug pulled out from underneath them by changes in the spec in the future. I think that's the only reason for keeping this, and as far as I remember from the non-consensus last month, I don't think anybody else in the group has the same opinion as me on that.

D

Yeah, so I'll go on since I raised my hand. I think this particular document should only focus on things that bring new requirements, but I do agree with you, Tim, that there is value in documenting somewhere usages that aren't driving new requirements but that people have started to rely on, just so that when we change something we can refer to this and verify we're not creating a new performance issue or a new blocker. I'm skeptical.

B

All right, so the next one is PR 113, which is removing the machine learning use case. This one also doesn't add any requirements beyond those for Funny Hats, which is section 3.6. Now, one subtle difference between this and Funny Hats is that Funny Hats really is largely about doing things with graphics manipulation, so it included requirement N22, which was efficient media manipulation via GPUs, and that requirement, I think, made some sense for Funny Hats.

B

But it's included here as well, and that, if you think about it, is a little bit odd, because efficiently doing media manipulation is not quite the same thing as efficient machine learning. As an example, there are different ways to accelerate machine learning. For the case of audio, there are now NPUs, which are in many cases preferred to GPUs for accelerating machine learning.

F

We are not defining this, right? The only thing that we are defining is access to video frames. We want it to be efficient, so it's still a requirement that somehow we need to manage, and I think we have done it as best we can. So I'm fine either way.

As

long

as

we

understand

which

group

of

requirements

and

their

requirement

scope

is

related

to

the

significant

filing.

Only

and

not

the

web

genome.

D

Ensuring, or reducing the risk of, memory copies across the various ways machine learning could happen. As you say, compared to media manipulation, machine learning could happen through the CPU or other processing units. Where would any requirements from that need be derived? In the context of encoded transform, but...

B

Yeah, so that is an important question, Dom. In fact, in the Media Working Group we are discussing, for example, color conversion with VideoFrame.copyTo(). There's now, I believe in the WebGPU working group, a function to convert between VideoFrame and WebGPU external textures. So these things are being worked on, but not in this working group. Tim?

G

I think, just to put this in context, this is another one which has been pretty much overtaken by events. You could argue that it's been achieved. When it was written, we had no idea what these APIs would look like, and now they're there. So I think it's fair to say that it's done its job to some extent. I mean, it didn't turn out the way we might have expected, but it's available now.

B

Right, yeah. It's not even a given today, right? Because there's actually activity in the Media Working Group to try to remove copies, as Dom said. So it's not like it's even proven that we can do it. Certainly there's a lot of machine learning stuff we know we can't do on the web efficiently.

G

How do we shape the documents in the future? We're saying that this document shouldn't do some things, and we've also said last month that we felt that explainers were a really useful thing to do. So I kind of want some sort of written guidance about how this should work.

G

You know, what the relationship is between an explainer and the NV use cases document, if there is one. And conversely, if we're making API changes, maybe they should refer back to a document or add a use case. All the small changes in capture that have come along have been about use cases of, well, hey,

G

We want to protect the user from doing this, or we want to make it easier for the user to do that. But there are no use cases directly in the document that talk about them at all, even at a high level. So I'm trying to work out what we think the relationship should be between any new documents we might want to create, the existing document, and explainers. Not a very coherent question, but I think we need a shape.

D

Here's a strawperson proposal, and I haven't put a lot of thought into it, but I wonder if a solution might be to keep this use case document for use cases and requirements that don't have a home yet, and so in particular don't have an API, which hopefully is accompanied by an explainer that indeed would have the more detailed use case and requirements analysis. So basically, this would be a place where we park things that we know we want to do.

E

Yes, so I think this use case document serves a different purpose than explainers. This document is where we describe the problems we wish to solve. An explainer, I think, is what comes out at the end, and is necessary for TAG reviews as well. It explains the API we have built to solve the problems that we described here originally.

D

Yeah, I think we already have a not thoroughly applied rule that any significant proposal should come with an explainer, and one reason for that is we need it for TAG review. And again, an explainer is supposed to describe fairly detailed use cases that let you understand what you want to achieve. So in my mind, when someone is already at the stage of formulating a proposal,

D

We should insist on having the explainer that accompanies it, not necessarily on having it described as a use case in the document. In particular if we move towards that sequencing I was describing, where this document is for the early phase, when we think we know we need something but aren't quite sure how to approach it, as opposed to the later phase, where we have a proposal and it fulfills that set of use cases.

G

So I think, if I can try and summarize that discussion, the feeling is that this document should, in fact, be a queue. It's the input queue of things for which there are not yet explainers or proposals, and as things are either taken off it or age off it, it gets shorter and longer.

G

So it's a much more dynamic document than it has been, which I think I'm in favor of. And maybe it needs to be renamed again, to "future use cases" or something, to be clear that we don't do them yet. Which then leaves my remaining worry, that we have a bunch of use cases that we really want to support, that we do support,

G

that we implement, that work, that aren't in RFC 7478 and that we don't claim to be trying to do at a high level. I think we need some way of resolving that, and I don't think it's this document. I think it's clear from the group that this document needs a much narrower, much more dynamic purpose, but I do think that we need another place to say: these are the kinds of problems that WebRTC is solving today and will continue to solve in the future.

H

Yeah, so I worry a bit, because of the difficulties I had in getting things into the queue, where it actually seemed to be easier to write an explainer of how I want to do something. And I mean, explainers should include why I want to do something and how I want to do something. It's easier to write that than to get consensus for adding a use case saying "the working group should have a solution for this" to this document.

G

That's true from where you're sitting, but getting things done from where I'm sitting, by a browser vendor, is a big ask. I think there's a bunch of developer requirements that are pretty much impossible to implement without getting somebody on board to help implement them.

J

So this slide is really just a call for feedback on the PR about the issue that I talked about last month, which is to prevent candidate pair removal. There has been some feedback, so thank you very much for that. We can skip this, as there's just one topic of feedback that I would like to discuss later. So, if you can skip ahead two slides, please. We can skip this. Yeah.

J

So, while I'm waiting for feedback on the PR, I went ahead and created issues for the next two items on the list. These issues address what an application would do with the candidate pair once it has prevented it from being removed, and there's really two things we can do: either remove it at some point in the future explicitly, or use the candidate pair to transport media.
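
The three steps discussed here (veto a removal, later prune explicitly, or select the pair for media) can be sketched with a toy registry. Everything in this block is hypothetical: the class, its method names, and the callback that stands in for a cancelable event are assumptions for illustration and do not reflect the API shape in the PR or issues.

```typescript
interface PairSketch {
  local: string;
  remote: string;
}

// Toy stand-in for the set of candidate pairs an ICE agent maintains.
class PairRegistrySketch {
  private pairs = new Map<string, PairSketch>();
  selected: PairSketch | undefined = undefined;

  // Returning false stands in for calling preventDefault() on a cancelable removal event.
  onRemovalProposed: (pair: PairSketch) => boolean = () => true;

  private static key(pair: PairSketch): string {
    return pair.local + "|" + pair.remote;
  }

  add(pair: PairSketch): void {
    this.pairs.set(PairRegistrySketch.key(pair), pair);
  }

  has(pair: PairSketch): boolean {
    return this.pairs.has(PairRegistrySketch.key(pair));
  }

  // The ICE agent wants to drop a pair; the application may veto it.
  proposeRemoval(pair: PairSketch): boolean {
    if (!this.onRemovalProposed(pair)) return false; // kept alive by the application
    return this.pairs.delete(PairRegistrySketch.key(pair));
  }

  // Option 1: the application explicitly removes a pair it retained earlier.
  prune(pair: PairSketch): void {
    const key = PairRegistrySketch.key(pair);
    const known = this.pairs.get(key);
    if (known === undefined) throw new Error("unknown candidate pair");
    this.pairs.delete(key);
    if (this.selected === known) this.selected = undefined; // a new selection would be needed
  }

  // Option 2: the application uses a retained pair to transport media.
  select(pair: PairSketch): void {
    const known = this.pairs.get(PairRegistrySketch.key(pair));
    if (known === undefined) throw new Error("unknown candidate pair");
    this.selected = known;
  }
}
```

The sketch is synchronous for brevity; in a real agent, selection involves connectivity checks with the remote side, so it would complete asynchronously.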

J

The exact implementation of this is open to discussion. If we are going to be deviating from the spec by allowing the application to actually change the ICE behavior, then it's open to discussion exactly what approach we should take, whether a browser is free to implement it in a certain way, or whether the application would need to ensure that the other end understands and follows the same protocol for selecting the transport. So, to make things a bit clearer:

J

The controlled side will respond to that, and on receipt of a successful response, the controlling agent will set the pair to be nominated. The controlled side will do the same thing on receipt of the first request, and that's when the pair gets nominated. The alternative would be to just take the pair that the application is indicating and start sending media on that immediately. Presumably the pair is still active, so it is safe to do that.

J

Yeah, so this slide, let's maybe take this in reverse. Since I spoke just now about how setting candidate pairs might work, maybe we can talk about that first. The slide is phrased a bit in terms of what the API looks like, but I think the discussion is a bit broader than that: what should be the approach by the browser to actually set a selected candidate pair, and then how does that reflect in the API?

J

It

is

an

asynchronous

operation,

so

I

think

it

makes

sense

to

return

a

promise

that

gets

resolved

at

a

future

point.

For

instance,

when

the

STUN

checks

are

successfully

received

and

a

pair

is

nominated,

the

alternative

would

be

to

return

immediately

and

then

let

the

selected

candidate

pair

change

event

actually

notify

the

application

that

the

selection

was

successful

or

it

was

not

successful.

Based

on

the

lack

of

an

event

so

thoughts.

J

E

J

E

B

J

E

J

E

J

So

further,

along

in

the

list

of

additions,

is

another

event

for

deletion,

which

cannot

be

canceled,

and

so

that

would

be

an

event

that

indicates

when

pruning

is

complete,

for

instance,

and

the

candidate

has

been

deleted,

so

that

I

think

makes

more

sense

with

regards

to

failure.

The

only

reasonable

reason

why

I

can

think

this

would

fail

is,

if

you

are

trying

to

prune

a

candidate

pair

that

doesn't

actually

exist,

because

you

just

pass

a

dictionary

that

can

be

anything

in

which

case.

E

J

For

a

sense

selected

again,

depending

on

how

we

do

the

implementation,

presumably

there

are

things

that

can

fail

asynchronously.

So,

for

instance,

if

we

do

the

STUN

USE-CANDIDATE

back

and

forth,

then

the

response

could

be

lost,

in

which

case

it

fails.

Asynchronously,

in

which

case

the

promise

resolves,

fails.

Asynchronously.

F

F

J

So

yeah,

so

in

the

issue,

I

have

actually

mentioned

that

it

is

perfectly

valid

to

prune

the

currently

selected

candidate

pair,

in

which

case

it

would

be

the

equivalent

of

the

candidate

pair

going

away

for

any

other

reason,

for

instance,

the

network

interface

going

away,

in

which

case

you

would

select

or

sorry

you

would

trigger

the

selection

of

a

different

candidate

pair

or

ice

failure.
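The pruning behaviour discussed here (an unknown pair is the one obvious failure case, and pruning the currently selected pair acts like that pair going away for any other reason) can be sketched as a pure function. Everything below is an assumption made for illustration: `prune`, `IceState` and `PairId` are hypothetical names, and "pick the first remaining pair" merely stands in for whatever selection the ICE agent would really perform.

```typescript
// Illustrative model only: these names are not taken from any WebRTC spec.
interface PairId {
  local: string;
  remote: string;
}

interface IceState {
  pairs: PairId[];
  selected: PairId | null;
  failed: boolean;
}

const samePair = (a: PairId, b: PairId): boolean =>
  a.local === b.local && a.remote === b.remote;

function prune(
  state: IceState,
  toPrune: PairId[],
): { state: IceState; unknown: PairId[] } {
  // A dictionary can describe anything, so some requested pairs may not exist.
  const unknown = toPrune.filter(p => !state.pairs.some(k => samePair(k, p)));
  const pairs = state.pairs.filter(k => !toPrune.some(p => samePair(k, p)));

  const current = state.selected;
  let selected: PairId | null = current;
  if (current !== null && !pairs.some(k => samePair(k, current))) {
    // The selected pair was pruned: fall back to another pair if one exists.
    selected = pairs.length > 0 ? pairs[0] : null;
  }
  // Losing the selected pair with nothing left models ICE failure.
  const failed = state.failed || (current !== null && selected === null);
  return { state: { pairs, selected, failed }, unknown };
}
```

Returning the unknown pairs separately is one way to surface the "pair that doesn't actually exist" case without deciding here whether the real API should reject or silently ignore it.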

F

Yeah

I

I

guess:

if,

if

you

dynamically

switched

to

the

selected

candidate

pair,

just

at

the

time

that

you

prune,

you

might

actually

prune

the

selected

candidate

pair

without

knowing

it

because

I'm

guessing

that

one

thing

is

happening

in

the

network

process

in

the

network

thread

and

the

other

one

is

of

course,

in

the

main

thread.

So

I'm

gonna

guess

that

there

might

be

some

race

conditions

there

and

some

valid

places

with.

F

So

maybe

it's

time

to

put

silica

and

later.

But

maybe,

if

you

start

with

trying

to

put

this

candidate

pair-

and

you

know

it's

not

in

the

selected

one

in

the

main

thread-

maybe

when

you

are

actually

in

the

network

thread,

you

will

not

like

to

to

prune

it

at

that

time.

So

I

don't

know.

Maybe

it's

worth

some

thought

here.

F

I

I

see

that

prune

is

taking

a

sequence

I'm

guessing

it's

for

tough

reasons,

but

you

usually

just

want

to

hop.

Is

that

correct?

Because

otherwise

you

could

like

pair

and

call

it

several

times

instead

of

having

to

create

a

sequence.

So

I

was

wondering

what

was

the

best

approach

here?

Maybe

it

should

be

like,

like,

like

you

pulling

candidate

there

to

Market

data

to

and

so

on,

I

don't

know.

What's

the

best

part

here.

J

G

G

J

G

J

K

J

Right

I

think

there's

definitely

an

opportunity

to

to

do

that,

because

the

fact

that

the

application

uses

these

implies

that

the

application

is

taking

a

certain

responsibility

in

making

sure

that

connection

will

actually

work.

On

the

other

end

as

well,

and

if

we

open

up

that

possibility,

then

we

should

theoretically

also

open

up

the

possibility

of

the

controlled

side.

E

Sorry,

I

didn't

jump

in

to

answer

that

question.

Sorry

I

had

a

different

question,

though

I'm

I'm

just

nervous

that

for

the

ice

transport

in

general,

it's

running

asynchronously

and

peer

connection,

it's

a

huge

state

machine.

How

are

these

chain?

How

do

we

imagine

these

changes?

Working

with

you

know,

negotiation,

ice

restart

and

that

kind

of

stuff

like

it

seems

like

there

could

be

a

lot

of

races.

E

J

E

G

Think

if

you

don't

then

you'll

end

up

completely

messing

up

things

like

bandwidth

estimation

and

all

sorts

of

other

fun,

so

I

think

I

think

you

definitely

need

to

do

that

and

the

two

sides

need

to

agree

and-

and

this

is

going

to

be

the

quickest

way

to

do

it.

There's

no

other,

like

you

could

send

a

thing,

a

message

over

the

data

channel

or

something,

but

it

would

take

longer

than

a

than

the

ice

protocol.

So

I

think

that's

the

right

way

to

do

it.

Yeah.

K

So

the

question

is:

would

a

newly

added

candidate

pair

be

able

to

override

your

previously

selected

candidate

pair

if

the

application

specifically

selected

a

candidate

pair

and

I,

don't

know

the

answer

to

that?

But

is

it

something

we

should

choose

either

way

I'm

I'm

inclined

to

say

that

the

application

should

be

in

control

and

not

have

it

changed

out

from

under

it,

but

I

could

see

an

argument.

K

The

other

way

on

the

question

of

I,

think

you

were

talking

about

the

candidate,

the

the

selected

candidate

pair

being

the

same

pair

for

both

sides,

and

Tim

was

talking

about

bandwidth

estimation,

there's

no

reason

the

candidate

pairs

need

to

be

both

the

same.

Both

sides

could

pick

different

candidate

pairs

and

the

congestion

control

will

work

just

fine

one

using

one

candidate

pair

and

another

using

another

pair.

There's,

there's

really

no

reason

they

have

to

be

the

same,

but

I

didn't

quite

understand

the

original

question

that

Tim

was

trying

to

answer.

G

C

J

Right

so

yeah,

so

you

right

now

with

ICE,

you

nominate

a

candidate

pair

by

sending

a

STUN

check

with

USE-CANDIDATE

attribute

set,

and

then

the

controlled

side

responds

to

that?

And

then

the

controlled

side

sends

its

own

check

and

it

waits

for

response

and

once

that

exchange

is

complete,

that's

when

you

actually

start

using

that

candidate

pair

with

I

think

8445.

You

can

still

send

media

until

candidate

pair

has

been

selected

on

any

valid

pair,

but

then,

once

awarded

pair

has

been

selected,

you

have

to

do.

J

K

Then

I'll

be

strongly

in

favor

of

sending

media

immediately

on

the

when

you

called

selected

on

you

want

that

to

be

as

responsive

as

possible,

and

there's

really

no

reason

to

wait

for

another

round

trip,

because

the

the

only

reason

you'd

select

it

is

if

it's

receiving

check

responses

that

are

working

already.

So

why

wait

for

another

round

trip

I

I?

Don't

see

a

good

reason

for

that,

so.

H

H

H

H

H

H

B

H

B

H

H

The

sender

encoder,

if

we

use

it

at

all

and

what

to

encode

to

so.

For

instance,

we

can

have

VP8

encoding,

setting

coding

codec

to

VP8

that

takes

the

output

of

the

encoding,

transform

it

using

SFrame

and

send

it

of

a

as

a

a

payload

type

that

this

tag,

that's

VP8,

business

frame

or

even

SFrame.

You

have

to

look

inside

to

figure

out

that

is

VP8

and

similar.

We

have

to

add

a

decoding

codec

on

the

other

end.

H

H

H

H

H

H

H

So

I

started

the

implementation

in

Mark

at

IETF

hackathon

in

March

I

haven't

followed

that

up

later,

but

yeah.

So

it's

not

that

functional,

but

I

didn't

find

the

showstoppers.

Yet

I

was

capable

of

getting

getting

the

new

SDP

into

the

list

of

things

being

negotiated,

but

not

I

didn't

get

that

first

to

get

them

back

into

the

list

of

things

that

had

been

negotiated.

E

E

H

G

Yeah

much

against

my

better

judgment.

I

do

see

the

necessity

for

involving

SDP

in

this

one

thing

I

worry

about

is

what

hap?

How

do

we

deal

with

the

kind

of

scope

rules

of

SDP?

How

are

you

going

to

deal

with

things

like

RTX

and

all

of

the

other

bits

where,

where

SDP

scopes

bleed

into

other

parts

of

the

of

of

themselves

like,

if

you

know

yeah,

how

does

that?

How

does

that

work.

H

It

would

be

correct

to

say

that

I

haven't

figured

that

one

out

yet

so

at

the

moment

we

have

a

very

imprecise

surface

for

deciding

what

kind

of

protection

features

we

want

to

turn

on

I.

Think

the

current

API

for

doing

that

is

called

SDP

munging

and

that's

sad,

so

I

think

we

should

figure

that

one

out

and

then

start

doing

it

also

for

this

case.

H

H

E

So

just

I

mean

this

is

early.

Maybe

this

is

bikeshedding,

but

I'm

wondering

I,

see,

there's

only

methods

to

add

things

not

to

remove

things

and

then

I

was

thinking.

Well,

maybe

why

isn't

this

part

of

set

configuration

or

something

like

that?

But

but

this

is

bikeshedding

I

guess,

so

we

could

do

that

later.

H

I

thought

I

thought

about

adding,

adding

adding

removing,

but

then

again

we're

able

to

turn

off

using

the

negotiated

codecs

by

just

removing

them

from

the

m-line

that

from

from

there

from

the

codec

preferences

list

right

right.