From YouTube: Interim WEBRTC WG Meeting December 2, 2020

Description

December 2 Interim WEBRTC WG meeting

A

A

Okay, so this is the agenda. We have Harald for 10 minutes and then Youenn for 10, then we get into WebRTC capabilities, for which we've allocated 15, then back to Youenn for 10. Then we have the NV use cases for 15 minutes, and then the rest of the agenda. So hopefully we can make it through all of this. I am going to turn over the floor to Harald for insertable streams, and then Youenn. So, did no one volunteer to scribe?

C

C

C

So that's how you would use it. Next: well, stage three is the complete break-apart, which says that you handle the media that goes in one direction and you also handle the feedback that goes in the other direction, such as "downstream has stopped, you don't need to send anything more" or "downstream wants something different from what you're giving it". And when Guido started implementing it, he said: hey, this is approximately the same amount of complexity, I'm going to implement stage 3 first. So, next slide.

C

C

C

E

F

F

F

F

C

C

G

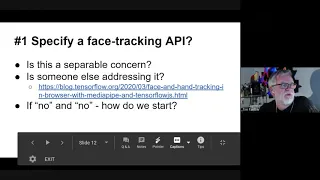

Tim? Yeah, I think the way to look at this is that this use case is for funny hats, and you just can't do funny hats in a non-discriminatory way unless you've got a decent face tracker. So, coming to your question about whether it's separable, my view is that it isn't, because I don't see how you can address funny hats without having something that does face tracking. So it isn't a separable issue.

G

F

G

I think the risk, though, is that if we don't start somewhere, we're going to end up with everybody implementing this in 12 lines of bad JavaScript, and we'll end up with something that is deeply discriminatory and doesn't meet the goal of even doing face tracking properly for a substantial proportion of the users. So I'm not fixed on how this is done, but I do think we need to address it.

H

So from the WebCodecs side, I just wanted to mention that there are use cases for breakout box that have no need for face tracking. Zoom, for instance, would like to have access to your video frames, but that doesn't necessarily... I guess I take that back. Actually, as I say it, they may actually care about face tracking. Still, I think there are use cases in WebCodecs generally for breakout box without face tracking.

G

Sure. I mean, for example, just turning the whole thing into black and white, or modeling an old TV, or something like that: there's tons of use cases. But specifically, we've called out face tracking as the kind of headline use case for this category, and so either we need to take that out of the headline or we need to do it in a way that's acceptable.

E

To me it sounds like we probably should discuss that requirement in the NV use cases context, but it's separable enough from the media capture insertable streams context that it doesn't need to be part of that API, and so can be left as a separate exercise. And again, there is already an API (I don't know how good a fit it is with our model) being incubated in the WICG to do face detection.

E

B

C

I think it's reasonable to add an example using that API, and then we'll have to discuss again whether we need to do more. So I'll take that as instructions to the editor, and then we'll leave the bug open for now. So, status.

C

F

It's now available in Safari nightly. The last patch landed yesterday or today, I don't remember, and of course it's behind a feature flag. We decided, based on the discussions we had in the past months, to try a slightly different approach, which is to attach a transform to senders and receivers. So you can see that the API that is in Safari adds one attribute, transform, to either sender or receiver.

F

The idea behind this is that what this feature is doing is really getting a frame, doing some processing on it, and then passing it on either to the network or to the decoder. So it's really a transform. There's no idea of getting the frame and keeping it or doing something else with it. You get it, you transform it, and then you pass it along. So it seems nice, given that WHATWG Streams has this notion of a transform, to use that model.

F

So what we added is a simple interface, SFrameTransform, which has a constructor and a few methods to manage the encryption keys. Then all you have to do is set sender.transform to a new SFrameTransform, and then you can set the key, and the same on the receiver. So it's very simple, it's very convenient, and it works.

F

Well, we added the possibility to have the SFrameTransform expose readable and writable getters. Next slide. The idea behind that is that you can use the SFrameTransform not only as a whole but as a part of a generic transform. Let's say, for instance, that you have a transform that adds some metadata at the end of the frame and then does encryption.

F

There are cases like that, where you want to add, say, face tracking information and still encrypt it. There it's good if you can reuse the SFrameTransform to do the encryption, even though the appending of the metadata is done in JavaScript. By making the SFrameTransform a generic transform stream, it's very easy. Let's say we have readable and writable objects. Then you just do a pipeThrough: readable.pipeThrough to the SFrameTransform, and bang.
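
The two-stage idea described here (append metadata in script, then hand the result to an encryption stage) can be sketched with plain byte functions. This is a minimal illustration under stated assumptions, not the Safari implementation: `appendMetadata`, `splitMetadata`, `toyCipher`, `sendPath` and `receivePath` are hypothetical names, the 1-byte length trailer is an invented framing, and the XOR step is only a placeholder for the real SFrame encryption. In a browser, each stage would presumably be wrapped in a transform stream and chained with pipeThrough.

```typescript
// Hypothetical helpers for illustration; none of these names are browser APIs.

/** Append `meta` to `frame`, then one trailing byte holding meta's length. */
function appendMetadata(frame: Uint8Array, meta: Uint8Array): Uint8Array {
  if (meta.length > 255) {
    throw new RangeError("metadata must fit a 1-byte length field");
  }
  const out = new Uint8Array(frame.length + meta.length + 1);
  out.set(frame, 0);
  out.set(meta, frame.length);
  out[out.length - 1] = meta.length;
  return out;
}

/** Inverse of appendMetadata: split a payload back into frame and metadata. */
function splitMetadata(payload: Uint8Array): { frame: Uint8Array; meta: Uint8Array } {
  if (payload.length === 0) throw new RangeError("empty payload");
  const metaLength = payload[payload.length - 1];
  const frameLength = payload.length - 1 - metaLength;
  if (frameLength < 0) throw new RangeError("corrupt metadata trailer");
  return {
    frame: payload.slice(0, frameLength),
    meta: payload.slice(frameLength, frameLength + metaLength),
  };
}

/** Toy stand-in for the encryption stage: XOR with a repeating key. NOT SFrame, NOT secure. */
function toyCipher(data: Uint8Array, key: Uint8Array): Uint8Array {
  if (key.length === 0) throw new RangeError("key must not be empty");
  return data.map((byte, i) => byte ^ key[i % key.length]);
}

/** Sender side: metadata first, then the (placeholder) encryption stage. */
function sendPath(frame: Uint8Array, meta: Uint8Array, key: Uint8Array): Uint8Array {
  return toyCipher(appendMetadata(frame, meta), key);
}

/** Receiver side: undo the placeholder encryption, then strip the metadata. */
function receivePath(payload: Uint8Array, key: Uint8Array): { frame: Uint8Array; meta: Uint8Array } {
  return splitMetadata(toyCipher(payload, key));
}
```

The ordering is the point of the sketch: because the metadata is appended before the encryption stage, it is protected along with the frame, and the receiver has to reverse the stages in the opposite order.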

F

So with that model we see that the combos are working fine. In Safari, the SFrameTransform is able to process either audio frames, video frames or array buffers. In the end, an SFrameTransform is just taking bytes, encrypting them and sending them, so there's no reason all three of them could not be supported. So that's what we've done. Next slide.

F

But what I want to point out there is how insertable streams is used currently: people are implementing their transform as a transform stream. So what they're doing is taking the readable and writable that are exposed with the current API and piping them through a transform.

F

F

So the first thing is that we are really talking about a transform, so modeling it as a transform API actually makes sense, and it's beneficial. Also, if you look at Compression Streams or Encoding streams, they are not implemented as a pair of readable and writable.

F

They are implemented as transform streams. You could, for instance, imagine that you might gzip encoded frames as well, and then you would be able to just set the transform on the sender to this native transform. So this is why I think the model there is right. The other thing I would mention is that ReadableStream and WritableStream might not be enough in all cases.

F

So, questions. Oh no, go back. I have two questions for the working group, and I only have about two minutes left, so I'm not sure I will be able to get to the rest of the slides, but that's fine. The first question is: does the working group think that we could start adding the SFrameTransform to insertable streams, to have spec along the lines, for instance, of the API that is currently in Safari?

F

F

C

F

So far I'm not aware of any other case where we would like additional data to flow the opposite way. There is, for instance, better control of the encoder, but I do not see why we could not fix that in the same manner, adding additional APIs so that you can control the encoder somehow: you request a key frame, or you want to increase your QP, or whatever. It's not too difficult to add APIs there.

G

F

So I think that in that case we should consider that, on the encoder side, the transform is just post-processing of the encoder. So I would hope that the bandwidth estimation takes the transform into account. Somehow it's a difficult thing: you will need to talk to the encoder and say, hey, I asked you to do one megabit, but I'm measuring that you're doing more, maybe because of the transform. In which case it will be up to the browser to say.

I

Sorry, Jan-Ivar here. Purely from an API point of view, I think it's an improvement to have a transform, because I was a little worried about the existing API, where you have basically two ends of a cable and you're expected to plug them both in, from and to, correctly, and there's nothing to prevent you from... you know, that opens a lot of questions.

C

F

Maybe. It's very unclear now, because we're talking about this signaling in the other direction, but we have only very few use cases, and the current API is not tackling this. The transform API is only tackling it for requesting a key frame, which is already an improvement and is very similar there. So it's very difficult to judge both approaches based on something where what we want to solve is not precisely defined.

F

We would need to make big improvements in defining precisely what we want to solve with the other signaling channel before judging either approach there, and I'm happy to do it. But I don't want to make the decision depend on that, because I think we need to make progress, and currently, for what we want to solve, it seems that the proposal is an improvement.

C

F

A

E

I

F

F

There, it's supported in both cases. The other use cases are less documented, so it would be good to document them. With both approaches you get the encoded frames anyway, so if you want to send them to another transform or serialize them through a WebSocket, you can do that as well. There's no difference between the approaches.

A

F

I

F

Would you do that? Yeah, you would want to use WebCodecs there, where you have the encoder as a box, then you place the same SFrameTransform, and then you pipe the results of the transform to the WebSocket. That's the model there. But I think that it's a separate world, except for the idea that you could reuse the SFrameTransform, for instance, or JavaScript code that you would write. And as I showed in the example, the SFrameTransform, for instance, is taking encoded audio frames. So it's fully...

A

G

I

F

J

F

F

A

C

F

So if we go back to the questions, there are two questions. For the first question it seems yes, there is rough consensus, and for the second one we need to continue working on it to get consensus. So it seems there's one action item for Harald to provide use cases for the secondary channel, and maybe Bernard could also help, because it seems you have use cases there.

A

F

B

I

All right, thank you. So these are really slides that I prepared for an earlier meeting, and they were also presented to the PING a couple of months ago. We had the Privacy Interest Group review some APIs in both media capture and here in WebRTC, and specifically they were mentioning sender getCapabilities and receiver getCapabilities for audio and video.

I

I

So we already have fingerprinting notices in the spec about this, and Bernard graciously provided a document called "Graphics Hardware Fingerprinting", which looked into this and says that information relating to graphics hardware capabilities provided by WebRTC, stats and SVC may also be inferred from other sources such as WebGPU, WebGL and the Performance API, and therefore graphics...

I

I

Similarly, they said the stats API should require additional permission, due to two privacy harms. One is leaking communication in plain text. While that might be a useful consideration for WebRTC identity, which supports isolated streams, for regular streams the web page already has prior access to audio, video and text, so that is not an issue. The other is, again, hardware fingerprinting through the decoderImplementation and codec members in the stats API, and those are certainly covered.

B

B

D

So I guess you're familiar with the Media Capabilities API, but the idea is that a website should be able to just query for a particular video configuration and ask whether this will really be a smooth experience. It has three different results: it will say supported, smooth and power efficient.
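
As a rough illustration of how a page might act on those three results, the sketch below ranks candidate codecs by them. The field names `supported`, `smooth` and `powerEfficient` match the result shape the Media Capabilities API returns; everything else (`Candidate`, `score`, `pickCodec`, and the weighting that prefers smooth over power efficient) is a hypothetical choice made for this example. In a browser the results would come from `navigator.mediaCapabilities.decodingInfo()`; here they are passed in as plain objects so the logic stands alone.

```typescript
// Same three booleans the Media Capabilities API reports for a configuration.
interface CapabilityResult {
  supported: boolean;
  smooth: boolean;
  powerEfficient: boolean;
}

// Hypothetical pairing of a codec MIME type with its query result.
interface Candidate {
  mimeType: string;
  result: CapabilityResult;
}

/** Assumed ranking: unsupported is excluded, smooth counts more than power efficient. */
function score(result: CapabilityResult): number {
  if (!result.supported) return -1;
  return (result.smooth ? 2 : 0) + (result.powerEfficient ? 1 : 0);
}

/** Return the best supported candidate, or undefined if none is supported. Ties keep the first. */
function pickCodec(candidates: Candidate[]): Candidate | undefined {
  let best: Candidate | undefined;
  for (const candidate of candidates) {
    if (score(candidate.result) < 0) continue;
    if (best === undefined || score(candidate.result) > score(best.result)) {
      best = candidate;
    }
  }
  return best;
}
```

Keeping the first candidate on ties means the caller's own preference order still matters when two codecs report identical results.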

D

D

A

A

D

F

A

F

It's just a matter of technology, like being low latency or not, that people are choosing WebRTC versus non-WebRTC. So getting an API like Media Capabilities as a future replacement for receiver and sender getCapabilities seems good, especially since the model is slightly better: it's asynchronous, and we are seeing that a synchronous getCapabilities is difficult.

F

I

D

A

C

F

B

Do we give any sort of guidance in the spec on how to define what smooth means, or power efficient? Because one difference from playing a YouTube video or something is that, with encoding and decoding, what experience you get might depend a lot on, you know, is this a one-person meeting or a 50-person meeting?

B

F

So one example where it's a bit better as well: in Safari we are trying to say, okay, this codec is hardware supported, so it should be higher in the list of getCapabilities codecs. So we order the list, on the idea that the first codec is more power efficient, and that's the way we're doing it. But it's not great. It would be better if we could have just an attribute saying, yeah, it's more efficient.

H

H

The idea was that it was kind of a relative thing: if you have a software codec at a low resolution which, on the given device, draws roughly the same amount of power as, or less power than, a hardware codec on that same device for a different codec, then we should call both codecs power efficient, without being too worried about exactly how much power, or even whether or not it truly was decoded in hardware.

H

D

I

B

B

D

D

H

That's correct. When the Media Capabilities API was launched and these privacy discussions came up, the answer was mostly that, yes, we are exposing some information about your device performance, but we expect devices to fall into big buckets of common capabilities, such that every MacBook looks relatively the same as every other MacBook, and every Android phone looks the same as every other. With some caveats, right, but yeah.

I

All right. Well, I think, from our working group's point of view, this seems like the right way to consolidate information in the proper place, because I know there's always going to be fingerprinting risk here. I mean, websites today are already using things like navigator.hardwareConcurrency to try to figure out whether to...

J

I

D

I

J

A

F

Okay, so we are moving on to media capture. We identified that web pages tend to favor using camera presets. Typically, a camera works well for discrete resolutions like HD, SD and so on, and discrete frame rates as well. If the web page selects something else, then maybe the user agent will try to downsample or do some other things to match what the web page is asking for, but usually web pages try to get the native resolutions, because that's good for performance and image quality.

F

So there are ways to try to get to the camera presets. You can use resizeMode, frameRate ideal constraints, and width and height ideal constraints, but really it's hard to master, and it's also difficult to get it right cross-browser. And even if you do get a camera preset, there's no easy way to know whether the one that is found is the most suitable one.

F

So it's very difficult to get it right, and I believe that Henrik is working on exposing pixel formats as well, which is a nice idea, and things might get even more complex if we start exposing that. So the purpose here is to define a more straightforward API. Basically, we would expose the camera's native presets, similarly to how it's done by the OS for native applications.

F

Of course, we would only expose that after getUserMedia has been granted. That's why it's currently put at the MediaStreamTrack level. That way, there's not really any additional fingerprinting issue, because you already granted access to your camera feed. Next slide. So let's look at what it could look like.

F

We could add that somewhere else, like getCapabilities, maybe, but I thought that it's better to keep it separate, because getCapabilities is mostly about constraints. And then there's a simple example there, where you get the track from getUserMedia, you then select the best preset given the list of presets, and then you apply constraints and you're done with it. Next slide.
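
The "select the best preset, then apply constraints" step could look roughly like the sketch below. The preset API was only a proposal at this meeting, so every name here is an assumption: `CameraPreset`, `pickPreset` and `toConstraints` are hypothetical, and the selection rule (smallest preset that covers the requested size and frame rate, otherwise the largest available) is just one plausible policy. The object returned by `toConstraints` follows the width/height/frameRate shape that `MediaStreamTrack.applyConstraints()` accepts.

```typescript
// Hypothetical shape for one native camera mode.
interface CameraPreset {
  width: number;
  height: number;
  frameRate: number;
}

/**
 * Pick the smallest preset that is at least as large and as fast as `want`.
 * If no preset covers the request, fall back to the largest one available.
 */
function pickPreset(presets: CameraPreset[], want: CameraPreset): CameraPreset | undefined {
  const area = (p: CameraPreset): number => p.width * p.height;
  const covering = presets.filter(
    (p) => p.width >= want.width && p.height >= want.height && p.frameRate >= want.frameRate,
  );
  const pool = covering.length > 0 ? covering : presets;
  // Sort ascending by pixel count, then by frame rate.
  const sorted = [...pool].sort((a, b) => area(a) - area(b) || a.frameRate - b.frameRate);
  return covering.length > 0 ? sorted[0] : sorted[sorted.length - 1];
}

/** Build exact constraints so the user agent does not need to rescale or drop frames. */
function toConstraints(preset: CameraPreset) {
  return {
    width: { exact: preset.width },
    height: { exact: preset.height },
    frameRate: { exact: preset.frameRate },
  };
}
```

In a page, the result of `toConstraints` would be passed to `track.applyConstraints(...)` on the video track obtained from getUserMedia; requesting an exact native mode is what avoids the downsampling and frame decimation discussed in this session.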

F

F

Yes and no. It's up to the user agent to do that. What we would be planning to do is to not start spinning up the camera until the getUserMedia promise is resolved. So there will be a slight delay in the opening, and then, as soon as the promise is resolved and the JavaScript callback has synchronously executed, we have the final settings, and that is when we would open the device, so it would only be configured once. There would be a slight delay, but I think it's fine this way. And if implementations do not want to do that, they could still do what you're saying, which would be like what's available right now. And I believe that it will only happen the first time, because the second time you most probably have the device ID, so you probably also have the presets, and you're fine.

B

F

Well, what about the frame rate, for instance? And also, resizeMode is not always available. Or maybe you have a width and a height which are, let's say, 100 by 100, so maybe you will want 120 by 160, and then it's up to the user agent to crop it or do things like that. But it's really difficult. So then you would need to use aspect ratio as well, and that makes things complex.

F

F

A

B

B

I do think that, like Jan-Ivar said, if you use the ideals, it's probably going to work 99% of the time, but I'm sure there's some odd camera where the frame rate is messed up. So I'm wondering how important it is. I think the real importance might come in when it comes to avoiding things like MJPEG decompression, but that's not really part of this proposal. So, I don't know, I think it makes sense.

B

F

I think it's true for width and height. For frame rate, I looked a little bit at some of the macOS and iOS cameras, and they have frame rates that are widely different: for some it's 10, for some it's 11, for some it's 15 or 16. So if you select 15, for instance, maybe you will get it and that's fine, or maybe it will be 30 frames per second and then Safari will decimate that to 15 frames per second, and that's a bit sad.

A

G

So these are use cases that we already kind of mentioned at TPAC, and the idea is to try and expand on the NV use cases, basically learning from stuff that nearly works in WebRTC 1.0. So this isn't stuff that's totally out there or totally weird; it's things that people are trying and that don't quite work because we're missing some API points or some features.

G

G

G

G

G

So next slide, please. The detail on this one: basically, when a GSM call comes in and you're using WebRTC in the browser, typically on a mobile, something will drop. That is annoying for the person who's getting the incoming call, but it's not disastrous, because at least they know what's happening. But the remote user has absolutely no idea why their video call is just frozen. So the idea is to try and provide some mechanism for allowing the remote user to know what's happening.

G

Think of it as on-hold music, in effect, or a message or something. And then the second thing is that once that's over, it would be really good if you could get the call back. There's a sort of side thing there, which is that if, in the meantime, somebody's browsed away, it would be good to be able to get that back as well. I'm less stuck on that, but I definitely think that, you know, during and post an interruption...

G

A

G

Typically... I mean, I haven't tried it recently, but this is driven by the fact that a whole bunch of people have implemented what are effectively softphones as native apps using libwebrtc, because it's not possible to get this behavior correct in the browser. So my motivation is to try and fix that.

G

G

So next slide, then. This is one about things like baby monitors and respiration monitors. What you find in quite a lot of these use cases is that, a lot of the time, the people viewing the video are not that far away in network terms from the device, and this is different from video conferencing, where typically we're all spread out over the internet. What that means is that, if the internet drops, it's still useful to be able to communicate locally over the LAN.

F

F

G

E

G

C

G

G

Cool, so let's move on to the next one, then. Yeah, we kind of talked about this, but again, I think we do need to have some sense of face tracking in the group. I'm uncomfortable with it being delegated elsewhere without us at least having it in there, whether it needs to be a requirement here or not.

G

I'm prepared to discuss that, or back down on it, or something, but I do think we should have it somewhere in the group as a thing that we keep in mind, because it's definitely an issue for a lot of people. So maybe we'll move on, because we've talked about this a little already. Next slide, please. Yeah, this one.

G

This is really about the idea that, as people get more and more bandwidth, and more often get symmetrical bandwidth, it turns out that relatively small conferences can be handled by their own devices, without needing a server to handle the video mixing. So I'm interested in the idea that we could run an SFU and an MCU in the browser. You can kind of already do it with canvas hacks and Web Audio.

G

But I think if we actually had a requirement, and we had a use case for it in the document, then that would drive some of the thinking around maybe extending some of those APIs, or loosening some of them up, so that these things could be done. I suspect that some of the insertable streams stuff maybe plays in here, I don't know.

G

Yeah, maybe "SFU/MCU" is a mistake. Maybe I should have put, more generally, "conferencing engine". I've already done experiments with audio conferencing purely in the browser, and it works really well, actually shockingly well. The video equivalent is unsatisfactory, because you end up having to effectively do a video mix on a canvas, which actually works better than I expected.

G

It's the outbound. If I were hosting this meeting, you would all be sending your video streams to me, and I would be doing a mix that would produce this bar at the bottom on a canvas, and I would then send out a single stream to each of you, which was the bottom bar. What happens at the moment is that you end up re-encoding that bar for each recipient, whereas that's completely unnecessary.

G

G

G

But it may end up getting its data over a P2P link, rather than over an HTTPS link or QUIC or whatever. So the idea is to basically mock the result of a fetch, so that these things can use the same API and not care whether the data has arrived over a P2P link or over a plain fetch. And it turns out it's actually relatively simple to do.

G

G

I mean, I think that's kind of the point. All of these are things that actually already sort of work, but they do it in an ugly or inefficient way, and the aim of these use cases is to try and push a little more efficiency and beauty into the thing. So maybe next slide. Yeah, there's a bunch of use cases here for live betting and live auctions, where low latency but mass broadcast is what's required. There are a bunch of reasons why this turns out to be quite difficult to do at the moment. One of them is audio autoplay on a device that isn't sending media: the whole autoplay thing is horrifically unpredictable, particularly on Chrome. The other aspect is that a lot of these people have DRM or other assets, such as subtitle assets, and it would be good to have those somehow communicated.

G

I've been called out on the idea of necessarily doing that over data channels. It could be RTP extensions, there could be some other way, but it would be really good to get those out in a way that can be consumed by the endpoints, and ideally with as little change to their infrastructure as possible.

A

G

K

Doable, but I had the impression it wasn't... Yes, okay, I think it's doable. You can use the data channel as a transport to reconstruct the thing and pass it to MSE, but that's really jumping through a lot of hoops. And I think I put a comment in the PR: there is other stuff we would like to be able to use with the video element, like the media people do. So, for example, send several audio tracks with different languages, and send different subtitles for different languages, but be able to choose which one we actually play back or display. Right now, when the source is a WebRTC stream, it just plays everything. So let's say you do it the old Japanese way, with a stereo channel with the right in Japanese and the left in English: then you're going to have both languages being played today.

K

F

G

G

Let's move on to the last one, which I think is interesting as well, and just cover that. The idea here is that there are a lot of use cases where we don't need the full offer/answer. For one reason or another, we kind of know what we're going to get. This is often true for very small devices, which only have a very limited set of things, or for servers that are ingesting data.

G

E

G

A

A

G

Yeah, maybe we need to finesse the language on that. Maybe it's not about the APIs there, but that we need some note there. Whether it ends up being a use case, I don't know, but I do want to work it in somewhere in our documents. Or not even the face tracking, but the non-discriminatory requirement, right? I want that.