
From YouTube: WEBRTC WG meeting at TPAC 2020 - part 2

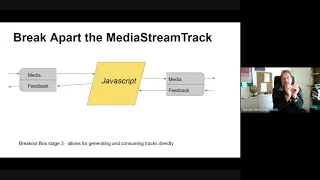

Description

WEBRTC WG meeting at TPAC 2020 (virtual), October 22, 2020.

A

So everyone's seen this chart by now. Basically, there's the entire set of boxes that go into WebRTC, and what we're now looking at is not the stuff on the left, which is all the networking stuff that everyone loves, except the ones who don't. We're looking at the stuff on the right.

A

There was a nice picture, I think given at an earlier talk last week, that had the MediaStreamTrack in the middle. You could feed cameras, microphones, desktop captures and incoming streams into it, and pull stuff out on the right: presenting on a video, on a canvas, on a video tag, on a recorder or media capture or something else. The MediaStreamTrack is kind of this central idea in the local media processing pipeline, and what we wanted to do is open it up.

A

A

Nowadays, people are using Ethernet cables. How simple. Anyway, a breakout box permits you to disconnect some of the wires that run from one end to the other, and perhaps patch some wires onto other wires. That's the mental model you would want to have to make the breakout box simple to understand. There are three stages of the breakout box. The first thing we have now is a self-contained unit, breakout box stage one: you can apply constraints to it.

A

A

A

I have my MediaStreamTrack that comes from my video input, and I'm going to turn that into a processing MediaStreamTrack that has this way of pulling frames out. Then I can create what's called, in Streams parlance (stream is such an overloaded word, sorry), a TransformStream: you put data into it and you get data out of it, and what you're doing in the middle is calling the "add massage" function.
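
To illustrate the transform stream idea described here, the following is a minimal sketch in TypeScript. It is not the API proposed in the talk: the `Frame` shape and the names `massage` and `makeMassageStream` are hypothetical and chosen only for illustration. Only `TransformStream` itself comes from the Streams standard. Data goes in on one side, a per-frame function runs in the middle, and data comes out on the other side.

```typescript
// Hypothetical frame shape for illustration; real video frames are richer objects.
interface Frame {
  timestamp: number;
  pixels: Uint8Array;
}

// Placeholder per-frame processing step (an assumption): inverts every pixel value.
function massage(frame: Frame): Frame {
  return { timestamp: frame.timestamp, pixels: frame.pixels.map((p) => 255 - p) };
}

// Wraps the per-frame function in a TransformStream from the Streams standard.
// Each chunk written to the writable side is processed and enqueued on the readable side.
function makeMassageStream(): TransformStream<Frame, Frame> {
  return new TransformStream<Frame, Frame>({
    transform(frame, controller) {
      controller.enqueue(massage(frame));
    },
  });
}
```

Keeping the per-frame function separate from the stream wrapper is a design choice of this sketch: the function can be exercised on its own, and the same wrapper could run wherever the stream ends up being processed.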

A

A

A

D

A

A

A

A

A

A

We're reusing the VideoFrame, and AudioFrame for that matter, that were defined for WebCodecs. The WebCodecs folks think they have found ways to handle a main-memory object that is basically backed by GPU memory, so that as long as you don't have to convert, or you can run the converters on the GPU, you can just ship around this thing with a pointer down into GPU memory.

D

D

B

A

A

F

I believe you can see these VideoFrame objects as ImageBitmaps, which are structures that can be backed by GPU memory. I'm not an expert on this, but I think you can operate with WebGL on those objects, so everything stays on the GPU.

G

A

H

A

F

I

C

So I think this is coming back to sort of where Tim was too, a little bit. I get where this is going. I'm thinking about the cases where, I mean, if the camera is producing compressed media, this means we're going to decompress it to operate on the frame here, then recompress it and send it again.

A

You know, that depends on the compressor. The current example we have is HD cameras on the USB 2 bus: they produce Motion JPEG, but no sane person wants to end up sending Motion JPEG. Henrik actually has some slides right at the end for dealing with, or for avoiding, Motion JPEG.

C

Sure. Most newer cameras are not doing Motion JPEG anymore, but for the ones that are doing H.264 or something like that, I don't think it changes the story much. I think the question is, roughly: would you be open to having the possibility of getting the compressed packets, regardless of whatever the format is, exposed to the JavaScript as an alternative?

C

A

Yep, I think that's an interesting question. If we can get things done without transcoding, it's obviously cheaper, right? But having it in an encoded form that we're not going to decode might actually be more suitable for inserting the camera straight into the encoded side, which is downstream of the encoder. And the nice thing about raw frames, apart from them being raw, is that they don't depend on each other.

A

C

All right, sure. I totally understand that it depends on the codec: some are temporal, some are not. But this opens up some of the stuff that Bernard was saying long ago, that we ought to be able to have plug-in codecs, right? Particularly if you think about very low-bandwidth audio codecs or something like that. I don't know. I think there are a lot of options here.

A

H

Yeah, I was just going to say, a note on WebCodecs is that they've so far rejected streams, I think for performance reasons. So one concern I have with this (and I'm glad it can be done in workers) is that, since the API, the control surface, is on the main thread, I'm worried this might encourage sites to try to do it there. Doing raw media on the main thread seems like a horrible mistake to me.

H

H

A

H

Well, there are other concerns, though. If you have a camera track that has privacy indicators, that's necessarily tied to the current document, so you wouldn't be able to do this. I'm hoping it won't ever be possible to send this to a service worker, for instance, because then your camera access may outlast the page, and that would be bad. So this is limited.

I

I

H

C

H

Steps here, and maybe to start I would say: let's only allow it in workers to begin with, see how that works out, and then see whether there are main-thread needs and whether the main thread is performant once we are further along with implementations, because we don't even know if it's going to be performant in workers. Correct?

I

B

I

C

A

H

K

I

I

I

So, with pen and paper, we decided that it would take too long to redo the whole world, so we decided to build upon existing technologies. There's SFrame, which is the underlying format. Basically, SFrame is, or will be, an IETF spec; I think the IETF is working on the SFrame spec.

I

We also want to use CryptoKey to convey key material. CryptoKey is nice because you can generate a CryptoKey either from JavaScript or from native code. You can have under-the-hood properties like "this key is not exposed to JS" or "this key is exposed to JS", and in the future we could add a lot of properties to CryptoKey without changing this proposal. And of course we want to integrate with peer connection, so that's why insertable streams come into play here.

I

I

It has quite some similarities with the Medooze SFrame implementation, which is an SFrame implementation in JavaScript. It's done in a worker, so the worker has a kind of API that it exposes to the main thread, to the pages, and it's quite close. So it's quite nice in a sense. Next slide.

I

So here is an example: you take a stream, you create a peer connection, you add a track, and then you have a sender. Since you want to encrypt, you need a key, so there's key management. You don't really know what the key management is. It may be implemented in JavaScript, because currently that's the only way; maybe in the future it could be MLS implemented in user agents. Whatever it is, at the end you get a CryptoKey. And then, in red...

I

So both the transform and the SFrame sender stream are in red because they are not in the peer connection API, and not in the insertable streams spec yet, but we think it's a good model. If we were to remove the transform attribute there, what we would typically do is take the readable stream, pipe it through this SFrame sender stream, and then pipe to the writable stream. So it could be done as well with the current insertable streams API.
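
The readable, pipe-through, pipe-to chain mentioned here can be sketched with the generic Streams standard alone. This is an illustration under stated assumptions, not the proposed SFrame API: `xorBytes`, `makeSenderTransform` and `runPipeline` are hypothetical names, and the XOR step is only a stand-in for the encryption stage. It is not SFrame and it is not secure. In a browser, the readable and writable ends would be supplied by the sender rather than built by hand as they are below.

```typescript
// Placeholder "encryption" (an assumption for illustration): XOR each byte with a
// single key byte in the range 0..255. This is NOT SFrame and NOT secure.
function xorBytes(data: Uint8Array, key: number): Uint8Array {
  return data.map((b) => b ^ key);
}

// Stand-in for the sender-side transform stage.
function makeSenderTransform(key: number): TransformStream<Uint8Array, Uint8Array> {
  return new TransformStream<Uint8Array, Uint8Array>({
    transform(chunk, controller) {
      controller.enqueue(xorBytes(chunk, key));
    },
  });
}

// Feeds frames through readable -> pipeThrough(transform) -> pipeTo(writable)
// and collects whatever reaches the writable end.
async function runPipeline(frames: Uint8Array[], key: number): Promise<Uint8Array[]> {
  const received: Uint8Array[] = [];
  const readable = new ReadableStream<Uint8Array>({
    start(controller) {
      for (const frame of frames) controller.enqueue(frame);
      controller.close();
    },
  });
  const writable = new WritableStream<Uint8Array>({
    write(chunk) {
      received.push(chunk);
    },
  });
  await readable.pipeThrough(makeSenderTransform(key)).pipeTo(writable);
  return received;
}
```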

I

Of course, in this model, the setup is done on the main thread, but the actual processing can be done wherever is more appropriate: it could be done in a dedicated crypto thread, or in the media pipeline. It's really up to the user agent, and that's nice because it allows flexibility and possibilities to optimize things while keeping the web developer's life very easy. It's just a few lines of code, with nothing to care about except doing the setup properly.

I

K

I'm just going to give a status update. It looks like the IETF is going to create a working group for SFrame, and that is going to be open to all different kinds of key management. So there may be something interesting to be done in collaboration with our working group, and Richard here can tell you a little bit more about that, if you want.

E

This seems like a very sensible step toward the long-range vision of having stuff protected from JS. It's obviously not yet the complete vision; I think we need to get key management out of the hands of JS as well. But it seems like we can do it stepwise: get SFrame locked in, get this thing to do the SFrame transformation, and then we can add another thing that encapsulates the key management.

I

E

I

A

L

One thing I was thinking about (and I apologize, I was trying to look this up in insertable streams while I was waiting in the queue): what happens if you get an integrity error in stream processing? That's not specific to this; it would happen as well with the JavaScript stream implementation. But how does one handle integrity errors?

I

So, you can provide a signing key, and based on the signing key, if the signature is wrong, then something should be done. I guess that, depending on the options we could provide as part of the creation of the SFrame sender stream, we could provide some flexibility there. My initial guess is that the default implementation would be: if you're not providing signing keys, you do not validate signatures, so you're okay with that; and if you're providing a signing key...

L

Right, that was an answer to a question I was about to ask, so great. My question is dumber. My question is: what happens if the verification fails? Does insertable streams have an API for saying that there's a failure? How do you actually, as a practical matter, deal with the fact that a frame can't be verified?

I

Yeah, it's not present in the API. I guess that if we were to do that, there would be WebRTC stats that would allow you to see that frames are dropped, with the reason being SFrame, for instance. So we could provide that. We could also handle, for instance, the issue where, when there's key rolling, I receive a new frame and I do not yet have the key.

C

I

So I was thinking, maybe we should add a callback there, but I'm not sure we actually want to add that complexity. I think that if you don't have a key for the next frame, you will drop it, and you will get the next one, and you will need an I-frame. Or we will buffer a little bit, and if we cannot buffer more, then we'll say sorry, we drop it on the floor, and once you get the key, we'll ask for a new keyframe, for instance.
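
The buffer-then-drop behavior sketched in this answer could look roughly like the following. This is a hypothetical illustration, not something specified in the talk or in any API: `EncryptedFrame`, `PendingFrameBuffer`, `maxPending` and the `requestKeyFrame` flag are all assumed names. Frames whose key is not yet known are held up to a small limit; anything beyond the limit is dropped, and once the key arrives the caller learns whether it should ask the sender for a new keyframe.

```typescript
// Hypothetical encrypted frame: keyId says which key is needed to decrypt it.
interface EncryptedFrame {
  keyId: number;
  payload: Uint8Array;
}

class PendingFrameBuffer {
  private readonly maxPending: number;
  private pending: EncryptedFrame[] = [];
  private readonly knownKeys = new Set<number>();
  private readonly droppedForKey = new Set<number>();

  constructor(maxPending: number) {
    this.maxPending = maxPending;
  }

  // Returns the frames that can be decrypted right now (empty if the key is missing).
  push(frame: EncryptedFrame): EncryptedFrame[] {
    if (this.knownKeys.has(frame.keyId)) return [frame];
    if (this.pending.length < this.maxPending) {
      this.pending.push(frame); // buffer a little while waiting for the key
    } else {
      this.droppedForKey.add(frame.keyId); // buffer full: drop on the floor
    }
    return [];
  }

  // Called when key management delivers a key. Releases buffered frames for that key
  // and reports whether frames were dropped, in which case a keyframe is needed.
  addKey(keyId: number): { ready: EncryptedFrame[]; requestKeyFrame: boolean } {
    this.knownKeys.add(keyId);
    const ready = this.pending.filter((f) => f.keyId === keyId);
    this.pending = this.pending.filter((f) => f.keyId !== keyId);
    const requestKeyFrame = this.droppedForKey.delete(keyId);
    return { ready, requestKeyFrame };
  }
}
```

The sketch reports the drop through a return value rather than a callback, in line with the speaker's hesitation about adding callback complexity; a stats counter would be another option.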

A

M

A

I

C

A

I

Looks good. Next slide. So I looked further and started to think: we have insertable streams, and an insertable stream, when you're sending, is receiving valid encoded media content, but it might produce whatever content it wants. So it could be valid encoded media content, or it could be invalid media content.

I

I

Actually, the user agent pipeline might expect to get valid media content for packetization and depacketization. So if you have H.264 with various NALUs, you will not be able to packetize correctly, because all of this is completely crappy compared to what you expect. On the decoding side it's also difficult, and for SFUs too.

I

So for SFrame particularly, I believe that in the future we might have a generic packetizer that might work with codecs like AV1, for instance, and that might be totally fine. We don't need to do anything once there's this packetizer, but we don't have it yet. So what can we do there?

I

So let's say that you have a keyframe and it's being encrypted. Then you take the blob and you create a big NALU based on that, and you send it, and then the SFU will be fine. The user agents will be fine as well, without any change. So one possibility would be to define this adaptation for H.264 and VP8, which are the mandatory video codecs, either within the SFrame transform or standalone.

I

K

Actually, I think the job has already been done, or, let me put it this way, there is a solution for that. One of the problems with just insertable streams, when you change the packet, is that you have already announced which encoder you would use, and you have already done the SDP offer/answer.

K

The dependency descriptor has been fully described in the annex of the AV1 RTP payload specification, and Sergio finished an open-source implementation this week. So I think I can put you in touch with the people who have done that work, and that will be part of the work in the IETF SFrame working group anyway. I know there is a draft document for exactly this problem, so yeah.

I

K

I

Yeah, that would be nice. I still think we need something in general, even without SFrame. For instance, if an insertable stream is modifying the stream, you need to send it, and you don't want to change SFUs and user agents a lot for that. But I would be very fine with an SFrame-specific solution initially; that would be great as well.

A

E

Yeah, I think that's also one of the things that was making me a little sad about SFrame: there was this risk that we were throwing away useful information as we're making the transformation. So yeah, I think we'd be open to talking about ways to do that, both at the API level and at the protocol level.

I

Okay, good. Next slide, which is coming back to what Jan-Ivar was suggesting: the transform attribute versus exposing readable and writable. Insertable streams are transforms by nature. I took Harald's nice block images and just put Ts in the middle, which are the insertable streams, and if you look at that, you clearly see that this is a transform, right? Insertable streams are not producing content; they are transforming content.

I

And the Streams API has support for transforms, and this model is validated and being used today with Compression Streams and the Encoding API. These are APIs that are implemented in Chrome, and the Encoding API is also implemented in Safari, with WritableStream, TransformStream and ReadableStream. It's available in Safari Technology Preview, and it's working fine there. So it seems that using the transform model for insertable streams might have some benefits. Next slide.

I

So I took various examples there. On the top left, you have the native transform, which is exactly the same as I presented earlier. There's a current API example where you have the pipeThrough/pipeTo thing, which is on the main thread, and that's fine. And I thought: why can't we add a new RTC transform that would take a worker, for instance? You create it on the main thread; it's there.

I

Maybe it has postMessage under the hood, which is already defined, so that you provide some nice benefits to the web developer, and it's just one object. That seems fine as well. What about a worklet? That's another approach. You can see that there's some more API: you add a worklet to a peer connection and you call addModule, but addModule is already defined for worklets in general. So you're not...

I

I

So, some observations. An API with transform is ergonomic: it's an intuitive use of transforms, and it also allows mixing native and JS transforms very nicely, just as people are doing today. Usually you take the TextDecoderStream API, which is a transform, you pipe the Fetch API response body through it, and then you take the output to do something else. People are used to that and it's working fine. So why not piggyback on this development style here?

I

That makes a lot of sense to me. There's potential for a good threading model, because we could provide some limited API that is off the main thread by default, and then there would be no need for transferring streams in most cases. I hear that transferring streams is possible. I implemented ReadableStream, I implemented WritableStream, I implemented TransformStream, TextDecoderStream and a lot of things. I did the integration with the Fetch API, and I know that transferring streams, in the very easy cases, can be done almost optimally, with no memory copy and without blocking on the main thread. But there are edge cases where you start doing things and, bang, the optimal way is no longer possible. That makes me think that we should allow transferring streams, but we should not require using it in most cases. It should be off the main thread by default, and in some specific cases, yeah, let's transfer it. Next slide.

I

Yeah, so, worker or worklet: I don't know. Both will probably fulfill the use cases. A worklet has some advantages. It might be more efficient: you could do synchronous processing and reuse existing native threads in the media pipeline. A worklet might be less error prone, with no state to share, for instance. But I feel like we need more study there. Maybe we need more feedback from the Web Audio working group. Maybe we need additional feedback from native transform stream implementation experiments.

H

So, Jan-Ivar here. I like the direction, and I like the intent, which seems to be to get this off the main thread, and I agree it's also early to decide exactly on the API shape. But I think what we should do immediately, even if we use the existing postMessage solution, where you create the transform stream in the worker and then post messages to the main thread, or the other way around...

A

A

I

I

Okay, we could also simply only expose a transform, and in that case you no longer expose a readable and writable stream on the main thread, at least the native ones. You would need to expose them elsewhere, which would be in a worker or worklet. In that case you no longer allow the potential footgun of a web developer being lazy and trying to do the easy thing, which works on a big laptop and doesn't work on a mobile device.

I

H

Just for context, a problem with running a lot of event-based things, or anything, on the main thread is that, I'm assuming, this is going to be running off the main event loop, and performance-wise, for real-time things, it's often very bad to rely on the main event loop, because so many other things may be queued.

H

I

Yeah, even for audio processing, which might not be a lot of data, it's very sensitive to latency, and that's why there's an AudioWorklet, for instance. People are using it to actually process captured data, then they're encoding it and sending it through a data channel. We know people who are doing that, and it's sort of working.

A

...fail separately or be impossible for different reasons might just possibly be a bad idea. So it's possible that defining the transform is easier for users, but I have this nasty feeling that something is going to come and bite me. For the cases where we absolutely don't want JavaScript to see the data, like native transforms, then absolutely, this is a much, much better API for that.

A

I

H

Yeah, I think my main concern is that sites would start using this on the main thread and depend on it. They should have used workers, but they just didn't, because it was fast enough on their fast machines. And then later, if we decide that was a bad idea and turn it off and throw a failure, we will break a lot of sites. Maybe it'd be better to encourage them to move to workers early.

I

B

Yeah, thank you very much, Youenn. Okay, so we'll try to go quickly through WebRTC-SVC. A little bit of an update: we have four open issues, of which two are recent reviews, one from the TAG and one from PING, and then there are two extensions. It has been implemented in Chrome behind the experimental web platform features flag, so you can try it out.

B

Okay, so at last TPAC we talked about how to maintain the scalability mode table and diagrams. Last year, the idea we had was: hey, make this someone else's problem, someone like AOMedia. So we were originally thinking of referencing the AV1 bitstream specification and just saying: here are the mode names, and here are the diagrams.

B

However, since then the disadvantages of this have become apparent. First of all, WebRTC-SVC applies to multiple codecs, not just AV1. There are new codecs coming down the pike, so just referencing the AV1 spec may not make sense. Another problem, pointed out by implementers of AV1, is that there are some limitations with respect to SVC in AV1 that result in very unnatural names and diagrams for some modes. And also the diagrams in the AV1 spec weren't very good.

B

B

Just so people don't get confused: it was felt there were better names than the ones in the AV1 spec. Also, we now have our own scalability mode diagrams in Section 9 that people seem to think are a lot clearer than the ones in the AV1 spec. And we have now updated Section 6.1, which talks about adding new modes, to require submission of dependency diagrams. You have to submit the diagram along with your mode name to get into the table.

B

B

Yeah, that's a great point, Dom, because basically the spec itself is pretty simple, but almost all the changes would be to this registry, the table. Okay. So the TAG review came up with a comment. They asked: why is the scalability mode a DOMString rather than an enum? And they pointed out that enums do offer automatic error checking, for example of a scalability mode value.

B

Just since last TPAC, for example, we had originally taken out the S modes and then added them back. We've just renamed some of the KEY and KEY_SHIFT modes, and we suspect that as new codecs come about we'll add new modes. So this table is constantly changing, and that raises the question of whether automatic error checking actually adds any real value or just adds confusion.

B

For example, we already have, as I mentioned, the non-automatic checks, so you can't really set a mode that isn't supported; you'll get an error. And the problem is that if you have the automatic checks, it could result in different behavior between browsers when you get a new mode or a rename or something. For example, the current implementation in Chrome was done before this mode renaming.
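
Because the mode is a plain string, a page can compare the modes it would like against whatever list the browser advertises before trying to set one. The following is a minimal sketch of that check, with assumptions stated: `pickScalabilityMode` is a hypothetical helper, the `supported` list is assumed to come from the capabilities dictionary the speaker refers to, and the mode names in the usage note are examples only, since the table was still changing at the time.

```typescript
// Returns the first preferred mode that the browser reports as supported,
// or undefined if none match. Unknown or renamed modes simply fail to match;
// they do not cause an error here.
function pickScalabilityMode(
  preferred: readonly string[],
  supported: readonly string[],
): string | undefined {
  const available = new Set(supported);
  return preferred.find((mode) => available.has(mode));
}
```

For example, with a preference list of `["L3T3_KEY", "L1T3", "L1T2"]` and a browser that reports `["L1T2", "L1T3"]`, the helper returns `"L1T3"`. A page written this way keeps working when modes are added or renamed, which is the flexibility argument made for keeping the value a string.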

B

N

B

And, like I said, having different behavior in different browsers doesn't make sense to me. If you can't set a mode you don't support in either browser, how does it help you to have automatic checking when the table's changing all the time? That may not make any sense.

B

B

So basically here's the situation. WebRTC-SVC doesn't change any of the methods or how they behave; the only thing it does is add to the capabilities dictionary by including a list of supported scalability modes. So what's the additional fingerprinting surface from this? Well, WebRTC-SVC is currently only supported on Chromium-based browsers, but there are a couple of those. So you do get generic info on the user agent, like, you know it's a Chromium-based browser, but you don't necessarily know which one it is.

B

The other thing is that we had issue 49, which was filed in WebRTC Extensions. Basically, that issue complains that the existing synchronous getCapabilities method is not usable for exposing hardware capabilities. In other words, the person who posted that issue wanted to be able to describe whether the codec was supported in hardware or software, and found themselves unable to support hardware adequately, and they proposed an async method instead. So we simultaneously have the PING people saying it's leaking hardware, and then issue 49 says no.

B

I

J

Yeah, I agree with your analysis, Bernard. I wouldn't bother with the note about user agents, because that's describing a temporary situation and it's not adding fingerprinting surface anyway. But yeah, given what you described, I agree that there is really nothing exposed in SVC that's adding anything there.

B

B

I think we should discuss adding an async method, but that's separate. I would add a note to the issue in WebRTC-SVC that just says: hey, this is a generic issue and it really has nothing to do with SVC. And then, in a minute, Henrik, we'll get to the generic slides, which Jan-Ivar was going to present to PING but never got to. But basically the idea, Henrik, is that we can talk about an async method, but it has nothing to do with this particular PING bug.

D

J

On the process bits, I don't know that the right approach is necessarily to re-close the issue. I don't think going back and forth on this will help. But I think what this gets us is clear consensus that the working group doesn't believe this exposes new fingerprinting surface, and then, once we have stated that consensus, we can work with PING on figuring out the next steps, right?

D

H

B

B

N

H

Sure. I'm mostly referencing the documents that you put together, Bernard. But it's basically saying that a site can learn about a visitor's underlying hardware without a permission prompt; that was the issue as filed. I think what you've said here is that the same information is available in the SDP, which is inherently needed for peer-to-peer signaling. Use cases for that are data channels, receiving media, or sending media other than camera, microphone and screen, for instance canvas element captureStream. Next slide.

H

So, oh, there it is, yes. We already have fingerprinting notes in the spec about getCapabilities and createOffer. And I think, Bernard, you had a graphics hardware and fingerprinting document that linked to some of our thinking there: basically, the same information can be inferred from other sources such as WebGPU, WebGL and the Performance API. So the proposed solution is to include a note relating to implementation issues of hardware capabilities, and then, I think, to ask for this to be dealt with outside of WebRTC.

B

C

J

J

I

think

the

to

me.

The

real

point,

though,

is

on

the

fact

that

a

lot

of

this

fingerprinting

surface

is

indeed

already

exposed

in

other

APIs,

and

that

getting

a

proper

solution

or

mitigation

to

this

requires

coordination

across

these

various

APIs.

I've

tried

to

get

PING

to

take

ownership

of

this,

but

I

don't

think.

J

I

mean,

I

guess

we

need

to

figure

that

story

out.

My

sense

is

that

we

cannot

stop

until

this

gets

figured

out

because

it's

a

really

complicated

issue,

but

I

think

we

at

least

need

to

be

very

clear

as

to

what

our

argument

is,

and

you

know

I

could

be

wrong

on

the

negotiation

thing,

but

if

I'm

not,

then

I

think

we

need

to

be

careful

in

how

we

present

this

and,

as

a

result,

what

kind

of

mitigations

would

be

possible

or

not.

H

B

G

Thank

you.

So

yeah,

the

theory

here

is:

there

are

a

bunch

of

people

out

there

who

are

doing

things

with

WebRTC,

which

kind

of

mostly

work

but

don't

work

as

well

as

they

should,

and

so

I

went

and

talked

to

a

few

of

them

and

came

back

with

these

use

cases

that

are

like

just

on

the

edge

of

what

WebRTC

1.0

does,

and

I

think

that

means

that

they're

good

candidates

for

improvement

in

NV.

G

Basically,

you

know

these

are

things

that

we

could

fix

for

the

next

version.

So

yeah,

next

slide,

please.

So

the

first

one

is

P2P

broadcast.

You

know,

things

like

auctions,

live

events.

People

want

low

latency,

they

want

lower

latency

than

HLS

and

DASH

can

do,

and

you

can

do

that

with

WebRTC,

but

the

moment

they

do

that

they

lose

a

bunch

of

features

that

they

kind

of

love.

G

So

it's

a

question

of

how

many

of

those

we

can

put

back

in

and

then

can

we

also

give

them

the

other

thing

that

we

do

with

WebRTC,

which

is

to

work

behind

NAT.

So,

if

you

think

about

an

auction

site,

that's

maybe

actually

on

a

fiber

to

the

premises

link,

so

they've

got

the

bandwidth

to

broadcast

to

their

500

bidders

or

100

bidders

or

whatever,

but

actually

they

maybe

don't

have

enough

public

IP

addresses

to

do

that

so

kind

of

bonus

points.

G

G

I'm

not

really

claiming

that

so

kind

of

the

idea

here

is

to

try

and

maximize

the

reuse

of

existing

high

latency

streaming

assets,

so

kind

of

create

stats

that

allow

people

to

see

the

things

that

they

would

have

got

from

DASH,

and

also

this

is

a

big

ask,

I

know,

but

clarity

on

the

rules

of

autoplay,

like

a

lot

of

these

sites

are

receive-only,

essentially,

and

autoplay

on

video

and

audio

is

just...

I

get

more

bugs

from

that

than

I

get

from

anything

else.

G

So

we

just

need

to

make

that

work

somehow,

and

it

doesn't.

And

then,

yeah,

a

final

thing

here

is:

maybe

we

could

do

something

tricksy

with

allowing

DRM

to

work

by

kind

of

creating

a

our

WebRTC

and

then

piping

it

up

through

the

DRM

architecture,

so

that

they

can.

I

mean

it's

sort

of

like

SFrame,

only

it

would

be

nice

if

they

could

use

their

existing

encryption.

So

yeah,

that's

the

idea

for

that

one.

Next

slide,

please.

G

Yeah,

so

this

is

about

cameras

in

hospitals,

factories

whatever

and

the

use

case

is

that

quite

often

there's

a

nearby

user.

So

there's

a

camera,

maybe

watching

a

baby

or

a

patient

or

something

and

the

user

is

maybe

sitting

at

a

nursing

station

or

downstairs

watching

the

baby

monitor

so

they're

probably

on

the

same

network,

and

then

what

happens

when

the

router

goes

down?

G

How

do

they

maintain

their

linkage

between

those

two

devices

that

are

probably

on

the

same

network,

although

not

definitively,

and

without

needing

to

go

back

to

the

external

internet,

making

that

a

dependency?

So

it's

kind

of

trying

to

raise

the

availability

of

these

things.

Next

slide,

please.

And

so,

G

The

assumption

you

can

make

is

that

these

endpoints

have

already

been

connected

and

probably

recently,

and

what

you

want

to

be

able

to

try

and

do

is

reconnect

without

recourse

to

non-local

servers,

and

a

potential

way

of

doing

that

would

be

to

make

STUN

and

offer/answer

in

some

way

optional

or

predictable

for

reconnections,

and

you

know,

maybe

you

do

that

with

service

workers.

I

don't

know

how

you

do

it,

but

it's

a

desirable

feature,

I

think.

Next

slide,

please.

G

Next

one

is

decentralized

internet.

A

lot

of

people

are

using

the

data

channel

for

a

bunch

of

interesting

things,

and

Matrix

is

one

of

the

ones

that

I'm

particularly

interested

in.

So

that's

the

entire

peer-to-peer

mesh

internet

messaging

network,

but

what

they

really

want

is

a

home

for

their

data

channel,

because

the

page

lifecycle

just

doesn't

work

for

these

encryption

services,

essentially

or

you

know

a

bunch

of

other

things

that

you

want

to

try

and

do

in

that

space.

G

G

If

you

push

them

into

a

service

worker

or

somewhere,

then

you

would

allow

a

page

to

issue

a

fetch

which

could

then

be

resolved

either

with

a

get

from

cache

as

it

is

at

the

moment

or

over

HTTPS,

as

it

is

at

the

moment

or

by

a

service

running

in

the

service

worker

that's

doing

some

P2P

over

the

data

channel

and

it's

that

third

option

that

we

can't

do

at

the

moment.

Next

slide,

please.
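
The three ways of resolving a fetch described above (cache, HTTPS, or a peer reached over the data channel) can be sketched as an ordered fallback chain. This is only a model under stated assumptions: the resolvers are synchronous stand-ins with invented names (`fromCache`, `fromHttps`, `fromPeers`) and invented path prefixes, whereas a real service worker would return Promises and, as noted in the talk, the third option is not available today.

```typescript
// A resolver returns a response body, or undefined if it cannot serve the URL.
type Resolver = (url: string) => string | undefined;

// Try each resolver in order and return the first body produced.
function resolveWithFallback(
  url: string,
  resolvers: Resolver[]
): string | undefined {
  for (const resolve of resolvers) {
    const body = resolve(url);
    if (body !== undefined) {
      return body;
    }
  }
  return undefined;
}

// Stand-in for the cache: a fixed in-memory table.
const cacheTable: { [url: string]: string | undefined } = {
  "/logo.png": "cached-logo",
};
const fromCache: Resolver = (url) => cacheTable[url];

// Stand-in for an HTTPS fetch: pretends only /api/ paths exist on the server.
const fromHttps: Resolver = (url) =>
  url.indexOf("/api/") === 0 ? "https-response" : undefined;

// Stand-in for the hypothetical third option: a peer answering over a data channel.
const fromPeers: Resolver = (url) =>
  url.indexOf("/p2p/") === 0 ? "peer-response" : undefined;

const resolverChain: Resolver[] = [fromCache, fromHttps, fromPeers];
const peerResult = resolveWithFallback("/p2p/room/42", resolverChain);
```

Keeping the peer resolver last means existing cache and HTTPS behaviour is unchanged and the peer path is consulted only when the other two cannot answer.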

And

this

one's

about:

G

If

you

see

there's

a

bunch

of

people

out

there

who

are

doing

communications

apps

on

mobile

phones

and

smartphones,

and

you

find

that

they're

building

them

as

native

apps

and

they're

typically

native

apps

wrapped

around

libwebrtc,

and

you

ask

yourself:

why

aren't

they

progressive

web

apps?

And

the

answer

is

that

the

robustness

isn't

there.

You

can

lose

the

call

way

too

easily.

G

You

know

an

incoming

GSM

call

will

just

drop

the

WebRTC

connection,

and

likewise,

if

the

user

accidentally

browses

off

the

page,

then

they

lose

the

call

and

from

the

user's

point

of

view,

it's

kind

of

understandable.

But

the

person

on

the

other

end

has

absolutely

no

idea

what

just

happened

to

that

video

call

like

there's

no

information

whatsoever.

G

The

ambition

here

is

to

try

and

at

least

allow

the

far

end

to

have

a

sense

of

what

happened

like

the

fact

that

this

call

has

gone

away

or

may

come

back

or

whatever

so

some

way

of

indicating

that

this

is

happening

and

to

send

some

sort

of

message

to

them

in

terms

of

audio

or

play

out

or

something

I

mean.

I

don't

know

how

this

is

done,

but

that's

my

suggestion

and

ideally

you

want

an

API

to

allow

reconnection

of

a

pre-existing

call

after

a

GSM

interruption.

G

In

my

experience

and

then

the

third

one

which

is

kind

of

a

big

ask,

I

realize,

is

to

be

able

to

park

a

track

in

the

service

worker

so

that

you

can

move

them

between

pages

so

that

if

you're

a

support

worker

and

you're

co-browsing

with

somebody,

you

can

keep

the

audio

running

whilst

you're

talking

them

through

how

to

use

their

banking

site

or

whatever.

You

know,

obviously

huge

security

restrictions

one

has

to

apply

to

that,

but

I

think

it's

a

desirable

aim.

G

But

there

are

simple

cases

where

we

maybe

could

minimize

the

signaling

such

that

you

could

bring

up

a

channel

with

pretty

much

no

signaling

at

all.

I

mean

if

you

look

at

WHIP,

that's

kind

of

heading

in

that

direction,

but

next

slide,

please.

I

think

we

can

go

a

little

further

than

that.

G

Potentially-

and

I

don't

know

if

we

want

to

talk

about

this-

and

maybe

it's

the

wrong

forum,

but

in

fact

a

URL

like

that

actually

has

everything

you

need

to

bring

up

a

data

channel.

Like,

you

could

compress

the

whole

of

the

offer/answer

to

something

as

simple

as

that

and

we've

done

a

couple

of

little

experiments

with

it

and

it's

doable.

Again,

G

There

are

some

interesting

compromises

you

have

to

make

like

ICE

lite,

for

example,

and

you

have

to

assume

who's

going

to

be

the

server

and

the

client.

So

there's

like

a

bunch

of

the

optionality

in

the

normal

offer/answer

goes

out

and

you

kind

of

define

it

by

config,

but

it's

doable.

Anyway,

so

yeah,

I

think

that's

pretty

much

all

I

wanted

to

say,

just

that,

you

know,

those

are

the

options

that

I

think

we

should

maybe

be

looking

at

adding.
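
To make the idea of folding the offer/answer into a single URL more concrete, here is a minimal sketch. The URL shown on the slide is not captured in this transcript, so the `dc://` scheme, the query parameter names, and the `DataChannelBootstrap` type are all invented for illustration. It follows the compromises mentioned above by assuming the roles are fixed by configuration, with the endpoint named in the URL acting as the server.

```typescript
// Everything a client would need up front, under the assumptions stated above.
interface DataChannelBootstrap {
  host: string;        // address of the peer acting as server
  port: number;        // its UDP port
  label: string;       // data channel label
  fingerprint: string; // DTLS certificate fingerprint
  iceUfrag: string;    // ICE username fragment
  icePwd: string;      // ICE password
}

// Hypothetical format: dc://<host>:<port>/<label>?fingerprint=..&ufrag=..&pwd=..
function parseBootstrapUrl(url: string): DataChannelBootstrap | undefined {
  const m = /^dc:\/\/([^:\/?#]+):(\d+)\/([^?#]*)\?([^#]*)$/.exec(url);
  if (!m) {
    return undefined;
  }
  // Split the query string by hand to avoid depending on browser-only globals.
  const params: { [key: string]: string | undefined } = {};
  for (const pair of m[4].split("&")) {
    const eq = pair.indexOf("=");
    if (eq > 0) {
      const key = decodeURIComponent(pair.slice(0, eq));
      params[key] = decodeURIComponent(pair.slice(eq + 1));
    }
  }
  const fingerprint = params["fingerprint"];
  const iceUfrag = params["ufrag"];
  const icePwd = params["pwd"];
  if (!fingerprint || !iceUfrag || !icePwd) {
    return undefined; // a required field is missing
  }
  return {
    host: m[1],
    port: Number(m[2]),
    label: decodeURIComponent(m[3]),
    fingerprint,
    iceUfrag,
    icePwd,
  };
}

// Made-up example URL; 192.0.2.10 is a documentation-only address.
const exampleUrl =
  "dc://192.0.2.10:9000/chat?fingerprint=AB%3ACD&ufrag=abcd&pwd=secretsecret";
const bootstrap = parseBootstrapUrl(exampleUrl);
```

The fields that a normal negotiation would settle dynamically (who is the server, which side is the DTLS client) do not appear in the URL at all, which reflects the point that this optionality moves into configuration.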

K

K

People

are

used

to

RTMP;

they

want

to

put

a

URL

and

be

done

with

it

and

point

it

to

a

server

and

today

with

WebRTC