
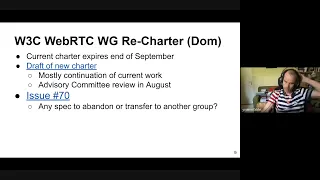

From YouTube: WebRTC WG meeting 2022-06-23


A

B

A

A

A

A

Just a reminder: this meeting is being recorded. Please use headphones or an echo-canceling speakerphone, and state your full name so we can get it into the minutes. I don't know if we'll be doing a poll today; I suspect not, but we have the mechanism available. Just a few words about document status: just because something is in the W3C repo doesn't mean it's been adopted.

A

We use a call for adoption to do that. Editor's drafts don't represent working group consensus, but working group drafts do, once they're confirmed by a CfC. It is possible to merge PRs, but you should attach a note if it's controversial. All right, here are the issues for discussion today. We're going to start off with rechartering, then get into region capture and face detection. Dom?

C

C

B

B

C

I haven't looked into it. It depends on whether the charter is flexible in terms of scope or not. But I would say that if we were to suggest that, clearly we would need very strong coordination with the Media Working Group, and that may indeed include having them recharter. I don't know off the top of my head whether that would be necessary or not.

B

A

B

C

So I don't think we can afford to wait until September to have the discussion. If we were starting the discussion now, with the conclusion that we would need to keep operating under the current charter for, say, three more months, we could extend the current charter while we go through the administrative process. But I don't think we should wait until September, when we know it would be too late no matter what, to have the conversation, if that's a conversation we want to have.

D

What I know is that, from an administrative point of view, every time we change the charter scope, even if it's just migrating one item from one group to the other, on our side we have to do some assessment and so on, and it can take time. So it's not free to do the migration, at least at first.

C

Yes, we should have meetings with, at the very least, the Media Working Group chairs to discuss whether they would be willing to do so in the first place, and then coordinate on what it would take to make it happen. But the first part of the question is really for us to say: is it something we want to do, or something we want to explore, or is it just not a priority? And I guess Youenn was saying we should have good reason to make that decision, because it's not cost-free. Yeah.

A

One thing I would observe is that there are a whole bunch of different working groups working on specs that interact with VideoFrame, and also encoded chunks, and I think this is causing problems. We're seeing it already: we're getting out of sync in different specs, and things are falling between the cracks. So I do think there's definitely an issue there with too many working groups involved in that work.

E

C

Well, some people in this group are also involved in the Media Working Group, so maybe they can share actual insights. I'm not. I will say that they do have a number of other work items, including WebCodecs and Media Capabilities, which obviously also come with their own set of challenges, controversies and coordination needs.

C

C

C

A

All right, so the next topic is region capture. We've got two things to discuss today: issues 17 and 18. 17 will probably take most of the time. We'll have a presentation from Jan-Ivar proposing sync usage for CropTarget, then Elad will present the case for async, then we'll have a shared discussion, and then we'll talk about issue.

F

All right, thank you. So yes, this is about cropTo, to which you pass an argument to crop self-capture to an element in a way that is consistent, doesn't rely on coordinates, and has some benefits. The issue threads on issue 17 and related issues have gotten really long and impenetrable.

F

So we have slideware to highlight the outstanding issues, in order to invite the larger working group to participate in the decisions. You'll hear two views. I'm up first, and I'll try to explain the API as I go for those who haven't followed closely. I intend to present arguments that I hope we can discuss from first principles, and that I hope can be rebutted with counterarguments, either to find solutions for them or to show why they're not a problem.

F

This is our process and we're following it. It's the working group that has the domain knowledge to design APIs, and it's not the TAG's job, for instance, to call winners or losers on API design, especially not at First Public Working Draft. If that were the process, we could just disband this working group and save a lot of time. But what the TAG does provide is a list of design principles, and among them are... Sorry, can we go back a slide?

F

So right now, the current API... At first I was going to write "current API". The problem is that what I mean is what's in the spec right now, and there's a note in the spec that says the current API does not have consensus, which was added for First Public Working Draft. So I'm calling it the "non-consensus API" here, just to be clear that these are equal proposals in my mind. Currently you have to mint a CropTarget, and this is for a reason I'll come back to later.

F

The question here for the working group is: does this need to be an asynchronous method? Right now the spec, in the non-consensus part, says you have to await CropTarget.fromElement, and then you mint a CropTarget object to use instead of the element. Now, why would we do that? It's because we might need to postMessage this element to another realm, and you can't do that with elements, so we get this sort of reference handle instead. What I'm proposing is that we don't need this to be async.

F

You can just create a new CropTarget and pass in the element, and that would be a synchronous API. This is because the purpose of this target is to associate a serializable identifier with an element, which we'll call minting. The spec says that calling fromElement with an element of a supported type associates that element with a CropTarget. A CropTarget is an intentionally empty, opaque identifier, and its purpose is to be handed to cropTo as input, as currently specified.
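The two API shapes being compared here can be sketched with a small mock. This is only an illustration under stated assumptions: `FakeElement` and `MockCropTarget` are hypothetical stand-ins (no DOM is used), the numeric `id` exists only so the mock can be inspected, and the static method merely imitates the promise-returning `fromElement` shape described above.

```typescript
// Hypothetical stand-in for a DOM element; not a real DOM type.
interface FakeElement {
  readonly tagName: string;
}

let nextTokenId = 0;

// Mock of an opaque token associated with an element ("minting").
class MockCropTarget {
  // Only for inspection in this sketch; a real token would be opaque.
  readonly id: number;
  readonly element: FakeElement;

  // Synchronous shape: minting is plain construction.
  constructor(element: FakeElement) {
    this.id = nextTokenId++;
    this.element = element;
  }

  // Asynchronous shape: the same minting, delivered through a promise.
  static fromElement(element: FakeElement): Promise<MockCropTarget> {
    return Promise.resolve(new MockCropTarget(element));
  }
}

const slideArea: FakeElement = { tagName: "DIV" };

// Sync shape: the token can be used on the very next line.
const syncToken = new MockCropTarget(slideArea);

// Async shape: the caller gets a promise and must await it before use.
const asyncTokenPromise = MockCropTarget.fromElement(slideArea);
```

In both shapes the caller ends up with the same kind of token; the difference is only whether the caller has to suspend before it can post the token onward.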

F

F

It could be a different origin, and you postMessage it to a top-level document that is doing screen capture, self-capture through screen sharing, and that has a video track upon which you call cropTo with that CropTarget. If this were all the same document, you could just pass in the element. So CropTarget serves a really useful purpose here, but the requirement here is that cropTo must accept it at this point.

F

So this is where this might be confusing, but I'll work through it. If you're not familiar with the API, hopefully this might actually help. My point here is that multiple actions need to happen before we're cropping anything. In this diagram, time progresses vertically; on the left you have the top-level document, in the middle you have the iframe, and on the right some notes about optimizations. So the first thing that happens, on any random page on the web...

F

F

It can do nothing, in which case nothing happens. Or it can postMessage it to the top-level document, which receives this token, and then nothing may happen. Or the user pushes a button to present something, at which point the top-level JavaScript calls getDisplayMedia or getViewportMedia and prompts the user for capture. The user may cancel, or the user may select a different capture; in both cases, nothing happens. And finally, we see that the application, the top-level document application, decides to crop. It has a screen-sharing track.

F

F

So user agents can jump the gun here to optimize this, and as I've shown on the right, you can optimize quite early. But if you do, you run a lot of risks, and you then have to handle the case that maybe you prematurely optimized, because you don't actually know that you're going to be capturing. So that's the main problem with early optimization. It's a good thing.

F

F

So another reason given for why it needs to be async is that there's a new request on the spec. The original spec said minting was infallible, but there's a new request to be able to fail, to reject the promise from the minting process, because of resource exhaustion errors. Presumably this is because Chrome has implemented some optimizations.

F

F

So if you have a JavaScript library that you're importing that has nothing to do with cropping, it could cause action at a distance by basically calling this API over and over. It's not behind any permission, so any JavaScript can do it, basically mounting a denial-of-service attack on the cropping function without user permission, and defeat cropping. Now, defeating cropping may actually expose private user information in unsuspecting, poorly written apps, which is a footgun, because that path may never have been tested.

F

What would happen if cropping resources fail? We know there are bugs. And if you don't test something, the worst will happen, which is that the app, instead of cropping to exclude important information, will now show and screencast everything. I hope I've also shown that early resource allocation is inherently unnecessary.

F

F

F

F

So what you have to do instead, to combat that, is have local per-document limits. Those obviously must be smaller, and they would risk being exhausted much sooner, to the point where even well-designed apps that sit in an open tab for a long time could eventually run into this limit. So again, there are web compatibility issues, and web developers are unlikely to ever expect or check for exhaustion. Again, the better approach would be to have the user agent handle this and not fail.
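The per-document limit described above can be illustrated with a toy model. This is a sketch, not any browser's behavior: `QuotaMinter`, the string tokens, and the limit of 2 are all made up for illustration. It shows both halves of the argument: one document exhausting its quota does not affect another, yet a long-lived document eventually hits a failure its author may never have tested for.

```typescript
// Toy model of fallible minting with a per-document quota (hypothetical).
class QuotaMinter {
  private mintedPerDocument = new Map<string, number>();
  private limit: number;

  constructor(limit: number) {
    this.limit = limit;
  }

  // Returns a made-up token string, or throws once the document's quota is used up.
  mint(documentId: string): string {
    const count = this.mintedPerDocument.get(documentId) ?? 0;
    if (count >= this.limit) {
      throw new Error(`quota exhausted for ${documentId}`);
    }
    this.mintedPerDocument.set(documentId, count + 1);
    return `${documentId}#${count}`;
  }
}

const quotaMinter = new QuotaMinter(2);
quotaMinter.mint("docA");
quotaMinter.mint("docA");

// A third mint from the same document fails...
let docAExhausted = false;
try {
  quotaMinter.mint("docA");
} catch (_err) {
  docAExhausted = true;
}

// ...while a different document is unaffected.
const docBToken = quotaMinter.mint("docB");
```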

E

F

F

So, a part of the spec that doesn't have consensus says the user agent must resolve the promise only after it has finished all the necessary internal propagation of state associated with the new CropTarget, at which point the user agent must be ready to receive the new CropTarget as a valid parameter to cropTo. No question, no problem with the last part. But the issue here is: what does this mean? Because modern browsers use process separation of iframes, which means there's going to be IPC for both state propagation and postMessage.

F

So, looking at the earlier example of the iframe, I've added some arrows here about what would happen, and arrows going up mean out of process. Basically, you call CropTarget.fromElement, and the spec says to do state propagation, which is really, you know, pre-optimized resource allocation for cropping, which would need to go to the master process. So you send one IPC, and it also says we can't...

F

F

So this API actually serializes those three IPCs, which is slower than running the IPCs in parallel. You could have the state propagation happen and still resolve immediately, or basically have it be synchronous, in which case it would be up to cropTo to handle this race, which it can; it can handle either approach. So the API I'm proposing here, I think, is faster, simpler and still optimizable, and it satisfies the design rule that the API implementation will not block, you know, one of the exceptions for being synchronous.
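The serialized-versus-parallel claim is simple arithmetic, sketched below. The hop latencies are made-up numbers, not measurements of any browser, and the function names are hypothetical: when each hop must wait for the previous one, the total is the sum; when independent hops start together, the total is the slowest one.

```typescript
// Total latency when each hop must finish before the next one starts.
function serializedLatencyMs(hopsMs: number[]): number {
  return hopsMs.reduce((sum, ms) => sum + ms, 0);
}

// Total latency when independent hops are started at the same time.
function parallelLatencyMs(hopsMs: number[]): number {
  return hopsMs.reduce((max, ms) => Math.max(max, ms), 0);
}

// Made-up latencies for three hops, in milliseconds.
const hopLatenciesMs = [2, 3, 2];

const serializedTotal = serializedLatencyMs(hopLatenciesMs); // 2 + 3 + 2
const parallelTotal = parallelLatencyMs(hopLatenciesMs);     // max(2, 3, 2)
```

This only models the minting path; whether that path is on the user-visible critical path at all is exactly what the later discussion disputes.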

F

F

F

There might be new information later about how to optimize better, and my prediction is that we'll see new issues being opened if we don't close this gap and basically say that optimization should not influence the API. That would not be a good use of working group time. Let's be done. There's no inherent need and no developer benefit to it being async.

F

Async APIs go against the W3C design principles I mentioned earlier, which are consistency (with MediaSource getHandle), having an object constructor for new objects, and being synchronous when possible. There's also a developer cost to asynchronous APIs that is quite general. Every await is a preemption point in JavaScript. JavaScript is single-threaded, which means you don't have to worry about locking in order to read data, but as soon as you do an await, you have to reassess all state or risk data races. And also it's...

F

It spreads like wildfire because, much like const and non-const methods in C++, if a method you call is asynchronous, you have to be asynchronous, and your caller has to be asynchronous, which is unfortunate but reality. Also, having multiple failure points for extremely rare resources is risky, because web developers might not expect or test for them. And I've also shown that it's slower, delaying when postMessage can happen. I'm happy to discuss performance.
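The point about `await` being a preemption point can be shown in a few lines. This is a minimal sketch with hypothetical names (`appState`, `mintTokenAsync`, `shareSelectedSlide`); it uses only standard promises and no browser API. State read before the `await` can be stale by the time the function resumes, and the function itself had to become `async`, as would its callers.

```typescript
// Shared application state that other code may change at any time.
const appState = { selectedSlide: "slide-1" };

// Hypothetical async minting step; it resolves on a later microtask.
function mintTokenAsync(slide: string): Promise<string> {
  return Promise.resolve(`token-for-${slide}`);
}

// Reads state, awaits, then reads state again after resuming.
async function shareSelectedSlide(): Promise<{ token: string; selectedNow: string }> {
  const token = await mintTokenAsync(appState.selectedSlide); // preemption point
  // Other code may have run while this function was suspended.
  return { token, selectedNow: appState.selectedSlide };
}

const pendingShare = shareSelectedSlide();

// This assignment runs before the awaited promise resumes the function above.
appState.selectedSlide = "slide-2";
```

When `pendingShare` settles, the token was minted for the slide selected before the `await`, while `selectedNow` reflects the later selection, so the caller has to decide which one it actually meant.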

F

E

Sure. I've got two questions, but I need a quick setup for them. First, you've mentioned first principles, and I think that, yes, we should debate this on first principles. But the TAG does not only offer design principles; it also sets the meta discussion, right? It talks about how we can even have a productive discussion. So I think that it is not necessarily productive for us to discuss other browsers' optimizations, specifically when we talk about resource exhaustion and how it could serve for fingerprinting.

E

That is a valid concern, but nobody is forcing you to actually allocate resources, nobody's forcing you to actually exhaust them or to allow this. So if you think that this is a bug in Chrome's implementation, that's very good, and we can solve that bug over time, but nobody's forcing you to replicate this bug.

E

Similarly, nobody is forcing you to propagate state at one point or another. The way that it is currently phrased, you could just resolve the promise immediately, never allocate anything, never fail. So I just don't understand. If you could just explain to me in what way the current text is limiting your implementation and forcing you to make design decisions you do not wish to make.

F

Well, I'm not here to talk implementation so much as the API. I think that the API should be designed based on principles that the TAG has put forth, and I've shown three of them. I've also shown some problems that I hope can be counter-argued from first principles: why they're not important, or how they can be solved. I just don't...

E

F

E

E

No. OK, so thank you. I will start now. As Jan-Ivar has mentioned, and thank you for introducing the API, this is an API for cropping video targets. It was proposed one and a half years ago by yours truly. Chrome has implemented it, and Google products have actually started using it in origin trial, and soon in actual production when it's shipped. But right now it's already in production in very big products such as Meet, Docs and Slides, and it's battle-tested. So it...

B

E

So, as we know, cropping involves the target. The target can be in another document, which means that you cannot pinpoint the element without actually having a CropTarget. The question here revolves around whether the minting of this token should be sync or async, and I'm going to make a claim that I'm going to explain.

E

Next slide, please. Just to remind everybody of Chrome's implementation: we're not mandating that anybody else follow the same implementation, we're just explaining Chrome's direction. So, first of all, you've got the captured document on the left. The captured document mints a token, which involves an IPC in Chrome's implementation, not necessarily in anybody else's implementation. Once you've got this CropTarget, it's not only that the captured document has it. The browser is now aware of it, and the browser knows...

B

E

E

Next slide, please. So, as mentioned: simpler, more performant. And you'll notice that there are no race cases here, because by the time that the captured document, I'm sorry, the capturing document, gets the token, that token is ready to be used. Whereas with some alternatives that have been debated at length on issue number 17, there was basically an IPC being sent in both directions at the same time, one to the browser process and one to the capturing document, and you can imagine that there are cases where one IPC would arrive faster than the other.

E

Next slide, please. Oh, sorry, and one more. OK, like this. So basically, I claim that we've got an argument here between a party that needs this to be async and a party that just wants it to be sync, and then the question is why. Chrome needs it because we've got a certain set of trade-offs. Other sets of trade-offs are possible, but we've got our own philosophy and we want to implement according to it.

E

Mozilla could implement according to their own philosophy regardless of whether this is sync or async, but they want this to be sync. Now, that's fine, but for that they need to show that there is an actual problem with making this async, regardless of implementation, or that one of the constituencies is actually negatively impacted by this. Next slide, please.

E

So, as we know, this is the text. It's a little bit much, but that's the original text with a reduction. The needs of users come first, then web developers, then browser implementers, spec authors, and then a surprise at the end. Let's examine how almost all of them are impacted, because I think spec authors are a little bit irrelevant right now. Next slide, please. So, users: users don't know if this is sync or async, and they don't care. Users want their software to be performant, available on all browsers, and available yesterday.

E

Well, we can guess what they think, or we could ask them. We have asked them, and they've provided feedback. They've said that they simply don't care if this is async. Most developers that are going to use this are already maintaining very large, very complex applications, millions of lines, and they don't care if we just add the word await.

E

Now, we could think that in theory maybe this slots right into the one place where it would otherwise be a problem, but so far no web developer has come and explained that this was an issue for them. Also, on GitHub, we have discussed how, if this were an issue, we could actually have worked around it.

E

So the third constituency is implementers. I happen to be an implementer, and I represent other implementers in Chromium, and we say that this is imperative for us. Whereas the other two constituencies did not care, we do care, and because it's of no impact to them, now it's our turn. Next slide, please. So, is there a problem, if the constituencies are not the thing that is going to make us decide to make this synchronous?

E

E

E

So, first of all, about the API as a whole, they said: we reviewed it and we're satisfied. But then, when this debate raged for a few months, the TAG also made a comment on the meta discussion here, and I think that we should actually pay attention. So let's read. First, Sangwhan said: I have looked at this discussion and think that developer ergonomic gains would be minimal. I don't see a significant gain in terms of ergonomics for developers.

E

"We think..." Then he said it's fine for it to be async. So this looks good. That was first principles. But now let's talk about the meta discussion. Dan said we can feed back that interoperability is an imperative, and then he said the issues of interop are many. This is something that should be guiding the working group's work.

E

E

E

Furthermore, I think that it's a more flexible solution, right? Because if you've got a synchronous API, it's trivial to make it asynchronous, and by making it synchronous we would actually be constraining implementations such as Chrome's implementation, and I think that would be inappropriate. I also think that this debate has gone on for too long. I think that we can do much better and move on to more productive work by just going with the interop solution.

A

E

E

E

We wouldn't want to do that. The other way that we could go is to embed all of the necessary information in the CropTarget when we mint it, do that in the render process on the top left, on the captured side, and simultaneously send it both to the capturing document and to the browser process, so that when the capturing process goes and calls cropTo and asks the browser, hey, I want to start cropping to this, yes or no?

E

This would already be there. But the problem is that it is not guaranteed that the CropTarget is going to reach the browser process first from the captured document and not from the capturing document. Now, it could be said that, yes, this is an edge case, you can optimize for that. I think that Jan-Ivar said that on GitHub, at the very least. But I don't think that's worth the complexity, because now we need to have complete... I think Youenn has also commented to that effect.

E

So now we need to introduce very complex code that says: OK, if it's already here, I do one thing, but if it's not here, then I'm going to communicate back with the original render process and check: hey, are you still here? Did you just post this to me? Et cetera. Sure, such implementations would be possible, but there's always a trade-off between complexity and everything else, and we do not believe that this trade-off would be favorable. Yes, Youenn?
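The race described here, where a crop request can reach the browser process before the token's registration does, can be modeled in a few lines. This is a toy model under stated assumptions, not Chrome's or any browser's code: `BrowserProcessModel`, `registerToken`, and the string tokens are hypothetical, and a real implementation would also have to deal with tokens that never arrive, which is part of the complexity being argued about.

```typescript
type CropOutcome = "applied" | "pending";

// Toy model of a browser process receiving two independent messages per token.
class BrowserProcessModel {
  private knownTokens = new Set<string>();
  private waitingCrops = new Set<string>();
  readonly appliedCrops: string[] = [];

  // Message from the captured document: a token was minted.
  registerToken(token: string): void {
    this.knownTokens.add(token);
    // If a crop request arrived first, apply it now.
    if (this.waitingCrops.delete(token)) {
      this.appliedCrops.push(token);
    }
  }

  // Message from the capturing document: crop to this token.
  cropTo(token: string): CropOutcome {
    if (this.knownTokens.has(token)) {
      this.appliedCrops.push(token);
      return "applied";
    }
    // The registration has not arrived yet, so park the request.
    this.waitingCrops.add(token);
    return "pending";
  }
}

const browserModel = new BrowserProcessModel();
const earlyCrop = browserModel.cropTo("t1"); // arrives before the registration
browserModel.registerToken("t1");            // the parked request is applied here
const lateCrop = browserModel.cropTo("t1");  // the token is known by now
```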

D

So I'm surprised that you're saying that this is a must. In past discussions, I think we agreed that this was implementable synchronously in Chrome, or in any browser. My understanding was that you were favoring Chrome's current trade-offs, meaning that you prefer to do the pre-allocation at the time the CropTarget is created and not at the time of cropTo, and this is the main motivation for an asynchronous API.

D

I pointed out in the past that this is a fingerprinting issue, and a potential interop issue as well. So that's why I think Chrome's current trade-offs with pre-allocating things might be fine, but it's also a footgun. In general, I think that you prefer your current approach, but you still agree that the synchronous API can be implemented with similar trade-offs, except that you think the implementation might be a bit more complex. So that's really...

D

I'd like to finish what I'm saying. So that was my first point. Maybe I misunderstood, but I'm trying to narrow down exactly why you're asking for an asynchronous API. I'm also surprised by the two presentations, by the fact that they're both claiming that their approach is more efficient, that their approach is faster. They cannot both be faster; one needs to be faster than the other, or maybe it's different cases. But it's a bit...

D

D

Usually synchronous APIs are more efficient, except if you know that there will be something blocking, like going to hardware, going to files, hopping to a thread and so on. Here I'm not hearing that. So I think that a synchronous API helps web developers a bit. It's not a huge deal, but it's still a small, important thing.

E

OK, I would like to answer. You've made two points. The first point included a claim about resource allocation. I think that is irrelevant, because resource allocation is not mandated by making this async. It's a design decision that we've made, and we can always roll it back. You've correctly pointed out the deficiencies of that, and it's up to us to decide what to do with that. We're not mandating that you do anything similar just by making it async.

D

Can I answer that? You're saying: we implemented this, and based on our implementation we learned a lot of things, and we think that we should build on that to design the API. What we're seeing is that with your current implementation, there are some potential issues with regard to security and privacy, and they are not solved.

D

So I would feel much more comfortable if they were solved and you could then say, hey, we solved all these things, and now we can say that this is good. Because you're asking for asynchronicity exactly for that reason, and that reason is actually that your implementation has some issues. So that's why it's weakening the asynchrony point.

E

No, that is not true. It would be very easy for us to actually change the implementation in the following way: each iframe has its own limit, and therefore there is no leakage of information between iframes. That would be trivial, and therefore I don't think that this is relevant. And you don't need to have a limit on anything, right? So I think that this is a red herring. Your second issue was about which one is faster, and that isn't something that needs to be debated.

E

It is possible that for each implementation, different things will be faster. But the claim that Jan-Ivar made about serializing multiple IPCs was unfortunate. I didn't have time to counter that, and the counter is that you can mint the CropTarget whenever you want. You can do this while the user is interacting with the prompt, right? You can do that before you even display the prompt to the user. So these are not in serial. These are quite...

E

E

That's when you need to be fast. Everything that came before that, the user did not notice: the page loaded, the page minted the CropTarget, the page posted the CropTarget in between its various sites, and all of this time the user was just moving slowly to start the capture. By the time we started the capture, all of this cost is already paid.

D

D

So I think we are agreeing that if you create a CropTarget, postMessage it and receive it on a page, then the synchronous API is faster than the async one. What you're saying, then, is that the actual big latency is in cropTo, and that you think optimizing CropTarget generation and this code path is negligible, because cropTo will take a lot of time. Is that correct?

E

I

don't

want

to

say

that

everything

you

said

was

correct,

but

I

can

say

the

following

and

you

can

decide

I'm

saying

that

everything

that

comes

before

crop

2

and

before

get

display

media

are

called,

is

irrelevant

and

all

of

those

costs

are

indeed

negligible

and

the

cost

that

matters

is

once

the

user

decides

to

start

capture

and

capture

starts.

If

you

now

crop

to

how

quickly

does

that

happen,

and

because

now

there's

only

a

single

IPC

round

trip

that

is

faster.

That

is

my

claim.
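
The ordering argument in this exchange can be sketched in code. This is only an illustration under stated assumptions, not any browser's implementation: `CropTargetLike`, `CroppableTrack`, `CaptureDeps`, `startCroppedCapture` and `makeFakeDeps` are hypothetical names, `mintTarget` stands in for an asynchronous `CropTarget.fromElement(element)`, and `startCapture` stands in for `getDisplayMedia()`, which waits on the user. The point it shows is that minting can overlap with the capture prompt, so only `cropTo` sits on the path the user notices after capture starts.

```typescript
// Hypothetical stand-ins for the real browser objects.
interface CropTargetLike { readonly brand: "crop-target" }
interface CroppableTrack { cropTo(target: CropTargetLike | null): Promise<void> }

interface CaptureDeps {
  mintTarget(): Promise<CropTargetLike>;   // models an async CropTarget.fromElement(el)
  startCapture(): Promise<CroppableTrack>; // models getDisplayMedia(), gated on the user
}

async function startCroppedCapture(deps: CaptureDeps): Promise<CroppableTrack> {
  // Start minting right away but do not await it yet,
  // so it runs while the user is looking at the prompt.
  const targetPromise = deps.mintTarget();
  // The user takes as long as they like to approve the capture.
  const track = await deps.startCapture();
  // In the common case the target resolved long ago, so this adds no wait.
  const target = await targetPromise;
  // The one step that happens after capture has started.
  await track.cropTo(target);
  return track;
}

// Fake dependencies that record the order of events, for running outside a browser.
function makeFakeDeps(events: string[]): CaptureDeps {
  const sleep = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));
  return {
    async mintTarget() {
      await sleep(5); // simulated minting cost
      events.push("target minted");
      return { brand: "crop-target" };
    },
    async startCapture() {
      await sleep(30); // simulated user think time at the prompt
      events.push("capture started");
      return {
        async cropTo() { events.push("cropped"); },
      };
    },
  };
}
```

With the fake timings above, the minting cost is fully hidden behind the prompt. Whether that holds for a real page depends on when the page mints its target, which is the point under debate.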

I

Yeah,

I'm

I

don't

know

anything

about

the

implementation,

but

from

the

point

of

view

of

a

developer,

who

does

actually

have

an

app

a

small

few

hundred

line

app

that

would

do

this.

I

have

a

mild

preference

for

it

being

synchronous

because

it's

slightly

tidier

and

slightly

easier

to

use,

but

I'd

sacrifice

that

if

I

was

convinced

there

was

a

user

benefit

or

there

was

a

developer

benefit

and

I

don't

see

a

developer

benefit

to

it

being

to

becoming

async.

I

I

imagine

there

might

be

a

user

benefit

in

terms

of

like

the

pre-crop,

somehow

not

like,

not

not

not

seeing

the

whole

thing

and

then

then

it's

shrinking

down

to

the

crop

area

and

if

there's

a

visual

twitch,

that's

unavoidable,

then

like

obviously

getting

rid

of

that's

a

huge

win.

So

that

would

be

kind

of

my

only

overwhelming

argument.

There

is,

if

that's

somebody

could

convince

me

that

that

was

going

to

happen,

then

I

would

kind

of

be

interested.

I

I

think

you

know.

I

The

other

issue

from

the

developer

point

of

view

is,

is

about

whether

the

top

level

page

sees

whether

there's

a

failure

in

with

whether

you're

interested

in

any

kind

of

failures

in

the

resolving

on

cropTo,

on

obtaining

a

target.

Is

there

a

way

of

of

an

interesting

crop

target

failure

mode,

because

that

would

convince

also

convince

me

that

that

it

needs

to

be

async.

But

I

haven't

heard

that

so

I'm

kind

of

unconvinced

that

it

needs

to

be

async,

but

I'm

listening

for

reasons

from

a

developer.

E

Point

of

view,

the

claim

has

never

been

that

this

can

only

be

implemented

if

async.

The

claim

has

always

been

that

there

are

multiple

sets

of

trade-offs.

One

of

the

sets

of

trade-offs

requires

this

to

be

async

for

that

trade-off,

and

there

is

one

implementation

that

wishes

to

go

for

that

set

of

trade-offs

and

the

other

implementations

are

not

going

to

suffer.

Developers

are

not

going

to

suffer

and

users

are

not

going

to

suffer

and

therefore

it's

quite

appropriate

to

to

care

about

that

implementer's

needs.

I

I

I

don't

think

it's

even

negligible.

I

did

like

I've

had

a

thing

where

you

know

something:

low-level

becomes

an

async

method

and

then

you

have

to

spend

an

hour

making

sure

that

that

kind

of

gets

compartmentalized

and

doesn't

spread

its

way

throughout

the

whole

damn

code.

So

it's

not

trivial,

but

it

isn't

insoluble

by

any

means.

E

I

E

E

F

F

Since

you

mentioned,

there

were

no

downsides,

but

nobody

is

claiming

the

Chrome's

optimizations

aren't

useful.

Clearly

they

are,

but

I'm

confused.

It

sounds

like

you're

claiming

Chrome

cannot

do

the

same

optimizations

with

the

synchronous

API

with

a

completely

synchronous

API,

and

I

don't

understand

that

because

you

haven't

actually

explained

why

you

need

it

to

be

async,

except

for

the

fact

that,

instead

of

falling

back

to

something

slower,

you

need

to

fail

to

the

web

developer.

F

Why

can't

you

implement

a

fallback

and,

regarding

the

let

me

list,

the

the

downsides

again

to

users

attack

on

the

cropping

surface

to

defeat

it

defeated

cropping,

might

leak

private

information,

resource

leaks,

expose

fingerprinting

info

and

to

web

developers.

Async

is

harder

to

use,

introduces

data

races

and

proliferation

of

async

web

developers

have

to

deal

with

failure

and

for

spec

authors.

User

agents

have

been

opening

new

issues

on

the

spec

because

of

the

they're

chasing

this

implementing

only

an

optimized

design.

Only

right.

B

B

B

B

B

F

B

E

I

do

not

see

any

benefit

in

continuing

with

this

discussion.

I

think

that

it

has

gone

well

enough.

I

think

that

we've

got

many

of

many

other

things

to

discuss

on

first

principles

and

much

more

progress

to

the

to

have

elsewhere.

It

could

be

that

you

know.

Maybe

this

is

the

suboptimal

decision

here.

Maybe

you

are

making

a

mistake,

but

this

is

quite

a

small

mistake

and

we,

our

time,

would

be

better

spent,

making

other

smaller

mistakes

that

advance

the

web

and

not

dwell

in

on

this

particular

one.

E

D

C

I

can

suggest

two

paths.

One

would

be

to

organize

a

formal

vote

of

the

working

group,

which

would

say

each

member

organization

gets

one

vote

and

we

call

it

that,

and

this

is

used

to

determine

the

path

forward.

The

other

approach

is

to

leave

that

issue

open.

I

guess

what

I'm

hearing

is

that

a

lot

of

it

is

bound

to

implementation

experience.

So

maybe

we

leave

that

open

until

we

get

other

implementation

experience.

That

shows

an

alternative

path

and

brings

more

clarity

as

to

what

the

right

tradeoff

should

be.

B

I

D

You

in

okay,

so

issue

18

is

target

name

too

generic.

So

when

I

read

the

spec

and

I

saw

crop

target,

I

wasn't

sure

exactly

what

it

meant

it's

made

of

two

very

generic

terms,

crop

and

target,

and

it

it

wasn't

very

clear

to

me

what

what

the

scope

was.

I

wasn't

even

sure

whether

crop

was

a

good

idea,

given

it's

used

a

lot

in

images,

so

I

was

thinking

that

maybe

it

would

be

used

quite

a

lot

in

CSS

or

HTML,

and

so

on.

It

appears.

D

HTML

is

mostly

about

masking

and

clipping

not

cropping,

so

crop

is

not

used

a

lot

in

web

APIs,

so

it's

probably

fine

to

use

it,

as

I

see

it,

target

does

not

bring

much

value

so

to

so.

I

thought

to

myself.

Ideally,

we

would

like

a

name

that

is

very

clear

about

what

the

object

is,

what

what

its

definition

is

and

so

on.

So

next

slide

is

looking

at

different

definitions

that

we

could

have

for

target

and

we

can

define

it

in

different

ways.

It

could

be.

D

So

I

I

looked

at

all

three

definitions

next

slide

and

I

think

that

initially

I

was

inclined

to

go

with

just

the

fact

that

it

was

an

element

reference

or

maybe

a

bounded

box

to

an

element

reference,

but

cropTo

is

a

MediaStreamTrack

method

currently

dedicated

to

screen

capture,

but

maybe

in

the

future

it

might

be

cropTo

might

be

used

for

other

tracks.

For

instance,

if

you

have

face

detection

somehow,

maybe

you

want

to

do

a

face

detection

cropping

as

well

and

maybe

cropTo

would

be

fine.

D

I

don't

know

so

that's

why

I

changed

my

mind

a

little

bit

and

I

thought

that

the

third

definition,

which

is

it's

an

object

with

sole

purpose

to

be

given

to

cropTo,

is

the

definition

that

we

want

and

then

we

can

have

multiple

definitions.

Somehow

it

could

be

a

based

on

element

or

it

could

be

based

on

something

and

based

on

that,

I

think

that

crop

target

is

less

good

than

crop

region.

D

This

way,

we

are

clear

that

it's

not

related

to

targeting

an

element

or

whatever,

so

I

would

favor

crop

region,

which

would

also

align

with

a

spec

name,

the

spec

name,

it

media

capture,

region

or

screen

capture

region.

I

I

don't

know

that

region

in

the

name

of

spec

region,

capture,

region,

capture,

yeah.

So

I'm

thinking.

L

D

What

about

crop

region?

It

seems

to

me

that

we

could

say

in

the

spec

that

it's

very

clear

that

the

object's

purpose

is

really

to

be

given

to

cropTo.

We'll

change

a

little

bit,

the

name

as

well,

and

then

we

would

no

longer

have

to

deal

with

the

fact

that

yeah,

it's

just

a

reference

to

an

element

and

so

on,

because

we

will.

D

I

Okay,

yeah,

okay

yeah,

so

I

just

wanted

to

say

that

it's

I

think,

if

we're

gonna

change

the

name,

we

should

make

it

so

or

come

up

with

a

better

name.

I

think

we

should

add

the

fact

that

it's

a

token

it

isn't

a

region,

it's

a

token,

to

a

region.

It

isn't

a

target,

it's

a

token,

to

a

target.

It

should

be.

If

we're

going

to

make

a

name,

we

should

say

that

it's

an

opaque

thing

and

that

should

be

in

the

name.

D

E

I

have

similar

reservations

about

crop

region.

I

also

read

it

initially

as

being

like

a

set

of

coordinates

that

I

would

be

able

to

read,

which

is

wrong,

so

that

would

be

misleading

and,

additionally,

it

kind

of

sounds

like

it's

static

right.

It's

a

region,

whereas

a

crop

target

is

something

that

can

move

because

the

target

can

move,

and

that

is

important,

like

the

entire

reason

that

we

have

crop

target

and

not

a

set

of

coordinates

is

that

that

thing

can

move,

and

that

can

happen

asynchronously

from

the

capture.
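
The distinction drawn in the last two turns, a readable and static set of coordinates versus an opaque token for something that can move, can be modelled in a few lines. This is a conceptual sketch only: `Rect`, `FakeElement`, `TargetToken` and `TargetToken.resolve` are hypothetical names invented for illustration, and the real `CropTarget` exposes no such method to pages. In this model the page holds the token but cannot read coordinates from it, while the capturer resolves it afresh for each frame.

```typescript
interface Rect { x: number; y: number; width: number; height: number }

// Hypothetical model of an element whose layout box changes over time.
class FakeElement {
  constructor(public bounds: Rect) {}
}

// Hypothetical opaque token: it carries no public coordinates.
class TargetToken {
  private readonly element: FakeElement;
  constructor(element: FakeElement) { this.element = element; }

  // Models what the capturer (the user agent) would do once per frame:
  // look up where the element is right now.
  static resolve(token: TargetToken): Rect {
    const b = token.element.bounds;
    return { x: b.x, y: b.y, width: b.width, height: b.height };
  }
}

const element = new FakeElement({ x: 0, y: 0, width: 100, height: 50 });

// A "region" read as coordinates is a snapshot taken at one moment.
const staticRegion: Rect = { x: 0, y: 0, width: 100, height: 50 };

// A token keeps following the element.
const token = new TargetToken(element);

// The element moves after both were created.
element.bounds = { x: 40, y: 10, width: 100, height: 50 };

const followed = TargetToken.resolve(token);
console.log(staticRegion.x, followed.x);
```

In this model the snapshot still describes the element's old position, while resolving the token yields its new one, which is why a name suggesting readable, fixed coordinates could mislead.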

B

A

D

B

B

E

10

minutes

behind

schedule

I'll

do

my

best

to

make

up

the

gap.

So

now

we're

going

to

talk

about

cropping

to

non-self

capture

tracks.

Currently,

you

can

only

crop

a

capture

of

the

current

tab.

I'm

not

sure

why.

So

I

would.

I

suggest

that

we

allow

cropping

arbitrary

tab

captures.

I

think

that

there

are

compelling

reasons

so,

for

example,

if

the

next

slide,

please.

E

E

So

yes,

so

to

me

what

we

see

here

that

the

local

user

can

see

all

of

the

slides,

including

the

sidebar

on

the

left,

but

nevertheless

they

can

crop

and

only

show

the

slide

not

show

the

Meet

on

the

right

not

show