
From YouTube: WebRTC WG meeting 2022-05-17

Description

See also the minutes of the meeting https://www.w3.org/2022/05/17-webrtc-minutes.html

01:05 Future meetings

04:00 WebRTC

04:00 RTP Header Extension Encryption

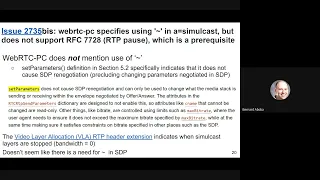

12:00 Issue #2735: webrtc-pc does not specify what level of support is required for RFC 7728 (RTP pause)

16:00 CaptureController

36:14 WebRTC Encoded Transform

39:29 Extensions to Media Capture and Streams

39:30 PR #61: Add support for background blur and configuration change event

52:44 PR #59: Add powerEfficientPixelFormat constraint

1:02:14 Dynamic Source for Screensharing

1:35:30 WebRTC

1:35:38 Simulcast issues

1:45:36 Next meeting

B

A

B

D

D

A

A

B

B

B

B

B

B

B

B

So you put a=cryptex on all the m-lines in a browser that supports cryptex, and then you can set the policy: either "negotiate", which means you'll accept it not being supported by the other side, or "require", which means that the other side has to support it or you'll fail. Then on each transceiver you get a little attribute that tells you whether it was negotiated or not. So that's basically what we had in the API.

B

So what can happen is that a browser can send an offer to a peer that doesn't support it or, of course, you can get an offer from a peer that doesn't support it. Basically, the cryptex extension is what's called bundle "TRANSPORT", and it only requires that you have a=cryptex in all the m-lines of a bundle group. But you can have multiple bundle groups, or you might not be bundling, so it doesn't require that all m-lines be identical.

B

So here's an example that Harald came up with, where you have basically one bundle for audio and the other for video, so everything's not on the same port. This would be an offer you got from a non-browser. What's happening here is that, for some reason, the audio is doing cryptex but the video is not; maybe it's a legacy video system that doesn't support cryptex.

B

So, if you put in "require" as your RTP header encryption policy, the browser would reject this offer. But if you put in "negotiate", basically what will happen is that the browser would send cryptex for audio but not for video. So you can get these situations where it's sending it on some bundles but not others. I think that's basically what will happen if it's negotiated, and "negotiate" probably has to be the default.
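The per-bundle-group outcome described here can be sketched as a small decision function. This is only an illustrative model, not the algorithm from webrtc-pc or the PR: the `SketchMLine` shape, the `evaluateOffer` helper, and the rule that a group uses cryptex only when every m-line in it carries a=cryptex are assumptions drawn from this discussion. The policy values "negotiate" and "require" are the ones named by the speaker.

```typescript
// Illustrative model only; every type and function here is hypothetical.
type SketchPolicy = "negotiate" | "require";

interface SketchMLine {
  mid: string;         // media identifier of the m-line
  bundleGroup: string; // name of the bundle group the m-line belongs to
  hasCryptex: boolean; // true when the m-line carries a=cryptex
}

interface SketchResult {
  accepted: boolean;                            // false when "require" rejects the offer
  cryptexByGroup: { [group: string]: boolean }; // whether cryptex would be used per group
}

function evaluateOffer(policy: SketchPolicy, mLines: SketchMLine[]): SketchResult {
  const cryptexByGroup: { [group: string]: boolean } = {};
  for (const m of mLines) {
    // Assumption: a group uses cryptex only if every m-line in it offers a=cryptex.
    const soFar = m.bundleGroup in cryptexByGroup ? cryptexByGroup[m.bundleGroup] : true;
    cryptexByGroup[m.bundleGroup] = soFar && m.hasCryptex;
  }
  let allGroups = true;
  for (const group of Object.keys(cryptexByGroup)) {
    if (!cryptexByGroup[group]) allGroups = false;
  }
  // "require" fails the whole offer if any group lacks cryptex; "negotiate" accepts it.
  const accepted = policy === "negotiate" || allGroups;
  return { accepted, cryptexByGroup };
}

// The example from the discussion: audio offers cryptex, video does not.
const sketchOffer: SketchMLine[] = [
  { mid: "a", bundleGroup: "audio", hasCryptex: true },
  { mid: "v", bundleGroup: "video", hasCryptex: false },
];
const sketchNegotiated = evaluateOffer("negotiate", sketchOffer);
const sketchRequired = evaluateOffer("require", sketchOffer);
```

With "negotiate" the offer is accepted and cryptex is used on the audio group only; with "require" the same offer is rejected.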

D

B

B

I don't know if we need more examples of how this works, but this is what's in the PR. It is a little complicated because you do have to change some of the steps in webrtc-pc to set the policies and stuff like that. And then we have this extension, this attribute of the transceiver, which is the rtpHeaderEncryptionNegotiated attribute.

B

C

F

F

B

B

B

B

D

G

B

B

Yeah, okay, I think we've got as much as we can out of that one. So I do want to try to cover issue 2735. It's been changing a little bit; this is what it looked like when the issue was originally filed. Basically, what this is about is some confusion that I think may exist about RFC 7728.

B

G

I can speak to this very briefly. There is one place in webrtc-pc where it specifies paying attention to the simulcast RID paused flag, that is, the little tilde that you put in the SDP in the simulcast attribute. So we've got one place in setRemoteDescription, for processing remote answers, that specifies looking at that paused flag from RFC 8853 and then setting the active bit on the encoding parameters to reflect that state.

G

But if you're gonna support that flag, that little tilde, that requires support for RFC 7728. So my question was: okay, if we're gonna be paying attention to that, don't we need to support RFC 7728? It seems like people are kind of leaning towards removing that language from webrtc-pc, so we would just ignore that little RID paused flag, and that makes the problem go away.
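To make the "little tilde" concrete, here is a minimal sketch of reading the paused flag out of an a=simulcast line. It assumes the RFC 8853 list syntax (";" between simulcast streams, "," between alternatives, "~" prefix for initially paused). The helper names and the mapping from a paused RID to `active = false` are illustrative assumptions about the step being discussed, not text from webrtc-pc.

```typescript
interface SketchRid { rid: string; paused: boolean; }

// Parses one direction's list, e.g. "h;~m;~l".
function parseSimulcastList(list: string): SketchRid[][] {
  return list.split(";").map((alternatives) =>
    alternatives.split(",").map((token) => {
      const paused = token.charAt(0) === "~"; // leading tilde marks "initially paused"
      return { rid: paused ? token.slice(1) : token, paused };
    })
  );
}

// Parses "a=simulcast:<dir> <list> [<dir> <list>]" into per-direction lists.
function parseSimulcast(line: string): { send: SketchRid[][]; recv: SketchRid[][] } {
  const prefix = "a=simulcast:";
  if (line.indexOf(prefix) !== 0) throw new Error("not a simulcast attribute");
  const parts = line.slice(prefix.length).trim().split(/\s+/);
  const result = { send: [] as SketchRid[][], recv: [] as SketchRid[][] };
  for (let i = 0; i + 1 < parts.length; i += 2) {
    if (parts[i] === "send") result.send = parseSimulcastList(parts[i + 1]);
    if (parts[i] === "recv") result.recv = parseSimulcastList(parts[i + 1]);
  }
  return result;
}

// Assumed mapping: a RID the answerer lists as paused in "recv" becomes active=false locally.
function activeFlagsFromAnswer(line: string): { [rid: string]: boolean } {
  const flags: { [rid: string]: boolean } = {};
  for (const alternatives of parseSimulcast(line).recv) {
    for (const entry of alternatives) flags[entry.rid] = !entry.paused;
  }
  return flags;
}

const sketchFlags = activeFlagsFromAnswer("a=simulcast:recv h;~m;~l");
```

Removing the language from webrtc-pc, as suggested above, would amount to dropping the last function and leaving every encoding's active bit alone.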

B

F

B

B

D

D

D

We have Capture Handle Identity and Actions, which exist to let apps keep the user in the VC tab. Also, we're hearing from some screen recording apps that it's too soon to focus the new screen because the user hasn't hit record yet, and they're asking for a way to focus later, which is not part of the proposal at the moment. But this is about providing a place where we can put potential controls and have these discussions later. So, where to put a focus-control API? Why not the video track?

D

What that means is that you can have one source and many consumers, where each track basically has its own constraints, and the constraints mechanism will arbitrate between them: for some properties on a source that are not shareable, you can use constraints to negotiate. But in practice, most browsers implement things like downscaling and frame decimation per track. So that means that these tracks can have independent settings, and that's fine for some APIs. For example, we have new methods on the track like cropTo, which is basically per clone.

D

So if you crop one track clone, it doesn't affect another. However, imagine a new track focus method, say: it would affect all clones, and that's a leaky abstraction, because the user's focus is not an inherent property of a single video track but of the source. The other problem with putting it on the video track is: why only the video track? What about the audio track? Do they both have this property? If so, why? If not, why not?

D

D

It's an API to send supported actions to the captured page that we're still discussing; it's still early times for it. But it also seems misplaced on the MediaStreamTrack, where it is right now, and the reasons are the same: basically, one source, many consumers. And, unlike cropTo, sendCaptureAction would affect all clones. Again, a leaky abstraction, because progression of the captured page isn't the property of a single video track. And same thing: will this appear on both the audio and the video track? Who knows.

D

D

D

D

This controller is associated one-to-one with this getDisplayMedia call, and this is a new advantage. Unlike, say, mediaDevices: if you get an event on mediaDevices, you don't know which specific capture it is for, since you can have multiple captures going on at the same time. But here you have an object dedicated to this particular capture, so there are things we could put here later if we wanted to.

D

This could solve identity, where you could put origin and handle directly on this controller object, and you could have a focus method on the controller that returns a promise. So the idea here is that if you don't specify a controller, you get backwards-compatible behavior: the browser will automatically focus the window or tab. But if you specify a controller, it will not automatically focus unless the application calls focus. The application could call this even before calling getDisplayMedia, or it could call it shortly after. And in the past...

D

I don't believe... and this kind of solves that, because we have a dedicated controller object for this capture only. And same for actions: we could also put getSupportedActions on this controller, and if actions are supported you would get a non-empty array; otherwise you'll get an empty array. And you can send an action using your controller.sendAction, "next slide" for example. And that's it.
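The shape being proposed can be mocked up outside the browser to show the intended behavior. Everything below is a hypothetical stand-in written for illustration: `SketchCaptureController`, `sketchGetDisplayMedia`, and the action name "nextSlide" are assumptions, and the mock is synchronous for simplicity, whereas the proposal has focus return a promise.

```typescript
// Hypothetical stand-in for the proposed controller; not a real browser API.
class SketchCaptureController {
  focusCalled = false;
  sentActions: string[] = [];
  private actions: string[];

  // In the proposal the supported actions would come from the captured page;
  // the constructor argument here only simulates that.
  constructor(actionsSupportedByCapturedPage: string[] = []) {
    this.actions = actionsSupportedByCapturedPage;
  }

  focus(): void {
    this.focusCalled = true;
  }

  // Empty array means actions are not supported for this capture.
  getSupportedActions(): string[] {
    return this.actions.slice();
  }

  sendAction(action: string): boolean {
    if (this.actions.indexOf(action) === -1) return false;
    this.sentActions.push(action);
    return true;
  }
}

// Mock of the capture call: without a controller the captured surface is focused
// automatically (backwards compatible); with one, only if the app asked for focus.
function sketchGetDisplayMedia(options: { controller?: SketchCaptureController }): { surfaceFocused: boolean } {
  const controller = options.controller;
  if (!controller) return { surfaceFocused: true };
  return { surfaceFocused: controller.focusCalled };
}

const sketchLegacy = sketchGetDisplayMedia({});
const sketchQuiet = sketchGetDisplayMedia({ controller: new SketchCaptureController() });

const sketchEager = new SketchCaptureController(["nextSlide"]);
sketchEager.focus(); // called before the capture starts, as the speaker allows
const sketchFocused = sketchGetDisplayMedia({ controller: sketchEager });
const sketchSent = sketchEager.sendAction("nextSlide");
```

Because the controller is handed in by the caller, it stays with the code that started the capture and is not reachable from track clones.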

A

Yes, hello. I would need to think about this a bit more, but generally the controller pattern seems nice to me. If this helps us move forward with all sorts of proposals, all the better. A couple of questions. Number one: would you be open to exposing a getter for the controller on a track, or would you consider that this actually goes against the grain of your proposal? And number two: what about a proposed new API that returns several streams?

D

Sure. On the first question, I think it would be a feature to not expose it on the track. So I would discourage having a method to get to it, because the goal here was to return an object that the owner, the caller of getDisplayMedia, can keep to themselves and not necessarily distribute to every downstream media consumer that they may have, for clones and that kind of stuff.

D

A

That's one thing they could do, but another thing that you could do is say that when you clone or when you transfer, you don't actually transfer the controller, and that you do that separately. But yeah, I guess you do make a good point that if somebody really wants to attach it to the track, they can do it themselves.

D

Okay, great. And the second question: yeah, I haven't really put anything about multi-capture here, and I'm open to discussing that if we think that's useful. I guess I haven't thought it through. Intuitively, I would have expected one controller per capture, but if you can think of use cases for having a shared one, we could also entertain that, I guess.

A

I would say that if you're capturing several things at once, with an API that is not currently standardized but has been proposed, then you might actually have completely different actions for the different things. I was kind of leaning towards a model in which, depending on what you capture, whether it's a window, a tab, or a screen, you might have a different subclass of MediaStreamTrack and different things exposed.

A

It looks like a controller would have a bit more trouble doing that unless you also subclassed the controller and gave it different properties. Focus, for example, makes sense for capturing a window or a tab, but probably not for capturing a screen. But I imagine you would still want to expose focus regardless, and then what, it would raise an exception?

D

Yeah, so I put a little disclaimer at the bottom here, sort of my speaker notes down there. The idea of focus would be that, for a captured window or tab, it would resolve when the tab or window got focused, but no earlier than getDisplayMedia.

F

D

A

D

F

F

F

We should dig into that: apply this idea of separating these functionalities out of the track, and see where they should be put, whether in the same object, in a different object, in a controller kind of style, or in an object that is created by getDisplayMedia itself, and so on. And yeah, let's continue discussing it and improving it.

A

So I would have a quick question. Assuming that we agree that we want to go this way, how quickly can we actually agree that this is the shape and, you know, get it done? Because otherwise it could be that other discussions that started a very long time ago, for example Conditional Focus, would now get scheduled behind yet more discussions.

D

Right, yes. I would say that I'm not a huge fan of subclassing. It has some benefits, I understand, when a method is not there, but that's usually just going to throw in a different place. You're going to get a TypeError instead of a "can't focus" error or whatever, so it doesn't seem that different to me. I think the main benefit here is to have an object where we don't have one today, and I would certainly welcome getting this ready.

D

D

A

We do have that already. A quick question: what do you mean that focus would throw elsewhere if we subclass? I mean, I would expect that the developer would check if (!!focus) right before calling focus, if they know that this doesn't necessarily have the focus method.

D

Sure, but the benefits of subclassing that you get from C++, for example, where things don't compile and you catch it earlier, are not present in JavaScript. So you'll get JavaScript developers that just call focus because it worked when they tried it, and then something returns a different type: suddenly the user picks a different type, and "focus is not a function" is the error they would get, right? So I'm saying subclassing doesn't buy us that much in JavaScript.
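The two positions in this exchange can be shown side by side. The classes below are hypothetical stand-ins for per-surface controller subclasses, and the values are typed as `any` on purpose to mimic untyped JavaScript, where nothing flags the missing method before run time.

```typescript
// Hypothetical subclasses: only the tab variant has a focus method.
class SketchTabController {
  focus(): string {
    return "focused";
  }
}
class SketchScreenController {}

// What a developer writes after testing only with tabs: throws at run time otherwise.
function callFocusUnchecked(controller: any): string {
  return controller.focus();
}

// The feature check suggested in the question above.
function callFocusChecked(controller: any): string {
  return typeof controller.focus === "function" ? controller.focus() : "focus unavailable";
}

let sketchErrorIsTypeError = false;
try {
  callFocusUnchecked(new SketchScreenController());
} catch (e) {
  // The failure surfaces as a generic TypeError, not a focus-specific error.
  sketchErrorIsTypeError = e instanceof TypeError;
}
const sketchCheckedResult = callFocusChecked(new SketchScreenController());
```

Either way the error is only discovered when the user happens to pick the other surface type, which is the point being made about subclassing in JavaScript.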

E

So, to Elad's point, and assuming that Conditional Focus is the one topic where we have the greatest clarity, maybe CaptureController should focus, so to speak, on this. And when the time comes to look at supported actions, we can look at whether they share the same properties across the two needs.

E

A

Okay, nobody objects. What do we think this means for backwards compatibility? I mean, if CaptureController does not exist on the platform, then "controller = new CaptureController()" is probably going to throw. So that looks like less than the best solution. Hopefully there is a better solution.

F

A

D

A

F

F

So when the promise is resolved, you know that you will be able to read and get to the key frame very, very quickly. It was mentioned during the preparation of the PR and in discussions that if the promise could return the key frame timestamp, it would be a nice addition, and that's true; it would probably make life a little bit easier. But generateKeyFrame is currently able to generate several key frames from different encoders.

F

We would need to generate multiple timestamps and potentially resolve at the last timestamp, so it does not work great with multiple RIDs. So, next slide. The idea is basically to change the parameter from a sequence to just one parameter. The old version returns a promise with no value and takes a sequence of RIDs; the new version would return a promise that resolves to the timestamp, and there would be an optional RID, meaning that you can select which encoder you want.

F

B

F

F

The proposal is to add a background blur constraint. It would be a capability and a setting as well, and it will allow applications to identify whether background blur is supported by the OS. It would also allow the application to identify whether a given track is already blurred or not by the OS and, of course, with applyConstraints you would be able to switch background blur on and off.

F

So the proposal is also to make background blur a boolean constraint. For now, OSes are more or less like echo cancellation: it's enabled or not, and we could start with this. I think that we can evaluate the usefulness of a zero-to-one kind of double constraint, and if we go there, we would need to understand how to define it.

F

F

Yeah, I'm not exactly sure about the migration path. One migration would be to add a new constraint which would be a double, for instance. Maybe by the time we implement it, all OSes will have changed to a double and there will be a clear definition, so we can switch to the double before then. But yeah, starting with a boolean seems like a safe approach to me right now.

F

F

So initially the stream was not background-blurred by the OS, and after that it is background-blurred by the OS; some OSes will not allow web applications to switch it off. But in any case, there's a change of settings: it went from false to true, and web applications might want to be notified of that. Currently the only way would be to basically call getSettings over and over and over. So the proposal here is to add a configurationchange event, which will tell the web application:

F

"Hey, if you're interested in understanding what's happened, please call getSettings and getCapabilities and look at whether these things are good for you." So it would be a simple event; we would start with a simple event with no fields, nothing. And there's a small example there that says: whenever the configuration is changing, we will toggle blur back if we can. Dom, you're on the queue.
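The pattern on the slide (react to a field-less event, re-read settings and capabilities, toggle blur back if allowed) can be sketched with a mock track. This is not the browser API: `SketchTrack`, `keepBlurAt`, and the `backgroundBlur` member shape are assumptions made for illustration, and the mock's applyConstraints is synchronous, whereas the real method returns a promise.

```typescript
// Hypothetical stand-in for a camera track whose blur can be changed by the OS.
class SketchTrack {
  private blur = false;
  private listeners: Array<() => void> = [];

  constructor(private readonly appMayToggleBlur: boolean) {}

  getSettings(): { backgroundBlur: boolean } {
    return { backgroundBlur: this.blur };
  }

  // Lists the values the application could request right now.
  getCapabilities(): { backgroundBlur: boolean[] } {
    return { backgroundBlur: this.appMayToggleBlur ? [false, true] : [this.blur] };
  }

  applyConstraints(constraints: { backgroundBlur: boolean }): void {
    if (this.getCapabilities().backgroundBlur.indexOf(constraints.backgroundBlur) === -1) {
      throw new Error("constraint cannot be satisfied (sketch)");
    }
    this.blur = constraints.backgroundBlur;
  }

  addConfigurationChangeListener(listener: () => void): void {
    this.listeners.push(listener);
  }

  // Simulates the user flipping blur in the OS; fires the event with no fields.
  simulateOsBlurChange(value: boolean): void {
    this.blur = value;
    for (const listener of this.listeners) listener();
  }
}

// Re-applies the application's preferred blur state on each change, if the OS allows it.
function keepBlurAt(track: SketchTrack, preferred: boolean): void {
  track.addConfigurationChangeListener(() => {
    const current = track.getSettings().backgroundBlur;
    const allowed = track.getCapabilities().backgroundBlur;
    if (current !== preferred && allowed.indexOf(preferred) !== -1) {
      track.applyConstraints({ backgroundBlur: preferred });
    }
  });
}

const sketchToggleable = new SketchTrack(true);
keepBlurAt(sketchToggleable, false);
sketchToggleable.simulateOsBlurChange(true);

const sketchLocked = new SketchTrack(false);
keepBlurAt(sketchLocked, false);
sketchLocked.simulateOsBlurChange(true);
```

On the toggleable track the handler switches blur back off; on the locked track, which models an OS that does not let web applications change it, blur stays on and the application can only observe the new setting.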

E

F

So yeah, I forgot to mention that it would be on the track object, and it would apply to any settings or capabilities; that's my understanding. We could restrict it to only some values, but we would need to have a good reason for that, and I feel like it might be useful in the future for other properties as well.

D

F

F

F

D

F

So yeah, that's a good thing to discuss. For instance, I know that on iOS, if you disable echo cancellation in Safari, we would not be able to disable it for only one track. So there is precedent for things that are leaking already if you apply constraints on one track and not the other.

D

F

F

H

You're on the queue. Yes, I was wondering what other settings and capabilities would trigger the configuration change. You mentioned OS-level ones; which ones are they? And would it also apply if the same device was opened by multiple pages and one of them was, for example, changing the brightness or the exposure mode and different things like that?

F

Yeah, so that's the same thing with native, for instance, on macOS: if two applications are capturing, then they might compete over camera settings. What we try to do in the browser world is usually to hide this; we are sort of doing that, and we can continue doing that. The configurationchange event will be at the discretion of the user agent on that.

F

Okay, and it's true that there might be some security or privacy issues, like if you're capturing between two different origins, so you need to be cautious, and in the PR we probably need to add some warnings there. In Safari, I don't think that it would be possible, because there's only one page that can capture at any point in time. And so if the track is, for instance, muted, we should probably not fire the configurationchange event even if a configuration has changed.

B

F

So, Henrik from Google uploaded a PR which is about adding a powerEfficientPixelFormat constraint, so it seemed good to describe it here before making further progress on the PR. The issue is that some cameras generate Motion JPEG video frames, especially for some width, height, and frame rate combinations, and the OS will typically decompress

F

these video frames before feeding them to the user agent, which usually expects YUV or other non-compressed video frames. If there is hardware decoding, then the impact of actually capturing Motion JPEG is okay, but if Motion JPEG decoding is expensive, and for some machines it is, then there's a potential power impact. Apparently Google made some measurements and validated that it was an issue, and some applications would like to be able to avoid these configurations, so avoid Motion JPEG.

F

F

F

If you require a specific height, for instance... I don't know when Motion JPEG video frames are being used, but let's say it's for super HD resolutions, and you actually want to capture super HD resolutions because you just want to capture a single frame, for instance, and nothing more. In that case, maybe you don't care about using Motion JPEG. For a typical video conferencing system, I would guess you would like to enable it.

F

F

Not select Motion JPEG if it can. But if it cannot, for instance if you're setting width and height with exact constraints and there's only Motion JPEG, then it would still select it, and yeah, that's fine. But I would hope that user agents in general will try to favor not selecting power-inefficient pixel formats.
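The selection rule described here (avoid Motion JPEG when possible, but still pick it when exact constraints leave nothing else) can be sketched as a filter followed by a preference. The mode list, the `SketchRequest` shape, and treating `powerEfficientPixelFormat` as a preference that defaults to on are assumptions for illustration; this is not how any user agent is known to implement it.

```typescript
interface SketchMode {
  width: number;
  height: number;
  pixelFormat: "mjpeg" | "yuv";
}

interface SketchRequest {
  exactWidth?: number;
  exactHeight?: number;
  powerEfficientPixelFormat?: boolean; // assumed shape of the constraint under discussion
}

// Assumption: Motion JPEG is the only format treated as power-inefficient here.
function isPowerEfficient(mode: SketchMode): boolean {
  return mode.pixelFormat !== "mjpeg";
}

function selectMode(modes: SketchMode[], request: SketchRequest): SketchMode | undefined {
  // Exact constraints are hard requirements: drop every mode that violates them.
  const candidates = modes.filter((m) =>
    (request.exactWidth === undefined || m.width === request.exactWidth) &&
    (request.exactHeight === undefined || m.height === request.exactHeight)
  );
  if (request.powerEfficientPixelFormat !== false) {
    // Prefer an efficient format when one survives the exact constraints.
    const efficient = candidates.filter(isPowerEfficient);
    if (efficient.length > 0) return efficient[0];
  }
  // Otherwise fall back to whatever satisfies the exact constraints, even Motion JPEG.
  return candidates[0];
}

const sketchModes: SketchMode[] = [
  { width: 3840, height: 2160, pixelFormat: "mjpeg" },
  { width: 1280, height: 720, pixelFormat: "mjpeg" },
  { width: 1280, height: 720, pixelFormat: "yuv" },
];
const sketchHd = selectMode(sketchModes, { exactHeight: 720 });
const sketchUhd = selectMode(sketchModes, { exactWidth: 3840, exactHeight: 2160 });
```

At 720p an uncompressed mode exists, so it wins; at 2160p only Motion JPEG matches the exact constraints, so it is still selected.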

E

But I guess that's a bit what I mean: if you're asking for an exact resolution then, as you say, probably you don't care about the power; you understand that you're asking for something weird and specific. If I'm just, you know, doing my getUserMedia so that I can send it over WebRTC, I really don't want to have to tell you to do that efficiently. Just do it!

E

F

D

F

D

D

A

D

F

E

E

F

So for device selection, I totally agree. The applyConstraints thing is fine as well. There's currently no way you can discover the different parts of the camera, and that's really sad; that's something I think we should do. But in any case, having this constraint available for applyConstraints is good for device selection.

D

D

A

Hello, thank you. So I would like to talk about sharing, and specifically sharing tabs, but actually we can generalize this to sharing anything. Usually when a user gets ready to share a presentation or to explain things to people, they might have several surfaces that they might want to switch between. For example, I might have, you know, something for editing code, MDN, Wikipedia, Stack Overflow, all of them ready, scrolled to just the right place, and I'm just about to start giving the presentation. I share.

A

I show the first thing; everything's great, people can't stop themselves from applauding. Then I need to start sharing the next thing, and here it gets a bit tricky. Next slide, please. Because how do I even go about starting to share something else? If I'm not using Google Meet, I need to go to the tab for the video conference, right? So I need to find that out of potentially many tabs and many windows, and then I need to trigger another call to getDisplayMedia, right?

A

Instead, obviously, we would like to ship that for absolutely all websites out there, but that's a little bit problematic. Next slide, please. Because until now this did not exist, many applications are built with the assumption that it does not exist. If they get something, and especially if they establish a connection using Capture Handle (but they can do it using other means), they might start relying on that, right? They could expose controls.

A

So here we've got one example. Currently, if you're using Google Slides or Google Docs, you can embed Meet inside, and it kind of assumes that you're capturing the current tab. If you try to capture any other tab, it tells you, "Hey, what are you doing?" and it shows you an error. Sure, it could also transmit that other tab remotely, but that's a product decision, right? That's not a decision for the user agent.

A

If, for some reason, the application does not think that the user should be able to choose anything else, so be it. And if the user has already chosen the right thing, and all sorts of nice things are happening, and the user is happy, we don't want to confuse the user by showing him a button which, once pressed, breaks everything down. Next slide, please.

A

Similarly, let's say that we've got two tabs going, and one of them is capturing a slides deck, and we actually remote-control the tab. Then maybe we switch to capturing Docs, or maybe we started by capturing Docs, and we can actually scroll in the middle and that scrolls the captured tab too. The user might think, "Hey, that's just the general thing that can happen, right? If I capture another tab, I can scroll it from this tab." Then they try to share another tab, it doesn't work, and they're upset. Next slide, please.

A

So, next slide, please. I suggest that the solution here is mind-bogglingly simple. All we need to do is just expose an extra boolean. The application can tell us if it's interested in dynamic sources, and then it's up to the browser to decide what dynamic sources it wants, how it exposes it, all of the normal things. It could even disregard what the application says or not; that's up for discussion. But at least the application...

A

F

Yeah, I'm a bit surprised by this API surface. We need to think a bit more about the use case and so on, because it seems that maybe, for the self-capture thing, the user agent can already do some heuristics. So that's one thing. But in any case, I'm not sure that the web application can decide whether it wants "source change supported" to be true or false at the time it calls getDisplayMedia.

F

Maybe it will want the actual track, like, "Oh, is it a self-capture? Oh, it's not self-capture," and so on, to actually decide whether it wants to be able to change source. So I wonder whether you had discussions about a more flexible API surface or not, or only about this particular API surface.

A

So, thank you. You've mentioned two things; going now in reverse order. If you want to give the application even more power, to make the decision later, to make an even more informed decision, I'm up for it, and we can discuss exactly how to do that. When you mentioned heuristics, which was the first thing, I would like to push back on that. I think that heuristics are going to serve some applications but not others.

A

That we can discuss. I would argue that, because we don't want to break or even inconvenience any existing applications, we can just default to the existing behavior, where sources are not dynamic. But I'm open to discussion, because the important thing, I think, is to allow the application to tell us what it wants.

D

So, yes, I'm a bit concerned about this API because it would let the application limit the user's choices, and I like the feature in Chrome that lets you switch. I think dynamic switching is a good idea, but it also raises some questions. If the user changes source, is that another opportunity? Will it focus the new source? Will it not focus it?

D

It's not clear to me how that would work. And also, this might be good for Google Meet, to responsibly set this, but this would be for any web application, and they could turn off this feature on users, and that doesn't seem as desirable as having this decision in the user agent. So I tried...

D

Google Docs has the integrated Meet feature now in Chrome, and I tried it, so I'm wondering if this is actually related to that. The problem there seems to be keeping the user from picking another source, which in current Chrome is a problem already. When I start presenting, I actually get the Chrome picker that defaults to this tab, but there are still other options, such as other tabs, and if I pick one of those I get what you mentioned. Why do we click this button?

D

I click this button and Google Meet, Google Docs, now tells me, "Sorry, you can't do that. You have to pick the same tab." So I'm wondering if this is a subcategory: if the problem this is really solving is that we want self-capture here, and we have a specific use case for self-capture, then maybe the user agent should just determine this based on whether this is self-capture or not, because self-capture seems very different from capturing any source.

D

A

D

A

I

think

that

you

should

look

at

it

the

other

way

around

right

now

there

is

no

user

choice.

You

cannot

actually

press

share

this

tab

instead,

so

this

is

a

boolean

and

if

we

look

at

it

as

we

can

look

at

it

as

actually

introducing

new

behavior,

so

we're

not

limiting

the

user,

we're

actually

giving

him

a

new

choice

responsibly.

A

This

would

handle,

and

at

least

with

the

Chrome

implementation

of

this

UX

you

are.

Actually

you

must

be

focused

on

the

new

tab

at

the

moment

where

you

press

this.

So

it's

no

issue,

it

could

be

that

you

are

gonna,

choose

a

different

UX

and

then

maybe

you

will

want

to

standardize

a

bit

more

something

about

what

does

with

focus

and

I'm

sure

that

we'll

be

able

to

find

a

good

compromise

there,

and

the

fourth

thing

that

you

said

was

that

it

seems

to

you

like.

A

This

is

specific

for

self-capture,

and

I

will

admit

that

this

is

very

so

self-capture

is

the

easiest

example

of

when

this

is

interesting,

and

then

I

would

go

back

to

what

Youenn

said.

It's

like

okay,

why

not

use

a

heuristic?

I

would

claim

that

if

you've

got

two

different

applications,

let's

say

that

Google

Docs

wants

one

behavior

and

Microsoft

Office

wants

another.

You

wouldn't

want

different

browsers

to

behave

differently.

You

would,

you

know,

employ

different

heuristics.

You

wouldn't

want

one

application

to.

A

You

know

to

be

ill-served

just

because

the

other

one

was

there

first

or

is

louder

or

whatever.

It

makes

sense.

For

this

to

actually

cater

to

the

specific

application,

I

cannot

come

up

with

a

heuristic

that

would

fit

all

of

them.

Otherwise

we

would

just

hard

code

that

behavior

and

the

last

thing

that

you

said

I

forgot

that

one.

Could

you

remind

me.

D

I'm

not

sure

that

well

I

I

didn't

actually

suggest

that

this

could

be

used

to,

coerce

the

user.

So

I

I

think

my

main

comment

here

is

that

it'd

be

useful

to

see

if

user

agents-

I

oh

you

mentioned

that

this

is

a

way

to

enable

features,

but

I

think

it

would

be

better

to

leave

the

application

out

of

that

decision

and

I

think

it's

fine

neces.

It

doesn't

mean

that

this

seems

early

to

standardize

to

me,

because

this

is

still

a

user

agent

space.

D

A

Oh

okay,

I

will

respond

to

this,

but

I

did

actually

recall

now

the

fifth

thing.

The

fifth

thing

that

you

mentioned

was

navigation

and

you

asked

why

this

is

not

the

same

and

yes

and

by

the

way.

Sorry,

if

I

put

words

in

your

mouth

about

coercing

the

user,

I

thought

that

you

said

something

to

that

effect

in

the

editors

meeting,

but

maybe

I

misremembered

so

you're

saying

it's

good.

That

user

agents

are

experimenting

with

this

and

I

respond

to

that

that

we've

got

implications

even

only

inside

of

Google.

A

We've

already

got

applications

that

are

pulling

in

different

directions,

one

of

them

wants

to

have

share

this

tab

instead

and

one

doesn't

and

the

heuristic

does

not

actually

allow

us

to

give

them

different

behavior,

but

even

if

it

did

what

happens

if

Microsoft

comes

next

and

says

that

the

heuristic

that

we

employ

is

exactly

the

inverse

of

what

they

would

like

to

do.

How

do

we

make

a

fair

decision

there?

A

Is

it

okay?

If

I

also

respond

to

navigation

before

handing

the

mic

back

to

you,

Jan-Ivar?

Sure.

Okay,

so

at

least

for

self

capture.

Navigation

is

interesting

in

that

it

just

stops

the

capture.

So

it's

a

non-issue

there

and

when

it

comes

to

navigating

a

captured

tab,

you

are

correct

that

okay,

so

the

user

can

do

that

and

break

things.

But

there

are

some

problems

that

we

cannot

solve

today.

It

doesn't

mean

that

we

should

not

try

to

solve

other

problems.

So

that

is

my

answer

on

navigation.

F

A

D

A

So

long

as

you

only

change

between

different

tabs,

I

don't

think

that

control

constraints

need

to

change,

and

that

is

our

current

implementation.

Ideally

one

day

we

would

want

to

support.

You

know

changing

between

more

dynamically

between

more

things,

but

that's

you

know

for

the

future,

and

then

we

can

tackle

the

challenges

then,

but

right

now

you

sure

want

that

you

start

sharing

another.

The

most

that

can

happen

is

that

resolution

changes

all

of

a

sudden,

but

that

can

also

happen

if

you

just

resize

the

window

that

contains

the

tab.

D

Right,

so

I'm

not

supportive

of

this,

but

in

the

interest

of

progress,

I'd

like

to

at

least

like

settle

on

the

default

here,

it

seems

like

requiring

applications

to

provide

this

before

you

get

a

source

change

in

one

browser;

it

would

be

better

to

flip

it

and

say

it

sounds

like

the

constraint

here

is

applications

that

don't

want

a

source

change

and

the

default

should

be

beneficial

for

the

user,

so

maybe

like

a

prevent

source

change,

at

least

that's.

A

Okay

for

me,

yes,

if

you

would

like

that,

that's

okay

for

me,

but

just

so

I

could

understand

you're

saying

you're

not

supportive.

Does

it

mean

that

you're

not

supportive

but

you'll

not

block

it

or

are

you

saying

you're

not

supportive,

you're

gonna

block

this,

but

just

in

case

your

mind

is

changed

in

the

future.

Let's

bikeshed

so,

which

interpretation

is

it

well.

D

I'm

not

convinced

that

the

user

agent

couldn't

figure

out

a

heuristic

here

on

its

own,

that

would

work

for

both

Chrome

and

other

and

yeah

and

Microsoft.

For

example.

I

mean

it

seems

to

me

that

the

question

here

is

that

source

change

is

harmful

on

self-capture

because

it

violates

the

assumptions-

and

this

is

much

clearer

once

we

have

getViewportMedia,

because

I

assume

that,

as

currently

written,

this

display

media

constraints

is

also

reused

by

getViewportMedia,

so

I

would

object

to

source

change

on

that.

A

We

can

say

that

this

has

no

effect

on

getViewportMedia

if

you're

concerned

about

that

and

about

Microsoft

versus

Google

et

cetera.

Well,

what

happens

if

we

decide

okay

source

self

capture?

You

don't

get

the

share

this

tab

instead

button

then

Loom

suddenly

comes

over

and

says,

like

hey

users

often

start

recording

the

current

tab,

then

they

switch

and

start

recording

another.

Why

have

you

made

that

impossible?

For

us?

This

is

exactly

what

we're

looking

for.

A

D

F

A

A

E

Yeah,

I

guess

the

time

has

gone.

I

mean

I,

I

think,

I'd

like

to

understand

better

the

relationship.

Indeed

with

self-capture

I

mean

I

take

your

point,

Elad,

that

there

may

be

apps

that

would

need

this

beyond

self-capture,

but

then

maybe

we

can

discuss

a

broader

thing

once

we

have

these

apps

knocking

at

our

door,

but

if

there

is

this

consensus

emerging

around

the

hint,

that

sounds

like

a

pretty

good

approach

to

me.

So.

A

I

have

produced

that.

I

know

that

I

acknowledge

that

it's

a

little

bit

less

convincing

than

self-capture,

but

it

is

what,

if

right

now,

I

capture

slides

in

a

different

tab

and

they

start

remote

controlling

that,

and

then

I

find

out

that

they

cannot

actually

remote

control

other

things

and

let's

say

that

the

entire

experience

is

about

you

know

being

able

to

present

slides

nothing

else.

Right.

Let's

say

it's

a

new

video

conferencing

tool.

That's

built

only

around

that!

It's

not

the

most

convincing

example,

but

it

is

possible.

A

A

E

A

D

So

I

would

flip

it

and

say

that

being

able

to

control

next

previous

slide

and

that

kind

of

stuff,

that's

the

property

of

the

captured

page.

So

I'm

assuming,

if

I

navigate

from

one

page

with

this

ability

to

a

second

one,

with

the

also

with

the

ability

that

would

work

really

well

now

with

capture

handle

right.

So

I

don't

see

a

difference

between

tab,

navigation

and

switching

from

one

presentation

to

another.

So

when

it

works,

it

sounds

like

it's

great

that

it

you

know.

A

It's

a

bit

of

a

question

here

because

let's

say

that

I

capture

Docs

and

now

I

can

scroll

up

and

down

the

doc

from

inside

of

Meet

and

suddenly

I

think,

like

hey,

that's

a

general

ability,

that's

unrelated

to

like

me

as

a

user.

I

don't

really

know

why

it

works

right

and

then

I

share

this

tab

instead

Wikipedia

and

it

stops

working

now.

A

Yeah

I

acknowledge

again.

This

is

mostly

interesting

for

self-capture,

but

I

think

that

it

is

enough

if

we've

got

even

only

two

applications

that

engage

in

self-capture.

One

of

them

wants

it,

the

other

one

doesn't.

If

we

cannot

have

a

heuristic

that

keeps

both

of

them

happy,

then

this

hint

is

going

to

make

them

happy.

F

A

I

would

be

I'm

gonna

be

very

convinced

by

this

argument

once

getViewportMedia

is

implemented

and

in

all

browsers

and

adopted

by

web

developers,

because

at

the

moment-

and

this

has

been

reiterated

for

the

last

year

and

a

half

while

we've

been

talking

about-

getViewportMedia,

we

don't

even

know

of

applications

that

can

actually

that

so

getViewportMedia

has

two

requirements.

One

of

them

is

not

even

finalized.

Mozilla

is

actually

everybody

in

was

look.

A

Some

people

in

Mozilla

other

than

Jan-Ivar

have

not

actually

consented

to

that

mechanism

being