
From YouTube: AI features UX maturity requirements (2023-05-24)

Description

Walkthrough of the UX maturity requirements of AI-assisted features at GitLab, to help teams understand, from a UX perspective, when it's OK to move a feature from Experiment to Beta, and from Beta to Generally Available (GA).

Presented by Pedro Moreira da Silva, UX functional lead of the AI Integration Working Group, and Staff Product Designer at GitLab.

Slides: https://docs.google.com/presentation/d/1zIsNFJHgLc5Wex6H26bVcyVieqFlXSEJIVTHWG3IIvo/edit?usp=sharing

The Experiment, Beta, and GA docs are ambiguous about the UX maturity of features, for example in Beta, as we see here: what does "UX complete or near completion" mean? So how might we help teams more clearly know when it's OK to move from Experiment to GA? The following criteria and requirements focus on the UX aspect of the maturity of AI-assisted features. Other aspects, like stability or documentation, must also be taken into account so that we can determine the appropriate feature maturity.

So, in the AI integration effort, teams are encouraged to prototype and ship experiments fast so that they can assess the viability and also the potential value, and then teams work to mature them to Beta and GA. As you might have noticed, this is slightly different from what we normally do. Usually, features are shipped straight to GA, with no Experiment or Beta phase. This is in part because the product development flow does not account for features maturing through these phases.

This is specific to AI-assisted features because this is a new and slightly different way of working, so we don't want to apply the UX requirements that I'm going to share with you to all features and to all of the product development that we do on non-AI features. We want to do that with appropriate diligence, so maybe in the future we can take inspiration from these requirements and integrate feature maturity into the product development flow.

You can learn more in the frequently asked questions linked from this slide. To evaluate the UX maturity of AI-assisted features, we use three criteria from the product development flow: from the validation track, problem validation (how well do we understand the problem?) and solution validation (how usable is the solution?); and then, from the build track, the improve phase (how successful is the solution?). We also use a fourth criterion related to design standards: how compliant is the solution with our design standards?


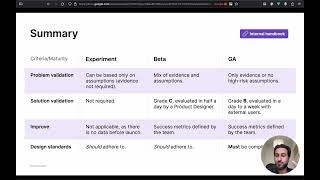

So, let's take a look at a summary of how these criteria map to the requirements. This is a very brief summary, and I invite you to check the complete requirements in the internal handbook section linked from this slide. There you can find each criterion explained in full, with examples of how this can work in practice, and also frequently asked questions.

And then, evidence is credible information that the team relies on for its actions. For example, evidence can be feedback from users, an issue with user engagement confirming that the problem exists, or even a series of documented qualitative research from customer interviews. All of that can be evidence.

Solution validation, as you can see here, is about how usable the solution is. For experiments, it's not required to do any solution validation. To get to Beta, we require a grade C, evaluated in half a day by a product designer. And then for GA, we require at least a grade B, which would be evaluated with external users. I'll get into this more in the next slide.

Then the third row, improve, which is in the build track, is about when the feature has launched. As we see here, for Experiment this is not applicable, as there is no data before launch. For Beta, the success metrics are defined by the team, and the same goes for GA: success metrics are defined by the team. So, how successful is the solution?

To answer how compliant the solution is with our design standards, teams evaluate the solution's compliance with our Pajamas guidelines and also with the design and UI changes checklist. In an Experiment, we encourage teams to adhere to these design standards, so the solution should adhere to them. For Beta, it's the same thing. And then for GA, the solution must be compliant. Sorry, my face is in front of this, but the slide says "must be compliant" for GA.

So, as you can see in the first column, for Experiment, the minimum requirements to launch an experiment are almost none. That's intentional, to balance experimentation and velocity with quality as experiments mature. The caveat here, if you take all of this into account, is that the minimum requirements for Beta and GA are slightly more demanding, and teams will need to involve UX if they want to mature their features. So this actually incentivizes teams to involve UX early, if they can, so that they can mature features quickly and avoid potential rework costs.

Now, let's dig into solution validation, as we got many different questions about how much effort teams would need to put into this. So again: how usable is the solution? For experiments, it's not required. You can also see, in the rows below, the value that solution validation brings, its rigor, and the effort that teams must put into it.

If you then look at Beta and GA, what we basically try to do here is strike a balance between velocity and quality, increasing the rigor of the UX evaluation as feature maturity increases, and also using existing UX methods and benchmarks that teams are familiar with. We're not reinventing the wheel, just using boring solutions: things that we already do.

So the method for Beta, as we see here, is a UX scorecard with a heuristic evaluation, and for GA it's a UX scorecard with usability testing and a heuristic evaluation. This is something that we already do often: we periodically do UX scorecards as part of our regular product development. It's not something new, and product designers already have experience with them. So what teams do is grade the experience using the UX scorecard process, which has two possible approaches, one for Beta and one for GA.

As for the rigor and the effort for GA, this is something that can be done in a day to a week, depending on the complexity of the feature and its setup, and also on recruiting participants and grading the experience.

A product designer observes representative users completing a set of tasks, and here, at a minimum, we should be testing the feature with five external users.
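To make the gates described in this walkthrough easier to scan, here is a minimal Python sketch, not an official GitLab tool. It encodes only the UX requirements stated in this talk (solution validation, improve, and design standards) and leaves out problem validation, whose per-phase requirements live in the internal handbook. The names `UxEvidence`, `grade_at_least`, and `unmet_ux_requirements` are hypothetical, and the A-to-F scorecard grade ordering (A best) is an assumption.

```python
from dataclasses import dataclass
from typing import List, Optional

# Assumption: UX scorecard grades ordered best to worst (hypothetical encoding).
GRADE_ORDER = ["A", "B", "C", "D", "F"]


@dataclass
class UxEvidence:
    """Hypothetical record of a feature's UX evaluation status."""
    scorecard_grade: Optional[str]  # None if no UX scorecard was done yet
    external_test_users: int        # external users in usability testing
    success_metrics_defined: bool   # improve phase: metrics defined by the team
    design_compliant: bool          # Pajamas guidelines + design and UI changes checklist


def grade_at_least(grade: Optional[str], minimum: str) -> bool:
    """Return True if `grade` is equal to or better than `minimum`."""
    if grade is None:
        return False
    return GRADE_ORDER.index(grade) <= GRADE_ORDER.index(minimum)


def unmet_ux_requirements(target: str, ev: UxEvidence) -> List[str]:
    """List the UX requirements from the talk that are not yet met for `target`."""
    unmet: List[str] = []
    if target == "experiment":
        # Almost no minimum UX requirements; design standards are only encouraged.
        return unmet
    if target == "beta":
        if not grade_at_least(ev.scorecard_grade, "C"):
            unmet.append("UX scorecard (heuristic evaluation) grade C or better")
        if not ev.success_metrics_defined:
            unmet.append("success metrics defined by the team")
    elif target == "ga":
        if not grade_at_least(ev.scorecard_grade, "B"):
            unmet.append("UX scorecard grade B or better")
        if ev.external_test_users < 5:
            unmet.append("usability testing with at least 5 external users")
        if not ev.success_metrics_defined:
            unmet.append("success metrics defined by the team")
        if not ev.design_compliant:
            unmet.append("compliance with design standards")
    else:
        raise ValueError(f"unknown maturity level: {target}")
    return unmet


# Usage: a feature graded C by a product designer, with no external testing yet.
ev = UxEvidence(scorecard_grade="C", external_test_users=0,
                success_metrics_defined=True, design_compliant=False)
print(unmet_ux_requirements("beta", ev))      # []
print(len(unmet_ux_requirements("ga", ev)))   # 3
```

A checklist like this only mirrors the summary table; the handbook remains the source of truth for the full criteria.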

So again, please check the complete requirements in the internal handbook section linked from this slide. You can find each criterion expanded in full, with examples of how this can work in practice, and also frequently asked questions. If you have more questions, reach out to the working group in the Slack channel mentioned here.

Thank you so much for your time, and I'll see you around. Bye-bye.