From YouTube: IETF101-BMWG-20180320-0930

Description

BMWG meeting session at IETF101

2018/03/20 0930

https://datatracker.ietf.org/meeting/101/proceedings/

B

A

A

So, we're going to get going now, folks. Good morning. This is the Benchmarking Methodology group and I'm Al Morton. Thank you for working so hard to find this location. It's one of the more difficult rooms to find in the London Metropole. I hope you all brought your coats, because occasionally it gets really cold in these dungeon rooms.

A

All right, so, as I mentioned, this is the Etherpad, where we're going to collectively create our meeting minutes. I've got two folks, Pierre and Carsten, who are going to be primarily in charge of that, but anyone who raises a question at the microphone and would like to be sure that their name is spelled correctly, and that the phrasing of their question is exactly what they want, feel free to type it on the Etherpad.

A

Thank you. Also, it helps, and actually what I'll do right up here at the top is say that Al is this purple color. So if I type anything in, that way folks know what I have pasted. Other folks can do that as well. So there's Pierre going in. See, this works just like magic. Great, and here's Carsten. Fantastic.

A

All right, so here we go. We also have currently seven participants on Meetecho, and they can see the slides, but they will only be able to hear you if you speak directly into a microphone. So watch that when we're conversing in general in the room, to make it more possible for these folks to participate.

A

All right, so this is our meeting at IETF 101. As I said, I'm Al Morton. Sarah Banks is intending to join us remotely today. She was unable to travel here, but we will see her, I suppose, shortly, and you'll certainly hear her participating as we go. I have the suggestion to move close to the front because we're usually a small crowd, but feel free to sit wherever you want.

A

A

Certain hallway conversations are also covered by our IPR policy. What we ask is that you disclose any IPR in connection with those comments in a timely fashion. And actually, the truth is that now everyone who's registered has read this, because you have to click that you acknowledge it when you register for the IETF. So this is mostly a reminder, and if you're unfamiliar with any of these Best Current Practice documents, then that would be a good thing to take a look at as well.

A

A

I'll be working soon. All right, Warren Kumari, our AD advisor, area director advisor, is sitting up front here, and he's playing both the important role of AD advisor and Jabber scribe. Thank you. So I've just mentioned the intellectual property rights statement and the blue sheets, where we all sign in on attendance. That's all moving around. Oh, and that's got to stop.

A

B

A

So here's our room, here's our planned agenda. We're going to do the working group status and the charter and the milestones briefly. Hopefully, assuming Sarah joins us, we'll have a quick status of the SDN controller benchmark drafts. If not, I can provide that. And then Sudhin Jacob is with us, and he's going to be presenting the EVPN and PBB-EVPN draft updates. After that, we have a whole list of continuing proposals here, for some of which the authors may not join us.

A

The last two items are working group discussion. We have the ETSI NFV liaison on NFV benchmarking, a normative specification. We're going to take a look at that, and our last item is to sort of close on the topic of rechartering the working group. We've circulated charter text. We've circulated charter milestones. Let's finish that up and move it on to the next stage of approval.

A

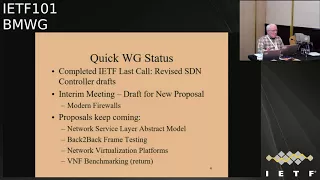

Workgroup, we got quite a few comments during last call, and the authors have revised those drafts. They are now on the agenda for the first week of April, I believe, IESG meeting. So that means we'll probably get some more comments from ADs shortly, and hopefully that will all go smoothly and we'll get those approved in short order.

A

So we had an interim meeting back in February, the last week of February, I believe, or actually the first day of March; I think it was March first. That's where we really saw in some detail the new proposal for benchmarking modern firewalls. We had a real chance to go through that specification there and had some good comments on it. There have been updates, so we'll be talking about that again today. And then our status is really that the proposals keep coming, as you saw on the agenda.

A

All of these are now active proposals, so we will consider those, and milestones for them, as we consider rechartering. Any questions on the working group status, at least this part of it? This is sort of part one. We have no new RFCs this time, but that's not bad, because at the last meeting we announced about six, so we've been making really good progress.

A

We'll update the charter as soon as we can, and we do have this nice supplementary working group page, which has been moved now to one of Sarah's administered websites. So we have that available: if you want a quick heads-up or a getting-started guide to joining the working group, this is the place to find it. I wrote this up some time ago and it's still fairly valid.

A

All the links are good. Well, so let me ask the question: who is new to the Benchmarking Methodology Working Group, who's attending our session for the first time? Everyone's a repeater? That's great, very good! All right, well, welcome back then, everyone. So I think that concludes the working group status, except for the milestones, and here's our status on milestones: basically they're all done, which is why we're rechartering.

A

All right, so we always have this quick discussion about a standard paragraph which I developed some time ago and like to see incorporated in our drafts. The reason for that is this: we have a scope which is laboratory testing only, in an isolated test environment, and for years folks in the Security Directorate or other directorates would look at what we were doing and just absolutely flip out: "You can't send traffic like this over the network." They had never read our charter. The scope of our charter, lab-only testing, was, you know, not generally included in the drafts, because we all knew what we were talking about. And so we sort of jointly developed this paragraph for the introduction and/or security considerations sections, and you're welcome to modify this or to use it wholesale if it applies to your work. We've effectively reduced the amount of difficulties that the directorate reviewers who've never been in the room with us have with this kind of thing. So just a word to the wise that that is available.

A

A

We don't have Sarah, and we do have Bala now. That's good. Okay, so I can sort of see who's joining us, and so can you, in fact. So, great. So we don't have Sarah. We don't have Bhuvan, who generally speaks about the SDN controller performance, but I've already given a brief status on that.

A

D

A

D

So all these sections are taken care of, and we have modified the draft based on all the comments. These are the highlights of the comments, and all the sub-comments we have incorporated in the draft. So then, moving to the next slide. Yep, we're there. Yeah, perfect, thank you. So these are all the high-level benchmarking parameters we have defined for these services, that is: MAC learning, MAC flush, MAC aging, high availability, ARP and ND scaling, scale, convergence and soak. So it is based on eight parameters.

D

Moved to slide four, Sudhin. Oh, thank you, Al. So special thanks to Sarah for guiding us; she was very helpful in giving the comments and the total outlook of the draft. And thank you all for the valuable feedback given at IETF 99 and in the offline comments. We really value that and really appreciate that, and thanks, Al, for the support.

D

A

Good, well then, thanks for updating the drafts again this time, Sudhin. And, you know, I think your last slide basically says next steps: request for adoption. So I think we're basically three-quarters of the way toward adopting this draft. I mean, on the summary of proposals I've already got this one marked green and I.

A

A

A

This item number five is one that we don't have a presentation for this time, but I'm going to give a quick status. So this is NSLAM, the Network Service Layer Abstract Model. We had a presentation on this remotely in the Singapore meeting, IETF 100.

A

So what we've challenged the author to do is to make this modeling effort more specific to benchmarking, and that is something he's working on. He corresponded with me by email, basically to say that the work's in progress and he's expecting to complete the draft this month with this benchmarking example built in, and so we're looking forward to that. But at the moment we're kind of on hold waiting for the update, so just so I'm.

A

E

A

A

It's defined in RFC 1242 as the longest burst of frames that a device under test can process without loss, so tests of this parameter are intended to determine the extent of data buffering in the device. Now, once we learned that intent and also the test that was involved, we've got.

A

With somewhat further investigation, we found ways in which we could actually improve sort of getting at this quoted sentence, and I'll describe that briefly. But one other thing that we noticed is that in RFC 2544 there's an extremely concise wording of the objective, a very concise procedure and very concise reporting, and by concise I mean brief. Perhaps at some point it would be worthwhile to add some additional detail.

A

Almost able to accommodate the frame rate at the given frame size chosen, then you would see basically no buffering in the device, or no ability for a burst of packets to exceed the buffer size, because packet header processing was fast enough that the packets were moving through. There may have been some buffer developed there, but it simply was not possible to overflow the buffers on a regular basis. Every once in a while you might see something. That was generally the cause of inconsistency.

A

So some of the test equipment reported burst lengths which were extremely long, unexpectedly long, but that turned out to be just a limit of the test equipment. It would send a 30-second burst of packets and they would all pass through, because they were at a fairly large frame size and therefore a low packet header rate, and the header handling rate of the device under test was sufficient to handle those packets. So you basically couldn't create a loss in the device under test.

A

A

We've got different packet sizes here: 64 bytes, 128, 256, up to 1518, and the most important bar here is the green bar, which is the maximum theoretical frame rate for the device under test with its interfaces and so forth. We had a test with a couple of different vSwitches. The OVS vSwitch is in blue and the VPP vSwitch, Vector Packet Processing, is in red, and we see throughput in frames per second; that's the y-axis here. With 64-byte frames, we could not attain the maximum throughput.
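The "maximum theoretical frame rate" shown as the green bar can be reproduced with simple arithmetic. The following is only a rough sketch: the talk does not state the link speed, so the 10 Gbit/s value is a hypothetical choice, and the 20 bytes of per-frame wire overhead (preamble, start-of-frame delimiter and inter-frame gap) is the usual Ethernet assumption rather than something quoted from the slides.

```python
# Assumed per-frame overhead on the wire, not stated in the talk:
# 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap.
WIRE_OVERHEAD_BYTES = 20


def max_theoretical_frame_rate(link_bps: float, frame_size_bytes: int) -> float:
    """Frames per second when frames of this size are sent back to back."""
    bits_per_frame_slot = (frame_size_bytes + WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_per_frame_slot


LINK_BPS = 10e9  # hypothetical 10 Gbit/s interface

# Frame sizes mentioned on the slide.
for size in (64, 128, 256, 1518):
    rate = max_theoretical_frame_rate(LINK_BPS, size)
    print(f"{size:>5} bytes: {rate:,.0f} frames/s")
```

Small frames give the highest frame rate, which is why the 64-byte case is the one where the device's header processing is most likely to fall short of the theoretical maximum.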

A

That's not all that surprising. It's very common with the smallest frame sizes. And so at this frame size we were able to very accurately estimate the buffer size, because here the packet header processing rate was less than the theoretical maximum throughput of back-to-back frames, and so we always saw some burst length which had some loss in it. Now here, with the 128-byte frames, we're kind of right on the cusp of the maximum theoretical. So here we saw some variation, but that's completely explainable.

A

Sometimes it would handle the burst. Sometimes it wouldn't. There would be some variation in the results. By the time we get to 256-byte frames, the throughput is equal to the maximum theoretical. So now the frame processing rate of the device under test is preventing the buffers from growing. So this is the effect that we felt was really important to be able to handle, and actually to cover as part of the test procedure. So what we're recommending, then, is this prerequisite test of the RFC 2544 throughput.

A

A

The trial requires sending a burst and counting the forwarded frames to be sure that none of them have been lost, and we're seeking the longest burst length with zero loss. We've got a test. The test outcome is the burst length after the searching: you have found the longest burst that you can send without loss. Then we repeat the test N times with the searching, and the burst lengths with zero loss are subsequently averaged, and the average length is the benchmark over the N tests.
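The repeat-and-average step described here could be sketched roughly as follows. This is not code from the draft: `search_once` is a hypothetical callable standing in for one complete search that returns the longest zero-loss burst length it found, and the three canned results in the demo are made-up placeholders, not measurements.

```python
from statistics import mean
from typing import Callable, List


def back_to_back_benchmark(search_once: Callable[[], int], n_tests: int) -> float:
    """Run the burst-length search n_tests times and average the results.

    search_once is a hypothetical stand-in for one full search; it returns
    the longest burst length (in frames) observed with zero loss.
    """
    if n_tests < 1:
        raise ValueError("n_tests must be at least 1")
    lengths: List[int] = [search_once() for _ in range(n_tests)]
    return mean(lengths)


# Demo with canned, made-up search results instead of real test equipment.
canned_results = iter([26000, 26500, 25500])
benchmark = back_to_back_benchmark(lambda: next(canned_results), n_tests=3)
print(f"average zero-loss burst length: {benchmark} frames")
```

Keeping the search itself behind a callable means the averaging logic stays the same whichever search algorithm the working group settles on.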

A

So, well, let me talk about the updates now. We've got a little bit of background here. We've basically clarified the text describing what is measured when we report this length and the corrected burst length. I'll talk more about that in a minute, but let's just stare at this for a moment: knowledge of the approximate buffer storage size, in time or bytes, may be useful to estimate whether frame losses will occur if device under test forwarding is temporarily suspended in a production environment due to an unexpected interruption of frame processing. And then this parenthetical: an interruption of duration greater than the estimated buffer would certainly cause lost frames. Now, you can't really estimate the minimum, in practice, the guaranteed number of frames that could be accommodated during a short interruption of forwarding. You can't really guarantee that, because you really don't know the state of the buffers when forwarding is interrupted, and the truth is that all the buffers could be almost full when forwarding is interrupted briefly, and that would cause the additional burst, or the short interruption time, to be zero.

A

A

There's lots of detail in terms of background in these two references. What we've basically got here is a presentation from last summer at the Open Platform for NFV (OPNFV) Summit, and that's where some of these tests were analyzed in detail. We've also got a wiki that supported that testing, which explains the calculations in even more detail than the slides in this presentation.

A

So I think, for the sake of time, I'll let you read the draft and go over the calculations yourselves, but effectively, with this, when you have the implied buffer time, it's the average number of back-to-back frames that you can send in a burst, divided by the maximum theoretical frame rate for the interfaces that you're using.

A

So that's the implied buffer time: the length of the burst divided by the rate at which the burst is sent. But when we want to correct that, what we need to recognize is that with packet header processing in progress, many of the packets that have been sent into the device under test have been processed and passed out of the device by the time the burst eventually causes a loss.
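As a rough illustration of the two quantities just described, the sketch below computes the implied buffer time exactly as stated (average burst length divided by maximum theoretical frame rate). The correction is only one plausible reading of the spoken description, under the assumption that the device drains frames at its measured throughput for the whole duration of the burst; the draft itself should be consulted for the actual formula. All numeric inputs are hypothetical.

```python
def implied_buffer_time(avg_b2b_frames: float, max_theoretical_fps: float) -> float:
    """Seconds of buffering implied by the average zero-loss burst length."""
    return avg_b2b_frames / max_theoretical_fps


def corrected_buffer_time(implied_s: float,
                          measured_throughput_fps: float,
                          max_theoretical_fps: float) -> float:
    """Discount the frames already forwarded while the burst was arriving.

    Assumption (not quoted from the draft): the device forwards at its
    measured throughput during the burst, so only the fraction
    (1 - throughput / max rate) of the burst actually occupies the buffer.
    """
    return implied_s * (1.0 - measured_throughput_fps / max_theoretical_fps)


# Hypothetical inputs, for illustration only.
avg_burst = 26_000        # frames, averaged over repeated searches
max_fps = 14_880_952.0    # assumed 10 Gbit/s link, 64-byte frames
throughput = 12_000_000.0 # assumed prerequisite throughput result

implied = implied_buffer_time(avg_burst, max_fps)
corrected = corrected_buffer_time(implied, throughput, max_fps)
print(f"implied: {implied * 1e3:.3f} ms, corrected: {corrected * 1e3:.3f} ms")
```

Under this reading, when the measured throughput equals the maximum theoretical rate the corrected time goes to zero, which matches the earlier observation that the buffers cannot be made to grow at the larger frame sizes.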

A

A

Should we... My guess is that we should always start from a burst length which we're fairly sure that the device under test can accommodate, and that way that burst will show zero loss, and then we can begin to step up in a linear fashion to reach the burst size which produces a loss. So that's my thought on one search algorithm, but there could be others, so I'm.
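The linear step-up search suggested here might look something like the sketch below. It is an illustration only: `send_burst` is a hypothetical callable that sends a burst of the given length and returns the number of frames lost, and the starting length, step and upper limit are arbitrary placeholders. The simulated device simply drops whatever exceeds a fixed capacity.

```python
from typing import Callable, Optional


def linear_burst_search(send_burst: Callable[[int], int],
                        start_length: int,
                        step: int,
                        max_length: int) -> Optional[int]:
    """Step the burst length up linearly until a trial shows loss.

    send_burst(length) is a hypothetical hook returning frames lost.
    Returns the longest burst length seen with zero loss, or None if even
    the starting length produced loss.
    """
    longest_ok: Optional[int] = None
    length = start_length
    while length <= max_length:
        if send_burst(length) > 0:
            break  # first lossy burst ends the search
        longest_ok = length
        length += step
    return longest_ok


# Simulated device: any frames beyond a made-up capacity are lost.
def fake_dut(length: int, capacity: int = 26_300) -> int:
    return max(0, length - capacity)


result = linear_burst_search(fake_dut, start_length=20_000, step=500,
                             max_length=100_000)
print(f"longest zero-loss burst found: {result} frames")
```

The step size bounds the resolution of the answer, so a coarse pass followed by a finer pass around the first lossy length would be one natural refinement.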

A

And well then, let's consider this other one, because it's also related to searching: should the search include trial repetition whenever a frame loss is observed, to potentially avoid the effects of background loss? With virtualized devices under test, virtualized switches and so forth, every once in a while you have this unexpected mode of operation, where some extremely important background process has to be handled, like a file system flush or something that's completely non-maskable, and you have to have it, but it could.

A

H

I

Carsten Rossenhövel. So I would like to pose the question the other way around: how do you get to a realistic measurement result if you're only testing at a given time? Because, you know, these kinds of things that happen from time to time in virtualized systems, we see them as well, but sometimes they happen only once an hour. And I'm not sure what the intention of this methodology is: should it actually yield the optimum result, or a realistic result, or the minimum result?

A

Well, if the phenomenon that causes the occasional once-an-hour loss is not the phenomenon we're trying to measure, the capacity of the buffers in the device, then it would be really good if we could somehow separate those two effects. One way to do that is to perform long-term testing, let's say at the throughput level, and to look at the results every five minutes during the hour, and then to find out how often some background process causes loss and influences.

H

A

A

Would not necessarily be running, or something, you know; I mean truly unnecessary background processes. I think that would be a legitimate thing to do. But then some of these other things, just like the file system flush, have to be lived with. So then your point is: let's encourage those to happen before the test.

H

E

A

I think that the draft currently encourages people, in general, to operate this test under circumstances that appear to be, you know, the kind of test environment we've been able to count on in the physical world, where, basically, if you see unexpected loss, you troubleshoot your environment and try to scare that stuff out. I agree it's going to be much more difficult to do that here now, but I think we should still encourage that. And so anyway, I think that's, I mean, I.

A

Okay, well, let's see. So as far as next steps go, I'd like folks to take a look at this. It's not that long a draft. We could create a milestone for this work; that's actually part of the proposal for the rechartering. We're hoping, at least I'm hoping, for working group adoption for this draft. We can't ask anything about that today, because, who's read the draft, by the way? Anybody? No? Okay, so we can't ask that question today, but I really encourage folks to read it and, well.

A

We could still propose a milestone if folks are interested in this topic, and this is one of the ones where I think we could probably really learn a lot about the environment that we're focusing on, this virtualized testing environment, through benchmarking like this. So I guess that's it. Any further questions or comments on this topic? No? Okay, well, good! Thanks for your attention, and we'll move on to the next thing.

A

A

H

Okay, so actually my colleague Bala Balarajah has done most of the work, but he can't be here today because of another project, so I'm the co-author. We've worked together with a group of people called the NetSecOPEN group to create this next-gen firewall performance benchmarking methodology document. Has anybody attended the interim meeting two weeks ago? Okay, cool, thanks. So I don't want to spend too much time, but there's some background: this group has been formed and it's outside the IETF. What we decided is that we would really like to contribute our test plans to the IETF BMWG. So the idea is to have a new benchmarking methodology and terminology for next-gen security devices: firewalls, but not only firewalls. Basically, all of the network security solutions out there today, like IDS (intrusion detection), unified threat management, web application firewalls and all sorts of other beasts.

H

And, of course, there is a pre-existing RFC for firewall benchmarking, but that's about ten years old now, and we really want to strongly improve the applicability for today's solutions. We also want to improve the reproducibility and transparency of this kind of test, because the old RFC, like they used to do, provided some guidelines. The question is, do people interpret these guidelines in the same way, and would two labs come to the same conclusion?

H

So one of the ideas and requirements for our work is also that multiple labs come to the same conclusion and, as a footnote, in the end we want to create a certification program about this. So just a quick walk-through of the draft. Currently we are at version 02; version 03 is ready, but I didn't want to upload it at the last minute, so it will be uploaded after this meeting. So, basically: introduction, scope and so on, like normal. There is a pretty extensive section on the test setup: testbed configuration, how to make sure the testbeds are all configured in the same way, some guidelines for the DUT configuration, participant configuration as well, and then a section called testbed considerations: how to make sure that things become reproducible, that everybody has the same kind of testbed setup. Section six is reporting guidelines, basically definitions of the key performance indicators, and we found that even basic things like, I don't know, throughput or something like that.

H

H

There will probably be, a few years from now, no unencrypted web traffic anymore, so firewalls need to be tested specifically with SSL/TLS and HTTPS in mind. So the test setup is kind of as expected: there is a solution under test or device under test in the middle. There can be some aggregation switches or aggregation routers if the test equipment needs them, and the test equipment is expected to emulate clients and servers on both sides, and routers, if needed from the configuration perspective of the DUT.

H

So far, no surprises. The surprises come, rather, when we talk about what we actually need to test and what's actually in scope. Because of the wide variety of different solutions that exist out there, there is no single set of test methodology that can be applied to everybody anymore, and also the number of tests required to validate more advanced solutions is growing explosively.

H

So we looked at what kind of test areas there could be: on the left-hand side, basic things like web filtering, antivirus, SSL inspection. Actually, those aren't the basic things; it's already starting with more advanced things. And we looked at, you know, what can we actually provide? Of course, that would become a very long document in the end, so we said we're going to focus on a certain subset of test methodology for now that we can actually manage. And of course any contributions are welcome, both in this leftmost column area, which is the initial scope from the point of view of the current authors, and also in the future scope. As much as we can get done, of course, we'd be happy, but we wanted to confine our scope to things that we can do ourselves with the existing set of contributors. So next-gen firewall future scope would be web filtering, DDoS (denial of service), certificate validation, and these are all functions that are implemented today, but they are not implemented by everybody, and so we thought we'd focus on that in the future. And then there are all of these other security functions which we haven't started discussing: next-gen intrusion prevention systems, web application firewalls, breach prevention systems, SSL brokers, and I forgot again what AD was. So there are lots of different functions, and the matrix is still open and ready to be filled as soon as we can get some contributors for that.

H

So the KPI definitions that we have so far are on some basic things, like on the TCP and on the HTTP layer, and things like time to last byte and time to first byte. And actually, it turns out people have a good understanding of time to first byte, but we already started having some discussions about time to last byte, and that's just one example. Time to last byte, just to give you some insight, means: how long does it take to deliver the whole HTTP response, all of the content, back? In most cases people have only looked at how long it takes for the first byte to arrive, and then the assumption was, well, the rest will just trickle through in some way. But I think that's not what we see all the time, and so time to last byte is also interesting, but then discussions come in.

H

You know, this depends on the size of the request and response, and the vendors always like to create responses that are only one byte large, because that improves the performance of the device, and of course the operators want to have realistic sizes, and the test labs sometimes want to have very large response sizes. So these are the kind of discussions we've been having in the last few months. We basically started discussing this around a year ago, and we started the detailed test plan discussions, Brian would know better, he is listening, I think six, seven, eight months ago. So I'd like to just walk you through one of the test cases as an example. This is 7.1, throughput performance with the traffic mix. The first question is: what do we want to do here? We just want to determine the average throughput performance of the next-gen firewall, and that test case has already been in RFC 3511. So what's the difference here?

H

First,

we

define

a

specific

application

traffic

mix,

so

it's

not

a

uniform

stream

of

frames

or

packets,

that's

coming

in

with

arbitrary

content

or

no

content.

In

fact,

these

are

all

HTTP

HTTP

requests

which

follow

a

certain

distribution

of

URLs

of

certificates

and

so

on,

and

that

has

been

well-defined.

Then

there

are

a

couple

of

variable

test

parameters

that

the

user

can

choose

like

what

how

many

clients

they

want

to

have

how

many

servers

they

want

to

have.

What's

the

traffic

distribution

between

v4

and

v6

I

think

defining

something

statically.

H

It

would

be

unwise

because

v6

traffic

is

growing

over

the

years

and

we'd

have

to

adopt

to

the

reality

and

also

the

initial

end

target

throughput.

So

let's

say

we

start

with

10

percent

of

line

rate

and

then

with

the

target

throughput

would

be

hundred

percent

line

rate

and

we

need

to

see

what

is

the

actual

performance

of

the

solution,

and

so

that

requires

some

binary

search

in

that

case.

So

we

start

in

the

test

procedure

to

run

with

10

percent,

for

example,

then

go

to

100

percent

and

then

go

through

a

binary

search.
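The procedure just described (one run at the initial rate, one at the target rate, then a binary search between them) might be sketched roughly as follows. The function name `search_throughput`, the 1 percent precision default, and the `simulated_device` stand-in are illustrative assumptions, not text from the draft; a real run would replace the callback with an actual test iteration against the device.

```python
from typing import Callable, Tuple


def search_throughput(
    run_passes: Callable[[float], bool],
    initial_pct: float = 10.0,
    target_pct: float = 100.0,
    precision_pct: float = 1.0,
) -> Tuple[float, int]:
    """Return (highest passing rate in % of line rate, number of runs)."""
    runs = 1
    if not run_passes(initial_pct):
        return 0.0, runs  # even the initial rate fails the criteria
    runs += 1
    if run_passes(target_pct):
        return target_pct, runs  # device sustains the target: two runs only
    low, high = initial_pct, target_pct  # low passed, high failed
    while high - low > precision_pct:
        mid = (low + high) / 2.0
        runs += 1
        if run_passes(mid):
            low = mid
        else:
            high = mid
    return low, runs


def simulated_device(rate_pct: float) -> bool:
    # Hypothetical device that meets the criteria up to 62.5% of line rate.
    return rate_pct <= 62.5


rate, runs = search_throughput(simulated_device)
print(f"{rate:.2f}% of line rate after {runs} runs")
```

With a 10 to 100 percent range and 1 percent precision, the interval has to be halved seven times, so a device that fails at the target rate needs seven search runs on top of the two bracketing runs.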

H

H

So

that's

why

we

want

to

limit

the

TTLB

deviation,

so

they

say

the

difference

between

the

maximum

and

the

minimum

time

to

last

byte

shall

not

exceed

a

certain

threshold

and,

in

the

same

way,

the

maximum

TCP

connect

time

is

controlled

in

this

test

case.

Let's

say

the

device

gets

loaded

and

then

it

takes

more

and

more

and

more

time

to

actually

start

responding

in

any

way.

So

it

takes

time

to

connect

new

TCP

sessions,

which

is

sometimes

a

way

for

a

device

to

manage

very

high

load.
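The two checks mentioned here, a bound on the spread between the maximum and minimum time to last byte and a bound on the maximum TCP connect time, could be expressed along these lines. This is only a sketch: the function name, the millisecond units, and the threshold values in the example are assumptions, since the discussion leaves the thresholds as "a certain threshold".

```python
from typing import Sequence


def run_is_acceptable(
    ttlb_ms: Sequence[float],
    tcp_connect_ms: Sequence[float],
    max_ttlb_spread_ms: float,
    max_tcp_connect_ms: float,
) -> bool:
    """Check one test run against the two latency-based criteria."""
    # Spread between the slowest and fastest time to last byte.
    ttlb_spread = max(ttlb_ms) - min(ttlb_ms)
    # Slowest TCP connection setup observed during the run.
    worst_connect = max(tcp_connect_ms)
    return ttlb_spread <= max_ttlb_spread_ms and worst_connect <= max_tcp_connect_ms


# Hypothetical measurements and thresholds, for illustration only.
ok = run_is_acceptable(
    ttlb_ms=[12.0, 15.5, 14.1],
    tcp_connect_ms=[1.2, 0.9, 3.4],
    max_ttlb_spread_ms=5.0,
    max_tcp_connect_ms=10.0,
)
print(ok)
```

A check like this can serve as the pass or fail decision for each step of the throughput search, so that a rate only counts as sustained if the device is still responding promptly.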

H

A

But

when

we

say

binary

search

do

we

all

know

what

we

mean

when

we

say

that?

Do

we

all

know

that

we're

starting

high

or

starting

low

do

we

all

know

that

there's

a

step

size

involved,

no

matter

how

you

search,

I'm,

I'm

thinking

that

there's

I

see

were

nodding.

I

see,

I,

see

a

possibility

for

us

in

one

of

our

works

to

define

this

with

a

little

more

specificity.

Do

you

have

any

thoughts

on

that

yeah.

H

G

A

And

then

I'm

sort

of

thinking

that

this,

this

repetition

of

checking

certain

results

that

that

would

be

important

to

have

that

if

we,

if

we

agree

that

that's

a

valuable

thing

at

some

point,

that

that

we,

it

would

be

easy

to

see

how

we

would

build

that

into

binary

searches

and

linear

searches

and

so

forth.

If

we

have

the,

if

we

have

the

good

degree

of

specificity,

what.

H

Problem

is

that

binary

search

is

a

very

time

consuming

method.

So

let's

say

we

need

10

iterations

to

get

to

the

precision

of

0.01

percent

failed

transaction

rate

or

whatever.

Now,

actually,

no,

that's

not

the

point.

I

don't

actually

know

what

precision

we

define

here

but

I

remember

for

one

percent

precision

an

average

of

like

seven

iterations

are

required

unless

of

course,

the

device

is

just

passing

the

initial

maximum

rate

with

no

loss

at

all,

in

which

case

it's

only

one

iteration

or

two

iterations,

initial

and

target.

H

But

if

that's

not

the

case,

then

around

seven

iterations

are

required.

Let's

assume

each

of

those

runs

two

minutes,

then

we

already

have

15

minutes,

but

normally

you

want

to

run

the

test

case

a

little

longer.

So

if

you

have

half

an

hour

and

then,

if

you

want

to

repeat

the

whole

series

of

tests

multiple

times

it

easily

becomes

days

of

testing

just

waiting

for

results,

yeah,

sometimes

a

little

difficult

in

the

lab

sure

so

I'm

actually

open.
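The time estimate in this turn can be worked through as a small calculation. The sketch below assumes a plain halving search between 10 and 100 percent of line rate; the helper names and the repetition and test-case counts in the example are hypothetical and only illustrate how the total grows.

```python
import math


def search_steps(initial_pct: float, target_pct: float, precision_pct: float) -> int:
    """Halvings needed to narrow the interval down to the given precision."""
    return math.ceil(math.log2((target_pct - initial_pct) / precision_pct))


def campaign_minutes(
    steps: int, minutes_per_run: float, repetitions: int = 1, test_cases: int = 1
) -> float:
    """Total run time, ignoring setup and teardown between runs."""
    return steps * minutes_per_run * repetitions * test_cases


steps = search_steps(10.0, 100.0, 1.0)
print(steps)                            # search steps for 1 percent precision
print(campaign_minutes(steps, 2))       # minutes with two-minute runs
print(campaign_minutes(steps, 30, repetitions=5, test_cases=10) / (60 * 24))  # days
```

For 1 percent precision this gives seven steps, or 14 minutes with two-minute runs, in line with the roughly 15 minutes quoted. With half-hour runs, five repetitions, and ten test cases (assumed numbers) the total comes to a little over seven days.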

H

A

The

tests

of

the

past

should

be

able

to

help

us

fine-tune

the

parameters

for

the

searches

of

the

future

and

and

that

that

there's,

basically

always

some

correlation

between

results

across

tests

that

test

the

same

configuration

and-

and

there

might

be

there

might

be

a

way

that

we

can

improve

test

time.

I

mean

if

that

test

time

is

always

one

of

our

big

constraints.

H

C

H

A

H

A

Very

good,

so

another

question

I

had

or

comment.

Carsten

is

this

phrase

test

results

acceptance

criteria.

We

have

to

be

really

careful

with

the

wording

on

this,

because

we've

always

said

that

our

benchmarking

and

and

really

any

performance

measurement

in

the

IETF

that

we

don't

declare

kind

of

like

a

pass/fail

criteria.

A

We

we

seldom

set

performance

objectives

here

in

a

numerical

way.

We

may

we

may

recognize

that

they

exist,

but

but

the

IETF

itself

hasn't

standardized

them.

We

allow

others

to

say

here's

the

target

rate

and

and

and

then

you

use

the

IETF

procedure

to

find

out

whether

you've

met

that

or

not

so

I

mean

we.

At

the

same

time,

we've

always

had

a

kind

of

acceptance

criteria.

A

RFC

2544

throughput

is

run

with

zero

loss

and

that's

a

numerical

sort

of

acceptance

threshold

or

target

objective

for

the

test

that

you're

running-

and

this

is

this-

is

additional

dimensions

which

have

the

same

I

mean

they

have

the

same

feel

but

I

I

think

we've

got

to

be

careful

about

how

we

word

these,

and

it

may

be

that

we

may

be

that

we

ask

the

testers

to

provide

the

0.01%

themselves

or

maybe

suggest

that

this

set

of

criteria

has

been

used

in

in.

H

Thought

from

the

beginning

of

my

career

that

the

zero

loss

in

RFC

2544

was

a

strong

and

very

helpful

statement,

and

over

the

last

few

years

we've

jointly

suffered

from

you

know

vendors

trying

to

deviate

from

the

zero

loss

in

the

virtual

world

and

the

subsequent

attempts

to

standardize

any

non-zero

loss

right.

So

I

think

there

is

great

interest

in

these

numbers

and

I

don't

want

to

shy

away

from

them.

If

we

completely

remove

these

targets

from

the

draft,

I

think

it

would

lose

a

lot

of

its

value

because.

C

H

I

H

C

A

A

C

A

H

H

Way

to

make

sure

that

the

numbers,

if

any

here

are

representative

and

realistic,

is

also

to

bring

in

some

users-

and

we

have

this

set

of

calls

weekly

calls

that

are

aside

from

in

WG

calls

they're

basically

just

working

calls

right

and

currently

we're

bringing

in

some

users

from

financial

sector,

mobile

service

provider,

mobile

operator

and

automotive

sector.

So

like

just

some

users

enterprise,

and

so

it's

vital

to

make

sure

that

we

actually

fulfill.

A

Know

in

some

way

include

these

participants

directly

in

BMWG

work.

If

we

can,

maybe

there's

the

opportunity

for

an

interim

meeting

in

the

future,

where

you

know

sort

of

jointly

BMWG

and

NetSecOPEN

get

together

with

some

of

their

industry

participants,

because

I

mean

just

yesterday

in

the

Operations

Directorate

group,

we

talked

about

how

do

we

get

more

user

input

and

then

make

our

specifications

more

relevant,

and-

and

this

is

right

on

point-

if

you

guys

are

able

to

if

NetSecOPEN

is

able

to

help

us.

H

Sure,

we're

happy

to.

So

it

goes

on

with

the

other

test

cases

and

I

don't

want

to

bother

you

with

more

test

case

discussions

here

so

in

7.2,

concurrent

TCP

connection

capacity

with

HTTP

traffic.

It's

a

similar,

you

know

question,

you

know

how

to

get

to

a

good

description,

and

we

also

defined

some

things

here,

like

the

HTTP

object

size

and

the

rate

at

which

this

capacity

test

has

to

be

conducted

to

make

sure

it's

actually

realistic.

H

One

of

the

main

motivations

of

this

whole

work

has

been

that

the

data

sheets

of

firewall

vendors

have

shown

less

and

less

connection

to

the

reality

of

the

actual

throughput

of

their

devices

in

real-life

scenarios,

and

one

of

the

reasons

was

that

this

test

case,

for

example,

is

typically

carried

out

with

zero

traffic.

So

basically

the

vendors

just

open

the

session

and

maybe

send

a

request

to

get

one

byte

response

in

the

beginning,

but

then

they

leave

the

session

open

and

they

change

the

time.

H

Also

that

the

sessions

are

kept

open

and

then

sessions

sit

there

and

it's

basically

just

only

a

memory

game.

You

know

how

much

memory

can

I

put

in

my

firewall

and

how

long

does

it

take

to

fill

that

memory?

But

then,

usually

we

came

in

as

an

independent

test

lab

and

we

would

try

to

reproduce

this.

It

doesn't

work

because,

of

course,

we

keep

sending

requests

over

all

of

these

connections

or

at

least

a

subset,

and

often

these

can.

H

These

connections

are

dysfunctional,

so

the

device

is

completely

overloaded

and

it

doesn't

know

what

to

do

anymore.

It

has

no

capacity

to

process

packets

anymore.

It's

only

busy

opening

more

and

more

and

more

connections

and

that's

what

we

wanted

to

get

away

from

and

make

sure

that

the

result

from

this

test

case

yields

a

realistic

number

of

maximum

connections.

H

H

I

don't

know

for

around

a

year

and

so

they

basically

defined

a

modern

enterprise

perimeter

traffic

mix.

We

already

had

this

discussed

in

the

interim

meeting

and

I

remember

Farrah

and

you

and

some

others

saying

yeah,

this

is

wonderful,

not

specifically

this

distribution,

but

in

general

the

idea,

please

add

more

traffic

mixes

for

other

scenarios,

because

an

enterprise

perimeter

is

important,

but

it's

not

the

only

traffic

mix

and,

for

example,

a

mobile

operator

one

would

look

very

different.

So

this

is

a

blend

currently

of

70%

encrypted

30%

unencrypted

traffic.

H

H

But

what

is

important

is

that

the

certificates

don't

change

at

the

same

frequency

and

that

the

type

of

traffic

distribution,

like

how

many

connections

does

the

client

open

to

actually

use

Office

365

that

also

doesn't

change

so

frequently,

and

the

number

of

URLs

used

in

this

perimeter

mix

also

doesn't

change

so

frequently.

So

there's

always

a

few