
Description

Deep Learning for Science School 2019 - Lawrence Berkeley National Lab

Agenda and talk slides are available at: https://dl4sci-school.lbl.gov/agenda


Hi, everyone. So, I'm here today to talk to you about some of the projects that I worked on during my PhD, which focused on applying machine learning to various chemistry applications.

By way of a very general introduction: when we say that we're trying to find a molecule that will solve a problem in chemistry, it might look like the following. We're looking for a molecule that satisfies particular properties. For example, in drug discovery we're interested in finding a molecule that will fit exactly into the binding pocket inside a protein, which might be your drug target. In the case of something like flow batteries, we care about finding molecules that have the right reduction potential and that stay stable when they're cycled thousands, or tens of thousands, of times during the battery's lifetime.

It's very easy to make small tweaks to a molecule: just change a functional group on the side and you get a combinatorial explosion of molecule space. So it's very difficult to search for molecules that will solve particular problems if you do it in just a linear fashion, and this is what got us motivated to think about ways of applying machine learning to chemical discovery.

Throughout this talk, while telling you about my projects, I hope I can leave you with a couple of lessons that I've learned about how to think about machine learning and how to apply it to chemistry problems. In particular, I think it's really important to be able to frame both the input representation and the targets as something that machine learning can model. So you have to find a way to discretize your inputs and your target outputs so that they are representable by machine learning.

The other big constraint, obviously, is finding a dataset that's large enough to cover all of the input space that you care about. And finally, as much as possible, try to build scientific knowledge and scientific intuition into your models. It really does help the performance.

Okay, so: representing non-regular inputs. Molecules can vary in their number of atoms and in their number of bonds, and this makes for a rather complicated representation problem compared to, say, images, where you always have the same number of pixels. So how do we handle this? For 40 or 50 years now, the drug discovery community has been coming up with solutions to this problem.

One solution is to express the molecular graph as a text string. You can see here that different fragments of the molecule correspond to different parts of the text string, and the opening and closing of rings are represented by numbers.
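To show how ring openings and closings appear as numbers, here is a minimal sketch. It assumes the text string is SMILES, the standard line notation in which matching digits mark where a ring opens and closes. The regular expression is a simplified tokenizer written for this illustration (bracket atoms, the two-letter atoms Cl and Br, `%nn` ring labels, otherwise one character per token), not a complete SMILES parser, and `tokenize` and `ring_closures` are hypothetical helper names.

```python
import re

# Simplified SMILES tokenizer written for this illustration (not a full grammar):
# bracket atoms such as [NH4+], the two-letter atoms Cl and Br, two-digit ring
# labels such as %10, and otherwise one character per token.
TOKEN_RE = re.compile(r"\[[^\]]+\]|Cl|Br|%\d{2}|.")


def tokenize(smiles: str) -> list[str]:
    """Split a SMILES string into tokens."""
    return TOKEN_RE.findall(smiles)


def ring_closures(tokens: list[str]) -> list[tuple[int, int]]:
    """Pair each ring-opening label with the token that closes the same ring.

    Returns (open_index, close_index) pairs, indexed into `tokens`, in the
    order the rings close. A label can be reused once its ring has closed.
    """
    open_rings: dict[str, int] = {}
    pairs: list[tuple[int, int]] = []
    for i, tok in enumerate(tokens):
        if tok.isdigit() or tok.startswith("%"):
            if tok in open_rings:
                pairs.append((open_rings.pop(tok), i))
            else:
                open_rings[tok] = i
    return pairs


if __name__ == "__main__":
    aspirin = "CC(=O)Oc1ccccc1C(=O)O"
    tokens = tokenize(aspirin)
    print(tokens)
    print(ring_closures(tokens))  # [(8, 14)]: the two "1" tokens close the benzene ring
```

A real pipeline would use a cheminformatics toolkit to parse and canonicalize SMILES. The point here is only that ring bonds become pairs of matching labels in an otherwise linear string.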

That's localized around the nodes of the graph representation. If you're interested in learning more about molecular representations in general, I encourage you to check out this review written by my lab mate, Ben.

Okay, so the first project that I'll talk about is applying variational autoencoders to molecular discovery.

The motivation for this is as follows. Typically, with machine learning algorithms, you have input molecules, and you train a machine learning algorithm to predict some properties that you care about. Once you've trained it well, you can go back, iterate, and generate new molecules that you then feed through the model, and once you're satisfied with the predictions you've made for these molecules, you can actually test some of them in the lab. But this can be rather slow.

The first step, really, is to compress the representation of molecular space, down from something like 10^60 molecules to something more manageable. The way we did this was with a variational autoencoder. The first part of it is a convolutional neural network that takes in the text representation, and we end up compressing this representation down to the order of 100 dimensions.
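A convolutional encoder needs a fixed-size input, but text strings for molecules vary in length. One common way to handle this, sketched here, is to pad every string to the same length and one-hot encode each position. The result is a (length × vocabulary) matrix that convolutional layers can read. The 10-character vocabulary and `max_len=10` are hypothetical choices for illustration. A real model would build its vocabulary from the training set and use a maximum length near that of the longest molecule.

```python
# Hypothetical vocabulary for illustration; index 0 is the padding character.
PAD = " "
VOCAB = [PAD, "C", "c", "O", "N", "(", ")", "=", "1", "2"]
CHAR_TO_IDX = {ch: i for i, ch in enumerate(VOCAB)}


def one_hot_encode(smiles: str, max_len: int = 10) -> list[list[int]]:
    """Pad `smiles` to `max_len` characters and one-hot encode each position.

    Returns a max_len x len(VOCAB) matrix of 0/1 integers. Raises ValueError
    for strings that are too long or that contain characters outside VOCAB.
    """
    if len(smiles) > max_len:
        raise ValueError(f"string longer than max_len={max_len}")
    matrix = []
    for ch in smiles.ljust(max_len, PAD):
        if ch not in CHAR_TO_IDX:
            raise ValueError(f"character {ch!r} not in vocabulary")
        row = [0] * len(VOCAB)
        row[CHAR_TO_IDX[ch]] = 1
        matrix.append(row)
    return matrix


def decode(matrix: list[list[int]]) -> str:
    """Invert one_hot_encode: take the argmax of each row and strip the padding."""
    chars = [VOCAB[row.index(max(row))] for row in matrix]
    return "".join(chars).rstrip(PAD)


if __name__ == "__main__":
    m = one_hot_encode("CC(=O)O")  # acetic acid
    print(len(m), len(m[0]))       # 10 10
    print(decode(m))               # CC(=O)O
```

In a VAE, the decoder produces a probability distribution over the vocabulary at each position rather than a hard one-hot row. `decode` takes the most likely character per position, which is the simplest way to turn such an output back into a string.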

Then we use a recurrent neural network to decode the latent representation and try to get the same output back, so this part focuses on reconstruction. It turns out, though, that there's no reason to stop at only using the autoencoder to encode and decode. The latent vector for a molecule is also a valid representation of that molecule.
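The "variational" part is what makes the latent vector a smooth, usable representation. The encoder outputs a mean and a log-variance for each latent dimension, and a latent vector is sampled with the reparameterization trick. A KL-divergence term in the loss then pulls each encoded distribution toward a standard normal prior. This sketch shows only those two pieces for a diagonal Gaussian, in plain Python. The encoder and decoder networks are omitted, and the example numbers are made up.

```python
import math
import random


def reparameterize(mu: list[float], log_var: list[float], rng: random.Random) -> list[float]:
    """Sample z = mu + sigma * eps with eps ~ N(0, 1) and sigma = exp(log_var / 2).

    Writing the sample this way keeps z differentiable with respect to mu and
    log_var, which is what lets a VAE be trained by backpropagation.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu: list[float], log_var: list[float]) -> float:
    """KL( N(mu, diag(exp(log_var))) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


if __name__ == "__main__":
    rng = random.Random(0)
    mu, log_var = [0.5, -1.0, 0.0], [0.0, -2.0, 0.0]  # made-up encoder outputs
    print(reparameterize(mu, log_var, rng))
    print(kl_to_standard_normal(mu, log_var))
```

The full training loss adds this KL term to the decoder's reconstruction loss. In the joint model described next, a property-prediction loss would be added as well.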

Now it's a learned representation of a molecule, so what we did next was to use this latent representation to predict a target property that we cared about. The idea is that now that we have a smooth representation of molecule space, we can associate this representation with a property, and therefore we can optimize molecules by optimizing on this property surface. We optimize in the latent space and then decode to get the corresponding molecule.

We tried this on a toy example, with molecules from the ZINC dataset, which is a collection of drug-like molecules. We subsampled it and took 250,000 molecules. For the labels, the properties we were predicting here are all cheminformatic descriptors. The reason is that it's possible to compute values cheaply for all of the molecules in our dataset. So this is what the latent space looks like.

Roughly speaking, when you train it with just the autoencoder, you can see that the organization of the latent space is very disordered. The coloring scheme I'm showing you is the value of logP, the octanol-water partition coefficient, which is a rough measure of a molecule's solubility. If instead you train it with the two-pronged network that I showed you, the joint property-predicting autoencoder, you end up with a distribution of points in which the higher values are in one part of the latent space and the lower values are in the other part. And indeed, we did use this for optimization. We selected molecules with a low percentile of our objective value, usually in the bottom 20%, and we were able to use this latent space, together with some optimization techniques, to find new molecules that had really high property values according to our model.
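The optimization step can be sketched as gradient ascent on a property predictor in latent space, starting from the lowest-scoring points. Everything here is a stand-in. `predicted_property` is a hypothetical smooth surrogate (a concave bowl) rather than a trained network, and the latent space is 2-D instead of ~100-D. The gradient is estimated by finite differences, and decoding the optimized point back to a molecule is not shown. The talk does not name the optimization techniques used, so plain gradient ascent is an assumption chosen for simplicity.

```python
import random

# Hypothetical stand-in for a trained property predictor on a 2-D latent space:
# a smooth concave bowl whose maximum (value 0.0) sits at OPTIMUM.
OPTIMUM = (1.5, -0.5)


def predicted_property(z) -> float:
    return -((z[0] - OPTIMUM[0]) ** 2 + (z[1] - OPTIMUM[1]) ** 2)


def numerical_gradient(f, z, h: float = 1e-5) -> list[float]:
    """Central finite-difference estimate of the gradient of f at z."""
    grad = []
    for i in range(len(z)):
        up, down = list(z), list(z)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad


def optimize_latent(z, step: float = 0.1, n_steps: int = 100) -> tuple:
    """Plain gradient ascent on the surrogate property, starting from latent point z."""
    z = list(z)
    for _ in range(n_steps):
        g = numerical_gradient(predicted_property, z)
        z = [zi + step * gi for zi, gi in zip(z, g)]
    return tuple(z)


def bottom_fraction(points, fraction: float = 0.2):
    """Return the points whose predicted property lies in the lowest `fraction`."""
    ranked = sorted(points, key=predicted_property)
    return ranked[: max(1, int(len(ranked) * fraction))]


if __name__ == "__main__":
    rng = random.Random(0)
    # Stand-in for the encoded training molecules: random latent points.
    encoded = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(50)]
    for z0 in bottom_fraction(encoded)[:3]:
        z1 = optimize_latent(z0)
        print(f"{predicted_property(z0):.3f} -> {predicted_property(z1):.6f}")
```

With a real model, a high predicted value only means "high according to the model", which is why decoded candidates still need to be checked for validity and, eventually, tested.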

So I think this work demonstrates that optimization is possible when you have a large labeled dataset and when the properties that you want to predict are pretty smooth. But I think there are some obvious caveats. In particular, we struggled a lot with getting the generated molecules to actually be feasible. In some cases, the generated text string had grammar issues, or the molecule it produced, when the string was correct, was not actually synthesizable. There's been a lot of work recently on ways to ensure that the generated molecules are actually feasible: either by applying grammar rules, as in a grammar variational autoencoder, to ensure that the string output is correct, or by generating molecules directly, building them up from fragments or even from reactions. There have been a couple of papers in this area.

Another big issue is that this doesn't work well for small datasets yet. More work needs to be done on finding ways to apply the learned representation to datasets that are much smaller than 250,000 molecules. That could mean a transfer learning approach, or perhaps a semi-supervised approach for cases where you can use some other property. For example, in drug discovery you might only have a couple of hundred labeled examples. But maybe there's some other property, correlated with the one you care about, for which you have many more labeled examples, and you can use that to help train your model.

The other big issue is that for properties that are not smooth, this becomes a complicated optimization problem as well, so it may be difficult to use this approach to optimize for those properties.

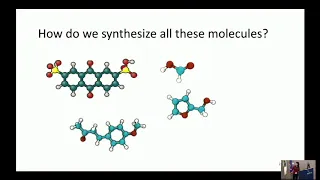

The next topic I'd like to talk about is the synthesis of molecules. As I said before, in addition to these molecules being really different, they all have very different synthetic pathways. So the problem of synthesis is a problem of trying to identify which building blocks you need and which reactions you need to eventually synthesize these molecules. An organic chemist would look at a molecule like this and see that this is a particularly weak bond.

So what do I mean? How do we frame the reaction prediction problem, or the synthesis problem, in a way that a machine learning algorithm can understand? When we think about reaction prediction, we're asking how these two molecules, which I'll call reactants, on this side of the arrow, interact: what kind of product will these molecules form? Meanwhile, the retrosynthesis problem is kind of the opposite problem: given a target molecule, what building blocks should I take to synthesize that molecule?

The datasets focus mostly on reaction prediction for now. One dataset for reaction prediction is the USPTO dataset, a set of reactions that somebody scraped from the patent literature. Another one is the Reaxys collection. This is not an open-source reaction database, but it has been scraped from all the publications of reactions.

Again, going back to the question of how to frame reaction prediction so that a machine learning algorithm can generate predictions: one way of doing this is to predict a reaction template. What I mean is that, given these reactant molecules as inputs, I want to predict which template, that is, which reaction, is most likely to occur. You can think of this roughly like chess-playing algorithms, which similarly need to predict which move is most likely to help them win the game. So this is a similar idea.

In my case, for this paper, we generated a set of reactions and constrained the space to only 16 different kinds of reactions. The process of predicting these reactions then becomes a multi-class classification task: classify which reaction type is most likely to occur. Once you know which reaction type is most likely to occur, you can apply that transformation rule to your reactants to generate the product. So this is one way of framing the reaction prediction question.
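Framed this way, template-based prediction is ordinary multi-class classification followed by a lookup. This sketch assumes a model has already produced one score (logit) per reaction type. It converts the scores to probabilities with a softmax, picks the most likely type, and applies that type's transformation rule. The three template names and the substring-rewrite "rules" are hypothetical placeholders. Real templates are graph rules matched against atoms and bonds (for example, reaction SMARTS in a cheminformatics toolkit), and the project described here used 16 reaction types.

```python
import math

# Toy "templates" written as substring rewrites on SMILES. This is only an
# illustration: real reaction templates are graph rules matched against atoms
# and bonds, and the project in the talk used 16 reaction types, not 3.
TEMPLATES = {
    "alkene_hydrogenation": ("C=C", "CC"),
    "alkyne_hydrogenation": ("C#C", "CC"),
    "nitrile_reduction": ("C#N", "CN"),
}
REACTION_TYPES = list(TEMPLATES)


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_reaction_type(logits: list[float]) -> tuple[str, float]:
    """Most probable reaction type and its probability, given one logit per type."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return REACTION_TYPES[best], probs[best]


def apply_template(reaction_type: str, reactant: str) -> str:
    """Apply the (toy) transformation rule for `reaction_type` to a reactant SMILES."""
    pattern, replacement = TEMPLATES[reaction_type]
    if pattern not in reactant:
        raise ValueError(f"{reaction_type} does not apply to {reactant}")
    return reactant.replace(pattern, replacement, 1)


if __name__ == "__main__":
    logits = [0.2, 2.5, -1.0]  # made-up classifier scores for propyne, CC#C
    rtype, p = predict_reaction_type(logits)
    print(rtype, round(p, 3), apply_template(rtype, "CC#C"))  # product: CCC (propane)
```

Restricting the output to a fixed set of templates keeps the classifier simple. The trade-off is that the model can never predict a reaction that is not in its template set.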

Another way, which has become a lot more popular in recent years, is to predict the product directly. What I mean by this is: what would happen if you made all the possible connections between atoms, or broke all the possible connections between atoms, and then ranked those outputs to predict which candidate is most likely to be the product of the reactants? We're doing fairly well on this dataset. For reaction prediction, the most recent paper reported about 91 percent accuracy. For synthesis generation, which is kind of the reverse problem, we're getting about 63.3 percent of synthetic routes correctly predicted within the top ten matches. But again, there are caveats here.
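Figures like "91 percent" and "top ten matches" are accuracy metrics over ranked candidate lists. Here is a sketch of how a top-k number is computed. For each test example, the model ranks its candidate answers, and a hit is counted if the recorded answer appears among the first k. The candidate strings are made up. The last example deliberately writes the same molecule (acetic acid) two different ways. In practice, predictions and targets are canonicalized first so that equivalent structures compare equal.

```python
def top_k_accuracy(ranked_predictions: list[list[str]], targets: list[str], k: int) -> float:
    """Fraction of examples whose target appears in the first k ranked predictions."""
    if len(ranked_predictions) != len(targets):
        raise ValueError("need one ranked list per target")
    if not targets:
        raise ValueError("empty evaluation set")
    hits = sum(target in preds[:k] for preds, target in zip(ranked_predictions, targets))
    return hits / len(targets)


if __name__ == "__main__":
    # Made-up ranked candidates for four test examples.
    ranked = [
        ["CCO", "CC=O", "CCOC"],
        ["CCN", "CC#N", "CN"],
        ["c1ccccc1O", "c1ccccc1", "Oc1ccccc1C"],
        ["CC(=O)O", "CC(=O)OC", "CO"],
    ]
    # The last target is acetic acid written non-canonically, so plain string
    # comparison misses it even though the top-ranked candidate is the same molecule.
    truth = ["CCO", "CN", "Oc1ccccc1C", "OC(C)=O"]
    for k in (1, 3):
        print(k, top_k_accuracy(ranked, truth, k))  # prints 1 0.25, then 3 0.75
```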

The datasets for reaction prediction are quite limited. In particular, the scope of the reactions included in the datasets can be somewhat limiting, and there's also a lot of noise within the data. For example, many reactions might not list alcohol or water as one of their reactants or reagents, because in that community everybody already uses alcohol or water, so it's implied. But when we train a neural network on reactions, that might be useful information that shouldn't be implied; it should be added.

The other big issue is that a lot of reaction datasets don't include very many negative examples, that is, reactions that don't work. This is a huge liability when you're trying to predict reactions. If you only see positive examples and never any negative ones, you won't actually know which of these kinds of reactions will or will not work. Okay.

So what is mass spectrometry about? Well, let's say you have some molecule and you don't know its identity. One process you can use to try to identify the molecule is to ionize it with an electron beam and then accelerate the ions through an electromagnetic field to get a spectrum. The spectrum is basically a histogram of the ions resulting from ionization of the molecule, sorted by their mass-to-charge ratio.
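As a minimal sketch of "a histogram of ions sorted by mass-to-charge ratio", this code bins a list of detected ions into unit m/z bins. It then rescales so that the tallest peak (the base peak) is 100, which is how electron-ionization library spectra are commonly displayed. The ion list is made up and only loosely patterned on acetone, whose molecular mass is 58 and whose base peak is at m/z 43. Rounding to the nearest integer m/z and the 1-to-1000 bin range are assumptions of this sketch.

```python
def bin_spectrum(ions: list[tuple[float, float]], max_mz: int = 1000) -> list[float]:
    """Histogram (m/z, intensity) ion observations into integer m/z bins.

    Index i of the result holds the summed intensity for m/z values that round
    to i; index 0 is unused. Intensities are rescaled so the base peak is 100.
    """
    spectrum = [0.0] * (max_mz + 1)
    for mz, intensity in ions:
        b = round(mz)
        if 1 <= b <= max_mz:
            spectrum[b] += intensity
    base = max(spectrum)
    if base > 0:
        spectrum = [100.0 * x / base for x in spectrum]
    return spectrum


if __name__ == "__main__":
    # Made-up ion observations: (m/z, raw intensity).
    ions = [(15.02, 30.0), (43.02, 120.0), (43.05, 30.0), (58.04, 50.0)]
    s = bin_spectrum(ions)
    print({mz: round(v, 1) for mz, v in enumerate(s) if v > 0})  # {15: 20.0, 43: 100.0, 58: 33.3}
```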

And then what do you do with the spectrum? So what we're proposing is that you'd want to expand the existing libraries to include new molecules. The idea is that if you expand the existing libraries, you would be able to find matches in the augmented part of the library, thereby increasing the coverage of the existing libraries.
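Library matching can be sketched as a nearest-neighbour search. Compare the query spectrum against every library entry and rank entries by a similarity score. This sketch uses plain cosine similarity on sparse `{m/z: intensity}` dictionaries. Production search tools typically use weighted variants (for example, up-weighting higher-m/z peaks), so treat the scoring function as an assumption. The molecule names and spectra are hypothetical. The "predicted" entry stands for a spectrum added to the experimental library by a model, which is what lets a query match a molecule that was never measured.

```python
import math

Spectrum = dict[int, float]  # m/z bin -> intensity


def cosine_similarity(a: Spectrum, b: Spectrum) -> float:
    """Cosine of the angle between two sparse spectra (0.0 if either is empty)."""
    dot = sum(v * b.get(mz, 0.0) for mz, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_library(query: Spectrum, library: dict[str, Spectrum]) -> list[tuple[str, float]]:
    """Return (name, score) pairs sorted from best to worst match."""
    scores = [(name, cosine_similarity(query, spec)) for name, spec in library.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    experimental = {"molecule_A": {15: 20.0, 43: 100.0, 58: 33.0}}
    predicted = {"molecule_B (predicted)": {29: 100.0, 57: 80.0, 72: 25.0}}
    augmented = {**experimental, **predicted}
    query = {29: 90.0, 57: 85.0, 72: 20.0}
    print(rank_library(query, experimental)[0])  # best available match scores 0.0
    print(rank_library(query, augmented)[0])     # the predicted entry now ranks first
```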

So how do we get new spectra? There have been efforts by the Wishart lab to predict fragmentation behavior using machine learning. It's actually an interesting approach: they look at the molecular graph, try to predict the fragmentation probability across each bond, and then aggregate the results into a spectrum.
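To make the bond-by-bond idea concrete, here is a toy sketch. It breaks each bond of a small molecular graph once, splits that bond's break weight between the two possible charge locations, and accumulates the fragment masses into a spectrum. The molecule is a coarse group-level graph of butanone (mass 72), and the per-bond weights and the 70/30 charge split favouring the larger fragment are invented for illustration. Only single cleavages of an acyclic graph are considered. The Wishart lab's method (CFM-ID) learns its fragmentation probabilities from data and models fragmentation far more carefully. The loop over every bond is also where the cost grows with molecule size.

```python
from collections import defaultdict

# Toy butanone graph: nodes are groups with nominal masses including hydrogens.
NODE_MASS = {0: 15, 1: 28, 2: 14, 3: 15}   # CH3, C(=O), CH2, CH3 -> total 72
BONDS = [(0, 1), (1, 2), (2, 3)]
# Invented per-bond break weights (a trained model would predict these).
BREAK_PROB = {(0, 1): 0.3, (1, 2): 0.5, (2, 3): 0.2}


def component(start: int, bonds: list[tuple[int, int]]) -> set[int]:
    """Nodes reachable from `start` using only `bonds` (iterative depth-first search)."""
    adj = defaultdict(list)
    for a, b in bonds:
        adj[a].append(b)
        adj[b].append(a)
    seen, stack = {start}, [start]
    while stack:
        for nxt in adj[stack.pop()]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def predict_spectrum(p_charge_on_larger: float = 0.7) -> dict[int, float]:
    """Aggregate single-bond cleavages into {m/z: relative intensity} (charge +1)."""
    spectrum: dict[int, float] = defaultdict(float)
    molecular_mass = sum(NODE_MASS.values())
    for bond in BONDS:
        remaining = [b for b in BONDS if b != bond]
        left_mass = sum(NODE_MASS[n] for n in component(bond[0], remaining))
        right_mass = molecular_mass - left_mass
        if right_mass == 0:
            continue  # ring bond: breaking it alone does not split the molecule
        small, large = sorted((left_mass, right_mass))
        p = BREAK_PROB[bond]
        spectrum[large] += p * p_charge_on_larger
        spectrum[small] += p * (1.0 - p_charge_on_larger)
    total = sum(spectrum.values())
    return {mz: v / total for mz, v in sorted(spectrum.items())}


if __name__ == "__main__":
    print(predict_spectrum())  # peaks at 15, 29, 43 and 57 (72 - 15)
```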

This is a lot faster than quantum mechanics predictions, but it still takes a while for some of the larger molecules, since it needs to consider all the bonds within a molecule.

Yeah, the problem is time; that's mostly what I'm talking about here, assuming that you want to generate spectrum predictions for thousands of molecules within an hour. It definitely depends on your constraints. If you have the time to predict spectra using quantum mechanics for all of the molecules in your dataset, then I think that's probably still the way to go. But you can imagine that in some cases you want to generate spectra for a million molecules, and if each molecule takes 10 minutes, that's going to take a really long time. Perhaps you'd rather generate them more quickly with a rough estimate. It just depends on what your goal is.
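A quick back-of-the-envelope calculation shows why per-molecule cost matters at this scale. The 10-minutes-per-molecule figure comes from the talk. The 100-worker cluster is a hypothetical added for comparison, and perfect parallel scaling is assumed. One worker needs about 6,944 days (roughly 19 years), and even 100 workers need about 69 days.

```python
def wall_clock_days(n_molecules: int, minutes_per_molecule: float, workers: int = 1) -> float:
    """Total wall-clock time in days, assuming independent jobs and perfect parallelism."""
    total_minutes = n_molecules * minutes_per_molecule / workers
    return total_minutes / (60 * 24)


if __name__ == "__main__":
    serial = wall_clock_days(1_000_000, 10)
    print(f"1 worker:    {serial:,.0f} days (~{serial / 365.25:.0f} years)")
    print(f"100 workers: {wall_clock_days(1_000_000, 10, workers=100):,.0f} days")
```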

All right. So what we propose here is to predict the spectra directly. We have an end-to-end prediction process where we take in the molecule's fingerprint representation as input and try to predict the spectrum. In our case, we frame spectrum prediction as a multi-dimensional regression task: we consider all m/z bins from 1 to 1,000 and try to predict the intensity of each of those bins.
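Treating the spectrum as a multi-dimensional regression target means the model maps a fixed-length fingerprint vector to one intensity per m/z bin. This sketch shows the simplest version of that idea: a linear model trained by per-example gradient descent on squared error. A tiny 4-bit "fingerprint" and 6 bins stand in for real sizes (thousands of fingerprint bits and 1,000 bins). The synthetic data, sizes, learning rate, and loss are assumptions for illustration, not the setup used in the talk.

```python
import random


def predict(W, b, x):
    """One intensity per bin: y_j = sum_i W[j][i] * x[i] + b[j]."""
    return [sum(w * xi for w, xi in zip(row, x)) + bj for row, bj in zip(W, b)]


def mse(W, b, data):
    """Mean squared error over all examples and all bins."""
    total, count = 0.0, 0
    for x, y in data:
        for pj, yj in zip(predict(W, b, x), y):
            total += (pj - yj) ** 2
            count += 1
    return total / count


def sgd_epoch(W, b, data, lr=0.05):
    """One pass of per-example gradient descent on 0.5 * squared error (in place)."""
    for x, y in data:
        for j, (pj, yj) in enumerate(zip(predict(W, b, x), y)):
            err = pj - yj
            b[j] -= lr * err
            for i, xi in enumerate(x):
                W[j][i] -= lr * err * xi


if __name__ == "__main__":
    rng = random.Random(0)
    n_bits, n_bins = 4, 6
    # Synthetic "true" linear relationship from fingerprint bits to bin intensities.
    true_W = [[rng.uniform(0, 1) for _ in range(n_bits)] for _ in range(n_bins)]
    data = []
    for _ in range(30):
        x = [rng.randint(0, 1) for _ in range(n_bits)]
        data.append((x, predict(true_W, [0.0] * n_bins, x)))
    W = [[0.0] * n_bits for _ in range(n_bins)]
    b = [0.0] * n_bins
    before = mse(W, b, data)
    for _ in range(200):
        sgd_epoch(W, b, data)
    print(f"MSE before: {before:.4f}, after: {mse(W, b, data):.2e}")
```

Each bin gets its own output, so the same fingerprint can drive peaks anywhere in the 1-to-1,000 range. The next part of the talk explains why giving the model the molecular mass helps it place those peaks.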

I want to take a moment here to talk about the dataset. For this prediction task we use the NIST library itself. There are about 250,000 spectra in it, and it also comes with a collection of 30,000 replicate spectra. These replicate spectra are interesting in that the spectra for these molecules were considered too noisy.

I'd like to take a moment, then, to talk about the importance of accounting for physical phenomena in your models. In mass spectrometry, when a molecule fragments you get two pieces, right? It's possible to get a small ion that corresponds to the actual fragment that's broken off. In this case we have a methyl group breaking off, and if it gets the positive charge after the ionization event, then you have a peak at 15. But if the opposite happens and the positive charge ends up on the larger fragment, then you get a peak at, in this case, 58 minus 15, which is 43.

Now, what happens if I consider the same event for a larger molecule, one whose molecular mass is 72? If the positive charge goes on the smaller fragment, then you still have a peak at 15. But if the positive charge goes on the larger fragment, it's the same fragmentation event, but the peak now occurs at 57, just because the molecular mass of the molecule is different.

If you're interested, you can read more about the way that we applied this technique in the model. But basically, we had the model account for the molecular mass. We gave it the molecular mass during prediction to help it make predictions about each bin, and this had a significant effect on the results.
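The molecular-mass idea can be sketched as two prediction heads that are blended per bin. A "forward" head predicts intensity at absolute m/z positions, which suits small fragment ions such as the methyl cation at 15. A "reverse" head predicts intensity as a function of the mass lost from the molecule. Shifting it by the molecular mass places a loss of 15 at 58 − 15 = 43 for a mass-58 molecule and at 72 − 15 = 57 for a mass-72 molecule, exactly the shift described above. The head outputs and the fixed 0.5 blending weight are invented for illustration. In a trained model, both heads and the per-bin weights would be learned, and the architecture in the paper may differ in its details.

```python
def reverse_to_absolute(loss_intensities: list[float], molecular_mass: int) -> list[float]:
    """Map intensities indexed by mass lost (index d) to absolute m/z bins.

    Loss d is placed at bin molecular_mass - d; losses that would fall below
    m/z 0 are dropped. The result covers bins 0..molecular_mass.
    """
    spectrum = [0.0] * (molecular_mass + 1)
    for loss, intensity in enumerate(loss_intensities):
        mz = molecular_mass - loss
        if mz >= 0:
            spectrum[mz] += intensity
    return spectrum


def combine(forward: list[float], reverse: list[float], gate: list[float]) -> list[float]:
    """Per-bin blend: gate * forward + (1 - gate) * reverse."""
    if not (len(forward) == len(reverse) == len(gate)):
        raise ValueError("forward, reverse and gate must have the same length")
    return [g * f + (1.0 - g) * r for f, r, g in zip(forward, reverse, gate)]


if __name__ == "__main__":
    # Invented reverse-head output: a single loss of 15 (a methyl group).
    loss = [0.0] * 16
    loss[15] = 1.0
    for mass in (58, 72):
        rev = reverse_to_absolute(loss, mass)
        print(mass, [mz for mz, v in enumerate(rev) if v > 0])  # prints 58 [43], then 72 [57]
    # Invented forward-head output for the mass-58 molecule: a methyl cation at m/z 15.
    fwd = [0.0] * 59
    fwd[15] = 1.0
    blended = combine(fwd, reverse_to_absolute(loss, 58), gate=[0.5] * 59)
    print([(mz, v) for mz, v in enumerate(blended) if v > 0])  # [(15, 0.5), (43, 0.5)]
```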

So what am I showing you here? This is the baseline: if you use the original reference library to perform library matching, this is the best possible performance you could get. If you use a linear regression model instead of the full multilayer perceptron I showed you before, you get about 4 percent accuracy. By including the molecular mass in the prediction, you can get about 20 points better prediction, and this is just with the linear regression model.

Extensions of this would be to consider smaller classes of molecules, but again, you have to make sure that you have enough examples to represent the spectra in that case. You can also imagine using this to predict other kinds of spectra.

So, to go back to those lessons:

Obviously, it's also important to find a reasonably large dataset that covers the range of inputs you care about, although what counts as "reasonably large" does vary between applications. And finally, as demonstrated by the last project, it's really important to apply scientific knowledge and the intuitions that you already have.